How two frontier agentic coding platforms compare across architecture, benchmarks, safety, pricing, and the workflows that actually matter

Executive Summary

The AI coding landscape of 2026 bears almost no resemblance to the one that existed when OpenAI published the original Codex research paper in 2021. Back then, the conversation was about pass@k rates on HumanEval, a benchmark Codex itself introduced. Today, both OpenAI Codex and Anthropic Claude Code have matured into full agentic coding environments: systems that read repositories, plan multi-step changes, execute shell commands, run tests, iterate on failures, and deliver committed code — often with user-controlled sandboxing, fine-grained permission systems, and optional network access.

The most meaningful comparison between these products in 2026 is no longer “which model scores higher on HumanEval.” It is a four-dimensional evaluation: (1) the quality and design of the agent harness and tool layer, (2) the governance and safety architecture surrounding autonomous code execution, (3) the economics of operating the system at scale, and only then (4) the underlying model family’s performance on modern software-engineering benchmarks like SWE-bench Pro, Terminal-Bench 2.0, and SWE-rebench — the evaluations that actually measure the kind of work these agents do.

This article provides a rigorous, sourced comparison across all four dimensions. Claims are grounded in first-party documentation, official announcements, system cards, and independent benchmark leaderboards. Where information is not publicly specified, it is labeled as such rather than inferred. The goal is to give engineering teams and technical decision-makers the honest, complete picture they need to evaluate these platforms on real workloads.

Part 1 — Product Lineage and the Timeline That Matters

From Research Paper to Agentic Platform (2021–2026)

The story of modern agentic coding tools begins in July 2021 when OpenAI published the Codex paper, describing a GPT-family model fine-tuned on publicly available GitHub code. That paper introduced HumanEval — a benchmark of 164 handwritten Python programming problems — and reported that Codex solved 28.8% of problems (pass@1). It also disclosed that a production version of the model was powering GitHub Copilot. The Codex paper is a landmark of AI research transparency: it named its training data source, introduced a reproducible benchmark, and provided ablations. That level of disclosure would not be matched by either vendor when their respective 2026 successors launched.

The gap between 2021 and 2025 saw code models improve dramatically on HumanEval and MBPP, but the product framing remained largely focused on single-function or single-file completion. The shift to the agentic era came with OpenAI’s “Introducing Codex” in May 2025 and Anthropic’s general availability announcement for Claude Code in the same month. Both products reframed the unit of work from “a suggestion in your IDE” to “a software engineering task run autonomously in a sandboxed environment.”

OpenAI’s Codex Product Arc

The modern Codex product line has iterated aggressively. On February 5, 2026, OpenAI introduced GPT-5.3-Codex, described as “the most capable agentic coding model to date.” The announcement was notable for several reasons beyond its benchmark numbers. First, OpenAI disclosed that early versions of GPT-5.3-Codex were used to help train and deploy later versions of itself, a genuinely recursive development loop.

Second, the model’s scope expanded well beyond pure code synthesis to include what OpenAI called the full “software lifecycle”: debugging, deploying, monitoring, writing PRDs, editing copy, and user research. The framing of Codex shifted from a code-generation specialist to “a general-purpose agent that can reason, build, and execute across the full spectrum of real-world technical work.”

On February 12, 2026, OpenAI followed up with GPT-5.3-Codex-Spark, a speed-optimized variant running on Cerebras’ Wafer Scale Engine 3 hardware, delivering over 1,000 tokens per second — roughly 15× faster than the standard model. Spark was positioned for real-time, interactive coding: quick edits, rapid iteration, “vibe coding” workflows. The tradeoff was reduced depth on complex multi-file reasoning tasks.

On March 5, 2026, OpenAI launched GPT-5.4, described by analysis from NxCode as effectively absorbing GPT-5.3-Codex’s frontier coding capabilities into a general-purpose flagship model. The Codex CLI itself reached version 0.120.0 on April 11, 2026, adding Realtime V2 improvements, hook UX enhancements, and multiple sandbox and Windows fixes.

Anthropic’s Claude Code Arc

Claude Code’s timeline is defined by the pace of Claude model releases and an unusually dense release cadence in the CLI itself. After general availability in May 2025, the product has shipped dozens of releases per month with substantive feature additions.

The most significant model upgrade for Claude Code came on February 5, 2026 with Claude Opus 4.6, followed by Claude Sonnet 4.6 on February 17, 2026. Anthropic described Opus 4.6 as an “industry-leading model” across agentic coding, computer use, tool use, search, and finance — “often by wide margin.” Both Opus 4.6 and Sonnet 4.6 received 1M token context windows that became generally available at standard pricing on March 13, 2026.

On the CLI side, the Claude Code changelog reflects the pace of iteration: version 2.1.108 (April 14, 2026) introduced prompt caching TTL controls, a session recap feature, and improved error messages distinguishing server rate limits from plan usage limits. Version 2.1.109 (April 15, 2026) added improved extended-thinking indicators. Version 2.1.110, also released April 15, added a /tui fullscreen rendering command, push notification support, and numerous bug fixes including hardening “Open in editor” against command injection from untrusted filenames.

Zooming out, the Anthropic platform release notes show a parallel track of infrastructure investment: Claude Managed Agents in public beta (April 8, 2026), the Advisor tool (April 9, 2026), automatic caching for the Messages API (February 19, 2026), and the compaction API for effectively infinite conversations. Each of these directly enhances what Claude Code can do in practice, even when users interact only through the CLI.

Part 2 — Under the Hood: Models, Architecture, and Training Disclosures

What Is — and Is Not — Publicly Known

A responsible comparison of these platforms must start by acknowledging the gap between what is publicly disclosed and what is commonly inferred.

For historical Codex (2021), the disclosure was unusually rich: training data source (publicly available GitHub code), benchmark methodology (HumanEval, with full description and reproducible test harness), and performance figures with ablations. That transparency has not been replicated for the 2026 successors. The GPT-5.3-Codex announcement disclosed that the model was co-designed for, trained with, and served on NVIDIA GB200 NVL72 systems. It disclosed benchmark numbers. It did not provide a full dataset composition, architectural parameter count, or detailed contamination controls for the benchmarks it cited. The GPT-5.3-Codex System Card provides safety-oriented disclosures but does not fill the training-data transparency gap.

Anthropic’s disclosures follow a similar pattern. The Sonnet 4.6 system card provides responsible scaling framing, ASL-3 safeguards, safety evaluation results including refusal/harmlessness rates, and tool-use red-teaming references. The Opus 4.6 system card exists and is referenced in product announcements, but its detailed training-stage descriptions and contamination statements were not independently verifiable in this article’s research process. Anthropic’s Claude Code data usage policy specifies that data from commercial users (Team, Enterprise, API, and third-party platforms) is not used for training unless the customer explicitly opts in through a partner program, which is relevant to enterprise buyers.

The practical takeaway: both vendors have moved toward minimal training-data disclosure for their frontier models, making rigorous contamination accounting impossible without internal access. Any article that presents specific training-data mixture fractions for GPT-5.3-Codex or Claude Opus 4.6 should be treated with skepticism unless it cites a specific, retrievable dataset card.

Context Management: Where the Real Architecture Story Lives

Rather than model architecture internals, the more practically significant “under the hood” story for both products in 2026 is context management — how the system handles long-running agent sessions, preserves useful state, and manages the cost of large inputs.

Claude Code has invested heavily in this dimension. The 1M token context window for Opus 4.6 and Sonnet 4.6 became generally available at standard pricing on March 13, 2026, eliminating the previous beta-header requirement. Prompt caching in Claude Code 2.1.108 now includes an environment variable (ENABLE_PROMPT_CACHING_1H) to opt into a 1-hour cache TTL on API key, Amazon Bedrock, Vertex AI, and Microsoft Foundry, dramatically reducing the cost of large, repeated-prefix sessions. The platform also introduced automatic caching (February 2026), where a single cache_control field triggers automatic cache point advancement as conversations grow. The compaction API, also launched in February 2026 on Opus 4.6, provides server-side context summarization for effectively infinite conversations — the equivalent of never hitting a “context limit” during a multi-hour coding session. Claude Code 2.1.108’s recap feature adds a related benefit: when a developer returns to an interrupted session, they can invoke /recap to receive a summary of what the agent was doing, reducing the cognitive cost of re-establishing context.
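To make the idea of compaction concrete, here is a minimal client-side sketch in Python. It is not Anthropic's compaction API, whose request shape is not described in this article. The helpers `estimate_tokens`, `naive_summary`, and `compact`, along with the budget values, are hypothetical stand-ins chosen for illustration.

```python
from typing import Callable, List


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: roughly one token per word.
    return len(text.split())


def compact(messages: List[str], budget: int, keep_recent: int,
            summarize: Callable[[List[str]], str]) -> List[str]:
    """Collapse the oldest messages into one summary when over budget."""
    total = sum(estimate_tokens(m) for m in messages)
    if total <= budget or len(messages) <= keep_recent:
        return messages
    split = len(messages) - keep_recent
    old, recent = messages[:split], messages[split:]
    return [summarize(old)] + recent


def naive_summary(old: List[str]) -> str:
    # Placeholder: a real system would ask a model to write the summary.
    return f"[summary of {len(old)} earlier messages]"


history = [
    "read repo layout",
    "ran tests: 3 failures",
    "patched parser.py",
    "ran tests: 1 failure",
    "patched lexer.py",
]
compacted = compact(history, budget=8, keep_recent=2, summarize=naive_summary)
print(compacted)
```

The design point is that recent turns stay verbatim while older turns are replaced by a summary, so the session can keep growing without the prompt growing with it.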

OpenAI Codex addresses long-running sessions primarily through its credit-fallback system. When a session hits rate limits, a hybrid mechanism falls back to credits, allowing work to continue without a hard stop. The system is designed for real-time correctness and auditability — credits aren’t silently consumed, and users maintain visibility into usage. GPT-5.3-Codex-Spark’s WebSocket connection (with 80% roundtrip overhead reduction, 30% per-token overhead reduction, and 50% time-to-first-token improvement) makes the interactive loop feel faster in practice, even if it doesn’t directly address multi-hour session persistence in the same way as compaction.

The underlying model context windows are comparable at the frontier: GPT-5.4 supports up to 1M tokens via the API (with input pricing doubling above the 272K standard window), and Claude Opus 4.6 and Sonnet 4.6 support 1M tokens at standard pricing throughout.

Part 3 — Capabilities Deep Dive: The Workflows That Actually Matter

The Agentic Workflow Taxonomy

Modern agentic coding platforms need to be evaluated across a taxonomy of work types, not a single “code generation” task. Here is how both products compare across the categories that matter most for software engineering teams.

1. Greenfield Code Generation and Prototyping

Both products excel at building working software from high-level descriptions, but their interaction models differ. GPT-5.3-Codex’s Codex app demonstrated this with a published example of building two playable games autonomously over millions of tokens using only generic follow-up prompts like “fix the bug” or “improve the game.” This showcases the model’s ability to run long, autonomous creative sessions with minimal human steering. GPT-5.3-Codex-Spark, by contrast, is optimized for rapid iteration — generating a React component before your fingers leave the keyboard at 1,000+ tokens per second, making it ideal for “vibe coding” where you try ten variations in the time a slower model would produce two.

Claude Code’s greenfield capability is supported by Opus 4.6’s planning improvements. The model has been specifically trained for better planning in coding workflows: building a clear plan before executing, asking clarifying questions upfront, and handling large unfamiliar codebases. The ANTHROPIC_DEFAULT_OPUS_MODEL=claude-opus-4-6 environment variable used in agentic evaluations shows how the platform exposes model-level configuration that influences scaffolding behavior.

2. Repository-Aware Multi-File Refactoring

This is the key capability that separates agentic coding from IDE autocomplete. Both products make explicit claims here. OpenAI frames GPT-5.3-Codex as capable of complex, multi-file changes across an entire repository. Anthropic positions Claude Code as a system that “reads your codebase, makes changes, runs tests, and can deliver committed code.”

The SWE-rebench leaderboard offers relevant evidence. SWE-rebench notes that Claude Code (using Claude Opus 4.6 in headless mode with specific tool restrictions) has the highest pass@5 across all models evaluated, meaning that on repeated attempts at the same task, Claude Code is the most likely to eventually solve it. This matters for real-world multi-file refactoring where you may want the agent to try several approaches. The evaluation also notes that Claude Opus 4.6 “outperforms Sonnet 4.6 not by coverage, but by higher reliability on the same tasks” — suggesting that for mission-critical refactoring work, the quality of the underlying model matters more than raw task coverage.

OpenAI’s GPT-5.3-Codex and GPT-5.4 perform strongly on SWE-bench Pro’s multi-language tasks (Python, Go, TypeScript, JavaScript), which are specifically designed to test multi-file, larger-scope fixes. OpenAI reports GPT-5.3-Codex at 56.8% on SWE-bench Pro, a vendor-reported figure it describes as an industry high; benchmark methodology is covered below.

3. Test Generation and Test-Driven Development

Both products support running tests as part of the agent loop. Claude Code’s permission system explicitly controls Bash tool usage, which includes pytest, npm test, go test, and equivalent commands. OpenAI Codex agents can execute shell commands in sandboxed environments and will iterate on test failures. GPT-5.3-Codex-Spark explicitly does not run tests by default (it makes minimal, targeted edits) — you must ask it to run tests. The standard GPT-5.3-Codex and Claude Code in their default configurations will proactively run tests as part of the edit-run-iterate loop.
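The edit-run-iterate loop described above can be sketched generically in Python. This is an illustration of the control flow only, not either vendor's implementation; `edit_run_iterate`, `apply_edit`, and the fake runner are hypothetical names, and `run_tests` simply shells out to whatever test command you pass it.

```python
import subprocess
from typing import Callable, List, Tuple


def run_tests(cmd: List[str]) -> Tuple[bool, str]:
    """Run a test command and return (passed, combined output)."""
    proc = subprocess.run(cmd, capture_output=True, text=True)
    return proc.returncode == 0, proc.stdout + proc.stderr


def edit_run_iterate(apply_edit: Callable[[str], None],
                     test_runner: Callable[[], Tuple[bool, str]],
                     max_rounds: int = 3) -> int:
    """Return the number of edit rounds used, or -1 if tests never pass."""
    passed, output = test_runner()
    for round_no in range(1, max_rounds + 1):
        if passed:
            return round_no - 1
        apply_edit(output)  # hypothetical: the agent edits code from failure output
        passed, output = test_runner()
    return max_rounds if passed else -1


# Simulated project with two bugs; each edit fixes one of them.
state = {"failures": 2}


def fake_runner() -> Tuple[bool, str]:
    return state["failures"] == 0, f"{state['failures']} failing"


def fake_edit(_output: str) -> None:
    state["failures"] -= 1


rounds = edit_run_iterate(fake_edit, fake_runner)
print(rounds)
```

In a real project the runner would be something like `lambda: run_tests(["pytest", "-q"])`, with the round cap acting as the guard against an agent that never converges.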

Claude Code’s /review and /security-review slash commands, now discoverable by the model via the Skill tool (added in 2.1.108), add structured review workflows that can be invoked mid-session. The model can independently discover these commands and invoke them when appropriate, rather than requiring the user to know the command syntax.

4. Code Review and GitHub Integration

OpenAI Codex includes code review as a named capability in its plan limits documentation (e.g., “Code Reviews / 5h” usage windows). The Codex app, CLI, and IDE extension allow for reviewing PRs in a structured agentic workflow. Anthropic’s Claude Code supports @claude tagging on GitHub per the repository documentation, enabling review workflows triggered directly from pull requests. The April 2026 Claude Code releases also include improvements to session management that benefit multi-PR review sessions: better session resumption, the /tui fullscreen mode for distraction-free review, and push notifications when Claude needs input or completes a task.

5. Long-Horizon Autonomous Tasks

This is the frontier capability that both products are racing toward — the ability to run for hours or days on a complex software project with minimal human intervention.

OpenAI’s framing from the GPT-5.3-Codex announcement is explicit: “you can steer and interact with GPT-5.3-Codex while it’s working, without losing context.” The “Follow-up behavior” setting in the Codex app lets users choose how much real-time steering they want. Frequent progress updates let developers ask questions and course-correct without losing the agent’s working state.

Claude Code addresses long-horizon work through compaction (automatic context summarization), the recap feature (reorienting to a session after an interruption), and the Cowork integration. Claude Cowork, generally available as of April 9, 2026, brings Claude Code’s agentic capabilities to the desktop for knowledge work beyond coding — running locally in an isolated VM with access to local files and MCP integrations. The March 2026 addition of computer use (research preview) lets Claude open files, run dev tools, and navigate the screen directly, further expanding the scope of autonomous operation.

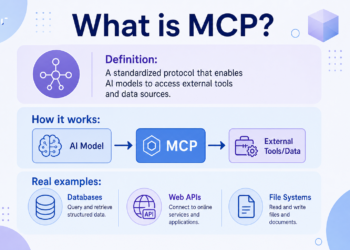

6. MCP and Tooling Integrations

Both products support the Model Context Protocol, and both provide configuration systems for managing tool access. Codex’s configuration docs describe hooks, rules, and “trust project config files” as the core of its extensibility model. Claude Code’s permissions architecture allows explicit allow/ask/deny rules for specific tools (Bash, Read, Edit, WebFetch, MCP tools), individual file paths, and URL domains.

The practical difference here is governance granularity. Claude Code’s permission system is more granular and better documented: you can explicitly deny reading .env files, deny all WebFetch, or deny specific shell commands. Codex’s enterprise configuration docs describe enforcement of sandbox mode, web search mode, and MCP server allowances at the organization level, with conflict resolution behavior for policy disagreements between project and global configs.

7. Multi-Agent and Subagent Workflows

Both platforms now expose multi-agent primitives. Codex’s docs describe “subagents” with runtime overrides for sandbox and approval settings propagating to child agents. Anthropic’s Claude Managed Agents, launched in public beta on April 8, 2026, provides a fully managed agent harness for running Claude as an autonomous agent with secure sandboxing, built-in tools, and server-sent event streaming. The platform also added an Advisor tool (April 9, 2026) that pairs a fast executor model with a higher-intelligence advisor model, providing strategic guidance mid-generation and yielding close to advisor-quality output while keeping bulk token generation at executor rates.

Part 4 — Benchmarks: What the Numbers Say (and Don’t Say)

The Scaffolding Problem: Why Raw Numbers Mislead

Before reviewing benchmark scores, it is essential to understand the single most underappreciated factor in agentic coding evaluation: scaffolding matters as much as the model. Scaffolding refers to everything surrounding the model — the agent framework, prompt engineering, tool access, context retrieval, and iteration strategy. The same underlying model can produce wildly different benchmark scores depending on scaffolding design.

As the SWE-rebench documentation explains: “Claude Code and Codex behave differently from SWE-style agents. They may ask clarifying questions, wait for confirmation, or produce conversational responses instead of making a code change.” For headless evaluation, you must explicitly set --permission-mode acceptEdits and --output-format stream-json for Claude Code, or --yolo --json for Codex — otherwise the agent will stall waiting for human approval. These configuration choices affect scores materially.
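As a rough illustration, the headless flags quoted above could be assembled into argument lists like this. Only the four flags come from the text; the binary names `claude` and `codex`, the `-p` prompt flag, and the `exec` subcommand are assumptions about a local install, and a given CLI version may require additional flags. The sketch builds the commands without running them.

```python
import shlex
from typing import List


def claude_code_headless_args(prompt: str) -> List[str]:
    # "--permission-mode acceptEdits" and "--output-format stream-json" are
    # the flags quoted in the text; "claude" and "-p" are assumed here.
    return [
        "claude", "-p", prompt,
        "--permission-mode", "acceptEdits",
        "--output-format", "stream-json",
    ]


def codex_headless_args(prompt: str) -> List[str]:
    # "--yolo" and "--json" come from the text; "codex exec" is assumed.
    return ["codex", "exec", "--yolo", "--json", prompt]


task = "fix the failing test in tests/test_parser.py"
print(shlex.join(claude_code_headless_args(task)))
print(shlex.join(codex_headless_args(task)))
```

Keeping the invocation in one place makes it easier to record exactly which configuration produced a given benchmark score.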

Scale AI’s SEAL leaderboard (part of SWE-bench Pro) addresses this by running every model through standardized scaffolding with a 250-turn limit. The result: top-6 models separate by less than 5 percentage points under controlled conditions — suggesting that model capability differences are real but smaller than uncontrolled leaderboards imply.

SWE-bench Verified: Strong Numbers, Contamination Caveats

SWE-bench Verified is a human-validated subset of 500 Python-only tasks from real GitHub repositories. It remains the most widely cited benchmark for agentic coding performance. As of March 2026, per the SWE-bench leaderboard at marc0.dev:

| Rank | Model | Score |
|---|---|---|
| 1 | Claude Opus 4.5 | 80.9% |
| 2 | Claude Opus 4.6 | 80.8% |
| 3 | Gemini 3.1 Pro | 80.6% |
| 4 | MiniMax M2.5 | 80.2% |
| 5 | GPT-5.2 | 80.0% |
| 6 | Claude Sonnet 4.6 | 79.6% |

GPT-5.3-Codex is notably absent from the SWE-bench Verified top list because OpenAI stopped reporting Verified scores after confirming data contamination. As the Morph SWE-bench Pro analysis explains: “OpenAI’s audit found that every frontier model tested — including GPT-5.2, Claude Opus 4.5, and Gemini 3 Flash — could reproduce verbatim gold patches or problem statement specifics for certain SWE-bench Verified tasks.” OpenAI has publicly recommended SWE-bench Pro as the appropriate replacement.

The contamination concern also applies to Claude’s high scores on Verified. The 80.8% for Claude Opus 4.6 may reflect some memorization of the benchmark’s Python-only, relatively small (500-task) test set. This does not mean the model isn’t capable — but it means the number should be read as a ceiling, not a precise measure of current genuine capability on unseen tasks.

SWE-bench Pro: The More Honest Standard

SWE-bench Pro, created by Scale AI to address contamination, uses 1,865 multi-language tasks (Python, Go, TypeScript, JavaScript) across 41 repositories, with an average of 4.1 files changed per task and a minimum of 10 lines changed. Every task has been augmented with human-written problem statements and interface specifications.

Vendor-reported scores (using custom scaffolding):

| System | Base Model | SWE-bench Pro Score |
|---|---|---|
| GPT-5.3-Codex (CLI) | GPT-5.3-Codex | 57.0% |
| Claude Code | Opus 4.5 | 55.4% |
| Auggie | Opus 4.5 | 51.8% |
| Cursor | Opus 4.5 | 50.2% |

Under SEAL’s standardized scaffolding, the picture changes:

| Rank | Model | SEAL SWE-bench Pro Score |
|---|---|---|
| 1 | Claude Opus 4.5 | 45.9% |
| 2 | Claude Sonnet 4.5 | 43.6% |
| 3 | Gemini 3 Pro | 43.3% |
| 6 | GPT-5.2 Codex | 41.0% |

The gap between vendor-reported and SEAL-standardized scores is significant. Claude Code’s 55.4% versus Opus 4.5’s 45.9% represents a ~10-point scaffolding premium — Anthropic’s custom harness provides real capability lift over generic scaffolding. The same applies to OpenAI’s CLI-optimized result.
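The arithmetic behind the scaffolding premium, and behind the narrow spread under standardized scaffolding, can be checked in a few lines of Python. The scores are copied from the tables above; the helper name `point_gap` is just for illustration.

```python
# Scores in percent, copied from the vendor-reported and SEAL tables above.
vendor_reported = {"Claude Code (Opus 4.5)": 55.4}
seal_standardized = {"Claude Opus 4.5": 45.9, "GPT-5.2 Codex": 41.0}


def point_gap(a: float, b: float) -> float:
    """Difference in percentage points, rounded to one decimal place."""
    return round(a - b, 1)


# Same base model, custom harness versus standardized harness.
scaffolding_premium = point_gap(
    vendor_reported["Claude Code (Opus 4.5)"],
    seal_standardized["Claude Opus 4.5"],
)

# Rank 1 versus rank 6 under the standardized harness.
seal_spread = point_gap(
    seal_standardized["Claude Opus 4.5"],
    seal_standardized["GPT-5.2 Codex"],
)

print(scaffolding_premium, seal_spread)  # 9.5 4.9
```

On these figures, the harness effect on a single model (9.5 points) is roughly twice the spread between the first and sixth ranked models under identical scaffolding (4.9 points).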

For GPT-5.3-Codex specifically, OpenAI reports SWE-bench Pro Public at 56.8% on the announcement page, noting that this represents an “industry high” — narrowly ahead of GPT-5.2-Codex at 56.4% and GPT-5.2 at 55.6%. The improvements are real but incremental: the benchmark is genuinely hard, and pushing scores materially higher requires either better models, better scaffolding, or both.

Morph’s internal benchmarks show that adding WarpGrep v2 (an RL-trained search subagent) to either Codex 5.3 or Opus 4.6 yields an additional 2+ points — evidence that search scaffolding quality remains a meaningful differentiator at the frontier.

Terminal-Bench 2.0: Terminal Fluency Matters

Terminal-Bench 2.0 measures the terminal skills a coding agent needs — navigating filesystems, running commands, managing environment state. The current leaderboard per marc0.dev shows:

- Gemini 3.1 Pro: 78.4% (current leader)

- GPT-5.3-Codex: 77.3%

- Claude Opus 4.6: 74.7%

GPT-5.3-Codex’s strong Terminal-Bench 2.0 result (77.3% at launch) underpinned OpenAI’s claim that the model “far exceeded previous state-of-the-art,” and the numbers bear that out, with the caveat that Gemini 3.1 Pro has since moved slightly ahead. Claude Opus 4.6’s jump to 74.7% (from 65.4% in January 2026) reflects meaningful improvement in terminal fluency that tracks with the model’s increased emphasis on planning and agentic execution.

SWE-rebench: Production Conditions and Pass@5

SWE-rebench is a continuously updated benchmark that evaluates agents on tasks released within a specific time window (to control contamination) using a minimal bash-tool-only harness. The February 2026 edition found:

- Claude Opus 4.6: #1 spot (highest reliability; 34 tasks solved 5/5, 40 pass@5)

- GPT-5.4: top-5 with notably low token consumption

- Claude Code pass@5 is higher than that of all other models

The pass@5 metric is practically significant: it tells you whether, if you let the agent try five times, it will eventually succeed. Claude Code’s leading pass@5 suggests it has the highest “eventual success rate” — important for long-horizon tasks where you’re willing to let the agent iterate.
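For readers who want to compute the metric themselves, the sketch below uses the unbiased pass@k estimator introduced in the original Codex paper, 1 - C(n-c, k) / C(n, k), where n is the number of attempts per task and c the number that succeeded. Whether SWE-rebench uses exactly this estimator is an assumption; with five attempts and k = 5 it reduces to "at least one attempt succeeded." The per-task results are made-up illustrative data.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one task: 1 - C(n-c, k) / C(n, k)."""
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # too few failures to fill k draws without a success
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: List[Tuple[int, int]], k: int) -> float:
    """Average pass@k over tasks; results holds (attempts, successes)."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Illustrative data: four tasks, five attempts each.
results = [(5, 5), (5, 2), (5, 0), (5, 1)]
pass1 = benchmark_pass_at_k(results, k=1)
pass5 = benchmark_pass_at_k(results, k=5)
print(round(pass1, 3), round(pass5, 3))  # 0.4 0.75
```

The gap between the two numbers is the point: an agent can look mediocre on pass@1 and still solve most tasks if it is allowed several attempts.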

Historical Benchmarks: HumanEval, MBPP, CodeXGLUE

These benchmarks deserve mention both for historical context and honest scope-setting.

The original Codex paper’s 28.8% pass@1 on HumanEval (2021) was considered remarkable. Today, frontier models score 90% or higher on HumanEval, rendering it effectively saturated. MBPP (~1,000 crowd-sourced Python problems) similarly shows ceiling effects for frontier models. CodeXGLUE (10 tasks across 14 datasets) covers broader code understanding tasks but is not actively reported by either vendor for current product comparisons.

These benchmarks remain useful for (1) establishing the historical baseline that shows how far the field has progressed, and (2) evaluating smaller or fine-tuned models where SWE-bench Pro scores would be near zero. For comparing GPT-5.3-Codex against Claude Opus 4.6 in 2026, they provide limited signal.

Part 5 — Safety, Security, and Governance

Why This Dimension Is More Important Than Benchmarks

When an AI coding agent has permission to edit files, execute shell commands, access the network, and commit to version control, the governance architecture becomes as important as the model’s capability. A highly capable agent with poor permission controls is a larger attack surface than a less capable agent with strong sandboxing. Both vendors have made safety and governance first-class product features — but their architectures differ in instructive ways.

OpenAI Codex: Sandboxed Execution and Cybersecurity Classification

The GPT-5.3-Codex System Card contains the most significant safety disclosure: GPT-5.3-Codex is the first model OpenAI classifies as “High capability” for cybersecurity-related tasks under their Preparedness Framework. This classification triggered their most comprehensive cybersecurity safety stack to date, including:

- Safety training targeted at cybersecurity scenarios

- Automated monitoring for elevated-risk requests

- Enforcement pipelines including threat intelligence

- Potential routing of certain elevated-risk requests from GPT-5.3-Codex to GPT-5.2 (a weaker model) as a mitigation

The Trusted Access for Cyber program, launched alongside GPT-5.3-Codex, is a pilot for accelerating cyber defense research while gating advanced capabilities behind verified identity.

For sandbox architecture, Codex uses:

- Cloud containers: isolated, network-disabled by default; network access requires explicit user allowlist/denylist configuration

- macOS: Seatbelt sandbox

- Linux: seccomp + landlock

- Windows: WSL or other configurable options

User approval flows are built into the agent harness, and the system card notes specific reinforcement training to avoid destructive actions (e.g., reverting user changes) and additional CLI prompting to clarify conflicting edits before executing them.

Enterprise customers can enforce policies for approval mode, sandbox mode, web search mode, and MCP server allowances centrally, with documented conflict resolution behavior.

Claude Code: Permissions as First-Class Controls

Claude Code’s governance architecture separates two complementary layers: permissions (what the agent is authorized to attempt, enforced at the harness level) and sandboxing (OS-level enforcement of what Bash can actually do, enforced at the kernel level). The documentation is explicit: “permissions and sandboxing are complementary layers; you need both.”

The permission system supports explicit rules for each tool:

- Allow/ask/deny patterns for the Bash tool, Read tool, Edit tool, WebFetch tool, and MCP tools

- File-path whitelisting and blacklisting: e.g., deny reading .env, deny editing *.lock files

- URL domain filtering for WebFetch: e.g., deny curl to external domains while allowing internal documentation
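The allow/ask/deny model in the list above can be illustrated with a small Python rule evaluator. This is a conceptual sketch, not Claude Code's implementation: the `Tool(argument)` pattern strings, the `RULES` table, and the deny-then-ask-then-allow precedence are assumptions made for the example, and the real settings file syntax should be taken from the official documentation.

```python
from fnmatch import fnmatchcase
from typing import Dict, List

# Hypothetical rule table; the pattern syntax is illustrative only.
RULES: Dict[str, List[str]] = {
    "deny": ["Read(.env)", "Bash(rm -rf*)", "Bash(git reset --hard*)"],
    "ask": ["Bash(git push*)"],
    "allow": ["Bash(pytest*)", "Read(*)"],
}


def decide(tool: str, argument: str,
           rules: Dict[str, List[str]] = RULES,
           default: str = "ask") -> str:
    """Return 'deny', 'ask', or 'allow' for a requested tool call."""
    request = f"{tool}({argument})"
    # Assumed precedence: the most restrictive matching verdict wins.
    for verdict in ("deny", "ask", "allow"):
        if any(fnmatchcase(request, pat) for pat in rules.get(verdict, [])):
            return verdict
    return default  # unmatched requests fall back to asking the user


print(decide("Read", ".env"))                  # deny
print(decide("Read", "README.md"))             # allow
print(decide("Bash", "git push origin main"))  # ask
print(decide("Bash", "rm -rf build"))          # deny
```

Checking deny rules first means a broad allow pattern such as `Read(*)` cannot accidentally override a narrow prohibition.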

The /config system allows these rules to be set per-project (in .claude/settings.json) or globally, with project settings taking precedence. The February 2026 Sonnet 4.6 system card documents the ASL-3 responsible scaling framework, providing safety evaluation results including refusal rates on harmful requests, tool-use red-teaming benchmark references, and an assessment process for capability thresholds.

A notable April 2026 improvement from the Claude Code 2.1.110 changelog: a fix for PermissionRequest hooks where returning updatedInput was not being re-checked against permissions.deny rules, and setMode:'bypassPermissions' updates not respecting disableBypassPermissionsMode. These are exactly the kinds of security-relevant edge cases that responsible production deployments depend on being patched promptly.

The Shared Threat Model: Prompt Injection, Hallucinated Packages, and Supply Chain Risk

Both products face the same fundamental threat model for agentic coding: model outputs that become executable actions create a larger attack surface than chat-only interactions. Three threat vectors deserve specific attention:

Indirect prompt injection occurs when malicious instructions are embedded in files the agent reads (e.g., a README.md in a dependency, a comment in a third-party file, or a web page fetched during research). Both products mitigate this by making network access opt-in (disabled by default in Codex cloud containers; requires explicit WebFetch allowance in Claude Code). The OWASP Top 10 for LLM Applications identifies prompt injection as the #1 risk for LLM-integrated applications and specifically calls out the increased risk when the model can take actions, not just generate text.

Hallucinated package names (sometimes called “slopsquatting”) represent a supply chain risk specific to AI code generation. A USENIX Security paper (“We Have a Package for You!”) studied package hallucinations across many models and large-scale code generations, finding that hallucinated package names can be registered by attackers, turning a model’s inventory of fictional package names into a real-world attack vector. Both Claude Code and Codex inherit this risk at the model level; neither provides automated package-existence verification before generating pip install or npm install commands. This remains an open problem in the field that enterprise users should address through CI/CD pipeline checks rather than relying on the model.
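One way to implement the CI/CD check suggested above is to extract package names from generated install commands and compare them against a vetted list. The Python sketch below is an assumption-laden illustration: the function names, the regex, and the allowlist are hypothetical, `fastjsonx` is a made-up package name, and the parser does not handle requirement files or flags that take a value.

```python
import re
import shlex
from typing import Iterable, List

# Matches "pip install ..." and "python -m pip install ..." lines.
INSTALL_RE = re.compile(
    r"^\s*(?:pip3?|python3? -m pip) install\s+(.+)$", re.MULTILINE
)


def extract_pip_packages(text: str) -> List[str]:
    """Collect package names from pip install commands found in text."""
    names: List[str] = []
    for match in INSTALL_RE.finditer(text):
        for token in shlex.split(match.group(1)):
            if token.startswith("-"):
                continue  # skip flags such as -U or --upgrade
            # Drop version specifiers, extras, and environment markers.
            name = re.split(r"[<>=!~\[;]", token, maxsplit=1)[0]
            if name:
                names.append(name.lower())
    return names


def unvetted(packages: Iterable[str], allowlist: Iterable[str]) -> List[str]:
    """Return packages that are not on the organization's vetted list."""
    return sorted(set(packages) - {a.lower() for a in allowlist})


agent_output = """
pip install requests==2.31.0 fastjsonx
python -m pip install -U numpy
"""
found = extract_pip_packages(agent_output)
flagged = unvetted(found, allowlist={"requests", "numpy"})
print(flagged)  # ['fastjsonx']
```

A pipeline could fail the build when `flagged` is non-empty, forcing a human to confirm that an unfamiliar dependency is real and intended before it is installed.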

Destructive action prevention is specifically addressed in GPT-5.3-Codex’s system card, which notes reinforcement training to avoid reverting user changes, and additional prompting to resolve conflicting edits. Claude Code’s permission system addresses this through the permissions.deny mechanism — you can explicitly deny rm -rf, git reset --hard, or other irreversible commands.

Data Retention and Training Policies

Both vendors have explicit policies that enterprise buyers should understand before deploying these tools on proprietary codebases.

OpenAI states that as of March 1, 2023, data sent to the OpenAI API is not used to train or improve models unless explicitly opted in. Business product inputs/outputs (including API) are not used for training by default.

Anthropic’s Claude Code documentation states that data from commercial users (Team, Enterprise, API, and third-party platforms) is not used for training unless the customer explicitly opts into a partner program. Enterprise plans with Zero Data Retention (ZDR) are available for additional compliance assurance. Local plaintext transcript caching defaults in Claude Code are operationally relevant for compliance review: organizations should understand where session transcripts are stored and who can access them.

Open-Source Licensing

The Codex CLI repository is licensed under Apache-2.0 — the tooling layer, not the model. The Claude Code repository includes a LICENSE.md; users should review the specific terms before redistribution or modification. In both cases, the model weights and API access are governed by separate Terms of Service, not the tooling license.

Part 6 — Economics and Deployment

API Token Pricing (April 2026)

Understanding the economics of these platforms requires separating the product tier (subscription plans) from the API tier (per-token pricing). For teams using these tools at scale via API, per-token rates are the dominant cost driver.

OpenAI (GPT-5.3-Codex and GPT-5.4)

| Model | Input ($/1M tokens) | Cached input ($/1M tokens) | Output ($/1M tokens) |
|---|---|---|---|
| GPT-5.3-Codex (Standard) | $1.75 | $0.175 | $14.00 |
| GPT-5.3-Codex (Priority) | $3.50 | $0.35 | $28.00 |
| GPT-5.4 (Standard, ≤272K) | $2.50 | $0.25 | $15.00 |
| GPT-5.4 (Standard, >272K) | $5.00 | $0.50 | $15.00 |

Priority tier for GPT-5.3-Codex costs exactly 2× standard across input and output — a straightforward multiplier for teams that need guaranteed throughput. GPT-5.4 Mini, at approximately $0.40/$1.60 per MTok per the NxCode analysis, provides a budget tier for high-volume, lighter workloads.

Anthropic (Claude model family)

| Model | Input ($/1M tokens) | Cached read | Output ($/1M tokens) |
|---|---|---|---|
| Claude Opus 4.6 | $5.00 | ~10% of input | $25.00 |
| Claude Sonnet 4.6 | $3.00 | ~10% of input | $15.00 |
| Claude Haiku 4.5 | $1.00 | ~10% of input | $5.00 |

Claude’s caching economics are particularly favorable for long-session agentic work: with 1-hour cache TTL (available via ENABLE_PROMPT_CACHING_1H), a large repository context uploaded at the start of a session can be cached and reused across many subsequent tool calls. For a 100K-token codebase context that is referenced in every agent step, caching can reduce effective input costs by 80–90% within a session.
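The arithmetic behind that range can be worked through in a few lines. This is a rough sketch under stated assumptions: the same context is sent on every step, cached reads cost 10% of the input rate (per the table above), and the first step pays a cache-write premium. The `write_multiplier` default of 2.0 is an assumption to check against current pricing documentation, and new tokens added at each step are ignored.

```python
def session_input_cost(context_tokens, steps, input_per_mtok,
                       read_fraction=0.10, write_multiplier=2.0, cached=True):
    """Estimate input cost in dollars for re-sending one context every step.

    read_fraction and write_multiplier are assumptions, expressed relative
    to the base input price.
    """
    per_step = context_tokens * input_per_mtok / 1_000_000
    if not cached or steps == 0:
        return per_step * steps
    write = per_step * write_multiplier             # first step writes the cache
    reads = per_step * read_fraction * (steps - 1)  # later steps read from it
    return write + reads


def savings(context_tokens, steps, input_per_mtok):
    """Fraction of input cost saved by caching under the assumptions above."""
    base = session_input_cost(context_tokens, steps, input_per_mtok, cached=False)
    with_cache = session_input_cost(context_tokens, steps, input_per_mtok)
    return 1 - with_cache / base
```

For a 100K-token context at the Sonnet 4.6 input rate of $3 per MTok, 20 steps cost about $6.00 uncached and about $1.17 cached under these assumptions, a saving of roughly 80%. The saving rises toward 90% as the step count grows, because the one-time write premium is spread over more reads.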

The practical cost comparison for a “typical Claude Code session” vs. a “typical Codex session” is workload-dependent and not publicly specified in a way that enables rigorous calculation. Key variables include: number of tool calls per session, proportion of tokens that hit cache, whether you use the cloud sandbox (Codex) vs. local execution (both support), and which model tier you use.

Product Plan Access

For users accessing these tools through subscriptions rather than API keys:

Codex is included in ChatGPT paid plans (Plus at $20/mo, Pro at $200/mo, and above), with usage limits that vary by model and task type. The Pro plan at $200/mo runs at 10× Plus limits through May 31, 2026, under a temporary promotion noted in the Codex pricing documentation. Credits extend access beyond plan limits, with the hybrid system designed to avoid hard stops during agentic sessions.

Claude Code is included with Claude API access and is now bundled with every Team plan standard seat (added January 2026). Enterprise plans with ZDR, HIPAA readiness (available January 2026), and role-based access controls (April 2026) provide the compliance infrastructure that regulated industries require. The Anthropic platform now supports self-serve Enterprise purchase without a sales conversation — a friction reduction that benefits teams who want to evaluate at scale before committing.

Deployment Options: Where the Code Actually Runs

Both products support multiple deployment surfaces, with different tradeoffs for security, latency, and compliance:

Codex: Local CLI (code runs on developer machine, sandbox via OS-level controls); cloud containers (isolated, network-off by default); IDE extension (VS Code and others); Codex app (macOS, web); API for programmatic access (API access was noted as “coming soon” at the Feb 2026 launch; consult current OpenAI API docs for status).

Claude Code: Local CLI (terminal); IDE integration; Claude Desktop app (with Cowork for non-coding tasks); browser; Amazon Bedrock; Google Cloud’s Vertex AI; Microsoft Foundry; Claude Managed Agents API (April 2026). The multi-cloud availability is a meaningful differentiator for enterprises with existing cloud commitments — a team standardized on AWS can run Claude Code via Bedrock under their existing AWS billing and data governance.

Rate Limits and Throughput

Both products use rate limiting systems, but their documentation differs in transparency. Claude’s API documents that for most models, cached input tokens do not count toward ITPM (input tokens per minute) limits, effectively multiplying throughput for cached-prefix workloads. Token-bucket rate limiting applies, and the 1-hour cache TTL significantly improves the economics of large agentic sessions.
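To see why excluding cached tokens matters for throughput, consider a toy token bucket. This is a simplified model written for illustration, not Anthropic's actual limiter: the `TokenBucket` class and its methods are hypothetical, time is advanced explicitly so the behavior is deterministic, and cached input tokens are simply not charged against the budget.

```python
class TokenBucket:
    """Toy input-tokens-per-minute limiter with continuous refill."""

    def __init__(self, capacity_per_minute: float):
        self.capacity = capacity_per_minute
        self.tokens = capacity_per_minute            # start with a full bucket
        self.refill_per_second = capacity_per_minute / 60.0

    def advance(self, seconds: float) -> None:
        """Refill the bucket for elapsed time, never exceeding capacity."""
        refilled = self.tokens + seconds * self.refill_per_second
        self.tokens = min(self.capacity, refilled)

    def try_consume(self, uncached_input_tokens: int,
                    cached_input_tokens: int = 0) -> bool:
        """Admit a request if the uncached tokens fit in the bucket.

        cached_input_tokens is accepted but deliberately not charged,
        modelling the documented exclusion of cached tokens from ITPM.
        """
        if uncached_input_tokens > self.tokens:
            return False
        self.tokens -= uncached_input_tokens
        return True
```

In this model, a request carrying a 100K-token cached prefix and 5K new tokens draws only 5K from the budget, so an agent that reuses a large cached context can issue many more calls per minute than one that resends the context uncached.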

OpenAI’s credit fallback system is designed to blend rate limits and credits continuously, avoiding hard stops. Exact tier-by-tier limits (RPM/TPM/ITPM) are documented in the API console rather than as fixed public tables — a pattern that means rate limit information should be verified against your specific tier in the dashboard rather than relying on any external source.

Part 7 — Decision Framework: Choosing and Operationalizing Your Agentic Coding Stack

This Isn’t a Binary Choice

The honest conclusion from a rigorous comparison is that neither OpenAI Codex nor Anthropic Claude Code is universally superior. Both are excellent agentic coding platforms with genuine strengths. The right choice depends on a small number of high-signal variables specific to your team.

Variable 1: Benchmark Priority and Workload Type

If your primary workload is Python-heavy bug fixing and refactoring on established codebases, the SWE-bench Verified numbers suggest Claude Opus 4.6 (80.8%) and even Sonnet 4.6 (79.6%) offer excellent performance at strong value: at the list API prices above ($3/$15 vs. $5/$25 per MTok), Sonnet 4.6 costs 40% less than Opus 4.6 while scoring only 1.2 percentage points lower on this benchmark.

If your workload is multi-language, cross-repository, larger-scope changes — the kind SWE-bench Pro is designed to measure — GPT-5.3-Codex leads vendor-reported scores (56.8%) and GPT-5.4 is close behind. Under SEAL standardized scaffolding, the gap narrows significantly and Claude Opus 4.5 leads, suggesting that Anthropic’s custom Claude Code harness is providing meaningful lift.

For terminal-intensive DevOps workflows, Terminal-Bench 2.0 currently favors Gemini 3.1 Pro (78.4%) and GPT-5.3-Codex (77.3%) over Claude Opus 4.6 (74.7%) — though the margins are narrow enough that real-world factors like latency, price, and integration quality will dominate.

For long-horizon autonomous tasks where the agent runs for extended periods with minimal interruption, Claude Code’s pass@5 leadership on SWE-rebench and the compaction/recap infrastructure suggest it is currently better optimized for this paradigm.

Variable 2: Speed vs. Depth Tradeoff

If interactive, real-time coding is your priority — making small targeted edits, getting instant feedback, iterating rapidly — GPT-5.3-Codex-Spark (1,000+ tokens/second on Cerebras hardware, available to ChatGPT Pro subscribers) offers a qualitatively different experience. The sub-100ms first-token latency changes the interaction model from “wait for AI” to “think alongside AI.”

Claude Code’s fast mode (research preview for Opus 4.6, up to 2.5× faster at premium pricing) partially addresses this, but it does not yet match Spark’s extreme latency performance. For teams willing to pay the Pro subscription cost, Spark is genuinely differentiated for IDE-adjacent, rapid-iteration workflows.

Variable 3: Enterprise Compliance Requirements

For teams with strict compliance requirements, Claude Code’s deployment story is more mature. Multi-cloud availability (Bedrock, Vertex AI, Foundry), Zero Data Retention options, HIPAA-ready Enterprise plans, role-based access controls, and Claude’s Managed Agents infrastructure provide a compliance-friendly deployment path that doesn’t require operating entirely in OpenAI’s infrastructure.

OpenAI’s enterprise controls are real (central policy enforcement, sandbox mode, MCP server allowances) but the deployment options are primarily OpenAI-hosted, which may not satisfy data residency requirements for regulated industries. OpenAI’s data residency controls (available at 1.1× pricing as of February 2026) partially address this.

Variable 4: Cost Sensitivity at Scale

At equivalent capability tiers, the math favors Anthropic for high-volume production workloads: Claude Sonnet 4.6 at $3/$15 per MTok delivers near-Opus performance on many coding tasks, while GPT-5.4 Standard at $2.50/$15 is slightly cheaper on input and identical on output. GPT-5.3-Codex at $1.75/$14 is notably cheaper for input-heavy workloads where caching is not effective. Claude Haiku 4.5 at $1/$5 is the clear winner for latency-sensitive, lighter-weight tasks where Opus/GPT-5.4 capabilities are unnecessary.
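A quick way to sanity-check these comparisons for your own workload is to plug a token mix into the list prices. The sketch below uses the per-MTok rates quoted in the Part 6 tables and deliberately ignores caching, priority tiers, and long-context surcharges; the function names and the example token mix are hypothetical.

```python
# (input, output) list prices in dollars per 1M tokens, as quoted in Part 6.
PRICES = {
    "GPT-5.3-Codex": (1.75, 14.00),
    "GPT-5.4 (<=272K)": (2.50, 15.00),
    "Claude Opus 4.6": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Haiku 4.5": (1.00, 5.00),
}


def uncached_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost at list prices, with no caching or tier adjustments."""
    input_rate, output_rate = PRICES[model]
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


def rank_by_cost(input_tokens: int, output_tokens: int):
    """Return (model, cost) pairs sorted from cheapest to most expensive."""
    costs = {m: uncached_cost(m, input_tokens, output_tokens) for m in PRICES}
    return sorted(costs.items(), key=lambda pair: pair[1])
```

For an illustrative mix of 2M input and 200K output tokens, this ranks Haiku 4.5 cheapest, followed by GPT-5.3-Codex, GPT-5.4, Sonnet 4.6, and Opus 4.6. Applying each vendor's cached-input rate to the reused share of the context can shift the ordering for long sessions.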

Cache economics heavily favor Claude for large-codebase sessions: the 1-hour TTL combined with cached-tokens-not-counting-toward-ITPM can make Claude’s effective cost per session substantially lower than the listed input rate when the repository context is large.

Variable 5: Governance Tolerance for Autonomous Action

If your organization is risk-conservative about autonomous code execution — working on regulated codebases, codebases with financial or safety implications, or simply early in your agentic tooling adoption — Claude Code’s more granular and more publicly documented permission system gives compliance and security teams a clearer surface to audit and control. The explicit allow/ask/deny rules for each tool, combined with OS-level sandboxing, provide defense in depth that security teams can reason about.

OpenAI’s approach is equally safety-conscious at the architecture level (sandbox defaults, network-off-by-default, approval flows), and GPT-5.3-Codex’s cybersecurity safety stack — triggered by its High Capability classification — is the most extensive safety mitigation stack OpenAI has deployed for a model to date. But the surface area of cybersecurity capabilities that triggered that classification is itself something organizations should evaluate carefully.

Operational Recommendation

For most software engineering teams evaluating these platforms in April 2026, we suggest:

- Start with Claude Sonnet 4.6 + Claude Code CLI for Python-heavy, long-session agentic work where cost matters. The performance-per-dollar is exceptional, the governance model is well-documented, and multi-cloud deployment is straightforward.

- Evaluate GPT-5.3-Codex + Codex app for interactive, real-time coding sessions, particularly if you already subscribe to ChatGPT Pro. Codex-Spark’s speed advantage is real and meaningful for rapid iteration workflows.

- Use GPT-5.4 as the default general-purpose choice when you want coding, computer use, and knowledge work in a single model without managing multiple tiers.

- Upgrade to Claude Opus 4.6 for the highest-stakes autonomous tasks where pass@5 reliability and long-horizon coherence matter more than cost.

In all cases, treat the agent harness as a first-class engineering concern. Ten percentage points of benchmark performance are available from scaffolding improvements alone — and the security posture of your deployment depends entirely on how carefully you configure permission rules, sandbox boundaries, and network access controls.

Conclusion: Agents, Not Models

The arc from the 2021 Codex paper to the April 2026 releases of Claude Code 2.1.110 and Codex CLI 0.120.0 is a story of abstraction level shift. HumanEval measured whether a model could write a function given a docstring. Modern agentic coding benchmarks — SWE-bench Pro, Terminal-Bench 2.0, SWE-rebench, long-horizon agent evaluations — measure whether a system can understand an unfamiliar codebase, navigate its structure, execute a plan across multiple files and languages, recover from failures, and deliver working changes. The benchmarks themselves have grown up alongside the products.

What this comparison reveals is that both OpenAI and Anthropic have built genuinely capable agentic coding systems, and both are iterating at a pace that makes any specific benchmark number obsolete within weeks. GPT-5.3-Codex’s SWE-bench Pro lead (56.8%) and Terminal-Bench strength (77.3%) are real, but GPT-5.4 has already moved beyond it in general capability. Claude Opus 4.6’s SWE-bench Verified performance (80.8%) and SWE-rebench pass@5 leadership are real, but the contamination caveats on Verified are real too.

The most durable insight is structural: the agent harness is the product. The model is the engine; the harness is the car. A team that invests in understanding permission systems, sandboxing configurations, caching architectures, and multi-agent patterns will outperform a team that simply selects the model with the highest benchmark score. The vendors understand this — it is why both Codex and Claude Code are shipping harness improvements in every release alongside model upgrades.

The question for 2026 is not which product wins a benchmark. It is which platform your team can govern safely, operate economically, and integrate into your existing workflow — and run autonomously on the kinds of tasks that actually slow down your engineering organization.

This article was researched and written in April 2026. Benchmark scores, pricing, and product features change rapidly; verify current information against primary sources before making purchasing decisions. All factual claims are sourced from first-party announcements, official documentation, or named third-party research.
