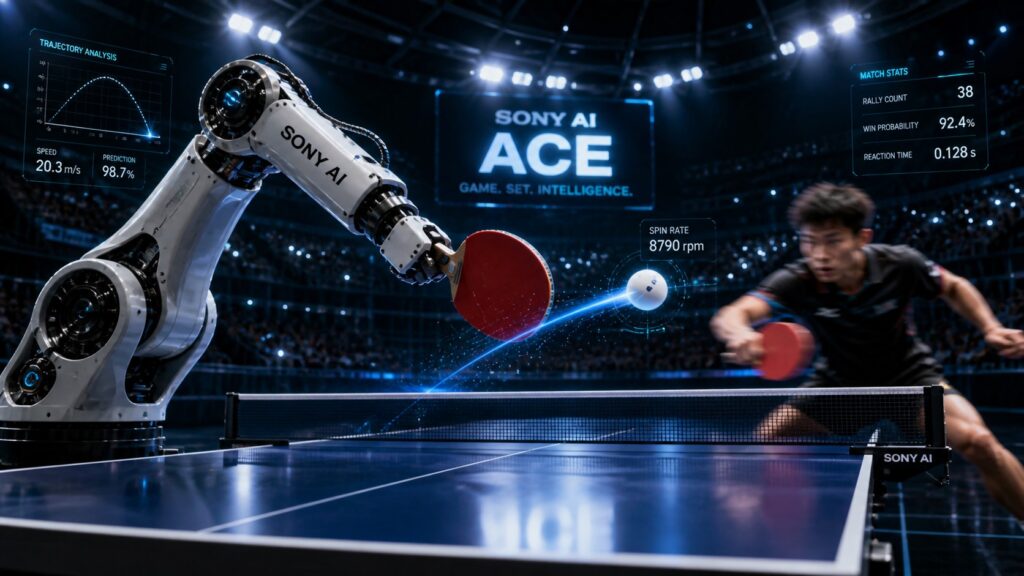

The AI-powered table tennis machine that’s rewriting the rules of physical intelligence — one rally at a time

Meet Ace: The Robot That Came to Play

Let’s be honest. When most people think of robots, they picture something clunky, slow, and about as graceful as a shopping cart with a broken wheel. Sony just blew that image to smithereens.

Meet Ace, Sony AI’s autonomous table tennis robot. It doesn’t have fluid joints. It doesn’t have dexterous fingers. It doesn’t even look particularly intimidating. But put a paddle in its mechanical grip and set it across the table from an elite human player? That’s where things get wild.

Ace recently competed against some of the best table tennis players on the planet — and it won. Not in a lab. Not in a simulation. On a regulation table, under official International Table Tennis Federation (ITTF) rules, with licensed umpires calling the shots. The results were published in the prestigious journal Nature, and the robotics world hasn’t stopped buzzing since.

This isn’t just a cool party trick. This is a milestone. A genuine, peer-reviewed, “we-need-to-talk-about-this” moment in the history of artificial intelligence.

Why Table Tennis? Seriously, Why?

Fair question. Of all the sports in the world, why did Sony AI pick table tennis?

Because it’s brutally hard. That’s why.

A ping-pong ball can travel faster than 20 meters per second. Players have less than half a second to react. And that’s before you factor in spin — a ball rotating at up to 9,000 revolutions per minute can curve mid-air and bounce in ways that even experienced players find unpredictable.
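To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes a straight-line flight over the 2.74-meter length of a regulation table and ignores drag, the arc of the ball, and the bounce, so the numbers are illustrative only. The function names are made up for this example.

```python
TABLE_LENGTH_M = 2.74  # length of a regulation table tennis table


def flight_time_s(speed_m_per_s: float, distance_m: float = TABLE_LENGTH_M) -> float:
    """Time to cover distance_m in a straight line at constant speed."""
    return distance_m / speed_m_per_s


def revolutions_in_flight(spin_rpm: float, time_s: float) -> float:
    """How many full turns the ball makes during time_s."""
    return spin_rpm / 60.0 * time_s


if __name__ == "__main__":
    t = flight_time_s(20.0)
    print(f"flight time: {t * 1000:.0f} ms")
    print(f"turns in flight: {revolutions_in_flight(9000.0, t):.0f}")
```

Under those assumptions the ball crosses the table in roughly 137 milliseconds, well inside the half-second window, and turns about 20 times on the way.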

As TechXplore explains, earlier robotic systems like Omron’s Forpheus simplified the game. They used controlled ball launchers, limited movement, or just ignored spin altogether. Ace does none of that. It plays the full game. Standard equipment. Real opponents. No shortcuts.

“Table tennis is a game of enormous complexity that requires split-second decisions as well as speed and power,” said Peter Dürr, director of Sony AI and project lead for Ace.

He’s not exaggerating. For humans, reading spin is largely intuitive — something built up over years of play. For robots, it has historically been an almost insurmountable obstacle. Ace cracked it.

The Eyes Have It: How Ace Sees the World

So how does a robot actually see a ball moving at 20 meters per second? Spoiler: not with regular cameras.

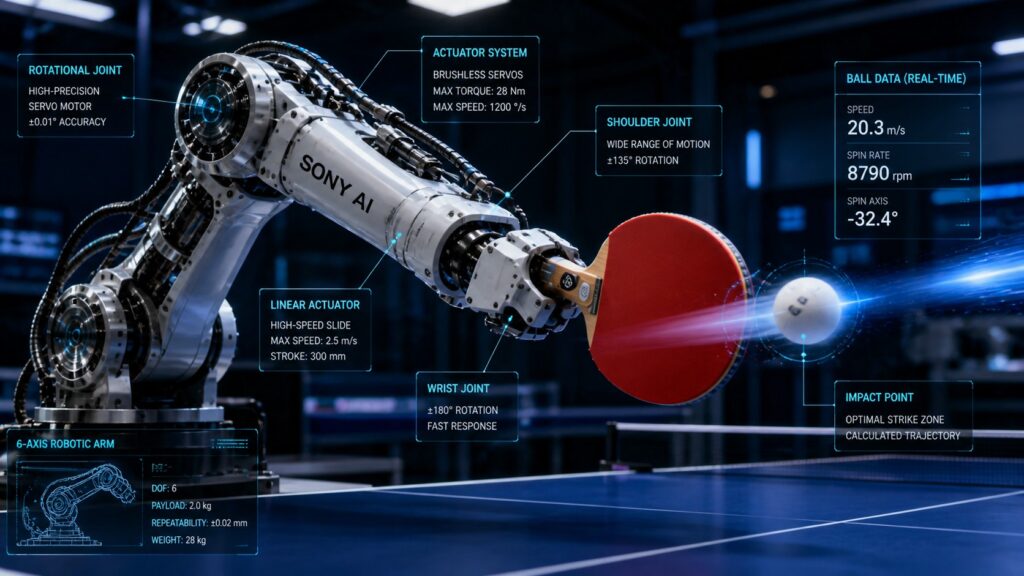

Ace uses three event-based vision sensors, a technology that detects changes in light at each pixel rather than capturing full images at fixed intervals. Where a conventional camera delivers a series of snapshots, an event-based sensor reports only what changed, as soon as it changes, so a small, fast-moving ball stands out against a static background. These sensors are complemented by nine high-speed cameras that track the ball, the opponent, and the racket simultaneously.
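The idea of detecting changes rather than capturing full images can be sketched in a few lines of Python. This is a toy model, not Sony AI's pipeline: real event sensors work asynchronously per pixel in hardware, whereas this sketch simply compares two small grayscale grids. The `events_between` helper and its threshold value are assumptions made for illustration.

```python
from typing import List, Tuple

Frame = List[List[float]]      # brightness values, row by row
Event = Tuple[int, int, int]   # (row, col, polarity), polarity is +1 or -1


def events_between(prev: Frame, curr: Frame, threshold: float = 0.2) -> List[Event]:
    """Emit an event only for pixels whose brightness changed by more than threshold."""
    events: List[Event] = []
    for r, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for c, (before, after) in enumerate(zip(prev_row, curr_row)):
            delta = after - before
            if abs(delta) > threshold:
                events.append((r, c, 1 if delta > 0 else -1))
    return events


if __name__ == "__main__":
    # A bright "ball" pixel moves one column to the right on a dark background.
    before = [[0.0, 1.0, 0.0],
              [0.0, 0.0, 0.0]]
    after = [[0.0, 0.0, 1.0],
             [0.0, 0.0, 0.0]]
    print(events_between(before, after))  # [(0, 1, -1), (0, 2, 1)]
```

Only the two pixels that changed produce output. The static background stays silent, which hints at why this style of sensing suits a small, fast object.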

Together, this vision system gives Ace something remarkable: the ability to estimate the ball’s position, velocity, and spin in real time. By tracking markings on the ball, the system can calculate spin approaching 9,000 rpm — something that has stumped robotic systems for decades.
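As a simplified illustration of how tracked markings could translate into a spin figure, the sketch below converts the angle a marking turns between two observations into revolutions per minute. It assumes a fixed spin axis and a time step short enough that the marking turns less than half a revolution between observations. The actual estimator described in the Nature paper is not reproduced here, and `spin_rpm` is a hypothetical name.

```python
import math


def spin_rpm(angle_before_rad: float, angle_after_rad: float, dt_s: float) -> float:
    """Estimate spin from two marking angles observed dt_s seconds apart.

    Assumes the marking turned by less than half a revolution in between,
    so the wrapped angle difference is unambiguous.
    """
    delta = angle_after_rad - angle_before_rad
    # Wrap into [-pi, pi) so a marking crossing the 0 / 2*pi seam is handled.
    delta = (delta + math.pi) % (2 * math.pi) - math.pi
    revolutions_per_second = delta / (2 * math.pi) / dt_s
    return revolutions_per_second * 60.0


if __name__ == "__main__":
    # A marking that turns 0.3*pi radians in one millisecond.
    print(round(spin_rpm(0.0, 0.3 * math.pi, 0.001)))
```

At 9,000 rpm the ball turns 150 times per second, so under these assumptions the observations would need to be spaced less than about 3.3 milliseconds apart to stay under half a turn.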

CNET describes the full setup as “as much a piece of art as it is hardware.” And honestly? That tracks. The engineering here is genuinely beautiful.

But seeing the ball is only half the battle. Ace also has to decide what to do with that information — and fast.

The Brain Behind the Brawn: Reinforcement Learning in Action

Here’s where the AI magic really kicks in.

Ace uses deep reinforcement learning — a type of machine learning where the system learns by trial and error, getting rewarded for good decisions and penalized for bad ones. But here’s the twist: Ace was trained across millions of virtual rallies in simulation, including self-play, before it ever faced a human opponent.
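The reward-and-penalty loop can be illustrated with a deliberately tiny Python sketch. It is a simple epsilon-greedy learner over four made-up paddle angles, not deep reinforcement learning and not Ace's training setup: there is no neural network, no physics simulation, and no self-play. The angles, the reward rule, and the `train` function are all assumptions invented for this example.

```python
import random

ANGLES_DEG = [10, 20, 30, 40]   # hypothetical paddle angles to choose from
GOOD_ANGLE = 30                 # in this toy world, only this angle lands the ball


def reward(angle_deg: int) -> float:
    """+1 for a return that lands, -1 for a miss."""
    return 1.0 if angle_deg == GOOD_ANGLE else -1.0


def train(episodes: int = 500, epsilon: float = 0.1, seed: int = 0) -> dict:
    """Learn a value estimate per angle by epsilon-greedy trial and error."""
    rng = random.Random(seed)
    value = {a: 0.0 for a in ANGLES_DEG}
    count = {a: 0 for a in ANGLES_DEG}
    for _ in range(episodes):
        if rng.random() < epsilon:
            angle = rng.choice(ANGLES_DEG)       # explore: try something at random
        else:
            angle = max(value, key=value.get)    # exploit: best estimate so far
        count[angle] += 1
        # Incremental average of the rewards seen for this angle.
        value[angle] += (reward(angle) - value[angle]) / count[angle]
    return value


if __name__ == "__main__":
    learned = train()
    print("preferred angle:", max(learned, key=learned.get))
```

After a few misses the learner settles on the angle that earns a reward. The real system faces the same basic feedback signal, but over continuous motions and across millions of simulated rallies.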

This approach — training in simulation and then deploying in the real world — is called sim-to-real transfer. It’s notoriously tricky. Many AI systems perform brilliantly in virtual environments and then fall apart the moment they encounter real-world noise, unpredictability, and chaos.

Ace didn’t fall apart. It adapted.

The system continuously generates movement commands for its multi-jointed robotic arm, recalculating trajectories every few tens of milliseconds while simultaneously avoiding collisions with the table and itself. That’s not just impressive — that’s operating at the edge of human reaction time.

And the control system? It’s model-free, meaning Ace doesn’t rely on a preprogrammed playbook or on a pre-built model of every possible scenario. Instead, the learned policy turns what the system perceives into decisions on the fly. That’s a big deal. That’s the kind of flexibility that makes robots genuinely useful in the real world.

The Body: Built for Speed

Vision and brains are great. But Ace also needs a body that can keep up.

The robot’s arm combines two prismatic (sliding) joints and six revolute (rotational) joints. This design enables rapid sideways motion and precise striking. The arm is lightweight, with optimized actuation systems that allow Ace to return balls at speeds approaching 20 meters per second.

It can also serve. A built-in ball-handling mechanism allows Ace to perform one-armed serves — and during testing, it scored 16 direct points while serving against elite players, compared to just eight for the human opponents. That’s not a fluke. That’s dominance.

New Atlas captured one particularly jaw-dropping moment: a human’s shot grazed the top of the net, completely changing the ball’s trajectory. Ace readjusted in a split second, returning the shot in real time — better than most humans could. Observers watching the footage called it “superhuman.” That wasn’t hyperbole.

The Scoreboard: How Did Ace Actually Do?

Let’s talk results. Because this is where it gets really interesting.

Ace was tested against five elite players — competitive athletes with over ten years of experience and an average of 20 hours of weekly training. It also faced two professional-level players from Japanese leagues.

The outcome? Ace won three out of five matches against the elite players. It lost both matches against the professional league players — but it did win a game against one of them. As Let’s Data Science notes, the matches were officiated by licensed umpires under full ITTF rules. This wasn’t a controlled demo. This was a real competition.

The researchers themselves were measured in their claims. “AI systems now challenge or surpass human experts in many computer games,” they wrote in the Nature paper. “Physical and real-time sports such as table tennis, however, remain a major open challenge.”

Ace didn’t demolish the professionals. But it competed with them. And that, in itself, is extraordinary.

What Humans Still Do Better (For Now)

Let’s keep it real. Ace isn’t unbeatable. Not yet.

Professional players were still able to exploit its limitations — particularly in reach, speed, and the ability to handle extreme or highly deceptive shots. Humans combine perception, movement, and strategy in ways that remain genuinely difficult to replicate in a machine.

There’s also the question of physical embodiment. Intelligence isn’t just about prediction and control. It’s about having a body that can execute those decisions across a full range of motion, under fatigue, under pressure, with the kind of intuitive adaptability that comes from years of lived experience.

Ace doesn’t have that. Not yet.

But here’s the fascinating flip side: one former Olympic player, after facing Ace, observed that watching it return seemingly impossible shots made them think humans might be capable of more than previously thought. The robot isn’t just a competitor. It might become a coach. A training partner. A mirror that shows human athletes what’s possible.

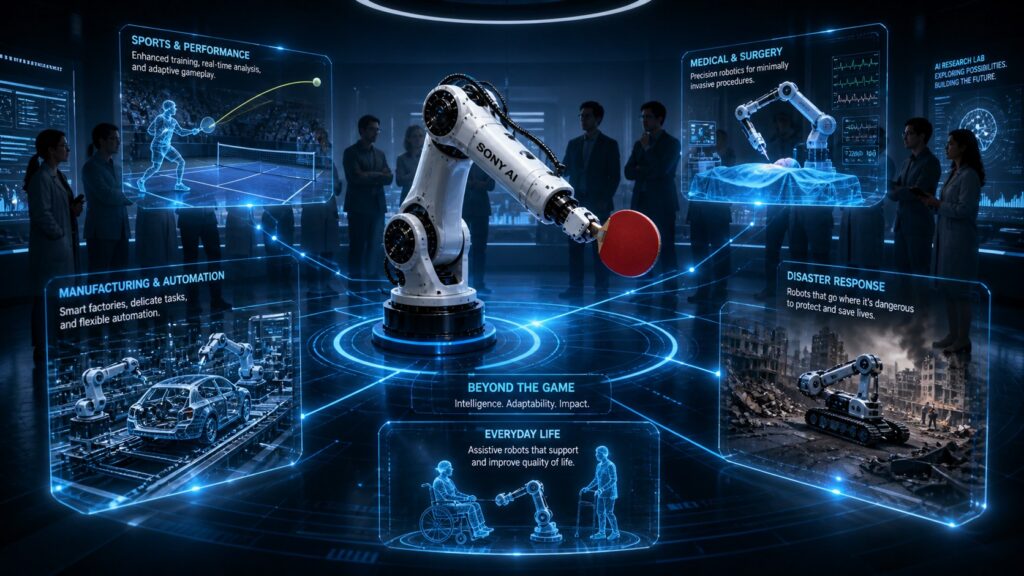

Why This Matters Way Beyond the Ping-Pong Table

Okay, so a robot is good at table tennis. Cool. But why should anyone outside the robotics world care?

Because the implications are enormous.

In manufacturing, robots are typically confined to highly structured, repetitive tasks. The real challenge — handling irregular objects, responding to variation, adapting to the unexpected — has always been out of reach. Ace’s predictive and control capabilities point toward a future where robots can handle that complexity.

In healthcare and home environments, robots need to operate safely alongside humans. Most industrial robots today are kept behind safety barriers because they can’t react quickly or reliably enough to unexpected human behavior. Ace operates at the edge of human reaction time. That changes the equation entirely.

In construction, logistics, and emergency response, robots need to function in unstructured, unpredictable environments. The same sim-to-real transfer capabilities that let Ace handle a spinning ping-pong ball could help a robot navigate a disaster site.

“Once AI can operate at an expert human level under these conditions, it opens the door to an entirely new class of real-world applications that were previously out of reach,” said Peter Stone, chief scientist at Sony AI.

He’s right. And News Minimalist ranked this story in the top 3.3% of significance among nearly 31,000 analyzed articles on the day it broke. The world is paying attention.

The Bigger Picture: Physical AI Is Here

For decades, AI’s greatest victories happened in digital spaces. Deep Blue beat Kasparov at chess. AlphaGo conquered the ancient game of Go. AI systems dominated StarCraft II. Impressive? Absolutely. But those victories all shared one key feature: they happened in controlled, digital environments.

The real world is messier. Noisier. More unpredictable. And that’s exactly where Ace is operating.

This is the new frontier of AI — not just intelligence in abstract problem-solving, but intelligence embedded in the physical world. Sony calls it “physical AI,” and Ace is its most compelling proof of concept yet.

The gap between simulation and reality is closing. Fast.

What Comes Next?

The research community is watching closely. Observers are tracking whether Sony AI will release open datasets, code, or benchmark protocols that allow other researchers to build on this work. Follow-up studies will likely quantify latency budgets, spin estimation error bounds, and safety envelopes for human-robot interaction.

And the broader question looms: if Ace can master table tennis, what’s next? Tennis? Soccer? Surgery? Manufacturing? The answer, increasingly, seems to be: yes, eventually, all of it.

Table tennis coaches may want to start updating their résumés. But for the rest of us? This is one of the most exciting moments in the history of robotics. Grab a paddle. The machines are warming up.

Sources

- New Atlas — “Watch: Sony’s insane autonomous robot shows off ‘superhuman’ skills”

- CNET — “Sony’s AI Robot Can Probably Beat You at Table Tennis”

- TechXplore — “Table tennis robot defeats some of world’s best players”

- Let’s Data Science — “Sony AI’s Ace Defeats Elite Table Tennis Players”

- News Minimalist — “Sony’s Ace robot masters table tennis with rapid learning”

- Nature — Original Research Paper

- Sony AI — Project Ace