Why AI is moving the scarce asset from “can you build it?” to “can you get chosen?”

There is a slogan circulating through venture capital decks, founder Slack channels, and X threads: distribution is the moat now. Like most slogans, it is partly true, partly overstated, and worth taking apart carefully, because the specific way in which it is true has profound implications for anyone building, funding, or buying AI software in 2026.

Taken literally, “distribution is the moat now” is wrong. If your company is training frontier models, controlling scarce data flows, fabricating chips, or operating deeply embedded regulated systems, technical moats absolutely still matter. A National Bureau of Economic Research working paper argues that appropriability and control over complementary assets continue to shape who wins in generative AI. The Stanford Institute for Human-Centered Artificial Intelligence reports that nearly 90% of notable AI models in 2024 came from industry, where compute, talent, and data are concentrated. So if you aim the slogan at the model layer, the chip layer, or critical infrastructure, it breaks.

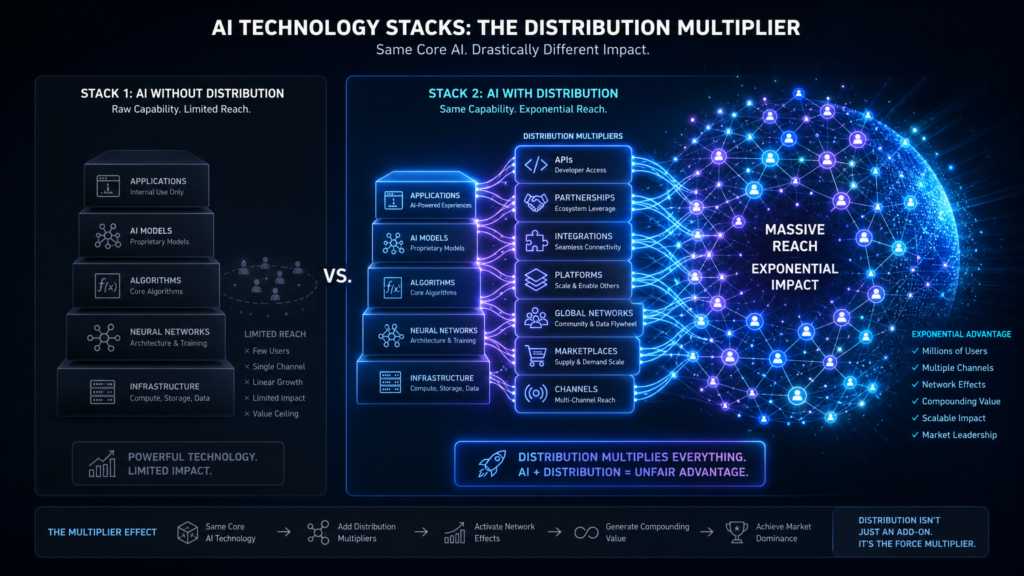

But above the model layer (in AI-native application software, copilots, internal tools, agents, and workflow products), the claim mostly holds. The accurate formulation, though, is narrower and sharper than the slogan suggests:

In AI-native software, the intelligence layer is becoming cheaper, faster, and more portable than the demand layer. As technical differentiation compresses, durable advantage shifts toward audience ownership, trust formation, workflow placement, and go-to-market execution.

That is the thesis. The rest of this essay is the evidence and the implications.

Why technical moats are shrinking above the model layer

Capability is increasingly rentable. Frontier-grade reasoning is now a line item on an OpenAI pricing page, an Anthropic invoice, or a Google API bill. It is also increasingly portable: open-weight models have closed much of the gap with proprietary ones. The Stanford 2025 AI Index found that the inference cost of a GPT-3.5-level system fell more than 280-fold between November 2022 and October 2024, while the performance gap between open-weight and closed models shrank to 1.7% on some benchmarks. The frontier itself is crowding: the gap between the top-ranked and tenth-ranked AI models fell from 11.9% to 5.4% in a single year, with the top two models separated by only 0.7%.
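To make that pace concrete, here is a rough back-of-the-envelope annualization of the AI Index figure. It assumes, purely for illustration, that the decline was smooth and geometric across the roughly 23 months from November 2022 to October 2024. The real path was lumpier, and because the reported drop is "more than" 280-fold, the result is a lower bound.

$$
f_{\text{annual}} = 280^{12/23} \approx 18.9
\qquad\Longrightarrow\qquad
1 - \frac{1}{18.9} \approx 0.95
$$

Under that smoothing assumption, the cost of GPT-3.5-level inference fell by roughly 19x, or about 95%, per year.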

That is not commoditization in the strict economic sense — frontier labs still spend billions on training runs no startup will replicate — but it is more than enough to destroy lazy product strategy. If the underlying model frontier is crowded and your application is built with tools thousands of rivals can access, any surface-level feature lead will decay faster than it used to.

At the same time, AI is collapsing the cost of building software itself. A combined analysis of randomized field experiments at Microsoft, Accenture, and a third, unnamed Fortune 100 company, published on SSRN, found a 26.08% increase in completed tasks among developers using AI coding assistance. In a separate controlled experiment with GitHub Copilot, developers completed a representative coding task 55% faster.

Google’s own numbers point the same way. Google Research reports a 37% acceptance rate for AI code completions and says AI assistance now helps complete 50% of code characters in its internal environment. The Google I/O 2025 keynote underscored how deeply these tools have moved into mainstream production workflows.

Forbes contributor Josipa Majic Predin captured the same shift in a recent venture capital piece: “The martech landscape alone grew from 150 solutions in 2011 to 15,384 in 2025, a 100x proliferation driven precisely by the falling cost of building” (Forbes). When AI lowers the barrier further through vibe coding, autonomous agents, and no-code platforms, “any competent team can ship a functional product in weeks. Technical differentiation, once a durable moat, now has a shelf life measured in quarters.”

The bad news for founders is obvious. In many AI-heavy software categories, “we built it first” is becoming less meaningful than “we reached buyers first, earned trust first, and embedded ourselves first.” A thin wrapper can still win, but only if it controls a route to market that competitors cannot cheaply duplicate.

Why lookalike products keep launching at the same time

Walk through Product Hunt or YC’s batch lists today and you will find six near-identical AI meeting summarizers, five competing voice-agent platforms, four sales-coaching copilots, three browser-using agents, and two clones of every successful launch from the previous quarter. This is not a coincidence, and it is not exactly “copying” in the old sense. It is parallel invention on shared primitives.

The same commercial APIs are public. The same open-weight checkpoints are downloadable. The same benchmarks are visible. The same cloud infrastructure is rentable. The same agent scaffolds and evaluation patterns circulate openly on GitHub the moment they work. The builder base is enormous: GitHub’s Octoverse 2025 report notes that more than 1.1 million public repositories now use an LLM SDK, with 693,867 of those projects created in just the previous 12 months.

The demand signal is just as public. An NBER working paper on generative AI adoption reports that, as of late 2024, nearly 40% of U.S. adults aged 18–64 were using generative AI, with 23% of employed respondents using it for work at least once in the prior week. Stanford’s AI Index says generative AI reached 53% adoption in three years, faster than the personal computer or the internet.

When capability is rentable and demand is visibly spiking, many founders will arrive at the same product thesis at roughly the same time. The implication is harsh but useful: a large share of AI startups are not being copied. They are entering markets that were always going to fill with substitutes the moment the underlying primitives matured. If your defense rests mainly on the fact that you shipped a feature before everyone else, you do not have a moat. You have a head start.

This is exactly why investors have begun reframing their diligence. As Cobus Greyling argued recently on Medium, “The code can be copied. The architecture can be reverse-engineered. The features can be matched in weeks. But the installed base, the ecosystem, the network of users who depend on the product daily — that cannot be replicated.”

Why attention and trust now produce outsized winners

If features are abundant, then attention and trust become scarce — and scarcity is where moats live. The clearest evidence comes from the consumer side, inside Google Search itself.

Google says its AI Overviews have scaled to more than 1.5 billion monthly users and are driving over 10% growth in usage for the types of queries that show them in major markets. But there is a downstream cost. Pew Research Center found that when users encountered an AI summary, they clicked a traditional search result in only 8% of visits; when no AI summary appeared, they clicked one in 15% of visits. The interface that owns default attention captures more value, while everyone downstream gets less traffic, less data, and less room to differentiate.
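The Pew gap is larger in relative terms than the 7-point difference suggests. A quick check using only the two percentages cited above (a simple comparison of two observed rates, not a causal estimate):

$$
\frac{15\% - 8\%}{15\%} = \frac{7}{15} \approx 0.47
$$

Put differently, visits in which an AI summary appeared ended in a click on a traditional result roughly 47% less often, close to half as often, as visits without one.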

The B2B version is harsher. The 6sense Buyer Experience Report 2025 reports that buyers execute about two-thirds of the buying journey before engaging sellers, rank their shortlist before first contact, and buy the initially favored vendor nearly 80% of the time. Eighty-one percent spoke with the winning vendor first. In other words, the decisive battle is often won before the demo is even scheduled. Brand salience, narrative control, and third-party proof are doing far more work than most product teams want to admit.

When markets are noisy and budgets are tight, trust compounds. 6sense found that nearly 70% of buyers reported economic concerns affecting vendor choice, pushing them toward conservative selections — known vendors and incumbents. Research jointly produced by LinkedIn and Edelman found that 73% of B2B decision-makers view thought leadership as a more trustworthy basis for assessing capabilities than product collateral, and nine in ten say high-quality thought leadership makes them more receptive to outreach. LinkedIn’s 2025 research adds that 55% of hidden buyers — people who never identify themselves to vendors — use thought leadership in vendor evaluation, and 35% say C-suite endorsement after consuming thought leadership pushed them to consider a vendor.

When product claims are cheap, trust signals are what remain costly to produce, and that is what makes them valuable. AI makes functionality more abundant, so attention and trust become relatively more scarce.

The compounding distribution channels

Distribution is not a single thing. It is a portfolio of channels with different half-lives, different costs, and different defensive properties. Some compound, some leak, and some are being actively rewritten by the answer engines themselves. Here is how they look in 2026.

1. Owned audience: pre-sales infrastructure

Owned audience is the purest defensive asset because it is direct and repeatable. Newsletters, recurring webinars, communities, podcasts, customer education programs, advisory councils, founder media, and events are not side projects in an AI-native company. They are pre-sales infrastructure. The LinkedIn-Edelman data shows that strong thought leadership influences trust and shortlist formation far earlier than most teams assume — and the 6sense research shows that this happens before sellers ever enter the conversation. If a founder can reliably reach the market without paying tolls to every platform in the chain, that founder has something closer to a moat than another prompt wrapper does.

2. Answer-surface visibility: the new SEO

Search and answer-surface presence still matter, but the job description has changed. The old model assumed the page that ranked would receive the visit. That world is weakening. Pew shows lower click-through when AI summaries appear; industry estimates suggest AI platforms generated more than 1.1 billion referral visits in June 2025, with referrals to transactional sites converting at roughly 7%. The real objective is no longer “rank another article.” It is “be the source that answer engines cite, and that users trust enough to seek out by name.” That changes what content is worth producing: original research, benchmarks, and proprietary data sets are far more likely to earn citations in this environment than recycled listicles.

3. Sales relationships: more important, not less

The “sales will disappear” narrative is sloppy. In expensive, risky, or implementation-heavy categories, AI has actually increased the burden of explanation. 6sense found that 62% of buyers needed sellers to answer AI-related questions about capabilities, cost, training data, privacy, and security — and 58% engaged vendors earlier than they otherwise would have, specifically to evaluate AI claims. Trusted human relationships matter more in enterprise AI, not less.

4. Ecosystem partnerships: distribution arbitrage

Marketplaces are some of the most underrated distribution channels in software. Salesforce’s AppExchange gives partners access to more than 175,000 customers; over 91% of Salesforce customers use AppExchange, and the marketplace has passed 13 million installs. Shopify’s 2026 ecosystem update reports more than 21,000 apps, millions of merchants served, and over $1.3 billion paid to developers in the prior year. HubSpot’s marketplace offers more than 2,600 apps. In AI-native software, being built into an existing workflow often beats being somewhat better as a standalone destination. Morningstar’s analysis of software moats argues precisely this: system-of-record platforms like Salesforce and ServiceNow remain strategically advantaged in distribution because customers are already there and increasingly want to adopt agentic solutions inside the functional platforms they already trust.

5. Developer communities: GTM disguised as DevRel

When developers are the buyer, the implementer, or the recommender, community is GTM. GitHub reports more than 180 million developers on the platform, more than 36 million new developers in 2025 alone, and over 1.1 million public repositories using an LLM SDK. Documentation quality, starter kits, open-source repos, templates, issue response time, conference presence, and active forums all reduce adoption friction while increasing default preference. Developer communities are also where switching costs accrue invisibly — every plugin, integration, and Stack Overflow answer makes the dominant tool slightly more sticky.

6. Category creation: the most underrated channel

Category creation is the distribution channel most founders underrate, because it feels vague until it works. But it is simply the work of defining the problem, the vocabulary, the metrics, and the buying criteria before competitors do. The 6sense data showing that buyers favor vendors before they ever talk to them, combined with the LinkedIn/Edelman finding that thought leadership helps hidden buyers advocate for lesser-known brands, points to a powerful play: define the category early enough and you stop fighting only for demand capture. You start shaping demand itself.

Five distribution moats that actually compound

Rupa Ganatra Popat, Managing Partner of Arāya Ventures, has reviewed more than 3,000 deals and backed more than 20 AI companies. In a recent Grit Daily profile, she identified five distribution moats she now actively looks for at the seed stage. They map cleanly onto the research above and are worth restating:

- Compounding data that gets more valuable over time — products that get smarter the longer someone uses them, where walking away means losing years of personalized intelligence. Healthcare, with its fragmented patient data, is the exemplar.

- Collaborative network effects that make switching a team decision — products designed so that one user pulls in two, three, or four colleagues, turning every new user into a retention mechanism for everyone else.

- Deep workflow integration, not surface-level bolt-ons — embedding into existing processes rather than asking customers to change behavior. Once the integration is deep enough, switching becomes operational disruption, not a subscription cancellation.

- Ecosystem building that extends beyond the core product — developer tools, plugins, tutorials, and third-party integrations that turn a vendor into infrastructure.

- Trust, security, and habit formation at the organizational level — internal playbooks, prompt evaluation toolkits, and security protocols that codify the buyer’s relationship with a specific vendor.

Or as a Forbes Tech Council post put it bluntly in May 2026: “Ecosystems as Capital… Distribution as a Moat: embed yourself into customer workflows to become indispensable” (Forbes).

What founders should build alongside the product

The right build list for an AI-native startup is not just a product roadmap. It is a distribution stack. Founders who internalize this early will look less clever in demos and more dangerous in markets. Founders who ignore it will ship elegant products into a void.

- A public point of view. Publish original research, benchmarks, opinionated memos, or sharply argued category writing that gives buyers language for their problem. Per LinkedIn-Edelman, thought leadership influences trust and shortlist formation long before sellers enter the conversation.

- A capture mechanism you own. Email lists, communities, webinars, customer academies, and creator-style media presence matter because relying purely on rented discovery channels is getting riskier as AI answer layers absorb more of the clicks. The Pew data on declining click-through is a leading indicator.

- Proof systems, not just pitches. Reviews, case studies, reference calls, ROI calculators, and customer evidence matter because 6sense shows buyers rank vendors before first contact and overwhelmingly buy the vendor they already prefer.

- Native workflow placement. Build into the platforms buyers already inhabit. Salesforce AppExchange, Shopify, HubSpot, Slack, and the major IDEs are not side bets. They are how AI-native startups inherit distribution from systems with installed bases and habitual usage.

- Human trust where the stakes are real. In categories involving data governance, implementation risk, or procurement complexity, founder-led sales, solution engineering, and expert credibility close the gap after the product gets attention. AI usually increases the need for explanation rather than removing it.

- A proprietary data loop after acquisition. The strongest post-distribution defense is not secret prompts. It is operational data, user interaction history, and workflow knowledge that make the product better once customers are inside. Google Research emphasizes that high-quality usage data across tools is essential for model quality, and the SSRN-published Brynjolfsson-Li-Raymond research shows that AI systems can capture and disseminate best practices from stronger workers to the rest of the organization — but only if the system has the data to do so.

If that list sounds demanding, it is supposed to. In AI-native markets, a company with only a product is easy to imitate. A company with a product, an audience, trust, workflow placement, and a data loop is much harder to dislodge.

The data-versus-distribution debate, settled

A nuanced view from Insignia Business Review puts the data-vs-distribution argument to bed. Foundation models are now largely trained on public or web-scale data. As Bessemer Venture Partners observed, “foundation models are primarily built on public data… the value of private data is limited.” Each new LLM release narrows the performance gap that proprietary data might have once provided. Even reinforcement learning from human feedback, often touted as a way to lock in advantage through user data, “isn’t a durable moat unless you already have a large, engaged user base providing that feedback. It’s a secondary moat… the result of a distribution advantage or network effect. That is the real moat.”

In other words: data moats only exist when distribution feeds them. Distribution is the prior condition. Without users, you have no data flywheel. With users, and a product built to learn from their usage, data quality can compound.

This is why the Insignia analysis points to companies like Glean — whose AI search is technically replicable, but whose early enterprise distribution and integrations into Slack, Zendesk, and Salesforce created switching costs incumbents now struggle to match. Or HeyGen, which scaled from $1M to $35M in ARR in roughly a year not because its underlying generative video tech was uniquely defensible, but because its go-to-market beat everyone else’s. Or Abridge, which embedded directly into Epic’s electronic health record platform and inherited distribution across major U.S. health systems. The technology in each case was replicable. The distribution position was not.

When distribution isn’t the moat

To keep the argument honest, it is worth restating where the slogan breaks. Distribution is not the dominant moat in:

- Frontier model training. Compute, talent, and data scale matter enormously, and those advantages are concentrated. Stanford’s AI Index shows nearly 90% of notable AI models in 2024 came from industry, where these resources sit.

- Chips and accelerators. Manufacturing capacity, fab partnerships, proprietary accelerators such as Google’s TPUs, and software ecosystems such as NVIDIA’s CUDA are durable technical moats.

- Heavily regulated systems. Defense, certain medical-device categories, certain financial-infrastructure categories, and similar areas create regulatory moats that distribution alone cannot manufacture.

- Exclusive data partnerships in specialty domains. The Insignia piece notes Eleos Health and Abridge both benefit from real clinical data partnerships that are not generally accessible. These create real, narrow moats — though they are typically complementary to distribution, not substitutes for it.

For everyone else — the vast majority of founders building AI-native applications — the slogan holds.

Recommended thesis, title, and structure

Founders, marketers, and operators looking to write or speak about this shift have a strong title to anchor on: Your Product Isn’t the Moat. Your Distribution Is. It is blunt, memorable, and directionally correct once the argument is scoped to the application layer rather than the frontier-model layer.

Other viable framings:

- Distribution Is the Moat Now

- If Anyone Can Build It, Whoever Can Reach Buyers Wins

- AI Commoditizes Features. Distribution Defends Margins.

- In the Age of AI, Attention Beats Novelty

A tighter thesis statement that survives skeptical scrutiny:

In AI-native software, the intelligence layer is becoming cheaper, faster, and more portable than the demand layer. As technical differentiation compresses, durable advantage shifts toward audience ownership, trust formation, workflow placement, and go-to-market execution.

The strongest outline for the essay or memo is straightforward:

1. Open by rejecting the overstatement: technical moats still matter in frontier models and infrastructure.

2. Narrow the claim to the application layer.

3. Show how AI compresses build cycles and narrows feature-led advantages.

4. Explain why parallel invention creates swarms of lookalike products.

5. Show why attention, trusted defaults, and shortlist formation create winner-take-most outcomes.

6. Break down the compounding channels: owned audience, answer-surface visibility, sales relationships, ecosystem partnerships, developer community, and category creation.

7. Close with the founder imperative.

The closing argument

There is a tempting nostalgia among technical founders for the world in which the better algorithm won. That world is not entirely gone. In the rare categories where the model itself is the product, technical moats remain real. But that world has shrunk, and it will keep shrinking as inference costs drop, open weights catch up, and tens of millions more developers join GitHub each year (36 million in 2025 alone).

For the much larger world of AI-native applications (the copilots, the agents, the workflow tools, the vertical SaaS rebuilt around language models), the binding constraint is no longer engineering. It is getting chosen. Buyers form preferences before they form contracts, as 6sense has documented in detail. They form those preferences from media, peers, ecosystems, search results, AI overviews, founder voices, and the workflows they already inhabit. They form them long before a demo. They often form them without a vendor ever knowing it was being evaluated.

Founders who treat distribution as a thing they will get to after product-market fit are walking into an ambush. By the time their product is undeniably good, three competitors will be selling something equivalent, two will be embedded into a marketplace they don’t have a presence on, one will be the default citation in ChatGPT and Google AI Overviews for their category, and the buyer will already have a preferred vendor — picked from a shortlist the founder was never on.

The closing line is also the real conclusion of the research. AI did not make moats disappear. It changed where they live. When intelligence becomes abundant, distribution becomes the scarce asset. The companies that internalize this in 2026 will be the ones still standing in 2030 — not because they built the cleverest model, but because they built the audience, the trust, the placement, and the data loop around it.

Build the product. Then build the moat that the product, on its own, can no longer be.