The currency of work is changing. And most companies haven’t noticed yet.

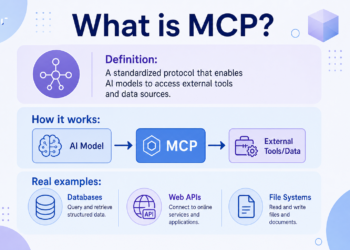

There’s a new number being whispered in Silicon Valley boardrooms, and it has nothing to do with revenue, headcount, or lines of code committed. It’s tokens — the atomic units of AI computation — and the question increasingly being asked of knowledge workers isn’t what did you produce, but how much compute did you burn to produce it.

In March 2026, a single unnamed engineer at Meta processed 281 billion tokens through Anthropic’s Claude model over the course of 30 days. To put that in perspective: at Claude’s cheapest public pricing of $5 per million tokens, that one employee may have cost Meta more than $1.4 million in a single month. The person was celebrated. The metric wasn’t questioned. The leaderboard that surfaced it was briefly made into a game.
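The arithmetic behind that figure is easy to check. The sketch below takes the article's $5-per-million rate at face value and treats every token as billed at that single flat rate, which is a simplifying assumption: real API billing distinguishes input, output, and cached tokens. The function name is illustrative.

```python
# Back-of-the-envelope check of the monthly cost quoted above.
TOKENS_PROCESSED = 281_000_000_000  # 281 billion tokens over 30 days (from the article)
PRICE_PER_MILLION_USD = 5.00        # flat rate quoted in the article (assumed to apply to all tokens)


def token_cost_usd(tokens: int, price_per_million: float) -> float:
    """Cost in dollars at a single flat per-million-token rate."""
    return tokens / 1_000_000 * price_per_million


monthly_cost = token_cost_usd(TOKENS_PROCESSED, PRICE_PER_MILLION_USD)
print(f"${monthly_cost:,.0f}")  # $1,405,000
```

At that rate the total comes to just over $1.4 million, which matches the figure in the text.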

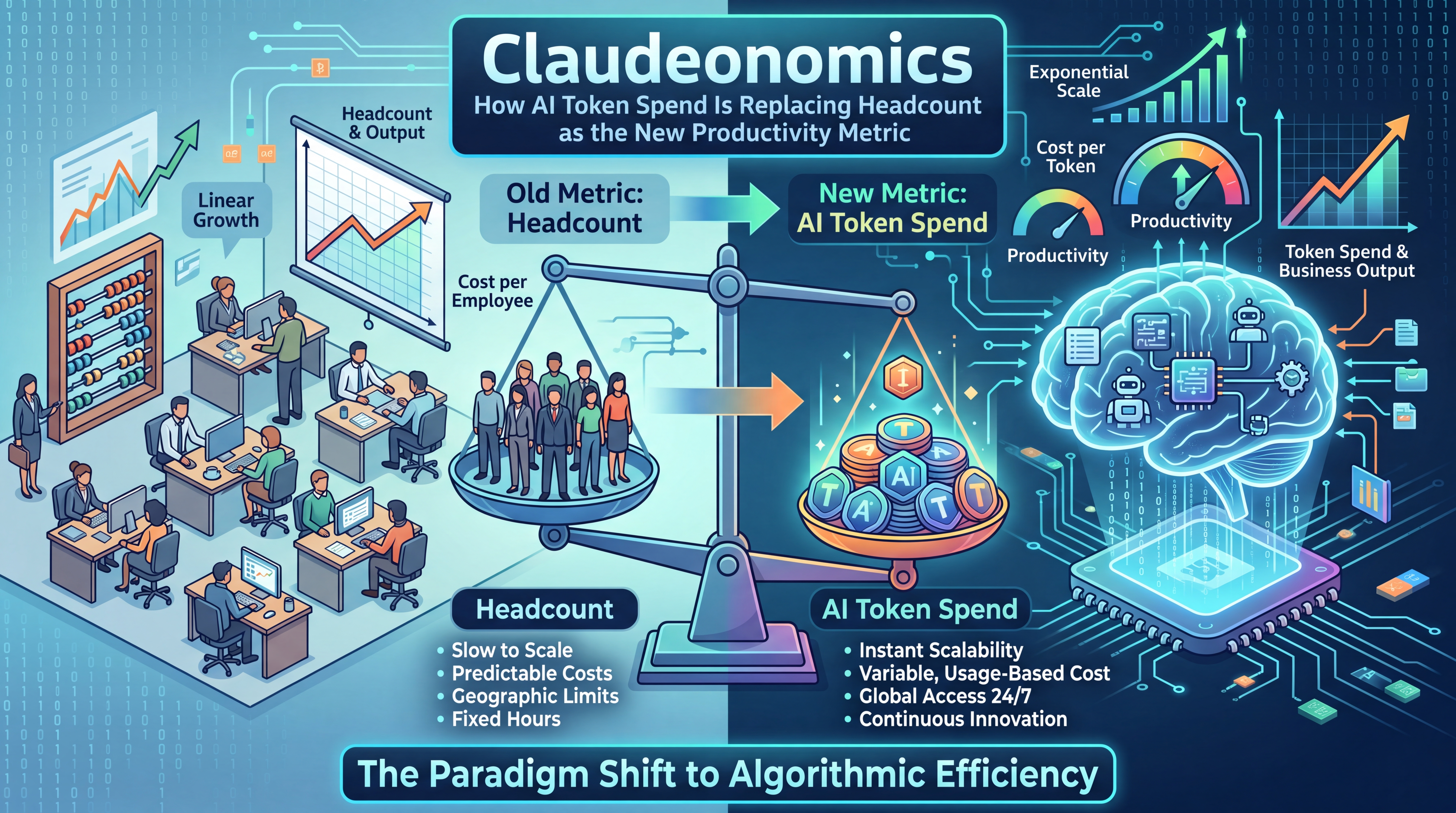

Welcome to the age of Claudeonomics: the era when the volume of text you run through an AI model is beginning to matter as much as, and sometimes more than, the work that text was supposed to produce.

The Leaderboard That Changed Everything

In early April 2026, a Meta employee built something remarkable on the company’s intranet: a live dashboard called “Claudeonomics” that ranked the AI token consumption of more than 85,000 employees. The name was a direct nod to Anthropic’s Claude, which has become Meta’s preferred AI model, particularly for technical and coding work.

The leaderboard tracked the top 250 users by token volume. It gamified consumption with a tiered badge system — Bronze, Silver, Gold, Platinum, Emerald — and awarded titles that could have come from a video game: Token Legend. Model Connoisseur. Cache Wizard. Session Immortal.

Collectively, Meta’s workforce burned through 60 trillion tokens in 30 days. That figure staggers the mind. One trillion tokens is enough text to fill the entire English-language Wikipedia roughly 166 times. Meta’s employees consumed 60 of those trillions in a month. The top individual alone accounted for 281 billion tokens, or about 47 Wikipedias’ worth, solo, in 30 days.
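For readers who want to reproduce the Wikipedia comparisons, here is a minimal sketch. It assumes only the conversion ratio stated above (one trillion tokens is roughly 166 English-language Wikipedias); the constant and function names are illustrative.

```python
# Convert token counts into "Wikipedias" using the article's own ratio.
WIKIPEDIAS_PER_TRILLION_TOKENS = 166  # ratio stated in the article
TRILLION = 1_000_000_000_000


def wikipedia_equivalents(tokens: int) -> float:
    """How many English-language Wikipedias a token count corresponds to."""
    return tokens / TRILLION * WIKIPEDIAS_PER_TRILLION_TOKENS


company_tokens = 60 * TRILLION        # Meta's 30-day total (from the article)
top_user_tokens = 281_000_000_000     # top individual's 30-day total (from the article)

company_wikis = wikipedia_equivalents(company_tokens)
top_user_wikis = wikipedia_equivalents(top_user_tokens)
top_user_share = top_user_tokens / company_tokens

print(f"Company: {company_wikis:,.0f} Wikipedias")       # Company: 9,960 Wikipedias
print(f"Top user: {top_user_wikis:.0f} Wikipedias")      # Top user: 47 Wikipedias
print(f"Top user share of total: {top_user_share:.2%}")  # Top user share of total: 0.47%
```

On these numbers, the single heaviest user accounted for a little under half a percent of all tokens consumed by a workforce of more than 85,000.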

Neither CEO Mark Zuckerberg nor CTO Andrew Bosworth made the top 250. This was noted by the press as an irony. It is more accurately described as a foreshadowing: the people doing the most token-intensive work were not the executives directing strategy, but the engineers and individual contributors building, researching, and shipping products.

The dashboard was shut down two days after details surfaced publicly. A message posted to the internal site read: “It was meant to be a fun way for people to look at tokens, but due to data from this dashboard being shared externally, we’ve made the decision to shutter Claudeonomics for now.” Meta told Fortune the employee removed the dashboard voluntarily and the company did not request its shutdown.

But the genie was out of the bottle. What Claudeonomics revealed — however briefly — was that token consumption had already become a live, real-time signal of how deeply AI was embedded in a workforce. And that signal was increasingly being treated as a proxy for productivity itself.

Jensen Huang Fires the Starting Gun

The Claudeonomics story didn’t emerge in a vacuum. It arrived weeks after Nvidia CEO Jensen Huang turned token economics into dinner table conversation at the tech industry’s highest levels.

At Nvidia’s GPU Technology Conference in San Jose in March 2026, Huang floated an idea so audacious it sounded like a thought experiment: give engineers token budgets as part of their compensation. Real tokens. Spendable AI compute, sitting alongside salary, bonus, and equity as a formal component of what it means to be employed.

“I could totally imagine in the future every single engineer in our company will need an annual token budget,” Huang said at GTC 2026. “They’re going to make a few hundred thousand dollars a year as their base pay. I’m going to give them probably half of that on top of it as tokens so that they could be amplified ten times.”

Then, on the final day of GTC, Huang appeared on the All-In Podcast and sharpened the blade further. He proposed a mental model for evaluating any high-paid engineer through the lens of their token spend. “If that $500,000 engineer did not consume at least $250,000 worth of tokens, I am going to be deeply alarmed,” he told the hosts. “If that person said $5,000, I will go ape something else.”

When asked whether Nvidia itself was spending around $2 billion per year in tokens for its engineering team, Huang’s answer was unambiguous: “We’re trying to.”

His analogy: “This is no different than one of our chip designers who says, ‘Guess what? I’m just going to use paper and pencil.’” An engineer who underuses AI is, in Huang’s worldview, making the same category error as a chip designer who refuses to use EDA software.

CNBC reported that Huang also positioned tokens as a recruiting tool, predicting that the question “How many tokens come along with my job?” would soon be as standard in Silicon Valley interviews as “What’s the equity package?”

Tomasz Tunguz of Theory Ventures had been circulating a similar idea since mid-February 2026, describing inference costs as a “fourth component” of engineering compensation alongside salary, bonus, and equity. Using data from Levels.fyi, his analysis suggested that a top-quartile software engineer at $375,000 with a $100,000 token budget was effectively a $475,000 hire — with roughly one dollar in five going to compute.
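The “one dollar in five” figure, and the equivalent share under Huang’s scenario, can be worked out directly. This is a small sketch using only the dollar amounts quoted above; the function name is illustrative.

```python
def compute_share(pay_usd: float, token_budget_usd: float) -> float:
    """Fraction of the total package (pay plus tokens) that goes to AI compute."""
    return token_budget_usd / (pay_usd + token_budget_usd)


# Tunguz's example: $375,000 engineer with a $100,000 token budget.
tunguz_total = 375_000 + 100_000
tunguz_share = compute_share(375_000, 100_000)

# Huang's example: $500,000 engineer consuming $250,000 in tokens.
huang_share = compute_share(500_000, 250_000)

print(f"Tunguz: ${tunguz_total:,} total, {tunguz_share:.0%} compute")  # Tunguz: $475,000 total, 21% compute
print(f"Huang: {huang_share:.0%} compute")                             # Huang: 33% compute
```

Huang’s threshold is the more aggressive of the two: tokens equal to half of pay means one dollar in three of the total package goes to compute.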

The Internal Arms Race

Meta and Nvidia are not alone. The same competitive dynamic is playing out across the industry.

OpenAI, the company whose models have done more than any other to make token economics real, runs its own internal employee leaderboard. The New York Times reported in March 2026 that OpenAI’s top power user had processed 210 billion tokens in a single week that month: enough text to fill Wikipedia 33 times, in seven days, by one person.

Meta CTO Andrew Bosworth publicly described a top engineer who was spending the equivalent of his own salary in tokens and, in return, had become “5x to 10x more productive.” His editorial: “It’s like, this is easy money. Keep doing it. No limit.”

Ali Ghodsi, CEO of Databricks, reportedly highlighted an engineer who spent over $7,000 in AI tokens in just two weeks in January 2026 — and rather than questioning the cost, had the entire engineering team applaud the person as a positive example.

Andrej Karpathy, former AI scientist at Tesla and OpenAI, now running an AI education startup, framed it most starkly in podcast remarks: “The name of the game is tokens. How can you maximize your token throughput and not be in the loop.”

And according to TechCrunch’s reporting on the NYT tokenmaxxing story, an Ericsson engineer in Stockholm told the Times he likely spends more on Claude than he earns in salary — with the employer covering the bill.

What’s emerging is an unmistakable pattern: companies are treating token consumption as a window into how “AI-native” their workforce is. Whether that consumption maps to outcomes is a question being asked with less urgency than perhaps it should be.

Measuring the Right Thing or the Wrong Thing?

Here’s where Claudeonomics gets genuinely complicated — and where serious people are starting to push back.

The Decoder put it most cleanly: “Measuring token consumption as a proxy for productivity is a bit like judging a truck driver by how much gas they burn. It tells you the engine is running, but not whether any freight is actually getting delivered.”

The problem is almost built into the incentive structure. The moment a leaderboard surfaces — even a playful one — the metric becomes the goal. And token consumption is extraordinarily easy to game.

The Engineering Leadership newsletter documented this bluntly: some Meta employees, to climb the Claudeonomics rankings, were deliberately letting AI agents run continuously for hours on research tasks, not because the research was urgent, but because the running agents racked up tokens. One engineer at a separate company with an official token-usage policy offered an even more damning description: “It’s as stupid and easily gamed as you would expect. Right up there with measuring lines of code or using story points to gauge productivity. The best way to rack up tokens seems to be keeping a chat context going for a long time, telling it to read tons of code, and pasting as much text into the chat as you can.”

This is the classic Goodhart’s Law problem, applied to AI. When a measure becomes a target, it ceases to be a good measure. Lines of code were replaced by story points. Story points were replaced by velocity. Now velocity is being supplemented by token consumption — and none of these proxies have ever fully captured what it means to produce something of value.

Meta’s own official position gestured at this tension. While Claudeonomics briefly existed as an informal, gamified leaderboard, the company also maintains a separate, official dashboard for token usage geared specifically toward software engineers. And last year, Meta’s Chief People Officer Janelle Gale formally told employees that “AI-driven impact” would be a “core expectation” for 2026 — with performance reviews overhauled in January 2026 to offer bonuses of up to 200% for the highest performers, tied to that AI-driven contribution. The signal from the top is clear: use more AI. The measurement of what that use actually produced is still being designed.

The Economics That Make This Make Sense

Before dismissing Claudeonomics as a productivity fad, it’s worth sitting with the genuine economic logic underneath it.

When Bosworth says his best engineer spends the equivalent of their salary in tokens and becomes 5x to 10x more productive, what does that actually mean in practice? Consider the numbers: if that engineer’s salary is $200,000, and they consume $200,000 in token compute annually, the total cost to Meta is $400,000. But if that engineer is producing the equivalent output of five engineers at $200,000 each (the conservative end of “5x to 10x”), the company is getting $1,000,000 of output for $400,000. That’s a 2.5x return on its people investment before accounting for benefits, management overhead, or the transaction costs of additional headcount.
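The same calculation can be written out so the assumptions are explicit. This sketch uses the illustrative $200,000 salary from the paragraph above and values output at what the equivalent number of engineers would cost in salary, which is the article's simplification rather than a measured figure. The function name is hypothetical.

```python
def return_multiple(salary_usd: float, token_spend_usd: float, output_multiplier: float) -> float:
    """Value of output (priced as equivalent salaries) divided by total cost (salary + tokens)."""
    output_value = output_multiplier * salary_usd
    total_cost = salary_usd + token_spend_usd
    return output_value / total_cost


SALARY = 200_000       # illustrative salary from the article
TOKEN_SPEND = 200_000  # tokens equal to salary, per Bosworth's description

low_end = return_multiple(SALARY, TOKEN_SPEND, 5)    # "5x" reading
high_end = return_multiple(SALARY, TOKEN_SPEND, 10)  # "10x" reading

# Output multiplier at which salary plus tokens merely pays for itself.
break_even_multiplier = (SALARY + TOKEN_SPEND) / SALARY

print(low_end, high_end, break_even_multiplier)  # 2.5 5.0 2.0
```

The break-even line is the useful part: when token spend equals salary, the engineer has to be at least twice as productive before the compute pays for itself. Anything below that is pure cost.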

This is the genuine disruption. It’s not that tokens are interesting. It’s that tokens are cheap relative to what they displace.

CNBC’s reporting on the broader economic picture frames this through Goldman Sachs’s analysis: AI could automate tasks accounting for 25% of all U.S. work hours and deliver a 15% productivity boost. The displacement that follows — Goldman estimates 6% to 7% of jobs over the adoption period — represents the other side of that ledger. Token spend doesn’t just measure individual productivity. It measures the rate at which human cognitive labor is being substituted by compute.

From this perspective, tracking token consumption makes a kind of brutal sense. A team that collectively consumes 10 trillion tokens a month is probably doing more — or doing the same work with fewer people — than a team consuming 10 billion. The number isn’t perfect, but it isn’t meaningless.

The challenge is what happens when organizations skip past the “correlate-and-validate” step and just start managing to the number directly.

Tokens as Compensation: The Hidden Trap

TechCrunch’s Connie Loizos offered one of the most sober analyses of what token compensation actually means for workers — and it’s not unambiguously good news for employees.

Former VC and financial services CFO Jamaal Glenn pointed out that a token budget doesn’t vest. It doesn’t appreciate. It doesn’t show up in your next offer negotiation the way equity or base salary does. If your total “compensation” is $300,000 salary plus $100,000 in token allocation, that $100,000 in tokens may disappear if you change roles or companies. It doesn’t compound. It can’t be liquidated.

More pointedly: if companies successfully normalize tokens as a component of compensation packages, they have a new lever for keeping cash compensation flat while claiming to increase total rewards. “That’s a good deal for the company,” Loizos writes. “Whether it’s a good deal for the engineer depends on questions most engineers don’t yet have enough information to answer.”

There’s an even more disconcerting implication. At the point where a company’s token spend per employee approaches or exceeds that employee’s salary — which is precisely what Jensen Huang is celebrating and Andrew Bosworth is explicitly endorsing — the financial calculus of headcount starts to shift in ways that aren’t favorable to the human in the equation. If the compute is doing the work, the number of humans needed to coordinate it becomes an open question for the CFO.

Sam Altman has framed this in a way that sounds more benign. He proposed in a May 2024 All-In Podcast episode the idea of Universal Basic Compute — a world where instead of a Universal Basic Income, every person receives a slice of AI compute, which they can use, sell, or donate. “What you get is not dollars but this slice, you own part of the productivity,” Altman said. In this framing, tokens become a form of democratized economic participation. The concern, of course, is who controls the issuance of that compute — and whether the people receiving it have enough of it to matter.

The Legitimate Case for Token Metrics

None of this should be taken to mean that measuring AI adoption is inherently misguided. The intention behind initiatives like Claudeonomics is sound, even if the execution created problematic incentives.

Companies have long struggled to answer a simple question: which employees are genuinely integrating AI into their work, and which are window-dressing? Resume mentions of “proficiency with AI tools” are essentially meaningless. Self-reported surveys are unreliable. Token consumption data — when correlated with output, not just measured in isolation — is actually one of the most honest signals available about whether AI is being used substantively.

The engineering leaders who are doing this well are looking at four things in combination: token volume (as a baseline signal), the complexity and outcomes of tasks the tokens were used for, the quality of outputs produced, and the change in overall throughput over time. Token spend as one of four inputs is a very different thing than token spend as a leaderboard.

Meta’s official engineering dashboard — the one that existed before Claudeonomics and survived its shutdown — is presumably doing something closer to this. The problem with the public leaderboard wasn’t the underlying data. It was the severing of that data from any context about what the tokens were actually accomplishing.

Bosworth’s framing at least included the outcome: his engineer wasn’t just spending tokens, they had become 5x to 10x more productive. Huang’s framing similarly tied token spend to amplification of individual output. The issue is that when those observations trickle into corporate culture as token spend = productivity, the nuance that made the original claim interesting gets lost in translation.

Governance in an Era of Token Economics

Beyond the individual performance implications, the Claudeonomics story surfaces a real governance challenge that most organizations are not prepared for.

When Meta’s leaderboard data leaked externally — triggering its shutdown — the immediate concern was about competitive intelligence: what could outside observers infer about Meta’s internal AI workflows, research priorities, or product roadmap from aggregate token consumption patterns? Sixty trillion tokens is a signal. The distribution across teams, models, and task types tells a story.

This is a new category of data risk that didn’t exist three years ago. Usage data for AI systems is not just a cost figure. It can reveal strategic priorities, staffing decisions, R&D investments, and operational workflows in ways that traditional expense reports never did. Companies building internal token dashboards need data governance frameworks specifically designed for AI consumption data — and most are starting from scratch.

HRKatha’s reporting on Meta’s reversal noted that the episode “highlights a larger transition underway, as organisations explore new ways to quantify performance in an AI-driven workplace while managing emerging risks around data governance.” This is the unsexy but essential work that needs to accompany the enthusiasm around tokenmaxxing: who sees the data, how is it stored, what controls exist on its external distribution, and how does it intersect with employee privacy expectations?

There’s also a labor relations dimension that the current conversation almost entirely ignores. If token consumption data is used in performance reviews — which Meta has explicitly moved toward — it constitutes a new form of surveillance of employee behavior, one that is continuous, granular, and automatic. The implications for employment law, particularly in jurisdictions with strong worker privacy protections, are only beginning to be explored.

What’s Actually Coming

There’s a version of this future that works, and a version that doesn’t.

In the version that works: token budgets become a genuine tool for amplifying human expertise. Engineers have access to substantial compute, use it to run sophisticated multi-agent workflows, and are evaluated on the quality and impact of what those workflows produce. Token consumption is one input to a richer picture of contribution. Companies invest in helping employees understand how to use compute effectively — prompt engineering, agent design, orchestration skills become core competencies. Token data is used to identify where AI adoption is lagging, not to rank humans against each other.

In the version that doesn’t work: token leaderboards proliferate, gaming becomes endemic, employees optimize for metrics that look like productivity without actually delivering it, and organizations develop a false confidence that their AI integration is succeeding because consumption numbers are high. Companies quietly shift the composition of compensation packages toward token budgets to hold cash flat, while the employees who most need job security — those whose roles are most exposed to AI displacement — are the least equipped to compete in a token-consumption race. Governments, moving at their customary pace, arrive too late with frameworks that address the previous era’s problems.

The distance between those two futures is not primarily technological. The models exist. The token infrastructure exists. What doesn’t yet exist is the managerial and organizational discipline to measure what actually matters, and the intellectual honesty to separate signal from performance theater.

A New Question for Your Next Job Interview

Jensen Huang may have been engaging in theater of his own when he said “How many tokens come along with my job?” would become the new Silicon Valley interview question. Or he may have been describing something that is already true in practice at the companies competing hardest for AI talent.

Tom’s Hardware’s reporting on Huang’s All-In appearance noted that Huang’s analogy — comparing an engineer who doesn’t use tokens to a chip designer who refuses to use EDA software and insists on drafting with paper and pencil — is actually the most clarifying frame he offered. He’s not saying tokens are good. He’s saying that in a world where compute is available and affordable, refusing to use it is a form of professional negligence.

That framing lands differently than a leaderboard. It shifts the conversation from competition to expectation. Not who uses the most, but whether you’re using enough to do your job well by the standards of the current era.

That’s a fair question to ask of a workforce in 2026. The answer, for most organizations, is that they don’t yet have the data, the benchmarks, or the cultural frameworks to answer it honestly.

Claudeonomics, for all its flaws — the gaming, the shutdown, the governance fumble — at least asked the question out loud. The more important work is figuring out how to answer it properly.
