Artificial intelligence companies love to talk about the future. Faster models. Smarter assistants. AI agents that book flights, write code, and maybe someday negotiate your internet bill while you sleep. But this week, OpenAI moved in a very different direction. Less sci-fi. More human.

The company introduced a new feature inside ChatGPT called “Trusted Contacts.” At first glance, it sounds simple. A user can designate another person who can be alerted if a conversation appears to involve serious self-harm risk or imminent danger.

That sentence alone marks a dramatic moment in AI history.

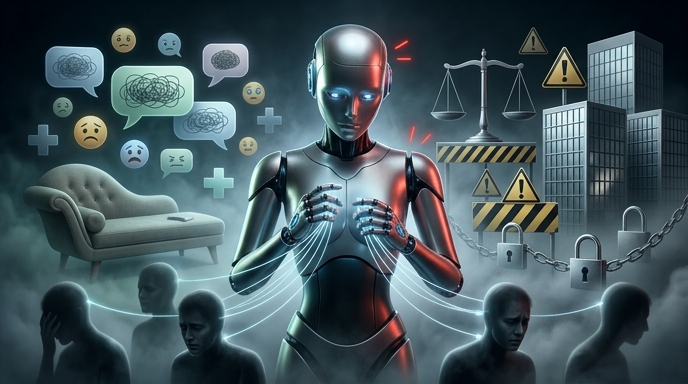

For years, AI companies positioned chatbots as tools. Helpful tools, yes. Sometimes entertaining. Occasionally bizarre. But still tools. Now the largest AI firms increasingly acknowledge something obvious that the industry spent years trying to dance around: people form emotional relationships with these systems.

And once that happens, the stakes change.

The rollout immediately sparked debate across the tech world. The Verge framed the feature as part of a broader safety effort around mental health crises. Gizmodo focused on the uncomfortable implications: if an AI can decide when a conversation becomes dangerous, then the AI is no longer just a passive machine. It becomes an active participant in human intervention.

That line matters. A lot.

The Feature Is Simple. The Implications Are Not.

Here is how Trusted Contacts works.

Users can voluntarily add a trusted person inside ChatGPT settings. If OpenAI’s systems detect discussions involving severe self-harm risk or immediate physical danger, ChatGPT may encourage the user to contact help. In some cases, the system may also suggest notifying the trusted contact.

Importantly, OpenAI says users remain in control. The feature is opt-in. It does not automatically alert police. It does not secretly notify family members without setup. And according to OpenAI, the user still decides whether to send the alert.

That distinction matters because automated escalation systems have become deeply controversial in tech. Social platforms and mental health apps have struggled for years with false positives, privacy concerns, and accidental interventions that sometimes make situations worse instead of better.

OpenAI appears aware of that minefield.

Rather than creating a fully automated emergency reporting pipeline, the company built something softer. More collaborative. More human. The AI nudges. The user chooses.

Still, the feature represents a philosophical shift. ChatGPT is no longer acting purely as an information engine. It is now entering territory traditionally occupied by therapists, crisis counselors, support systems, and social infrastructure.

That is new ground for generative AI.

And frankly, the industry was heading here whether companies admitted it or not.

People Already Treat AI Like Emotional Companions

The most important fact in this entire story has nothing to do with software architecture or trust-and-safety protocols.

It is this: millions of people already talk to AI systems like they are confidants.

Some users ask ChatGPT for coding help. Others use it to brainstorm recipes or summarize PDFs. But a growing number turn to it for emotional support: to cope with loneliness, anxiety, or depression, to get relationship advice, or to talk through existential spirals at 2:13 a.m.

The industry knows this. Researchers know this. Users definitely know this.

The old Silicon Valley narrative, “it’s just a tool,” stopped matching reality a long time ago.

Large language models simulate conversation with astonishing fluency. They remember context. They mirror tone. They respond instantly. They never seem distracted. They never appear judgmental. For many people, especially isolated people, that creates emotional attachment almost automatically.

That attachment can become powerful very quickly.

OpenAI’s Trusted Contacts feature quietly acknowledges that reality. The company is effectively saying: yes, people use ChatGPT during vulnerable moments, and yes, the platform needs guardrails for those situations.

Critics argue this creates dangerous dependency. They are not entirely wrong.

An AI system cannot genuinely understand suffering. It predicts language patterns. That distinction matters even if the experience feels emotionally real to the user. But here is the uncomfortable counterpoint: humans often respond emotionally to things that simulate empathy effectively enough. Fiction does it. Movies do it. Video games do it. Pets sometimes do it across species boundaries.

AI just does it interactively.

That changes the psychological equation.

OpenAI Is Trying to Avoid the “Therapist Bot” Trap

The company’s wording around the launch feels carefully engineered. OpenAI repeatedly avoids describing ChatGPT as a therapist or crisis counselor. That is deliberate.

Because once an AI company openly markets emotional support, liability explodes.

If a chatbot gives dangerous advice during a crisis, who becomes responsible? The developer? The model provider? The user? Regulators have not fully answered those questions yet.

So OpenAI is attempting a balancing act.

On one side, the company cannot ignore the reality that users discuss deeply personal and dangerous topics with ChatGPT. On the other side, it cannot position the product as licensed medical support.

Trusted Contacts sits directly in the middle of that tension.

The feature essentially says: “We recognize these conversations happen, but we want human beings involved.”

That last part is probably the smartest design decision in the entire rollout.

Many AI safety proposals assume more automation solves problems. But fully automated emotional intervention systems could become dystopian almost instantly. Imagine a chatbot silently contacting authorities after misreading sarcasm or dark humor. Imagine abusive family members exploiting emergency alerts. Imagine governments demanding direct escalation pipelines.

OpenAI avoided that route.

At least for now.

Instead, the company built something closer to an extension of the social safety net. The AI encourages connection back into the real world.

That may sound modest. It is not modest at all.

The Privacy Questions Are Going to Get Loud

No matter how carefully OpenAI frames this feature, privacy concerns are inevitable.

And some of them are legitimate.

For Trusted Contacts to function, ChatGPT must evaluate conversations for signs of severe distress. That means users must trust OpenAI’s detection systems. They must also trust how the company handles sensitive emotional data.

That is a huge ask.

AI companies already sit on mountains of deeply personal information. Users confess fears, medical concerns, romantic issues, financial problems, and private thoughts to chatbots with startling openness. Sometimes more openly than they speak to friends.

Now imagine layering crisis detection on top of that.

Even if the system remains opt-in, users will inevitably wonder:

How does ChatGPT determine danger?

What counts as imminent risk?

Could jokes trigger intervention prompts?

Could future versions become more aggressive?

Could governments pressure companies for access?

Those concerns are not paranoia. They are rational questions about infrastructure that increasingly mediates emotional life.

OpenAI says the feature is designed with privacy protections and user control in mind. But skepticism will remain, especially because the broader tech industry has repeatedly trained users not to trust vague reassurances about sensitive data handling.

And there is another problem lurking beneath the surface.

AI systems are probabilistic. They make mistakes. Sometimes weird mistakes.

A false positive in movie recommendations is harmless. A false positive in crisis detection is not.

The Real Story Is Bigger Than One Feature

Most headlines framed Trusted Contacts as a new safety tool. That is technically correct. But the deeper story involves the evolution of AI itself.

The generative AI industry is moving from productivity software toward social infrastructure.

That transition changes everything.

When software becomes emotionally significant, people demand different standards from it. Nobody expects Excel to care about their well-being. But people increasingly expect conversational AI systems to respond responsibly during emotional crises.

That creates pressure from every direction at once.

Users want empathy.

Governments want safeguards.

Companies want scale.

Investors want growth.

Those incentives do not naturally align.

Trusted Contacts reveals how AI companies are trying to navigate that collision without fully admitting how socially powerful these systems have become.

And make no mistake, they are powerful.

Not because they are conscious. They are not. Not because they “understand” emotion in a human sense. They do not. They are powerful because humans are social creatures with brains wired for interaction. If something talks fluently, remembers context, and responds instantly, people attach meaning to it.

That dynamic is already reshaping digital life.

The industry spent years debating whether AI could pass the Turing Test. Meanwhile, millions of users skipped the philosophical debate entirely and just started talking to the machines as if they mattered.

That happened faster than almost anyone predicted.

This Could Become an Industry Standard

Once one major platform introduces a feature like Trusted Contacts, competitors rarely stay still.

Expect the rest of the AI sector to watch this rollout very carefully.

Anthropic, Google DeepMind, Meta AI, and other major players now face the same underlying problem: users increasingly rely on conversational AI during emotionally vulnerable moments.

That reality creates pressure to implement some kind of safety response system.

The alternative is politically dangerous. Imagine future congressional hearings after a widely publicized tragedy involving chatbot interactions. Lawmakers would immediately ask why companies failed to create intervention mechanisms despite knowing users formed emotional dependencies.

Trusted Contacts may therefore represent the first visible step toward a much larger category of AI-human safety infrastructure.

And that future gets complicated fast.

Will AI systems eventually contact emergency services directly?

Will governments require mandatory reporting standards?

Will insurance companies get involved?

Will enterprise AI systems monitor employee mental health signals?

Will schools deploy AI wellness monitoring for students?

Some of those possibilities sound useful. Others sound horrifying.

Probably because they are both.

Technology rarely arrives as purely utopian or purely dystopian. It usually arrives messy. Half-built. Contradictory. Useful in one context and invasive in another.

Trusted Contacts fits that pattern perfectly.

The Human Element Still Matters Most

The most interesting part of this entire story is not technological. It is cultural.

For decades, the internet trained people to communicate through platforms instead of directly with each other. Social media quantified attention. Messaging apps compressed relationships into notifications. Algorithms mediated conversation.

Now AI systems are becoming conversational participants themselves.

That is an enormous shift in human communication.

OpenAI’s new feature implicitly recognizes something many people resist admitting: emotional isolation has become one of the defining conditions of digital life. Otherwise, users would not be turning to chatbots during moments of despair in the first place.

An AI feature cannot solve that problem.

A notification cannot solve it either.

But the feature does reveal where technology companies think the world is heading. AI assistants are no longer just productivity engines sitting beside human life. They are becoming woven into emotional life itself.

That reality creates both opportunity and danger.

The optimistic interpretation is that AI can help bridge moments of crisis and reconnect people with real human support. The darker interpretation is that society may normalize emotional dependence on systems designed by corporations.

Both interpretations can be true simultaneously.

That is the uncomfortable truth underneath all of this.

Trusted Contacts is not just another product update. It is a signal flare from the future of AI-human interaction. A future where conversational systems increasingly sit inside moments once reserved only for friends, partners, counselors, family members, and communities.

And once technology enters that territory, there is no easy way back out.