AI-Only Companies Are Not AI-First Companies

The future of work is not “tools versus people.” It is workflows versus org charts.

For the last two years, “AI-first” has become one of the most overused phrases in technology. Companies say they are AI-first because employees use ChatGPT. Startups say they are AI-first because their product has a model inside it. Executives say they are AI-first because every team has been told to “find AI use cases.” Investors say a company is AI-first because it has a small team, high revenue per employee, and a generative AI wrapper somewhere in the stack.

But that is not what AI-first really means.

And it is definitely not what “AI-only” means.

The distinction matters because the next phase of competition will not be won by companies that merely subscribe to AI tools. It will be won by companies that redesign how work happens. That is where the difference between AI-first and AI-only becomes important.

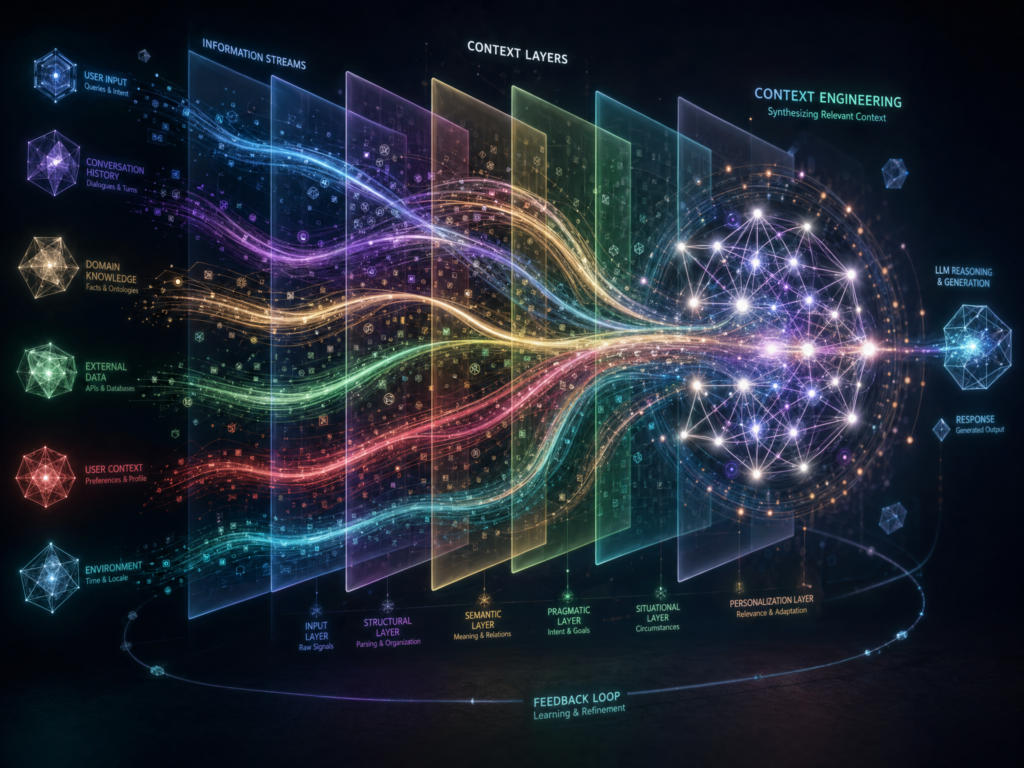

An AI-first company designs its products, workflows, hiring, management systems, operating metrics, and resource-allocation decisions around AI as a core operating layer. AI is not a productivity add-on. It is part of the company’s default way of thinking.

An AI-only workflow, by contrast, removes humans from the execution loop. Humans may still set the goals, values, constraints, risk thresholds, escalation rules, and accountability structures. But the workflow itself does not pause for a person to review, approve, edit, or manually advance the work.

An AI-only company is the more radical version: a firm composed largely, or perhaps entirely, of coordinated AI agents rather than traditional human employees. That version is still mostly speculative. BCG, in its article “Why CEOs Need to Prepare for AI-Only Rivals”, explicitly says that “a fully autonomous, AI-only firm is not yet a reality,” even while arguing that CEOs should prepare for the possibility.

That caveat is essential. Fully AI-only companies do not yet exist at meaningful scale in the way the term is sometimes used online. But AI-only workflows are becoming plausible. And the best way to understand the future is not to ask whether all companies will become humanless. They will not, at least not soon. The better question is:

Which parts of the company still need humans inside the operating loop, which need humans outside the loop, and which should not be automated at all?

AI-first is the transition strategy. AI-only is the architectural endgame some people are now imagining.

The Spectrum: AI-Enabled, AI-First, Agent-Operated, AI-Only

The language around AI companies is messy because several different ideas are being collapsed into one phrase.

A useful spectrum looks like this:

AI-enabled: Humans do the work. AI helps.

AI-first: Workflows are redesigned around AI as the default operating layer.

Agent-operated: Humans supervise teams of AI agents that execute tasks across systems.

AI-only workflow: AI executes a workflow end-to-end, while humans define boundaries and handle exceptions.

AI-only company: A mostly speculative future firm in which networks of specialized agents coordinate most or all company functions with few or no traditional employees.

This spectrum prevents a common mistake: treating a company that uses AI heavily as proof that AI-only companies already exist.

They do not.

Cursor, Lovable, Mercor, Lemonade, Shopify, Duolingo, Microsoft, and other examples all tell us something important about the shift. But none of them should be casually described as a fully AI-only company. They have humans. They have leaders. They have governance needs. They have customers. They have legal obligations. They make strategic decisions. They need judgment, trust, and accountability.

What they show is different: the company is being decomposed into workflows, and more of those workflows are becoming machine-executable.

That is the real story.

AI-First Is Not “Everyone Uses ChatGPT”

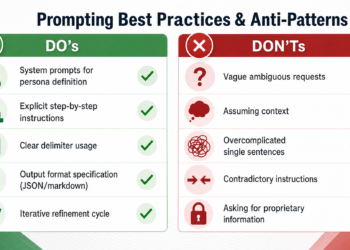

The laziest definition of AI-first is also the most common: “Our employees use AI tools.”

That is not enough.

A company is not AI-first because it has a Slack channel full of prompt tips. It is not AI-first because the marketing team uses a writing assistant. It is not AI-first because engineers use code completion. Those are useful behaviors, but they are closer to AI-enabled than AI-first.

The real test is whether AI changes how the company allocates resources.

Does AI affect hiring requests? Does it affect product roadmaps? Does it affect what work gets funded? Does it change how teams measure productivity? Does it change who owns decisions? Does it change whether a workflow needs to exist in its current form?

That is why Shopify has become one of the clearest examples of an AI-first management doctrine. In a memo later shared publicly, Shopify CEO Tobi Lütke wrote that “reflexive AI usage is now a baseline expectation” at the company. The memo, available at Tobi Lütke’s Shopify blog, also linked AI usage to resource allocation: teams were expected to show why AI could not solve a problem before asking for more headcount or resources.

That is a very different idea from “please try ChatGPT.”

It makes AI part of the permission structure for hiring.

In an AI-enabled company, a manager might ask: “Can this person be more productive with AI?”

In an AI-first company, the manager asks: “Before we hire, redesign, or fund this team, have we proven that AI cannot do the work or meaningfully change the work?”

That question changes the company.

It does not make Shopify AI-only. Humans remain central to judgment, product direction, culture, customer relationships, and accountability. But it does show what AI-first really means: AI becomes part of the operating doctrine.

AI-Only Is About the Operating Loop

Daniel Schreiber, cofounder of Lemonade, gives one of the cleanest definitions of AI-only in his essay “After AI-First Comes AI-Only”. His argument is not that companies will instantly become humanless. It is that the meaningful shift is from AI helping humans do work to AI executing entire workflows without a human inside the loop.

That phrase — inside the loop — is the key.

In an AI-first company, humans often remain in the operating loop. They approve, review, prioritize, edit, escalate, correct, and decide. The AI may draft the code, summarize the customer issue, write the report, score the lead, or produce the campaign variants, but the workflow still waits for a person.

In an AI-only workflow, the system keeps moving.

Humans define the purpose. Humans decide the acceptable level of risk. Humans determine escalation paths. Humans audit the outputs. Humans are accountable when things go wrong. But humans are not required for every step of execution.

That is the fundamental difference.

AI-first asks: “How can AI help the person in this role?”

AI-only asks: “Does this role or workflow need to exist in this form at all?”

That does not mean humans disappear from the universe. It means they move to a different layer of the system. They become designers, governors, auditors, exception handlers, and accountable owners.

The strongest way to frame AI-only is not “companies with no humans.” It is “workflows where humans are no longer part of routine execution.”

AI-First Gives Productivity Gains. AI-Only Seeks Step-Function Redesign.

One reason AI-only is becoming an attractive concept is that AI-first transformation can produce real but bounded gains.

A company can deploy copilots everywhere and perhaps become 20%, 30%, or even 50% more productive in some functions. Those gains matter. They can change margins, speed, and headcount planning. But they may not change the shape of the process.

If the old workflow had ten steps, five approvals, three handoffs, and two weekly meetings, adding AI to each step may make every participant faster while preserving the underlying bureaucracy.

That is the “horseless carriage” problem.

Early cars looked like carriages without horses because people initially mapped new technology onto old mental models. Schreiber uses a similar analogy in his AI-only argument: if you want to unleash the full power of the new technology, stop designing around the horse.

That is the central tension. AI-first companies may become much more productive while still carrying the organizational logic of the pre-AI firm. AI-only thinking asks whether the workflow should be rebuilt from zero.

Take software development. An AI-first engineering team may use AI to write code, generate tests, review pull requests, summarize tickets, and debug errors. That is useful. But the team may still preserve the same sprint rituals, managerial approvals, QA bottlenecks, deployment gates, and backlog structures.

An AI-only software QA workflow would look different. The agent would monitor code changes, generate tests, run them, classify failures, open issues, propose patches, validate fixes, and escalate only when uncertainty crosses a threshold. Humans would design the testing philosophy, review system performance, and handle edge cases. They would not manually sit inside every QA cycle.

That is not just faster work. It is a different workflow architecture.
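The control flow of that QA loop can be sketched in a few lines. This is a minimal, hypothetical illustration: `TestResult`, the agent's `confidence` score, and the 0.8 threshold are assumptions standing in for whatever classifier and policy a real team would use. The point is only the shape of the loop: the agent acts on confident classifications without waiting, and routes uncertain ones to a person.

```python
from dataclasses import dataclass


@dataclass
class TestResult:
    """Hypothetical record of one failing test, as classified by an agent."""
    test_name: str
    failure_class: str   # e.g. "flaky", "regression", "env" (illustrative labels)
    confidence: float    # agent's confidence in its classification, 0.0 to 1.0


# Assumed policy value: humans set the threshold; the agent does not.
ESCALATION_THRESHOLD = 0.8


def route(results: list[TestResult]) -> tuple[list[str], list[str]]:
    """Split failures into ones the agent handles and ones a human reviews."""
    auto_handled: list[str] = []
    escalated: list[str] = []
    for r in results:
        if r.confidence >= ESCALATION_THRESHOLD:
            # Confident: the agent opens the issue or proposes a patch itself.
            auto_handled.append(r.test_name)
        else:
            # Uncertainty crossed the threshold: pull a person into the loop.
            escalated.append(r.test_name)
    return auto_handled, escalated


if __name__ == "__main__":
    batch = [
        TestResult("test_checkout_total", "regression", 0.93),
        TestResult("test_login_timeout", "flaky", 0.55),
        TestResult("test_export_csv", "env", 0.81),
    ]
    handled, needs_human = route(batch)
    print("agent handles:", handled)
    print("escalate to human:", needs_human)
```

Humans still own the threshold and review how often escalations turn out to be real problems; they just do not sit inside each cycle.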

The Company Is Becoming a Bundle of Workflows

The most important analytical move is to separate company from workflow.

A fully AI-only company is speculative. But AI-only workflows are already easier to imagine in areas such as:

- Software QA

- Lead qualification

- Sales research

- Internal IT support

- Data enrichment

- Compliance monitoring

- Campaign testing

- Report generation

- Invoice reconciliation

- Customer support triage

- Knowledge-base maintenance

- Vendor onboarding checks

- Security alert classification

These are not all equally safe or equally ready. But they share a pattern: the work is repeatable, measurable, data-rich, and often bounded by clear escalation criteria.

The future may not arrive as “the first AI-only company.” It may arrive as ordinary companies converting one workflow after another into AI-only execution systems.

That matters because the phrase “AI-only company” can become misleading hype. A startup may be human-led in strategy, legal, fundraising, brand, product taste, and customer relationships while being AI-only in internal support, QA, reporting, and outbound lead research.

That company is not AI-only. It is a human-led company with AI-only workflows.

This distinction is especially important for incumbents. If you are a bank, insurer, retailer, hospital system, university, logistics company, or software firm, you do not need to become an AI-only company to be affected by the shift. You only need a competitor to make one expensive workflow radically cheaper.

That is where the threat begins.

Why BCG Thinks CEOs Should Prepare for AI-Only Rivals

BCG’s “Why CEOs Need to Prepare for AI-Only Rivals” is useful because it avoids declaring that AI-only firms already exist. Instead, it argues that CEOs should prepare for a plausible future in which networks of specialized agents coordinate business functions with very limited human labor.

The competitive advantages BCG highlights are straightforward: lower cost, higher uptime, and faster adaptation. An AI-only rival would not operate on human schedules. It would not need traditional departmental handoffs. It could potentially run experiments continuously, update workflows quickly, and scale execution without hiring linearly.

But the article also points to the right incumbent response: become AI-first now.

That is the strategic bridge. AI-first is not the same as AI-only, but it may be the only credible way for existing companies to prepare for AI-only competition. Companies that wait until autonomous competitors arrive will have too much organizational debt. Their data will be fragmented. Their workflows will be undocumented. Their approvals will be political. Their governance will be reactive. Their employees will be unprepared to manage agentic systems.

AI-only competition would attack the cost structure of the traditional company. But incumbents are not helpless. They have customer trust, brand, proprietary data, distribution, compliance experience, domain expertise, capital, and existing relationships.

The question is whether they can convert those advantages into AI-first operating models before new entrants convert AI-only workflows into structural cost advantages.

The Org Chart Becomes an Orchestration Chart

Traditional management asks: “Who owns this?”

AI-first management asks: “Which human-agent team owns this?”

AI-only management asks: “Which agent system owns this, and which human is accountable if it fails?”

That is a profound shift in the unit of management.

The org chart was built for human labor. It shows reporting lines, authority, headcount, departments, and managerial scope. But agentic work does not fit neatly into traditional hierarchy. An AI agent may touch customer data, pricing systems, CRM records, internal knowledge bases, product analytics, and code repositories in a single workflow.

That creates a new management stack:

- Agent identity

- Agent permissions

- Tool access

- Memory and context controls

- Data boundaries

- Escalation thresholds

- Audit logs

- Evaluation systems

- Human owners

- Kill switches

- Compliance review

- Lifecycle management

- Versioning

- Incident response

- Performance dashboards
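Several items in that stack (identity, permissions, tool access, versioning, audit logs, human owners, kill switches) can be pictured as one record per agent. The sketch below is hypothetical: `AgentRecord`, its fields, and the tool names are illustrative assumptions, not any vendor's schema. It shows one design choice worth copying: every tool request is logged, including denied ones, and the log names an accountable human.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentRecord:
    """Hypothetical governance record for one agent; field names are illustrative."""
    agent_id: str             # agent identity
    human_owner: str          # the accountable person, never the agent itself
    version: str              # supports versioning and lifecycle management
    allowed_tools: set[str]   # permissions and tool access
    enabled: bool = True      # kill switch state
    audit_log: list[dict] = field(default_factory=list)

    def request_tool(self, tool: str, reason: str) -> bool:
        """Grant a tool only if the agent is enabled and permitted; log every attempt."""
        allowed = self.enabled and tool in self.allowed_tools
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent_id,
            "version": self.version,
            "owner": self.human_owner,
            "tool": tool,
            "reason": reason,
            "allowed": allowed,
        })
        return allowed

    def kill(self, by: str) -> None:
        """Kill switch: deny all further tool requests and record who pulled it."""
        self.enabled = False
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent_id,
            "event": "killed",
            "by": by,
        })


if __name__ == "__main__":
    agent = AgentRecord("crm-enricher-01", "owner@example.com", "1.4.0",
                        {"crm.read", "crm.update"})
    print(agent.request_tool("crm.update", "fill missing industry field"))
    print(agent.request_tool("billing.refund", "customer asked"))  # not permitted
    agent.kill(by="owner@example.com")
    print(agent.request_tool("crm.read", "routine enrichment"))    # switched off
    print(len(agent.audit_log), "audit entries")
```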

This is why the future firm may be less like a pyramid and more like an orchestration layer.

A human manager may oversee a small number of people and a much larger number of agents. Some agents may be personal assistants. Others may be workflow agents. Others may be compliance agents, evaluation agents, research agents, test agents, sales agents, or customer-support agents.

The job of management shifts from assigning tasks to designing systems of execution.

That is also why AI-first leadership is harder than buying software. The bottleneck is not access to models. The bottleneck is organizational redesign.

The AI Value Gap Is Really a Redesign Gap

BCG’s “The Widening AI Value Gap” argues that companies are separating into those that generate meaningful value from AI and those that remain stuck with limited returns. The key lesson is that tool adoption is not enough. The companies that pull ahead tend to connect AI investment to workflow redesign, talent, operating models, data, and leadership commitment.

That is the important point: the gap is no longer between companies that have AI and companies that do not.

Almost everyone has AI.

The gap is between companies that redesign around AI and companies that merely subscribe to AI tools.

BCG’s “How to Prepare for an AI-First Future” makes a related point: AI-first companies are rewriting the organizational playbook, sometimes generating large revenue with very small teams. But this does not mean small teams automatically win. It means the relationship between labor, software, and scale is changing.

The AI-first company is not simply a normal company with fewer people. It is a company that rethinks what work should be done by humans, what should be done by agents, and what should be eliminated entirely.

The AI-only idea pushes that logic to its extreme.

AI-Native Is Not the Same as AI-Only

AI-native companies are born in the AI era. They often embed AI into the product itself, not just internal operations. Cursor, Lovable, and Mercor are good examples of AI-native or AI-heavy companies. But AI-native does not mean AI-only.

Cursor, made by Anysphere, is a strong example of AI-native scale in software development. In its Series D announcement, Cursor said it had crossed $1 billion in annualized revenue, had more than 300 employees, and raised financing at a $29.3 billion valuation. That is extraordinary growth. It shows how powerful AI-native products can be when they attack a high-value workflow like coding.

But it is not proof that humans are unnecessary. Cursor has employees, leadership, customers, product judgment, infrastructure costs, security requirements, and competitive pressures.

Mercor is another useful example because it shows how human expertise may become fuel for agentic systems. In its Series C announcement, Mercor describes a model in which expert human knowledge can help train and evaluate agents so that tasks can be performed at scale. That is not an AI-only story in the simplistic sense. It is a hybrid story: humans provide expertise, evaluation, and judgment; agents scale execution.

This may be one of the most important labor-market shifts of the AI-first economy. Human expertise becomes training data, evaluation data, system design input, and escalation judgment.

The expert does not vanish. But the expert’s work may be transformed from doing every task to teaching, judging, improving, and governing systems that perform many tasks.

Revenue per Employee Is Not Profit per Employee

AI-native companies can produce astonishing revenue-per-employee numbers. That has fed speculation about one-person unicorns, tiny teams with massive revenue, and eventually AI-only firms.

But revenue per employee can be a misleading metric.

A company with very few employees may still have enormous compute costs, model inference costs, cloud costs, data costs, security costs, customer acquisition costs, and dependency risks. If a company depends on expensive foundation models or GPU-heavy workloads, its human payroll may shrink while its infrastructure bill explodes.

That is why AI-only economics need to be analyzed carefully.

A company can look incredibly efficient on headcount and still have weak gross margins. A startup can scale quickly and still be exposed to model-price changes, platform dependency, data-security obligations, and enterprise procurement friction.
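A toy calculation makes the gap between headcount efficiency and profitability concrete. The two companies and all their numbers below are invented for illustration, and the profit figure is deliberately simplified: it subtracts only cost of revenue (standing in for compute, inference, cloud, and data costs) and payroll.

```python
from dataclasses import dataclass


@dataclass
class Company:
    name: str
    revenue: float
    employees: int
    cost_of_revenue: float   # stand-in for compute, inference, cloud, and data costs
    payroll: float

    def revenue_per_employee(self) -> float:
        return self.revenue / self.employees

    def gross_margin(self) -> float:
        return (self.revenue - self.cost_of_revenue) / self.revenue

    def profit_after_payroll(self) -> float:
        # Simplified: ignores sales, marketing, security, and every other cost.
        return self.revenue - self.cost_of_revenue - self.payroll


if __name__ == "__main__":
    # Invented numbers for illustration only.
    lean = Company("lean, compute-heavy", 100e6, 50, 70e6, 10e6)
    staffed = Company("larger team, cheap to serve", 100e6, 400, 20e6, 60e6)
    for c in (lean, staffed):
        print(f"{c.name}: ${c.revenue_per_employee():,.0f} per employee, "
              f"{c.gross_margin():.0%} gross margin, "
              f"${c.profit_after_payroll():,.0f} after payroll")
```

Both invented companies clear the same $20 million after payroll, yet one reports eight times the revenue per employee, because its costs sit in the infrastructure bill rather than in salaries.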

The lesson is not “humans are irrelevant.” The lesson is that labor is no longer the only scaling constraint.

The cost structure of the firm is being rewritten. Some spending shifts from salaries to compute. Some management shifts from supervising people to supervising agents. Some risk shifts from human error to system error. Some bottlenecks shift from hiring to evaluation, governance, data access, and model reliability.

An AI-only workflow is not free just because no person touched it.

The Governance Problem: Who Is Responsible When No Human Clicked Approve?

AI-only workflows are powerful precisely because they remove humans from routine execution. But that is also what makes them risky.

If an AI-only workflow qualifies a lead incorrectly, the damage may be small. If it generates a flawed internal report, the damage may be manageable. If it denies an insurance claim, approves a loan, changes a price, flags fraud, terminates a customer account, recommends medical treatment, screens a job candidate, or generates a legal filing, the stakes are much higher.

The accountability question becomes unavoidable:

Who is responsible when no human clicked approve?

The answer cannot be “the agent.”

Legal systems, customers, regulators, employees, and courts will still look for accountable humans and institutions.

That is why AI-only workflows will collide with regulated industries first. Insurance, healthcare, banking, education, employment, legal services, and public-sector services often require explainability, appeal paths, audit trails, procedural fairness, and human accountability.

The EU AI Act is especially relevant here. Article 14, available through the Artificial Intelligence Act site, requires high-risk AI systems to be designed so that natural persons can effectively oversee them while they are in use, and Article 26 requires deployers to assign that oversight to people with the necessary competence, training, authority, and support. The full regulation is available through EUR-Lex.

That requirement does not make AI-only workflows impossible. But it does mean companies cannot simply remove humans from high-risk decisions and declare victory.

Likewise, NIST’s generative AI risk work, including NIST AI 600-1, emphasizes identifying, measuring, and managing risks specific to generative AI. The practical implication is clear: the more autonomous a workflow becomes, the more disciplined its governance must be.

AI-only execution requires more human accountability, not less.

AI-Only Will Arrive First Where the Risk Is Bounded

Because of governance, AI-only will likely arrive unevenly.

The safest early zones are lower-risk, back-office, internal, or reversible workflows. These are places where errors can be detected, rolled back, corrected, or escalated before major harm occurs.

Examples include:

- Internal ticket routing

- Data normalization

- Basic report generation

- Software test generation

- Internal knowledge retrieval

- Vendor-document review

- Sales-account research

- Marketing experiment setup

- CRM enrichment

- Meeting-summary distribution

- Invoice matching

- Security alert triage

The harder zones are high-stakes, external, regulated, or irreversible workflows.

Examples include:

- Medical diagnosis

- Credit approval

- Insurance claim denial

- Employment screening

- Legal advice

- Student evaluation

- Public-benefits eligibility

- Account termination

- Dynamic pricing in sensitive markets

- Law-enforcement or surveillance decisions

These workflows may still use AI extensively. They may become AI-first. They may become agent-operated. But AI-only execution will require stronger oversight, auditability, appeal mechanisms, and legal clarity.

The future will not be uniformly AI-only. It will be a map of different human-control levels.

Some workflows will keep humans inside the loop. Some will move humans outside the loop. Some will require humans above the loop as accountable supervisors. Some should not be automated at all.
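One way to make that map explicit is a rule that assigns each workflow a control level from a few attributes. This is a hypothetical sketch, not a standard or a regulatory test: the attributes (`high_stakes`, `regulated`, `reversible`), the ordering of the rules, and the attribute values given to the example workflows are all assumptions a real organization would need to set for itself.

```python
from dataclasses import dataclass
from enum import Enum


class HumanControl(Enum):
    INSIDE = "humans inside the loop: a person approves each decision"
    ABOVE = "humans above the loop: accountable supervisors, audits, appeals"
    OUTSIDE = "humans outside the loop: monitored execution, exceptions only"
    NOT_AUTOMATED = "should not be automated"


@dataclass
class Workflow:
    name: str
    high_stakes: bool
    regulated: bool
    reversible: bool
    do_not_automate: bool = False  # a leadership decision, not derived from the flags


def classify(w: Workflow) -> HumanControl:
    """Assumed rules, checked in order from most to least restrictive."""
    if w.do_not_automate:
        return HumanControl.NOT_AUTOMATED
    if w.high_stakes and not w.reversible:
        return HumanControl.INSIDE
    if w.high_stakes or w.regulated:
        return HumanControl.ABOVE
    return HumanControl.OUTSIDE


if __name__ == "__main__":
    # Attribute values are illustrative assumptions, not findings.
    for wf in [
        Workflow("Internal ticket routing", high_stakes=False, regulated=False, reversible=True),
        Workflow("Credit approval", high_stakes=True, regulated=True, reversible=False),
        Workflow("Dynamic pricing in sensitive markets", high_stakes=False, regulated=True, reversible=True),
    ]:
        print(f"{wf.name}: {classify(wf).value}")
```

The useful part is less the specific rules than the fact that they are written down, so the choice of human-control level for each workflow can be reviewed and challenged.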

Duolingo and the Backlash Problem

AI-first mandates can sound empowering or threatening depending on how they are framed.

Duolingo became a useful case study after announcing an AI-first shift involving contractors, hiring, and performance processes. The company said it would stop using contractors for work AI could handle, factor AI into hiring, and approve headcount only where teams could not automate the work. It later clarified that employees should not be forced to use AI “for AI’s sake” and that performance should be judged by doing the job well, not merely by using AI.

That clarification matters.

AI-first companies need to measure outcomes, not theatrical AI usage.

If employees believe they are being evaluated on whether they used AI rather than whether they did better work, they will game the metric. They will paste AI into processes where it does not belong. They will optimize for compliance theater instead of productivity, quality, or customer value.

This is one of the biggest cultural risks of the AI-first transition.

A serious AI-first company does not say, “Use AI everywhere.” It says, “Redesign work around the best available combination of humans, agents, software, and judgment.”

Sometimes that means using AI. Sometimes it means not using AI. Sometimes it means deleting a process. Sometimes it means keeping a human exactly where they are because trust, empathy, taste, accountability, or legal responsibility matter.

Mandating AI adoption is easier than designing fair AI metrics.

AI-First Is a Culture of Adoption. AI-Only Is a Culture of Deletion.

The cultural difference between AI-first and AI-only is enormous.

AI-first culture says:

Learn the tools. Use AI before asking for resources. Build AI into product thinking. Assume every workflow can be improved. Make AI fluency part of the job.

AI-only culture says:

Redesign the process so the default execution layer is machine-run. Remove unnecessary handoffs. Remove waiting. Remove approvals that do not add judgment. Remove roles that exist only because the old workflow required manual labor.

AI-first is a culture of adoption.

AI-only is a culture of deletion.

That deletion can be productive, but it can also be brutal. It raises workforce questions, morale questions, inequality questions, and social questions. If AI-only workflows expand rapidly, who benefits from the efficiency? Customers? Shareholders? Founders? Remaining employees? Society? Or only the owners of the automated system?

Schreiber’s essay is valuable partly because it does not ignore this problem. The AI-only future is not merely an efficiency story. It is also a labor-market story.

A responsible article about AI-only cannot stop at “companies will be more efficient.” It must ask who is displaced, who is empowered, who is accountable, and what institutions are needed when the operating logic of the firm changes.

The New Hiring Question: Person, Agent, or System?

AI-first changes hiring. AI-only changes headcount logic.

The traditional hiring question is: “Do we need another person?”

The AI-first question is: “Can AI make the current team effective enough that we do not need another person?”

The AI-only question is: “Should this workflow be performed by a person at all, or should it be executed by an agent system with a human owner?”

That is a major shift.

Shopify’s memo is important because it links AI use directly to resource requests. Duolingo’s AI-first announcement did something similar by tying headcount approval to whether work could be automated. These examples show that AI is moving from the productivity layer into the budgeting layer.

Once that happens, AI becomes part of management.

The company does not merely ask whether a tool is useful. It asks whether a role, team, budget line, or process still makes sense.

This will make some companies more efficient. It will also create anxiety, resistance, and political conflict inside organizations. Employees will worry that every workflow analysis is a headcount-reduction exercise. Managers will worry that asking for resources signals AI incompetence. Leaders will be tempted to over-automate to satisfy investors.

The healthiest AI-first companies will be clear about the goal: better outcomes, not performative austerity.

The worst ones will turn AI into a blunt instrument for cutting labor without redesigning work responsibly.

AI-Only as Hype

The phrase “AI-only” will be abused.

Some companies will use it when they mean AI-enabled. Some will use it when they mean AI-first. Some will use it when they mean AI-native. Some will use it because it sounds provocative in a fundraising deck.

A job posting for an “AI-only role” does not prove the company has replaced humans. In many cases, it means the company wants humans to build, configure, manage, evaluate, or supervise agents. That is not AI-only. That is humans building agentic systems.

The safest language is this:

AI-only workflows are emerging.

Fully AI-only companies remain speculative.

That formulation captures the reality without flattening the distinction.

It also prevents another common mistake: treating every lean AI-native startup as evidence that humans are obsolete. Small teams can do more with AI. That is real. But companies still need product taste, strategy, trust, governance, customer understanding, regulatory compliance, security judgment, and capital allocation.

The human role changes, and it changes unevenly across functions. It does not simply vanish.

What Incumbents Should Actually Do

For incumbents, the practical response is not to declare themselves AI-only. It is to become genuinely AI-first.

That means starting with workflows, not tools.

A useful executive agenda would include five moves.

First, map the company’s major workflows. Do not start with departments. Start with value streams: customer acquisition, onboarding, support, claims, fulfillment, reconciliation, software delivery, compliance review, procurement, and reporting.

Second, classify each workflow by required human control. Which workflows need humans inside the loop? Which can move humans outside the loop? Which require human oversight only by exception? Which should not be automated because the risks are too high?

Third, build the agent governance layer before autonomy scales. Agent identity, permissions, audit logs, tool access, escalation paths, and kill switches should not be afterthoughts.

Fourth, redesign metrics. Do not measure AI usage. Measure cycle time, quality, customer satisfaction, risk reduction, cost to serve, revenue conversion, employee leverage, and error rates.

Fifth, protect the human domains that matter most. Judgment, trust, empathy, taste, ethics, mission-setting, stakeholder management, and accountability become more valuable when execution becomes more automated.
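The fourth move, measuring outcomes rather than AI usage, can be sketched as a function over per-run records. `Run` and its fields are hypothetical; a real company would pull these from its own ticketing, finance, and audit systems. Whether AI was used is recorded on each run but deliberately left out of the scorecard.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Run:
    """Hypothetical record of one completed unit of work."""
    minutes: float     # cycle time from request to completion
    had_error: bool    # error found later by audit or by a customer
    escalated: bool    # handed to a human
    cost: float        # total cost to serve: compute plus human time
    used_ai: bool      # recorded, but deliberately not scored


def outcome_metrics(runs: list[Run]) -> dict[str, float]:
    """Score a workflow on outcomes; AI usage is intentionally absent."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    return {
        "median_cycle_minutes": median(r.minutes for r in runs),
        "error_rate": sum(r.had_error for r in runs) / n,
        "escalation_rate": sum(r.escalated for r in runs) / n,
        "cost_per_run": sum(r.cost for r in runs) / n,
    }


if __name__ == "__main__":
    runs = [
        Run(10, False, False, 2.0, True),
        Run(30, True, True, 8.0, True),
        Run(20, False, False, 2.0, False),
        Run(40, False, True, 4.0, True),
    ]
    print(outcome_metrics(runs))
```

Leaving `used_ai` out of the scorecard is the design choice: a team that gets better results without AI should not score worse.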

The incumbents that win will not be the ones with the most AI pilots. They will be the ones that combine proprietary data, trusted brands, domain expertise, distribution, governance, and AI-first operating discipline.

What Remains Human?

The biggest open question is not whether AI will do more work. It will.

The bigger question is what remains uniquely human when more execution becomes autonomous.

Likely human domains include:

- Judgment under uncertainty

- Accountability for consequences

- Taste and product intuition

- Mission and values

- Trust-building

- Empathy

- Regulatory responsibility

- Ethical tradeoffs

- Narrative and meaning

- Stakeholder management

- Deciding what should not be automated

The irony of AI-only workflows is that they may make human judgment more important, not less. When machines execute routine work, humans spend less time moving tasks forward and more time deciding what the system should optimize for, where it should stop, and what values it should not violate.

That is a different kind of work.

It is also a different kind of company.

The Future Company Is Not Humanless. It Is Human-Placed.

The most likely future is not a world of fully autonomous corporations with no human accountability. That is still speculative, legally unclear, and operationally immature.

The more likely future is a world where companies become increasingly precise about where humans belong.

Humans inside the loop for high-stakes judgment.

Humans outside the loop for monitored execution.

Humans above the loop for governance and accountability.

Humans nowhere near the loop when the work is low-risk, repetitive, measurable, and better handled by machines.

That is the real battle.

Not AI tools versus people.

Workflows versus org charts.

AI-first companies redesign work around AI. AI-only workflows remove humans from routine execution. AI-only companies, if they arrive, will be the extreme endpoint of that logic — networks of agents coordinating work that once required departments, managers, meetings, and headcount.

But the lesson for leaders today is not to chase the phrase “AI-only.” It is to ask a harder question about every workflow in the company:

Does a human need to be inside this loop?

The future company may not be humanless.

But it will be increasingly designed around deciding where humans matter most.