How ChatGPT Images 2.0 rewrote the leaderboard, why the runners-up still matter, and what the numbers actually tell you about which model to use for which job.

Why this guide exists

For most of 2024 and the first three quarters of 2025, picking an “AI image generator” was mostly a vibes exercise. You’d read a listicle, pick a model whose sample gallery looked nice, and cope with whatever it couldn’t do. That era is over.

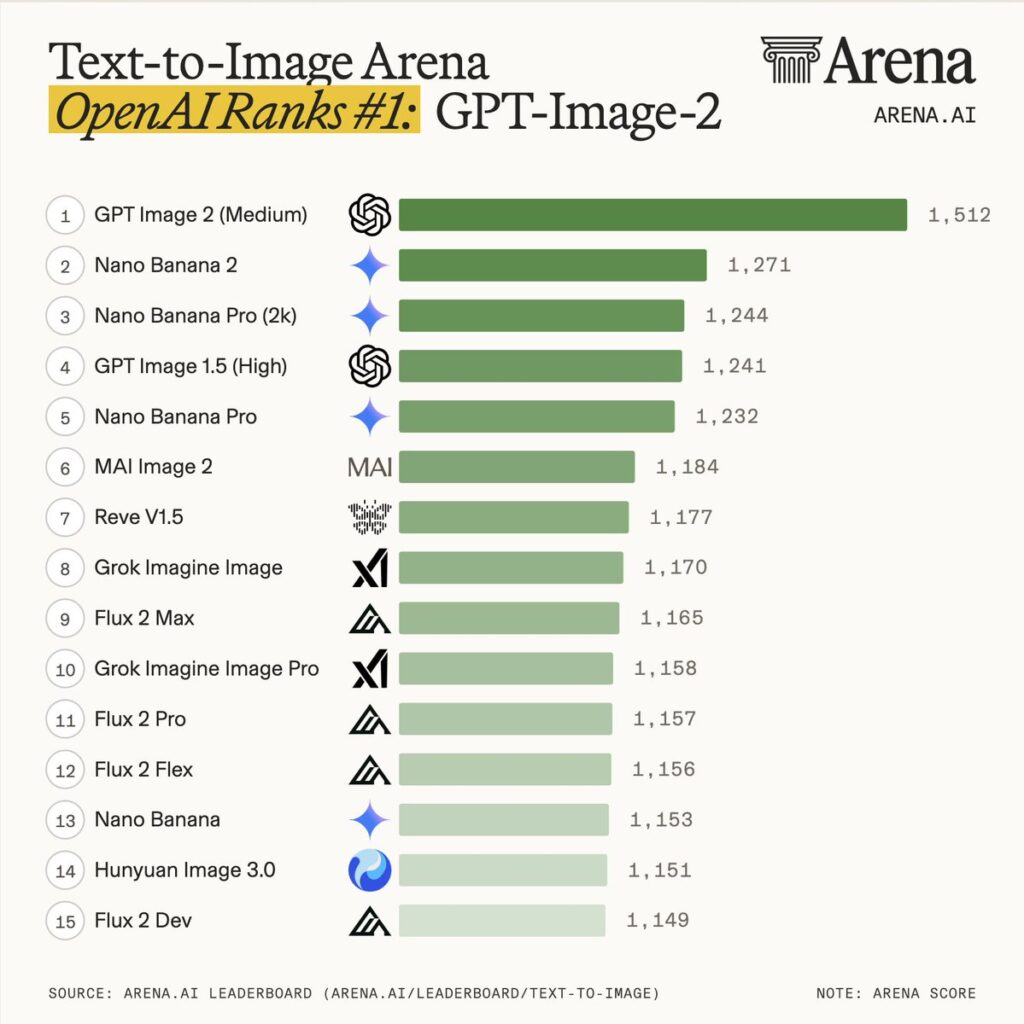

Text-to-image has matured into something measurable. Two independent, blind-voted, Elo-based arenas — the Arena.ai Text-to-Image Leaderboard and the Artificial Analysis Image Arena — now collect millions of human preference votes per quarter and rank models the same way chess players are ranked. As of April 2026, the Arena.ai text-to-image board alone has aggregated over 4.89 million votes across 55 models, with a parallel Image Edit Arena sitting on 25.77 million edit votes. Those numbers matter because they translate “which model is best?” from a taste question into a statistical one.

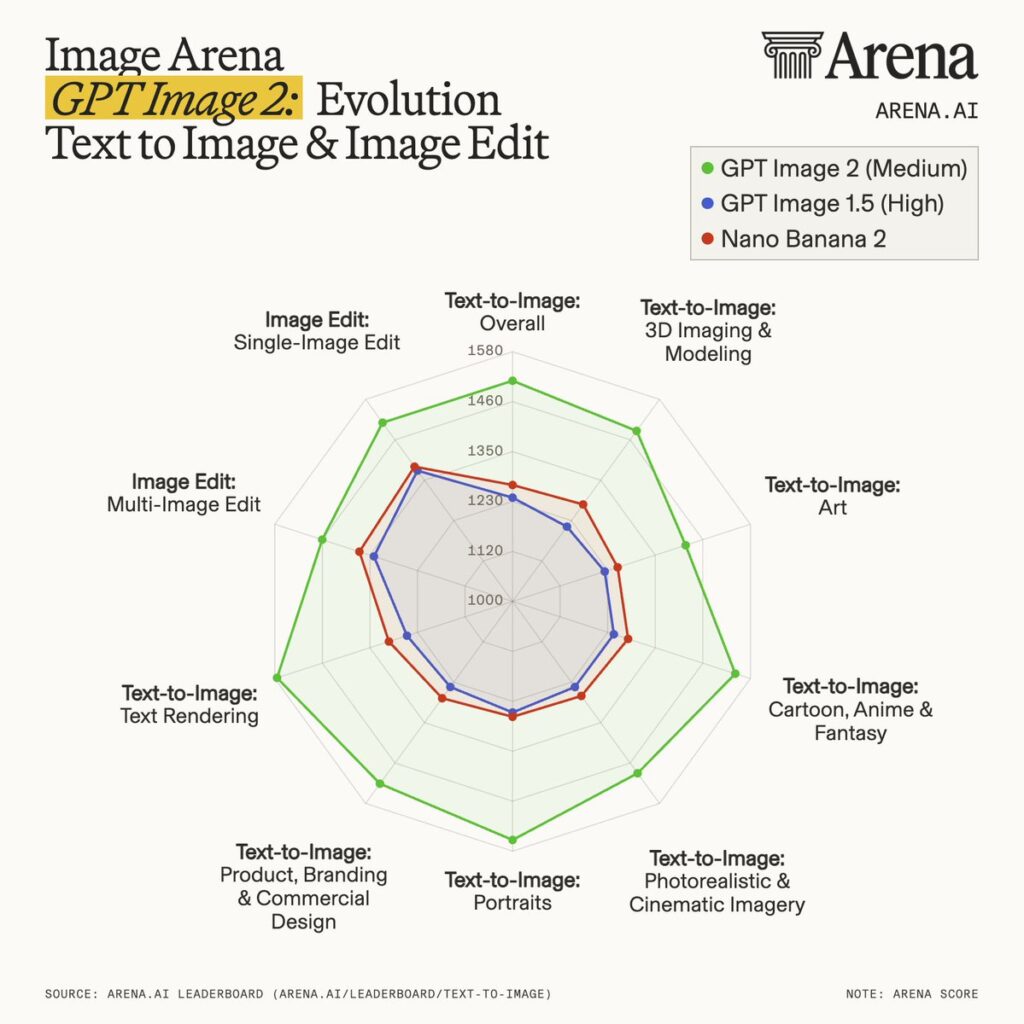

And the statistical answer, as of this writing, is unambiguous: OpenAI’s newly released ChatGPT Images 2.0 (API identifier gpt-image-2) is not just winning; it’s winning by a margin the leaderboard has never seen before. On Arena.ai, gpt-image-2 sits at an Elo of 1512±8 on the Text-to-Image board and 1513±7 on the Single-Image Edit board, ranked #1 on both. It leads the second-place model by roughly 242 Elo points on text-to-image and roughly 120 points on image edit (Arena.ai). For context, the previous state of the art, GPT Image 1.5, sat about 30 points above its nearest rival. A 240-point gap isn’t iteration. It’s a generational reset.

This guide is the long version of what those numbers mean. We’re going to rank and deeply profile the top five AI image generators of 2026, using Elo as the spine but going well beyond it — into architecture, pricing, real production workflows, licensing traps, and the specific prompts each model still gets wrong. If you’re choosing tooling for a team, a product, or a pipeline, this is meant to save you weeks of A/B testing.

A caveat up front: the AI image space still moves in quarters, not years. Numbers cited here are current as of April 22, 2026, sourced from the live leaderboards and corroborated against OpenAI’s official launch post, Microsoft Foundry’s GPT-image-2 integration blog, and VentureBeat’s launch coverage. The gaps we describe are real, but expect them to compress as 2026 progresses.

How we ranked these models

Every ranking needs a methodology section, and most of them are garbage. Here’s ours in plain English.

Primary signal: Arena Elo. The Arena.ai leaderboard is the closest thing the industry has to a neutral referee. Users enter a prompt, get two images generated by anonymized models, and pick the one they prefer. Over millions of such head-to-heads, an Elo rating emerges — a number that behaves roughly like a chess rating. A 10-point gap is meaningful. A 50-point gap is substantial. A 100-point gap is decisive. A 240-point gap, like the one gpt-image-2 currently holds, is the kind of thing that only happens when somebody ships a categorically better model.
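To make those gap sizes concrete, it helps to convert them into expected win rates. The sketch below assumes the standard Elo logistic curve with a 400-point scale and ignores ties; Arena.ai’s exact rating procedure is not described in this guide, so treat the outputs as approximations rather than the leaderboard’s own math.

```python
def expected_win_rate(elo_gap: float) -> float:
    """Chance the higher-rated model wins one blind vote.

    Assumes the classic Elo logistic curve (400-point scale, no ties).
    """
    return 1.0 / (1.0 + 10.0 ** (-elo_gap / 400.0))


# The four gap sizes named in the paragraph above.
for gap in (10, 50, 100, 240):
    print(f"{gap:>3}-point gap -> {expected_win_rate(gap):.1%} expected win rate")

# Prints:
#  10-point gap -> 51.4% expected win rate
#  50-point gap -> 57.1% expected win rate
# 100-point gap -> 64.0% expected win rate
# 240-point gap -> 79.9% expected win rate
```

Under that assumption, a 10-point lead is barely better than a coin flip per vote, while a 240-point lead means winning about four votes in five.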

Secondary signal: Artificial Analysis. Artificial Analysis runs a parallel arena with a different voter population, slightly different prompt mix, and occasionally wildly different rankings. We cross-reference. Where the two arenas disagree, we explain why.

Tertiary signals. We also pulled from the JAI Portal leaderboard, the WaveSpeedAI LM Arena analysis, and workflow-level reviews like iMini AI’s benchmark for specific real-world use cases.

What we’re not using: automated metrics like FID or CLIP score. They correlate poorly with human preference. They’re useful for research papers; they’re misleading for product decisions.

Preliminary Elo warning. The gpt-image-2 Elo of 1512 is marked “Preliminary” on Arena.ai because it only has 15,127 votes at the time of this writing, versus 95,000+ for GPT Image 1.5. Confidence intervals will tighten, and the number may drift — but the gap is so large that it would take an extraordinary regression to drop gpt-image-2 out of the #1 position. Noted, watched, still called.

What we evaluated inside each profile: Elo (both arenas), prompt adherence on complex multi-element prompts, photorealism, text rendering (English and non-Latin scripts), image editing performance, speed, pricing, commercial license, and the shape of each model’s weaknesses. Each profile ends with a “use this if…” verdict, because specs only matter when translated into a decision.

#1 — ChatGPT Images 2.0 (OpenAI gpt-image-2)

Arena.ai Text-to-Image Elo: 1512±8 (rank #1, preliminary) — source

Arena.ai Image Edit Elo: 1513±7 (rank #1, preliminary) — source

Released: April 21, 2026

Lead over #2: ~242 Elo points (text-to-image), ~120 Elo points (image edit)

Availability: ChatGPT (all tiers for base model), API (gpt-image-2), Microsoft Foundry

The numbers are not normal

Leaderboards in AI tend to look like tightly packed pelotons: the top ten models cluster within 50 Elo of each other, and advantages are incremental. That’s been the shape of text-to-image for eighteen months. Until last Tuesday.

When OpenAI pushed gpt-image-2 (marketed to end users as ChatGPT Images 2.0) into the arena — initially under the test alias “duct tape” — it didn’t land in the peloton. It lapped the peloton. Within its first day of public voting, it posted an Elo of 1512 on the Arena.ai Text-to-Image Leaderboard, with the next closest model — Google’s Nano Banana 2 at 1270 — sitting 242 points behind. On the Image Edit Arena, the gap to the #2 model (its own predecessor, ChatGPT Image High-Fidelity at 1393) is a more modest but still huge 120 points.

For reference: a 240-point Elo gap implies that in blind head-to-head voting, the higher-rated model wins roughly 80% of matchups. That is not a “slight edge.” That is a different weight class.

VentureBeat’s launch coverage confirmed what the Elo numbers hinted at: during its public preview stretch on LM Arena under the “duct tape” codename, OpenAI collected what Research Lead Boyuan Chen described to VentureBeat as overwhelming preference data against every major competitor. The codename bet paid off.

What changed architecturally

OpenAI’s official launch announcement frames the 2.0 release around a deceptively simple tagline: “Images are a language, not decoration. A good image does what a good sentence does — it selects, arranges, and reveals.” Behind that framing is a much more substantive shift.

Where GPT Image 1 and 1.5 were relatively conventional text-to-image systems — take a prompt, run it through a model, emit pixels — gpt-image-2 is what OpenAI is calling a “visual system”. VentureBeat reports the underlying architecture has been “revamped from scratch,” and Chen described the model as “a generalist model” or “a GPT for images” rather than a traditional diffusion network. OpenAI has not publicly confirmed whether the system is diffusion-based, autoregressive, or hybrid; Chen explicitly declined to answer that question in the launch briefing.

What OpenAI has confirmed, and what the integration docs from Microsoft Foundry corroborate, is that gpt-image-2:

- Supports up to 4K resolution (total pixel budget of 8,294,400 pixels)

- Has a December 2025 knowledge cutoff, meaning it can generate accurate depictions of recent events, products, and public figures that previous models would have hallucinated

- Includes an “intelligent routing layer” that automatically selects a generation configuration based on the prompt

- Supports up to eight coherent images from a single prompt, with enforced character and object continuity across the set

That last feature is the sleeper capability. For teams doing storyboards, comic panels, children’s books, character sheets, or serialized marketing campaigns, the ability to generate a sequence with consistent characters in one shot collapses a workflow that used to take hours of prompt stitching and inpainting into a single API call. News9’s coverage notes the ceiling for batch generation is actually up to ten images per prompt depending on tier.
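The 8,294,400-pixel budget is easier to reason about as concrete dimensions. The sketch below is plain arithmetic, not an API call: it finds the largest width and height for a given aspect ratio that stay within the budget. Which exact sizes the API accepts is not stated in this guide, so the function name and the simple round-down behavior are illustrative assumptions.

```python
import math

PIXEL_BUDGET = 8_294_400  # total pixel budget quoted above


def max_dimensions(aspect_w: int, aspect_h: int, budget: int = PIXEL_BUDGET):
    """Largest (width, height) at the given aspect ratio within the budget.

    Rounds down to whole pixels; real endpoints may impose further
    constraints (fixed size lists, multiples of some block size).
    """
    scale = math.sqrt(budget / (aspect_w * aspect_h))
    return int(aspect_w * scale), int(aspect_h * scale)


print(max_dimensions(16, 9))  # (3840, 2160), i.e. standard 4K UHD
print(max_dimensions(1, 1))   # (2880, 2880)

# Extreme ratios trade height for width inside the same budget.
wide_w, wide_h = max_dimensions(3, 1)
assert wide_w * wide_h <= PIXEL_BUDGET
```

The budget is exactly 3840 × 2160, so “4K” here refers to total pixels rather than a fixed canvas shape.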

The “Thinking” mode — and what it actually does

The headline feature on the consumer side is Thinking, a mode available to ChatGPT Plus, Pro, Team, and Enterprise users (free users get the base model without the agentic layer, per Ground News’ aggregation of launch coverage).

Thinking mode is not a vague “smarter” label. Based on the live demos OpenAI ran for VentureBeat, here’s what it actually does:

- Researches the web in real time before generating, so the model can pull current facts, current brand assets, and current visual references into an image.

- Reasons through the layout before rendering the first pixel — sketching the structural logic of an infographic, for example, before committing to pixels.

- Verifies its own outputs, catching common failures (legibility errors, spatial inconsistencies) and retrying or refining before returning the image.

- Generates multi-image sets with continuity — characters who look like the same character across eight frames, products that look like the same product from multiple angles.

In the VentureBeat briefing, OpenAI Product Lead Adele Li demonstrated Thinking by uploading a PowerPoint file containing internal product strategy and asking the model to produce a poster synthesizing its contents. The output, per the reporting, correctly extracted the document’s core data, preserved the client’s logos, and produced a professional layout in the document’s original stylistic tone. That is not what image generators did even six months ago.

The trade-off, acknowledged directly by Li, is that Thinking mode is slower than base generation. That’s not a regression — it’s the point. “Thinking” is the mode you use when correctness matters more than throughput.

Typography, the real headline

Ask any professional designer what has kept AI image generators out of production workflows, and the answer has been the same for three years: text rendering. If the model can’t spell, the model can’t ship.

OpenAI claims 99% accuracy on standard typography benchmarks for English rendering, and — more importantly — meaningful gains on non-Latin scripts. The Microsoft Foundry docs explicitly call out improved rendering in Japanese, Korean, Chinese, Hindi, and Bengali. VentureBeat’s testing included a water-cycle diagram rendered with legible Hangul labels — a task that every previous top-tier model except Seedream has failed to do reliably.

What this unlocks, in concrete workflow terms:

- Magazine covers with headlines, volume numbers, and barcode “display until” dates rendered in typographic alignment that mirrors human layout software

- Multilingual social assets where the copy is natively rendered in the target script, not translated and overlaid post-hoc

- Infographics where the labels, legends, and callouts are legible enough to ship to end users

- UI mockups and fake screenshots so convincing that OpenAI teased the launch with a “Not a Screenshot” campaign built around photorealistic fake interfaces (News9)

The last bullet point is a double-edged achievement. Reliable fake-UI generation is a blessing for product teams prototyping at speed and a gift for disinformation campaigns. OpenAI responded by pairing the launch with what it calls a “multi-layered safety stack,” including C2PA-style watermarking, classifier filtering, and real-time monitoring. Whether those mitigations survive the first adversarial news cycle is a question 2026 will answer empirically.

Image editing: the other #1

The text-to-image Elo gets the headlines, but the more consequential number may be the 1513±7 on the Image Edit Arena. Edit performance is where AI image tools earn their keep in real production pipelines — you rarely ship the first generation; you iterate. gpt-image-2 being #1 in both arenas, simultaneously, is what turns it from a “cool new model” into a default recommendation for commercial workflows.

The Foundry integration docs walk through a telling example: generate an empty subway car, then iteratively edit it to include a specific ad campaign, then iteratively refine the ads to use specific flower types. The edits preserve the scene, the lighting, and the composition; only the targeted elements change. That’s the capability Adobe’s entire business is built on, now available as an API call.

Pricing and access

Per both VentureBeat and the Microsoft Foundry pricing table:

- Free ChatGPT users: base model access (no Thinking, no multi-image)

- ChatGPT Plus / Team: Thinking mode, tool use, web search, multi-image generation

- ChatGPT Pro / Enterprise: additional “ImageGen Pro” access with higher limits

- API (gpt-image-2): supports up to 4K (beta), aspect ratios from 3:1 to 1:3

- API pricing: $8/1M input tokens, $30/1M output tokens for image; $5 / $10 for text tokens; $2 / $1.25 for cached inputs

For typical production workloads, that works out to roughly $0.04–$0.08 per image depending on resolution and complexity — comparable to GPT Image 1.5 pricing, with $2 per million tokens shaved off the output cost. Speed is reportedly roughly 2× faster than GPT Image 1.5 on equivalent tasks.
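The per-image estimate follows from the token prices. The sketch below uses the $8 and $30 per million image-token rates quoted above; the token counts per image are hypothetical, since this guide does not state how many tokens a given resolution consumes.

```python
IMAGE_INPUT_USD_PER_M = 8.00    # quoted image input price per 1M tokens
IMAGE_OUTPUT_USD_PER_M = 30.00  # quoted image output price per 1M tokens


def image_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one generation from its image-token counts."""
    total = (input_tokens * IMAGE_INPUT_USD_PER_M
             + output_tokens * IMAGE_OUTPUT_USD_PER_M)
    return total / 1_000_000


def output_tokens_for(usd: float) -> int:
    """Output tokens that a per-image budget buys, ignoring input cost."""
    return round(usd * 1_000_000 / IMAGE_OUTPUT_USD_PER_M)


# Hypothetical image that emits 2,000 output tokens and no image input.
print(f"${image_cost_usd(0, 2_000):.3f}")

# The $0.04 to $0.08 range corresponds to this many output tokens.
print(output_tokens_for(0.04), output_tokens_for(0.08))
```

Read this way, the quoted range implies roughly 1,300 to 2,700 output tokens per image, with any reference-image input billed on top.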

Where it still loses

No model is universally best. Based on early testing and the Arena sub-category breakdowns:

- Pure artistic / editorial illustration: Midjourney still produces more distinctive stylization, even if it loses on prompt adherence

- Open-source and self-hosting requirements: gpt-image-2 is fully proprietary, API-only

- Ultra-high-volume workloads: Google’s Nano Banana 2 is ~5× faster and materially cheaper per image

- Non-English typography outside the supported five scripts: reports are still inconsistent for Arabic, Thai, and Tamil rendering

- Speed-critical real-time applications: at ~10–15 seconds per high-fidelity image, gpt-image-2 is still too slow for real-time UI

The verdict

If you can only pick one model in 2026, pick this one. It’s the first model that’s simultaneously #1 in text-to-image and #1 in image editing, it closes the typography gap that has kept AI tools out of commercial design workflows, and its agentic Thinking mode is a meaningful step toward image generation as a reasoning activity rather than a pixel-production activity. Unless you have a specific disqualifying constraint (open-source requirement, extreme throughput needs, or a fully local deployment), ChatGPT Images 2.0 is the default.

#2 — Nano Banana 2 / Gemini 3.1 Flash Image (Google)

Arena.ai Text-to-Image Elo: 1270±5 (rank #2) — source

Arena.ai Image Edit Elo: 1387±4 (rank #4) — source

Released: February 2026

Availability: Google AI Studio, Vertex AI, consumer Gemini apps

The speed play

The second-place Elo belongs to Google’s Nano Banana 2 — officially Gemini 3.1 Flash Image Preview, informally beloved for its frankly ridiculous codename. At 1270 Elo on Arena.ai with a deep 51,886 votes, it’s a statistically mature #2 with strong signal stability. On the Image Edit Arena it sits at 1387 with 129,887 votes.

Nano Banana 2’s headline advantage isn’t quality — gpt-image-2 beats it on that front decisively. Its advantage is throughput. The model generates images in 1 to 3 seconds, which is roughly 5× faster than gpt-image-2 at comparable resolutions (iMini’s workflow breakdown). For any workflow where you’re generating thousands of images per day — e-commerce catalogs, social media content mills, rapid-iteration creative ideation — the per-image cost in both dollars and seconds matters more than the ceiling on single-image quality.

Built-in web search

One feature that often gets overlooked in benchmark comparisons: Nano Banana 2 has native web-search grounding built into its image generation pipeline. When the model needs to render a specific brand, a specific person, or a current event, it can query the web in real time before generating. That is similar to gpt-image-2’s Thinking mode, but available as a baseline capability rather than a premium tier. This is flagged in the Arena.ai listing with a [web-search] annotation next to the model name.

Where it wins, where it doesn’t

Wins:

- Fastest top-tier model available, with generation times in single-digit seconds rather than double digits

- Strong prompt adherence for medium-complexity prompts (brand assets, e-commerce, editorial thumbnails)

- Excellent integration with Google’s ecosystem — Vertex AI, AI Studio, Workspace

- The #4 ranking on image editing with 129k+ votes makes it a legitimate dual-purpose tool

Doesn’t win:

- Complex composition, spatial reasoning, and infographic-style work where gpt-image-2 is visibly better

- Typography: acceptable but not class-leading, particularly on non-Latin scripts (Seedream is stronger here)

- Artistic style range: Flash models are architecturally optimized for speed, which caps the ceiling on stylistic nuance

Pricing and availability

Google’s pricing for Nano Banana 2 is notably aggressive — per-image costs in the $0.015–$0.03 range depending on access tier, with generous free-tier quotas through Google AI Studio. This is arguably the cheapest high-quality model on the market, which is why it dominates Google-built products (and increasingly, third-party apps routing image generation through Vertex AI).

The verdict

Use Nano Banana 2 when speed and cost are co-equal with quality, or when your workflow involves generating tens or hundreds of images per user session. It’s the practical default for production throughput in 2026, and it’s close enough to gpt-image-2 on most prompts that the 5× speed advantage often wins the tradeoff. Pair it with gpt-image-2 for your “hero” shots and use Nano Banana 2 for volume.
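The hero-plus-volume pairing can be costed out with simple arithmetic. The sketch below uses the midpoints of the per-image ranges quoted in this guide ($0.04–$0.08 for gpt-image-2, $0.015–$0.03 for Nano Banana 2); the monthly volume and hero share are hypothetical inputs, and actual bills will depend on resolution and tier.

```python
HERO_COST_USD = 0.06      # midpoint of the quoted gpt-image-2 range
VOLUME_COST_USD = 0.0225  # midpoint of the quoted Nano Banana 2 range


def blended_monthly_cost(total_images: int, hero_fraction: float) -> float:
    """Monthly spend when a share of images goes to the premium model."""
    hero_images = round(total_images * hero_fraction)
    volume_images = total_images - hero_images
    return hero_images * HERO_COST_USD + volume_images * VOLUME_COST_USD


# Hypothetical workload: 10,000 images a month, 5% of them hero shots.
mixed = blended_monthly_cost(10_000, 0.05)
all_premium = blended_monthly_cost(10_000, 1.0)
print(f"mixed: ${mixed:.2f}, all premium: ${all_premium:.2f}")
# mixed: $243.75, all premium: $600.00
```

At these assumed prices, routing only the hero shots to the premium model cuts spend by more than half while keeping the flagship quality where it is most visible.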

#3 — Nano Banana Pro / Gemini 3 Pro Image (Google)

Arena.ai Text-to-Image Elo: 1244±4 (2K variant, rank #3), 1232±5 (standard, rank #5) — source

Arena.ai Image Edit Elo: 1388±3 (2K variant, rank #3), 1386±3 (standard, rank #5) — source

Released: November 2025

Availability: Google AI Studio, Vertex AI, consumer Gemini Pro tiers

The quality-ceiling sibling

Where Nano Banana 2 optimizes for speed, Nano Banana Pro (Gemini 3 Pro Image) optimizes for output ceiling. It’s the same model family, built on a larger parameter budget and tuned for native 4K rendering and higher-fidelity outputs. The 2K variant on Arena.ai posts a 1244±4 Elo for text-to-image and an impressive 1388±3 on image editing — good enough for rank #3 in both arenas and a top-five fixture across every benchmark we consulted.

What’s particularly striking is the image-editing score. With 316,100 votes on the 2K variant and 518,620 votes on the standard variant, Nano Banana Pro has one of the deepest, most stable Elo profiles on the leaderboard. It’s not a preliminary ranking. It’s a proven one.

What makes it different from Nano Banana 2

Both models share DNA, but the Pro tier:

- Generates at native 4K without upsampling

- Handles complex prompts with multiple subjects, layered composition, and atmospheric lighting more reliably

- Produces dramatically better photographic realism — skin, fabric, liquid, glass

- Runs ~5–10 seconds per image rather than 1–3 — still fast, but closer to the premium-model tempo

Where it’s actually the best choice

The use cases that push Nano Banana Pro over gpt-image-2 aren’t about absolute Elo — they’re about print-readiness and photographic fidelity:

- Print campaigns where you need lossless 4K at delivery

- Product photography where accurate texture and material reflections matter more than compositional reasoning

- Photographic hero assets for e-commerce and luxury brands

The WaveSpeedAI analysis specifically highlights Gemini Pro Image as “excellent balance of quality and prompt following,” noting its particular strength on creative interpretations and multicultural content.

The verdict

If you’re a creative agency producing deliverables at print resolution, or you need photographic realism as a baseline (not a stretch goal), Nano Banana Pro belongs in your stack. It’s the production-ceiling half of Google’s two-model strategy, and it’s one of the very few models on the market that seriously competes with gpt-image-2 on image editing specifically.

#4 — FLUX.2 Max (Black Forest Labs)

Arena.ai Text-to-Image Elo: 1165±4 (rank #9) — source

Artificial Analysis Elo: 1200 (rank #4) — source

Arena.ai Image Edit Elo: 1263±3 (rank #17) — source

Released: Late 2025

Availability: fal.ai, Replicate, self-hosted, Black Forest Labs API

The open-weights champion

Here’s where the rankings get interesting, and where you have to read the leaderboards with both eyes open.

On the Arena.ai leaderboard, FLUX.2 Max sits at rank #9 with an Elo of 1165: decent, not dominant. On the Artificial Analysis Image Arena, the same model sits at rank #4 with an Elo of 1200, tied with Seedream 4.5. Why the discrepancy? Different voter populations, different prompt mixes, different methodologies. Arena.ai voters skew toward general-purpose creative prompts; Artificial Analysis tends to weight photorealism and technical correctness more heavily. FLUX.2 Max’s relative performance is very sensitive to the prompt distribution.

What the discrepancy tells you is more useful than either single number: FLUX.2 Max is at its best on photorealistic, technically demanding prompts and comparatively less differentiated on casual creative work.

Black Forest Labs also has the deepest model lineup of any competitor. Looking at the Arena.ai leaderboard as of April 19, 2026, the Flux family occupies:

- flux-2-max (proprietary) — Elo 1165 — #9

- flux-2-pro (proprietary) — Elo 1157 — #11

- flux-2-flex (proprietary) — Elo 1156 — #12

- flux-2-dev (flux-non-commercial-license) — Elo 1149 — #15

- flux-2-klein-9b (flux-non-commercial-license) — Elo 1068 — #35

- flux-2-klein-4b (Apache 2.0) — Elo 1027 — #43

Six models in the top 45, spanning fully proprietary, non-commercial research, and Apache-licensed open weights. No one else offers that range.

Why it’s in the top 5 despite a lower Arena rank

FLUX.2 Max earns its top-5 slot not because of where its Elo lands, but because of what it enables that proprietary APIs cannot:

- Self-hosting. If you work in a regulated industry (healthcare, finance, defense, certain European government contexts), sending customer data to OpenAI or Google is either a compliance headache or fully prohibited. Flux is the only top-tier model family with members you can run on your own infrastructure. Per the license tags above, that means the flux-2-dev and Klein weights rather than the proprietary Max, Pro, and Flex tiers.

- Fine-tuning. LoRAs, DreamBooth-style customization, and full-finetuning workflows work natively on Flux. Training a custom version of gpt-image-2 is not an option. Training a custom Flux is a mature practice with thousands of community LoRAs already deployed.

- Commercial license flexibility. Flux Klein 4B ships under Apache 2.0, meaning zero license friction for commercial products, including products that embed image generation as a feature.

- Photorealistic ceiling. On prompts involving skin texture, cinematic lighting, and physical-material accuracy, Flux 2 Max is widely regarded as matching or exceeding Nano Banana Pro.

Where it loses

- Prompt adherence on complex compositions — Flux has always been strong on atmosphere and weak on literal adherence

- Text rendering — still a known weak spot versus the top three

- Image editing — #17 on the edit arena is a meaningful drop from its T2I rank, reflecting that Flux’s edit story is less mature than OpenAI’s or Google’s

Pricing

API pricing through fal.ai and Replicate runs roughly $0.055 per image for Flux 2 Max per BitsFromBytes’ 2026 roundup. Self-hosted, the marginal cost is GPU time — typically $0.005–$0.02 per image on enterprise hardware.
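Whether self-hosting pays off depends on volume and on fixed costs the per-image figures leave out. The sketch below compares the quoted $0.055 API price with the quoted $0.005–$0.02 self-hosted marginal cost; the fixed monthly overhead (engineering time, idle GPUs) is a hypothetical parameter, not a number from this guide.

```python
import math

API_COST_USD = 0.055  # quoted per-image API price for Flux 2 Max


def monthly_savings(images: int, self_hosted_cost: float,
                    fixed_overhead_usd: float = 0.0) -> float:
    """Savings from self-hosting versus the API for one month."""
    return images * (API_COST_USD - self_hosted_cost) - fixed_overhead_usd


def break_even_images(self_hosted_cost: float,
                      fixed_overhead_usd: float) -> int:
    """Monthly volume at which self-hosting starts to save money."""
    per_image_saving = API_COST_USD - self_hosted_cost
    # round() guards against floating-point noise before taking the ceiling.
    return math.ceil(round(fixed_overhead_usd / per_image_saving, 6))


# Hypothetical $2,000 per month of fixed overhead, at both ends of the
# quoted self-hosted cost range.
for cost in (0.005, 0.02):
    print(cost, break_even_images(cost, 2_000))
```

With that assumed overhead, the break-even sits in the tens of thousands of images per month, so low-volume teams may still come out ahead on the hosted API.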

The verdict

If your use case requires open weights, self-hosting, fine-tuning, or commercial licensing flexibility that proprietary APIs can’t offer — FLUX.2 Max is the default and has no real competition in the top tier. For most other use cases, the proprietary leaders win on headline quality. Flux’s #4 slot on this list is about its strategic niche, not its raw Elo.

#5 — Seedream 4.5 (ByteDance)

Arena.ai Text-to-Image Elo: 1143±3 (rank #17) — source

Arena.ai Image Edit Elo: 1303±3 (rank #11) — source

Artificial Analysis Elo: 1200 (rank #5) — source

Released: Late 2025

Availability: ByteDance API, iMini AI, fal.ai

The specialist’s specialist

Seedream 4.5 is the most interesting model on this list because its Arena placement dramatically understates its capabilities in the niches where it excels. At rank #17 on Arena.ai text-to-image, it looks like a middle-tier contender. At rank #11 on image editing with an Elo of 1303 and 626,490 votes, it looks more serious. Look at what users actually use it for, though, and a different story emerges.

Seedream 4.5 is the single best model on the market today for three specific workloads: multilingual text rendering, multi-reference consistency, and high-resolution production.

Text rendering — the real claim to fame

Where gpt-image-2 made the biggest gains on typography, Seedream 4.5 got there first. The iMini AI workflow benchmark specifically calls out Seedream 4.5 as “the strongest model for two specific use cases: text-heavy imagery and multi-asset consistency. Posters, UI mockups, multilingual layouts — it handles legible, correctly-spelled copy without prompt workarounds, in a way that no other model reliably replicates.”

For any workflow that needs reliable typography in CJK (Chinese, Japanese, Korean) scripts — and, particularly, in mixed-script compositions — Seedream 4.5 was the best game in town before gpt-image-2 arrived and is still extremely competitive for cost-sensitive deployments.

Multi-reference consistency

Seedream 4.5 supports up to six reference images simultaneously, preserving character appearance, pose, lighting, and environmental context across generations. For anyone building:

- Brand campaigns with consistent character identity across dozens of assets

- Storyboards and narrative sequences

- Product catalog shoots with consistent styling across hundreds of SKUs

- Editorial illustration series

…Seedream’s consistency handling is genuinely category-leading.

Native 4K, fast inference

iMini’s benchmark reports ~1.8-second render times, native 4K output, no watermark at any tier, and pricing around $0.035 per image. That’s a striking combination — fast, high-res, cheap, and production-ready.

Where it loses

- General-purpose prompt adherence — the #17 text-to-image Elo reflects that Seedream isn’t always the right default on “just give me a beautiful image of a dog”–style prompts

- Artistic style range — optimized for technical and commercial work, less distinctive for editorial/fine-art aesthetics

- Regional access considerations — some enterprise buyers in the US and EU face friction with ByteDance-hosted APIs due to policy concerns. For regulated sectors, check with legal before building production dependencies

The verdict

If your workflow is text-heavy design, multilingual content, or character-consistent serial output, Seedream 4.5 is either the best tool for the job or a very close second to gpt-image-2 at a fraction of the cost. It’s a specialist’s model, but the specialties it owns are unusually valuable in 2026.

Head-to-head: what each model actually does well

Elo rankings compress the truth. Here’s a category-by-category breakdown using the Arena.ai sub-category leaderboards and real-world workflow reports.

Photorealistic portraits

- gpt-image-2: highest fidelity, best pose and anatomical accuracy

- Nano Banana Pro: native 4K, exceptional skin texture

- FLUX.2 Max: particularly strong on cinematic lighting

Product photography

- gpt-image-2: best for mockups with accurate branding

- Nano Banana Pro: best for 4K hero shots

- Seedream 4.5: best for catalog consistency at volume

English typography

- gpt-image-2: 99% reported accuracy, best-in-class

- Seedream 4.5: consistently legible across complex layouts

- Ideogram 3.0 (honorable mention): specialist typography model

Multilingual typography (CJK + Hindi/Bengali)

- gpt-image-2: explicit gains in Japanese, Korean, Chinese, Hindi, Bengali per Microsoft Foundry docs

- Seedream 4.5: historically the best CJK typography model, still extremely strong

- Nano Banana Pro: competent but not class-leading

Complex multi-subject scenes

- gpt-image-2: the Thinking mode genuinely reasons through composition

- Nano Banana Pro: strong without the reasoning layer

- FLUX.2 Max: capable but less reliable

Artistic / editorial illustration

- Midjourney v8 (honorable mention): still the style leader, absent from top 5 purely on Elo

- FLUX.2 Max: closest top-5 contender on aesthetic nuance

- gpt-image-2: catching up fast, not yet the default

Logos / typography / posters

- gpt-image-2: now the default

- Seedream 4.5: especially for CJK-heavy designs

- Ideogram 3.0 (honorable mention): still a specialist pick

Image editing (inpaint / outpaint / style transfer)

- gpt-image-2: Elo 1513, #1

- ChatGPT Image High-Fidelity (legacy 1.5 edit model): Elo 1393

- Nano Banana Pro 2K: Elo 1388

- Nano Banana 2: Elo 1387

Per the Arena.ai Image Edit Leaderboard, the edit story in 2026 is essentially a two-company race between OpenAI and Google, with the top five places all going to their flagships.

The honorable mentions (and why they didn’t make the cut)

Five-model lists are inherently reductive. Here’s who we left out and why, for transparency.

- Microsoft MAI-Image-2 — Arena.ai Elo 1184, rank #6. A legitimate sixth place, rising fast. If Microsoft’s push continues, this lands on next quarter’s list.

- Reve v1.5 — Elo 1177, rank #7. Strong all-rounder, particularly for photography.

- Grok Imagine Image (xAI) — Elo 1170, rank #8, with a notably strong image-edit showing at 1332 (#7). Worth watching.

- Midjourney v8 — Still the editorial and artistic reference despite a middle-of-the-pack Elo on JAI Portal. Its absence from the top 5 is a commentary on how much benchmarks favor prompt adherence over aesthetic distinction. If you’re doing concept art or editorial illustration, Midjourney remains a top pick.

- Imagen 4 Ultra (Google) — Elo 1148, rank #16. Competent enterprise pick, mostly replaced in Google’s lineup by the Nano Banana family.

- Ideogram 3.0 — Still best-in-class for specific typography-heavy design work, but broadly surpassed for general use.

- Adobe Firefly 4 — The commercial-safety default, especially for agencies that need contractual assurance about training-data provenance. Worth considering if your legal team blocks everything else.

- Hunyuan Image 3.0 (Tencent) — Elo 1151. Strong Chinese-market option, competitive edit performance.

- Qwen Image 2512 (Alibaba, Apache 2.0) — Elo 1133. Best Apache-licensed text-to-image model, strong choice where open licensing is non-negotiable.

- Stable Diffusion 3.5 — A legacy pick. Still usable for specific fine-tuning workflows, but no longer competitive on quality.

Buying guide: matching the model to the job

Here’s the part this guide is actually for. A decision tree, no ambiguity.

“I need the absolute best quality for a flagship creative deliverable.”

→ ChatGPT Images 2.0 with Thinking mode. Not close.

“I need to generate 10,000+ images per month and cost per image matters.”

→ Nano Banana 2 via Google AI Studio. Fast, cheap, top-tier quality at the middle of the distribution.

“I need native 4K output for print or hero campaigns.”

→ Nano Banana Pro (2K or standard variant), or gpt-image-2 at 4K beta.

“I need to self-host, fine-tune, or run on regulated infrastructure.”

→ FLUX.2 Max (proprietary tier) or FLUX.2 Klein (Apache 2.0) from Black Forest Labs.

“I need flawless multilingual text rendering, especially CJK.”

→ ChatGPT Images 2.0 if budget allows, Seedream 4.5 as the cost-effective specialist.

“I need character consistency across a series of images.”

→ ChatGPT Images 2.0’s eight-image batch mode, or Seedream 4.5’s six-reference workflow.

“I’m doing editorial, artistic, or concept-art work.”

→ Midjourney v8 remains the aesthetic reference despite its benchmark rank.

“I need commercially safe, legally uncontested training data provenance.”

→ Adobe Firefly 4. Boring but defensible.

“I need the fastest possible generation for a real-time UX.”

→ Nano Banana 2 (1–3 seconds) or z-image-turbo (rank #32, Apache 2.0).

“I need an edit-first model for iterative production workflows.”

→ ChatGPT Images 2.0, which is #1 on the Edit Arena by more than 240 Elo.
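For teams that want to encode these picks in tooling, the list above can be sketched as a plain lookup table. This is only an illustration: the requirement labels (`flagship_quality`, `real_time`, and so on) and the `recommend` helper are hypothetical names invented here, and the values simply restate the recommendations in this section.

```python
# Hypothetical routing table. The keys are labels invented for this sketch;
# the values restate the picks named in the buying guide.
RECOMMENDATIONS = {
    "flagship_quality": ["ChatGPT Images 2.0 (Thinking mode)"],
    "high_volume_low_cost": ["Nano Banana 2"],
    "native_4k": ["Nano Banana Pro", "gpt-image-2 (4K beta)"],
    "self_hosted": ["FLUX.2 Max", "FLUX.2 Klein"],
    "multilingual_text": ["ChatGPT Images 2.0", "Seedream 4.5"],
    "character_consistency": ["ChatGPT Images 2.0", "Seedream 4.5"],
    "editorial_art": ["Midjourney v8"],
    "commercial_safety": ["Adobe Firefly 4"],
    "real_time": ["Nano Banana 2", "z-image-turbo"],
    "edit_first": ["ChatGPT Images 2.0"],
}


def recommend(requirements):
    """Return the de-duplicated picks for a list of requirement labels, in order."""
    picks = []
    for req in requirements:
        if req not in RECOMMENDATIONS:
            raise KeyError(f"unknown requirement: {req}")
        for model in RECOMMENDATIONS[req]:
            if model not in picks:
                picks.append(model)
    return picks


# A high-volume, latency-sensitive product maps to two candidates.
print(recommend(["high_volume_low_cost", "real_time"]))
```

Keeping the mapping as data rather than branching logic makes it easy to revise when the leaderboards shift.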

What’s about to change

Predicting the next six months of AI image generation is a fool’s errand, but a few things are worth watching.

gpt-image-2 vote count will triple. The current 15,127-vote count on Arena.ai is preliminary. Once it passes ~75,000 votes, confidence intervals tighten, and we’ll see whether 1512 was a launch spike or a stable ceiling. Either way, the #1 position is effectively locked in for Q2.
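As a rough back-of-the-envelope check on how much the interval might tighten, the sketch below assumes the half-width shrinks with the square root of the vote count. That scaling is a common statistical rule of thumb, not Arena.ai’s published method, and the function name is hypothetical. The ±8 figure is the Text-to-Image interval quoted earlier in this guide.

```python
import math


def projected_half_width(current_half_width, current_votes, future_votes):
    """Scale a confidence-interval half-width by sqrt(current_votes / future_votes).

    Assumes the interval narrows like 1/sqrt(n), which is an approximation.
    """
    return current_half_width * math.sqrt(current_votes / future_votes)


# Figures from the text: ±8 Elo at 15,127 votes, projected to 75,000 votes.
print(round(projected_half_width(8, 15_127, 75_000), 1))  # 3.6
```

Under that assumption the interval would narrow from roughly ±8 to roughly ±3.6 Elo, far too small to threaten a lead of more than 240 points.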

Nano Banana 3 is coming. Google has been on a six-month cadence for its image-model launches since mid-2025. Expect a response to gpt-image-2 before the end of Q3 2026.

Flux 3. Black Forest Labs hasn’t publicly committed to a Flux 3 timeline, but the fact that FLUX.2 now occupies six leaderboard slots suggests the family is fully built out. A generational successor is plausible by late 2026.

Diffusion → autoregressive migration. OpenAI’s non-denial in the gpt-image-2 launch briefing about whether the model is diffusion-based is suggestive. If gpt-image-2 is a natively autoregressive visual model — a “GPT for images” in a literal architectural sense — that represents a shift the rest of the industry will feel pressure to match. Expect research papers this summer.

The regulatory floor is moving. VentureBeat’s launch coverage explicitly raised political-influence concerns during the launch briefing. C2PA watermarking and provenance tracking are becoming table stakes. Expect at least one major jurisdiction to legislate mandatory AI-image labeling before year-end.

Price floors will keep collapsing. Per-image costs dropped roughly 60% in 2025. The 2026 trajectory looks similar. By Q4, expect top-tier models at $0.01 per image and free tiers with effectively unlimited consumer use.

The honest conclusion

If you read this entire guide looking for a contrarian “actually the best model is…” take, you’re going to be disappointed. As of April 2026:

- The best AI image generator is ChatGPT Images 2.0. It’s not a close call. Arena Elo of 1512, #1 on text-to-image, #1 on image editing, 240-point lead, near-perfect text rendering, multilingual competence, agentic reasoning via Thinking mode, and simultaneous API and consumer availability. It’s the default recommendation for any team that doesn’t have a disqualifying constraint.

- But “best” is job-dependent. The other four models on this list each lead at least one specific category: Nano Banana 2 for speed, Nano Banana Pro for 4K production ceiling, FLUX.2 Max for self-hosting and fine-tuning, Seedream 4.5 for multilingual text and multi-reference consistency. A serious team will probably use two or three of them in combination rather than picking one.

- The quality gap between runners-up is compressed. The gap from #2 to #9 on Arena.ai is only 105 Elo points. The gap from #1 to #2 is 242. That’s not an accident. It’s a signal that gpt-image-2 pulled off a genuine generational advance while the rest of the field converged.

- Benchmark numbers can mislead. Cross-reference at least two leaderboards. Be wary of preliminary Elo scores. Weight edit-arena performance if you’re building production workflows. And remember that Midjourney’s continued commercial success despite a middling benchmark rank is the clearest evidence that aesthetic quality and Elo are not the same thing.
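To put the 242-point and 105-point gaps in more intuitive terms, an Elo difference can be converted into an expected head-to-head win rate. The sketch below uses the standard chess-style Elo formula (base 10, scale 400) and ignores tie votes. Whether Arena.ai’s scores map to win rates in exactly this way is an assumption.

```python
def expected_win_rate(elo_gap):
    """Expected share of head-to-head votes for the higher-rated model.

    Standard Elo expectation: 1 / (1 + 10 ** (-gap / 400)).
    """
    return 1.0 / (1.0 + 10 ** (-elo_gap / 400))


for label, gap in [("#1 vs #2", 242), ("#2 vs #9", 105)]:
    print(f"{label}: {expected_win_rate(gap):.0%}")
# #1 vs #2: 80%
# #2 vs #9: 65%
```

Read this way, the leader would be preferred over the runner-up in about four of five blind comparisons, while the runner-up would beat the ninth-ranked model in only about two of three.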

The field will keep moving. The leaderboards will keep updating. But the practical version of the answer, in April 2026, is this: start with ChatGPT Images 2.0, add Nano Banana 2 for throughput, add FLUX.2 Max if you need self-hosting, add Seedream 4.5 if you need multilingual typography, and add Midjourney if you care about aesthetic distinction. That’s the stack.

Sources and methodology

All Elo figures in this guide were retrieved on or before April 22, 2026 from the following live leaderboards:

- Arena.ai Text-to-Image Leaderboard — primary ranking source, 4,894,371 votes across 55 models as of April 19, 2026

- Arena.ai Image Edit Leaderboard — 25,774,117 votes across 43 models as of April 19, 2026

- Artificial Analysis Image Arena — cross-validation and independent ranking

- JAI Portal AI Model Leaderboard — tertiary cross-reference

Product capability claims were sourced from and cross-referenced against:

- OpenAI’s official ChatGPT Images 2.0 launch post

- Microsoft Foundry’s GPT-image-2 integration blog

- VentureBeat’s live launch coverage

- Startup Fortune’s launch analysis

- News9’s launch reporting

- iMini AI’s 2026 workflow benchmark

- WaveSpeedAI’s LM Arena 2026 analysis

- BitsFromBytes’ 2026 generator roundup

- OpenAI’s developer API documentation for gpt-image-2

The gpt-image-2 Elo score is flagged as “Preliminary” on Arena.ai pending additional vote accumulation; we have noted this explicitly throughout the guide. Every numerical claim in this piece traces to one of the sources linked above. Where two sources disagreed, we cited both and explained the discrepancy rather than picking one.