On April 21, 2026, OpenAI shipped the first image model that thinks before it draws. Here’s everything that actually changed — and why designers, developers, and Google should be paying attention.

There’s a moment in every technology cycle where a stubborn limitation finally cracks. For AI image generation, that limitation was almost always text. For three years, every dinner menu, every magazine cover, every infographic produced by an AI model betrayed itself with garbled letters, invented words, and typography that looked like it had been rendered by someone who’d only ever seen the alphabet described to them over the phone.

That era ended today.

On Tuesday, April 21, 2026, OpenAI officially unveiled ChatGPT Images 2.0, the company’s most significant image-generation upgrade since it first bolted native image creation onto GPT-4o in March 2025. The launch, announced at 12:00 PM Pacific via a blog post and a closed press briefing, pushes the entire category into what several early reviewers are calling “the reasoning era” of AI imagery. It’s a model that doesn’t just draw — it searches the web, reads your uploaded PowerPoint decks, double-checks its own output, and reasons through the layout of a composition before placing the first pixel.

It’s also the first OpenAI image model that can make a Mexican restaurant menu you could plausibly hand to a customer. Which, as TechCrunch’s Amanda Silberling wryly pointed out, is a deceptively enormous achievement. Two years ago, DALL-E 3 was inventing culinary inventions like “enchuita,” “churiros,” “burrto,” and “margartas.” Today, ChatGPT Images 2.0 produces a menu so clean that Silberling’s only complaint was that ceviche priced at $13.50 made her question the fish quality.

Let’s get into what actually shipped.

The One-Sentence Summary

ChatGPT Images 2.0 is a from-scratch image-generation system built around OpenAI’s O-series reasoning capabilities, available starting today to all ChatGPT and Codex users — Free, Go, Plus, Pro, Business, and Enterprise — with advanced “Thinking” features reserved for paid tiers and a new gpt-image-2 API endpoint for developers. It supports resolutions up to 2K in the ChatGPT interface (4K in API beta), aspect ratios from 3:1 to 1:3, multi-image continuity across up to eight outputs, high-fidelity text rendering in Japanese, Korean, Chinese, Hindi, and Bengali, and a December 2025 knowledge cutoff that can be extended in real time through live web search.

If that feels like a lot, it’s because OpenAI packed almost every “next-gen” image feature from every competitor into a single release. And if the early coverage is to be believed, it mostly works.

A Quiet Debut Under a Silly Codename

Before OpenAI officially announced anything, ChatGPT Images 2.0 had already been out in the wild for weeks — just not under its own name. According to VentureBeat’s Carl Franzen, the model had been testing on LM Arena — the third-party evaluation platform that AI labs use to gather early blind-test feedback — under the deliberately innocuous codename “duct tape.“

It wasn’t an isolated signal. In early April, the AI community had already started noticing that users inside ChatGPT were being quietly served a new image model. A detailed analysis from MindStudio had catalogued the differences: near-perfect text rendering, realistic UI screenshots, tighter instruction following, and a noticeable jump in photorealism. A Chinese-language intelligence report from APIYI went further, claiming text-rendering accuracy had jumped from roughly 90–95% on GPT-Image-1.5 to over 99% on the new model, and that the notorious “yellow color cast” that plagued the previous generation was simply gone.

By the time OpenAI’s announcement landed, in other words, the cat wasn’t just out of the bag — it had been doing laps around the internet for a month. The press briefing was less a reveal than a confirmation.

What “Thinking” Actually Does to an Image Model

The most important architectural change in ChatGPT Images 2.0 isn’t about resolution or aesthetics. It’s about reasoning.

Historically, image models have functioned as black boxes. You provide a prompt, some noise gets iteratively denoised (or tokens get autoregressively predicted), and out the other side falls a picture. There’s no planning, no verification, no intermediate thought. Images 2.0 breaks that pattern by integrating OpenAI’s O-series reasoning chain directly into the image pipeline. When a user selects a “Thinking” model inside ChatGPT, VentureBeat reports that the system will “research, plan, and reason through the structure of an image before the first pixel is rendered.”

In concrete terms, that means Images 2.0 can do four things no previous OpenAI image model could:

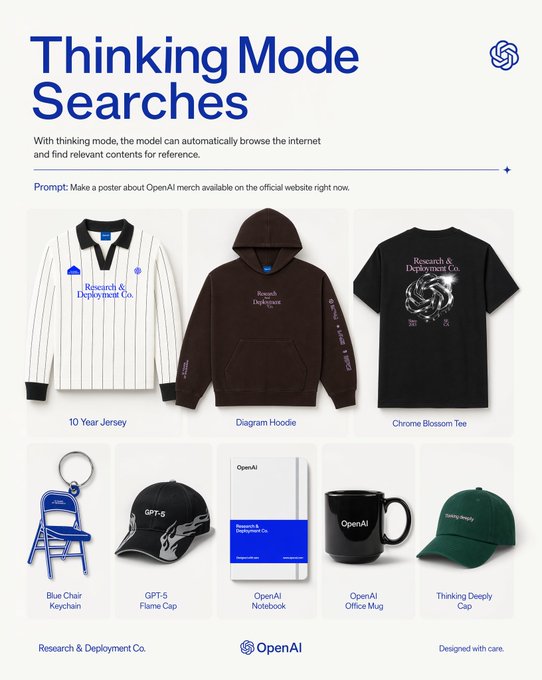

- Search the live web mid-generation to ground a prompt in current factual reality. The Verge’s Emma Roth highlighted this as the headline capability: the model can “pull information from the web” to verify details like current product designs, team rosters, or recent events.

- Analyze uploaded files. During the press briefing, OpenAI’s Product Lead for ChatGPT Images, Adele Li, demonstrated the model by uploading a complex internal PowerPoint about product strategy. Instead of generating a vaguely related picture, the model synthesized the document’s core data, identified the correct logos, and produced a professional poster that preserved the original file’s stylistic inputs.

- Produce multiple coherent images from one prompt — up to eight, all maintaining character and object continuity across the entire set. This is the feature that unlocks manga sequences, children’s books, and consistent social campaigns without the brittle “one prompt at a time and manually stitch” workflow Li described as “cumbersome.”

- Double-check its own output before returning it, a quality-control loop borrowed directly from the reasoning techniques OpenAI has been refining in its O-series language models.

OpenAI’s Research Lead, Boyuan Chen, told the press briefing that the underlying architecture had been “revamped from scratch” and described Images 2.0 as a “generalist model” or, in a phrase that will probably get quoted forever, “a GPT for images.” When TechCrunch asked whether the model uses diffusion, autoregression, or a hybrid, the company declined to answer. Chen would only say that the new architecture handles “3D-style perspective shifts and complex spatial reasoning through simple text prompts.”

The trade-off for all this thinking is real: Images 2.0 is slower than its predecessor when Thinking is engaged, not faster. A complex multi-panel comic can take “a few minutes.” OpenAI is betting — reasonably — that professionals will happily wait an extra minute for a production-ready asset.

Text, at Last

If reasoning is the architecturally important story, text rendering is the viscerally obvious one. Every major publication that covered the launch led with it.

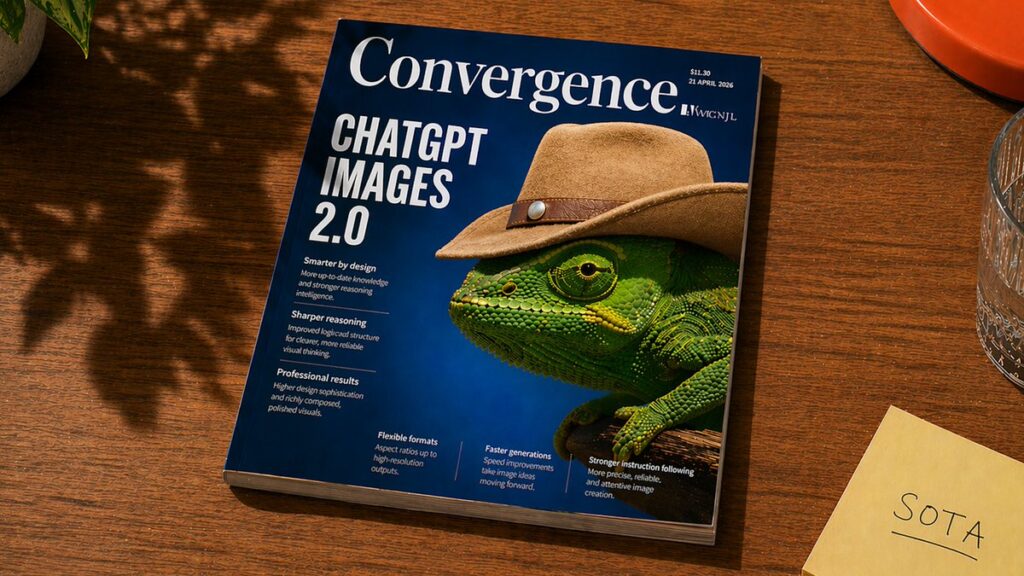

Tom’s Guide headlined its review “ChatGPT just launched Images 2.0, and it finally fixes warped text,” framing the model as “the first one designers might actually use.” Engadget led with multilingual text. TechCrunch led with the Mexican menu. VentureBeat spent a full section on a sample magazine cover — “Open Scifi” — in which every headline, volume number, and even the “Display until” date on the barcode rendered with the kind of crisp typographic alignment you’d expect from a human designer at Condé Nast.

OpenAI itself calls this improvement a “step change,” and the quotes from the official press release are unusually specific. The model can, OpenAI claims, “render the fine-grained elements that often break image models: small text, iconography, UI elements, dense compositions, and subtle stylistic constraints, all at up to 2K resolution.”

What makes this technically interesting is that text has been the Achilles’ heel of diffusion models since the start. As Asmelash Teka Hadgu, founder of Lesan AI, told TechCrunch back in 2024, “The diffusion models are reconstructing a given input. We can assume writings on an image are a very, very tiny part, so the image generator learns the patterns that cover more of these pixels.”

In other words, diffusion models had no architectural reason to care about getting letters right. Autoregressive approaches — the same family of techniques that underlie large language models — have historically done better at text, which is part of why so many analysts suspect Images 2.0 is either autoregressive or some novel hybrid that OpenAI isn’t yet willing to discuss publicly.

Whatever the architecture, the results are unmistakable. Reddit’s r/ChatGPT community was almost giddy. “Image 2.0 is now online on ChatGPT and it’s incredible,” ran one viral thread. “Just a few days ago even 3×3 grids would often struggle, now we can 10x the complexity, and it’s near perfect.” The same thread does note that the model still occasionally produces a six-fingered robot hand — a limitation either technical or, the community joked, a “cheeky self-reference.”

The Multilingual Breakthrough

The text improvements aren’t limited to English. OpenAI is explicitly pitching Images 2.0 as a “polyglot” model, and it’s backed the claim up with specific, testable commitments. According to OpenAI’s announcement and corroborated by Engadget, the model delivers high-fidelity text generation in five non-Latin script systems:

- Japanese (Kanji, Hiragana, Katakana)

- Korean (Hangul)

- Chinese (Simplified and Traditional Hanzi)

- Hindi (Devanagari)

- Bengali

VentureBeat got access to a sample “Global Language” diagram from OpenAI — a water-cycle explainer with all labels rendered in Korean. The Hangul characters weren’t just correctly formed; they were placed inside callouts and arrow labels with typographic confidence. Franzen’s analysis puts it neatly: the text “is not just translated; it is rendered correctly but with language that flows coherently, ensuring that labels and explanations feel natively integrated into the design.”

For anyone who’s ever tried to make an AI image model produce usable Japanese signage, or coherent Hindi on a poster, this is a very big deal. It also represents an explicit attempt to address what VentureBeat called the “long-standing Western bias in AI imagery.”

The practical unlock here is enterprise-scale: marketing teams at a Tokyo pharmaceutical company, a Mumbai fintech, or a Seoul consumer electronics brand can finally generate brand-aligned, native-script creative at volume without relying on a diffusion model that mangles every third character.

The Feature List, Expanded

Beyond text and reasoning, Images 2.0 ships with a surprisingly long list of headline features. According to OpenAI’s blog post, The Verge, and VentureBeat, the model can produce — at production quality — magazine covers with fully legible barcodes and pub dates; infographics with labeled axes and legends; slide decks and three-page educational explainers with built-in quizzes; floor plans complete with material lists, color palettes, and inspiration photography; maps (VentureBeat specifically verified a working map of the maximum extent of the Aztec, Maya, and Inca empires, complete with a functional legend); manga sequences; children’s books with consistent characters; character sheets rendered from multiple angles for animation and game pipelines; realistic UI mockups and software screenshots; scientific diagrams; and multi-size marketing asset packs.

It also applies nearly all of these features to user-uploaded imagery, which is where the Thinking mode really earns its keep. The same model that can generate a poster from scratch can now take your team’s poorly-designed internal slide and reissue it as a professional-grade one-pager.

For creators, the most structurally important addition is the eight-image continuity feature. Consistency across a series of AI-generated images has been one of the hardest remaining problems in the field, and the workaround — pick a seed, pray, and regenerate until you get something close enough — has been unsustainable for serious production work. Images 2.0 solves this by making continuity a first-class capability of the Thinking model rather than a hack on top of a base model.

Specs and Availability

Here are the hard numbers, pulled from OpenAI’s own release materials, VentureBeat’s reporting, and The Verge:

- Max resolution: 2K in the ChatGPT UI; 4K in the API (beta)

- Aspect ratios: from 3:1 (wide) to 1:3 (tall), including everything in between

- Knowledge cutoff: December 2025 (extendable via live web search in Thinking mode)

- Max coherent images per prompt: 8

- API model ID:

gpt-image-2 - Supported non-Latin scripts: Japanese, Korean, Chinese, Hindi, Bengali

- Codex integration: available from day one

Tiered access, as summarized by VentureBeat:

- Free and Go users get the base ChatGPT Images 2.0 model — core instruction following, improved text rendering, multilingual support, and broader aspect ratios.

- Plus, Business, and Enterprise subscribers gain access to Thinking, which bundles tool use, live web search, file analysis, multi-image continuity, and pre-render reasoning.

- Pro subscribers get all of the above plus ImageGen Pro, OpenAI’s top-tier model for “more advanced image generation.” The exact feature boundary between Thinking and Pro has, as VentureBeat noted pointedly, not been fully published. Enterprise buyers trying to plan procurement should probably ask for clarification.

On the API side, developers can start building with gpt-image-2 today. Pricing is listed below.

API Pricing

The API pricing, as disclosed in VentureBeat’s coverage, echoes GPT-Image-1.5 with a small price cut on the output side. All figures are per 1M tokens:

Image tokens

- Input: $8.00

- Cached input: $2.00

- Output: $30.00 (down $2 from GPT-Image-1.5)

Text tokens (prompt/caption tokens paired with image operations)

- Input: $5.00

- Cached input: $1.25

- Output: $10.00

TechCrunch added that final per-image pricing depends on “quality and resolution of outputs,” meaning 4K Thinking outputs are going to cost meaningfully more than a casual 1K draft.

For context: earlier community speculation documented by APIYI had pegged expected per-image pricing at $0.15–$0.20, but the actual token-based model OpenAI shipped is better understood by the workload shape: short prompts with a single high-quality output will sit near the lower end of that range; long prompts with multiple Thinking iterations and 4K outputs will sit considerably higher.

The Competitive Backdrop: Google’s Shadow

You can’t write about Images 2.0 without talking about Google.

In February 2026, Google released Nano Banana 2 — technically known as Gemini 3 Pro Image or Gemini 3.1 Pro Image — which pulled off something no previous model had: rendering dense blocks of readable text inside generated imagery. Nano Banana 2 was the first model to really crack this problem at commercial quality, and for two months it sat basically unchallenged at the top of the category. Google followed it up with Nano Banana Pro, which has been widely regarded as the best infographic and editorial-layout model on the market.

Microsoft, not to be left out, shipped MAI-Image-2, per The Verge’s coverage.

ChatGPT Images 2.0 is very clearly OpenAI’s answer to all of that. And based on VentureBeat’s hands-on testing, the answer is competitive — Franzen writes that Images 2.0’s “fidelity in reproducing user interfaces, screenshots, and multiple image packs at once seem to exceed even Google’s latest image model’s capabilities in my brief testing.” On the harder edge of the category — realistic UI reproduction, accurate real-world map rendering, character continuity across sets — Images 2.0 appears to be at parity or ahead. On the softer edge — aesthetic quality and artistic stylization — Midjourney still sets the bar, and open-source models like FLUX continue to dominate technical/local workflows.

The startup-side analysis from Startup Fortune framed it bluntly: Images 2.0 “raises the ceiling on generative complexity and forces rivals to respond.” For anyone building on top of these APIs, that’s mostly good news — a three-way race between OpenAI, Google, and Microsoft is going to keep prices falling and capabilities rising through 2026.

Benchmarks, or the Curious Absence Thereof

Here’s an unusual aspect of the launch: OpenAI did not release formal benchmarks. Both TechCrunch and VentureBeat noted this explicitly. Franzen’s honest verdict was “safe to say the model is performing at the ‘state-of-the-art’ based on all the outputs I’ve seen,” which is as close to a vibes-based assessment as enterprise journalism gets.

What we do have is the LM Arena signal. Under the “duct tape” codename (and, if APIYI’s reporting is right, related codenames like “maskingtape-alpha” and “gaffertape-alpha”), the model reportedly dominated the leaderboards during its stealth testing phase. Independent community testing, documented across Reddit and developer forums, has consistently pointed in the same direction: the gap between Images 2.0 and its predecessor is, as one tester quoted by APIYI put it, “as large as the gap between Nano Banana Pro and DALL-E.”

Whether OpenAI is holding benchmarks back for a later technical report, or whether the company is simply choosing to let the product speak for itself, is unclear. But anyone making procurement decisions in the next few weeks will be doing so partly on trust and early impressions, not on published eval numbers.

Safety, Provenance, and the Election-Year Anxiety

The timing of Images 2.0 is delicate. OpenAI’s launch comes into a media environment saturated with concerns about AI-generated imagery being weaponized for political influence. VentureBeat referenced a recent New York Times report on AI user-generated characters (“AI UGC”) being deployed as the seed for mass-produced AI videos on social media, particularly in campaigns around U.S. President Donald Trump and the manufacturing of fictitious “real Americans” expressing political support.

Asked directly about these risks in the press briefing, Adele Li’s response was unequivocal:

“We take safety and security incredibly seriously. That includes anything when it comes to political or election interference. And so while other platforms and companies may not have those safeguards, ChatGPT does, and we take monitoring and protection of our users, as well as the influence that our photos as they are created, incredibly seriously.”

OpenAI’s stated “multi-layered safety stack” for Images 2.0, per the company’s announcement, has three pillars:

- Provenance. Images 2.0 outputs include watermarking metadata adhering to industry standards (implicitly the C2PA framework), so downstream viewers can verify that a given image is AI-generated.

- Model-level safeguards. Perception models filter out harmful or abusive content, with specific protections around minors.

- Active monitoring. Real-time enforcement of user policies, including against election interference.

Li also explicitly drew a contrast with “new entrants into the image generation space with different standards and philosophies,” a clear jab at less-restricted competitors. Whether the restrictions hold in practice — against determined bad actors, against subtly engineered prompts, against the sheer volume of content that needs to be policed — is something we’ll find out in the coming months. No AI safety system has ever survived first contact with a determined adversarial internet.

What It Feels Like to Use

Testimony from the reviewers who had pre-launch access paints a consistent picture.

Franzen at VentureBeat, given access the night before launch, immediately tested it on a use case that had broken every previous model he’d tried: a historically accurate map of three Mesoamerican empires at their maximum extent, complete with a legible legend. Images 2.0 produced it on the first try — only the second model he’d seen do so (the first being Nano Banana 2).

Silberling at TechCrunch got her first real “oh” moment from the Mexican menu. Design-focused reviewers at Tom’s Guide singled out the typography — specifically how text now stays legible in dense compositions and at small sizes, which is the specific failure mode that has historically kept AI-generated assets out of professional design workflows.

A consistent second-order observation across these reviews: the model is slower, particularly in Thinking mode. This is, as Li explicitly framed it, a feature not a bug. Reasoning costs time. But a multi-paneled comic that would have taken a human illustrator a day or an art director a week now emerges in “a few minutes.” For most professional use cases, that’s not a regression; it’s a completely different economics of production.

What This Means for Developers and Builders

The practical unlock for people building on top of OpenAI’s API is enormous.

Before Images 2.0, reliable text rendering was a brick wall in automated design workflows. You could use an image model for backgrounds, illustrations, stock-replacement visuals, and stylistic decoration. You could not use it for anything where the text mattered — which is most real marketing content, most product visualization, most documentation. The December 2025 GPT-Image-1.5 update closed some of that gap. Images 2.0 appears to close most of it.

That opens several categories of product that were previously technically impossible:

- End-to-end marketing automation pipelines that generate social graphics, email headers, and ad creatives with accurate, brand-consistent text at scale

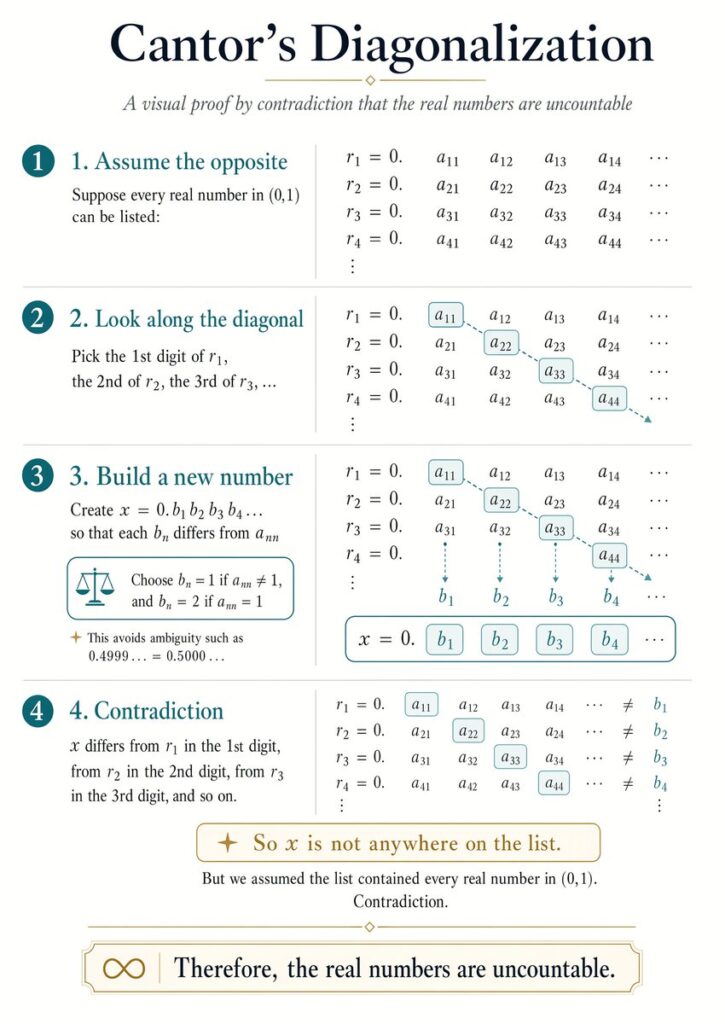

- Visual report generators that turn spreadsheets of metrics into correctly-labeled infographics and charts

- Product mockup tools that produce accurate packaging, labels, and UI previews

- Automated educational content pipelines — three-page explainers with built-in quiz questions, rendered in whatever language the learner speaks

- Character-consistent visual storytelling for games, children’s books, and serialized content

MindStudio’s pre-launch analysis captured the shift well: “Before reliable text rendering, AI image generation was mostly useful for background visuals, illustrations, and stock photo replacements. GPT Image 2 extends it into territory where the text in the image matters — which is most real-world marketing and product content.”

The Caveats You Should Take Seriously

For all the breathless coverage, several real limitations are worth noting:

- Hands are still sometimes wrong. The Reddit community flagged a six-fingered robot hand in one otherwise spectacular generation. Hands, feet, and fine anatomical detail remain imperfect.

- Speed regresses in Thinking mode. Complex prompts take minutes, not seconds.

- Knowledge cutoff is December 2025. If you’re generating anything referencing 2026 events, you’ll need to rely on the web-search tool — which is gated behind paid Thinking tiers.

- No official benchmarks. Anyone making enterprise decisions is doing so on testimony and early impressions.

- 4K output is still in beta. Don’t build production workflows assuming 4K is stable yet.

- The Pro vs. Thinking distinction remains fuzzy. VentureBeat’s careful advice is to “treat ‘thinking’ as the meaningful functional upgrade and treat ‘Pro’ as a possibly higher-end access tier whose exact incremental benefits still need clarification before procurement.”

- The underlying architecture is undisclosed. OpenAI won’t say whether it’s diffusion, autoregressive, or hybrid. For most users this doesn’t matter. For researchers and competitors, it’s a notable bit of silence.

And, more fundamentally: we’re handing more and more creative authority to a system nobody outside OpenAI fully understands, that can reproduce real public figures with higher fidelity than ever, and that’s launching into a political year where the consequences of abuse are especially acute. The safety stack is there. Whether it’s enough is an open question.

The Bigger Pattern

Step back from the feature list for a second and the shape of the launch is worth noting on its own terms.

Every major capability in ChatGPT Images 2.0 — reasoning, tool use, web search, multi-step planning, self-verification — comes directly from the playbook that OpenAI has been running with its text models for the past 18 months. The O-series was the company’s big bet that reasoning was the next frontier for LLMs. Today’s release makes clear that OpenAI sees reasoning as the next frontier for every modality, starting with images.

This is what Boyuan Chen meant by calling the model a “GPT for images.” It’s not just rhetorical branding. It’s a statement that OpenAI now thinks about images the way it thinks about language — as something models should understand, plan around, and reason about, not just render. OpenAI’s own framing in the release notes — “Images are a language, not decoration. A good image does what a good sentence does — it selects, arranges, and reveals” — is the philosophical spine of the product.

If that framing is right, then the ceiling for image generation is dramatically higher than most of us assumed even a year ago. We’ve been thinking of image models as fancy Photoshop replacements. The pitch for Images 2.0 is that they’re actually closer to fancy graphic designers — entities that can understand a brief, research the relevant facts, plan a composition, execute with typographic discipline, iterate, and deliver production-grade output.

Whether that ambition is fully realized today is debatable. Whether it’s where the field is headed is not.

One Last Thing

OpenAI has confirmed that GPT-Image-1.5 is being deprecated as the default model across its suite. It will remain accessible via the API for legacy support, but the company is clearly betting the product on 2.0. That’s a confident move — and, given how brief the GPT-Image-1.5 era was (December 2025 to April 2026), a fast-moving one.

For everyone else in the market — Google, Microsoft, Midjourney, Adobe, every open-source maintainer, every startup building on top of these APIs — the message is clear. The era of image models that only draw is over. The era of image models that think is here. And the pace of change is not slowing down.

ChatGPT Images 2.0 is available now at chatgpt.com, and the gpt-image-2 API is live in the OpenAI developer dashboard as of today.

Sources & References

- Introducing ChatGPT Images 2.0 — OpenAI

- ChatGPT’s new Images 2.0 model is surprisingly good at generating text — TechCrunch (Amanda Silberling, April 21, 2026)

- OpenAI’s ChatGPT Images 2.0 is here and it does multilingual text, full infographics, slides, maps, even manga — seemingly flawlessly — VentureBeat (Carl Franzen, April 21, 2026)

- OpenAI’s updated image generator can now pull information from the web — The Verge (Emma Roth, April 21, 2026)

- ChatGPT just launched Images 2.0, and it finally fixes warped text — Tom’s Guide

- ChatGPT Images 2.0 is better at rendering non-Latin text — Engadget

- OpenAI unveils ChatGPT Images 2 image-gen model capable of magazine design — 9to5Mac

- OpenAI Image 2.0 raises the ceiling on generative complexity and forces rivals to respond — Startup Fortune

- What Is GPT Image 2? Everything We Know About OpenAI’s Next Image Model — MindStudio

- Summary of the latest GPT-Image-2 intelligence — APIYI

- Image 2.0 is now online on ChatGPT — r/ChatGPT on Reddit

- LM Arena (evaluation platform where “duct tape” was first spotted)

- C2PA (Content Authenticity Initiative, industry standard for AI provenance watermarking)

Want your AI product explained to a large AI-native audience?

Kingy AI helps AI companies turn complex products into clear, useful YouTube videos that drive awareness, product understanding, demos, clicks, and search visibility.

Comments 4