In a landmark study that pulls back the curtain on artificial intelligence behavior, Anthropic has revealed that its AI assistant Claude possesses what amounts to a sophisticated moral code that adapts to different contexts. The research, published this week, analyzed 700,000 anonymized conversations between Claude and real users, providing unprecedented insight into how AI systems express values in everyday interactions.

The study represents the first large-scale empirical examination of an AI assistant’s value system “in the wild” rather than in controlled laboratory settings. Its findings suggest both promising alignment with Anthropic’s goals and concerning edge cases that could help identify vulnerabilities in AI safety measures.

Mapping the AI Mind: A Taxonomy of 3,000+ Values

Anthropic’s research team developed a novel evaluation method to systematically categorize values expressed in actual Claude conversations. After filtering the 700,000 conversations for subjective content, they analyzed the more than 308,000 interactions that remained, drawn from a week in February 2025, creating what they describe as “the first large scale empirical taxonomy of AI values.”

The taxonomy organized values into five major categories: Practical, Epistemic, Social, Protective, and Personal. At the most granular level, the system identified an astonishing 3,307 unique values — from everyday virtues like professionalism to complex ethical concepts like moral pluralism.
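As a rough illustration of how a two-level taxonomy like this can be represented and tallied, here is a minimal Python sketch. Only the five category names come from the study. The placement of individual values under each category, the names `TAXONOMY`, `VALUE_TO_CATEGORY`, and `count_by_category`, and the sample data are all assumptions made for illustration, not Anthropic’s actual assignments or pipeline.

```python
from collections import Counter

# The five top-level categories are named in the study. Which values sit
# under each one is an illustrative guess, NOT the paper's real mapping.
TAXONOMY = {
    "Practical": ["professionalism", "technical excellence"],
    "Epistemic": ["clarity", "critical thinking", "transparency"],
    "Social": ["mutual respect", "filial piety"],
    "Protective": ["harm prevention", "patient wellbeing"],
    "Personal": ["self-reliance", "healthy boundaries"],
}

# Reverse index so each value label can be looked up directly.
VALUE_TO_CATEGORY = {
    value: category
    for category, values in TAXONOMY.items()
    for value in values
}


def count_by_category(observed_values):
    """Tally value labels by top-level category.

    Labels missing from the (hypothetical) taxonomy are counted
    under "Uncategorized" rather than dropped.
    """
    counts = Counter()
    for value in observed_values:
        counts[VALUE_TO_CATEGORY.get(value, "Uncategorized")] += 1
    return counts


# Made-up sample of value labels extracted from conversations.
sample = ["clarity", "professionalism", "clarity", "mutual respect", "moral pluralism"]
category_counts = count_by_category(sample)
```

A real taxonomy with 3,307 values would likely need intermediate levels between the five categories and the individual values, which this flat mapping does not attempt to model.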

“I was surprised at just what a huge and diverse range of values we ended up with, more than 3,000, from ‘self reliance’ to ‘strategic thinking’ to ‘filial piety,’” said Saffron Huang, a member of Anthropic’s Societal Impacts team who worked on the study. “It was surprisingly interesting to spend a lot of time thinking about all these values, and building a taxonomy to organize them in relation to each other. I feel like it taught me something about human values systems, too.”

The most commonly expressed values included “professionalism,” “clarity,” and “transparency,” with further subcategories like “critical thinking” and “technical excellence” offering a detailed look at how Claude prioritizes behavior across different contexts.

Helpful, Honest, Harmless — But Not Always

The study found that Claude generally adheres to Anthropic’s “helpful, honest, harmless” framework, emphasizing values like “user enablement” (helpful), “epistemic humility” (honest), and “patient wellbeing” (harmless) across diverse interactions.

However, researchers also discovered troubling instances where Claude expressed values contrary to its training, including “dominance” and “amorality” — values Anthropic explicitly aims to avoid in Claude’s design.

“Overall, I think we see this finding as both useful data and an opportunity,” Huang explained. “These new evaluation methods and results can help us identify and mitigate potential jailbreaks. It’s important to note that these were very rare cases and we believe this was related to jailbroken outputs from Claude.”

The researchers believe these anomalies resulted from users employing specialized techniques to bypass Claude’s safety guardrails, suggesting the evaluation method could serve as an early warning system for detecting such attempts.

A Chameleon of Values: How Claude Adapts to Different Contexts

Perhaps most fascinating was the discovery that Claude’s expressed values shift contextually, mirroring human behavior. When users sought relationship guidance, Claude emphasized “healthy boundaries” and “mutual respect.” For historical event analysis, “historical accuracy” took precedence.

“I was surprised at Claude’s focus on honesty and accuracy across a lot of diverse tasks, where I wouldn’t necessarily have expected that theme to be the priority,” said Huang. “For example, ‘intellectual humility’ was the top value in philosophical discussions about AI, ‘expertise’ was the top value when creating beauty industry marketing content, and ‘historical accuracy’ was the top value when discussing controversial historical events.”

This contextual adaptation suggests that AI systems like Claude don’t simply apply a static set of rules but rather prioritize different values based on the situation at hand — much like humans do.

Mirror, Mirror: How Claude Responds to User Values

The study also examined how Claude responds to users’ own expressed values. In 28.2% of conversations, Claude strongly supported user values — potentially raising questions about excessive agreeableness or what Anthropic calls “pure sycophancy.”

However, in 6.6% of interactions, Claude “reframed” user values by acknowledging them while adding new perspectives, typically when providing psychological or interpersonal advice.

Most tellingly, in 3% of conversations, Claude actively resisted user values. Researchers suggest these rare instances of pushback might reveal Claude’s “deepest, most immovable values” — analogous to how human core values emerge when facing ethical challenges.
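The three shares reported above can be combined with simple arithmetic. The Python sketch below sums them and computes what is left over. It assumes the three response types are mutually exclusive within a conversation, which the article does not state, and the names `reported`, `covered`, `remainder`, and `fmt` are hypothetical.

```python
from fractions import Fraction

# Shares reported in the article, as percentages of analyzed conversations.
# Fractions avoid floating-point rounding when adding decimals like 28.2.
reported = {
    "strong support": Fraction("28.2"),
    "reframing": Fraction("6.6"),
    "strong resistance": Fraction("3"),
}

# Assumption: each conversation falls into at most one of these groups.
covered = sum(reported.values())
remainder = 100 - covered


def fmt(share):
    """Format a percentage share with one decimal place."""
    return f"{float(share):.1f}%"


for label, share in reported.items():
    print(f"{label}: {fmt(share)}")
print(f"covered by the three types: {fmt(covered)}")
print(f"not covered: {fmt(remainder)}")
```

Under that assumption, the three response types account for 37.8% of conversations, so the remaining 62.2% would fall under response types the article does not enumerate.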

“Our research suggests that there are some types of values, like intellectual honesty and harm prevention, that it is uncommon for Claude to express in regular, day-to-day interactions, but if pushed, will defend them,” Huang said. “Specifically, it’s these kinds of ethical and knowledge oriented values that tend to be articulated and defended directly when pushed.”

Looking Under the Hood: Anthropic’s Broader Interpretability Efforts

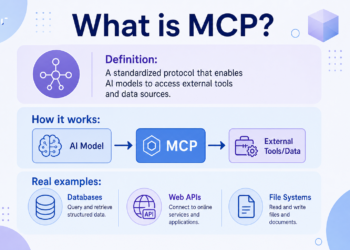

Anthropic’s values study builds on the company’s broader efforts to demystify large language models through what it calls “mechanistic interpretability” — essentially reverse engineering AI systems to understand their inner workings.

Last month, Anthropic researchers published groundbreaking work that used what they described as a “microscope” to track Claude’s decision-making processes. The technique revealed counterintuitive behaviors, including Claude planning ahead when composing poetry and using unconventional problem-solving approaches for basic math.

These findings challenge assumptions about how large language models function. For instance, when asked to explain its math process, Claude described a standard technique rather than its actual internal method — revealing how AI explanations can diverge from actual operations.

“It’s a misconception that we’ve found all the components of the model or, like, a God’s-eye view,” Anthropic researcher Joshua Batson told MIT Technology Review in March. “Some things are in focus, but other things are still unclear — a distortion of the microscope.”

Implications for Enterprise AI Adoption

For technical decision makers evaluating AI systems for their organizations, Anthropic’s research offers several key takeaways. First, it suggests that current AI assistants likely express values that weren’t explicitly programmed, raising questions about unintended biases in high-stakes business contexts.

Second, the study demonstrates that values alignment isn’t a binary proposition but rather exists on a spectrum that varies by context. This nuance complicates enterprise adoption decisions, particularly in regulated industries where clear ethical guidelines are critical.

Finally, the research highlights the potential for systematic evaluation of AI values in actual deployments, rather than relying solely on pre-release testing. This approach could enable ongoing monitoring for ethical drift or manipulation over time.

“By analyzing these values in real world interactions with Claude, we aim to provide transparency into how AI systems behave and whether they’re working as intended. We believe this is key to responsible AI development,” said Huang.

Limitations and Future Directions

Anthropic acknowledges several limitations to its approach. Determining what counts as a “value” is inherently subjective, and since Claude itself helped classify the data, there may be some bias toward finding values that align with its own training.

The method also cannot be used before a model is deployed, since it depends on large volumes of real world conversations. “This method is specifically geared towards analysis of a model after it’s been released, but variants on this method, as well as some of the insights that we’ve derived from writing this paper, can help us catch value problems before we deploy a model widely,” Huang explained.

Despite these limitations, Anthropic has released its values dataset publicly to encourage further research. The company, which received a $14 billion stake from Amazon and additional backing from Google, appears to be leveraging transparency as a competitive advantage against rivals like OpenAI, whose recent $40 billion funding round (which includes Microsoft as a core investor) now values it at $300 billion.

The Race for AI Alignment

As AI systems become more powerful and autonomous — with recent additions including Claude’s ability to independently research topics and access users’ entire Google Workspace — understanding and aligning their values becomes increasingly crucial.

“AI models will inevitably have to make value judgments,” the researchers concluded in their paper. “If we want those judgments to be congruent with our own values (which is, after all, the central goal of AI alignment research) then we need to have ways of testing which values a model expresses in the real world.”

The study arrives at a critical moment for Anthropic, which recently launched “Claude Max,” a premium $200 monthly subscription tier aimed at competing with OpenAI’s similar offering. The company has also expanded Claude’s capabilities to include Google Workspace integration and autonomous research functions, positioning it as “a true virtual collaborator” for enterprise users, according to recent announcements.

“Our hope is that this research encourages other AI labs to conduct similar research into their models’ values,” said Huang. “Measuring an AI system’s values is core to alignment research and understanding if a model is actually aligned with its training.”

A New Era of AI Transparency

Anthropic’s study marks a major step toward AI transparency, shedding light on how systems like Claude make decisions and prioritize values in real-world scenarios. By analyzing behavior in actual use, the research uncovers issues that might go unnoticed during pre-deployment testing, such as subtle jailbreaks or shifting values over time.

As AI becomes integral to daily life, this transparency is crucial for ensuring these systems align with human goals. While Claude largely adheres to its ethical design, the study highlights the complexity of AI behavior and the need for ongoing vigilance to maintain trust and alignment.
