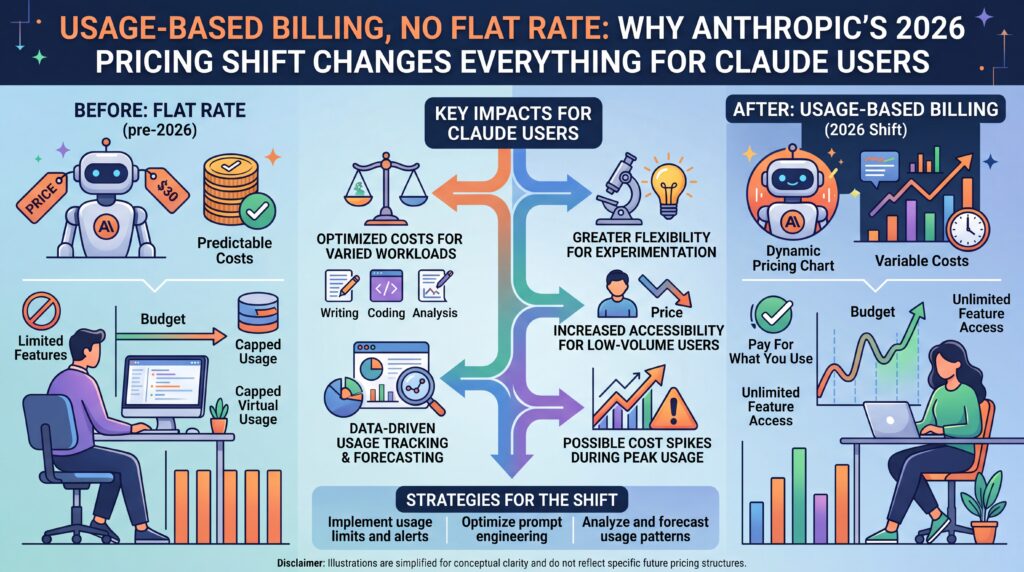

The company that built Claude on a flat-rate promise is quietly — and not so quietly — dismantling it. Here’s what changed, why it matters, and what you’ll actually pay in 2026.

For most of its commercial life, Anthropic’s pricing story was simple. Pay a monthly subscription, get Claude. Power users got more Claude. Enterprises got a lot of Claude. Token costs were something developers worried about, not regular subscribers. That story is now officially over.

In 2026, Anthropic has executed one of the more consequential pricing pivots in the AI industry — not by raising headline subscription prices, but by restructuring the underlying logic of who pays for what, and when. The shift is toward usage-based billing: metered, multiplied, and increasingly unavoidable for anyone doing serious work with Claude. Understanding the new architecture isn’t optional if you’re building on or budgeting for Anthropic.

Here’s the full breakdown, from the API pricing fundamentals to the decisions that are already sending shockwaves through the open-source developer community.

The OpenClaw Moment: When the Subsidy Ended

The clearest signal that Anthropic’s billing philosophy had changed came on April 4, 2026. The company blocked Claude Pro and Max subscribers from using their flat-rate plans with third-party AI agent frameworks — starting with OpenClaw, the open-source agent platform that had accumulated 247,000 GitHub stars and become what many called the fastest-growing repository in GitHub history.

The decision was blunt: if you want to run autonomous agents using Claude as the engine, you no longer get to do it under the cover of a fixed monthly fee. You pay separately, through a new “extra usage” pay-as-you-go billing tier. Anthropic said it would extend the restriction to all third-party harnesses in the coming weeks.

The economics behind the move were straightforward, even if the timing was jarring. Claude’s subscription plans were designed around conversational use: a human types a query, Claude responds. Agentic frameworks operate on an entirely different scale. A single OpenClaw instance running autonomously for a full day — browsing the web, managing calendars, executing code, responding to messages — can consume the equivalent of $1,000 to $5,000 in API costs, depending on the task load. Under a $200-per-month Max subscription, that’s an unsustainable transfer of compute costs from user to Anthropic.

“Anthropic’s subscriptions weren’t built for the usage patterns of these third-party tools,” said Boris Cherny, Head of Claude Code at Anthropic. “Capacity is a resource we manage thoughtfully and we are prioritising our customers using our products and API.”

More than 135,000 OpenClaw instances were estimated to be running at the time of the announcement. Some users reported facing cost increases of 10 to 50 times their previous monthly outlay. Anthropic offered a one-time credit equal to the user’s monthly plan cost, redeemable until April 17, and discounts of up to 30% for users who pre-purchased usage bundles. It was a concession, not a reversal.

Notably, Claude Code — Anthropic’s own coding assistant — was explicitly exempted from the restrictions. The message to developers was implicit but legible: build inside Anthropic’s ecosystem, or pay API rates to build outside it.

The API Pricing Landscape: A Three-Tier Architecture

For developers and engineers, the core of Anthropic’s billing is the API pricing structure, which charges per million tokens (MTok) with separate rates for input and output. Output tokens are consistently more expensive, reflecting the additional compute required to generate responses.

As of April 2026, the current-generation model rates are:

Claude Opus 4.6 — $5.00 input / $25.00 output per MTok. The flagship. Exceptional for complex multi-step reasoning, agentic pipelines, and nuanced tasks where quality directly impacts revenue. Supports 1M token context at flat rates with no surcharge. Max output: 128K tokens synchronously, up to 300K on the Batch API.

Claude Sonnet 4.6 — $3.00 input / $15.00 output per MTok. The recommended default for most production workloads. Delivers near-Opus quality at faster latency and 40% lower cost. Also supports 1M token context at flat rates. Max output: 64K tokens.

Claude Haiku 4.5 — $1.00 input / $5.00 output per MTok. The budget workhorse. Near-frontier intelligence at the lowest price in the current generation. Best for classification, routing, extraction, summarization, and any high-volume, latency-sensitive workload where every fraction of a cent matters.

For context on legacy pricing: Claude Opus 3, still available, costs $15.00/$75.00 per MTok, three times the Opus 4.6 rate on both input and output. Migrating off Claude Opus 3 is the single highest-ROI change most teams can make to their Anthropic bill today.
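The per-token arithmetic behind these rates can be sketched in a few lines. This is a minimal illustration that assumes the April 2026 rates quoted above; the `RATES` keys and the `request_cost` helper are hypothetical names for this example, not official model IDs or SDK functions.

```python
# Rates in USD per million tokens (MTok), copied from the list above.
# The dictionary keys are labels for this sketch, not official model IDs.
RATES = {
    "opus-4.6": {"input": 5.00, "output": 25.00},
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "haiku-4.5": {"input": 1.00, "output": 5.00},
    "opus-3": {"input": 15.00, "output": 75.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at standard (uncached, non-batch) rates."""
    rate = RATES[model]
    return (input_tokens * rate["input"] + output_tokens * rate["output"]) / 1_000_000


if __name__ == "__main__":
    # Same hypothetical request (10K input, 2K output tokens) priced on each model.
    for model in RATES:
        print(f"{model}: ${request_cost(model, 10_000, 2_000):.4f}")
```

Running the same request through each entry makes the gap between the legacy and current Opus rates visible without touching a real invoice.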

Fast Mode: When Anthropic Turned Latency Into a Product

The second major billing change in 2026 arrived with the February announcement of Claude Opus 4.6. Alongside the new model came a feature called Fast Mode — a high-speed inference configuration that delivers up to 2.5x faster output at a 6x price premium.

- Standard Opus 4.6: $5.00 input / $25.00 output per MTok

- Fast Mode (≤200K input tokens): $30.00 input / $150.00 output per MTok

- Fast Mode (>200K input tokens): $60.00 input / $225.00 output per MTok

At $30/$150 per MTok, a 200K-token context query costs $6 in input alone; a full 1M-token context falls into the higher tier and costs $60. That’s not a rounding error: it’s a structural change in what AI speed costs at scale. Anthropic explicitly calls this “premium pricing (6x standard rates)” in its documentation.

There’s an additional billing mechanic that caught many teams off guard: if you switch into Fast Mode mid-conversation, you pay the full Fast Mode uncached input token price for the entire conversation context — not just the new tokens. A session that began at standard rates can be retroactively repriced when a user toggles on speed.
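To make the repricing concrete, here is a rough sketch of the Fast Mode tiers listed above. It assumes the tier is chosen by the request's input token count and applied to the whole request, which the description above does not spell out; the function names are hypothetical.

```python
FAST_MODE_THRESHOLD = 200_000  # input tokens; above this the higher tier applies


def fast_mode_cost(input_tokens: int, output_tokens: int) -> float:
    """USD cost of one Opus 4.6 Fast Mode request (uncached), per the tiers above.

    Assumption: the tier is selected by input size and applied to the whole request.
    """
    if input_tokens <= FAST_MODE_THRESHOLD:
        in_rate, out_rate = 30.00, 150.00
    else:
        in_rate, out_rate = 60.00, 225.00
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


def standard_cost(input_tokens: int, output_tokens: int) -> float:
    """USD cost of the same request at standard Opus 4.6 rates (uncached)."""
    return (input_tokens * 5.00 + output_tokens * 25.00) / 1_000_000


if __name__ == "__main__":
    # A conversation with 150K tokens of accumulated context switches to Fast Mode:
    # the whole context is billed at the uncached Fast Mode input price.
    context, reply = 150_000, 1_000
    print(f"standard:  ${standard_cost(context, reply):.2f}")
    print(f"fast mode: ${fast_mode_cost(context, reply):.2f}")
```

The comparison is against uncached standard pricing; a session that had been reading its context from cache would see a larger jump.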

Fast Mode is also not available on Amazon Bedrock, Google Vertex AI, or Microsoft Azure Foundry. If your infrastructure strategy was “consume via cloud service provider for consolidated billing,” Anthropic just created a feature gap that nudges high-value usage toward direct channels and separate vendor invoices.

For subscription users, Fast Mode is available via extra usage only and is billed separately from plan-included usage from the first token — even if plan usage remains.

Prompt Caching: The Most Overlooked Cost Lever

Before getting to what this all costs in practice, it’s worth pausing on the most impactful pricing feature Anthropic offers — and the one most teams underuse: prompt caching.

Caching lets you store frequently reused content — system prompts, documents, tool definitions, examples — so subsequent API calls read from cache instead of reprocessing the full input. Cache hits cost 90% less than standard input tokens.

The mechanics: add cache_control: { type: "ephemeral" } to the content blocks you want cached. The first request writes the cache at 1.25x standard input cost for a 5-minute TTL, or 2.0x for a 1-hour TTL. Any subsequent request within the TTL that hits the same content pays only 0.10x standard input. Accessing a cached block resets its TTL, so high-frequency applications rarely pay write costs after warm-up.
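As a sketch of what that looks like in a request body, the snippet below builds a Messages API payload as a plain dictionary without sending it. The `build_cached_request` helper and the model placeholder are hypothetical, and the `ttl` field for the 1-hour option is written as I understand the API; check the current documentation before relying on it.

```python
import json


def build_cached_request(system_text: str, user_text: str, one_hour: bool = False) -> dict:
    """Build a request body whose system prompt is marked as cacheable."""
    cache_control = {"type": "ephemeral"}  # default 5-minute TTL
    if one_hour:
        cache_control["ttl"] = "1h"  # assumed field for the 1-hour TTL option
    return {
        "model": "MODEL_ID_PLACEHOLDER",  # hypothetical placeholder, not a real model ID
        "max_tokens": 1024,
        "system": [
            {"type": "text", "text": system_text, "cache_control": cache_control}
        ],
        "messages": [{"role": "user", "content": user_text}],
    }


if __name__ == "__main__":
    body = build_cached_request("Long, reused knowledge base...", "What is the refund policy?")
    print(json.dumps(body, indent=2))
```

Only the block carrying `cache_control` is cached, so the reused material belongs in that block and the per-request question stays in `messages`.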

Consider the real-world impact. A RAG application with a 50,000-token knowledge base in the system prompt, queried 1,000 times per day on Sonnet 4.6:

- Without caching: 50M input tokens/day at $3.00/MTok = $150/day, $4,500/month

- With caching: ~$7.20/day in hourly cache writes + ~$14.99/day in cache hits = ~$22/day, $666/month

That’s an 85% cost reduction on the same workload with the same model. Prompt caching isn’t just an optimization — for any application with a large, reused system prompt or document context, it is the single most impactful change you can make to your Anthropic bill.
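The arithmetic behind that comparison can be reproduced with a short script. This sketch assumes one cache write per hour (24 per day) at the 1-hour TTL multiplier and treats every other request as a cache hit; it lands a few cents per day below the hit figure above, which does not change the roughly 85% conclusion. All names are illustrative.

```python
INPUT_RATE = 3.00        # Sonnet 4.6 input, USD per MTok
WRITE_MULT_1H = 2.0      # cache write multiplier for a 1-hour TTL
HIT_MULT = 0.10          # cache read multiplier
PROMPT_TOKENS = 50_000   # size of the reused knowledge base
REQUESTS_PER_DAY = 1_000
WRITES_PER_DAY = 24      # assumption: the cache is rewritten once per hour


def daily_costs() -> tuple[float, float]:
    """Return (uncached, cached) daily input cost in USD for the reused prompt."""
    prompt_mtok = PROMPT_TOKENS / 1_000_000
    uncached = REQUESTS_PER_DAY * prompt_mtok * INPUT_RATE
    writes = WRITES_PER_DAY * prompt_mtok * INPUT_RATE * WRITE_MULT_1H
    hits = (REQUESTS_PER_DAY - WRITES_PER_DAY) * prompt_mtok * INPUT_RATE * HIT_MULT
    return uncached, writes + hits


if __name__ == "__main__":
    uncached, cached = daily_costs()
    print(f"uncached: ${uncached:.2f}/day, ${uncached * 30:.0f}/month")
    print(f"cached:   ${cached:.2f}/day, ${cached * 30:.0f}/month")
    print(f"reduction: {1 - cached / uncached:.1%}")
```

Because a cache read resets the TTL, a steadily queried application would likely pay for fewer than 24 writes per day, so this estimate errs on the expensive side.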

Batch Processing: 50% Off, No Quality Penalty

For workloads that don’t require real-time responses, the Anthropic Message Batches API provides exactly 50% off standard token prices across all models. Processing is asynchronous with results returned within 24 hours. There is no quality difference — only timing.

Current batch rates:

- Claude Opus 4.6: $2.50 input / $12.50 output per MTok

- Claude Sonnet 4.6: $1.50 input / $7.50 output per MTok

- Claude Haiku 4.5: $0.50 input / $2.50 output per MTok

A team processing 500,000 documents per month could save $750 to $2,250 monthly simply by shifting overnight jobs to the Batch API. Best workloads for batch: document processing pipelines, data enrichment at scale, nightly analytics, offline evaluations, content generation queues.
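A quick way to estimate batch savings for a given workload is sketched below. The per-document token counts are an assumption for illustration, since the figure above does not state them; with 2,000 input and 200 output tokens per document, Haiku 4.5 and Sonnet 4.6 happen to bracket the quoted range.

```python
STANDARD_RATES = {  # USD per MTok, from the standard price list
    "opus-4.6": {"input": 5.00, "output": 25.00},
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "haiku-4.5": {"input": 1.00, "output": 5.00},
}
BATCH_DISCOUNT = 0.5  # Batch API: 50% off standard token prices


def batch_rate(model: str, kind: str) -> float:
    """Return the batch price per MTok for 'input' or 'output' tokens."""
    return STANDARD_RATES[model][kind] * (1 - BATCH_DISCOUNT)


def monthly_batch_savings(model: str, documents: int,
                          input_per_doc: int, output_per_doc: int) -> float:
    """USD saved per month by moving the whole workload from standard to batch."""
    rate = STANDARD_RATES[model]
    per_doc = (input_per_doc * rate["input"] + output_per_doc * rate["output"]) / 1_000_000
    return documents * per_doc * BATCH_DISCOUNT


if __name__ == "__main__":
    # Hypothetical workload: 500,000 documents, 2,000 input + 200 output tokens each.
    for model in STANDARD_RATES:
        saved = monthly_batch_savings(model, 500_000, 2_000, 200)
        print(f"{model}: saves ${saved:,.0f}/month")
```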

One notable advantage: the Batch API supports up to 300,000 output tokens per request on Opus 4.6 and Sonnet 4.6 (using the output-300k-2026-03-24 beta header) — significantly more than the synchronous 128K and 64K limits respectively. For long-form generation at scale, batch isn’t just cheaper; it’s more capable.

Long-Context Pricing: Where Surcharges Hide

Not all context windows are priced equally, and the differences are significant enough to materially affect cost planning.

Claude Opus 4.6 and Sonnet 4.6 both support 1M token contexts at completely flat rates — no surcharge at any context length. This is a genuine competitive advantage for large-context workloads.

Claude Sonnet 4.5 on the 1M-token beta is a different story. Once input tokens exceed 200,000, the entire session is billed at 2x input and 1.5x output — not just the tokens beyond the threshold, but all tokens in the request. A 300,000-token prompt on Sonnet 4.5 does not cost the same as on Sonnet 4.6. The advice here is simple: for any large-context work, migrate to Sonnet 4.6. The base price is identical; the surcharge risk is zero.
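The difference is easiest to see side by side. The sketch below applies the surcharge rule as described above (2x input and 1.5x output on the whole request once input exceeds 200,000 tokens) and compares it with flat Sonnet 4.6 pricing; the function names and the 4,000-token output are illustrative assumptions.

```python
LONG_CONTEXT_THRESHOLD = 200_000  # input tokens
BASE_INPUT, BASE_OUTPUT = 3.00, 15.00  # USD per MTok, same base price for both models


def sonnet_45_cost(input_tokens: int, output_tokens: int) -> float:
    """Sonnet 4.5 (1M beta): the whole request is surcharged past the threshold."""
    in_rate, out_rate = BASE_INPUT, BASE_OUTPUT
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        in_rate *= 2.0
        out_rate *= 1.5
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


def sonnet_46_cost(input_tokens: int, output_tokens: int) -> float:
    """Sonnet 4.6: flat rates at any context length."""
    return (input_tokens * BASE_INPUT + output_tokens * BASE_OUTPUT) / 1_000_000


if __name__ == "__main__":
    prompt, reply = 300_000, 4_000  # hypothetical large-context request
    print(f"Sonnet 4.5: ${sonnet_45_cost(prompt, reply):.2f}")
    print(f"Sonnet 4.6: ${sonnet_46_cost(prompt, reply):.2f}")
```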

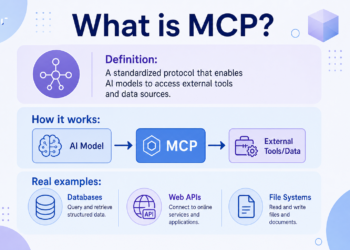

The Enterprise Billing Transition

Beyond individual developers and teams, Anthropic’s enterprise billing has fundamentally shifted toward usage-based models. Per Anthropic’s own documentation: enterprise billing is usage-based, and “you cannot disable billing for usage.” Older enterprise billing models — including seat-based or older seat types — will transition at renewal to a single Enterprise seat model plus usage-based billing.

The Enterprise plan structure, as of April 2026, sits at $20 per seat per month as a base fee, with all usage charged at standard API rates. What that buys in addition to API access: extended context windows (500K tokens on Sonnet 4.6 for standard Enterprise, 1M tokens with Claude Code), audit logs, SCIM for automated provisioning, a Compliance API for programmatic access to activity logs, custom data retention controls, IP allowlisting, and HIPAA readiness through sales-assisted agreements.

The data training policy is also a key differentiator between tiers. Consumer plans (Free, Pro, Max) operate on an opt-out model — you must actively disable training on your data through account settings. Commercial plans (Team, Enterprise) guarantee no training by default through contractual terms. For compliance-conscious buyers in regulated industries, that contractual guarantee is often the deciding factor.

The Subscription Plans Still Exist — But With a Caveat

It would be misleading to suggest Anthropic has eliminated flat-rate subscriptions. They still exist, and for conversational use they still make sense. The critical distinction is that subscriptions give access to claude.ai — not the API. API usage is always billed per token, regardless of subscription status. Teams building on Claude pay for both independently.

Current individual plan pricing:

- Free: $0, basic access, web search, limited usage

- Pro: $20/month ($17/month billed annually), ~5x Free usage, Claude Code, unlimited projects

- Max 5x: $100/month, 5x Pro usage, early feature access, priority during peak

- Max 20x: $200/month, 20x Pro usage, intended for full-time AI-assisted development

Team plan pricing starts at $25 per seat per month (or $20 annually) for Standard seats, and $125 per seat per month ($100 annually) for Premium seats, which provide 6.25x Pro usage.

Even within subscriptions, the April 4 change fundamentally altered what “flat rate” means for anyone using Claude beyond direct chat. If you are running autonomous workflows through third-party frameworks, those are no longer covered by your subscription.

Real-World Costs: Three Scenarios

Customer support chatbot at 10,000 conversations per day (~2,000 input + 500 output tokens per conversation, 5,000-token system prompt cached hourly):

- Sonnet 4.6 with caching: ~$540/month

- Haiku 4.5 without caching: ~$390/month

- Opus 4.6 without caching: ~$3,450/month (roughly 6x the cached Sonnet cost, rarely justified)

Bulk document processing at 100,000 documents per month (5,000 tokens each, async batch, overnight):

- Haiku 4.5 batch: ~$150/month

- Sonnet 4.6 batch: ~$900/month

- Opus 4.6 batch: ~$1,875/month

Agentic coding assistant at 500 sessions per day (~20,000 tokens each, real-time, minimal caching):

- Opus 4.6: ~$2,250/month

- Sonnet 4.6: ~$1,350/month

- Haiku 4.5: ~$450/month

For agentic coding specifically, routing by task complexity delivers the best outcome: Haiku for simple completions, Sonnet for most code tasks, Opus only for the most complex architectural reasoning. A well-implemented router can bring blended costs close to Haiku rates while maintaining Opus quality where it counts.
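The agentic coding figures and the effect of routing can be sketched as follows. Two assumptions are mine rather than the article's: the 20,000 tokens per session are split 17,500 input / 2,500 output (a split that reproduces the monthly figures above), and the routing shares are purely hypothetical.

```python
RATES = {  # USD per MTok
    "opus-4.6": {"input": 5.00, "output": 25.00},
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "haiku-4.5": {"input": 1.00, "output": 5.00},
}
SESSIONS_PER_DAY = 500
DAYS_PER_MONTH = 30
# Assumed split of the ~20,000 tokens per session; not stated in the scenario.
INPUT_TOKENS, OUTPUT_TOKENS = 17_500, 2_500


def monthly_cost(model: str, share: float = 1.0) -> float:
    """USD per month if `share` of all sessions run on `model`."""
    rate = RATES[model]
    per_session = (INPUT_TOKENS * rate["input"] + OUTPUT_TOKENS * rate["output"]) / 1_000_000
    return SESSIONS_PER_DAY * DAYS_PER_MONTH * share * per_session


def routed_cost(shares: dict[str, float]) -> float:
    """Blended monthly cost for a routing mix whose shares sum to 1."""
    return sum(monthly_cost(model, share) for model, share in shares.items())


if __name__ == "__main__":
    for model in RATES:
        print(f"{model}: ${monthly_cost(model):,.0f}/month")
    # Hypothetical router: 60% Haiku, 30% Sonnet, 10% Opus.
    mix = {"haiku-4.5": 0.6, "sonnet-4.6": 0.3, "opus-4.6": 0.1}
    print(f"routed mix: ${routed_cost(mix):,.0f}/month")
```

How close the blend gets to Haiku rates depends entirely on the share of sessions that genuinely need a larger model, so the mix is worth measuring rather than guessing.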

What This Means for FinOps in 2026

The FinOps story around Anthropic in 2026 is no longer “know the token price.” The work is governing tiers, multipliers, and feature meters before the organization discovers them through an invoice.

The practical checklist:

- Implement prompt caching on any reused system prompt or document context exceeding 1,000 tokens — the 85-90% reduction on cached input is the highest-ROI optimization available.

- Audit model tier usage: downgrade from Opus to Sonnet wherever quality is equal; from Sonnet to Haiku for high-volume simple tasks. Opus costs about 1.67x Sonnet and 5x Haiku on both input and output.

- Move async workloads to the Batch API — 50% off with no quality penalty.

- Migrate off Sonnet 4.5 for large-context work — avoid the 200K surcharge by moving to Sonnet 4.6, same base price, no surcharge risk.

- Govern Fast Mode explicitly — at 6x standard rates, it should never be the default. Enterprise admins can disable it; do so unless there’s a concrete business case for the speed premium.

- Watch tool-level costs — web search via the Anthropic API costs $10 per 1,000 searches on top of token costs. Code execution has its own billing tier after 1,550 free hours per month per organization.

- Monitor US-only inference: if you’re specifying inference_geo for data residency, you’re paying a 1.1x multiplier across all Opus 4.6 token categories. That’s 10% on every token; at scale, it compounds.
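Two of the meters in this checklist are simple enough to model directly. The sketch below covers the web search fee and the US-only inference multiplier on Opus 4.6 tokens, using the figures quoted above; the function names and the sample volumes are hypothetical, and it does not attempt to model how multipliers combine with Fast Mode or caching.

```python
WEB_SEARCH_USD_PER_1000 = 10.00  # on top of token costs
US_ONLY_MULTIPLIER = 1.1         # applied to Opus 4.6 token prices
OPUS_INPUT, OPUS_OUTPUT = 5.00, 25.00  # USD per MTok


def web_search_cost(searches: int) -> float:
    """USD cost of web searches made through the API."""
    return searches * WEB_SEARCH_USD_PER_1000 / 1_000


def opus_token_cost(input_tokens: int, output_tokens: int, us_only: bool = False) -> float:
    """USD token cost on Opus 4.6, optionally with the US-only inference multiplier."""
    cost = (input_tokens * OPUS_INPUT + output_tokens * OPUS_OUTPUT) / 1_000_000
    return cost * US_ONLY_MULTIPLIER if us_only else cost


if __name__ == "__main__":
    # Hypothetical month: 200M input tokens, 40M output tokens, 25,000 searches.
    base = opus_token_cost(200_000_000, 40_000_000)
    geo = opus_token_cost(200_000_000, 40_000_000, us_only=True)
    print(f"tokens: ${base:,.2f} standard, ${geo:,.2f} US-only")
    print(f"web search: ${web_search_cost(25_000):,.2f}")
```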

The Bigger Picture

Anthropic’s $3 billion raise in early 2026 came with language about building “artificial super-intelligence for science” and expanding research infrastructure. What it also reflects is the commercial pressure of running one of the most computationally intensive products in the world at scale. Compute costs don’t flatten because users prefer flat subscription pricing.

The pattern emerging across AI providers — Anthropic, OpenAI, and others — is consistent: performance, speed, and heavy usage are becoming premium SKUs. Flat rates were a user acquisition strategy. Metered billing, usage tiers, multipliers, and governance levers are the mature commercial model.

For an AI industry that spent 2025 racing to acquire users, 2026 is increasingly about working out who actually pays for them — and how much.
