A rigorous guide to the best AI tools in 2026, ranked across chat, search, coding, image, video, voice, RAG, MLOps, and enterprise AI.

In 2026, “best AI tool” is the wrong question. The real question is: which five-tool stack saves you the most time for the least friction?

Most AI tools lists fail for one reason: they rank everything together. But a research engine, a coding copilot, a voice API, and a vector database are not substitutes. They are layers. A freelance writer and a platform engineer deploying retrieval-augmented generation into production have almost nothing in common in their tool needs — except that both probably start their day talking to an AI assistant.

The market is finally separating into winners. A small set of tools now dominates everyday work, while another smaller set runs the infrastructure behind serious AI products. This guide covers both halves. The first section is for everyone: the assistants, search engines, coding tools, and creative apps that form the modern knowledge worker’s baseline. The second section is for builders and operations teams: the frameworks, databases, evaluation platforms, and enterprise layers that turn AI experiments into governed production systems.

We ranked 20 tools. Not 50, not 100. Twenty — because that is the realistic ceiling of what a single person or team can actually evaluate and adopt. Here is how we chose them, and why each one earned its spot.

How We Scored: Methodology

This ranking is an editorial utility ranking, not a pure model leaderboard. We used a weighted framework across seven dimensions:

- Practical breadth of use cases — How many real workflows does this tool improve?

- Quality and performance within category — Is it best-in-class or just adequate?

- Ecosystem and integrations — Does it play well with other tools, or does it live on an island?

- Pricing and value — Can a solo user and a 500-person team both find a plan that makes sense?

- Enterprise readiness and privacy controls — Does it have the governance surface serious buyers require?

- Product velocity (2024–2026) — Is the team shipping fast, or coasting?

- Public customer proof — Are real organizations using it in production, not just in demos?
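
As a rough illustration of how a weighted framework over these seven dimensions can be turned into a single score, here is a minimal Python sketch. The weights, dimension keys, and ratings are all invented for the example; the weights actually used for this ranking are not stated here.

```python
# Hypothetical weights for the seven dimensions listed above.
# These numbers are illustrative assumptions, not the ranking's real weights.
WEIGHTS = {
    "breadth": 0.20,
    "quality": 0.20,
    "ecosystem": 0.15,
    "pricing": 0.15,
    "enterprise": 0.10,
    "velocity": 0.10,
    "customer_proof": 0.10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-dimension ratings (0-10) into one weighted score (0-10)."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(WEIGHTS.values())
    # Dividing by the total keeps the score on a 0-10 scale
    # even if the weights do not sum exactly to 1.
    return sum(WEIGHTS[d] * ratings[d] for d in WEIGHTS) / total_weight


# Made-up ratings for two imaginary tools.
tool_a = {"breadth": 9, "quality": 8, "ecosystem": 8, "pricing": 7,
          "enterprise": 6, "velocity": 8, "customer_proof": 6}
tool_b = {"breadth": 5, "quality": 9, "ecosystem": 6, "pricing": 8,
          "enterprise": 9, "velocity": 6, "customer_proof": 8}

tools = {"Tool A": tool_a, "Tool B": tool_b}
ranked = sorted(tools.items(), key=lambda kv: weighted_score(kv[1]), reverse=True)

for name, ratings in ranked:
    print(f"{name}: {weighted_score(ratings):.2f}")
```

With these particular weights, breadth counts for more than enterprise readiness, so a broad generalist can outrank a narrower tool with stronger governance. Changing the weights changes the order, which is why this is an editorial ranking and not a leaderboard.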

Benchmarks informed the ranking but did not dominate it. Chatbot Arena measures broad human preference across models. MMMU tests multimodal reasoning. LiveCodeBench and SWE-bench evaluate coding ability. ParseBench measures document processing. Each tells a different story, and none alone tells a reader which tool will save the most time or money in real workflows.

The category sweep was intentionally broad: LLMs, multimodal assistants, search/answer engines, office copilots, code assistants, image tools, video tools, audio/voice tools, agent frameworks, RAG/data frameworks, vector databases, MLOps/evaluation, data labeling, model hubs, privacy/edge runtimes, and vertical enterprise knowledge AI. A few categories were considered but deprioritized. Standalone embeddings vendors matter technically, but most readers in 2026 buy embeddings as part of a broader platform. Strictly vertical AI apps can be excellent, but they weaken a “most people need” framing unless they also solve a horizontal problem.

The net effect is a list that deliberately favors tools with repeatable, defensible adoption patterns instead of novelty.

A note on limitations: Several enterprise tools use usage-based, minimum-commitment, or custom pricing, so exact comparisons are not always apples-to-apples. Public customer proof is uneven — some vendors publish rich case studies while others publish strong docs but sparse named-customer evidence. Benchmark positions can shift quickly, and category benchmarks are not interchangeable across assistants, coding tools, multimodal tools, and document-processing systems. We make these limits explicit while still being decisive.

The Top 20 at a Glance

| Rank | Tool | Category | Entry Price | Best For |
|---|---|---|---|---|
| 1 | ChatGPT | General assistant | Free; Plus $20/mo | Broadest everyday AI utility |
| 2 | Claude | Writing & analysis assistant | Free; Pro $20/mo | Long-context reasoning, writing, agentic coding |
| 3 | Gemini | Multimodal assistant | Free; AI Pro $19.99/mo | Google-native multimodal work |
| 4 | Microsoft Copilot | Enterprise work assistant | Free; Business from $21/user/mo | Organizations in the Microsoft 365 ecosystem |
| 5 | Perplexity | Research & answer engine | Free; Pro $20/mo | Source-backed research and synthesis |
| 6 | GitHub Copilot | Team coding assistant | Free; Pro $10/mo | Default coding copilot for professional teams |
| 7 | Cursor | AI-native IDE | Hobby free; Pro $20/mo | Agentic editing and deep IDE integration |
| 8 | Midjourney | Image generation | Basic $10/mo | Pure image quality and aesthetics |
| 9 | Adobe Firefly | Commercial-safe creative AI | Varies by plan | Enterprise-safe creative workflows |
| 10 | Runway | Video generation | Free; Standard $12/user/mo | Production-ready AI video |
| 11 | ElevenLabs | Voice & audio | Free; Starter $6/mo | TTS, dubbing, voice agents, multilingual audio |
| 12 | Hugging Face | Model hub & open ecosystem | Free; Pro $9/mo | Open models, datasets, and community |
| 13 | Ollama | Local/private runtime | Free; Pro $20/mo | Running open models locally and privately |
| 14 | LangChain / LangSmith | Agent framework + observability | Free tier; Plus from $39/seat/mo | Agent orchestration, tracing, evals |
| 15 | LlamaIndex | RAG & document-agent framework | Free 10k credits/mo | Document-heavy RAG and extraction |
| 16 | Pinecone | Vector database | Starter $0; Standard $50/mo min | Managed vector search for production retrieval |
| 17 | Weights & Biases | MLOps & evals | Free tier available | Experiment tracking and agent observability |
| 18 | Databricks Mosaic AI | Enterprise data + AI platform | Trial + pay-as-you-go | Governed enterprise AI at scale |
| 19 | Labelbox | Data labeling & RLHF | Free to start | Human-data generation and eval pipelines |
| 20 | Glean | Enterprise search & work AI | Enterprise pricing | Enterprise knowledge retrieval and agents |

Part One: The Tools Most People Need

This is the stack for knowledge workers, freelancers, creators, and developers who want AI to save time today — not next quarter.

Universal Assistants

These are the front doors to AI for most people. If you adopt nothing else, pick one of these.

1. ChatGPT — The Default Starting Point

By OpenAI · chatgpt.com

ChatGPT remains the best general-purpose AI assistant to anchor any toolkit because it spans writing, analysis, ideation, coding, files, and team workflows. It is not always the best at any single task, but it is strong at more tasks than any other tool on this list.

Primary use cases: Drafting, summarization, analysis, brainstorming, light coding, research prep, file Q&A.

What stands out: Broad capability coverage, strong ecosystem familiarity, and a product surface that has expanded well beyond simple chat. The prompt-template ecosystem around ChatGPT is massive, which lowers the barrier for new users to get value fast.

Pricing:

- Free tier available

- Plus: $20/month

- Pro: $200/month

- Business: from $25/user/month (billed annually)

- Enterprise: custom pricing

Strengths: Breadth, familiarity, easy recommendation for first-time buyers. If someone asks “which AI tool should I try first?” — the answer is still ChatGPT.

Weaknesses: Premium tiers get expensive quickly. Official public customer references specifically for ChatGPT are less neatly packaged than for some enterprise-focused products.

Recent updates: GPT-4.1 became available in ChatGPT, alongside a broader ladder of paid tiers.

Best for: Almost everyone. This is the generalist’s generalist.

2. Claude — The Thinker’s Assistant

By Anthropic · claude.ai

Claude is the strongest editorial counterweight to ChatGPT, especially for long-context reasoning, writing, slides and spreadsheets, and coding-adjacent agent workflows. Where ChatGPT wins on breadth, Claude wins on depth — particularly for work that rewards careful, nuanced prose and extended analysis.

Primary use cases: Long-form writing, document analysis, knowledge work, deep research, coding with Claude Code.

What stands out: A 1M-token context window (in beta for Sonnet 4.6 and Opus 4.6), strong coding and agent positioning, and a growing product family that now includes Claude Code and design features.

Pricing:

- Free tier available

- Pro: $20/month

- Max: $100 or $200/month

- Team: $25/user/month (billed annually) or $30/user/month (billed monthly)

- Enterprise: custom

- API: token-based pricing

Strengths: Excellent prose and reasoning quality, strong enterprise narrative, strong agentic coding story.

Weaknesses: Pricing expands fast at higher tiers. API and app billing are separate systems.

Notable customers: Official stories cite Affinity and Autodesk workflows.

Recent updates: Opus 4.5 in late 2025, Opus 4.6 and Sonnet 4.6 in early 2026, Opus 4.7 in April 2026.

Best for: Writers, analysts, PMs, researchers, and developers who value reasoning quality over raw speed.

3. Gemini — The Google-Native Powerhouse

By Google · gemini.google.com

Gemini is the best multimodal, Google-native AI suite and the most credible “AI inside your daily apps” story outside Microsoft. If your life runs on Gmail, Docs, Meet, and Google Drive, Gemini is the assistant that already knows where everything is.

Primary use cases: Writing, planning, Deep Research, multimodal analysis, Workspace assistance, coding via Gemini Code Assist, and app-building through Google AI Studio and Vertex AI.

What stands out: Deep Research, large context windows, Gemini embedded in Gmail/Docs/Meet, and support across the Gemini app, Workspace, Code Assist, AI Studio, and Vertex AI.

Pricing:

- Free tier available

- Google AI Pro: $19.99/month

- Google AI Ultra: $249.99/month

- Business pricing distributed across Workspace and Google Cloud plans

Strengths: Strongest cross-product Google integration, multimodal breadth, and very large platform surface area.

Weaknesses: Packaging can be confusing because consumer, Workspace, Code Assist, AI Studio, and Vertex AI pricing are separate layers.

Notable customers: Official Workspace stories cite Pepperdine University, Pennymac, and Adore Me.

Recent updates: Gemini 3.1 Pro rollout and ongoing Workspace packaging changes in 2025–2026.

Best for: Google-centric consumers, marketers, analysts, and developers.

4. Microsoft Copilot — The Enterprise Workhorse

By Microsoft · copilot.microsoft.com

Microsoft Copilot is the best enterprise work assistant for organizations already standardized on Microsoft 365. It does not try to be everything — it tries to be the best AI layer on top of the tools your company already pays for.

Primary use cases: Email, meetings, PowerPoint, Excel analysis, Teams knowledge work, agent-building in the Microsoft stack.

What stands out: Grounding in Microsoft 365 and Graph data, Copilot Chat for eligible business users, connectors for external data, and plugin/agent extensibility.

Pricing:

- Free Copilot and Copilot Chat options for some scenarios

- Microsoft 365 Premium for individuals: $19.99/month (includes Copilot)

- Copilot Business: $21/user/month (annual promo at $18/user/month)

- Microsoft 365 Copilot Enterprise: $30/user/month with a qualifying Microsoft 365 plan

Strengths: Strongest Excel/Outlook/Teams angle, deep enterprise controls, strong connector story.

Weaknesses: Best experience depends on already paying for Microsoft licenses and living inside the ecosystem.

Notable customers: Official stories include mci group and La Poste.

Recent updates: Connector expansion, plugin support for agents, and 2026 pricing/package changes.

Best for: Business teams, large organizations, operations, finance, and sales leaders already in the Microsoft ecosystem.

5. Perplexity — The Research Engine

By Perplexity · perplexity.ai

Perplexity is the cleanest answer-engine and research product on the market, especially for readers who want source-backed synthesis rather than open-ended chat. When you need to know something and need to trust the answer, Perplexity is where you go.

Primary use cases: Fast research, market scans, source-backed Q&A, deep research reports, grounded API experiences, and increasingly agentic browsing and computer tasks.

What stands out: Web-grounded answers with visible sources, model choice, enterprise connectors, premium citations to proprietary sources, and an API platform spanning Agent, Search, Sonar, and Embeddings.

Pricing:

- Free tier available

- Pro: $20/month or $200/year

- Enterprise Pro: $40/month per seat or $400/year

- Enterprise Max: $325/month per seat or $3,250/year

Strengths: Speed, source visibility, low-friction research UX.

Weaknesses: Less suitable than frontier chat tools for open-ended writing or workflow-heavy office automation.

Notable customers: Official API stories include Zoom, SAP’s Joule, and Rox.

Recent updates: Major 2026 changelog entries include Personal Computer on Mac, enterprise Memory, model additions, and finance features.

Best for: Analysts, founders, journalists, consultants, and operators who value cited sources over conversational flair.

Coding and Builder Productivity

If you write code — professionally or as a side project — these two tools represent the current frontier.

6. GitHub Copilot — The Team Standard

By GitHub · github.com/features/copilot

GitHub Copilot is still the default coding copilot for teams because it is embedded where developers already work: GitHub, IDEs, CLI, pull requests, and enterprise policy surfaces. It is not always the flashiest option, but it is the one most engineering managers can greenlight without a long procurement fight.

Primary use cases: Inline completion, chat, code review, cloud agents, pull request help, and enterprise coding assistance.

What stands out: Broad model access, organization controls, SDK, CLI, agent features, and native GitHub integration.

Pricing:

- Free tier available

- Pro: $10/month

- Pro+: $39/month

- Business: $19/user/month

- Enterprise: $39/user/month

- Additional premium requests and AI credits are usage-based

Strengths: Distribution, governance, team rollout simplicity, enterprise familiarity.

Weaknesses: Less opinionated than Cursor, and the 2026 move to usage-based billing adds budgeting complexity.

Notable customers: Official stories include Accenture and Duolingo.

Recent updates: Copilot CLI reached GA in February 2026, organization custom instructions reached GA in April 2026, and all plans moved toward AI-credit billing beginning June 2026.

Best for: Professional developers and engineering organizations that want a governed, enterprise-friendly coding assistant.

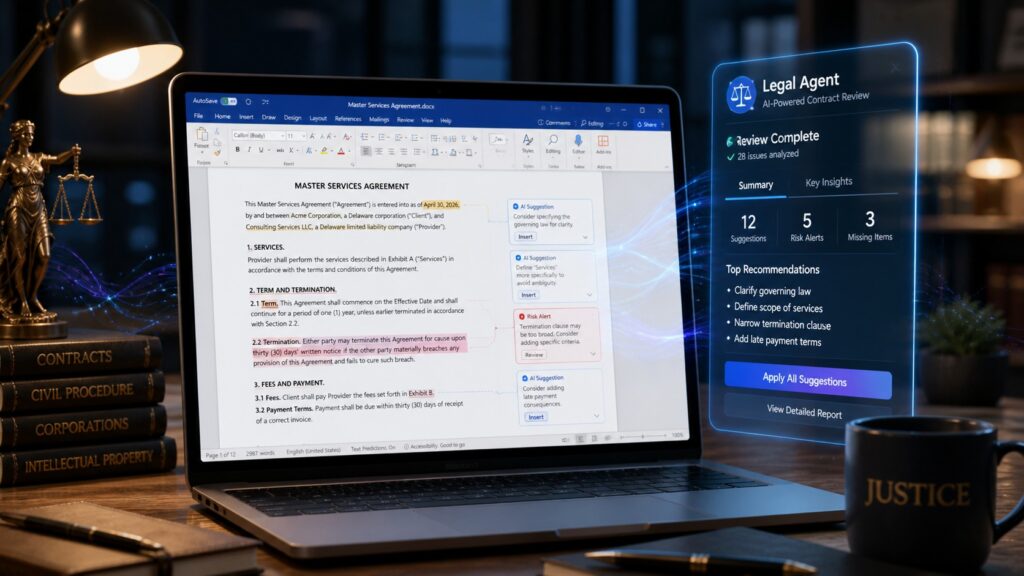

7. Cursor — The Agent-First IDE

By Anysphere · cursor.com

Cursor is the best AI-native IDE for developers who want agents, refactors, and parallelized coding work — not just autocomplete. If GitHub Copilot is the responsible team choice, Cursor is the power user’s choice.

Primary use cases: Greenfield app builds, repo-wide edits, multi-file refactors, debugging, cloud agents, agent automations.

What stands out: Multi-agent workflows, Composer 2, MCP support, cloud agents, agent automations, and the new Cursor 3 workspace.

Pricing:

- Hobby: free

- Pro: $20/month

- Pro+: $60/month

- Ultra: $200/month

- Teams: $40/user/month

- Enterprise: custom

Strengths: Fastest-moving AI IDE brand, powerful agent abstractions, strong community adoption.

Weaknesses: Usage-based surprises remain a real complaint vector. Best fit is still for hands-on developers rather than casual users.

Notable customers: Official stories cite Stripe, National Australia Bank, Amplitude, and Box.

Recent updates: Cursor 3 in April 2026, Composer 2 in March 2026, and a high-velocity 2026 changelog around security agents, plugins, and CLI improvements.

Best for: Individual developers, startup teams, and engineering orgs experimenting with agentic coding.

Creative AI

Four tools that cover the creative spectrum: images, commercially safe design, video, and voice.

8. Midjourney — The Aesthetic Standard

By Midjourney · midjourney.com

Midjourney remains the strongest editorial pick for pure image-generation because the brand still maps most cleanly to visual taste and aesthetic output quality. When art directors and designers talk about AI-generated images that actually look good, Midjourney is still the first name that comes up.

Primary use cases: Concept art, marketing visuals, art direction exploration, thumbnails, styleboards, character exploration.

What stands out: V8.1 speed and prompt adherence, HD images, strong style-reference tooling, Stealth Mode on higher tiers, and unlimited Relax-mode image generation on Standard and above.

Pricing:

- Basic: $10/month

- Standard: $30/month

- Pro: $60/month

- Mega: $120/month

- 20% annual discounts available

- No durable free tier

Strengths: Visual quality and taste that still leads the category.

Weaknesses: Weaker enterprise/API story than Adobe or Runway. Public official customer references are sparse.

Recent updates: V8 Alpha in March 2026 and V8.1 on April 30, 2026.

Best for: Creators, art directors, indie marketers, and agencies who prioritize visual quality above all else.

9. Adobe Firefly — The Enterprise-Safe Creative Choice

By Adobe · adobe.com/products/firefly.html

Adobe Firefly is the most defensible “commercial creative AI” pick because it sits inside a mature professional content stack and offers APIs plus enterprise custom models. If your team needs to generate creative assets without legal risk, Firefly is where you start.

Primary use cases: Branded image and video generation, Adobe-native ideation, content supply-chain automation, safe enterprise creative workflows.

What stands out: Firefly API, enterprise custom models, integration with Creative Cloud/Express/GenStudio flows, and Adobe’s focus on controlled, commercially oriented workflows.

Pricing: Higher-credit Firefly tiers such as Pro Plus and Premium exist. Enterprise pricing is handled through Adobe business sales. Exact lower-tier Firefly-only entry pricing is partly unspecified in public sources.

Strengths: Strongest commercial/enterprise creative narrative, API story, and adjacent Adobe workflow value.

Weaknesses: Packaging is more complex than Midjourney, and value depends on already using Adobe products.

Notable customers: Official customer-story material includes Currys.

Recent updates: Major Firefly refresh in April 2025, an all-new Firefly home at MAX 2025, video improvements in late 2025, and unlimited-generation promotional moves in early 2026.

Best for: In-house creative teams, agencies, design ops, and brand studios.

10. Runway — The Video Generation Leader

By Runway · runwayml.com

Runway is the best AI video pick because it combines creator brand, product depth, and API access more credibly than most rivals. If you need to go from text or image to production-ready video, Runway is the most complete option available.

Primary use cases: Text-to-video, image-to-video, performance capture, previsualization, ad concepts, and AI-assisted post-production workflows.

What stands out: Gen-4.5, API access, third-party model support, workflow tooling, and an enterprise sales motion aimed at production teams.

Pricing:

- Free tier available

- Standard: $12/user/month

- Pro: $28/user/month

- Unlimited: $76/user/month

- Enterprise: custom

- API credits: $0.01/credit

Strengths: Strongest end-to-end AI video story and one of the better bridges from experimentation into production.

Weaknesses: At scale, video generation remains expensive relative to image or text tools.

Notable customers: Official stories reference campaigns and productions involving Under Armour and work for brands such as Disney and Nike.

Recent updates: Gen-4.5 and a continuing 2026 API and product push.

Best for: Creative teams, filmmakers, production studios, and performance marketers.

11. ElevenLabs — The Voice Standard

By ElevenLabs · elevenlabs.io

ElevenLabs is the clearest market leader in AI voice. If you need speech, dubbing, voice agents, or multilingual audio output, this is the tool with the broadest product surface and the strongest quality reputation.

Primary use cases: Text-to-speech, localization, dubbing, voice cloning, conversational agents, training simulations, customer support.

What stands out: Very broad audio product surface, official SDKs, streaming APIs, native agent tooling across web and mobile stacks, and strong multilingual positioning.

Pricing:

- Free tier available

- Starter: $6/month

- Creator: $22/month

- Pro: $99/month

- Scale: $299/month

- Business: $990/month

- Enterprise: custom

Strengths: Best mix of consumer accessibility and enterprise-grade audio infrastructure.

Weaknesses: Voice-cloning and rights workflows create governance questions for some teams.

Notable customers: Official stories reference Deutsche Telekom, the Council of the European Union presidency workflow, and legal-AI partnership work with Harvey.

Recent updates: Eleven v3 became generally available in February 2026 and Expressive Mode for ElevenAgents launched days later.

Best for: Creators, media teams, call centers, product teams, and localization teams.

Part Two: The Stack for Builders and Enterprise Teams

If Part One is about saving time, Part Two is about building systems. These tools form the infrastructure layer that serious teams need once AI pilots become production workloads.

The Open Model and Runtime Layer

12. Hugging Face — The Hub for Everything Open

By Hugging Face · huggingface.co

Hugging Face is the essential open-source AI platform pick because no other vendor has the same density of model, dataset, demo, inference, and community gravity. It is to AI models what GitHub is to source code.

Primary use cases: Discovering open models, prototyping, dataset sharing, demo apps, managed or provider-routed inference, open-source workflow integration.

What stands out: Hub scale, Inference Providers, Inference Endpoints, open-source library ecosystem, and massive organizational adoption.

Pricing:

- Free tier available

- Pro: $9/month

- Team: starting at $20/user/month

- Enterprise and Enterprise Plus: custom

Strengths: Best discovery layer for open AI, powerful community effects, integrates well across stacks.

Weaknesses: Breadth can overwhelm mainstream readers. Not every tool on the hub is production-ready.

Notable adoption: The home page reports more than 50,000 organizations use the platform, highlighting organizations such as Meta, Amazon, Google, and Microsoft.

Recent updates: 2026 state-of-open-source reporting and the formal addition of GGML/llama.cpp into the ecosystem.

Best for: ML engineers, researchers, hobbyists, infra teams, and AI founders.

13. Ollama — The Local-First Runtime

By Ollama · ollama.com

Ollama is the best privacy and edge story for readers who want to run open models on their own hardware without cloud dependence. In a world where most AI tools phone home, Ollama gives you the option to keep everything local.

Primary use cases: Running open models locally, private experimentation, local coding assistants, lightweight deployment, cloud-optional hybrid work.

What stands out: Dead-simple local runtime, official Python/JS libraries, OpenAI-compatible API support, web search support, cloud-model expansion, and direct integrations like Claude Code support.
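
To make the OpenAI-compatible API point concrete, here is a minimal sketch that talks to a local Ollama server using only the Python standard library. It assumes Ollama is running on its default local port (11434) and that a model has already been pulled; the model tag `llama3.2` and the helper names `build_chat_request` and `ask_local_model` are illustrative choices, not part of Ollama itself.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port, exposing the
# OpenAI-compatible chat completions route.
OLLAMA_URL = "http://localhost:11434/v1/chat/completions"


def build_chat_request(prompt: str, model: str = "llama3.2") -> urllib.request.Request:
    """Build an OpenAI-style chat request aimed at the local server."""
    payload = {
        "model": model,  # assumption: this model tag has been pulled locally
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3.2") -> str:
    """Send the prompt to the local model and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, model)) as resp:
        body = json.load(resp)
    # OpenAI-style responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask_local_model("Summarize RAG in one sentence.")` should return the model's reply as a string, and no prompt text leaves the machine. Because the request shape follows the OpenAI chat format, code written against a hosted API can often be pointed at a local model by changing the base URL.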

Pricing:

- Free tier available

- Pro: $20/month

- Max: $100/month

Strengths: Simplicity, privacy, local-first ergonomics, low switching friction for builders.

Weaknesses: Mainstream nontechnical users still need more setup than they do with hosted assistants.

Recent updates: Cloud models in late 2025, ollama launch in January 2026, and MLX-powered Apple Silicon performance in March 2026.

Best for: Developers, privacy-sensitive teams, tinkerers, and edge/local AI enthusiasts.

The Agent, RAG, Retrieval, and Observability Layer

This is the middleware of modern AI applications. If you are building anything that retrieves documents, orchestrates agents, or needs evaluation infrastructure, these four tools are the most defensible choices.

14. LangChain / LangSmith — The Agent Engineering Stack

By LangChain · langchain.com

LangChain and LangSmith together form the best-known agent engineering stack, pairing flexible frameworks with observability and evaluation. LangChain gives you the building blocks; LangSmith gives you the visibility into what those blocks are doing in production.

Primary use cases: Agent orchestration, tracing, evaluations, prompt management, deployment, and fleet management.

What stands out: Framework-agnostic observability in LangSmith, broad SDK support (Python, JS/TS, Go, Java), OpenTelemetry ingestion, evaluators and templates, and newer Fleet capabilities.

Pricing:

- Free tier available

- Plus: from $39/seat/month

- Enterprise and self-hosted options exist

- Pay-for-what-you-use model across services

Strengths: Strong market mindshare, broad educational footprint, and a good narrative bridge from prototyping to production governance.

Weaknesses: Newer agent abstractions mean some product surfaces are still moving quickly.

Notable customers: Official case studies include ServiceNow, Remote, Lovable, and Credit Genie.

Recent updates: LangSmith Fleet in March 2026, evaluator and cost-alert improvements in April 2026, and ongoing enterprise readiness work.

Best for: AI application builders, platform teams, and agent engineers.

15. LlamaIndex — The Document Intelligence Framework

By LlamaIndex · llamaindex.ai

LlamaIndex is the strongest document- and RAG-heavy framework because it cleanly connects parsing, extraction, indexing, workflows, and agentic document operations. If your AI application is fundamentally about understanding documents, LlamaIndex is where you start.

Primary use cases: Document parsing, RAG, extraction, knowledge assistants, document agents, workflow automation.

What stands out: Free monthly credits, credit-based usage, LlamaParse, Workflows 1.0, and document-agent architecture work.

Pricing:

- Free plan: 10,000 credits/month (~1,000 pages)

- Usage-based: 1,000 credits = $1.25

- Enterprise: handled through sales
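
The credit figures above translate into a simple per-page estimate. The sketch below assumes about 10 credits per page (implied by "10,000 credits ≈ 1,000 pages") and assumes the free monthly credits are subtracted before usage-based billing starts. Both are assumptions; real credit consumption may vary by parsing mode, so treat the result as a ballpark.

```python
FREE_CREDITS_PER_MONTH = 10_000  # free plan allowance, from the list above
USD_PER_1000_CREDITS = 1.25      # usage-based rate, from the list above
CREDITS_PER_PAGE = 10            # assumption: 10,000 credits ~ 1,000 pages


def monthly_parse_cost(pages: int, credits_per_page: int = CREDITS_PER_PAGE) -> float:
    """Estimate the monthly USD cost of parsing `pages` pages.

    Assumes free credits are applied first and only the remainder is billed.
    """
    credits_needed = pages * credits_per_page
    billable_credits = max(0, credits_needed - FREE_CREDITS_PER_MONTH)
    return billable_credits * USD_PER_1000_CREDITS / 1000


for pages in (500, 1_000, 5_000, 50_000):
    print(f"{pages:>6} pages -> ${monthly_parse_cost(pages):,.2f}/month")
```

Under these assumptions the marginal cost is about $0.0125 per page once the free allowance is used up.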

Strengths: One of the clearest “RAG platform” picks for document-heavy use cases.

Weaknesses: Named official customer stories were not clearly surfaced in public sources.

Notable scale metrics: 1B+ documents processed, 25M+ monthly package downloads, and 300k+ LlamaParse users.

Recent updates: Workflows 1.0 in mid-2025, long-horizon document-agent work in 2026, and ParseBench in April 2026.

Best for: RAG builders, document-AI teams, and enterprise automation teams.

16. Pinecone — The Retrieval Infrastructure

By Pinecone · pinecone.io

Pinecone is the cleanest managed vector database pick for RAG because the brand remains tightly associated with production-grade retrieval. When teams move from prototype to production retrieval, Pinecone is consistently in the conversation.

Primary use cases: Vector search, retrieval-augmented generation, knowledge assistants, managed long-term memory and search layers.

What stands out: Fully managed infrastructure, clear Starter/Standard/Enterprise packaging, and newer Pinecone Assistant pricing changes that make the assistant layer more usage-based.

Pricing:

- Starter: $0/month

- Standard: $50/month minimum usage

- Enterprise: $500/month minimum usage

- Beyond minimums, billing is usage-based
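
One way to read "minimum usage" pricing is as a floor on the monthly invoice: you pay the plan minimum or your metered usage, whichever is larger. The sketch below encodes that reading for the two paid plans. It is an assumption about how the minimum applies, so check it against Pinecone's current billing documentation.

```python
# Plan minimums in USD/month, taken from the pricing list above.
PLAN_MINIMUMS_USD = {"standard": 50.0, "enterprise": 500.0}


def monthly_bill(plan: str, metered_usage_usd: float) -> float:
    """Return the estimated invoice, assuming the minimum acts as a floor."""
    if plan not in PLAN_MINIMUMS_USD:
        raise ValueError(f"unknown paid plan: {plan!r}")
    return max(PLAN_MINIMUMS_USD[plan], metered_usage_usd)


for usage in (12.0, 50.0, 80.0):
    print(f"standard, ${usage:.2f} metered -> ${monthly_bill('standard', usage):.2f}")
```

The practical consequence is that a small prototype on Standard costs the same as a workload using the full $50 of metered capacity, so the Starter plan is the natural home for experiments.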

Strengths: Strong retrieval brand, good production story, approachable pricing narrative for RAG teams.

Weaknesses: Exact SDK/API integration specifics should be verified against current docs for the most up-to-date picture.

Recent updates: Major 2026 changes made Assistant fully usage-based and removed the hourly per-assistant fee.

Best for: RAG teams, platform teams, and search-heavy applications.

17. Weights & Biases — The Evaluation and Observability Layer

By Weights & Biases · wandb.ai

Weights & Biases is still the most natural MLOps and evaluation pick because it now spans both classic model development and agent/application observability. W&B Weave extends its reach from training into production agent tracing.

Primary use cases: Experiment tracking, dashboards, sweeps, evaluations, agent tracing via Weave, hosted inference, and post-training workflows.

What stands out: W&B Models, W&B Weave, training pricing separated from storage/inference, and visible case-study depth.

Pricing:

- Free tier exists

- Pro exists for early-stage teams (exact public seat price varies)

- Training and inference are usage-based

- Enterprise: custom

Strengths: Strong ecosystem credibility and excellent customer proof.

Weaknesses: Pricing is workload-dependent enough that you should estimate costs from actual usage, not simple seat math.

Notable customers: Official stories include Shell, Toyota Research Institute, Lyft, and Microsoft.

Recent updates: 2026 positioning increasingly highlights inference and Claude Code-related workflows, while training and inference pricing pages make usage economics explicit.

Best for: ML teams, applied AI teams, and evaluation-heavy product teams.

The Enterprise Data, Labeling, and Knowledge Layer

These three tools solve the hardest problems in enterprise AI: governance, human data quality, and finding knowledge buried across dozens of SaaS tools.

18. Databricks Mosaic AI — The Enterprise Platform

By Databricks · databricks.com/product/machine-learning

Databricks Mosaic AI is the best enterprise data-platform pick for organizations turning AI projects into governed production systems. If your company has data-platform complexity, Mosaic AI is the layer that unifies it.

Primary use cases: Model serving, agent governance, evaluations, data/AI unification, vector search, foundation model APIs.

What stands out: Pay-as-you-go pricing, Mosaic AI Model Serving, Foundation Model APIs, custom evaluations, and Unity Catalog governance and guardrails.

Pricing:

- Pay-as-you-go with free trial

- Serving priced in DBUs (e.g., 10.48 DBU/hour for small T4-class serving, 20 DBU/hour for medium A10G-class serving)

- Committed-use discounts available
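
DBU-based pricing is easier to reason about once hourly rates are converted into monthly consumption. The sketch below does that for an always-on endpoint, using the two DBU/hour figures quoted above. The dollar price per DBU is not given in this guide, so it is left as a required parameter; the 30-day month is a simplification.

```python
HOURS_PER_MONTH = 24 * 30  # simplification: always-on serving for a 30-day month


def monthly_dbus(dbu_per_hour: float, hours: float = HOURS_PER_MONTH) -> float:
    """DBUs consumed by an endpoint running for `hours` hours."""
    return dbu_per_hour * hours


def monthly_cost_usd(dbu_per_hour: float, usd_per_dbu: float,
                     hours: float = HOURS_PER_MONTH) -> float:
    """Dollar cost, given a per-DBU price that the caller must supply.

    `usd_per_dbu` depends on cloud, region, and contract; no default is
    assumed here.
    """
    return monthly_dbus(dbu_per_hour, hours) * usd_per_dbu


for label, rate in (("small T4-class", 10.48), ("medium A10G-class", 20.0)):
    print(f"{label}: {monthly_dbus(rate):,.1f} DBUs per always-on month")
```

Endpoints that scale down when idle consume fewer hours, so passing a realistic `hours` value matters more than the exact DBU rate for bursty workloads.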

Strengths: Strongest “single enterprise platform” story on this list.

Weaknesses: Too heavyweight for casual users and startups that do not already have data-platform complexity.

Recent updates: 2026 hosted-model support continues to expand, including GPT-5.4 availability in Mosaic AI Model Serving.

Best for: Large organizations, data/ML platform teams, and regulated industries.

19. Labelbox — The Human Data Layer

By Labelbox · labelbox.com

Labelbox is the strongest labeling and data-factory pick for a guide that wants to cover the human-data layer behind frontier models. In a world obsessed with model capabilities, Labelbox focuses on the data quality that makes those capabilities possible.

Primary use cases: Data labeling, workflow review, RLHF and RL data creation, model-assisted labeling, evaluations, human-in-the-loop systems.

What stands out: “Data factory” positioning, Foundry apps with REST access, workflow editor improvements, and explicit focus on frontier-lab data generation.

Pricing: Supports a free/start-for-free motion and flat-rate LBU pricing. Exact public monthly plan numbers are partly unspecified; enterprise and custom packaging available.

Strengths: Credible frontier-data narrative, strong workflow orchestration, and very relevant to 2026 post-training economics.

Weaknesses: Too specialized for mainstream readers — but essential for teams doing serious model development.

Notable customers: The official homepage highlights logos such as Shutterstock, Ideogram, Stryker, and Intuitive.

Recent updates: Pricing simplification, new workflow editor, and continuing RLHF and data-services expansion.

Best for: AI labs, applied AI teams, data ops groups, and evaluation teams.

20. Glean — The Enterprise Knowledge Assistant

By Glean · glean.com

Glean is the best enterprise knowledge-assistant pick because it merges search, assistant, agents, model management, and a serious connector layer into one product. If your organization’s biggest AI problem is “our knowledge is trapped in 40 different SaaS tools,” Glean is the answer.

Primary use cases: Enterprise search, internal knowledge retrieval, connected chat/assistant, agent workflows, search across SaaS and content systems.

What stands out: Model Hub, extensive connectors, remote MCP server support, Flex-credit pricing for advanced agent runs, and strong admin controls.

Pricing: Enterprise and Flex pricing models. Some newer features such as Deep Research are explicitly usage-based. Public list pricing is not exposed.

Strengths: One of the clearest enterprise “work AI” narratives in the market.

Weaknesses: Not a consumer-facing recommendation. Pricing is less transparent than for consumer tools.

Integration/compatibility: Official docs show native connectors, custom connectors, Model Hub support for OpenAI/Google/Anthropic/Amazon/Meta, and MCP integrations.

Recent updates: MCP support in February 2026, Deep Research admin controls and usage pricing in March 2026, and a rapidly expanding set of connectors and model-management features throughout 2026.

Best for: Enterprise IT, knowledge management, operations, and internal AI teams.

How to Choose Your Stack by Role and Budget

AI tools are no longer one category. In 2026, you need a compact stack — not a single app. Here is how to think about it by role:

The Solo Knowledge Worker or Freelancer

Budget: $0–$40/month

- Assistant: ChatGPT Plus or Claude Pro ($20/month)

- Research: Perplexity Free or Pro ($0–$20/month)

- Creative: Midjourney Basic ($10/month) if you create visual content

You get roughly 80% of the value from just an assistant and a research tool, which together cost $20–$40/month at the prices listed. Add a creative tool only if your work demands it.

The Developer

Budget: $20–$60/month

- Assistant: Claude Pro ($20/month) — strong coding reasoning

- Coding: GitHub Copilot Pro ($10/month) for team workflows, or Cursor Pro ($20/month) for agentic power

- Local models: Ollama (free) for private experimentation

The Creative Professional

Budget: $30–$100/month

- Assistant: ChatGPT Plus ($20/month) for brainstorming and drafting

- Images: Midjourney Standard ($30/month) for quality, or Adobe Firefly for commercial safety

- Video: Runway Standard ($12/month) for short-form video

- Voice: ElevenLabs Starter ($6/month) for voiceover and dubbing

The AI Platform Team

Budget: Varies widely by scale

- Hub: Hugging Face (free or Pro)

- Agents: LangChain / LangSmith

- RAG: LlamaIndex + Pinecone

- Evals: Weights & Biases

- Enterprise platform: Databricks Mosaic AI

- Data quality: Labelbox

- Knowledge: Glean

The stack approach matters because no single tool solves everything, and pretending otherwise wastes both time and money.
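
To sanity-check a stack against its budget, it helps to add up the listed prices as a low and high monthly total. The sketch below does that for the three priced stacks above. The data structure and function name are illustrative assumptions; optional items are given a low of $0, and Adobe Firefly is left out because no price is listed for it here. Totals can differ from the headline budget ranges, which may assume free tiers or extra headroom.

```python
from typing import Dict, List, Tuple

# Each item: (label, lowest monthly USD, highest monthly USD),
# using only the prices listed in the role stacks above.
Item = Tuple[str, int, int]

STACKS: Dict[str, List[Item]] = {
    "solo": [
        ("assistant: ChatGPT Plus or Claude Pro", 20, 20),
        ("research: Perplexity Free or Pro", 0, 20),
        ("creative: Midjourney Basic (optional)", 0, 10),
    ],
    "developer": [
        ("assistant: Claude Pro", 20, 20),
        ("coding: Copilot Pro or Cursor Pro", 10, 20),
        ("local models: Ollama", 0, 0),
    ],
    "creative": [
        ("assistant: ChatGPT Plus", 20, 20),
        ("images: Midjourney Standard", 30, 30),
        ("video: Runway Standard", 12, 12),
        ("voice: ElevenLabs Starter", 6, 6),
    ],
}


def monthly_range(items: List[Item]) -> Tuple[int, int]:
    """Return (lowest, highest) total monthly spend for a stack."""
    low = sum(item_low for _, item_low, _ in items)
    high = sum(item_high for _, _, item_high in items)
    return low, high


if __name__ == "__main__":
    for role, items in STACKS.items():
        low, high = monthly_range(items)
        print(f"{role}: ${low}-${high}/month")
```

Swapping in your own line items makes it easy to see which single subscription pushes a stack over budget.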

A Note on Benchmarks

It is tempting to pick AI tools based on benchmark rankings alone. Resist that temptation.

Chatbot Arena measures broad human preference and is excellent for comparing assistants. MMMU tests multimodal reasoning. LiveCodeBench and SWE-bench evaluate coding differently: LiveCodeBench measures code generation on competition-style problems, while SWE-bench measures real-world software engineering on actual GitHub issues. ParseBench (from LlamaIndex) evaluates document processing.

These benchmarks are useful but incomplete. They measure different things. They shift quarterly. And crucially, they do not tell you which tool has the best pricing for your team, the integrations you need, or the governance controls your compliance team requires. Use them as one input, not the input.
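
One way to treat a benchmark as "one input" is a simple weighted decision matrix, where the benchmark score sits alongside pricing, integrations, and governance. The sketch below is a minimal illustration of that idea, not the scoring formula used for this ranking: the criteria names, the equal weights, and the two tools with their 0-10 scores are all hypothetical.

```python
from typing import Dict


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine per-criterion scores into one number using normalized weights."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Hypothetical weights: the benchmark counts for a quarter of the decision.
WEIGHTS = {"benchmark": 0.25, "pricing": 0.25, "integrations": 0.25, "governance": 0.25}

# Hypothetical 0-10 scores for two made-up tools.
TOOL_A = {"benchmark": 9, "pricing": 4, "integrations": 5, "governance": 3}
TOOL_B = {"benchmark": 7, "pricing": 8, "integrations": 8, "governance": 7}

if __name__ == "__main__":
    for name, scores in (("tool_a", TOOL_A), ("tool_b", TOOL_B)):
        print(name, weighted_score(scores, WEIGHTS))
```

In this made-up example the benchmark leader (tool_a) ends up with the lower overall score, which is the practical point: a tool can top a leaderboard and still be the wrong fit once pricing, integrations, and governance are weighed in.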

Conclusion: Future-Proofing Your AI Stack

The most important trend in AI tools in 2026 is not any single product launch. It is the separation of layers. Assistants, search, coding, creative, and infrastructure have become distinct categories with distinct winners. The tools that try to do everything are losing to the tools that do one thing exceptionally well and integrate cleanly with the rest of the stack.

The practical advice is simple:

- Pick your assistant first. ChatGPT, Claude, or Gemini — whichever fits your ecosystem.

- Add a research layer if you make decisions based on external information. Perplexity is the clear leader.

- Add coding or creative tools only for the workflows you actually do weekly.

- Build your infrastructure stack only when you are moving from pilot to production.

Do not adopt 20 tools at once. Adopt 3–5 that match your current work, and expand as your needs grow. The tools will still be here — and they will be better — when you are ready.