There is a story being told about artificial intelligence that is, at best, dangerously incomplete. The story goes like this: AI is democratizing knowledge. Anyone with a smartphone can query a large language model. A student in Lagos can access the same chatbot as a Harvard professor. A small business in rural Nebraska can use the same writing assistant as a Fortune 500 marketing department. The tools are free, or nearly so. The playing field is leveling.

This story is not entirely wrong. But it is focused on the wrong layer of the economy. It is looking at the consumer-facing surface and calling it the whole picture. Beneath it, a very different dynamic is underway — one that regulators, economists, and competition authorities are beginning to describe with increasing alarm.

The newest, most capable AI models, and crucially the infrastructure and integration advantages that come with early and exclusive access to them, are concentrating in the hands of a remarkably small number of firms. And the mechanisms that are enabling this concentration are not accidental. They are structural, self-reinforcing, and — if current trajectories hold — increasingly difficult to reverse.

This is not a story about chatbots. It is a story about who controls the cognitive infrastructure of the next economy — and what happens to everyone who doesn’t.

The Illusion of Access

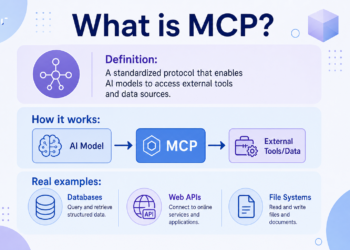

To understand why the “AI is democratizing” narrative is incomplete, you need to understand what the UK’s Competition and Markets Authority (CMA) calls the “layered” nature of AI access. Consumer access to AI, the kind you get when you open ChatGPT or Claude in a browser tab, can diffuse quickly. Chatbot subscriptions spread like any consumer technology. But economically decisive access is something else entirely.

Economically decisive access means: access to frontier model weights. Access to model improvement loops, where your users’ interactions feed back into making your model better while competitors’ models stagnate. Dedicated compute capacity, so your inference isn’t throttled during peak demand. Tight integration into dominant software platforms — the office suites, the developer tools, the enterprise systems where work actually happens. And IP rights, the legal structures that determine who can build on whose technology and under what conditions.

That kind of access, according to the CMA’s foundation model analysis, remains concentrated among a small number of firms and their chosen partners. The gap between consumer access and this deeper, commercially decisive access is not a minor technical distinction. It is the difference between using a road and owning the toll booth.

There is a transparency dimension here that compounds the problem. The Foundation Model Transparency Index (FMTI), published by Stanford’s Center for Research on Foundation Models (CRFM), scores leading AI developers on how much they disclose about their training data, compute usage, and post-deployment impact. In 2024, the average score was 58 out of 100, already not particularly high. By 2025, it had reportedly dropped to 40 out of 100. The leading developers of the most powerful models are becoming less transparent precisely where market power matters most. Regulators, researchers, and competitors are being asked to evaluate a competitive landscape whose most important features are increasingly hidden from view.

The Compute Crisis: A New Kind of Scarcity

At the foundation of the “have/have-not” dynamic in frontier AI is a resource that looks boring from the outside but functions like oxygen for AI development: compute. Specifically, access to high-performance AI accelerator chips — GPUs and specialized processors — and the data center infrastructure to run them at scale.

Compute scarcity is not a speculative concern. The CMA has repeatedly emphasized that bottlenecks in access to state-of-the-art AI accelerator chips represent a genuine structural barrier to entry — and that partnerships between AI developers and cloud providers can secure access to these scarce inputs in ways that reduce contestability for everyone else.

The Stanford HAI AI Index documents the compute scaling dynamics driving this scarcity: training compute for notable frontier models has been doubling roughly every five months. That is not a linear trend. It is an exponential one. And it has a very practical consequence: Epoch AI’s analysis suggests that frontier training costs have risen approximately two to three times per year since 2016, with projections indicating that individual training runs could exceed one billion dollars by 2027 if these trends persist.
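The compounding in these figures is easy to underestimate, so a small back-of-the-envelope sketch may help. It converts the five-month doubling time into an annual multiplier and asks how long a cost growing two to three times per year takes to reach $1 billion. The function names and the $100 million starting cost are assumptions made for illustration; they do not come from the AI Index or Epoch AI.

```python
import math

def annual_growth_factor(doubling_months: float) -> float:
    """Multiplier over 12 months for a quantity that doubles every `doubling_months`."""
    return 2 ** (12 / doubling_months)

def years_to_reach(start: float, target: float, factor_per_year: float) -> float:
    """Years of compounding at `factor_per_year` needed to grow `start` into `target`."""
    return math.log(target / start) / math.log(factor_per_year)

# Doubling every five months is the figure cited in the text.
compute_growth = annual_growth_factor(5)
print(f"Compute growth per year: {compute_growth:.2f}x")

# HYPOTHETICAL starting cost of $100M, chosen only to illustrate the arithmetic.
hypothetical_start_usd = 100e6
for factor in (2.0, 3.0):
    years = years_to_reach(hypothetical_start_usd, 1e9, factor)
    print(f"At {factor:.0f}x per year: {years:.1f} years from $100M to $1B")
```

Under these assumptions, a five-month doubling time works out to roughly a 5.3x increase per year, and a hypothetical $100 million run would cross $1 billion in about two to three and a half years.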

Think about what that number means for competition. A billion-dollar training run is not an expense that most well-funded startups can absorb. It is not something that universities can do. It is not something that governments in most countries can routinely finance. It is something that only a handful of the most capitalized organizations on Earth can contemplate.

This is not a market where a garage startup stumbles on a breakthrough and changes everything. This is a market where the cost of playing at the frontier is a structural filter — one that automatically eliminates the vast majority of potential competitors before they can even enter.

At the same time, the AI Index documents a genuinely countervailing force that is important to understand accurately: inference costs — the cost of actually running a model to generate a response — have fallen sharply. The price per million tokens for GPT-3.5-equivalent capability, for example, has reportedly fallen from approximately $20 to approximately $0.07. This is a real and significant development. It means that consuming AI-generated outputs is getting cheaper fast, and that consumer access is likely to continue broadening.
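To put the size of that drop in perspective, the arithmetic can be sketched as follows. The two prices are the ones cited above; the $100 budget and the function name are hypothetical, picked only to make the comparison concrete.

```python
def price_decline(old_price: float, new_price: float) -> tuple[float, float]:
    """Return (fold reduction, percentage decline) between two prices."""
    fold = old_price / new_price
    pct_decline = (1 - new_price / old_price) * 100
    return fold, pct_decline

# Prices per million tokens, as cited in the text.
OLD_USD_PER_M_TOKENS = 20.00
NEW_USD_PER_M_TOKENS = 0.07

fold, pct = price_decline(OLD_USD_PER_M_TOKENS, NEW_USD_PER_M_TOKENS)
print(f"{fold:.0f}x cheaper ({pct:.2f}% decline)")

# HYPOTHETICAL budget, used only to show what the same spend buys.
budget_usd = 100
tokens_before_m = budget_usd / OLD_USD_PER_M_TOKENS
tokens_after_m = budget_usd / NEW_USD_PER_M_TOKENS
print(f"${budget_usd} buys {tokens_before_m:.0f}M tokens before, {tokens_after_m:.0f}M after")
```

The same spend covers roughly 286 times as many tokens, which is why consumer-level access can keep broadening even while training costs rise.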

But reading falling inference prices as proof that the field is leveling conflates two different problems. Falling inference costs are good news for end users. They do not meaningfully change the economics of frontier training, where the projected billion-dollar barrier remains. The result is a bifurcation even within the AI stack: inference becomes a commodity that spreads, while training, and the power that comes with running the models that define the frontier, stays concentrated.

The Partnership Playbook: How Deals Become Moats

If compute is the foundation of the new AI power structure, then partnership agreements are the architecture built on top of it. And the architecture that has emerged over the past few years is, when examined in detail, remarkable in its scope and strategic depth.

The Federal Trade Commission’s staff report on cloud provider-AI developer partnerships — one of the most important regulatory documents of the current AI era and still not widely read by the general public — lays out the anatomy of these arrangements in detail. Typical partnership terms involve equity stakes and revenue-sharing rights. They include consultation and control provisions. They feature exclusivity rights for specific services. They create “circular” cloud spending commitments, where AI developers agree to spend large sums on the cloud infrastructure of their partners, which the partner can then book as both AI investment and cloud revenue.

They give cloud partners access to the AI developer’s IP and sensitive usage data. And they raise contractual and technical switching costs — making it harder for AI developers to move their workloads to alternative providers, even if better options emerge.

The FTC press release accompanying the report makes clear that these structures are not being evaluated as routine commercial arrangements. They are being scrutinized as potential mechanisms of market foreclosure — ways to secure scarce inputs and control distribution in a manner that may raise rivals’ costs and limit the competitive field.

Let’s look at specific deals, because the abstractions become vivid in the details.

Microsoft and OpenAI. Microsoft’s January 2023 announcement described Azure as OpenAI’s “exclusive cloud provider.” A February 2026 joint statement reiterated that Microsoft maintains an “exclusive license and access to intellectual property across OpenAI models and products” and described Azure as the “exclusive cloud provider of stateless OpenAI APIs.” Based on the original arrangement, these terms run through at least 2030.

The IP exclusivity clause is particularly significant: it means that Microsoft’s products and services have legal access to capabilities that competitors, by definition, cannot match using the same underlying technology. This is not just a hosting agreement. It is an integration and IP moat embedded inside the world’s dominant enterprise productivity suite.

Anthropic and Amazon. Anthropic announced in 2023 that Amazon would invest up to $4 billion in the company. By 2024, that arrangement had expanded: Amazon had become Anthropic’s primary cloud and training partner, and its total investment had reached $8 billion. In parallel, Anthropic selected Amazon’s Trainium chips as a preferred hardware platform for training. Integration into AWS, Amazon’s cloud platform and marketplace, gives Anthropic distribution to Amazon’s enormous enterprise customer base. The investment gives Amazon strategic positioning at the frontier of AI development.

Anthropic, Google, and Broadcom. In 2026, Anthropic announced an agreement with Google and Broadcom for multiple gigawatts of next-generation TPU computing capacity coming online starting in 2027. Google had already been established as Anthropic’s “preferred cloud provider” with access to large TPU and GPU clusters. This means the company developing what many consider a leading frontier model has secured preferential access to a specific chip ecosystem at a scale that most competitors cannot approach — not because of superior technology alone, but because of strategic capital commitments made years in advance.

The compute overflow. Even for the best-resourced frontier developers, demand sometimes exceeds what a single cloud relationship can provide. Oracle announced that OpenAI selected Oracle Cloud Infrastructure to extend Microsoft’s Azure AI platform capacity — a signal that even the most well-positioned actors in this market face compute scarcity at the frontier.

Each of these arrangements, taken individually, might look like ordinary business deals. Taken together, they reveal a pattern: the frontier AI landscape is being organized around a set of hub-and-spoke relationships between a small number of hyperscale cloud providers, a small number of frontier model developers, and an even smaller number of chip manufacturers. Everyone else is, in the language the CMA uses, “outside the circle.”

The Winners, the Losers, and the Reverse Acquihire

The market dynamics described above are not theoretical. They are already producing visible outcomes — firms that are thriving because they locked in compute and customers early, firms that are struggling under compute bills they cannot sustain, and a new pattern of consolidation that bypasses traditional acquisition law entirely.

CoreWeave is perhaps the most striking success story of the current moment. As a specialized GPU-focused cloud provider, it had been essentially invisible in cloud market rankings just two years ago. Synergy Research Group now reports that it has entered the top-ten cloud providers globally, generating more than $1.5 billion in quarterly cloud revenue.

Reuters reports a $21 billion expanded deal with Meta extending through 2032. CoreWeave thrived because it was positioned at exactly the bottleneck the market needed — AI-optimized GPU infrastructure — and because it moved early enough to secure long-dated capacity contracts with major AI spenders.

Stability AI represents the flip side. Reuters reported that the company was exploring a sale amid a cash crunch, including substantial losses relative to low revenues and close to $100 million in outstanding bills to cloud providers and others. Whether Stability AI’s difficulties were attributable solely to access constraints is difficult to prove causally.

But its trajectory is consistent with what the evidence suggests about model developers who lack preferential compute arrangements: they face full market-rate cloud bills during the most capital-intensive phase of frontier development, while well-partnered competitors pay lower effective rates and receive strategic support. The structural disadvantage compounds over time.

The most revealing data point, however, may be the rise of what might be called the “reverse acquihire” — a pattern in which dominant incumbents absorb the talent and IP of smaller frontier AI companies without executing formal acquisitions that would trigger standard antitrust review. Reuters reported that Microsoft paid Inflection approximately $650 million in a licensing arrangement while hiring the majority of the company’s staff — an arrangement structured to avoid the formal mechanics of an acquisition while achieving many of the same competitive effects.

Amazon followed a similar pattern with Adept, hiring its cofounders and parts of its team in a deal that was also structured around licensing rather than outright purchase.

These patterns reveal something important: the “have” side of the AI economy does not need to formally acquire competitors to consolidate them. It can simply hire their talent, license their technology, and absorb their capability — all while the structural barriers described above make it increasingly difficult for new competitors to form from scratch. The effect on competitive diversity is similar to consolidation, without the regulatory scrutiny that consolidation would normally attract.

Quantifying the Divide: Concentration by the Numbers

Competition analysis requires metrics, and the most relevant proxy for frontier AI power concentration is cloud infrastructure — because frontier AI training and high-volume inference predominantly run on hyperscale compute, and because cloud market structure shapes who can access it and on what terms.

Synergy Research Group estimates Q4 2025 worldwide cloud market shares of approximately 28%, 21%, and 14% for the top three providers (63% combined), with market-wide revenues of $119.1 billion for the quarter and $419 billion for full-year 2025. In public cloud specifically, the top three providers account for 68% of the market. These are not small players with large market shares. These are enormous companies whose cloud businesses, already measured in hundreds of billions of dollars annually, are now being supercharged by generative AI demand.

Translating these shares into standard antitrust concentration metrics: the implied Herfindahl-Hirschman Index (HHI), the sum of squared percentage market shares, ranges from approximately 1,421 to approximately 2,790 using Synergy’s Q4 2025 shares as inputs, depending on assumptions about how the remaining 37% of the market is structured. The lower bound corresponds to a remainder spread across firms too small to register; the upper bound corresponds to a remainder held by a single fourth provider. The lower bound falls in the “moderately concentrated” zone (1,000 to 1,800) under the 2023 U.S. Merger Guidelines. The upper bound sits well above the 1,800 threshold for “highly concentrated.” And this is before accounting for the exclusive AI partnerships and switching costs layered on top of cloud market structure.
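For readers who want to check the range, here is a minimal sketch of the HHI arithmetic. It assumes the two endpoints correspond to the extreme treatments of the remaining 37% of the market: fully fragmented versus held by a single firm. The helper names and the ten-firm middle case are illustrative assumptions.

```python
def hhi(shares_pct):
    """Herfindahl-Hirschman Index: sum of squared market shares, in percent."""
    return sum(s ** 2 for s in shares_pct)

def hhi_with_even_tail(top_shares_pct, n_tail_firms):
    """HHI when the non-top share is split evenly among n_tail_firms firms."""
    tail = 100 - sum(top_shares_pct)
    return hhi(top_shares_pct) + n_tail_firms * (tail / n_tail_firms) ** 2

top3 = [28, 21, 14]  # Synergy's approximate Q4 2025 shares, as cited in the text

lower = hhi(top3)                      # remainder too fragmented to register
upper = hhi_with_even_tail(top3, 1)    # remainder held by one firm
middle = hhi_with_even_tail(top3, 10)  # ASSUMPTION: ten equal-sized smaller firms

print(f"lower bound:   {lower}")
print(f"upper bound:   {upper:.0f}")
print(f"ten-firm tail: {middle:.0f}")
```

The two extremes reproduce the 1,421 and 2,790 endpoints, and any realistic split of the remaining market falls somewhere between them.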

The following scenario analysis is illustrative rather than predictive, but it is instructive. If current trends toward deeper lock-in push the top three providers’ combined share toward 80%, HHI rises to approximately 2,270. A “winner-take-most shock” where two providers come to dominate could push HHI above 2,770. A diffusion scenario where public compute programs and increased competition hold combined shares to 60% would lower HHI to approximately 1,352, suggesting that mitigation efforts, if they work, could meaningfully change the concentration trajectory. The window for that kind of outcome remains open but is not unlimited.
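One way to explore scenarios like these is to rescale the top three shares to a target combined share while keeping their relative sizes fixed. That rescaling rule is an assumption; the share splits behind the scenario figures above are not given, so this sketch lands in the same neighborhood rather than reproducing them exactly. The remainder of the market is treated as fragmented, and the function names are hypothetical.

```python
def hhi(shares_pct):
    """Sum of squared percentage market shares."""
    return sum(s ** 2 for s in shares_pct)

def rescale(shares_pct, target_combined_pct):
    """Scale shares proportionally so they sum to target_combined_pct."""
    factor = target_combined_pct / sum(shares_pct)
    return [s * factor for s in shares_pct]

baseline = [28, 21, 14]  # approximate Q4 2025 shares cited in the text

for label, target in [("lock-in (80%)", 80), ("diffusion (60%)", 60)]:
    scenario = rescale(baseline, target)
    # ASSUMPTION: the remainder is fragmented, so it adds approximately nothing.
    print(f"{label}: HHI ~ {hhi(scenario):.0f}")
```

Under proportional rescaling, the lock-in case comes out near 2,291 and the diffusion case near 1,289, compared with the 2,270 and 1,352 quoted above. The gaps reflect different assumptions about how shares are split among providers.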

The investment data reinforces the scale of the capital advantages at play. Stanford’s AI Index 2026 report describes global corporate AI investments of $581.7 billion in 2025, with private investment reaching $344.7 billion. U.S. investment substantially outpaces the next-highest country. The capital flowing into AI is enormous, and it is not flowing evenly — it is being channeled through the partnership and infrastructure structures described above, which means it is, in many cases, flowing toward those who already have the most.

What the IMF Is Telling Us About Who Benefits

The International Monetary Fund is not typically a source of radical economic warnings. Its 2024 staff discussion note on generative AI and the future of work is therefore worth pausing on, because its conclusions are striking.

The IMF estimates that approximately 40% of global employment is exposed to AI — workers in jobs where AI could either complement or displace their work. In advanced economies, that exposure figure rises to approximately 60%. These numbers represent stakes large enough to affect not just individual workers’ wages but broader social stability and political dynamics.

What does the IMF’s modeling suggest happens to incomes and wealth under AI adoption? The headline result is that productivity gains can raise labor income overall. But the distribution of those gains is deeply unequal. Labor income inequality rises because the gains are larger for workers with high AI complementarity — workers whose skills are enhanced rather than replaced by AI tools. And capital income and wealth inequality, in the IMF’s framework, “always increase” with AI adoption. Not sometimes. Not under adverse scenarios. Always, in their model, when AI is adopted, capital’s share of income grows and wealth becomes more concentrated.

This is the economic logic behind the bifurcation thesis. A world where average productivity and wages rise but wealth inequality also rises is not a world of broadly shared prosperity. It is a world where the ownership of the technology matters enormously for who captures the gains. And as this article has documented, the ownership of the most decisive AI infrastructure and model IP is already concentrating rapidly.

The OECD’s 2025-2026 research on emerging divides in the transition to AI provides the granular evidence behind this concern. More than one-third of individuals across OECD countries used generative AI tools in 2025 — a genuinely rapid diffusion rate. But the usage patterns are deeply stratified: by age, by education level, and by income. In the firm sector, 52% of large firms report using AI, compared to just 17.4% of small firms. This three-to-one gap in AI adoption by firm size is not just a technology diffusion lag. It reflects a structural difference in the resources available to invest in AI integration, the data assets available to benefit from AI tools, and the talent available to deploy them effectively.

The OECD frames these as “emerging divides” that reinforce existing economic cleavages — larger firms and knowledge-intensive sectors moving first, capturing early productivity gains, and potentially pulling away from smaller competitors and lower-skill workers in ways that compound existing inequality rather than correcting it.

The Education Divide: The Next Generation of the Gap

Education is the long-run mechanism through which access inequality transmits across generations. And AI is becoming a critical dimension of educational quality and equity in ways that are only beginning to be understood.

UNESCO’s guidance on generative AI in education and research — updated as of 2026 — emphasizes the need for capacity building, governance frameworks, and explicit attention to equity in how AI tools are integrated into educational systems. The concern is not hypothetical: when higher-quality AI tutoring, research assistance, and skills development tools are available to well-resourced students and institutions but not to under-resourced ones, the technology that is supposed to democratize education becomes another vector of stratification.

The skills gap has a bidirectional relationship with the access gap. Access to frontier AI tools helps workers develop the AI-complementary skills that the IMF identifies as protective against displacement. Workers who lack access to those tools also lack the experience needed to develop those skills. The result is a self-reinforcing dynamic: those who are already in the “haves” column gain the skills to remain there, while those who are not face barriers to developing the capabilities that would let them cross the divide.

This is where the aggregate statistics about AI adoption rates become deeply misleading as measures of opportunity. One-third of OECD individuals using some AI tool tells you very little about whether those individuals are developing the skills and accessing the capabilities that will determine their labor market position over the next decade. A student using a free chatbot for homework help and a software engineer using frontier coding assistance integrated into their development environment are both “AI users.” They are not accessing the same opportunities.

Geopolitics: The Emergence of Compute Blocs

The bifurcation of the AI economy is not only a matter of firms and workers. It is also reshaping the strategic landscape among nations. Compute and advanced chips have become explicit instruments of state power, and the policies being enacted around them are creating a new geography of AI opportunity and exclusion.

The U.S. Commerce Department’s Bureau of Industry and Security has repeatedly updated its export controls and licensing policies regarding advanced computing semiconductors, reflecting an explicit governmental judgment that access to frontier AI compute is a national security matter. The U.S.-China Economic and Security Review Commission has analyzed compute and cloud advantages in the context of broader emerging technology competition, including concerns about cross-border access to AI capabilities through cloud services.

The emerging global structure may thus come to resemble what could be called “compute blocs” — a small number of countries with domestic hyperscale compute infrastructure and leading frontier model developers (call them “AI-rich”), a larger group of countries that are dependent on imported models and foreign cloud providers for their AI capabilities (“AI-dependent”), and geographies where access is substantially shaped by export controls and countermeasures (“AI-restricted”).

The IMF’s AI Preparedness framework and dashboard explicitly identify that wealthier economies are better equipped for AI adoption in terms of digital infrastructure, human capital, and institutional capacity. They warn that without foundational investments, the divergence in AI readiness among nations could widen income inequality at the global level, not just within countries.

This is an underappreciated dimension of the bifurcation thesis. Much of the debate about AI concentration focuses on firm-level dynamics — which companies are capturing value, which startups are failing, which incumbents are entrenching. But the national-level dynamics may ultimately be more consequential. A world where a handful of countries dominate the development of frontier AI — not because their citizens are more innovative, but because they secured the compute, the capital, and the partnership structures first — is a world where the productivity gains of the next technological era are distributed geographically in ways that may be very difficult to rebalance.

Misinformation and the Information Integrity Stakes

The political consequences of AI concentration are not limited to labor markets and economic growth. There is a second-order risk that may be at least as significant: the concentration of advanced content generation capacity in a small number of hands, while most institutions lag in their capacity to evaluate and verify AI-generated content.

The World Economic Forum’s Global Risks Report 2024 identified misinformation and disinformation as a major short-term global risk, with AI as an identified accelerant. In a bifurcated AI world, this risk takes a specific and troubling form: a small number of actors with access to the most capable content generation and influence tools, while the verification infrastructure of civil society, journalism, and democratic institutions remains far less capable.

Notably, OpenAI’s own “Industrial Policy for the Intelligence Age” document explicitly flags the risk of governments or institutions deploying AI in ways that undermine democratic values, and of power and wealth becoming concentrated rather than widely shared. That a leading frontier AI developer acknowledges this risk in its own policy documents — while simultaneously participating in the partnership structures that concentrate capability — captures the deep tension at the heart of the current moment.

Tipping Points: A Timeline of What’s Already Happening and What Comes Next

The bifurcation of the AI economy is not a future risk. It is an ongoing process with identifiable inflection points. Based on the regulatory, market, and technological evidence documented here, several key tipping points are visible on the near-to-medium horizon.

2024–2025 marked the consolidation of the partnership architecture. The FTC began formal scrutiny of cloud provider-AI developer partnerships. Exclusivity agreements, IP-sharing arrangements, and circular cloud commitments became standard features of frontier AI contracting. The transparency of leading model developers declined to its lowest documented level.

2026 — the present — is seeing generative AI drive cloud market revenues to new records (quarterly revenues of $119.1 billion, per Synergy), while the concentration of compute commitments in multi-year, multi-gigawatt infrastructure deals deepens. Regulatory scrutiny is intensifying but has not yet produced structural interventions.

2027 marks a projected threshold: if current training cost trends persist, individual frontier training runs may exceed $1 billion. Multi-gigawatt compute deals — like Anthropic’s agreement for TPU capacity with Google and Broadcom — will begin coming online, cementing the compute positioning of partnered frontier developers for years to come.

2025–2028 is the window in which public compute access programs — the NSF’s National AI Research Resource (NAIRR) and the EuroHPC Joint Undertaking’s AI Factories — must demonstrate meaningful scale to function as real counterweights. Their impact will depend not just on funding levels but on procurement speed and governance safeguards designed to prevent incumbent firms from capturing the benefit of public investment.

2028 and beyond is when regulatory regimes mature. The EU AI Act’s staged implementation schedule will produce its most significant obligations in this window. Whether the market structure has already locked in by then — or whether competition policy and public investment have created a meaningful alternative — may be the central question of AI economic governance for the next decade.

The Mitigation Portfolio: What Can Actually Be Done

The evidence reviewed here does not lead inevitably to pessimism. Bifurcation is a risk and a trajectory, not a fait accompli. But the academic and policy literature makes clear that addressing it requires a portfolio of interventions, not a single solution. Solving only one bottleneck without addressing the others is unlikely to shift the trajectory.

Compute access as public infrastructure. The NAIRR and EuroHPC AI Factories represent institutional templates for democratizing compute access — giving researchers, smaller firms, and public institutions access to AI infrastructure without going through dominant commercial clouds. The critical design challenge is ensuring that access rules prevent capture by incumbents. Transparent allocation processes, SME-friendly onboarding, and independent governance are essential. The trade-off is real: large public compute programs can lag industry pace and risk subsidizing already-dominant actors if governance is weak. But the alternative — leaving compute access entirely to the market — appears, on current evidence, to produce concentration.

Competition policy for vertical cloud-model structures. The FTC’s analysis points clearly at the areas requiring scrutiny: exclusivity clauses, preferential capacity access, circular spending commitments, and the technical switching costs embedded in AI-optimized infrastructure. Interoperability and portability standards for AI workloads — model deployment, orchestration, and chip-specific tooling — could reduce technical lock-in and lower switching costs. The challenge is calibrating intervention: too aggressive, and investment incentives may decline; too passive, and the current arrangements may harden into permanent structural advantages.

Transparency and auditability. The Foundation Model Transparency Index’s finding that leading developers are most opaque about training data and compute, precisely the dimensions where market power is most likely to concentrate, points directly at where disclosure obligations are needed. Mozilla’s analysis argues that external researcher access to closed foundation models is essential for accountability. The NIST AI Risk Management Framework provides an operational structure for risk controls. The question is whether disclosure obligations can be targeted precisely enough to reveal competitively significant information without creating security vulnerabilities.

Open models as a competition strategy — with caveats. The NTIA’s analysis suggests that open weights alone may not substantially change the advanced foundation model industry, due to the compute constraints described throughout this article. Open weights lower the cost of deploying capable models and can increase competition in downstream applications. But they do not meaningfully change who can train frontier models, which is where the structural power is. A more nuanced approach — “tiered openness,” where evaluation documentation is broadly available, weights are accessible for less-dangerous capability tiers, and gated access governs high-misuse-potential models — may be more effective than either blanket openness or blanket restriction.

Data trusts and collective stewardship. The Open Data Institute’s framework for data trusts describes a fiduciary model in which communities pool and govern data access. When paired with privacy-preserving compute and enforceable usage constraints, data trusts could give smaller actors and communities a way to benefit from AI training without surrendering data to dominant platforms. The governance complexity is real, and the history of collective data initiatives is mixed. But the alternative — data pooling that favors incumbents by default — is not neutral.

Labor market institutions and social protection. The IMF is explicit that policy must address labor reallocation, safety nets, and skill development for workers in high-AI-exposure occupations. Civil society organizations and labor institutions can negotiate “AI augmentation” standards: training rights, audit rights for workplace AI systems, and mechanisms for sharing productivity gains through wages or profit-sharing. The political feasibility of these interventions depends on the credibility of measured productivity gains and the visibility of harms.

Taxation and public investment. The IMF’s concern that AI increases capital’s share of income points toward a structural question about tax systems. If frontier AI shifts economic value from labor — which is broadly taxed — to AI-driven capital income — which may concentrate in a small number of firms — public revenues may weaken precisely when the demand for public investment (in compute, education, and resilience) is rising. Taxing rents from AI-driven market power, and recycling the revenue into public goods, is a coherent policy response. The execution challenge is formidable: it requires measuring rents accurately and preventing profit-shifting across jurisdictions.

The Known Unknowns: Open Questions That Will Determine the Outcome

Several empirical questions will determine whether the current trajectory toward bifurcation is permanent or correctable — and honest analysis requires acknowledging that these questions are genuinely unresolved.

When do frontier models commoditize? The falling cost of inference demonstrates that AI capabilities do commoditize over time. But the frontier is always moving. The key unknown is whether the economic advantages of being at the frontier will persist indefinitely, or whether a dynamic similar to what happened with electricity — universal access at regulated rates — will eventually emerge. If training costs stop rising or begin to fall, the bifurcation dynamics at the training layer could ease significantly. If they don’t, the oligopoly at the frontier may become structurally permanent.

How strong are data feedback loops relative to raw compute? The CMA warns that proprietary interaction data could entrench advantage in ways that are hard to compete away. But the actual magnitude of this effect — how much proprietary feedback data improves model quality relative to general training data and raw compute — is not well-established empirically. This matters enormously for policy: if feedback loops are the primary moat, data governance is the priority intervention. If compute is the primary moat, public infrastructure programs matter more.

What is the minimum viable scale for public compute programs to matter? The NAIRR and EuroHPC AI Factories are institutional innovations, but their capacity is modest relative to the compute commitments being made by dominant commercial players. The threshold at which public compute programs would meaningfully change national innovation outcomes — producing work at or near the frontier, not just enabling research on already-commoditized models — is not known. Answering this question is urgent for policymakers evaluating how much to invest in these programs.

How does AI alter wage-setting and bargaining power at the firm level? The IMF’s macro simulations provide aggregate distributional projections. But the firm-level mechanisms through which AI exposure translates into wage outcomes (in which sectors, in which occupations, and on which timelines) remain underspecified. This is where the empirical agenda is most urgent, because firm-level dynamics are where policy interventions can be most precisely targeted.

The Window Is Still Open — But It Is Closing

There is a pattern in the history of general-purpose technologies that should inform how we read the current AI moment. The industrial era saw significant distributional shifts, with wage inequality rising during key phases of technological transition. The information technology revolution was linked to skill-biased changes and labor market polarization. Digital technologies shifted markets toward “superstar firms,” increasing concentration while reducing labor’s share in affected industries. Each of these transitions involved periods in which the distributional consequences were still malleable — when institutions, competition policy, and labor markets could have shaped the outcomes differently — followed by periods in which the structures had hardened and redistribution required much more disruptive intervention.

Frontier AI is simultaneously a productivity technology (like computing), a platform technology (like the internet), and an automation and complementarity technology that affects cognitive labor, historically the segment most protected from automation. This combination makes it potentially more distributionally consequential than any of its predecessors. It also makes the current moment unusually important: partnership structures are still being formed, regulatory frameworks are still being designed, and the public compute alternative is still small enough to be scaled.

The core finding of the evidence reviewed here is not that a single AI monopoly is inevitable. It is that a multi-layered oligarchy — a small set of cloud providers, frontier model developers, and chip supply-chain nodes — is already setting terms for broad sectors of the economy through pricing, access conditions, and platform integration. This structure enables disproportionate capture of AI-generated surplus, in a way that the IMF’s own modeling suggests will increase capital income and wealth inequality in absolute terms even as aggregate productivity rises.

The response required is not anti-technology. It is the recognition that access to the most economically decisive AI capabilities — not chatbot subscriptions, but compute, model weights, feedback loops, IP, and platform integration — is a question of infrastructure, competition policy, and social contract, not just market preference. Treating it as a public infrastructure question, as the NAIRR and EuroHPC AI Factories begin to do, is the right instinct. But the scale of these programs has not yet matched the urgency of the dynamic they are trying to counterbalance.

Every major infrastructure technology in history — railroads, electricity, telecommunications — eventually produced both regulatory frameworks and public access provisions that prevented permanent capture by first movers. The question for frontier AI is not whether such frameworks are needed. The evidence reviewed here makes that answer clear. The question is whether they will arrive before the structural advantages of current incumbents have compounded beyond reach.

The window is still open. Whether it remains so depends on what policymakers, regulators, and civil society choose to do — and how quickly they choose to do it.
