I. The argument in one paragraph

Three and a half years after ChatGPT’s release, the question “will AI take our jobs?” has finally produced enough data to answer something better than a hot take. The answer is conditional. In the aggregate, AI has not yet caused an economy-wide collapse in employment, even in highly exposed advanced economies. Productivity gains at the task level are real, often enormous, and disproportionately benefit less-experienced workers.

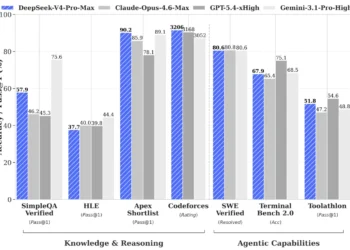

But the labor market is already showing the kind of stress fractures you would expect early in a general-purpose technology shock: weaker hiring into entry-level white-collar roles, sharply reduced vacancies in substitution-vulnerable occupations, a widening premium for workers and firms that adopt AI well, and a growing public anxiety that the next five years will look nothing like the last five.

The serious debate now is no longer “apocalypse vs. abundance.” It is whether AI will produce a manageable churn that institutions can shape, or a faster, more concentrated displacement that they cannot. As Anthropic CEO Dario Amodei told the Financial Times in April 2026: “We should not deny that the disruption is going to happen. We just have to make the positive effect so large that we have a tool to address the disruption.”

That is the argument. The rest of this piece is the evidence.

II. The verdict in three time horizons

The most useful way to talk about AI and employment is not as a single forecast but as three separate questions, each on a different time horizon.

Near term (now through ~2027): neutral to mildly negative aggregate effects, concentrated at entry levels. The strongest empirical work to date, including the Yale Budget Lab tracker of U.S. labor-market data, the Danish administrative-records study by Anders Humlum and Emilie Vestergaard (NBER, Large Language Models, Small Labor Market Effects), and the cross-country survey work by Jonathan Hartley and coauthors, has consistently found small or precisely estimated null effects on aggregate hours, earnings, openings, and total employment. The Federal Reserve Bank of Dallas estimates that AI has added roughly 0.1 percentage points to the U.S. unemployment rate.

However, an NBER paper by Erik Brynjolfsson, Bharat Chandar, and Ruyu Chen — colloquially titled “Canaries in the Coal Mine” — finds that workers aged 22 to 25 in the most AI-exposed occupations have seen a 6% employment decline since late 2022, even as older workers in the same roles have gained. A 2025 World Bank working paper using 285 million Lightcast job postings finds postings in substitution-vulnerable occupations down roughly 12% relative to less-exposed ones, with the gap widening from 6% in year one to 18% by year three. The pattern is not “no effect.” It is “no aggregate effect yet + visible damage at the hiring margin.”

Medium term (2027–2030): genuinely uncertain, and increasingly dependent on agentic deployment. This is where the empirical literature stops being decisive and the scenario logic begins. Whether the medium term looks like the late-1990s IT boom (productivity surge, broad job creation, wage growth) or like a sped-up version of 1980s manufacturing decline (productivity gains captured by capital, displaced workers never recovering) depends on three variables: how quickly agentic AI extends from demos into reliable production workflows; whether new labor-intensive tasks emerge fast enough to absorb displaced workers; and whether institutions — schools, unions, governments, firms — adapt at the speed of the technology rather than the speed of legislation.

Long term (2030+): undetermined by capability alone. The deepest insight from the post-Acemoglu/Restrepo task-based literature is that the long-run net employment effect of any general-purpose technology is jointly produced by capability and by demand elasticities, organization design, education systems, ownership structures, and labor-market institutions. That is why two equally informed economists can look at the same AI capabilities and reach opposite conclusions. They are not disagreeing about the technology. They are disagreeing about what humans, firms, and governments will do with it.

The framing matters because most of the public debate — and almost all of the argument among AI CEOs on X — collapses these three horizons into one. Amodei’s “50% of entry-level white-collar jobs in five years” is a medium-term claim. Yale Budget Lab’s “no major macro effects” is a near-term claim. Elon Musk’s “work will be optional” is a long-term claim. They can all be simultaneously true and simultaneously misleading.

III. Why “exposure” is not “replacement”

The single most important conceptual mistake in this debate is treating AI exposure as if it were the same as AI replacement. Exposure is the share of tasks an occupation contains that a current AI system could perform at adequate quality. Replacement is the share of those tasks that an actual organization actually delegates to AI at actual cost-effective quality, in a way that reduces actual labor demand. The gap between them is enormous, and it is where most of the bad public predictions live.
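The distinction can be made concrete with a toy calculation. The sketch below is illustrative only: the task list, the `Task` fields, and both helper functions are hypothetical and are not drawn from any study cited here. It treats exposure as the share of an occupation's tasks that AI could do, and replacement as the share of those exposed tasks that an organization actually delegates in a way that cuts labor demand.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    ai_capable: bool  # a current AI system could do this at adequate quality
    delegated: bool   # an organization actually hands it to AI and needs less labor


def exposure_share(tasks: list[Task]) -> float:
    """Share of all tasks that AI could perform."""
    return sum(t.ai_capable for t in tasks) / len(tasks)


def replacement_share(tasks: list[Task]) -> float:
    """Share of the exposed tasks that are actually delegated."""
    exposed = [t for t in tasks if t.ai_capable]
    if not exposed:
        return 0.0
    return sum(t.delegated for t in exposed) / len(exposed)


# Hypothetical occupation: 5 tasks, 3 exposed, only 1 actually delegated.
tasks = [
    Task("summarize filings", ai_capable=True, delegated=True),
    Task("draft routine letters", ai_capable=True, delegated=False),
    Task("cite-check briefs", ai_capable=True, delegated=False),
    Task("interview clients", ai_capable=False, delegated=False),
    Task("appear at hearings", ai_capable=False, delegated=False),
]

print(f"exposure: {exposure_share(tasks):.0%}")
print(f"replacement: {replacement_share(tasks):.0%}")
```

In this made-up case most of the occupation is exposed, yet only a third of the exposed tasks have been handed over. That gap is the one the paragraph above describes.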

Three exposure maps now anchor the conversation.

OpenAI’s “GPTs are GPTs” estimated that about 80% of U.S. workers could have at least 10% of their work tasks affected by GPT-class systems, and roughly 19% could have at least half their tasks affected. Anthropic’s Economic Index observes actual Claude usage and finds that about 36% of occupations show AI use in at least a quarter of associated tasks, while only ~4% see usage across three-quarters of tasks. Anthropic also classifies observed use as roughly 57% augmentation versus 43% automation — a critical fact that almost never makes the headline. The ILO’s 2025 update on generative AI and jobs estimates that one in four workers globally is in an occupation with some degree of generative-AI exposure, and emphasizes — repeatedly — that most jobs are far more likely to be transformed than eliminated.

A telling data point arrived in March 2026 when Andrej Karpathy published a public job-exposure analysis scoring all 342 BLS occupations on a 0–10 AI-exposure scale, weighted by employment volume and visualized as a treemap. Karpathy’s analysis, covered widely on X, concluded that out of roughly 143 million U.S. workers, about 57 million sit in occupations with high to very high exposure. His characterization is almost identical to OpenAI’s and Anthropic’s: “if your whole job happens on a screen, you’re more exposed.” The safest jobs in his map were physically embodied: roofers, janitors, plumbers, electricians, groundskeepers, construction laborers.
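An employment-weighted exposure figure of this kind is simple to compute once each occupation has a score and a headcount. The following is a minimal sketch, not Karpathy's actual code: the occupations, scores, headcounts, and the cutoff of 7 for "high" exposure are all assumptions made for illustration. The last lines only restate the reported 57 million and 143 million as a share.

```python
# Hypothetical rows: (occupation, exposure score on a 0-10 scale, workers in millions).
occupations = [
    ("data entry clerk", 9, 2.0),
    ("customer service rep", 8, 3.0),
    ("financial analyst", 7, 1.0),
    ("registered nurse", 4, 3.0),
    ("electrician", 2, 1.0),
]

HIGH_EXPOSURE_THRESHOLD = 7  # assumed cutoff, not taken from the original analysis


def high_exposure_share(rows, threshold=HIGH_EXPOSURE_THRESHOLD):
    """Share of all workers who sit in occupations scored at or above the threshold."""
    total = sum(workers for _, _, workers in rows)
    high = sum(workers for _, score, workers in rows if score >= threshold)
    return high / total


print(f"toy high-exposure share: {high_exposure_share(occupations):.0%}")

# The figures reported in the text, expressed as a share of the workforce.
reported_share = 57 / 143
print(f"reported high-exposure share: {reported_share:.0%}")
```

Weighting by headcount matters because a single large occupation can dominate the total even when most occupation titles score low.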

This is consistent with what the MIT Iceberg Index found in late 2025 and what CNBC reported on Anthropic’s labor research: AI can already do an estimated 11.7% of the U.S. labor market’s tasks — roughly $1.2 trillion in wages — but visible layoffs attributed to AI account for only ~2.2% of that exposed surface. The rest is a slow, quiet pressure being applied beneath the headline numbers.

What does that mean? It means exposure is necessary but not sufficient for displacement. A task can be technically automatable for years before any organization deploys the workflow that actually removes a job. Telephone operators were technically automatable for decades before they vanished. Customer-service roles were technically partially automatable in 2018; their headcount kept growing until 2023. The bottleneck is rarely capability. It is integration cost, change management, regulatory exposure, reliability under tail conditions, customer trust, and — crucially — the willingness of human workers to redesign their own jobs around the tool. None of these scale at the speed of model parameters.

So when the WEF’s 2025 Future of Jobs Report projects 170 million jobs created and 92 million displaced globally by 2030, the precise numbers are less important than the underlying composition: substantial churn, net positive if reskilling and demand expansion materialize, net negative if they don’t. WEF’s number is an employer-survey forecast, not realized data, and should be read as a signal of employer sentiment rather than a precise forecast.

IV. The best hard evidence so far

Let’s ground the conversation in studies, not vibes. The micro-productivity literature, the labor-demand literature, and the aggregate macro literature each tell a different but coherent piece of the same story.

Micro: AI works, sometimes spectacularly

The cleanest evidence that AI does something real comes from controlled experiments and firm rollouts.

Brynjolfsson, Li, and Raymond’s Generative AI at Work — a study of more than 5,000 customer-support agents — found a roughly 14% productivity increase from a generative-AI assistant overall, and around a 34% improvement for novice and lower-skilled workers. The senior agents barely moved; the bottom of the distribution shot up. This is the now-famous “skill compression” pattern, and it has been replicated, in varying degrees, in writing tasks, legal drafting, programming, and sales.

Noy and Zhang’s Science paper on generative AI in writing found that ChatGPT access reduced completion time on professional writing tasks by roughly 40% and raised assessed quality by about 18%. A subsequent line of work has documented similar magnitudes in coding (GitHub Copilot), in medical question answering, in financial analysis, and in entry-level legal review.

What the micro literature decisively rejects is the claim that AI is “just hype.” Inside well-defined tasks, the productivity gains are real, large, and arriving faster than any other technology in living memory.

Vacancies: hiring is moving before headcount

The single most underappreciated dataset on AI and labor is job postings, because firms cut hiring long before they cut workers. The World Bank’s Labor Demand in the Age of Generative AI working paper, which analyzes 285 million U.S. postings from 2018 to 2025, found that postings in occupations with above-median AI substitution vulnerability fell about 12% relative to below-median occupations after ChatGPT’s launch, with effects rising from 6% in the first year to 18% by year three. The decline was sharpest for entry-level roles in administrative support and professional services.
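A figure like "fell about 12% relative to below-median occupations" is a relative comparison, not an absolute drop. The sketch below shows one simple way such a gap can be computed from before-and-after posting levels in two groups. It is not the working paper's estimator (which is not reproduced here), and the index values are hypothetical, chosen only so the arithmetic lands near 12%.

```python
def relative_change(high_pre, high_post, low_pre, low_post):
    """Growth of the high-vulnerability group relative to the low-vulnerability group.

    Computed as (high_post / high_pre) / (low_post / low_pre) - 1.
    """
    high_growth = high_post / high_pre
    low_growth = low_post / low_pre
    return high_growth / low_growth - 1


# Hypothetical posting indices, with the pre-launch period set to 100 for both groups.
gap = relative_change(high_pre=100, high_post=80, low_pre=100, low_post=91)
print(f"relative change in postings: {gap:.1%}")
```

In this toy case postings fall in both groups. The relative gap captures only how much further the more vulnerable group fell, which is why a negative relative figure can coexist with a weak hiring market overall.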

That finding lines up with Federal Reserve research using a broader definition of “software workers” (including coders outside the formal tech sector), which estimated roughly half a million fewer coding jobs exist today than pre-AI trend lines would have predicted. It is the missing-vacancy effect that worries Amodei and the labor economists in roughly equal measure, even though they disagree about almost everything else.

Macro: still quiet, but watch the J-curve

The Yale Budget Lab has been running a continuous monitor of U.S. labor-market data from 2022 onward. Its repeated finding, most recently in October 2025 and reaffirmed in February 2026, is that the occupational mix of the U.S. labor market has not changed significantly since ChatGPT’s release. As Yale Budget Lab director Martha Gimbel told Fortune: “No matter which way you look at the data, at this exact moment, it just doesn’t seem like there’s major macroeconomic effects here.”

Apollo Global’s chief economist Torsten Slok summed up the same observation more bluntly: “AI is everywhere except in the incoming macroeconomic data.” That is a Solow-style productivity-paradox echo, and it is the central tension of the moment.

But there are now early hints that the paradox may be breaking. Erik Brynjolfsson, in a February 2026 Financial Times op-ed, pointed to a striking decoupling in U.S. data: the latest revised jobs report showed only 181,000 net new jobs in a recent month, while Q4 2025 GDP tracked up 3.7%, and Brynjolfsson’s own analysis identified a 2.7% year-over-year productivity jump in 2025, which he attributes to the early effects of AI. His framing is the productivity J-curve: an investment phase in which spending shows up as cost without much output, followed by a harvest phase in which the investment compounds into real productivity. He believes the U.S. has just transitioned into the harvest phase.

That is, importantly, an economist-led claim with empirical scaffolding — not an industry projection.

Headcount actions: real, but messy

Reported AI-related layoffs reached roughly 55,000 in the United States in 2025 according to Challenger, Gray & Christmas, and have climbed in early 2026 with notable rounds announced by Amazon, Microsoft, Klarna, Nike, and others. Klarna CEO Sebastian Siemiatkowski announced in February 2026 that the buy-now-pay-later firm would shrink its 3,000-person workforce by roughly one-third by 2030, in part because of AI acceleration.

The complication is AI redundancy washing. Deutsche Bank analysts, in a January 2026 note, warned this would be a major feature of 2026: firms attributing layoffs to AI that they would have made anyway because of macro pressure, weak consumer demand, geopolitical strain, or post-2021 over-hiring. Sam Altman himself — in unusually candid remarks in February 2026 — confirmed publicly that AI washing is happening: “There’s some AI washing where people are blaming AI for layoffs that they would otherwise do, and then there’s some real displacement by AI of different kinds of jobs.”

Both can be true. They probably are. The hard analytic problem is that we cannot, with current data, cleanly separate the two.

V. The CEOs are fighting, and that itself is data

Few intellectual disputes in modern economics have ever played out as publicly — or as colorfully — as the current AI-jobs debate among the people building the technology. The fight is happening on X, in Davos speeches, in 20,000-word essays, in podcasts, and in dueling op-eds. It is worth taking seriously not because the founders are necessarily right but because their public framings shape investor expectations, regulator attention, and the workforce decisions of the firms downstream of them.

Dario Amodei: the alarm bell

Anthropic’s Dario Amodei is, by some distance, the loudest pessimist among the frontier-lab CEOs. In May 2025 he told Axios that AI could eliminate half of all entry-level white-collar jobs within five years, with unemployment rising to 10–20%. The remark detonated, drew rebukes from across the industry, and was — in the view of many of his peers — the starkest projection ever made by a sitting frontier-AI CEO.

In late January 2026, Amodei published a roughly 20,000-word essay titled The Adolescence of Technology, in which he doubled down. He argued that AI’s “cognitive breadth” makes it unlike previous technological revolutions: it can substitute simultaneously across finance, law, consulting, tech, and back-office work, leaving displaced workers no adjacent industry to escape into.

“AI isn’t a substitute for specific human jobs but rather a general labor substitute for humans,” he wrote. He warned of an “unusually painful” short-term shock and the possible emergence of an “unemployed or very-low-wage ‘underclass.’” In an April 2026 Financial Times interview at lunch (where he reportedly ate three of the four shared bread rolls) he reiterated the case directly: stop sugar-coating, the disruption is real, and AI’s diffusion is bottlenecked by trust, which the industry is currently squandering by overselling and under-delivering.

Sam Altman: cautious enabler with a transition agenda

OpenAI’s Sam Altman has staked out a more carefully hedged position. OpenAI’s Workforce Blueprint and its public usage research argue that, in observed data so far, AI is “more enabler than replacer.” But Altman has not pretended the displacement question is settled. In August 2025 he was asked how displaced workers would survive and answered, with unusual candor, “I don’t know — neither does anyone else,” before gesturing at “some new economic model.”

In April 2026 he released a 13-page policy document proposing a kind of New Deal for the AI era: four-day workweeks, public wealth funds, portable benefits, and a transition architecture for what he calls “superintelligence.” Critics, including an analyst quoted by the Financial Times, called it “comms work to provide cover for regulatory nihilism.” Altman’s stance is essentially: more displacement is coming, new jobs will form, and society must build the safety net while we build the systems. In February 2026 he confirmed publicly that “AI washing” is happening and that “the real impact of AI doing jobs in the next few years will begin to be palpable.”

Yann LeCun: the “stay in your lane” rebuttal

Few public exchanges have been as sharp as former Meta chief AI scientist Yann LeCun’s April 2026 broadside on X. Reacting to a recirculating clip of Amodei’s white-collar-bloodbath warning, LeCun posted on April 18, 2026:

“Dario is wrong. He knows absolutely nothing about the effects of technological revolutions on the labor market. Don’t listen to him, Sam, Yoshua, Geoff, or me on this topic. Listen to economists who have spent their career studying this, like @Ph_Aghion, @erikbryn, @DAcemogluMIT, @amcafee, @davidautor.”

It is a remarkable post for a sitting AI luminary: a public instruction not to take the labor-market predictions of AI luminaries — including himself — seriously. The next day, when a commenter pushed back that AI is qualitatively different from past technologies, LeCun escalated: “It really doesn’t differ qualitatively from previous technological revolutions. That’s the whole point. People like Dario present it as qualitatively different. They are just deluded or biased by their vested interests in magnifying the impact of their work.”

LeCun’s argument has two parts. First, technical expertise in AI does not confer expertise in labor economics — and labor economics has its own deep, painstaking, multi-decade body of evidence. Second, frontier-lab CEOs face a structural conflict of interest: dramatic predictions raise capital, attract regulation that favors incumbents, and amplify the perceived stakes of their own work. As David Sacks has phrased it, “fear” can be a marketing strategy.

Andrej Karpathy: data, not drama

Karpathy’s March 2026 jobs analysis is a useful counterweight to both Amodei and LeCun because it is a quantitative artifact, not a vibe. By scoring all 342 U.S. occupations with an LLM and weighting by employment, he produced a treemap that visualizes exposure as a structural map: a few large dark-red blocks (administrative support, customer service, data entry, paralegal work, junior analyst roles), a long tail of partially exposed cognitive work, and a sturdy band of physically embodied jobs that stay green.

His characterization — roughly 57 million of 143 million U.S. workers in high or very high exposure occupations — is approximately consistent with the OpenAI exposure baseline. It is not a prediction of layoffs. It is a map of where the heat is, and it was produced by an AI insider famously unwilling to either hype or doomsay.

Jensen Huang: the constructive optimist

Nvidia CEO Jensen Huang’s view, repeated at Davos 2026, is that AI will create substantial blue-collar work (chip factories, AI data-center construction, electricians, plumbers, technicians) paying six-figure salaries. He has also publicly criticized Anthropic’s framing, characterizing it as the view that AI is so “scary” that “only [Anthropic] should do it.” Whether or not one agrees with Huang’s optimism, his point about the physically embodied complement is empirically credible: the AI economy requires enormous physical infrastructure, and the demand pulse for skilled tradespeople is already visible in U.S. construction wage data.

Demis Hassabis, Greg Brockman, and the cautious-optimist lab view

DeepMind’s Demis Hassabis tends to land between Amodei and LeCun: he expects significant disruption but believes long-run abundance is achievable if the gains are widely distributed and complementary tasks emerge fast enough. OpenAI’s Greg Brockman has emphasized agentic computing and scientific acceleration in public talks rather than quantified labor forecasts. Both are useful as scenario markers; neither offers a number rigorous enough to anchor against.

Elon Musk: the “work optional” pole

Musk represents the most extreme abundance scenario in serious circulation. At Davos 2026 he again described AI and robotics as “the path to abundance for all” and proposed a universal high income, not just basic income. His position has now picked up surprising traction in policy circles: U.K. minister for investment Lord Jason Stockwood told the Financial Times in early 2026 that the U.K. government is weighing universal basic income to cushion AI-driven displacement. Musk’s view is best read not as a near-term forecast but as an architecture for a long-term scenario nobody can yet rule out.

The CEO meta-pattern

The striking thing about reading the lab leaders together is not that they disagree; it is how they disagree. Amodei and Hinton argue from capability extrapolation. LeCun and Acemoglu argue from labor-market history. Altman and Hassabis argue from observed usage and the texture of deployment. Musk argues from a particular long-run physical model of robotics. Huang argues from concrete infrastructure demand. They are not really debating the same proposition. They are debating five different propositions wearing the same costume. The honest reader has to disentangle them.

VI. The economists push back, hard

LeCun’s instruction to listen to economists points at a real, large, and unusually disciplined body of evidence that the AI debate has often ignored. Here is the short version of what those economists actually believe, based on their published work and recent public statements.

Daron Acemoglu: motivated reasoning all around

Daron Acemoglu — MIT, 2024 Nobel laureate — gave a remarkable interview to Business Insider in April 2026 in which he explicitly engaged with Amodei’s white-collar bloodbath thesis. His diagnosis was sharp: Amodei is engaging in “motivated reasoning.” Not because Amodei is dishonest — Acemoglu was careful to say he believes Amodei sincerely holds his view — but because Amodei’s role as a frontier-AI CEO biases him toward emphasizing model capabilities and dramatizing the stakes of his own work. Acemoglu’s framework, central to his joint research with Pascual Restrepo, is that labor-market outcomes depend on the balance between displacement (tasks taken from labor) and reinstatement (new labor-intensive tasks created). New job creation is not automatic. It depends on what technologies and organizations choose to build.

Acemoglu’s most quoted line from that interview is also the most sobering: “If we lose 20% of jobs in the United States, democracy won’t survive.” He rates that scenario as unlikely but explicitly possible. His policy point follows: the relevant question is not “can AI destroy jobs?” but “why is Anthropic so keen on making automation its main priority, when there are other directions — worker complementation, training, expertise extension — that AI capability could be aimed at?”

That is the most precise framing of the labor question that any economist has offered in the GenAI era.

David Autor: the institutional answer

David Autor, the canonical voice on routine-biased technological change, argues that AI’s impact will be determined less by capability than by whether it is deployed to extend expertise to people who don’t currently have it (rebuilding middle-skill work) or to replicate expertise in software (hollowing it out). The first scenario is materially good for labor; the second is materially bad. Autor’s view is that the path is institutionally determined and not yet fixed.

Erik Brynjolfsson: the productivity J-curve

Brynjolfsson’s Stanford Digital Economy Lab work is quietly the most important empirical research on AI and labor. His combined results (large micro productivity gains, weak macro pass-through until 2025, decoupling of GDP and jobs in late 2025, and the early career hit for 22–25-year-olds) make up the most coherent evidence base anyone has assembled. His public framing is that we are exiting the J-curve’s investment phase and entering the harvest phase. If he is right, U.S. productivity growth will run well above trend over the next several years, while employment growth slows.

Philippe Aghion: creative destruction with policy variables

Aghion’s Schumpeterian growth tradition treats innovation as a destruction-and-renewal process, with the speed of renewal — and therefore the magnitude of transition pain — depending heavily on policy variables: competition, training, labor mobility, financing for SMEs. His view, broadly, is that AI’s net employment effects will track the quality of complementary policy more than the trajectory of the technology.

Andrew McAfee and the “second machine age” position

McAfee, with Brynjolfsson, has long argued that technology restructures labor demand rather than simply destroying it. His framing is moderate-optimist: technological revolutions create more value than they destroy, but distribution of that value is a political choice.

The collective economist message — and this is the part LeCun was right to highlight — is that none of the leading labor economists have endorsed anything like Amodei’s 50% figure. Their work is more nuanced, more conditional, and far more focused on policy than on capability extrapolation.

VII. Who actually loses

The “is it net positive or net negative” debate misses the more important question: who?

The clearest pattern in the early evidence is that AI’s costs and benefits are distributed very unequally across age, occupation, geography, firm size, and skill access. Even if the aggregate effect is small, the distributional effect is already large.

Younger workers in entry-level white-collar roles are the canary. Brynjolfsson, Chandar, and Chen’s payroll analysis found a 6% employment decline for 22–25-year-olds in the most exposed occupations from late 2022 to late 2025, while older workers in those same occupations gained. Anthropic’s April 2026 survey of 81,000 Claude users found that early-career workers reported significantly higher displacement anxiety than senior professionals — a perception that is consistent with hiring data showing entry rates into high-exposure occupations down roughly 14% from pre-ChatGPT trend.

This is not just a distributional concern. It is a structural concern about career-ladder erosion. White-collar professions have historically built expertise through entry-level apprenticeship: the first-year associate reviewing documents, the junior analyst building models, the new consultant grinding decks. If AI eats those tasks, then where do senior workers come from in 2035? That problem is mostly invisible in current labor-market statistics because incumbent senior workers are still performing their roles — but the pipeline behind them is thinning.

Clerical, administrative, and customer-service work is heavily exposed and disproportionately female. The ILO’s 2025 update emphasizes that clerical-support occupations — disproportionately staffed by women in advanced economies — are among the most exposed. The OECD’s analysis suggests overall AI exposure is roughly similar by sex, but the composition of exposure differs in ways that matter for risk profiles and retraining needs.

Geography is destiny — again. The OECD’s Emerging Divides in the Transition to Artificial Intelligence shows widening adoption gaps across countries, sectors, large versus small firms, and capital versus non-capital regions. Knowledge-intensive services and large firms are adopting fastest; SMEs face financing, skill, and infrastructure obstacles. In a recent World Bank framing, low- and middle-income countries are partially insulated from displacement but also poorly positioned to capture the upside without foundational digital investment.

Firm-level concentration is widening. PwC’s 2025 Global AI Jobs Barometer reports AI-linked productivity growth roughly four times higher in AI-exposed industries than in less-exposed industries, and a 56% wage premium for workers with AI skills. Those numbers reflect rising returns to the firms and workers who have learned to use the technology, and a widening gap to those who haven’t. The IMF’s Bridging Skill Gaps for the Future finds that about 1 in 10 vacancies in advanced economies now requires at least one new (often AI- or IT-related) skill — wage-positive for those who possess it, deeply polarizing for those who don’t.

The C-suite is not insulated. A surprising 2025–26 phenomenon: senior CEOs have begun resigning explicitly because of AI-driven transformation pressures. Walmart’s Doug McMillon, Coca-Cola’s James Quincey, and Adobe’s Shantanu Narayen all stepped down in 2025–2026 with public statements about needing fresh leadership for the AI era. Mercer’s Global Talent Trends 2026 survey found that 40% of employees fear losing jobs to AI, up from 28% in 2024. Sam Altman has gone further, telling the MD MEETS podcast that he expects AI to eventually produce a better CEO than he is, “and I will be nothing but enthusiastic the day that happens.” The compression effect is reaching the top.

Job quality is moving as fast as job quantity. The OECD’s 2023 Employment Outlook noted that AI can improve job satisfaction, safety, and even wages — and simultaneously raise risks around surveillance, work intensification, privacy, and bias. Anthropic’s survey evidence captures the same paradox at the individual level: workers reporting the largest speedups also report the strongest displacement concern. Productivity gains and anxiety can — and do — rise together.

The overall pattern is unambiguous: aggregate stability is masking large and growing distributional shifts. Ignoring those shifts because the headline unemployment number is calm is a category error.

VIII. The mechanisms that will decide the net answer

If you want to know whether AI will, in the long run, create more jobs than it destroys, do not look at capability charts. Look at six mechanisms.

1. Displacement vs. reinstatement. Acemoglu and Restrepo’s framework remains the cleanest lens. Automation removes labor from existing tasks; reinstatement creates new ones. The historical net effect has usually been positive over decades, but only because new tasks emerged. The open question for AI is whether enough new labor-intensive tasks will emerge fast enough — and at the right skill levels — to absorb displaced workers. Current evidence on new-task creation in the AI era is thin but not zero: AI has already produced demand for prompt engineers, AI auditors, ML ops, fine-tuners, evaluators, agentic-system designers, and a long tail of integration roles. Whether those scale to millions of jobs or stay in the thousands is the central long-run question.

2. Demand elasticity and Jevons rebound. When AI lowers the cost of a cognitive task, total demand for that task may rise enough to increase labor in the affected occupation. Lower-cost legal drafting could mean more legal services consumed; cheaper code could mean more software written; cheaper customer service could mean broader service coverage. The classical example is bank tellers: ATMs lowered the cost of routine transactions, but bank branches multiplied because the cost of opening a branch fell, and tellers shifted toward relationship work. AI’s Jevons potential is large, but it is not automatic — it requires elastic demand and human bottlenecks that scale with output.

3. Pass-through into prices and wages. Even if AI raises productivity sharply at the firm level, the labor-market impact depends on whether those gains flow into (a) lower prices, (b) higher wages, (c) higher profits, or (d) higher capital expenditure. Lower prices expand demand and create jobs. Higher wages reduce inequality. Higher profits and capex without pass-through concentrate gains and lower the labor share. Which of these dominates is institutional, not technological.

4. Diffusion speed. McKinsey reports employee AI usage rose from 30% in 2023 to 76% in 2025, but productive firm-level deployment lags far behind. A widely cited industry survey in early 2026 found that 80% of executives said AI had not yet had a measurable impact on productivity or headcount. The gap between adoption and effect is the productivity J-curve. Faster diffusion compresses the transition; slower diffusion stretches it.

5. Agentic deployment. The single biggest variable for the medium term is whether agentic AI — systems that plan, execute, and self-correct over multi-step tasks — scales reliably from demos to production. If yes, a much larger share of cognitive work becomes automatable, and the displacement curve steepens. If no, AI remains primarily an augmentation technology and the trajectory looks more like the IT revolution of the 1990s. The empirical answer will be visible in the next 18–36 months.

6. Institutions, ownership, and policy. The historical record is clear that the distribution of technological gains is largely determined by labor-market institutions, education systems, social insurance, tax policy, competition policy, and ownership structures — not by the technology itself. The Industrial Revolution eventually produced enormous gains, but the early decades were brutal precisely because institutional adjustment lagged. The same set of choices will determine whether AI produces broad prosperity, narrow prosperity, or neither.
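
The demand-elasticity logic in mechanism 2 can be made concrete with a toy calculation. The sketch below is illustrative only and rests on assumptions that are not in the evidence cited here: demand has a constant price elasticity, the full cost saving is passed into prices (mechanism 3, channel a), and the wage is fixed. The function name `labor_demand` and its parameters are hypothetical. Under those assumptions, labor hours scale with productivity raised to the power (elasticity minus 1), so employment in the occupation rises only when elasticity exceeds 1.

```python
def labor_demand(productivity, elasticity, wage=1.0, scale=100.0):
    """Hours of labor demanded in a stylized one-task market.

    Assumes constant-elasticity demand and full pass-through of
    unit cost into price (both are simplifying assumptions).
    """
    price = wage / productivity                 # unit labor cost, fully passed into price
    quantity = scale * price ** (-elasticity)   # constant-elasticity demand curve
    return quantity / productivity              # hours needed to produce that quantity


# Compare labor demand before and after AI doubles productivity.
for eps in (0.5, 1.0, 2.0):
    before = labor_demand(1.0, eps)
    after = labor_demand(2.0, eps)
    print(f"elasticity={eps}: labor changes by {after / before - 1:+.0%}")
```

With productivity doubled, labor falls by roughly 29% when elasticity is 0.5, is unchanged at 1.0, and doubles at 2.0. Real markets add frictions this sketch ignores, including incomplete pass-through and wage adjustment, which is why the rebound is possible but not automatic.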

IX. Designing the transition: policy options that actually matter

A serious policy response to AI-and-employment will not be one big lever. It will be a layered system. Here is what the evidence currently supports as worth doing, and what is mostly distraction.

Worth doing.

- Education and lifelong learning. Broad-based digital, STEM, and AI-complementary education remain the highest-return long-term investment. The IMF’s evidence on new-skills demand shows large wage premia for AI-complementary skills, and the supply has not yet caught up. The challenge is that education is slow, while displacement is potentially fast.

- Short-cycle, employer-linked retraining. Generic retraining has a poor track record. The evidence favors retraining that is tightly linked to actual vacancies, with employer co-investment and clear pathways into specific roles. Programs like IBM’s AI training initiative and Amazon’s Upskilling 2025 are credible templates.

- Apprenticeships and expertise-extension job design. The most powerful response to the entry-level squeeze is to redesign the jobs so that AI extends apprenticeship rather than removes it. David Autor’s work points exactly here: use AI to teach the junior the senior’s skill, rather than to replace the junior with the senior’s skill in software.

- Wage insurance, portable benefits, stronger UI. Transition unemployment is a real cost even when net employment is stable. Wage insurance — partial replacement of pay losses for displaced workers who find new work at lower pay — has a particularly strong evidence base for shock absorption.

- Worker voice and social dialogue. OECD evidence and ILO policy guidance both find that AI deployments with worker consultation tend to produce better job-quality outcomes. This is one of the cheapest interventions and one of the most consistently undervalued.

- Place-based diffusion policy. OECD adoption-divide data is unambiguous: SMEs and lagging regions are falling behind. Targeted diffusion support — cloud credits, integration grants, regional AI hubs — addresses the long-term tail risk that concentration produces both economic and political instability.

- Better measurement. Almost every dispute in this debate would be sharper if public statistical agencies tracked AI usage, automation, augmentation, and task-level changes systematically. Current data infrastructure is decades behind the technology.

More speculative but worth taking seriously.

- Tax policy on AI-driven windfalls. The labor-share argument deserves real attention. If AI gains accrue narrowly to capital, broad-based taxation of capital income and wealth, not necessarily a “robot tax,” becomes part of the story. In his opening remarks at Davos 2026, Larry Fink said: “If AI does to white-collar work what globalization did to blue-collar, we need to confront that directly.” That is BlackRock’s CEO saying out loud what the labor-share data is already starting to show.

- Universal basic income or universal high income. UBI is not, on its own, a labor-market policy: it does not preserve mobility, meaning, or productive engagement. It is a contingency architecture for scenarios in which displacement runs faster than reinstatement. The U.K. minister for investment, Lord Jason Stockwood, openly raised UBI as a hedge in February 2026; Sam Altman’s own OpenResearch UBI experiments provide some of the best available evidence; Musk’s “universal high income” sits at the speculative tail of the same idea. The honest answer is that UBI is an option that should be on the policy shelf, not yet on the policy table.

- Public investment in human-intensive sectors. Care, health, education, climate adaptation, and skilled trades are areas where labor demand is robust to AI and where social returns are high. Pivoting public investment toward these sectors hedges against displacement scenarios while delivering value regardless.

Mostly distraction.

- A “pause” on AI development. Politically infeasible; would not address the actual labor-transition problem; would simply shift advantage to less-cautious competitors.

- Heavy-handed automation taxes that suppress diffusion. The downside of badly designed robot/AI taxes is well documented in the OECD literature — they tend to slow productivity without protecting workers.

- One-size-fits-all national reskilling programs. The evidence on these is poor.

The deeper policy lesson is that the binary choices framing the public debate — innovation vs. regulation, education vs. UBI, market vs. state — are mostly false. The transition needs diffusion-friendly policy where AI augments labor, protective policy where AI substitutes for labor, and redistributive policy where ownership concentration would otherwise capture the gains. All three at once.

X. The conclusion you can actually defend

After all the evidence, the X.com fights, the 20,000-word essays, the Yale Budget Lab tracker, the Karpathy treemaps, the LeCun broadsides, and the Acemoglu interviews, the most defensible answer to “what will AI do to employment?” is this:

AI is more likely to cause large-scale job churn than a one-way job apocalypse or a frictionless boom. That churn is already visible in vacancy data and in entry-level cohort employment. It is not yet visible in aggregate labor-market statistics, and may not become visible there for several more years — but the absence of an aggregate signal is not the absence of harm. Distributional damage is already concentrated, particularly on workers aged 22 to 25 in white-collar professions, on substitution-vulnerable clerical work, on women in those clerical roles, on smaller firms, and on regions outside the major adoption hubs.

The aggregate effect over the next five years could plausibly be neutral, modestly positive, or modestly negative. The data does not support either extreme prediction. Yale Budget Lab’s data, the Danish administrative records, the cross-country survey work, the Federal Reserve Bank of Dallas analysis, and the offsetting-effects firm-level studies all point to muted aggregate impact so far, with continuing transition pressure ahead.

The medium-term answer depends primarily on agentic deployment, demand elasticity, new-task creation, and policy. If agentic AI scales reliably and new labor-intensive tasks fail to emerge fast enough and policy fails to keep up, then Amodei’s entry-level white-collar bloodbath is a credible scenario. If instead agentic AI hits diffusion friction, demand expands, and new tasks emerge — the historical pattern — then the medium term looks more like the IT revolution: substantial churn, real winners and losers, broad gains over time.

The long-term net employment outcome is not predetermined by the technology. It is jointly produced by capability, market structure, demand elasticities, education systems, social insurance, worker bargaining power, tax policy, ownership structures, and the speed of new-task creation. That is the deepest finding of fifty years of labor economics, and the AI shock has not changed it.

Yann LeCun was right about one important thing: the people who build AI are not the people best equipped to forecast its labor-market consequences. Dario Amodei was right about another: pretending the disruption isn’t happening corrodes public trust in the technology. Daron Acemoglu may have been right about the most important thing: if Amodei’s stark scenario does materialize, “democracy won’t survive” the political backlash — and therefore the question of which direction we point AI capability matters more than the question of how fast it improves.

A serious answer to “what is AI doing to employment?” therefore is not a forecast. It is a frame. The frame is: under what economic and institutional conditions does AI create enough new human work, and enough good human work, to offset the work it automates, and who benefits if it does? The honest answer to that question is that it is undetermined, contested, and open to design. The transition has begun. The shape of it is still being written. What we do in the next several years in firms, school systems, unions, central banks, and parliaments will matter more than the next round of model releases.

The evidence does not support panic. It does not support complacency either. It supports something harder than both: serious, evidence-led, policy-active engagement with one of the most consequential technological shifts of the modern era. The economists know it. The honest CEOs know it. And the data, in its quiet way, is finally beginning to know it too.