A research-based playbook for founders, product leaders, and growth teams navigating the new rules of AI go-to-market

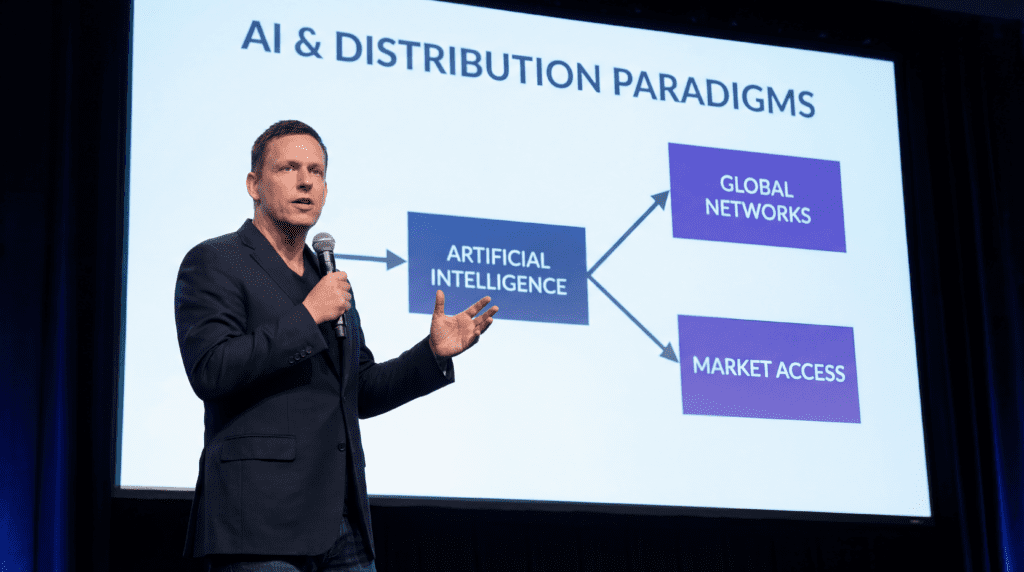

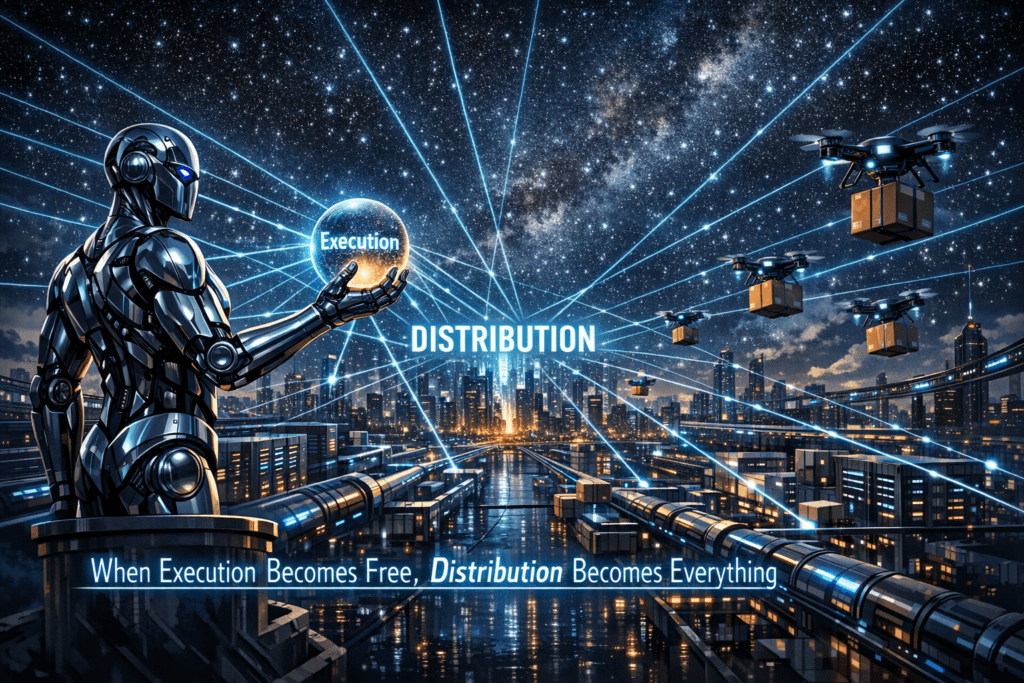

There is a seductive myth at the heart of the generative-AI gold rush: build something brilliant enough, and the world will find you. It is a myth that has destroyed more promising startups than bad code ever could. The uncomfortable truth — one that the most successful AI companies have quietly internalized — is that distribution is the product.

In a market where over 60% of enterprise SaaS products now have embedded AI features and where the global generative-AI market is projected to drive $1.3 trillion in annual economic impact by 2030, the question is no longer whether your model is good. The question is whether anyone can find it, use it, and keep using it.

This playbook is for the founders, product leaders, and growth teams who understand that insight. It maps the six primary distribution channels available to generative-AI applications today — API ecosystems, SaaS platform integrations, marketplaces, enterprise partnerships, open-source communities, and influencer marketing — and explains, with real-world examples, how to use each one strategically.

It draws on the core thesis that Kingy AI and others in the distribution-focused AI commentary space have been advancing: that in the generative-AI era, the companies that win are not necessarily the ones with the best models, but the ones with the best routes to users.

Why Distribution Is the Defining Variable

Before diving into channels, it is worth understanding why distribution has become so decisive in this particular technology wave.

The generative-AI landscape has a structural feature that makes it unlike previous software eras: the underlying models are rapidly commoditizing. According to Founders Forum Group’s 2025 AI statistics report, over 300 enterprise tools have already embedded generative AI via APIs or in-product copilots. The gap between a frontier model and a capable open-weight alternative is narrowing every quarter.

When the core technology is increasingly accessible, the moat shifts from the model itself to the distribution infrastructure around it — the integrations, the communities, the partnerships, the brand trust, and the workflows that make a product sticky.

This is not a new observation in software history. Slack did not win because it invented workplace messaging; it won because it built an ecosystem of integrations and a viral loop that made it indispensable. Shopify did not win because it invented e-commerce; it won because it built a marketplace of apps and a partner network that made switching costs prohibitive.

As Appgile’s analysis of the 2025 SaaS landscape notes, “by exposing core functionality via APIs, platforms increase their relevance across industries and use cases.” The same logic applies with even greater force to generative-AI applications, where the underlying capability is often replicable but the distribution infrastructure is not.

The six channels below are not mutually exclusive. The most successful AI companies use several simultaneously, often in a deliberate sequence that matches their stage of growth.

Channel 1: API Ecosystems — Becoming Infrastructure

The most powerful distribution strategy available to a generative-AI company is to become infrastructure. When your model or capability is accessible via a clean, well-documented API, you are not just selling a product — you are embedding yourself into the workflows of every developer who builds on top of you. Each downstream application becomes a distribution node.

OpenAI is the canonical example. By releasing the GPT API and making it trivially easy to integrate language model capabilities into third-party applications, OpenAI effectively turned thousands of independent developers into its distribution force. Every app that says “powered by GPT-4” is a marketing touchpoint. Every developer who integrates the API becomes a customer with switching costs. The API-first strategy transformed OpenAI from a research lab into the infrastructure layer of an entire industry.

The strategic logic of API distribution rests on several compounding advantages. First, it creates network effects on the supply side: the more developers build on your API, the more use cases are discovered, the more feedback you receive, and the more your model improves. Second, it creates switching costs: once a developer has built their application around your API’s specific behavior, response format, and pricing model, migrating to a competitor is expensive. Third, it generates revenue at scale without proportional sales effort: API usage is metered, meaning revenue grows with consumption rather than requiring a new sales motion for each customer.

For startups, the API distribution playbook has several practical components. Documentation quality is a distribution lever. Developers choose APIs based on how quickly they can get to a working prototype. Stripe’s legendary developer experience — clear docs, excellent error messages, a sandbox environment — is a significant reason it dominates payment processing. AI API providers that invest in developer experience compound their distribution advantage over time.

Pricing architecture matters enormously. A generous free tier is not charity; it is a customer acquisition strategy. Developers who build on a free tier become advocates, build dependencies, and eventually convert to paid plans as their applications scale. The token-based pricing model pioneered by OpenAI has become the industry standard, but startups can differentiate by offering more predictable pricing, better rate limits, or specialized capabilities at competitive price points.
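To make the free-tier logic concrete, here is a minimal sketch of metered, token-based billing with a monthly free allowance. The plan values (a 1,000,000-token allowance and $0.002 per 1,000 tokens) and the names `PricingPlan` and `monthly_bill` are illustrative assumptions, not any provider's actual pricing or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PricingPlan:
    # Both values are hypothetical, chosen only for illustration.
    free_tokens_per_month: int
    usd_per_1k_tokens: float


def monthly_bill(tokens_used: int, plan: PricingPlan) -> float:
    """Return the metered charge in USD after the free allowance is applied."""
    if tokens_used < 0:
        raise ValueError("tokens_used must be non-negative")
    billable_tokens = max(0, tokens_used - plan.free_tokens_per_month)
    return round(billable_tokens / 1000 * plan.usd_per_1k_tokens, 2)


plan = PricingPlan(free_tokens_per_month=1_000_000, usd_per_1k_tokens=0.002)

prototype_bill = monthly_bill(500_000, plan)     # usage still inside the free tier
production_bill = monthly_bill(6_000_000, plan)  # 5,000,000 billable tokens
print(prototype_bill, production_bill)
```

Under these assumed numbers a prototype costs nothing, and a bill appears only once the application scales, which is the conversion path described above.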

Ecosystem partnerships amplify API reach. When Microsoft integrated OpenAI’s models into Azure, it instantly gave OpenAI access to Microsoft’s enterprise customer base, a distribution channel that would have taken years to build independently. Similarly, when Anthropic made its models available through Amazon Bedrock on AWS and through Google Cloud, it gained distribution through two of the world’s largest cloud platforms without building its own enterprise sales infrastructure from scratch.

The risk of API-first distribution is dependency on platform gatekeepers. If your primary distribution channel is another company’s marketplace or cloud platform, you are subject to their pricing changes, policy decisions, and competitive interests. The mitigation is to build direct relationships with developers in parallel — through documentation, community forums, developer events, and content — so that your brand has independent value beyond any single platform.

Channel 2: SaaS Platform Integrations — Riding Existing Workflows

If API distribution is about becoming infrastructure, SaaS platform integration is about becoming a feature of someone else’s infrastructure. The strategic insight here is that users do not want to leave their existing workflows to use a new AI tool. They want AI capabilities to appear inside the tools they already use every day.

According to BetterCloud’s 2026 SaaS industry analysis, the share of organizations using generative AI in at least one function jumped to 71% in 2024, and to 78% when all analytical AI is included. This adoption is happening primarily through embedded AI features in existing SaaS platforms, not through standalone AI applications. The implication for AI startups is clear: if you can get your capability embedded in a platform that already has millions of users, you inherit their distribution.

The mechanics of SaaS integration distribution vary by approach. Native integrations — where your AI capability appears as a built-in feature of a major platform — are the most powerful but hardest to achieve. They typically require a formal partnership with the platform vendor, revenue sharing arrangements, and significant engineering investment on both sides.

Jasper’s integration with HubSpot, which allows marketers to generate AI-written content directly within HubSpot’s CRM, is an example of this approach. The integration gives Jasper access to HubSpot’s marketing customer base without requiring those customers to adopt a new tool.

Plugin and extension ecosystems offer a lower-barrier entry point. Platforms like Notion, Slack, Salesforce, and Microsoft 365 all have app marketplaces where third-party developers can publish integrations. An AI startup that builds a high-quality plugin for one of these platforms can access millions of potential users through the platform’s own discovery mechanisms. The challenge is discoverability within the marketplace itself — which brings us to the next channel.

Workflow automation platforms such as Zapier, Make (formerly Integromat), and n8n represent a particularly interesting distribution vector for AI startups. These platforms let non-technical users connect AI capabilities to their existing tools through a visual interface, with no need to understand APIs or write code. An AI startup that publishes a Zapier integration can suddenly be accessible to the millions of small businesses and solopreneurs who use Zapier to automate their workflows.

The key metric for SaaS integration distribution is activation rate within the host platform. It is not enough to be listed in a marketplace; users need to actually install and use your integration. This requires investing in the integration’s user experience, writing clear documentation targeted at the host platform’s user base, and often running co-marketing campaigns with the platform vendor to drive awareness.

Cyclr’s 2026 SaaS predictions highlight the emergence of the Model Context Protocol (MCP) as a new integration standard that will make it easier for AI capabilities to be embedded across multiple platforms simultaneously. As MCP becomes standard infrastructure, AI startups that adopt it early will gain distribution advantages similar to those that early REST API adopters enjoyed in the previous decade.

Channel 3: Marketplaces — Leveraging Platform Discovery

Marketplaces are a distinct distribution channel from SaaS integrations, though they overlap. Where SaaS integrations embed your capability inside another product, marketplaces are discovery platforms where users actively search for AI tools to solve specific problems. The most important AI marketplaces today include the GPT Store (OpenAI’s marketplace for custom GPTs), Hugging Face Spaces, AWS Marketplace, Google Cloud Marketplace, and the Apple App Store and Google Play Store for consumer-facing AI applications.

The GPT Store, launched by OpenAI in early 2024, represents a fascinating experiment in AI marketplace distribution. By allowing developers to publish custom GPT configurations — essentially AI assistants tuned for specific use cases — OpenAI created a discovery layer that benefits both users (who can find specialized AI tools) and developers (who can reach OpenAI’s massive user base). The strategic parallel is to the early App Store: a platform with enormous user traffic creates a marketplace, and the first movers who build high-quality applications in underserved categories capture disproportionate distribution.

The challenge with marketplace distribution is discoverability within the marketplace itself. As any App Store developer knows, being listed is not the same as being found. Marketplace SEO — optimizing your listing title, description, and category tags for the search terms your target users are likely to use — is a genuine skill. User reviews and ratings function as social proof that drives organic discovery. And featured placement, which platform operators typically award to high-quality or strategically important applications, can generate enormous traffic spikes.

Hugging Face Spaces deserves special attention as a marketplace for AI demos and applications. With Hugging Face growing to 13 million users and over 2 million public models by 2025, Spaces has become one of the most important discovery platforms in the AI ecosystem. A well-built Space — an interactive demo of your model or application — can generate thousands of organic users who discover it through Hugging Face’s trending pages, search, and community sharing. For AI startups targeting developer and researcher audiences, a high-quality Hugging Face Space is often more valuable than a paid advertising campaign.

The marketplace distribution playbook for AI startups involves several tactical elements. Launch timing matters: being among the first high-quality applications in a new marketplace category captures the organic traffic that flows to early movers before competition intensifies. Review velocity matters: actively encouraging satisfied users to leave reviews in the early days of a marketplace listing creates social proof that compounds over time. Cross-marketplace presence matters: being listed on multiple relevant marketplaces — AWS, Google Cloud, Azure, and domain-specific platforms — multiplies your surface area for discovery.

Channel 4: Enterprise Partnerships — The High-Touch, High-Value Channel

Enterprise partnerships are the highest-friction, highest-value distribution channel available to AI startups. They require significant investment in sales, legal, and customer success infrastructure, but they deliver something that no other channel can: large, committed revenue contracts with organizations that have the budget, the use cases, and the organizational influence to drive deep adoption.

The enterprise AI market is enormous and growing rapidly. Gartner predicts that by 2028, 33% of enterprise software applications will include agentic AI, up from less than 1% in 2024. Enterprise organizations are actively seeking AI partners, which means the demand side of the market is strong. The challenge for AI startups is navigating the enterprise sales cycle — which is long, complex, and requires demonstrating security, compliance, and reliability standards that consumer-focused products often lack.

The most effective enterprise partnership strategies for AI startups follow a recognizable pattern. Start with a lighthouse customer: identify one or two large, well-known organizations in your target vertical that are willing to be early adopters. Invest heavily in making them successful, even if the economics of the initial contract are unfavorable. The case study, the logo, and the reference call that a successful lighthouse customer provides are worth far more than the contract value itself — they are the social proof that unlocks the next ten enterprise deals.

System integrator partnerships are a force multiplier for enterprise distribution. Companies like Accenture, Deloitte, IBM, and Infosys have relationships with virtually every large enterprise on the planet. When a system integrator includes your AI capability in their standard implementation stack for a particular industry or use case, you gain access to their entire client base without building your own enterprise sales force.

Salesforce’s Agentforce platform, which organizations like FedEx, Accenture, and IBM have begun utilizing, illustrates how platform vendors can leverage system integrator relationships to accelerate enterprise adoption.

Vertical specialization is often the key to breaking into enterprise markets. A horizontal AI capability — “we do natural language processing” — is hard to sell to an enterprise buyer who needs to justify the purchase to a CFO. A vertical solution — “we reduce insurance claims processing time by 40%” — is much easier to sell because it maps directly to a measurable business outcome.

AI startups that invest in building vertical-specific features, compliance certifications (HIPAA for healthcare, SOC 2 for enterprise generally, FedRAMP for government), and industry-specific case studies dramatically accelerate their enterprise sales cycles.

Pricing architecture for enterprise requires careful thought. Enterprise buyers expect annual contracts, volume discounts, and predictable pricing — not the token-based consumption pricing that works well for developers. Many successful AI startups maintain two separate pricing tracks: a self-serve, consumption-based track for developers and small businesses, and a negotiated, contract-based track for enterprise customers. The enterprise track typically includes dedicated support, SLA guarantees, custom security configurations, and often a professional services component.

Case Study: Hugging Face’s Community-First Distribution

No discussion of generative-AI distribution would be complete without examining Hugging Face, which has built what is arguably the most successful community-driven distribution strategy in the history of AI.

Hugging Face’s approach is a masterclass in what might be called ecosystem-as-distribution. Rather than building a single product and selling it, Hugging Face built a platform where the AI community could share models, datasets, and applications — and then monetized the infrastructure that the community needed to do so at scale. The result is a flywheel: more community activity attracts more users, which attracts more organizations, which generates more revenue, which funds more platform development, which attracts more community activity.

The numbers are staggering. By 2025, Hugging Face had grown to 13 million users, more than 2 million public models, and over 500,000 public datasets. Over 30% of Fortune 500 companies now maintain verified accounts on the platform. NVIDIA has emerged as the strongest corporate contributor. The platform has become so central to the AI ecosystem that Decrypt named it its 2024 Project of the Year, describing it at the time as “a massive library of over one million AI models, 190,000 datasets, and 55,000 demo apps.”

What makes Hugging Face’s distribution strategy instructive for other AI startups is its deliberate sequencing. The company began by building genuinely useful open-source tools — the Transformers library, which now has over 158,000 GitHub stars, became the standard way to work with pre-trained language models. By solving a real problem for the developer community and releasing the solution as open source, Hugging Face built trust and brand recognition before it had a commercial product to sell. The community came for the tools and stayed for the platform.

The monetization layer — enterprise subscriptions, inference endpoints, and compute services — was layered on top of a community that already trusted and depended on Hugging Face’s infrastructure. Enterprise customers did not need to be convinced that Hugging Face was valuable; they were already using it. The sales motion became a matter of upgrading existing users to paid tiers rather than acquiring new customers from scratch.

The lesson for AI startups is not necessarily to replicate Hugging Face’s specific model — building a community platform is a long-term, capital-intensive strategy that requires patience and a genuine commitment to the community’s interests. The lesson is the underlying principle: distribution that is built on genuine value creation for a community is more durable and more defensible than distribution that is built on paid acquisition or platform dependency.

Channel 5: Open-Source Communities — Building Trust at Scale

Open source is both a distribution channel and a philosophy, and the most successful AI companies have learned to treat it as both. The strategic logic of open-source distribution is straightforward: by releasing code, models, or tools as open source, you dramatically lower the barrier to adoption, build trust through transparency, and create a community of contributors who extend your product’s capabilities and advocate for it within their networks.

Meta’s release of the Llama family of models is the most prominent recent example of open-source distribution strategy in generative AI. By releasing competitive open-weight models, Meta achieved several distribution objectives simultaneously. It positioned Meta as a contributor to the AI ecosystem rather than a pure competitor, which improved its relationships with researchers and developers. It created a massive installed base of users who built applications on Llama, generating feedback, fine-tuned variants, and use cases that Meta’s internal teams could not have discovered independently. And it established Llama as a reference point in the industry, meaning that every comparison of open-weight models includes Llama, a form of brand presence that no advertising budget could purchase.

Hugging Face’s Spring 2026 state of open source report documents the extraordinary growth of open-source AI participation: “Industry’s share of overall development fell from around 70% before 2022 to roughly 37% in 2025. Meanwhile, independent or unaffiliated developers rose from 17% to 39% of all downloads over the same period.” This shift means that open-source communities are increasingly the primary distribution channel for AI capabilities — not corporate sales teams.

For AI startups, the open-source distribution playbook involves several strategic choices. What to open-source and what to keep proprietary is the central question. The most common approach is to open-source the model weights or the core library while keeping the fine-tuning data, the inference infrastructure, or the application layer proprietary. This gives the community enough to build on and advocate for, while preserving a commercial moat.

Community infrastructure investment is non-negotiable for open-source distribution to work. A GitHub repository with poor documentation, slow issue response times, and no clear contribution guidelines will not build a community. The projects that generate genuine community distribution — Hugging Face’s Transformers, LangChain, LlamaIndex — invest heavily in documentation, tutorials, Discord servers, and responsive maintainers who treat community members as partners rather than users.

The derivative model phenomenon is a particularly interesting aspect of open-source AI distribution. Alibaba’s Qwen family has generated over 200,000 derivative models on Hugging Face — fine-tuned variants, quantized versions, and specialized adaptations created by the community. Each derivative model is a distribution node that carries the Qwen brand into new use cases and communities. This is a form of distribution that no marketing budget can replicate; it emerges organically from the quality and accessibility of the base model.

Open-source as enterprise sales enablement is a pattern that deserves more attention. Many enterprise buyers are deeply skeptical of AI vendors whose models are black boxes. Open-source models allow enterprise security teams to audit the model’s behavior, compliance teams to understand its training data, and engineering teams to customize it for specific use cases. For AI startups targeting regulated industries — healthcare, finance, government — open-sourcing at least part of the stack can be a significant competitive advantage in enterprise sales conversations.

Case Study: Runway’s Creative Community and Influencer Strategy

If Hugging Face represents the developer-community approach to AI distribution, Runway represents the creative-community approach. Runway’s generative video platform has grown from a niche tool used by experimental filmmakers into a platform used by Netflix, Lionsgate, CBS, and Under Armour — and the distribution strategy that drove that growth is a sophisticated blend of influencer marketing, community building, and enterprise partnership.

Runway’s Creative Partners Program is the centerpiece of its influencer distribution strategy. The program provides a select group of artists, filmmakers, and creators with complimentary Unlimited plan access, early access to new features, and dedicated support in exchange for creating content that showcases what Runway can do. This is not traditional influencer marketing, where a brand pays a creator to post a sponsored video. It is a deeper, more authentic form of partnership in which creators become credible advocates because they have a real stake in the platform’s success.

The strategic insight behind Runway’s approach is that creative professionals are the most credible advocates for creative tools. When a respected filmmaker or visual artist shares work they created with Runway, the implicit message is not “this company paid me to say this is good.” The message is “this tool enabled me to create something I could not have created otherwise.” That is a fundamentally different form of social proof, and it is far more persuasive to the target audience of other creative professionals.

Netflix has reported 90% faster VFX production and 10x cost reductions using Runway. Lionsgate partnered with Runway in 2024 to integrate AI into film production. The Late Show with Stephen Colbert reduced editing tasks from hours to minutes. These enterprise case studies did not emerge from a traditional enterprise sales motion; they emerged from a creative community that was already using Runway and advocating for it within their professional networks. The enterprise partnerships followed the community adoption, not the other way around.

Runway has also invested in community infrastructure beyond the Creative Partners Program. The Gen:48 film festival — a competition where filmmakers create short films using Runway’s tools — generates enormous amounts of user-created content that showcases the platform’s capabilities. The Runway Academy provides educational resources that lower the barrier to adoption for new users. These initiatives create a flywheel: more creators learn Runway, create impressive work, share it publicly, attract more creators, and generate the kind of organic social proof that drives both consumer adoption and enterprise interest.

The influencer marketing dimension of Runway’s strategy extends beyond its formal Creative Partners Program. The platform has benefited enormously from organic advocacy by creators on YouTube, TikTok, and Instagram who discovered Runway independently and shared tutorials, experiments, and finished work with their audiences. This organic influencer activity is not accidental; it is the result of building a product that genuinely enables creative work that was previously impossible or prohibitively expensive, and making it accessible enough that creators want to share their discoveries.

Channel 6: Influencer Marketing — The Authenticity Imperative

Influencer marketing for generative-AI applications is a distinct discipline from traditional influencer marketing, and it requires a different strategic framework. The core challenge is that AI tools are complex, and the value proposition is often not immediately obvious from a short social media post. The most effective AI influencer campaigns are educational rather than promotional — they show, in detail, what the tool can do and how it changes the creator’s workflow.

The influencer marketing industry is projected to reach $32.55 billion in 2025, up from $24 billion in 2024. But the dynamics of effective influencer marketing are shifting in ways that are particularly relevant for AI companies. According to Popular Pays’ 2025 analysis, micro-influencers — creators with smaller but highly engaged audiences — deliver average engagement rates of 4%, compared to 1.3% for macro-influencers. For AI tools, which require a certain level of technical sophistication to appreciate, micro-influencers who speak to specific professional communities are often more effective than broad-reach celebrities.

The most effective influencer distribution strategies for AI applications share several characteristics. Tutorial content outperforms promotional content. A creator who spends 15 minutes walking their audience through how they used an AI tool to solve a specific problem generates far more qualified interest than a creator who posts a 30-second sponsored clip saying the tool is great. The tutorial format works because it demonstrates value concretely, addresses the audience’s skepticism about AI capabilities, and gives viewers enough information to decide whether the tool is relevant to their own work.

Niche community targeting is more efficient than broad reach. An AI writing tool that partners with 50 micro-influencers who speak to specific professional communities — legal writers, technical documentation specialists, marketing copywriters — will generate more qualified users than a single partnership with a general-audience creator with 10 million followers. The niche audience is already primed to care about the specific problem the tool solves.
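The comparison above can be checked with a little arithmetic. The sketch below uses the engagement rates quoted earlier from Popular Pays (4% for micro-influencers, 1.3% for macro-influencers) and the 50-creator and 10-million-follower figures from this paragraph; the function name is hypothetical. It asks how large each micro-influencer's audience would need to be for the 50 partnerships to match the single macro partnership on raw engagements alone. Raw engagement is only a proxy here, since the argument is about how qualified the audience is, not how large.

```python
def break_even_micro_audience(macro_followers: int, macro_rate: float,
                              micro_count: int, micro_rate: float) -> int:
    """Followers each micro-influencer needs so that the group's expected
    engagements equal those of one macro-influencer."""
    if micro_count <= 0 or micro_rate <= 0:
        raise ValueError("micro_count and micro_rate must be positive")
    macro_engagements = macro_followers * macro_rate
    return round(macro_engagements / (micro_count * micro_rate))


followers_needed = break_even_micro_audience(
    macro_followers=10_000_000,  # general-audience creator from the text
    macro_rate=0.013,            # 1.3% engagement, as cited above
    micro_count=50,
    micro_rate=0.04,             # 4% engagement, as cited above
)
print(followers_needed)  # 65000
```

On these figures, 50 micro-influencers with about 65,000 followers each would match the macro partnership on engagement volume, before accounting for the niche audience's higher relevance.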

Long-term partnerships outperform one-off campaigns. Popular Pays notes that 2025 is seeing more equity partnerships, creative director positions, and long-term strategic alignments between brands and creators. For AI tools, this makes particular sense: a creator who uses a tool consistently over months develops genuine expertise and authentic enthusiasm that is impossible to fake in a one-off sponsored post. Long-term partnerships also allow the creator to showcase the tool’s evolution as new features are released, creating a narrative of continuous improvement that builds audience trust.

Community platforms are increasingly important. As Popular Pays observes, 2024 saw a surge in community-focused platforms like Substack and Discord, where creators build dedicated spaces for their most engaged followers. For AI companies, these community platforms offer a particularly valuable distribution opportunity: a creator who discusses an AI tool in their Discord server or Substack newsletter is speaking to an audience that has self-selected for high engagement and trust. The conversion rates from these community channels often far exceed those from traditional social media posts.

AI-powered influencer discovery is itself becoming a distribution lever. IndaHash’s 2025 influencer marketing playbook describes how AI tools can now scan millions of creator profiles to identify those whose audience demographics, engagement patterns, and content themes align with a brand’s target customer. For AI companies, this means being able to identify the specific creators whose audiences are most likely to be interested in their tools — and approaching those creators with partnership proposals that are genuinely relevant to their content.

Building a Multi-Channel Distribution Stack

The most sophisticated AI companies do not choose a single distribution channel; they build a stack of channels that reinforce each other. Understanding how these channels interact is as important as understanding each channel individually.

A typical distribution stack for a generative-AI startup might look like this: Open source builds trust and community → Community generates organic influencer advocacy → Influencer advocacy drives consumer adoption → Consumer adoption generates enterprise inbound → Enterprise partnerships fund API infrastructure → API infrastructure enables SaaS integrations → SaaS integrations drive marketplace listings → Marketplace listings generate more community members.

The sequencing matters. Most AI startups should not attempt to pursue all six channels simultaneously from day one. The resource requirements are too high, and the channels require different organizational capabilities. A more practical approach is to identify the one or two channels that are most aligned with your target customer and your team’s strengths, execute them exceptionally well, and then layer in additional channels as you scale.

For developer-focused AI tools, the natural starting sequence is API ecosystem → open-source community → marketplace listings. Build a clean API, release supporting tools as open source, publish on relevant marketplaces, and let the developer community do the distribution work.

For creative professional tools, the natural starting sequence is influencer marketing → community building → enterprise partnerships. Find the creators who are most likely to love your tool, give them early access and support, let their advocacy build your community, and use the community’s work as social proof for enterprise conversations.

For enterprise-focused AI tools, the natural starting sequence is lighthouse customer → system integrator partnerships → SaaS platform integrations. Land one or two marquee customers, build relationships with the system integrators who serve your target vertical, and get embedded in the SaaS platforms your enterprise customers already use.

Gartner predicts that by 2026 more than 80% of enterprises will have used generative AI APIs or models, or deployed GenAI-enabled applications in production, up from less than 5% in 2023. The enterprise distribution opportunity is therefore enormous, but so is the competition. The AI startups that will capture disproportionate enterprise market share are those that have built distribution infrastructure (community trust, platform integrations, system integrator relationships) before the enterprise buying wave fully crests.

The Measurement Framework: What to Track Across Channels

Distribution strategy without measurement is guesswork. Each channel requires a different set of metrics, and the most important metrics are often not the most obvious ones.

For API ecosystems, the key metrics are developer activation rate (the percentage of API sign-ups that make their first successful API call within 24 hours of signing up), API call volume growth (week-over-week), and application-to-production rate (the percentage of developers who build a prototype and then deploy it to production). These metrics tell you whether your API is genuinely useful and whether your developer experience is removing friction from the adoption journey.
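
As a rough illustration, the first two API metrics can be computed from sign-up and first-call timestamps plus weekly call totals. The sketch below is a minimal example, not a reference implementation: the function names, the dictionary-based input shape, and the sample data are all assumptions made for illustration.

```python
from datetime import datetime, timedelta


def activation_rate(signups, first_calls, window=timedelta(hours=24)):
    """Share of sign-ups whose first successful API call fell within `window`.

    signups:     dict of developer id -> sign-up time (hypothetical input shape)
    first_calls: dict of developer id -> time of first successful call
    """
    if not signups:
        return 0.0
    activated = sum(
        1
        for dev, signed_up in signups.items()
        if dev in first_calls
        and timedelta(0) <= first_calls[dev] - signed_up <= window
    )
    return activated / len(signups)


def week_over_week_growth(weekly_calls):
    """Fractional change between consecutive weeks; None where the prior week was 0."""
    return [
        (curr - prev) / prev if prev else None
        for prev, curr in zip(weekly_calls, weekly_calls[1:])
    ]


# Illustrative data only: four sign-ups at the same moment.
t0 = datetime(2025, 1, 6, 9, 0)
signups = {"a": t0, "b": t0, "c": t0, "d": t0}
first_calls = {
    "a": t0 + timedelta(hours=2),   # inside the 24-hour window
    "b": t0 + timedelta(hours=30),  # too late to count as activated
    "c": t0 + timedelta(hours=23),  # inside the window
}                                   # "d" never made a successful call

rate = activation_rate(signups, first_calls)
growth = week_over_week_growth([1000, 1200, 1500])
```

With this sample data, two of four developers activate in time, so `rate` is 0.5. The 24-hour window is a parameter so that teams with longer onboarding paths can adjust it.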

For SaaS platform integrations, the key metrics are integration install rate (what percentage of the host platform’s users install your integration), activation rate within the integration (what percentage of installers actually use the integration at least once), and retention rate (what percentage of users who activate the integration are still using it 30 days later). These metrics tell you whether your integration is solving a real problem for the host platform’s users.
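
The three integration metrics form a funnel in which each rate uses the previous stage as its denominator. A minimal sketch, assuming you can obtain the four counts from the host platform's analytics (the function name and sample numbers are hypothetical):

```python
def integration_funnel(platform_users, installs, activated, retained_30d):
    """Return the three funnel rates, each relative to the stage before it."""

    def ratio(numerator, denominator):
        # Guard against empty stages instead of raising ZeroDivisionError.
        return numerator / denominator if denominator else 0.0

    return {
        "install_rate": ratio(installs, platform_users),  # installers / host users
        "activation_rate": ratio(activated, installs),    # used once / installers
        "retention_30d": ratio(retained_30d, activated),  # active at day 30 / activated
    }


# Illustrative numbers only.
funnel = integration_funnel(
    platform_users=200_000, installs=4_000, activated=2_500, retained_30d=1_000
)
```

Keeping the denominators stage-relative makes it easier to see which step is losing users: a low install rate points at discovery on the host platform, while a low activation rate points at onboarding inside the integration.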

For marketplace distribution, the key metrics are organic search ranking within the marketplace, review velocity and average rating, and conversion rate from marketplace listing page to install or sign-up. These metrics tell you whether your marketplace presence is generating discovery and whether your listing is converting that discovery into users.

For enterprise partnerships, the key metrics are average contract value, sales cycle length, win rate against identified competitors, and net revenue retention (what percentage of enterprise contract value renews and expands year over year). These metrics tell you whether your enterprise distribution is generating sustainable, growing revenue.
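
Net revenue retention is the one metric here with a widely used formula. The sketch below uses a common formulation (starting recurring revenue, plus expansion, minus contraction and churn, divided by starting revenue) for a fixed cohort of customers. The article does not specify a formula, so treat this as an assumption, and note that the parameter names and figures are illustrative.

```python
def net_revenue_retention(starting_arr, expansion, contraction, churned):
    """NRR for a fixed customer cohort over one period.

    All arguments are annual recurring revenue amounts for customers that
    existed at the start of the period; revenue from new customers is excluded.
    """
    if starting_arr <= 0:
        raise ValueError("starting_arr must be positive")
    return (starting_arr + expansion - contraction - churned) / starting_arr


# Illustrative figures only.
nrr = net_revenue_retention(
    starting_arr=1_000_000, expansion=250_000, contraction=50_000, churned=100_000
)
print(f"NRR: {nrr:.0%}")
```

A value above 100% means expansion from existing customers more than offsets downgrades and cancellations.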

For open-source community distribution, the key metrics are GitHub stars and forks, contributor count and contribution velocity, derivative model or application count (for model releases), and community forum activity. These metrics tell you whether your open-source release is generating genuine community engagement rather than passive downloads.

For influencer marketing, the key metrics are not follower count or impressions — they are qualified sign-up rate from influencer-specific tracking links, activation rate of influencer-referred users, and long-term retention of influencer-referred users compared to other acquisition channels. These metrics tell you whether your influencer partnerships are generating users who actually find value in your product.
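
One way to make this comparison concrete is to compute the same rates for the influencer-referred cohort and for a baseline cohort from other channels, then look at the ratio. This is a minimal sketch: the `Cohort` class, the `lift` helper, and the sample counts are hypothetical, and the length of the long-term retention window is left to the reader because the article does not define it.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    signups: int             # sign-ups attributed via tracking links
    activated: int           # sign-ups that reached first meaningful use
    retained_long_term: int  # activated users still active after your chosen window

    @property
    def activation_rate(self) -> float:
        return self.activated / self.signups if self.signups else 0.0

    @property
    def retention_rate(self) -> float:
        return self.retained_long_term / self.activated if self.activated else 0.0


def lift(channel: Cohort, baseline: Cohort) -> dict:
    """Ratio of channel rates to baseline rates; values above 1.0 favor the channel."""

    def ratio(a, b):
        return a / b if b else None

    return {
        "activation_lift": ratio(channel.activation_rate, baseline.activation_rate),
        "retention_lift": ratio(channel.retention_rate, baseline.retention_rate),
    }


# Illustrative counts only.
influencer = Cohort(signups=500, activated=300, retained_long_term=150)
other_channels = Cohort(signups=2_000, activated=800, retained_long_term=320)
result = lift(influencer, other_channels)
```

In this made-up example the influencer cohort activates and retains at higher rates than the baseline, which is the signal the paragraph above says to look for, independent of follower counts or impressions.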

The Competitive Moat: Why Distribution Compounds

The final and perhaps most important insight about AI distribution is that it compounds. Each user acquired through any channel is a potential advocate who generates organic distribution. Each integration built is a switching cost that retains existing users and attracts new ones. Each community member is a potential contributor who extends your product’s capabilities. Each enterprise customer is a reference that unlocks the next enterprise customer.

This compounding dynamic means that distribution advantages, once established, are extremely difficult for competitors to overcome — even if those competitors have technically superior models. As the Founders Forum Group’s AI statistics report notes, “the competitive landscape is seeing an evolving dynamic between open vs closed models, startups vs incumbents, and horizontal vs vertical AI.” In each of these competitive dimensions, distribution infrastructure is often the decisive variable.

The generative-AI market is still early enough that distribution advantages are not yet fully locked in. The companies that invest in building multi-channel distribution infrastructure today — API ecosystems, SaaS integrations, marketplace presence, enterprise partnerships, open-source communities, and influencer networks — are building moats that will be extremely difficult to breach as the market matures.

The lesson from Hugging Face, Runway, OpenAI, and the other generative-AI distribution leaders is consistent: the best model does not always win. The best-distributed model does. In a market where the underlying technology is rapidly commoditizing, distribution is not just a go-to-market function — it is the product itself.