The AI video generation landscape has transformed from a novelty into a production-grade toolset. Here’s everything you need to know about the models reshaping filmmaking, advertising, and content creation — including which ones are worth your money right now.

In early 2024, AI-generated video was a curiosity: six seconds of wobbly physics, nightmare hands, and faces that melted between frames. Two years later, we live in a fundamentally different world. The leading models now produce native 4K output with synchronized dialogue, simulate real-world physics with startling accuracy, and maintain character consistency across multi-shot sequences. Some can build two-minute narrative sequences by extending and chaining shorter clips.

The shift hasn’t just been technical. It’s been economic. A 10-second clip that would have required a camera crew, lighting rig, and a day of post-production can now be generated for less than a dollar in a matter of minutes. Entire advertising campaigns are being prototyped, and increasingly shipped, using AI-generated footage. Independent filmmakers are using these tools to create proof-of-concept reels that would have required six-figure budgets just 18 months ago.

But with at least a dozen serious contenders now vying for dominance, choosing the right tool has become its own challenge. Each model has distinct strengths, limitations, pricing structures, and philosophical approaches to what “AI video” should be. Some prioritize raw visual fidelity. Others optimize for control, speed, or accessibility. A few are open-source, inviting the community to build on top of them. At least one major player has already shut down entirely.

This guide covers the ten most significant AI video generation models available in 2026 — the top five in depth, followed by five honorable mentions that serve important niches. It’s based on hands-on testing, published benchmarks, official documentation, and the experiences of professional creators who use these tools daily.

A note on methodology: the AI video space moves fast. Benchmarks shift monthly. Pricing changes without warning. Where I’m uncertain about a specific claim, I’ll say so explicitly and note my confidence level. Every technical specification cited here has been cross-referenced against official documentation or multiple independent sources as of mid-April 2026.

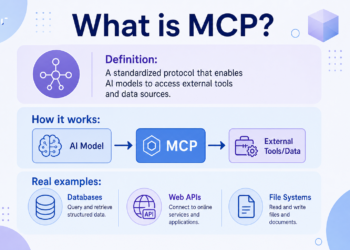

Before diving into the rankings, it’s worth understanding how these models actually work. Nearly all current AI video generators are built on diffusion transformer architectures — neural networks that learn to reverse a noise-addition process, gradually refining random static into coherent video frames.
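For readers who want the math behind "reversing a noise-addition process," the textbook denoising diffusion (DDPM) formulation is sketched below. It is shown for illustration only: individual video models may use related objectives such as flow matching, and none of the vendors in this guide is confirmed to train with exactly this loss. Here $x_0$ stands for a clean (latent) video, $\beta_s$ is a noise schedule, and $c$ is the conditioning (text prompt, reference images, and so on).

```latex
% Forward (noising) process: blend the clean sample x_0 with Gaussian noise
q(x_t \mid x_0) = \mathcal{N}\!\left(x_t;\ \sqrt{\bar\alpha_t}\,x_0,\ (1-\bar\alpha_t)\,I\right),
\qquad \bar\alpha_t = \prod_{s=1}^{t} (1-\beta_s)

% Training objective: the network \epsilon_\theta learns to predict the added noise
\mathcal{L}(\theta) = \mathbb{E}_{x_0,\ \epsilon \sim \mathcal{N}(0, I),\ t}
\left\lVert \epsilon - \epsilon_\theta\!\left(\sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon,\ t,\ c\right) \right\rVert^2
```

At generation time the model starts from pure noise and applies $\epsilon_\theta$ step by step to walk back toward a clean sample; in a diffusion transformer, $\epsilon_\theta$ is a transformer operating on patches of the video latent.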

The key differences lie in how they handle temporal coherence (keeping things consistent across frames), how they integrate audio, how much control they give users over the generation process, and how efficiently they use compute. These architectural choices produce dramatically different user experiences, even when the underlying mathematics is similar.

The Top Five

1. Seedance 2.0 (ByteDance)

Resolution: Up to 2K native (4K with upscaling) · Up to 15 seconds per clip

Price: ~$0.60 per 10-second clip · Subscription plans from ~$14/month

Best for: Advertising, music videos, multi-shot storytelling, template-based production

If you’ve spent any time on social media in 2026, you’ve seen Seedance 2.0 output — even if you didn’t realize it. ByteDance’s second-generation video model, released in February 2026, has rapidly become the default choice for commercial video production, and the benchmarks explain why: it currently holds the #1 Elo rating on the Artificial Analysis Video Arena for both text-to-video (1,269 Elo) and image-to-video (1,351 Elo), outpacing every other model tested.

The headline feature is its unified multimodal architecture. Where most video generators accept a text prompt and maybe a reference image, Seedance 2.0 accepts up to 9 reference images, 3 video clips, and 3 audio clips simultaneously — all fed into a single generation pass. This “@ Reference System,” as ByteDance calls it, means you can hand the model a storyboard, a voiceover track, a brand guideline image, and a reference video for camera movement, then receive a cohesive clip that synthesizes all of those inputs. For production teams accustomed to treating AI video as a one-prompt-at-a-time tool, this multi-input workflow is transformative.
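To make the input limits concrete, here is a minimal client-side sketch that checks a request against the per-type caps quoted above (9 images, 3 videos, 3 audio clips). The `ReferenceBundle` class, its field names, and the file names are hypothetical illustrations, not ByteDance's API or request format.

```python
from dataclasses import dataclass, field

# Per-request reference limits as described for Seedance 2.0 in this guide.
MAX_REFERENCES = {"images": 9, "videos": 3, "audio": 3}


@dataclass
class ReferenceBundle:
    """Hypothetical container for a multi-reference request.

    Class and field names are illustrative only; this is not ByteDance's API.
    """
    prompt: str
    images: list[str] = field(default_factory=list)
    videos: list[str] = field(default_factory=list)
    audio: list[str] = field(default_factory=list)

    def validate(self) -> None:
        """Raise ValueError if any input type exceeds its per-request limit."""
        for kind, limit in MAX_REFERENCES.items():
            count = len(getattr(self, kind))
            if count > limit:
                raise ValueError(f"{count} {kind} references exceed the limit of {limit}")


bundle = ReferenceBundle(
    prompt="Product spot following the storyboard, camera motion from the reference clip",
    images=["storyboard.png", "brand_guidelines.png"],
    videos=["camera_move_reference.mp4"],
    audio=["voiceover.wav"],
)
bundle.validate()  # passes: 2 images, 1 video, 1 audio clip
```

Checking limits before upload is a cheap way to avoid a failed (and possibly billed) generation when assembling references programmatically.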

Audio generation is native and genuinely impressive. Seedance 2.0 generates dialogue with phoneme-level lip-sync accuracy across eight or more languages, alongside ambient sound effects and background audio — all created jointly with the video rather than layered on afterward. The practical difference is enormous: you don’t need to fix mismatched lip movements in post-production because the audio and video were never separate to begin with.

The director-level control system deserves special mention. You can specify dolly zooms, rack focuses, tracking shots, and POV switches directly in your prompt, and the model handles them with surprising fluency. The keyword “lens switch” triggers a cut within a multi-shot generation, enabling you to produce edited sequences — not just single clips — from a single prompt.

Where it falls short: Generation speed. A 10-second clip typically takes 5–10 minutes, which is significantly slower than some competitors. Content moderation is also aggressive — ByteDance restricts realistic human imagery heavily, which limits certain commercial applications. And the copyright controversy that erupted shortly after launch, when users generated clips featuring recognizable actors and copyrighted characters, remains unresolved. The MPA, Disney, and Paramount Skydance have all publicly denounced the model for alleged infringement.

There’s also the platform dependency question. Seedance 2.0 is locked to ByteDance’s ecosystem and approved third-party platforms — there’s no self-hosted option, no open-source weights, and no support for custom model training or LoRA fine-tuning. For teams that need control over their infrastructure or want to customize the model for specific use cases, this is a meaningful limitation. You’re renting capabilities, not owning them.

Usability note: The ~80–90% usable output rate on first generation is a figure that comes from production teams working with the tool daily. In practice, this means you’ll typically get a workable clip on your first or second try — a significant improvement over the earlier generation of models where you might need five or ten attempts to get something usable. This dramatically reduces the effective cost per finished clip.
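The effect of hit rate on cost is easy to quantify. Assuming each attempt succeeds independently with the same probability p, the number of tries follows a geometric distribution with mean 1/p, so the expected cost per usable clip is the price divided by p. The sketch below uses the ~$0.60 Seedance 2.0 price and, purely for comparison, prices the older "five to ten attempts" models at that same $0.60.

```python
def expected_attempts(success_rate: float) -> float:
    """Mean number of independent tries until the first usable clip.

    With a constant per-try success probability p, the number of tries is
    geometrically distributed with mean 1 / p.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return 1 / success_rate


def cost_per_usable_clip(price_per_try: float, success_rate: float) -> float:
    """Expected spend to obtain one usable clip."""
    return price_per_try * expected_attempts(success_rate)


PRICE_PER_CLIP = 0.60  # approximate Seedance 2.0 price per 10-second clip (USD)

# 0.9 and 0.8: the usable-output rate quoted above.
# 0.2 and 0.1: the "five to ten attempts" of earlier models, same price assumed.
for rate in (0.9, 0.8, 0.2, 0.1):
    tries = expected_attempts(rate)
    cost = cost_per_usable_clip(PRICE_PER_CLIP, rate)
    print(f"{rate:.0%} usable -> {tries:.2f} tries, ${cost:.2f} per usable clip")
```

At an 80–90% hit rate the effective price stays around $0.67–$0.75 per usable clip; at the older five-to-ten-try rates, the same $0.60 list price becomes $3–$6.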

The bottom line: Seedance 2.0 leads the quality benchmarks for good reason. If you need the highest-fidelity commercial video output and can work within its content guidelines and generation time, it’s the current leader. Access it through Dreamina (Jimeng AI), CapCut, or third-party APIs like fal.

2. Veo 3.1 (Google DeepMind)

Resolution: Up to 1080p (4K at 60fps reported in some iterations) · 8-second clips

Price: ~$0.40/sec via API · Free tier via Gemini; $19.99/month for Gemini Advanced

Best for: Film production, broadcast content, high-end commercials, product videos

Veo 3.1 is Google DeepMind’s answer to the question: what if an AI video generator were built by people who actually care about cinematography? The model produces output with a distinctive film-grade quality, with lighting, texture, and depth-of-field rendering that consistently looks like it was shot on professional cinema cameras rather than generated by a neural network.

The audio generation is best-in-class. Veo 3.1 produces music, sound effects, dialogue, and ambient soundscapes that are natively synchronized to the visuals, and the quality of that audio is noticeably superior to what competing models produce. Dialogue has natural cadence and timing. Sound effects are contextually appropriate. Background music matches the emotional tone of the scene. For projects where audio quality matters — and it always matters — this is a significant differentiator.

Prompt adherence is another standout strength. Veo 3.1 understands complex cinematic language: you can request specific camera movements (time-lapse, over-the-shoulder, dolly zoom), environmental details, and emotional tones, and the model translates those instructions with high fidelity. Character consistency across clips is strong, including lip movements synced to dialogue and maintained facial identity — crucial for any narrative work.

The model is accessible through multiple touchpoints: the Artlist AI Toolkit, Google AI Studio, the Gemini API, and Google’s dedicated video creation tool, Flow. The free tier through Gemini makes it one of the most accessible high-quality options for experimentation. For developers, Veo 3.1 Lite offers a cost-effective variant optimized for high-volume applications.

Where it falls short: The 8-second maximum clip length is the most obvious limitation. While that’s sufficient for many commercial applications, it constrains narrative work and requires more stitching in post-production. Some users have also reported occasional failed generations: misread prompts, garbled speech, deformed character models, or incomplete complex scenes. And Google’s content guidelines are strict: no content depicting real individuals, and broad restrictions on violence and other sensitive material.

Pricing detail: The free tier through Gemini is genuinely useful for experimentation — you can generate video without spending anything, though with lower priority and some feature restrictions. The $19.99/month Gemini Advanced tier unlocks higher-priority processing and fuller feature access. For developers building applications, API pricing at roughly $0.40 per second of generated video is competitive with the field, and Veo 3.1 Lite offers a cheaper option for high-volume use cases where maximum quality isn’t required. The seamless integration with Google Cloud services — storage, compute, other AI APIs — creates a compelling package for teams already invested in that ecosystem.
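Because of the 8-second ceiling, longer pieces have to be assembled from several clips, which multiplies per-second API costs. A minimal estimate, assuming the approximate $0.40/second price quoted above, billing per generated second, every clip generated at the full 8 seconds, and no retries:

```python
import math

VEO_PRICE_PER_SEC = 0.40  # approximate API price quoted above (USD per second)
VEO_MAX_CLIP_SEC = 8      # maximum clip length


def veo_cost(target_seconds: float) -> tuple[int, float]:
    """Clips needed to cover target_seconds of footage, and their API cost.

    Assumes each clip is generated at the full 8 s and billed per generated
    second; retries and unused trimmed footage are not modeled.
    """
    clips = math.ceil(target_seconds / VEO_MAX_CLIP_SEC)
    cost = clips * VEO_MAX_CLIP_SEC * VEO_PRICE_PER_SEC
    return clips, cost


for target in (8, 30, 60):
    clips, cost = veo_cost(target)
    print(f"{target:>3} s of footage: {clips} clip(s), ~${cost:.2f}")
```

Under these assumptions a single clip costs about $3.20, and a stitched 60-second piece needs 8 clips for roughly $25.60 before any retries.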

Confidence note on resolution: Multiple sources describe Veo 3.1 as capable of 4K at 60fps, but the most consistently documented output specifications from Google’s own platforms cite 720p and 1080p for standard generation. The 4K capability may refer to upscaled output or specific access tiers. I’d rate my confidence at about 70% that native 4K generation is available in some form, but I’d recommend verifying this against the latest documentation on Google AI Studio before building a workflow around it.

The bottom line: If visual quality and audio fidelity are your top priorities, Veo 3.1 is arguably the best model available. The Google Cloud integration makes it particularly attractive for teams already in that ecosystem. The 8-second clip ceiling is real, but for commercials, product videos, and polished short-form content, it’s rarely a dealbreaker. Learn more at Google DeepMind.

3. Sora 2 (OpenAI)

Resolution: 1080p · Up to 25 seconds (Pro tier)

Price: Included with ChatGPT Plus ($20/month) or Pro ($200/month)

Best for: Cinematic scenes requiring physical plausibility, creative shorts

⚠️ Critical Update: Sora 2 is being discontinued. OpenAI announced on March 24, 2026 that the Sora app will shut down on April 26, 2026, with the API following on September 24, 2026. I’m including it in this guide because the API remains functional through September, because its technical achievements remain significant benchmarks for the industry, and because its failure offers important lessons about the economics of AI video.

When Sora 2 launched in September 2025, it was genuinely stunning. The physics simulation was — and remains — the best in the field. Objects fall with realistic weight. Fluids flow with proper dynamics. Multi-body collisions resolve believably. Where other models produce output that looks real but feels slightly wrong, Sora 2’s understanding of physical plausibility gave its videos a grounded quality that no competitor has fully matched.

The Disney partnership, which licensed 200+ Disney, Marvel, and Pixar characters for generation, was a bold move that demonstrated one possible future for licensed AI content. Users could generate custom scenarios featuring beloved characters with remarkable fidelity — a capability that attracted enormous public attention.

Synchronized audio generation was strong: natural dialogue matching lip movements, ambient effects, background music, and sound design for transitions, all generated in a single pass. The 25-second maximum duration for Pro users was among the longest available at launch.

So why is it shutting down? The reported reasons are instructive. The service cost an estimated $1 million per day to operate. User numbers peaked around one million before declining to fewer than 500,000. The social media app features — designed to make Sora a consumer platform rather than just a tool — attracted criticism (users called it “SlopTok”). Copyright concerns remained unresolved despite the Disney deal. And OpenAI ultimately decided to redirect resources toward core enterprise products.

Should you still use it? If you need physics-realistic video in the short term, the Sora app remains available to ChatGPT Plus and Pro subscribers until April 26, 2026, and the API until September 24, 2026, and the output quality is still excellent. But building a production workflow around a tool with a published shutdown date would be unwise. Export your content via sora.chatgpt.com/exports/me while you still can.

What the shutdown means for the industry: Sora 2’s demise isn’t just an OpenAI story — it’s a data point about the entire sector’s economics. At a million dollars per day in compute costs, roughly 500,000 active users, and a $20/month subscription that included many other features, the math never worked. Even the $200/month Pro tier couldn’t close the gap at scale. This suggests that pure consumer-subscription models for AI video may not be viable without either dramatic compute cost reductions or advertising revenue — neither of which has materialized.
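A back-of-envelope check makes the gap concrete. The sketch uses the reported figures (about $1 million per day in compute, about 500,000 active users), assumes a 30-day month, and makes two deliberately generous assumptions: every active user pays, and the entire subscription counts toward Sora rather than the rest of ChatGPT. Non-compute costs are ignored.

```python
DAILY_COMPUTE_COST = 1_000_000   # reported operating cost, USD per day
ACTIVE_USERS = 500_000           # reported user base after the decline
DAYS_PER_MONTH = 30              # simplifying assumption
PLUS_PRICE, PRO_PRICE = 20, 200  # ChatGPT subscription prices, USD per month

monthly_cost = DAILY_COMPUTE_COST * DAYS_PER_MONTH
# Generous assumption: every active user pays, and the whole subscription
# is attributed to Sora rather than to the rest of ChatGPT.
break_even_revenue_per_user = monthly_cost / ACTIVE_USERS


def pro_share_needed(target: float, plus: float = PLUS_PRICE, pro: float = PRO_PRICE) -> float:
    """Fraction of users on Pro (the rest on Plus) for the blended price to equal target."""
    return (target - plus) / (pro - plus)


print(f"Monthly compute: ${monthly_cost:,.0f}")
print(f"Break-even revenue per user: ${break_even_revenue_per_user:.2f}/month")
print(f"All-Plus revenue covers {ACTIVE_USERS * PLUS_PRICE / monthly_cost:.0%} of compute")
print(f"Pro share needed to break even: {pro_share_needed(break_even_revenue_per_user):.0%}")
```

Even under these assumptions, break-even requires about $60 per user per month, three times the Plus price; all-Plus revenue covers only a third of compute, and more than one user in five would have had to pay for Pro.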

The Disney deal, announced in December 2025, was abandoned just three months later when the shutdown was announced. This is particularly notable because it was one of the first major attempts at licensed AI video content: a potential path to legal clarity that died with the product. Whether other platforms attempt similar licensing arrangements remains to be seen.

For creators who built workflows around Sora 2, the transition is painful but manageable. The physics simulation capabilities are most closely matched by Hailuo 02 and Kling 3.0. The character consistency and prompt adherence find parallels in Veo 3.1 and Seedance 2.0. No single model replicates everything Sora 2 did, but the multi-model workflow approach (discussed later in this guide) can cover most use cases.

The bottom line: Sora 2’s technical achievements — particularly in physics simulation — set a bar that the industry is still reaching for. Its commercial failure is a cautionary tale about the economics of consumer-facing AI video: compute costs, content moderation complexity, and IP liability create a cost structure that advertising-free subscription revenue may not sustain. The physics engine, at least, lives on in OpenAI’s research.

4. Kling (Kuaishou)

Resolution: Up to 4K @ 60fps (Kling 3.0) · Up to 2 minutes with extensions (paid plans)

Price: Free tier available; paid plans from ~$6.99/month

Best for: Long-form narrative content, sequential storytelling, character-driven videos

Kuaishou’s Kling platform occupies a unique position in the market: it’s the only major AI video generator optimized for length. While competitors cap out at 8–25 seconds per generation, Kling’s paid plans let you lengthen a clip with the Extend feature in roughly 5-second increments, building sequences of 2 minutes or more (up to 3 minutes). For anyone working on narrative content, such as short films, episodic social content, or product walkthroughs, this extended duration fundamentally changes what’s possible.

The platform has evolved rapidly. Kling 2.6, released in December 2025, introduced simultaneous audio-visual generation — speech, sound effects, and ambient sounds created in a single pass alongside the video. Kling 3.0, released in February 2026, pushed the model into multi-shot cinematic territory with native 4K at 60fps, a multi-shot AI Director system, and significantly improved character consistency.

The multi-shot Director feature in Kling 3.0 is particularly noteworthy. You can structure a prompt with up to 6 individual shots, each with its own framing, action, and camera movement specifications. The model maintains character identity, prop consistency, and visual coherence across all shots — effectively producing a rough-cut edited sequence rather than a single clip. For storyboard-to-video workflows, this is powerful.

Motion control is another strong suit. Kling offers motion brushes for directing specific elements within a frame, reference video input for replicating specific motion patterns, and first/last frame interpolation. The Elements feature preserves character identity across multiple generations, which is essential for episodic or serialized content.

Where it falls short: Quality degrades noticeably with length. Kling’s own users report that consistency remains strong through about 30 seconds, with subtle degradation beginning at 30–60 seconds and noticeable morphing and physics breakdowns appearing in clips longer than one minute. The recommendation from experienced users is to keep individual shots under 30 seconds and edit them together in post — which somewhat undercuts the “2-minute generation” headline. Audio generation, while functional, doesn’t match the quality of Seedance 2.0 or Veo 3.1. And the credit-based pricing can become expensive at higher resolutions and durations.
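Following the user-reported guideline of keeping shots under about 30 seconds, a target runtime can be split into the fewest equal-length shots that stay within that limit. This is a minimal planning sketch: equal splitting is just one simple choice, and in practice you would cut on story beats rather than at fixed intervals.

```python
import math


def plan_shots(total_seconds: float, max_shot_seconds: float = 30) -> list[float]:
    """Split a target runtime into the fewest equal-length shots that each
    stay at or under max_shot_seconds."""
    if total_seconds <= 0 or max_shot_seconds <= 0:
        raise ValueError("durations must be positive")
    n_shots = math.ceil(total_seconds / max_shot_seconds)
    return [total_seconds / n_shots] * n_shots


print(plan_shots(120))  # [30.0, 30.0, 30.0, 30.0]
print(plan_shots(100))  # [25.0, 25.0, 25.0, 25.0]
```

Each planned shot is then generated separately (reusing the same character Elements for consistency) and assembled in an editor.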

Understanding the version landscape: The rapid iteration at Kuaishou can be confusing. Kling 2.6 introduced simultaneous audio-visual generation and supports Chinese and English voice synthesis. Kling 3.0, released in February 2026, brought 4K at 60fps, the multi-shot AI Director, and improved physics. As of April 2026, both versions are accessible through the platform, with Kling 3.0 available on Pro plans and above. The 2-minute generation capability applies to Kling 2.0+ with the Extend feature on paid plans — individual clips from Kling 3.0 are typically 3–15 seconds, designed to be combined through the multi-shot system. This distinction matters: you’re not getting a single two-minute coherent generation, but rather a system that can chain shorter clips into longer sequences with maintained consistency.

Pricing reality check: Kling’s credit system can be opaque. The free tier offers 66 daily credits — enough for a few draft-quality clips. The Standard plan ($6.99/month) provides 660 credits. The Pro plan ($25.99/month) gives 3,000 credits with Kling 3.0 access. A 1-minute video can cost approximately 120 credits in Standard mode or 420 credits in Professional mode, meaning heavy users will burn through even the Pro allocation quickly. Higher tiers (Premier, Ultra) exist for production-level usage.
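The credit figures above translate into minutes of output and cost per minute with simple division. This sketch assumes every generation mode is available on every plan and ignores failed or draft generations; both assumptions should be checked against Kling's current plan details.

```python
PLANS = {  # plan name: (USD per month, credits per month), as quoted above
    "Standard": (6.99, 660),
    "Pro": (25.99, 3000),
}
CREDITS_PER_MINUTE = {  # approximate credits for one minute of video
    "Standard mode": 120,
    "Professional mode": 420,
}


def minutes_per_month(credits: int, credits_per_minute: int) -> float:
    """Minutes of finished video a monthly credit allocation buys."""
    return credits / credits_per_minute


def dollars_per_minute(price: float, credits: int, credits_per_minute: int) -> float:
    """Effective subscription cost per minute of video."""
    return price / minutes_per_month(credits, credits_per_minute)


# Assumes each mode is usable on each plan; retries and drafts are not counted.
for plan, (price, credits) in PLANS.items():
    for mode, cpm in CREDITS_PER_MINUTE.items():
        mins = minutes_per_month(credits, cpm)
        per_min = dollars_per_minute(price, credits, cpm)
        print(f"{plan:<8} {mode:<17} {mins:5.1f} min/month  ${per_min:.2f}/min")
```

On the Pro plan, Professional-mode output works out to roughly seven minutes of video per month at about $3.64 per minute, which is why heavy users exhaust the allocation quickly.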

The bottom line: Kling is the right choice when you need longer sequences, multi-shot coherence, or character consistency across a series. The combination of duration, motion control, and multi-shot directing makes it uniquely suited to narrative work. Just manage expectations about quality at the longer durations — plan to edit, not to ship raw two-minute generations. Access it at klingai.com or through platforms like Artlist.

5. Runway Gen-4.5

Resolution: 720p native (up to 4K with upscaling) · 2–10 second clips

Price: From $12/month (Standard plan)

Best for: VFX workflows, iterative creative exploration, professional film integration

Runway has been in the AI video game longer than almost anyone else, and Gen-4.5, nicknamed “David” internally, represents the culmination of that experience. Released in December 2025, it debuted at the top of the Artificial Analysis Text-to-Video benchmark with 1,247 Elo points (Seedance 2.0 has since edged past it), and it earned that ranking through a distinctive combination of motion quality, temporal consistency, and creative control that no other model quite matches.

What sets Runway apart isn’t any single capability — it’s the ecosystem. Gen-4.5 is embedded in a professional creative environment that includes motion brushes for per-element control, keyframe systems for defining start and end states, video-to-video transformation, and scene consistency tools that maintain coherent visual language across multiple generations. For professional VFX artists and filmmakers, this tooling depth is the reason Runway remains the default despite newer competitors surpassing it on raw visual quality metrics.

The motion quality is genuinely impressive. Objects move with realistic weight, momentum, and force. Liquids flow with proper dynamics. Fine details remain coherent across motion and time — a persistent challenge for video generation models that Gen-4.5 handles better than most. The model also excels at stylistic range: it can produce photorealistic output, cinematic compositions, and stylized animation while maintaining internal visual consistency.

Gen-4 Turbo, a faster and cheaper variant of the earlier Gen-4 model, runs at 2× the speed at reduced cost for iterative work: useful when you’re exploring creative directions and don’t need maximum quality on every generation. This kind of tiered offering reflects Runway’s understanding that professional workflows involve a lot of experimentation before final renders.

Where it falls short: The native output resolution of 720p is a limitation — especially when competitors like Kling 3.0 and Veo 3.1 output at 1080p or higher natively. Upscaling to 4K is available but adds a step. The 10-second maximum clip length is competitive but not leading. And Runway shares some fundamental limitations with all current video generation models: occasional causality errors (effects preceding causes), object permanence failures (items disappearing between frames), and a bias toward “success” outcomes in physical simulations.

The pricing model, while reasonable at the entry level ($12/month for Standard), scales up quickly for heavy users. Professional plans with adequate credit allocations can become expensive for high-volume production.

The evolution context matters. Runway has iterated through five generations of video models since February 2023 — Gen-1 through Gen-4.5 — each representing a genuine leap in capability. Gen-1 could only apply styles to existing video. Gen-2 introduced text-to-video. Gen-3 Alpha brought 3D understanding. Gen-4 added “World Consistency” for cross-scene coherence. Gen-4.5 represents significant advances in pre-training data efficiency and post-training techniques, achieving better quality with more efficient computation. This iterative track record gives confidence that Runway will continue improving — and that investment in learning the platform pays dividends over time.

The model is developed on NVIDIA Hopper and Blackwell GPUs, optimized for high performance across the NVIDIA stack. For enterprises, API access enables integration into custom pipelines, and the output supports 24fps and 25fps at various aspect ratios including 16:9, 9:16, 1:1, 4:3, 3:4, and the ultrawide 21:9 — useful for cinematic presentations and specific platform requirements.

The bottom line: Runway Gen-4.5 is the professional’s choice — not because it produces the single best output on any one metric, but because its tooling, ecosystem, and workflow integration make it the most productive platform for iterative creative work. If you’re a VFX artist, filmmaker, or creative director who needs granular control over every element of your generated video, this is where you should be working. Start at runwayml.com.

Honorable Mentions

The five models above represent the current leaders, but the AI video landscape is broader and more diverse than any top-five list can capture. These next five serve important niches — and in some cases, they’re advancing faster than the leaders.

6. Luma Ray3 (Luma AI)

Resolution: Up to 4K HDR · Up to 20 seconds at 1080p

Price: From $7.99/month (Lite plan via Dream Machine)

Best for: High-fidelity visual production, HDR workflows, budget-conscious creators

Luma’s Ray3 — and its newer Ray3.14 variant — occupies an interesting position in the market: premium visual quality at an accessible price point. The Hi-Fi 4K HDR output is a genuine differentiator; Luma is one of the few platforms producing HDR video natively, complete with EXR output for professional color grading workflows. For creators who need broadcast-quality color depth and dynamic range, this matters.

The Dream Machine platform offers a generous free tier with limited credits and draft resolution, making it one of the best options for experimentation before committing to a paid plan. The Lite plan at $7.99/month provides full Ray3 access with 4K upscaling — making it the most affordable entry point for high-resolution AI video among the serious contenders.

Ray3 supports text-to-video, image-to-video, and video-to-video workflows, with various duration options up to 20 seconds at 1080p. The physics simulation is solid, and motion quality is competitive, though it trails the top five in prompt adherence consistency and character coherence across longer sequences.

The recently released Ray3.14 variant pushes quality further, with improved motion coherence and visual fidelity, though at higher credit costs — particularly for HDR and video-to-video workflows. For professional colorists and post-production teams, the EXR output option enables the kind of precise color manipulation that’s standard in broadcast and film workflows but entirely absent from most AI video platforms.

Confidence note: Luma’s pricing structure is complex, with credit costs varying significantly by resolution, HDR mode, and generation type. The figures above are drawn from Luma’s official pricing page and Dream Machine support documentation, but the credit-to-dollar conversion depends on your subscription tier. Verify current pricing before committing.

7. Wan2.2 (Alibaba)

Resolution: 720p @ 24fps · Variable duration

Price: Free (Apache 2.0 open-source license)

Best for: Developers, researchers, self-hosted workflows, budget-zero production

Wan2.2 is the most important model on this list for anyone who cares about the open-source AI ecosystem. Released by Alibaba’s Tongyi Lab in July 2025, it’s the world’s first open-source Mixture-of-Experts (MoE) video generation model, and it punches far above what you’d expect from a free tool.

The MoE architecture is the key innovation. The A14B model contains approximately 27 billion total parameters but only activates about 14 billion per inference step, delivering strong performance without proportional compute costs. This makes it the most capable video model that can actually run on hardware a research lab or indie studio might own. The 5B variant is optimized to run on a single RTX 4090 at 720p and 24fps — a remarkable achievement for consumer-grade hardware.
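Wan2.2's A14B models reportedly split the denoising process between two roughly 14B-parameter experts: a high-noise expert for the early steps (overall layout) and a low-noise expert for the later steps (detail), switching at a noise-level threshold. Because exactly one expert runs per step, per-step compute matches a single expert even though total parameters are roughly double. The toy below shows only that routing logic; the 50-step schedule and the `boundary` value of 875 are hypothetical choices for illustration, not documented Wan2.2 settings.

```python
from collections import Counter


def pick_expert(t: int, boundary: int) -> str:
    """Route one denoising step to exactly one expert by noise level.

    Large t means a noisier latent (early steps); small t means the latent is
    nearly clean (late steps).
    """
    return "high_noise" if t >= boundary else "low_noise"


def simulate(timesteps: list[int], boundary: int) -> Counter:
    """Count how many sampling steps each expert handles."""
    return Counter(pick_expert(t, boundary) for t in timesteps)


# Toy schedule: 50 sampling steps spaced over a 0-999 timestep scale.
timesteps = list(range(999, -1, -20))
BOUNDARY = 875  # hypothetical switch point, not a documented Wan2.2 value

counts = simulate(timesteps, BOUNDARY)
print(counts["high_noise"], counts["low_noise"])  # 7 43
```

Where exactly to place the switch is a design decision: moving the boundary shifts work between the layout-focused and detail-focused experts without changing per-step cost.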

The model family includes specialized variants: T2V and I2V MoE models for standard generation, a unified TI2V-5B model for consumer GPUs, a Speech-to-Video model for audio-driven avatar generation, and an Animate model for character animation. The cinematic aesthetic control — with detailed labels for lighting, composition, contrast, and color tone — allows for more stylistic precision than you’d expect from an open-source offering.

Training data is substantial: 65.6% more images and 83.2% more videos than Wan2.1, enabling strong generalization across motion, semantics, and aesthetics. The Apache 2.0 license means full commercial use is permitted, and the community has already built extensive integrations with ComfyUI, Diffusers, and various acceleration frameworks.

The Speech-to-Video model (S2V-14B) converts portrait photos into film-quality speaking avatars, supporting speech, singing, and performance generation, while the Animate model handles both character animation and character replacement. This breadth of specialized variants, all under the same open-source license, makes Wan2.2 more of an ecosystem than a single model.

Where it falls short: 720p output is the ceiling for the consumer-friendly models. Generation speed on consumer hardware, while impressive for what it is, can’t compete with cloud-served commercial models. And while quality is strong for an open-source model, it doesn’t match the top-tier commercial offerings on visual fidelity, physics simulation, or character consistency. The larger A14B models require 80GB+ VRAM without offloading — effectively limiting them to datacenter GPUs or multi-GPU setups.
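The hardware split follows from simple arithmetic on weight storage. This sketch assumes bf16 weights (2 bytes per parameter) and counts only the diffusion model's weights, so the results are lower bounds: the text encoder, VAE, and activations for a full video latent all come on top.

```python
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp8": 1}
RTX_4090_VRAM_GB = 24


def weight_memory_gb(params_billion: float, dtype: str = "bf16") -> float:
    """Approximate GB (1 GB = 1e9 bytes) needed just to hold the weights.

    params_billion * 1e9 params * bytes-per-param / 1e9 bytes-per-GB
    simplifies to params_billion * bytes-per-param.
    """
    return params_billion * BYTES_PER_PARAM[dtype]


MODELS = {  # parameter counts in billions, as quoted in this guide
    "TI2V-5B": 5,
    "A14B, one expert": 14,
    "A14B, both experts": 27,
}

for name, size in MODELS.items():
    gb = weight_memory_gb(size)
    fits = "fits" if gb < RTX_4090_VRAM_GB else "does not fit"
    print(f"{name:<20} ~{gb:.0f} GB of bf16 weights ({fits} in 24 GB, before activations)")
```

The 5B model's roughly 10 GB of weights leaves headroom on a 24 GB card, while the A14B family needs 28 GB for a single expert and about 54 GB with both resident, before any activations; that is why those models call for offloading or datacenter-class GPUs.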

The bottom line: Wan2.2 is the right choice if you need on-premise video generation, can’t afford subscription fees, want to fine-tune a model for your specific use case, or simply believe in open-source AI. It’s also the foundation that many community projects are building on — with integrations into ComfyUI, Diffusers, and acceleration frameworks like FastVideo and Cache-dit — which means its capabilities are expanding faster than any single company’s roadmap. The Wan series has accumulated over 6.9 million downloads across Hugging Face and ModelScope, making it the most widely adopted open-source video model to date. Available on GitHub, Hugging Face, and ModelScope.

8. Hailuo 02 (MiniMax)

Resolution: Native 1080p · Up to 10 seconds (768p) or 6 seconds (1080p)

Price: ~$0.28 per generation via API · Credit-based system on web platform

Best for: Cost-efficient production, physics-heavy content, high-volume workflows

Hailuo 02 from Chinese AI startup MiniMax might be the most technically interesting model on this list — not for what it generates, but for how it generates it. The Noise-aware Compute Redistribution (NCR) architecture dynamically allocates computational resources based on the complexity of what’s being rendered at any given moment, spending more compute on difficult frames and less on easy ones. The result is a 2.5× efficiency improvement over previous architectures at comparable parameter scales.

That efficiency gain isn’t just an engineering flex — it has practical consequences. MiniMax used the saved compute to triple the model’s parameter count and quadruple its training data volume without increasing generation costs. The result is a model that generates native 1080p video with strong physics simulation, excellent instruction following, and competitive visual quality, all at a price point that significantly undercuts most competitors.

Hailuo 02 has ranked as high as #2 on the Artificial Analysis Video Arena, which is remarkable for a model from a relatively small startup. Its physics mastery, particularly for complex scenarios like gymnastics, fluid dynamics, and multi-body interactions, is among the best in the field. Instruction following is exceptionally precise, reducing the prompt engineering overhead that plagues some competitors.

Where it falls short: Duration is limited (6 seconds at 1080p, 10 seconds at 768p). The model doesn’t yet support advanced features like multi-shot generation or motion brushes. And while it scores well on benchmarks, some independent evaluations note lower consistency and imaging quality compared to the top-tier models in certain scenarios.

The bottom line: If cost efficiency is a priority — and it should be for high-volume production workflows — Hailuo 02 delivers remarkable quality per dollar. The NCR architecture represents a genuinely novel approach to the compute-cost problem that defines AI video, and MiniMax is iterating rapidly. Available at hailuo.ai and via API on platforms like fal and Replicate.

9. Pika 2.5 (Pika Labs)

Resolution: Up to 1080p · 3–15 seconds per clip

Price: Free tier available; paid plans for higher capacity

Best for: Social media content, creative experimentation, fast iteration, fun effects

Pika 2.5 is the model that best understands its audience: creators who need fast, fun, shareable video content and don’t want to learn a professional VFX pipeline to get it. Where Runway is a Swiss Army knife for filmmakers, Pika is a magic wand for social media creators — and it’s very good at what it does.

The generation speed is the first thing you notice. Standard clips render in 10–30 seconds, which is dramatically faster than most competitors. For a social media workflow where you might generate dozens of variations before finding the right one, this speed advantage compounds quickly. The visual quality in Pika 2.5 is a meaningful step up from earlier versions — sharper textures, smoother motion, reduced morphing artifacts, and stronger prompt adherence.

But Pika’s real charm is its creative toolkit. Pikaffects applies dramatic transformations — melting, exploding, inflating, squishing — with a single prompt or preset. Pikaswaps lets you replace visual elements in existing clips. Pikaframes gives you keyframe control for defining start and end states. Pikaformance turns a still image and an audio track into an expressive animated performance with lip-sync. These aren’t gimmicks — they’re precisely the kind of effects that go viral on TikTok and Instagram Reels, and Pika has built them to be dead-simple to use.

The platform’s output customization is well-designed for social creators. You can set aspect ratios optimized for TikTok/Reels/Shorts (9:16), YouTube (16:9), or square feeds (1:1) directly in the interface. Motion control offers granular settings for camera path, subject motion, background motion, and overall intensity. The learning curve is gentler than Runway’s professional toolset, which is precisely the point — Pika is designed to be productive on your first session, not your tenth.
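The article does not list the exact pixel dimensions Pika exports. As a rough sketch of how the three ratios translate into frame sizes, the helper below assumes the shorter edge is pinned at 1080 pixels and rounds the longer edge to an even number. Both are assumptions made for illustration, not Pika settings.

```python
from fractions import Fraction


def frame_size(ratio: str, short_side: int = 1080, multiple: int = 2) -> tuple[int, int]:
    """Return (width, height) for an aspect ratio such as "9:16".

    The shorter edge is pinned to `short_side` (an assumption); the longer edge is
    rounded to a multiple of `multiple`, since 4:2:0 video encoding needs even sizes.
    """
    w, h = (int(part) for part in ratio.split(":"))
    if w <= 0 or h <= 0:
        raise ValueError(f"invalid aspect ratio: {ratio!r}")
    aspect = Fraction(w, h)
    if aspect >= 1:  # landscape or square: height is the short side
        long_side = round(short_side * aspect / multiple) * multiple
        return long_side, short_side
    long_side = round(short_side / aspect / multiple) * multiple  # portrait
    return short_side, long_side


for name, ratio in [("TikTok/Reels/Shorts", "9:16"), ("YouTube", "16:9"), ("Square feed", "1:1")]:
    print(f"{name:20} {ratio:>5} -> {frame_size(ratio)}")
```

Using `Fraction` keeps the ratio exact, so 16:9 lands on 1920×1080 without floating-point drift.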

Where it falls short: Core generations don’t include native audio — you’ll need to add sound separately. Character consistency across multiple shots is weaker than the top-tier models. Fine detail at 1080p can show artifacts, with 720p often being more reliable. And if you need anything longer than about 15 seconds, you’ll be stitching clips together manually. Anatomical accuracy — particularly hands and fingers — remains an occasional issue, as it does across the entire field.

The bottom line: Pika 2.5 is the fastest path from “I have an idea” to “this is posted.” If your workflow centers on social content, creative experimentation, or rapid prototyping, Pika’s speed and creative tools make it the most fun model on this list — and often the most productive for short-form work. Try it at pika.art.

10. HunyuanVideo 1.5 (Tencent)

Resolution: Up to 1080p (with super-resolution upscaling) · Variable duration

Price: Free (open-source)

Best for: Local generation, privacy-sensitive workflows, researchers, hardware enthusiasts

HunyuanVideo 1.5 from Tencent rounds out this list as the second major open-source entry, and it occupies a slightly different niche than Alibaba’s Wan2.2. Where Wan2.2 prioritizes architectural innovation (MoE) and community ecosystem, HunyuanVideo 1.5 prioritizes performance on consumer hardware — specifically, the ability to generate quality video on a single NVIDIA RTX 4090.

The numbers are impressive: using the step-distilled model, a single RTX 4090 can generate video within 75 seconds — a 75% reduction in end-to-end generation time compared to the base model. The minimum GPU memory requirement is just 14GB with model offloading enabled, making it compatible with a wider range of consumer hardware than most AI video models.
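Taken together, the two figures imply a rough baseline for the non-distilled model. Assuming the 75-second time and the 75% reduction describe the same workload (the text does not state the clip length or resolution), a 75% cut leaves 25% of the original time, so:

```latex
t_{\text{base}} = \frac{t_{\text{distilled}}}{1 - r}
               = \frac{75\ \text{s}}{1 - 0.75}
               = 300\ \text{s} \approx 5\ \text{minutes}
```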

The technical architecture is built around an 8.3-billion-parameter Diffusion Transformer (DiT) paired with a 3D causal VAE that compresses video 16× along each spatial axis and 4× in time (16×16×4). The Selective and Sliding Tile Attention (SSTA) mechanism prunes redundant spatiotemporal key-value blocks, reducing computational overhead for longer video sequences; it delivers a 1.87× speedup over FlashAttention-3 for 10-second 720p synthesis.
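To see what 16×16×4 compression means in practice, the sketch below computes the latent grid for 5- and 10-second 720p clips. Two assumptions are labeled in the code: that the causal VAE encodes the first frame on its own and then collapses each following group of four frames (a common causal-VAE convention, which requires 1 + 4k input frames), and a 24 fps frame rate. Any further DiT patchification is not described in the text and is ignored. The takeaway is the scaling: dense self-attention compares every latent position with every other, so its cost grows with the square of the count, which is the overhead SSTA's pruning targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatentGrid:
    frames: int
    height: int
    width: int

    @property
    def positions(self) -> int:
        # Latent positions before any DiT patchification (not specified in the text).
        return self.frames * self.height * self.width


def latent_grid(num_frames: int, height: int, width: int,
                spatial: int = 16, temporal: int = 4) -> LatentGrid:
    # Assumed causal-VAE convention: frame 0 is encoded alone, then every
    # `temporal` frames collapse into one latent frame (so inputs are 1 + k*temporal).
    if num_frames < 1 or (num_frames - 1) % temporal:
        raise ValueError(f"num_frames must be 1 + k*{temporal}")
    if height % spatial or width % spatial:
        raise ValueError(f"height and width must be multiples of {spatial}")
    return LatentGrid(1 + (num_frames - 1) // temporal, height // spatial, width // spatial)


FPS = 24  # assumed frame rate
clip_5s = latent_grid(5 * FPS + 1, 720, 1280)    # 121 input frames
clip_10s = latent_grid(10 * FPS + 1, 720, 1280)  # 241 input frames
print(clip_5s, clip_5s.positions)    # LatentGrid(frames=31, height=45, width=80) 111600
print(clip_10s, clip_10s.positions)  # LatentGrid(frames=61, height=45, width=80) 219600
# Dense attention cost scales with positions squared, so doubling duration nearly quadruples it.
print(f"dense attention pairs grow {clip_10s.positions**2 / clip_5s.positions**2:.2f}x")
```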

Both text-to-video and image-to-video generation are supported, with bilingual (Chinese-English) understanding and dedicated glyph encoding for accurate text rendering within videos. An integrated super-resolution network upscales outputs to 1080p. The training code and LoRA tuning scripts are publicly available, enabling fine-tuning for specific use cases.
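LoRA is what makes fine-tuning a model of this size practical on a single GPU: instead of updating a full weight matrix W, it trains two small matrices B and A and adds their scaled product, so a layer computes W·x + (alpha / r)·B·A·x. The pure-Python sketch below illustrates that update and its parameter savings in general terms. The 3072-wide projection is a hypothetical layer size, not a figure from HunyuanVideo 1.5's configuration, and the released scripts may differ in detail.

```python
Matrix = list[list[float]]
Vector = list[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector, alpha: float) -> Vector:
    """Frozen W (d_out x d_in) plus trainable low-rank update B (d_out x r) @ A (r x d_in)."""
    rank = len(A)
    base = matvec(W, x)              # frozen pretrained path
    delta = matvec(B, matvec(A, x))  # trainable low-rank path
    scale = alpha / rank
    return [b + scale * d for b, d in zip(base, delta)]


def trainable_params(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """(full fine-tuning, LoRA) trainable parameter counts for one weight matrix."""
    return d_out * d_in, rank * (d_out + d_in)


W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 1.0]]    # rank 1
B = [[0.0], [0.0]]  # LoRA starts B at zero, so training begins exactly at W
print(lora_forward(W, A, B, [1.0, 2.0], alpha=1.0))  # [1.0, 2.0]
print(trainable_params(3072, 3072, rank=16))         # (9437184, 98304)
```

For the hypothetical 3072×3072 layer, rank-16 LoRA trains roughly 1% of the parameters a full fine-tune would.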

Where it falls short: Visual quality, while impressive for an open-source model running on consumer hardware, doesn’t match the commercial leaders. The model lacks the integrated creative tools (motion brushes, multi-shot direction, etc.) that platforms like Runway and Kling provide. And “runs on an RTX 4090” still means you need an RTX 4090 — a $1,600+ GPU that’s hardly entry-level consumer hardware.

The bottom line: HunyuanVideo 1.5 is the best option for anyone who needs to generate video locally — whether for privacy, cost, customization, or simply because they don’t want to depend on a cloud service that might shut down (see: Sora 2). The 75-second generation time on consumer hardware is a milestone for accessible AI video. Available on GitHub and Hugging Face.

How to Choose: A Decision Framework

With ten strong options, the choice can feel overwhelming. Here’s a practical framework:

By Primary Use Case

| Use Case | First Choice | Why |
|---|---|---|
| Commercial advertising | Seedance 2.0 | Multi-input workflow, highest benchmark scores, native audio |
| Film / broadcast production | Veo 3.1 | Film-grade aesthetics, best audio generation, Google Cloud integration |
| Narrative / episodic content | Kling 3.0 | Multi-shot director, extended duration, character consistency |
| VFX / professional filmmaking | Runway Gen-4.5 | Motion brushes, established ecosystem, granular creative control |
| Social media content | Pika 2.5 | Fastest generation, fun effects toolkit, platform-optimized output |
| Budget production ($0) | Wan2.2 or HunyuanVideo 1.5 | Open-source, free, self-hostable |
| High-volume / cost-sensitive | Hailuo 02 | NCR efficiency, low per-generation cost, strong quality |
| HDR / high-fidelity visuals | Luma Ray3 | Native 4K HDR, EXR output, professional color workflows |

By Budget

- $0/month: Wan2.2 (cloud-free, self-hosted), HunyuanVideo 1.5 (self-hosted), or free tiers on Kling, Pika, Luma, Veo (via Gemini)

- Under $15/month: Pika (Standard), Runway (Standard), Luma (Lite at $7.99)

- $15–30/month: Seedance 2.0, Veo 3.1 (Gemini Advanced at $19.99)

- $30+/month: Kling Pro, Luma Pro/Ultra, Runway Pro — for heavy production use

By Technical Requirements

- Need 4K native output: Kling 3.0, Luma Ray3

- Need clips longer than 15 seconds: Kling (up to 2–3 minutes), Sora 2 (25 seconds, sunsetting)

- Need native audio: Seedance 2.0, Veo 3.1, Kling 2.6+, Sora 2

- Need on-premise / self-hosted: Wan2.2, HunyuanVideo 1.5

- Need professional VFX tools: Runway Gen-4.5

- Need API access: Veo 3.1, Seedance 2.0, Kling, Runway, Hailuo 02
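The requirement lists above amount to a set-intersection query. The sketch below encodes them as data and returns the models that meet every requirement at once. Version suffixes are collapsed to family names ("Kling" for Kling 2.6+ and 3.0, "Runway" for Runway Gen-4.5), which is a simplification made here, and Sora 2 is excluded by default because the list marks it as sunsetting. The entries are a snapshot of this guide and will go stale.

```python
# Capability sets copied from the "By Technical Requirements" list (family names only).
CAPABILITIES: dict[str, set[str]] = {
    "native_4k": {"Kling", "Luma Ray3"},
    "over_15s": {"Kling", "Sora 2"},
    "native_audio": {"Seedance 2.0", "Veo 3.1", "Kling", "Sora 2"},
    "self_hosted": {"Wan2.2", "HunyuanVideo 1.5"},
    "vfx_tools": {"Runway"},
    "api": {"Veo 3.1", "Seedance 2.0", "Kling", "Runway", "Hailuo 02"},
}
SUNSETTING = {"Sora 2"}


def shortlist(*requirements: str, include_sunsetting: bool = False) -> list[str]:
    """Return models that satisfy every requirement, sorted by name."""
    unknown = [r for r in requirements if r not in CAPABILITIES]
    if unknown:
        raise KeyError(f"unknown requirement(s): {unknown}")
    if not requirements:
        return []
    matches = set.intersection(*(CAPABILITIES[r] for r in requirements))  # new set
    if not include_sunsetting:
        matches -= SUNSETTING
    return sorted(matches)


print(shortlist("native_audio", "api"))                    # ['Kling', 'Seedance 2.0', 'Veo 3.1']
print(shortlist("over_15s", include_sunsetting=True))      # ['Kling', 'Sora 2']
print(shortlist("native_4k", "self_hosted"))               # []
```

An empty result is informative too: no model in this snapshot offers both native 4K and self-hosting.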

The Multi-Model Reality

Perhaps the most important insight about AI video in 2026 is that no single model does everything best. This shouldn’t be surprising — traditional film production has always used different cameras, lenses, lighting setups, and post-production tools for different purposes. A RED camera serves a different function than a GoPro, and both are valid choices depending on the shot. AI video models are no different: each embodies specific tradeoffs between quality, speed, control, cost, and creative flexibility.

The most sophisticated production teams are already running multi-model workflows:

- Seedance 2.0 for hero shots and key visuals that need maximum quality

- Kling for narrative sequences and multi-shot storytelling

- Veo 3.1 for polished final renders with premium audio

- Runway Gen-4.5 for VFX iteration and element-level control

- Pika 2.5 for rapid social media variants and creative experimentation

- Wan2.2 or HunyuanVideo 1.5 for internal prototyping and budget-sensitive work

This mix-and-match approach requires more sophistication: you need to understand each model’s strengths, manage outputs across multiple platforms, and handle the inevitable inconsistencies in style and tone when combining clips from different generators. But the results justify the complexity. A commercial that uses Seedance for the hero product shot, Veo for the atmospheric establishing sequence, and Pika for the quick social media cutdowns will often outperform one that forces a single model to do everything.

The tooling for multi-model workflows is still immature. Most teams are managing this through manual editing in traditional NLEs like Premiere Pro or DaVinci Resolve, with AI-generated clips treated as raw footage. Expect dedicated orchestration tools — platforms that let you route different shots to different models within a single project timeline — to emerge within the next year. The workflow integration challenge is arguably the next big opportunity in this space.
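No such orchestration layer is described here, so the following is only a sketch of the planning half of the problem: tag each shot with a use case, then group shots by the first-choice model from the use-case table. The `Shot` type, the use-case keys, and the Wan2.2 fallback are hypothetical, and the code calls no vendor API. The sample campaign mirrors the commercial example earlier in this section.

```python
from collections import defaultdict
from dataclasses import dataclass

# First choices from this guide's use-case table (hypothetical keys; one pick for "budget").
ROUTES = {
    "commercial_advertising": "Seedance 2.0",
    "film_broadcast": "Veo 3.1",
    "narrative_episodic": "Kling 3.0",
    "vfx": "Runway Gen-4.5",
    "social": "Pika 2.5",
    "budget": "Wan2.2",
    "high_volume": "Hailuo 02",
    "hdr": "Luma Ray3",
}


@dataclass
class Shot:
    name: str
    use_case: str


def plan(shots: list[Shot], fallback: str = "Wan2.2") -> dict[str, list[str]]:
    """Group shot names by the model each one is routed to, in first-seen order."""
    batches: dict[str, list[str]] = defaultdict(list)
    for shot in shots:
        batches[ROUTES.get(shot.use_case, fallback)].append(shot.name)
    return dict(batches)


campaign = [
    Shot("hero product close-up", "commercial_advertising"),
    Shot("atmospheric establishing shot", "film_broadcast"),
    Shot("9:16 cutdown A", "social"),
    Shot("9:16 cutdown B", "social"),
]
for model, names in plan(campaign).items():
    print(f"{model}: {names}")
```

Batching by model matters in practice because each platform has its own queue, pricing, and export settings; the resulting clips would still be assembled in an NLE as described above.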

What’s Coming Next

The pace of development shows no signs of slowing. Several trends are visible in the pipeline:

Duration is expanding rapidly. Kling’s 2-minute generations will likely be matched by competitors within months. The technical barriers to longer coherent generation are being solved through multi-shot architectures and better temporal modeling.

Audio-visual joint generation is becoming standard. A year ago, most models generated silent video. Now, four of the top five produce synchronized audio natively. By the end of 2026, silent-only video generation will likely be a legacy feature.

Open-source is closing the gap. Wan2.2 and HunyuanVideo 1.5 are already competitive with where commercial models were 6–12 months ago. The community-driven development cycle — particularly through ComfyUI and Diffusers integrations — is accelerating this convergence.

The business model question remains unresolved. Sora 2’s shutdown, with compute costs estimated at $1 million per day, highlights the fundamental challenge: AI video is expensive to run, and the market hasn’t yet settled on pricing models that make it sustainably profitable at consumer scale. Expect more consolidation, more tiered pricing, and possibly more shutdowns.

Resolution is climbing fast. In 2024, 720p was the standard for AI video. By mid-2025, 1080p became table stakes. Now Kling 3.0 generates native 4K at 60fps, and Luma Ray3 delivers HDR output. Within the next year, 4K will likely be the baseline expectation, and we may see the first 8K experimental outputs. This matters because it moves AI video from “good enough for social media” to “indistinguishable from professional camera footage” in terms of raw resolution.
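The jumps are larger than the labels suggest, because pixel count scales with both dimensions: 1080p to 4K, and 4K to 8K, each quadruple the pixels per frame. The short calculation below assumes "4K" and "8K" mean the consumer UHD formats (3840×2160 and 7680×4320) rather than the slightly wider cinema (DCI) variants.

```python
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K UHD": (3840, 2160),  # assumed meaning of "4K" (UHD, not DCI 4096x2160)
    "8K UHD": (7680, 4320),
}


def pixels(label: str) -> int:
    width, height = RESOLUTIONS[label]
    return width * height


for label in RESOLUTIONS:
    print(f"{label:7} {pixels(label):>11,} px per frame ({pixels(label) / pixels('720p'):.2f}x 720p)")

# Raw pixel throughput for 4K at 60 fps, the Kling 3.0 figure mentioned above
print(f"4K UHD at 60 fps: {pixels('4K UHD') * 60:,} px/s")
```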

The compute efficiency race is heating up. Hailuo 02’s NCR architecture delivered a 2.5× efficiency gain. Wan2.2’s MoE approach achieves better output with fewer active parameters. HunyuanVideo 1.5’s SSTA attention mechanism reduces overhead for longer sequences. These aren’t incremental optimizations — they’re architectural innovations that could fundamentally change the cost curve for AI video generation, potentially making the economics work where Sora 2’s economics didn’t.
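One way a diffusion model can use MoE to keep active parameters low is to assign whole denoising steps to experts by noise level; Wan2.2's public materials describe such a split between a high-noise expert and a low-noise expert. The sketch below assumes that timestep-based switch. The boundary value, the t convention, and the toy "experts" (which just scale the latent) are hypothetical; only the routing logic is the point.

```python
from typing import Callable

Latent = list[float]
Expert = Callable[[Latent, float], Latent]


def make_switched_denoiser(high_noise: Expert, low_noise: Expert, boundary: float) -> Expert:
    """Route each denoising step to exactly one expert based on noise level t.

    Assumed convention: t = 1.0 is pure noise, t = 0.0 is clean. Because only one
    expert runs per step, active parameters equal one expert's size, not the sum.
    """
    if not 0.0 < boundary < 1.0:
        raise ValueError("boundary must lie strictly between 0 and 1")

    def denoise(latent: Latent, t: float) -> Latent:
        expert = high_noise if t >= boundary else low_noise
        return expert(latent, t)

    return denoise


calls = {"high": 0, "low": 0}


def toy_high(latent: Latent, t: float) -> Latent:  # stand-in for a layout-focused expert
    calls["high"] += 1
    return [x * 0.5 for x in latent]


def toy_low(latent: Latent, t: float) -> Latent:  # stand-in for a detail-refining expert
    calls["low"] += 1
    return [x * 0.9 for x in latent]


denoise = make_switched_denoiser(toy_high, toy_low, boundary=0.5)
latent = [1.0, -2.0]
steps = 10
for i in range(steps):
    t = 1.0 - i / steps  # 1.0, 0.9, ..., 0.1
    latent = denoise(latent, t)
print(calls)  # {'high': 6, 'low': 4}
```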

Regulation is coming. The copyright controversies around Seedance 2.0, the deepfake concerns around all these models, and the broader political conversation about AI-generated media are converging toward regulatory frameworks that will shape what these tools can and cannot do. The companies that build strong content provenance, watermarking, and licensing frameworks into their models now will be best positioned for whatever regulatory environment emerges.

Final Thoughts

We’re in the second year of a transformation that will take a decade to fully play out. The tools covered in this guide — from Seedance 2.0’s benchmark-leading quality to HunyuanVideo 1.5’s consumer-hardware accessibility — represent the current state of the art, but that state is advancing monthly.

The most important thing you can do right now isn’t to pick the “best” model and commit to it exclusively. It’s to build fluency across the landscape: understand what each model is good at, develop workflows that leverage their complementary strengths, and stay adaptable as the capabilities evolve. The creators and studios that thrive in this new era won’t be the ones who bet on a single tool — they’ll be the ones who learned to orchestrate the full ensemble.

A year from now, this guide will need a complete rewrite. The models that don’t exist yet will outperform the ones covered here. New architectural breakthroughs — perhaps in consistency, perhaps in duration, perhaps in real-time generation — will shift the competitive landscape in ways we can’t predict. The open-source ecosystem will continue to democratize capabilities that were cutting-edge and proprietary just months earlier.

But the fundamental pattern is clear: AI video generation has crossed the threshold from experimental technology to production tool. It’s not replacing human creativity — it’s amplifying it, giving individuals the production capabilities that once required entire studios, and giving studios the iteration speed that once required months. The ten models in this guide are the leading edge of that transformation.

The cameras of the future are already here. They just happen to be neural networks.

Last updated: April 15, 2026. Specifications, pricing, and availability are subject to change. The Sora 2 app is scheduled to shut down on April 26, 2026. Always verify current pricing and features on official platforms before making purchasing decisions.
