A beginner-friendly guide to why “type, wait, read” is becoming obsolete — and what happens when AI can finally talk, listen, and watch all at once.

If you’ve ever used ChatGPT, Claude, or Gemini, you already know the rhythm: you type something, you wait, the AI thinks, the AI writes back, you read, and then it’s your turn again. Type. Wait. Read. Type. Wait. Read.

It feels normal. It feels like talking to a computer. But here’s the strange thing — it isn’t actually how humans talk to each other at all. When you have coffee with a friend, you don’t take strict turns. You nod while they speak. You finish their sentence. You interrupt to ask a quick question. You glance at the menu while still listening. You laugh in the middle of their joke. Conversation is a messy, overlapping, simultaneous thing — and our most advanced AIs, until very recently, couldn’t do any of it.

That’s starting to change. On May 11, 2026, Thinking Machines Lab — the AI research company founded by former OpenAI CTO Mira Murati — published a research preview of something they call Interaction Models. The headline idea is deceptively simple: instead of forcing AI to take turns, build a model that experiences time the way we do — in a continuous, flowing stream, broken into chunks of just 200 milliseconds.

It’s a small number with very big consequences. This article unpacks what’s actually changing, why it matters for everyday users, and why a lot of researchers are quietly saying that turn-based chat may be the last “obvious” UI paradigm of the AI era.

The bottleneck nobody talks about

Let’s start with an analogy. Imagine you’re trying to resolve a serious disagreement with your partner — about money, or in-laws, or whose turn it was to do the dishes. You have two options:

- Do it over email. You write a long message. They read it later. They write a long reply. You read it the next morning. Each round takes hours. Tone gets misread. Nuance dies. Small misunderstandings become big ones because nobody can interrupt to clarify.

- Do it in person. You see their face. They see yours. When you misread the situation, they raise an eyebrow before you even finish your sentence. You self-correct on the fly. The whole thing is resolved in fifteen minutes.

This is more or less the difference between today’s turn-based chatbots and what an interaction model is trying to be. Thinking Machines uses almost exactly this framing in their announcement: “Picture trying to resolve a crucial disagreement over email rather than in person.” The medium itself shapes how much of your knowledge, intent, and judgment can reach the other party.

For two decades, we’ve been having every conversation with AI over the equivalent of email. The AI can’t see your eyebrow raise. You can’t tell when it’s about to go off the rails because it doesn’t speak until it’s finished generating its entire response. Neither of you can react to the other in real time. It works — but it’s a bottleneck.

And here’s the kicker that the Thinking Machines team flags openly: the major AI labs have been quietly deprioritizing the human-in-the-loop experience. As a recent frontier model card from Anthropic admits, when their model is used in a “hands-on-keyboard” interactive pattern, some users find it too slow and don’t get as much value out of it — the model performs better when you let it run autonomously in the background. In other words, the industry has been optimizing AI to not need you, rather than to work well with you.

That’s a strange goal if you actually like being involved in your own work.

What’s wrong with “turn-based”

To understand interaction models, it helps to understand exactly what a “turn” is in today’s systems.

In a turn-based AI, the model lives in a single thread of tokens — text chunks, basically. Until you finish typing or speaking, the model doesn’t know what you’re doing. It can’t see whether you’re hesitating, scrolling, double-checking a document, or about to change your mind. And once it starts generating, the reverse is true: its perception freezes. It won’t notice if you’ve already started talking over it, gotten up and left the room, or pointed at the wrong line of code on your screen.

Even today’s so-called “real-time voice” assistants — like advanced voice modes from OpenAI, Google’s Gemini Live, or earlier full-duplex systems — generally cheat. They bolt on a harness: a separate piece of software, typically using something called voice activity detection (VAD), that listens for when you’ve stopped talking and then hands the audio over to the language model. The intelligence is turn-based; the illusion of real-time is a wrapper around it.

Thinking Machines points out the deeper problem with this approach by invoking Rich Sutton’s famous essay The Bitter Lesson. Sutton’s argument, in short, is that over decades of AI research, hand-engineered scaffolding has consistently been outperformed by general-purpose models trained with more data and compute. The VAD harness, the dialog management layer, the turn-boundary predictor — all of these are exactly the kind of hand-crafted scaffolding that historically gets eaten by scaling. For interactivity to keep up with intelligence, it has to live inside the model itself.

That’s the bet. And it’s a big one.

The 200ms idea

Here’s where it gets interesting. Instead of one big turn, the Thinking Machines interaction model carves time into tiny slices — 200 milliseconds each, roughly a fifth of a second. Each slice is called a micro-turn.

Why 200ms? It’s not arbitrary. It’s about the threshold of human conversational perception. Linguists and psychologists who study natural conversation — including the foundational work of Herbert Clark and Susan Brennan on grounding in communication — have shown that humans hand off conversational turns with gaps that average around 200ms across cultures. Faster than that and it feels like interruption; much slower and it feels like awkwardness. Two hundred milliseconds is, roughly, the heartbeat of human conversation.

So every fifth of a second, the model does something genuinely new:

- It receives 200ms worth of input — could be audio, could be video frames, could be text, could be all three at once.

- It decides what, if anything, to output in that 200ms slice — speech, silence, a backchannel “mm-hmm,” a visual reaction, a tool call.

- And then it does it again. And again. And again. Five times every second.

This is the part that breaks the old mental model. The AI isn’t “waiting for you to finish.” There is no finishing. There is just an unbroken stream of tiny moments, and in each moment the model can choose to listen, talk, both, or neither.

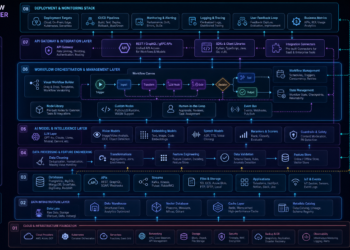

In the architecture diagrams Thinking Machines shared, you can see a continuous video stream and a continuous audio stream feeding in side by side, with the model’s output stream interleaved between them. The model interrupts when it needs to. It stays silent when both of you are silent. It backchannels — those little “yeah,” “uh-huh,” “right” noises humans make to show we’re listening — while you’re still talking. It reacts to a visual cue (you held up your phone) without you having to explicitly say “look at this.”

It is, in a small but real way, present.

What this unlocks for everyday users

This is where it stops being an architecture nerd-fest and starts being something you might actually feel. Let me walk through a few scenarios.

1. Real conversation, not radio

You’re talking through a problem with your AI assistant — maybe planning a trip, or thinking through a tough email to your boss. In today’s voice modes, you say your whole thought, wait for it to detect that you’re done, listen to a full response, and then try to remember the part you wanted to push back on. In an interaction-model world, you can simply interrupt. The model can interrupt you, too — gently — when you say something it thinks is wrong, or when it notices you trailing off mid-thought.

Thinking Machines lists this explicitly as a new capability: seamless dialog management with no separate handoff layer, and verbal interjections where the model jumps in based on context, not just on you stopping mid-breath.

2. Talking and listening at the same time

This sounds impossible until you realize humans do it all the time. The classic example in the Thinking Machines post is live translation: you speak Spanish; the model speaks English over the top of you, in real time, lagging by less than a second. No “wait for me to finish, then translate.” Just continuous, simultaneous bilingual flow — the way a human interpreter at the UN actually works.

The same trick enables live sports commentary, real-time pronunciation correction (“correct my mispronunciations as you hear them”), and co-piloting during a task where the model narrates what it’s seeing while you work.

3. The model actually watches

This might be the biggest shift for non-technical users. Today’s “vision” features in chat assistants are basically photo-album tools — you upload an image, the model describes it, you reply. An interaction model is continuously seeing. Thinking Machines tested this with several benchmarks adapted from academic work, including RepCount-A (counting exercise repetitions from video) and ProactiveVideoQA (answering questions whose answers only become available at specific moments in a video).

The use cases are immediate and concrete:

- “Count my pushups.” The model watches, counts out loud — “one… two… three…” — and notices when your form breaks.

- “Tell me when I’ve written a bug in my code.” It watches your screen, says nothing for ten minutes, then quietly interrupts: “Line 47, you’re not handling the null case.”

- “Watch this recipe video with me and pause when I need to do something.” It commentates as you cook.

Crucially, on Thinking Machines’ internal benchmarks, every other major model — including OpenAI’s GPT-realtime-2.0 and Google’s Gemini 3.1 Flash Live — scored close to zero on these proactive visual tasks. They simply can’t do this yet. Not because they’re stupid, but because their architecture has no way to choose to speak in response to something visual that the user didn’t explicitly point to.

4. Time awareness

Here’s a small one that turns out to be huge: today’s AIs have no real sense of elapsed time during a conversation. Ask current voice assistants “how long did it take me to run that mile?” or “remind me to breathe in and out every four seconds” and they will struggle or fail outright. The Thinking Machines team built two internal benchmarks — TimeSpeak and CueSpeak — specifically to measure this, and their model scored 64.7% and 81.7% respectively, versus single-digit scores for GPT-realtime-2.0.

Time-aware AI changes what’s possible for fitness coaches, meditation guides, language tutors with timed drills, accessibility tools for people with cognitive disabilities, and just about any application where when you say something matters as much as what you say.

5. Thinking deeply while staying present

One of the cleverest architectural choices in the paper is the two-model split:

- The interaction model stays with you, in real time, always present.

- A separate background model runs asynchronously in the background, doing slower, deeper reasoning — searching the web, calling tools, working through agentic workflows.

The interaction model hands tasks off to the background model when a question needs more thought, but it doesn’t go silent. It keeps the thread going — “okay, I’m pulling that up, hold on, by the way you mentioned earlier…” — and weaves the background results back in when they arrive.

If you’ve ever talked to a smart friend who can keep small-talking with you at a party while they’re mentally working out a math problem you asked them about, you already understand the design.

What it’s not (yet)

A healthy reality check before you get too excited. This is a research preview, not a product. Thinking Machines is explicit about the limitations:

- Long sessions are hard. Streaming audio and video accumulate context fast, and managing memory across hours-long conversations is still an open problem.

- You need a good internet connection. Streaming five-times-a-second updates is bandwidth-hungry; bad Wi-Fi will make the experience fall apart.

- The current model is small.

TML-Interaction-Smallis a 276-billion-parameter Mixture-of-Experts model with 12B active parameters — competitive but not at the very frontier of raw intelligence. Their bigger models are still too slow to run in this real-time regime. - Safety in a real-time medium is unsolved. When the AI can speak at any moment and reacts to what it sees, the ways things can go wrong are different — and the team flags this as an active area of work, including modality-appropriate refusals and red-teaming for multi-turn speech-to-speech conversations.

- There’s no public release date. A limited research preview is coming “in the coming months,” with a wider release later in 2026.

It’s also worth being honest about the competition. Smaller specialized full-duplex systems like Kyutai’s Moshi have been demonstrating audio interactivity since 2024. OpenAI and Google have shipped real-time voice and video features in their consumer products. What’s genuinely new about Thinking Machines’ work isn’t that real-time AI exists — it’s that they’re claiming state-of-the-art combined performance on intelligence and interactivity, in one unified, trained-from-scratch model rather than a clever harness.

If their benchmark numbers hold up under independent scrutiny, that’s the bigger deal. They’ve built a model that doesn’t trade smarts for speed.

Why this changes the metaphor for AI

For sixty years, the dominant metaphor for “using a computer” was the desktop — files, folders, a trash can. Then, for the last fifteen years, it became the app — a tile on a touchscreen. With ChatGPT, the metaphor briefly became the chat window — a transcript you scroll through.

Chat is already starting to feel wrong. Anyone who has tried to do real collaborative work with an AI — writing a complex document, debugging a tricky system, brainstorming a strategy — has had the same frustrating experience: the medium can’t carry the weight of the relationship. Things that would take five minutes face-to-face take an hour in a chat window. Misunderstandings compound. You either over-specify your prompt (and end up doing all the work) or under-specify and get a useless answer.

Researchers and designers have been groping toward what the next metaphor should be. Archetype AI argues that we currently borrow from three older paradigms — the companion (ChatGPT, Siri), the toolkit (Photoshop’s Generative Fill), and the enchanted object (smart thermostats, self-driving cars) — but that none of them quite fit what AI is becoming. Jakob Nielsen has called the AI shift “the third user-interface paradigm in the history of computing,” but the actual shape of that paradigm is still being figured out.

Interaction models suggest the answer might just be: the conversation itself, but actually conversational. Not a metaphor borrowed from offices or magic or pets — just the thing humans have been doing with each other for two hundred thousand years, finally available with software.

That’s a quieter, less science-fictional vision than “AI agents will do everything for you while you sleep.” But it might be the more useful one. Most of us don’t actually want an AI that runs off and does our job autonomously — we want an AI that helps us do our job better, and stays in the room while we do it.

The bigger industry context

The timing isn’t accidental. Real-time, multimodal AI has been the frontier everyone has been racing toward since at least early 2024. Google shipped Gemini Live with screen sharing. OpenAI made advanced voice and vision standard in ChatGPT. Apple is reportedly close to mass-producing camera-equipped AirPods that feed visual data to Siri. Robotics labs like Physical Intelligence and Figure are building foundation models that operate in continuous real-time loops because the physical world doesn’t pause for token generation.

What Thinking Machines is contributing isn’t the first attempt at this — it’s an argument about how to do it correctly. The case they’re making is architectural and philosophical: stop bolting interactivity onto language models as an afterthought, and start building it in from the bottom. Train it from scratch. Make it part of what the model is, not what wraps around it.

If they’re right, then most of the current generation of “real-time” AI features — built on top of turn-based language models with VAD harnesses and stitched-together pipelines — are a transitional technology. They’ll feel, in two or three years, the way flip-phone “mobile web” feels now: clearly trying to do the right thing, clearly held back by the underlying architecture.

If they’re wrong, the bitter lesson cuts the other way: turn-based models will simply get fast enough and smart enough that the harness disappears into the noise, and we won’t need a separate “interaction-native” architecture.

The next 12 months will tell us a lot.

What to actually do about it

If you’re an everyday user — not an AI researcher — what do you take away from all this?

A few practical thoughts:

1. Notice the bottleneck in your own workflow. Next time you’re using ChatGPT or Claude or Gemini for real work, pay attention to the moments where you wish you could interrupt, where you wish the AI could see your screen and react proactively, where the back-and-forth feels like email when it should feel like a phone call. Those are exactly the moments interaction models are designed to fix. Knowing the shape of the bottleneck will make you a better evaluator of the tools that try to solve it.

2. Start preferring voice and video features when they’re available. They’re imperfect, but they’re the modes where the next wave of UX innovation will land first. Building the habit now — talking to your AI, sharing your screen with it, pointing your camera at things — will pay off enormously when these interfaces actually become good.

3. Don’t expect a clean switch. Real adoption of interaction-native models will probably look like a slow, uneven creep. You’ll first notice it in narrow domains — a language tutor that finally feels natural, a coding assistant that watches your screen, a customer service bot that doesn’t infuriate you. Whole-life “AI co-pilots” that work this way are further out.

4. Watch the benchmarks, not the demos. Demos are easy to fake. The interesting question is whether other labs reproduce the kinds of capabilities Thinking Machines is measuring — time awareness, visual proactivity, simultaneous speech — on independent benchmarks. The FD-bench and Audio MultiChallenge numbers in the announcement are a starting point, but the field needs more open evaluations, and Thinking Machines has explicitly invited the community to build them.

5. Stay a little skeptical of the hype, but take the direction seriously. Whether or not Thinking Machines specifically is the lab that nails this, the direction is almost certainly right. AI that can talk, listen, and watch at the same time — without a clunky harness in the middle — is the obvious endpoint of where multimodal models are going. The only real question is who builds it first, how good it gets, and how soon.

The end of “type, wait, read”

There’s a small, ordinary moment in every human conversation that we never think about: the moment when you start to say something, and the person you’re talking to nods before you’ve finished the sentence, because they already know where you’re going. You don’t bother finishing. You just move on. Communication compresses. Trust deepens. The conversation gets faster and richer because both parties are present in the same moment together.

For sixty years of computing, we’ve never had that with a machine. The machine waits for us to finish typing. We wait for it to finish responding. We meet, briefly, in the gap between turns — and then we go back to our separate timelines.

Interaction models, if they live up to what Thinking Machines is claiming, are the first serious attempt to close that gap. Not by making the AI faster at taking turns, but by getting rid of turns altogether — replacing them with a continuous fifth-of-a-second heartbeat that both of you share.

It’s a small technical change. It might be a very large cultural one.

In a few years, we may look back at the era of typing into a box and waiting for a reply the same way we now look back at dial-up internet, or hand-cranking car engines, or sending each other letters and waiting two weeks for a response. It worked. It got us here. But it was never how we were going to want to do it forever.

The end of turn-based AI isn’t a dramatic event. It’s just the quiet moment when, finally, you stop noticing the wait.