A benchmark-by-benchmark breakdown of how OpenAI’s new model beat every rival in every category, by margins we’ve never seen before.

When a new frontier model ships, the story is usually told in single-digit points. GPT-5.4 edges Claude Opus 4.7 by two Elo. Gemini 3.1 Pro squeaks past Opus by three. Leaderboards at the top of the pack compress into noise, and the press release inevitably leans on a graph with a y-axis that starts at 1450.

That’s not what happened on April 20, 2026.

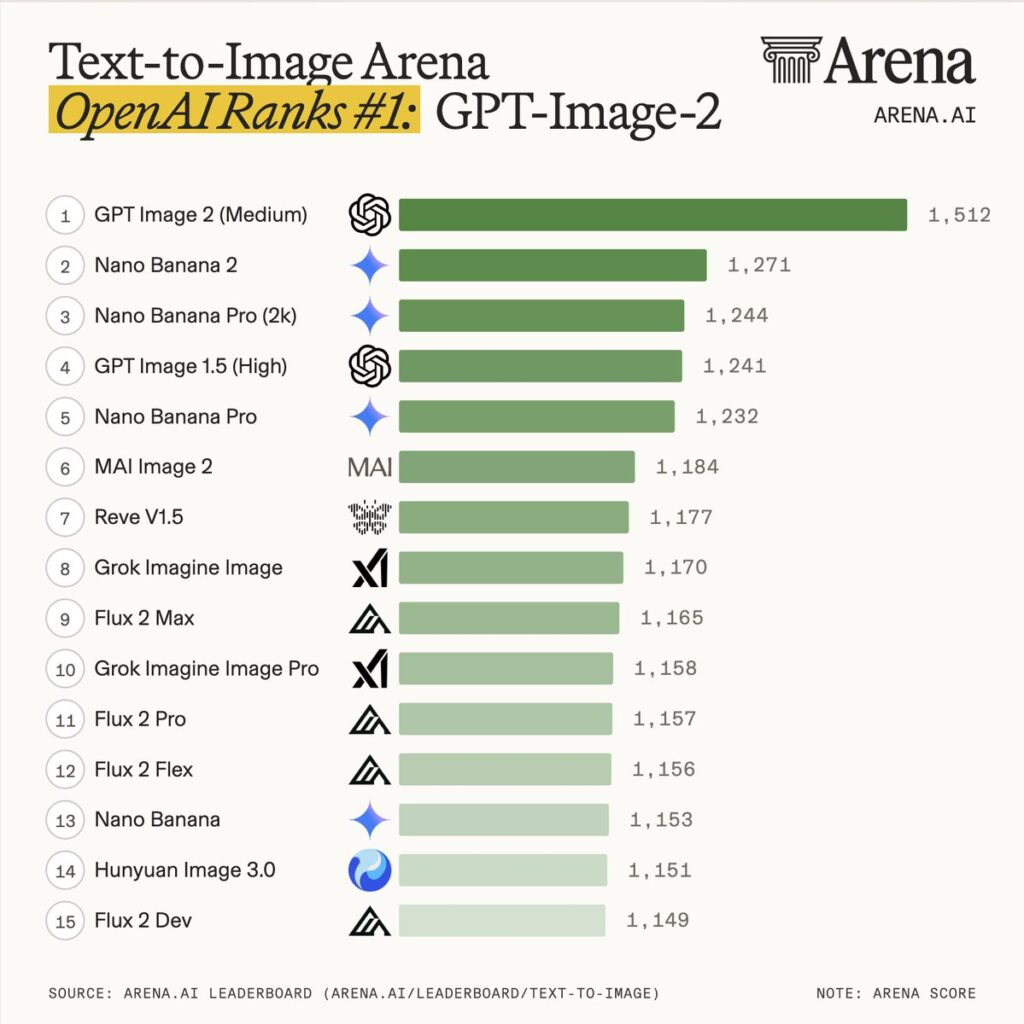

OpenAI launched ChatGPT Images 2.0 and the underlying gpt-image-2 model, and the Arena Text-to-Image Leaderboard posted numbers that look like a typo. gpt-image-2 (medium) debuted at 1512 Elo, with the nearest competitor sitting at 1270. That’s a 242-point gap between rank one and rank two — Arena itself called it “the largest gap we’ve seen to date,” and “no model has dominated Image Arena with margins this wide.”

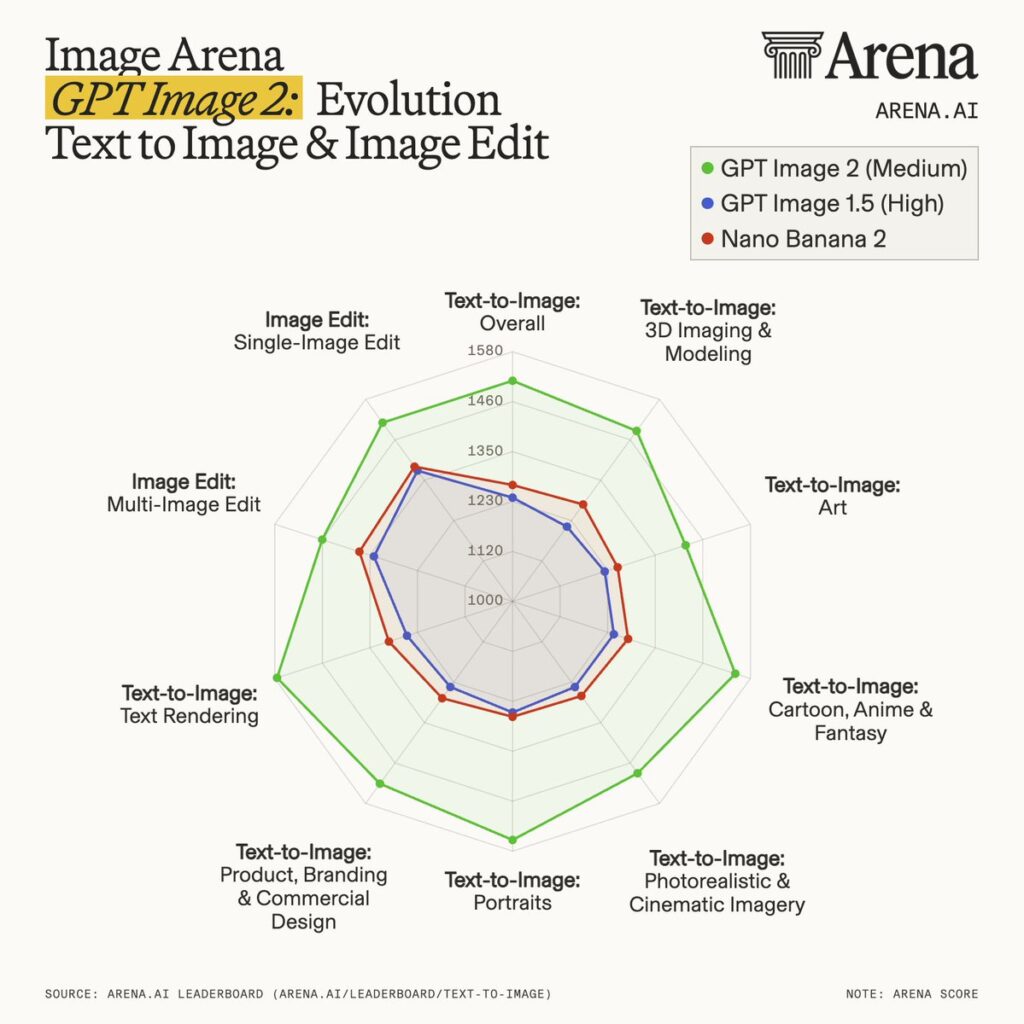

Then came the sweep. Not just #1 in Text-to-Image. #1 in Single-Image Edit. #1 in Multi-Image Edit. #1 in every one of the seven Text-to-Image sub-categories, including every weakness (text rendering, 3D, multilingual typography) that has historically tripped up autoregressive and diffusion models alike.

This is a benchmark article. So let’s stay in the numbers.

The Three Headline Arenas

Arena runs three distinct Image leaderboards. GPT-Image-2 is #1 in all three, and the size of the lead is the story:

| Arena | GPT-Image-2 Score | Lead Over #2 | #2 Model |

|---|---|---|---|

| Text-to-Image | 1512 | +242 | nano-banana-2 with web-search (gemini-3.1-flash-image-preview) |

| Single-Image Edit | 1513 | +125 | nano-banana-pro (gemini-3-pro-image-preview-2k) |

| Multi-Image Edit | 1464 | +90 | nano-banana-2 |

For context: on the live Text-to-Image board, the difference between rank #2 (Gemini 3.1 Flash Image, 1270) and rank #20 (qwen-image-2512, 1133) is 137 points. GPT-Image-2’s lead over rank #2 alone (242) is wider than the entire top-20 spread behind it.

If you’re used to reading AI benchmark tables where the top of the pack is a photo finish, it helps to recalibrate. This isn’t a photo finish. It’s one model lapping the field.

The Text-to-Image Leaderboard (April 19, 2026 Snapshot)

Here is what the top of the Arena Text-to-Image board actually looks like the day after launch:

| Rank | Model | Score | Votes |

|---|---|---|---|

| 1 | gpt-image-2 (medium) — OpenAI | 1512 ± 8 (Preliminary) | 15,127 |

| 2 | gemini-3.1-flash-image-preview (nano-banana-2, web-search) — Google | 1270 ± 5 | 51,886 |

| 3 | gemini-3-pro-image-preview-2k (nano-banana-pro) — Google | 1244 ± 4 | 90,321 |

| 4 | gpt-image-1.5-high-fidelity — OpenAI | 1241 ± 4 | 95,176 |

| 5 | gemini-3-pro-image-preview (nano-banana-pro) — Google | 1232 ± 5 | 82,636 |

| 6 | mai-image-2 — Microsoft AI | 1184 ± 5 | 32,001 |

| 7 | reve-v1.5 — Reve | 1177 ± 6 | 7,807 |

| 8 | grok-imagine-image — xAI | 1170 ± 3 | 122,850 |

| 9 | flux-2-max — Black Forest Labs | 1165 ± 4 | 93,917 |

| 10 | grok-imagine-image-pro — xAI | 1158 ± 4 | 77,922 |

Before moving on, it’s worth acknowledging the caveat Arena itself flags: the gpt-image-2 score is marked Preliminary, with a confidence interval of ±8 versus ±3–5 for its neighbors. That’s a function of vote volume — 15,127 battles at the time of snapshot, compared with 51,886 for Gemini 3.1 Flash Image and 750,440 for the standard nano-banana variant (gemini-2.5-flash-image-preview) at rank #13.

Even at the pessimistic end of its confidence band (1504), though, GPT-Image-2 is ahead of the optimistic end of rank #2’s band (1275) by 229 points. The uncertainty does not meaningfully threaten the ordering; it only debates the exact size of an already-historic lead.

The Full Category Sweep — #1 in All 7 Text-to-Image Sub-Categories

Arena breaks Text-to-Image into seven qualitative buckets. GPT-Image-2 takes the top spot in every one, beating nano-banana-2 (its closest rival) across the board. The really instructive numbers are the deltas against OpenAI’s previous SOTA, gpt-image-1.5-high-fidelity:

| Category | GPT-Image-2 Rank | Elo Gain vs. GPT-Image-1.5-High-Fidelity |

|---|---|---|

| Product, Branding & Commercial Design | #1 | +277 |

| 3D Imaging & Modeling | #1 | +274 |

| Cartoon, Anime & Fantasy | #1 | +296 |

| Photorealistic & Cinematic Imagery | #1 | +247 |

| Art | #1 | +197 |

| Portraits | #1 | +296 |

| Text Rendering | #1 | +316 |

Three observations worth pulling out:

1. Text Rendering (+316) is the biggest jump. This is the category every image model has historically lost. Diffusion-era models (SD 3.5, Midjourney v6) got eviscerated for garbled signage and fake Lorem Ipsum. GPT Image 1.5 already ran around 90–95% text accuracy, per fal.ai’s coverage. Arena’s +316 Elo swing implies the community now prefers GPT-Image-2 over GPT-Image-1.5 on text tasks in the overwhelming majority of paired matchups. Outside-of-Arena reports suggest accuracy now sits above 99%.

2. Portraits (+296) and Cartoon/Anime/Fantasy (+296) tied for second-biggest. These are stylistic and identity-consistency categories, where Midjourney and Google’s Imagen/nano-banana families traditionally held an edge. A nearly 300-point Elo jump suggests GPT-Image-2 isn’t just catching up on photorealism — it’s ahead on aesthetic control too.

3. Art (+197) is the smallest. Even the floor of the improvement curve is a full generational leap. For perspective, the gap between gpt-image-1.5-high-fidelity (1241) and gpt-image-1 (1115) — OpenAI’s previous full-generation upgrade from March 2025 to December 2025 — was 126 points. The smallest category gain in GPT-Image-2 (+197 on Art) is larger than the entire previous generational delta.

How It Compares to Every Serious Competitor

Instead of pulling marketing tables, let’s read the numbers directly off the Arena Overall board.

vs. Google’s nano-banana family (Gemini 3.1 Flash Image, Gemini 3 Pro Image)

Before GPT-Image-2, this was the family to beat. nano-banana-2 with web-search held the crown at 1270; the 2K pro variant sat at 1244. GPT-Image-2 now leads them by 242 and 268 points respectively. Google’s models remain excellent — nano-banana-2’s web-search-grounded generation was the reason 01Founder’s launch analysis spent most of its word count on “Thinking Mode” parity — but on Arena’s blind-pairwise votes, the delta is decisive.

vs. Microsoft’s mai-image-2

mai-image-2 sits at rank #6 with 1184 Elo. Gap to GPT-Image-2: 328 points. Microsoft’s model was a credible challenger in March; it is now closer in Elo to rank #23 (wan2.5-t2i-preview, 1117) than to the new #1.

vs. Black Forest Labs’ Flux 2 family

The strongest of the Flux 2 variants — flux-2-max — lands at #9 with 1165 Elo, a 347-point gap. flux-2-pro (1157), flux-2-flex (1156), and flux-2-dev (1149) fill ranks #11, #12, and #15. For open-weight workloads, Flux remains the ceiling, but frontier Flux is now competitive with 2025-era proprietary models — not 2026-era ones.

vs. xAI’s grok-imagine

Two variants, ranks #8 and #10: grok-imagine-image at 1170, grok-imagine-image-pro at 1158. A 342-point gap to the new leader. Of note: xAI has unusually high vote volume for a recent entrant (122,850 and 77,922 respectively), so the Elo is more settled than most.

vs. Bytedance Seedream

seedream-4.5 at #17 (1143), seedream-4-2k at #18 (1140), seedream-5.0-lite at #22 (1118). The Chinese labs’ image models remain in a tight cluster around 1115–1145 Elo. GPT-Image-2 leads seedream-4.5 by 369 points.

vs. OpenAI’s own DALL-E 3

DALL-E 3 is still on the board — rank #52 at 968 Elo. GPT-Image-2 leads its own ancestor by 544 points. DALL-E is scheduled to shut down May 12, 2026, and this leaderboard is essentially its eulogy.

vs. Midjourney

Midjourney is not on Arena, so we can’t put an Elo on it. But note that Arena includes nearly every other production-grade image model — Ideogram v3 (1049), Recraft v4 (1107), Runway Gen4 (1027), Leonardo’s Lucid Origin (1013), Stable Diffusion 3.5 Large (938). Midjourney’s continued absence from this kind of blind-pairwise human eval is itself a data point worth considering.

The Generational Arc of OpenAI’s Image Stack

It’s rare to be able to trace a lab’s entire product line on a single Elo axis. Arena lets us do it:

| OpenAI Model | Rank | Elo |

|---|---|---|

gpt-image-2 (medium) | #1 | 1512 |

gpt-image-1.5-high-fidelity | #4 | 1241 |

gpt-image-1 | #25 | 1115 |

gpt-image-1-mini | #28 | 1104 |

dall-e-3 | #52 | 968 |

From dall-e-3 (October 2023) to gpt-image-2 (April 2026), OpenAI’s image stack has climbed 544 Elo points — spanning what amounts to the entire quality distribution of the current market. The jump from gpt-image-1 (March 2025) to gpt-image-2 (April 2026), in just over 13 months, is 397 Elo points.

For comparison, Anthropic’s Claude family has gained roughly 60 Elo on the Text Arena between claude-opus-4-5 (1491 at rank #7 per the overview page) and claude-opus-4-7-thinking (1504 at rank #1) — a full generation of text-model iteration. Image generation, in other words, is still moving at a steeper slope than text.

What the Numbers Don’t Capture (But Are Consistent With)

Benchmarks tell you what the crowd preferred in a blind pairwise vote. They don’t explain why. Based on community testing documented by Fello AI, Latent Space’s launch roundup, and the PANews deep-dive, a few themes consistently map to the Elo pattern:

- A new standalone architecture, not an extension of GPT-4o’s image pipeline. Single-pass inference instead of the previous two-stage process, with new PNG metadata tags detected in outputs.

- Two distinct modes in product: Instant Mode for fast generation and Thinking Mode, which searches the web, plans layout, and self-checks before rendering. This correlates directly with nano-banana-2’s lower Elo — Google shipped web-search on an image model first, but Arena voters prefer GPT-Image-2’s execution.

- Near-100% text rendering accuracy, consistent with Arena’s +316 Elo jump in the Text Rendering category.

- Elimination of the yellow color cast that dogged GPT Image 1.5 — relevant especially to the +247 Elo gain in the Photorealistic & Cinematic category.

- Support for wider aspect ratios (16:9, 9:16, ultra-wide 3:1, ultra-tall 1:3), which likely drives part of the gain in Product, Branding & Commercial Design (+277).

None of these alone would produce a 242-point lead. The interaction of all of them — plus whatever OpenAI did to the base architecture — is what the Arena is measuring in aggregate.

Methodology Notes for the Skeptics

A benchmark-forward article should be honest about the benchmark. Arena’s methodology is worth understanding before you cite the 1512.

- Elo is relative. There is no absolute “image quality” scale. 1512 is only meaningful because nano-banana-2 is 1270, Flux 2 Max is 1165, and DALL-E 3 is 968 on the same board.

- Votes are human, not automated. Users submit prompts, see two blind outputs, and pick one (or tie). Each vote updates the Elo. This makes Arena biased toward whatever a self-selected population of Arena users is generating (more cartoons and portraits, fewer niche industrial use-cases), but that bias is consistent across models.

- Style Control is available. Arena offers a “Remove Style Control” version of the board that normalizes for presentation artifacts. The 1512 figure is the default (with Style Control active). The “Preliminary” flag on GPT-Image-2’s score is due to vote volume (15,127), not methodology.

- The 242-point figure is pre-stabilization. It will almost certainly shrink once vote counts cross ~100,000, because that’s what happens to every new model on Arena — the initial gap compresses as more battles accumulate. Current top models (gpt-image-1.5, nano-banana-pro) all have 80k–95k votes; their Elo won’t move much. GPT-Image-2’s will.

The honest version of the story is this: the ordering (GPT-Image-2 at #1, by a wide margin, across all three Image Arenas) is robust. The exact size of the lead (1512, +242) is still settling. That distinction matters when you write about benchmarks.

Where This Leaves the Market

The full April 19 leaderboard — 55 models, 4,894,371 total votes — reads as a cleanly stratified hierarchy:

- Tier 1 (one model):

gpt-image-2at 1512. - Tier 2 (~1230–1270): Google’s nano-banana-2 and nano-banana-pro variants, plus

gpt-image-1.5-high-fidelity. - Tier 3 (~1150–1190): Microsoft

mai-image-2, Reve, Grok Imagine, Flux 2 Max. - Tier 4 (~1100–1160): The rest of Flux 2, the Seedream family,

wan2.6-t2i,qwen-image-2512, Google’s Imagen 4,gpt-image-1,gpt-image-1-mini. - Tier 5 and below: Everything older, smaller, or open-source-first.

The tier boundaries were already tight before GPT-Image-2. What the new model does is carve out a Tier 0 that only it occupies, and push the center of gravity of the entire conversation. Every announcement for the next six months will be evaluated against 1512.

For non-OpenAI competitors, the strategic implication is steep. Google’s nano-banana-pro-2k is at 1244. To close the gap, they don’t need to ship a better image model — they need to ship a decisively better one, because a five-point Elo bump doesn’t matter when the leader is 242 points ahead. The Arena Text-to-Image Overall page will be worth watching weekly for the next quarter to see who, if anyone, responds.

Closing: Why This Benchmark Cycle Is Different

Image Arena has existed long enough to see multiple “new #1” moments — Midjourney v6 briefly, Imagen 3, Flux 1.1 Pro, Nano Banana Pro, Nano Banana 2, GPT Image 1.5. Every one of them took over by single-digit to low-double-digit Elo points. The field kept compressing at the top.

GPT-Image-2’s Arena debut ends that pattern. 1512 vs. 1270. +242 in Text-to-Image, +125 in Single-Image Edit, +90 in Multi-Image Edit. #1 in all seven Text-to-Image sub-categories, with a minimum +197 gain over the previous OpenAI SOTA. Three separate records in three separate arenas, set by one model on day one.

The Elo will soften over the coming weeks. That’s expected. But nothing in the current snapshot — and nothing in the independent qualitative testing documented so far — suggests the ordering will change. The only remaining question is how long #1 stays 242 points above #2.

Want your AI product explained to a large AI-native audience?

Kingy AI helps AI companies turn complex products into clear, useful YouTube videos that drive awareness, product understanding, demos, clicks, and search visibility.