A 2026 field guide for founders, investors, and creators navigating the bifurcation of the generative AI market.

Published on kingy.ai — April 2026

Prologue: Two Years In, and the Ground Has Shifted

It has been roughly three and a half years since ChatGPT’s public debut triggered what is arguably the most violent platform shift in modern technology history. The initial frenzy (the “Act One” of generative AI, as Sequoia Capital famously labelled it in Generative AI’s Act Two) was defined by an explosion of thin, novelty applications wrapping foundation model APIs. If you could type a prompt, pipe a response through a pretty UI, and charge $9.99/month, you had a business. For a while.

That window has closed.

As of April 2026, the market has decisively bifurcated. The easiest way to understand what happened is this: the generative AI industry is not converging toward a single dominant platform, nor is it flattening into a fragmented long-tail of thousands of equal-sized apps. Instead, it is splitting into two very different economies that happen to sit on the same substrate.

On one side, a Platform Layer — OpenAI, Anthropic, Google, Microsoft, Meta — is consolidating at a breathtaking pace, absorbing generic functionality into their core products at a rate that makes a huge class of independent applications structurally obsolete within weeks of launch. On the other side, a Specialized Application Layer is quietly emerging: vertical-specific, deeply integrated, data-rich, agentic — and, crucially, defensible.

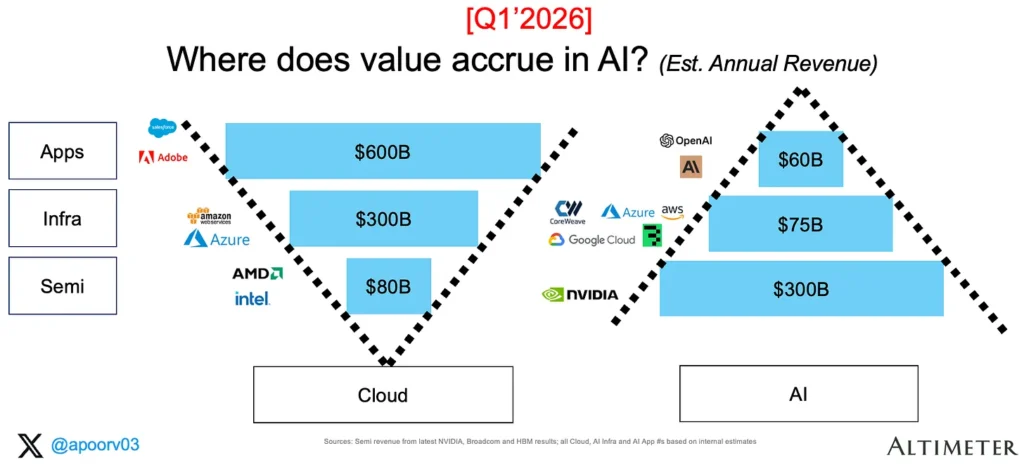

Apoorv Agrawal of Altimeter Capital captured the economics of this split better than almost anyone in his recent update, The Economics of Generative AI: Two Years Later. Two years after his original 2024 analysis, The Economics of Generative AI, the AI ecosystem has grown roughly 5x — from ~$90 billion to ~$435 billion in annualized revenue — yet the shape of the value chain has barely moved. Semiconductors still capture around 70% of revenue and 79% of gross profit. Apps capture just $60 billion in annualized revenue, despite being closest to the end customer.

For any founder who built a “chat with your PDF” app in 2023, or any investor who backed one, the implications are severe. For anyone building in the right part of the market — vertical agents, enterprise systems of action, open-weight tooling, proprietary-data products — the next ten years could be the single greatest opportunity in software history.

This guide lays out the map.

We will walk through the current value chain, the release cadence that is killing thin wrappers, the true size and trajectory of the market, the structural reasons B2C AI subscriptions churn so viciously, the three archetypes of durable AI businesses, and what all of this means both for investors and for creators thinking about who to accept sponsorships from.

It’s a long read — deliberately. If you’re going to build, invest, or endorse anything in this space over the next five years, you need to understand the physics of the ground beneath you.

Let’s get into it.

1. The Inverted Pyramid: Who Actually Captures Value in AI

To understand why thin wrappers struggle, you first need to understand where the money actually goes.

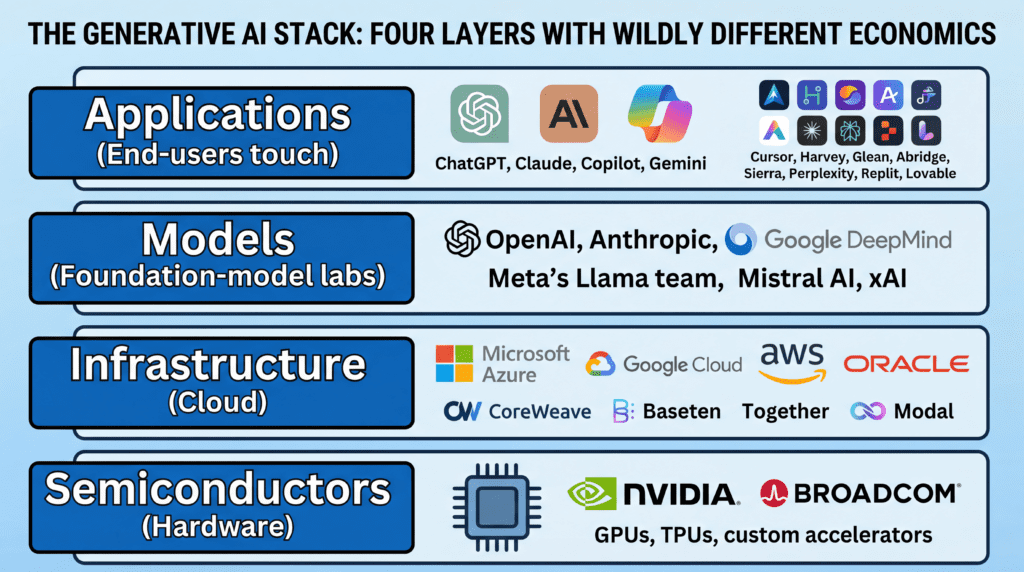

The generative AI stack consists of four layers, each with wildly different economics:

- Semiconductors (Hardware) — the GPUs, TPUs, and custom accelerators that do the computation. Dominated by NVIDIA, with Broadcom (designing custom chips for Google, Meta, ByteDance) and a handful of custom-silicon programs filling the margins.

- Infrastructure (Cloud) — the hyperscalers and AI-specific clouds: Microsoft Azure, Google Cloud, AWS, Oracle, CoreWeave, Baseten, Together, Modal.

- Models — the foundation-model labs themselves: OpenAI, Anthropic, Google DeepMind, Meta’s Llama team, Mistral AI, xAI.

- Applications — everything end-users actually touch: ChatGPT, Claude, Copilot, Gemini, plus the ecosystem of vertical tools, agents, and “AI-native” products like Cursor, Harvey, Glean, Abridge, Sierra, Perplexity, Replit, and Lovable.

In a mature cloud software market, value distribution tends to follow an intuitive shape: applications, sitting closest to the customer, capture the majority of profits (~70%), infrastructure takes a middle slice, and semiconductors earn a small fraction (~6%). That’s the “V” shape of cloud economics.

Generative AI has produced an almost exact inversion.

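According to Agrawal’s Two Years Later analysis, which counts the model labs’ revenue inside the Applications layer and so reports three layers rather than four, as of April 2026: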

- The Semi layer generates approximately $300 billion in annualized revenue and captures ~70% of all AI revenue. NVIDIA alone represents roughly 80% of that layer, with a data-center business annualizing at ~$250 billion. At gross margins of ~73%, the semi layer earns approximately $225 billion in gross profit.

- The Infrastructure layer pulls in ~$75 billion in AI-attributable revenue, with Azure, AWS, GCP and Oracle each contributing $10–$20 billion, CoreWeave adding ~$6 billion, and inference providers making up the remainder. Gross margins sit around 55%, yielding roughly $40 billion in gross profit.

- The Applications layer — despite being the most visible — generates around $60 billion in annualized revenue. OpenAI and Anthropic together represent ~$45 billion of that (~75% of the layer). Cursor, ElevenLabs, Glean, Sierra, Perplexity, Replit, Lovable, Harvey, and Abridge follow at a distance. Gross margins here are compressed to ~33% due to inference costs paid up the stack. Total gross profit: ~$20 billion.

Put bluntly: NVIDIA alone added $175 billion of incremental revenue over two years — roughly 3x the size of the entire application layer today.

This is not how technology markets usually look. It is the mirror image of cloud.
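To make the inversion concrete, here is a minimal Python sketch that recomputes each layer's share from the rounded figures quoted above. The dictionary layout and the `shares` helper are illustrative names of my own, not part of Agrawal's analysis, and the inputs are the approximate numbers from this section rather than precise data.

```python
# Annualized figures in $ billions, as quoted above (rounded, approximate).
layers = {
    "semis": {"revenue": 300, "gross_profit": 225},
    "infrastructure": {"revenue": 75, "gross_profit": 40},
    "applications": {"revenue": 60, "gross_profit": 20},
}


def shares(metric: str) -> dict[str, float]:
    """Return each layer's percentage share of the stack-wide total for `metric`."""
    total = sum(layer[metric] for layer in layers.values())
    return {
        name: round(100 * layer[metric] / total, 1)
        for name, layer in layers.items()
    }


revenue_share = shares("revenue")
profit_share = shares("gross_profit")

for name in layers:
    print(f"{name:15s} revenue {revenue_share[name]:5.1f}%  gross profit {profit_share[name]:5.1f}%")
```

On these rounded inputs, semis come out at roughly 69% of revenue and 79% of gross profit, while applications sit near 14% of revenue and 7% of gross profit.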

Will the Stack Flip?

The consensus among most serious analysts is yes — eventually. In every prior platform shift (mobile, cloud), the value chain eventually tilted toward the application layer as infrastructure commoditized. Agrawal’s own 2024 prediction was exactly that: the application layer would capture an outsized share as models and compute became cheaper.

But the honest reframe in his 2026 update is that the flip is happening more slowly than anyone expected. Over two years, infra and apps each gained about four points of profit share. Semis fell from 87% to 79%. At that pace, the AI stack would need well over a decade — likely closer to 15 years, echoing cloud’s own timeline — before apps enjoy cloud-level economics.
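As a rough sanity check on that "well over a decade" figure, the sketch below extrapolates the semi layer's profit share in a straight line. The linear pace is my simplifying assumption, not Agrawal's method, and the ~6% target is the mature-cloud benchmark cited earlier in this section.

```python
# Profit-share figures quoted above; the straight-line extrapolation is an assumption.
SEMI_SHARE_2024 = 87.0    # percent of gross profit
SEMI_SHARE_2026 = 79.0    # percent of gross profit
YEARS_ELAPSED = 2
CLOUD_SEMI_SHARE = 6.0    # semis' approximate share in the mature cloud stack

# Points of profit share the semi layer gave up per year.
points_per_year = (SEMI_SHARE_2024 - SEMI_SHARE_2026) / YEARS_ELAPSED

# Years needed to fall from today's share to the cloud benchmark at that pace.
years_to_cloud_shape = (SEMI_SHARE_2026 - CLOUD_SEMI_SHARE) / points_per_year

print(f"pace: {points_per_year:.1f} points/year")
print(f"years until cloud-like shape: {years_to_cloud_shape:.1f}")
```

A straight line from 79% down to about 6% takes roughly 18 years, which lands in the same decade-plus range as the estimate above. Real share shifts rarely move linearly, so treat this as an order-of-magnitude check only.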

The key variable is custom silicon. If Google TPUs (now on their 7th-generation Ironwood), Amazon’s Trainium3, Microsoft’s Maia 200, OpenAI’s Broadcom-designed ASICs, Meta’s MTIA, and the AMD MI450 program begin to scale meaningfully against NVIDIA’s dominance, margins will compress at the hardware layer, and profits can begin to migrate upward.

For founders and investors, the takeaway is pragmatic: the application layer is still early, still under-monetized, and still the biggest open opportunity — but the economics there are genuinely harder than they appear. You are not going to capture value by wrapping an API. You have to do something the platforms underneath you can’t.

Which brings us to the second, more urgent problem.

2. The Release Cadence That Kills Thin Wrappers

The single most underappreciated force in the AI market right now is the relentless speed at which foundation-model providers are absorbing features.

Look at the release logs of the major labs and you will see a pattern that is fatal for any business built on a thin integration layer.

OpenAI

Between September 2025 and March 2026, OpenAI rolled out GPT-5, followed by a tight sequence of incremental updates including GPT-5.3-Codex and GPT-5.4 Thinking, while simultaneously retiring GPT-4o and GPT-5.1. Every release is accompanied by expanded native capabilities (file analysis, browsing, code execution, image generation, vision, voice) that systematically collapse the value proposition of the cottage industry of wrapper apps that sprang up around each capability.

The ChatGPT Release Notes are, in a literal sense, a graveyard of startups.

The April 2026 expansion of Codex from a coding assistant into a general-purpose agent capable of “operating a computer” is particularly telling. Autonomous computer-use was, until recently, the raison d’être of a whole generation of AI orchestration startups. Now it’s a platform feature.

Anthropic

Anthropic’s release cadence through the Claude 4.x family has been equally aggressive. The Anthropic release notes document at least six major updates between May 2025 and April 2026, culminating in Claude Opus 4.7.

Anthropic has also explicitly rolled out features that used to be the domain of startups: Artifacts (an interactive workspace for code and content generation — effectively the product category that several early wrappers occupied), Managed Agents (handling orchestration and long-running tasks), and increasingly sophisticated tool use. The deprecation schedule is brutal: models are often retired less than a year after launch, forcing any company built on a specific Claude endpoint to constantly re-tool.

Google

Google’s Vertex AI release notes tell the same story from the cloud side. The RAG Engine rolled into Vertex AI makes “chat with your documents” a commoditized, two-click feature at the infrastructure level. Google’s model marketplace on Vertex is absorbing fine-tuning, enterprise deployment, and compliance tooling that third-party MLOps vendors used to sell as entire businesses.

Microsoft

Microsoft’s Copilot Studio What’s New page is arguably the most aggressive of all. Microsoft is systematically embedding RAG, agent orchestration, multi-agent workflows, Computer-Using Agents, and deep Microsoft 365 data integration directly into Copilot Studio. Any product built to do “AI + Teams” or “AI + SharePoint” or “AI + email” is competing with native features shipped by a platform that owns the distribution channel, the data, and the identity layer.

Meta and Mistral: The Open-Weight Counter-Current

On the open-weight side, Meta’s Llama 4 herd launched in 2025 as a natively multimodal generation, building on the Llama 3 family from 2024. Mistral AI’s trajectory — from Mistral Large 2 in mid-2024 to the more powerful open-weight Mistral Large 3 in late 2025 — provides a meaningful counterweight to closed-model lock-in.

This matters because open-weight models are the one structural escape valve from the platform-absorption problem. More on that shortly.

Why the Cadence Itself Is the Moat

The cadence of releases is not incidental. It is a deliberate strategic weapon. Every time a platform ships a major update, three things happen:

- Entire categories of thin-wrapper apps lose their differentiation overnight.

- Small developers are forced to re-build on new endpoints, under new pricing, with new capabilities, often within weeks.

- The cost of staying current — both in engineering time and in platform lock-in — rises.

A small team trying to build a business on top of GPT-5 in November 2025 may have found themselves, by April 2026, running on GPT-5.4 Thinking while simultaneously dealing with deprecated GPT-5.1 endpoints, new agent APIs, and a native Codex competitor launched by OpenAI itself. This is not a healthy environment for standalone consumer businesses.

It is, however, a fantastic environment if you are building on top of the platform in a way that the platform cannot easily replicate — using proprietary data, deep industry workflows, or infrastructure the platform doesn’t want to compete with.

3. How Big Is This Market, Really?

Before we go further into what works and what doesn’t, let’s calibrate with numbers.

The top-down market figures vary widely by analyst, but they all point the same direction: up, fast, and for a long time.

Market-size estimates for the global generative AI market in 2024 and 2025 range from roughly $18.6 billion to $71.4 billion, depending on whose definition you use. Research houses including Precedence Research, MarketsandMarkets, GM Insights, Grand View Research, and Mordor Intelligence all place compound annual growth rates between 25% and 43% through the early 2030s.

Long-term forecasts get dramatic:

- Precedence Research projects the market to reach ~$1.2 trillion by 2035.

- MarketsandMarkets and Mordor Intelligence forecast values ranging from ~$126 billion (by 2031) to ~$890 billion (by 2032) depending on methodology.

- Software — including SaaS platforms and end-user applications — is consistently identified as the single largest component, capturing over 60% of total market share.

North America currently dominates, holding over 40% of revenue, driven by concentrated venture capital, mature enterprise adoption, and the presence of the major labs. Asia Pacific is the fastest-growing region, with China, India, South Korea, and Japan all accelerating government-supported AI initiatives.

For our purposes, the precise number matters less than the structural implications:

- The application layer, while small today relative to hardware, is projected to be the largest segment of a multi-hundred-billion (or trillion) dollar market within the decade.

- Enterprise adoption, not consumer, drives the bulk of projected growth.

- The pie is big enough that even if concentration at the platform level is extreme, a vibrant specialized ecosystem is not just possible but economically necessary.

The question is where in this enormous expansion value actually gets captured.

And here the evidence is clear: it is not being captured by thin B2C wrappers.

4. The Economic Physics of B2C AI Wrappers

Let’s talk about why the “wrap an API, build a subscription app” business model has proven so economically fragile.

In classical SaaS, marginal cost is effectively zero. Once you’ve built your software, each additional user costs you almost nothing to serve. This is what makes software a magic business: gross margins of 75–85%, operating leverage, and scale that compounds.

AI changes this.

Every prompt a user sends in an AI app generates a direct, variable cost. Tokens are metered and billed. Frontier models like GPT-5.4, Claude Opus 4.7, and Gemini 2.5 Ultra are not cheap to query at scale. The result is that high engagement — normally a positive signal of product-market fit — becomes a direct profitability threat unless the business model is tightly designed around it.
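Here is a minimal sketch of that dynamic for a single subscriber. Everything except the $9.99 price point (borrowed from the prologue) is a hypothetical input: the token count per prompt and the blended price per million tokens are placeholders, not quotes from any provider's price list.

```python
def monthly_gross_margin(
    price: float,
    prompts: int,
    tokens_per_prompt: int,
    usd_per_million_tokens: float,
) -> float:
    """Gross margin for one subscriber, counting only metered inference cost."""
    inference_cost = prompts * tokens_per_prompt * usd_per_million_tokens / 1_000_000
    return (price - inference_cost) / price


# Hypothetical usage profiles on a $9.99/month plan.
light_user = monthly_gross_margin(9.99, prompts=100, tokens_per_prompt=2_000, usd_per_million_tokens=10.0)
heavy_user = monthly_gross_margin(9.99, prompts=1_000, tokens_per_prompt=2_000, usd_per_million_tokens=10.0)

print(f"light user margin: {light_user:.0%}")
print(f"heavy user margin: {heavy_user:.0%}")
```

Under these assumed inputs the light user still leaves a SaaS-like margin, while the heavy user costs about twice the subscription price to serve. The most engaged customer is the one losing you money.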

Baytech Consulting, in a widely-circulated March 2026 piece titled Why Generic AI Startups Are Dead: The Executive Playbook to Building a Structural AI Moat, documents this dynamic in brutal detail. Their analysis draws on Bessemer Venture Partners’ State of AI 2025 benchmarks and multiple 2025 VC surveys. The bottom line:

- Applications adding a chat interface to an existing LLM are now receiving immediate passes from top-tier venture firms.

- VCs are demanding LTV:CAC ratios of 3:1 or higher, combined with a credible path to controlling inference costs via techniques like model routing, prompt compression, and semantic caching.

- The barrier to cloning a generic AI app has collapsed toward zero thanks to “vibe coding” — natural-language software development where competitors can reproduce your product in days.

A traditional B2B SaaS company growing at a healthy 75% YoY from $20M ARR to $35M ARR, a business that in 2019 would have been a hot Series B, is now, per Baytech’s framing, “virtually unfundable by top-tier venture funds if it lacks a core AI architectural advantage.”

That is a remarkable shift in just a few years.
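To see how the 3:1 LTV:CAC bar interacts with inference-cost control, here is a small sketch. It uses a common simplification (lifetime value as monthly contribution divided by monthly churn) and models routing as nothing more than a share of requests sent to a cheaper model. All prices, volumes, and churn figures are hypothetical, and the function names are my own.

```python
def blended_cost_per_request(cheap_cost: float, frontier_cost: float, share_to_cheap: float) -> float:
    """Average cost per request when `share_to_cheap` of traffic goes to the cheaper model."""
    return share_to_cheap * cheap_cost + (1 - share_to_cheap) * frontier_cost


def ltv_to_cac(arpu: float, cost_to_serve: float, monthly_churn: float, cac: float) -> float:
    """LTV:CAC using the simplification LTV = monthly contribution / monthly churn."""
    ltv = (arpu - cost_to_serve) / monthly_churn
    return ltv / cac


# Hypothetical inputs for one subscriber.
ARPU = 20.0                # monthly revenue per user, USD
REQUESTS_PER_MONTH = 500
FRONTIER_COST = 0.03       # USD per request on a frontier model
CHEAP_COST = 0.003         # USD per request on a smaller model
MONTHLY_CHURN = 0.08
CAC = 60.0

# Baseline: every request goes to the frontier model.
baseline_ratio = ltv_to_cac(ARPU, REQUESTS_PER_MONTH * FRONTIER_COST, MONTHLY_CHURN, CAC)

# Routed: 80% of requests are handled by the cheaper model.
routed_cost = REQUESTS_PER_MONTH * blended_cost_per_request(CHEAP_COST, FRONTIER_COST, 0.8)
routed_ratio = ltv_to_cac(ARPU, routed_cost, MONTHLY_CHURN, CAC)

print(f"baseline LTV:CAC {baseline_ratio:.2f}")
print(f"routed   LTV:CAC {routed_ratio:.2f}")
```

With these made-up numbers, the same product sits near 1:1 when every request hits the frontier model and clears 3:1 once most traffic is routed to the cheaper one. That gap is why investors ask about the inference-cost plan, not just the growth chart.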

The Churn Problem

The problem is not just margin compression on the cost side. It’s also retention on the revenue side.

Subscription-analytics sources including RevenueCat, Adapty, and Elena Verna’s subscription benchmarks have been tracking the performance of AI apps against non-AI apps across 2025. The pattern is consistent:

- AI apps show approximately 70% higher install-to-LTV than average mobile apps initially — suggesting strong novelty-driven acquisition.

- But AI apps lose approximately 20% more of their annual and trial subscribers by the end of year one than other categories.

- Across all subscription apps, roughly 25% of customers churn after their first term; for annual mobile subscribers, that figure jumps to as high as 70% at first renewal.

- Involuntary churn from payment failures accounts for 18–32% of all subscriber loss.

For a general-purpose AI chat app, these numbers are lethal. Users are intrigued, pay once, explore the novelty, and then realize that ChatGPT — or Copilot, or Claude, or Gemini — already does 90% of what the wrapper offers, often for free, and is updated weekly with new features. Retention collapses.

The apps that retain are the ones that do something distinct enough, or embedded enough, that the platforms genuinely can’t replicate.

Where the Money Is Actually Going

While B2C wrapper apps struggle, capital has been flowing in clearly visible directions:

- AI infrastructure pivots: CoreWeave and Applied Digital transitioning from crypto mining to AI data center capacity — both experiencing major stock price re-ratings.

- Public AI leaders: NVIDIA, Palantir, Broadcom, and other AI-infrastructure plays have materially outperformed broader indices since early 2024.

- M&A consolidation: Global M&A reached ~$2.6 trillion in 2025, with AI as a primary driver. Apple acquired 30+ startups in 2023–2024. Google acquired Wiz (security) for $32 billion. Salesforce acquired Informatica for $8 billion. Microsoft’s $650 million “acqui-hire” of Inflection AI absorbed talent, not product.

Crucially, none of these major acquisitions targeted standalone consumer AI wrapper apps. Big tech is buying infrastructure, data, security, enterprise workflows, and — most of all — people. Successful B2C AI startup exits at venture-scale are conspicuously absent.

This is the clearest possible signal about where durable value lives.

5. The Three Durable Moats

If thin wrappers are dying, what is actually fundable — and, more importantly, durable?

Based on the last two years of VC behavior, M&A patterns, and the evolving platform dynamics, three archetypes have emerged. Each represents a genuinely defensible position in the post-wrapper AI economy.

Moat 1 — Vertical Specialization

The first durable archetype is the vertical AI company: a product deeply embedded in a specific industry, solving domain-specific problems with domain-specific data, workflows, and compliance requirements.

Examples that have reached real scale:

- Harvey (legal) — embedded into Am Law 100 firms with workflows tied to discovery, contract analysis, and litigation prep.

- Abridge (healthcare) — medical documentation and clinician workflow.

- Glean (enterprise search) — company-specific knowledge graphs integrated with Slack, Gmail, Salesforce, Notion, and every proprietary internal tool.

- Hippocratic AI, OpenEvidence, Turquoise Health (healthcare verticals).

- Sierra (customer experience).

- Eve Legal, Ironclad (legal ops).

What makes these companies defensible is not the model — any of them could swap the underlying LLM tomorrow — but the proprietary dataset, workflow integration, and regulatory/compliance positioning that surrounds it.

Sonya Huang, partner at Sequoia, has been among the most vocal proponents of this thesis. In her AI Ascent 2025 keynote, she argued that general-purpose foundation models would become commoditized, but the application layer would explode, with vertical agents representing “Act Three” of generative AI. Sequoia has reportedly deployed roughly an order of magnitude more capital into application-layer companies than into foundation models themselves.

The key pattern: horizontal foundation models become smarter, but vertical applications build a compounding moat as user interactions generate proprietary feedback that horizontal models can never access.

A doctor correcting an AI-generated note inside a healthcare app doesn’t make ChatGPT smarter. It makes Abridge smarter — in ways that ChatGPT structurally cannot replicate without the distribution, compliance, and customer trust Abridge has spent years building.

Moat 2 — Complex Enterprise Agents (Systems of Action)

The second archetype is perhaps the most interesting: agents that don’t just inform, they act.

For the past decade, enterprise SaaS was dominated by “Systems of Record” — Salesforce, Workday, NetSuite — software whose primary job was to store and organize information. Humans clicked through interfaces to perform actions.

Agentic AI is forcing a paradigm shift toward “Systems of Action.” Instead of a dashboard, you get an agent that ingests real-time data, triggers operations, and completes multi-step business processes end-to-end. It doesn’t just tell your sales team what to do — it updates the CRM, sends the follow-up emails, schedules the calls, runs the contract generation, and escalates anomalies.

Companies pushing into this territory include:

- Sierra (autonomous customer service).

- Decagon (agentic support).

- Cognition (Devin) and Cursor (agentic coding).

- Replit Agent.

- Lovable (agentic software development).

- Enterprise-focused players building agent orchestration for specific verticals.

The defensibility here comes from three sources:

- Integration depth: Connecting to internal ERP, CRM, data warehouses, email, security infrastructure, and bespoke line-of-business tooling is hard. It takes 18–24 months of enterprise sales and implementation work to replicate, and platform providers are unlikely to make that investment evenly across every vertical.

- Reliability engineering: Making agents actually work on production-critical tasks requires sophisticated evaluation, guardrails, observability, retry logic, human-in-the-loop escalation, and compliance auditing. This is a deep engineering problem that takes years to solve per industry.

- Switching costs: Once an agent is embedded into daily operations — running procurement, handling Tier 1 support, automating expense reports — ripping it out is a major operational decision. Switching costs start to approach CRM-replacement levels.

Microsoft, Google, and OpenAI are all building agent frameworks. But the frameworks are not the business. The business is the specific agent configured for your company’s specific operations. That’s where independent developers — not platforms — have a structural edge, because platforms cannot economically custom-build for every vertical.
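The reliability-engineering point above is easier to picture with a toy example. The sketch below wraps a single agent action in retry logic, an output guardrail, and a human-in-the-loop escalation flag. The names (`run_with_guardrails`, `AgentResult`, `flaky_refund_action`) are hypothetical, and a production system would add logging, backoff, and audit trails on top of this.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class AgentResult:
    output: Optional[str]   # validated output, or None if the task was escalated
    attempts: int           # how many times the action was tried
    escalated: bool         # True when a human needs to take over


def run_with_guardrails(
    action: Callable[[], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
) -> AgentResult:
    """Retry `action` until its output passes `is_valid`, else escalate to a human."""
    for attempt in range(1, max_attempts + 1):
        try:
            output = action()
        except Exception:
            continue  # treat any failure as transient and retry
        if is_valid(output):
            return AgentResult(output, attempt, escalated=False)
    return AgentResult(None, max_attempts, escalated=True)


# Simulated action that times out twice before succeeding.
calls = {"n": 0}


def flaky_refund_action() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("simulated upstream timeout")
    return "refund:42.00"


def looks_like_refund(output: str) -> bool:
    return output.startswith("refund:")


recovered = run_with_guardrails(flaky_refund_action, looks_like_refund)
handed_off = run_with_guardrails(lambda: "garbage", looks_like_refund)

print(recovered)
print(handed_off)
```

The first call recovers on its third attempt. The second never produces valid output, so it is flagged for a human instead of silently acting on bad data. Multiply this pattern across every action in every workflow and you get a sense of why reliability is a moat rather than a weekend project.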

Moat 3 — The Open-Weight Ecosystem

The third archetype is the one most underappreciated by the mainstream tech press: the open-weight alternative.

Meta’s Llama 4 release and Mistral’s increasingly powerful open-weight models have created a genuinely viable path for companies that cannot — or will not — rely on closed platforms. The reasons for choosing open-weight models are many:

- Data privacy: Healthcare, finance, legal, government, and defense customers often cannot send sensitive data to third-party APIs.

- Sovereignty: EU customers facing AI Act compliance, and non-US governments concerned about American platform lock-in.

- Cost control: Self-hosted inference at scale can be dramatically cheaper than paying per-token to OpenAI or Anthropic, especially for high-volume use cases.

- Customization: Fine-tuning on proprietary data is easier, cheaper, and more flexible on open weights.

- Durability: Your product doesn’t break when a platform deprecates an API.

This creates a whole ecosystem of companies serving this segment:

- MLOps platforms: Anyscale, Modal, Baseten, Together AI, Fireworks AI, Ollama.

- Fine-tuning and customization shops: Specialized AI consultancies building custom stacks for specific industries.

- Model hosting and inference providers optimized for open-weight deployment.

- Evaluation, observability, and guardrails platforms: Companies like Langfuse, Arize, Weights & Biases, and LangChain providing the tooling for self-hosted production AI.

The open-weight ecosystem is not a fringe movement. It is increasingly the default path for serious enterprise AI — particularly in regulated industries and outside of North America. Any durable view of the AI application layer has to account for the fact that a huge percentage of real production workloads will, within five years, be running on open-weight models hosted on customer-owned or private cloud infrastructure.

For founders and investors, this opens an entire adjacent category of businesses that are not “AI apps” in the traditional sense, but are critical infrastructure for the open-weight world.
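The cost-control argument for open weights comes down to a break-even calculation. Here is a minimal sketch with entirely hypothetical prices: a fixed monthly hosting bill, a per-token API price, and a marginal self-hosted cost per token. It ignores engineering salaries and utilization, which can dominate in practice.

```python
def breakeven_tokens_per_month(
    fixed_hosting_cost: float,
    api_usd_per_million: float,
    self_hosted_usd_per_million: float,
) -> float:
    """Monthly token volume above which self-hosting is cheaper than a metered API."""
    saving_per_million = api_usd_per_million - self_hosted_usd_per_million
    if saving_per_million <= 0:
        raise ValueError("self-hosting never breaks even if its marginal cost is not lower")
    return fixed_hosting_cost / saving_per_million * 1_000_000


# Hypothetical: $20k/month of GPU capacity, $10 per million tokens via API,
# $1 per million tokens marginal cost when self-hosted.
breakeven = breakeven_tokens_per_month(20_000, 10.0, 1.0)
print(f"break-even volume: {breakeven / 1e9:.2f} billion tokens/month")
```

Under these assumed prices the crossover sits at a little over 2 billion tokens a month. Below that volume the metered API is cheaper; above it, every additional token widens the gap in favor of self-hosting.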

6. Implications for Investors: The Death of Hype-Driven Capital

The implications of the market’s bifurcation for venture investors are stark.

The “invest in anything with AI in the pitch deck” era of 2023–2024 has ended. In its place, a much more disciplined, structural approach is emerging — one that looks more like classical venture capital, where moats, unit economics, and durability matter more than surface-level growth metrics.

The red flags for 2026-era AI investing are clear:

- Pure thin wrappers: Products that are one API away from the platform absorbing them.

- High burn with inference-driven cost structures and no clear path to controlling marginal cost.

- B2C consumer apps with no proprietary data, no network effects, and no integration depth.

- Growth driven by novelty rather than workflow embedding — high initial install numbers, weak Day 30 retention.

- Founders who don’t have a clear answer to: “What happens if OpenAI ships your feature next month?”

The green flags are equally clear:

- Workflow ownership: Products embedded deeply enough into a customer’s operations to be a “System of Action,” not just a “System of Record.”

- Proprietary data flywheels: Every user interaction generates unique training data that compounds into a smarter product.

- Vertical specialization: Deep industry expertise, regulatory positioning, and domain-specific data that horizontal platforms can’t replicate.

- Open-weight infrastructure: MLOps, inference, fine-tuning, evaluation, and deployment tools serving the rapidly growing self-hosted ecosystem.

- Picks and shovels: Semiconductors, specialized cloud, data management, security, and observability.

Agrawal’s analysis is blunt about where the easy money has been: “The most profitable company in AI is still the one selling the shovels.” NVIDIA, Broadcom, Applied Materials, ASML, Micron, TSMC, and the hyperscalers have been the cleanest plays for investors, full stop.

But — and this is the nuance — that window is closing too. Valuations in the infrastructure stack are extended. OpenAI’s March 2025 round at a $300 billion valuation priced at ~67x trailing revenue. NVIDIA’s multiple, while more defensible, is priced for perfection. At these levels, the marginal risk-adjusted return starts to favor the specialized application layer, where the absolute dollars are smaller but the valuations are more reasonable and the moat potential is larger.

The investor’s 2026 playbook, in one sentence: stop paying for access to growth, start paying for access to defensibility.

7. Implications for YouTube Creators: Sponsorship Strategy in the Wrapper Era

This section is for the creators, because it deserves its own treatment.

If you run a YouTube channel — particularly one focused on tech, productivity, personal knowledge management, or tools — you have probably been flooded with sponsorship offers from B2C AI companies over the last 18 months. The AI sponsorship market has exploded, and the underlying economics are a mix of genuine opportunity and real reputational risk.

The Opportunity

A few well-documented industry trends favor creators right now:

- The creator economy is projected to reach ~$500 billion by 2027, driven by significant growth in sponsored content.

- YouTube saw a ~54% YoY increase in sponsored video volume in the first half of 2025.

- ~70% of brands in 2025 surveys report preferring to work with creators with fewer than 100,000 followers, citing higher engagement rates, trust, and ROI.

- YouTube Shorts sponsorships have become a mainstream revenue channel, with rates commonly between $100 and $500 per video.

- YouTube’s own Creator Partnerships program is using AI to match creators with brands.

If you have a loyal niche audience — even a small one — the AI sponsorship money is real, and the barrier to entry is lower than it has been in years.

The Risk

But here is the catch: many of the companies offering sponsorship money are exactly the kind of thin-wrapper businesses that are most likely to fail, pivot, or shut down in the next 12–24 months.

The same churn and retention data that makes these apps a bad investment makes them a problematic endorsement target. If a creator wholeheartedly endorses an AI tool that:

- Is primarily a reskinned version of something ChatGPT/Claude/Copilot already does for free,

- Loses roughly 20% more of its annual and trial subscribers in year one than apps in other categories,

- And is one OpenAI release away from obsolescence,

then within 6–12 months of the video going up, viewers may find the product degraded, buggy, or gone entirely. That erodes audience trust — which, for a creator, is by far the most valuable asset.

A Practical Framework for Evaluating AI Sponsors

For creators reading this, here is a practical framework I use and would recommend:

1. Test the product for at least 30 days before accepting the deal.

Does it do something meaningfully different from what the major free platforms offer? Is it stable? Is the UX good? Does it solve a real problem for your audience?

2. Understand the company’s moat.

Is it a vertical-specific tool with real data or workflow moats (good)? Or is it a thin chat interface over GPT-5 (risky)? Ask the company: “What happens to your product if OpenAI ships a native version of this?” If they don’t have a good answer, you shouldn’t either.

3. Favor companies with real distribution or pre-AI revenue.

A company that had a profitable business before bolting AI on top is structurally healthier than an AI-first startup dependent on venture capital. Integrations with Notion, Slack, Google Workspace, or Microsoft 365 are positive signals.

4. Prefer long-term partnerships over one-offs.

Multi-video, multi-month sponsorship deals force both sides to care about actual product quality. A single-video cash grab from a volatile startup is bad for your channel in the long run.

5. Consider diversifying across the three durable archetypes.

Sponsorships from vertical AI (legal, healthcare, creative), agent platforms (coding agents, workflow automation), and open-weight tooling (self-hosted AI, MLOps) tend to be more defensible — and therefore more reputation-safe — than generic consumer wrappers.

6. Say no more often.

Turning down $2,000 sponsorship deals from shaky wrapper startups is a better business decision than taking them. The channels that will thrive over the next five years are the ones that become known as trusted curators of durable tools — not billboards for whatever AI startup happened to pay this week.

8. The Likely Market Outcome: Partial Concentration, Specialized Abundance

Stepping back from all of this, what is the most probable shape of the AI market over the next five years?

Based on everything discussed above, the most defensible forecast is partial concentration with specialized abundance:

- The foundation model layer will consolidate to a handful of players. OpenAI and Anthropic are already ~75% of the app layer by revenue per Agrawal’s numbers. Google, Microsoft (via OpenAI partnership + first-party), Meta (Llama), and Mistral will round out the core. A long tail of specialized and regional labs will persist, but the majority of frontier capability will live with perhaps five to seven companies.

- The infrastructure layer will remain competitively distributed across AWS, Azure, GCP, Oracle, and specialized AI clouds like CoreWeave. Custom silicon will gradually compress NVIDIA’s share of gross profit, though NVIDIA will remain dominant through the end of the decade.

- The application layer will not consolidate in the same way. Instead, it will stratify. Horizontal generalist AI (ChatGPT, Copilot, Gemini, Claude) will be largely owned by the platform providers. Vertical, agentic, and open-weight applications will support a vibrant ecosystem of hundreds of durable companies — the “thousands of SaaS apps” pattern from the cloud era, repeated at the AI layer.

- Thin wrappers will continue to exist at the consumer level, but as short-lived novelty plays rather than durable businesses. They will be the musical-chairs sector of the AI economy, with individual winners rotating every few months.

This outcome is, crucially, not a winner-take-all scenario at the top of the stack. It is a platform oligopoly feeding a specialized application ecosystem.

For anyone trying to place bets — building a company, deploying capital, endorsing products, or making career choices — this is the map.

9. What to Build, Back, or Endorse Right Now

Let me close with the most actionable version of everything above.

If you are building:

- Pick a specific vertical. Not “AI for enterprises” — AI for orthopedic surgery scheduling. The narrower, the better.

- Build around proprietary data and embedded workflows, not around a specific model. Assume every frontier model endpoint you use today will be deprecated within 12 months.

- Design for model agnosticism. Use abstractions that let you swap between OpenAI, Anthropic, Llama, Mistral, and any future entrant without rewriting your product.

- Make your product agentic where it makes sense, but invest heavily in reliability, evaluation, and human-in-the-loop fallbacks. Unreliable agents are a path to churn.

- Take unit economics seriously from day one. If your inference costs are eating your gross margin, you do not have a business — you have a burn rate.

- Consider the open-weight path, especially if you are building for regulated industries or outside the US.
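
To make the model-agnosticism and unit-economics points above concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `ChatModel` interface, the `StubModel` stand-in (it makes no network calls and wraps no real vendor SDK), the `ModelRouter` fallback order, and the per-token prices are hypothetical rather than taken from any provider. The idea is simply that the product codes against one small interface, so a deprecated endpoint means replacing an adapter instead of rewriting the product, and the same interface lets you compare gross margin across backends.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical interface the product depends on, instead of a vendor SDK."""

    name: str
    usd_per_1k_tokens: float

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubModel:
    """Stand-in for a real vendor adapter; a real one would call the vendor API."""

    name: str
    usd_per_1k_tokens: float  # illustrative price, not a real quote

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class ModelRouter:
    """Tries providers in order, so one failing endpoint is not fatal."""

    def __init__(self, models: list[ChatModel]):
        if not models:
            raise ValueError("at least one model is required")
        self.models = models

    def complete(self, prompt: str) -> str:
        errors = []
        for model in self.models:
            try:
                return model.complete(prompt)
            except Exception as exc:  # fall through to the next provider
                errors.append(f"{model.name}: {exc}")
        raise RuntimeError("all providers failed: " + "; ".join(errors))


def gross_margin(price_usd: float, tokens_per_month: float,
                 usd_per_1k_tokens: float) -> float:
    """Gross margin per subscriber, counting only inference as cost of revenue."""
    inference_cost = tokens_per_month / 1000 * usd_per_1k_tokens
    return (price_usd - inference_cost) / price_usd


if __name__ == "__main__":
    backends = [StubModel("provider-a", 0.01), StubModel("provider-b", 0.002)]
    router = ModelRouter(backends)
    print(router.complete("Summarize this intake form."))
    for backend in backends:
        margin = gross_margin(20.0, 1_000_000, backend.usd_per_1k_tokens)
        print(f"{backend.name}: gross margin {margin:.0%}")
```

With these made-up numbers, a $20/month subscriber who consumes a million tokens leaves half the revenue as gross margin on the pricier backend and most of it on the cheaper one. A heavy user on an even more expensive model can push the margin below zero, which is the "burn rate, not a business" case described above.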

If you are investing:

- Avoid B2C thin wrappers. The probability of a venture-scale outcome is low, and the probability of a zero is high.

- Look for workflow ownership, proprietary data flywheels, and integration depth. Assume anything generic will be absorbed by platforms within 12–24 months.

- Stay exposed to picks and shovels — semis, specialized compute, MLOps, observability, security — but be mindful of valuations.

- Invest in the open-weight ecosystem. This is the most underrated pocket of opportunity in the entire AI stack right now.

- Back founders who have a clear, honest answer to “why can’t the platforms do this?” Be skeptical of founders who wave that question away.

If you are creating content / considering sponsorships:

- Treat your audience’s trust as your most valuable long-term asset.

- Test every AI sponsor’s product thoroughly before endorsing.

- Favor verticals, agents, and open-weight tools over horizontal consumer wrappers.

- Pursue multi-video, long-term partnerships with companies that have real moats.

- Say no to the generic wrapper cash. A lot.

If you are a consumer or professional just trying to use this stuff:

- Default to the frontier platforms (ChatGPT, Claude, Gemini, Copilot) for general tasks. They are usually cheaper, faster, and updated more often than the wrappers built on top of them.

- Use specialized AI only when it solves a problem the generalists don’t. If you can’t articulate the specific workflow benefit, you don’t need the subscription.

- Beware of annual subscriptions from small AI startups. Startup attrition works against you: the product you love today may not exist in a year, and a prepaid annual plan leaves you holding that risk.

Conclusion: A More Honest Kind of Optimism

The narrative about AI has swung between two extremes over the past three years. In 2023 and early 2024, every AI startup was going to be a unicorn. In late 2024 and 2025, the discourse flipped toward bubble talk, concerns about capex overspending, and skepticism about application-layer economics.

The truth, as of April 2026, is more nuanced — and, honestly, more interesting.

The generative AI market is not a bubble about to pop. It is also not a rising tide lifting all boats. It is a real, structural, multi-trillion-dollar shift that is reallocating value in uneven and occasionally counterintuitive ways. The platform layer is consolidating faster than most people realized. The application layer is simultaneously dying at the thin-wrapper bottom and flourishing at the vertical-agentic top. The open-weight ecosystem is a genuine escape valve from platform lock-in that is creating entire new categories of durable businesses.

For founders, the window for “slap an API on a UI and charge $19/month” is closed. But the window for deeply specialized, workflow-embedded, data-rich AI companies is wide open, and will remain so for at least the next decade.

For investors, hype-driven capital is out; defensibility-driven capital is in. The returns in the next cycle will accrue to those who understand the difference between growth and durability.

For creators, the opportunity to monetize is real — but so is the risk of attaching your reputation to businesses that won’t exist in 18 months. Curate ruthlessly.

And for everyone trying to make sense of where this is going: the key insight is that AI is simultaneously the most consolidating and the most proliferating technology shift we’ve seen. Power is concentrating at the platform layer. Opportunity is proliferating at the specialized application layer. Both things are true at the same time. Both dynamics will intensify.

The wrappers are dying. The specialists are just getting started. Build accordingly.
