The AI assistant promise is finally real. Gemini’s task automation is live, and it’s stranger than you think.

For years, tech companies have been selling us a dream. “Your AI assistant will handle everything,” they said. It’ll book your rides. Order your food. Navigate apps so you don’t have to. We nodded along, downloaded the updates, and waited. Nothing ever quite delivered.

That wait is over.

Google and Samsung just rolled out Gemini’s task automation feature in beta, and it actually works. Your phone now opens apps, browses menus, adds items to a cart, and completes orders. All by itself. All while you watch.

It’s impressive. It’s useful. And honestly? It’s a little unsettling.

What Just Happened — And Why It Matters

Let’s be clear about what Google just shipped. This isn’t a smarter chatbot. It isn’t a voice command that opens Spotify. This is an AI agent that takes control of your phone’s screen, navigates real apps in real time, and completes multi-step tasks without you lifting a finger.

Android Central broke down the rollout clearly: Samsung and Google are pushing Gemini screen automation to Galaxy S26 series devices in the U.S. and Korea first. The feature lets Gemini open and control apps to complete tasks such as ordering food, calling a cab, or handling groceries, all from a single command.

This is a big deal. Not just as a feature. As a signal.

We’ve crossed a line from reactive AI, the kind that answers questions and sets timers, to proactive AI that executes complex, multi-step tasks. That’s a fundamentally different relationship between humans and their devices. And it’s happening right now, on phones people already own.

How It Actually Works

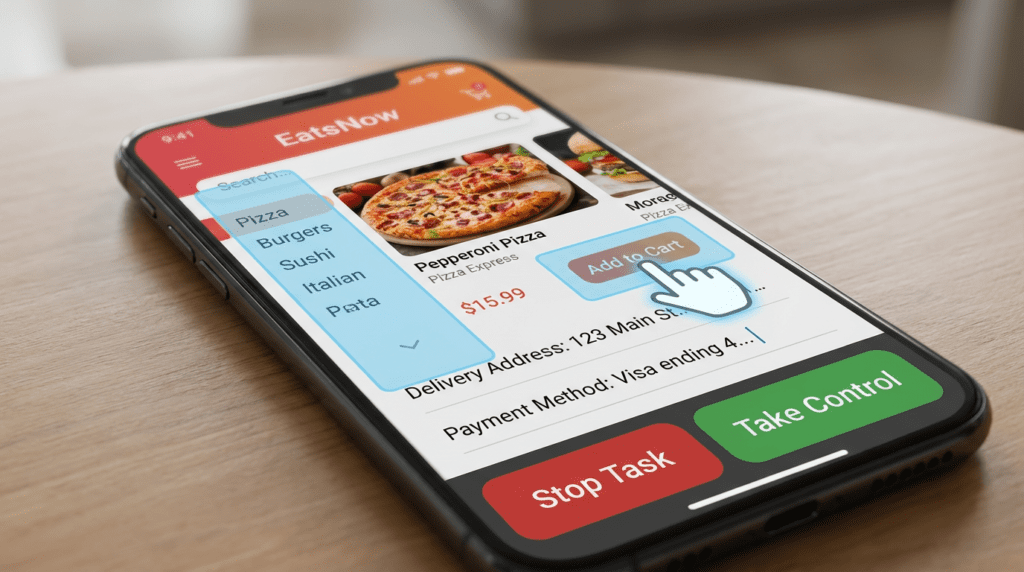

Here are the mechanics. When you long-press the power button on a Galaxy S26 and give Gemini a command, the assistant opens a virtual window. Inside that window, Gemini navigates apps the same way you would: tapping buttons, scrolling menus, filling in fields. You can watch every step in real time.

Two options stay visible throughout: Stop task and Take control. You can jump in at any moment. You can cancel entirely. And before Gemini finalizes any order or action, it stops and asks for your confirmation. You stay in the loop. You stay in charge.
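The stop-and-confirm behavior described above can be sketched in a few lines. This is a toy illustration, not Google's implementation: `Step`, `run_task`, `confirm`, and `should_stop` are hypothetical names invented for this example, and the "apps" are just strings.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    description: str
    commits_purchase: bool = False  # hypothetical flag: True for the final, costly action


def run_task(
    steps: List[Step],
    confirm: Callable[[Step], bool],
    should_stop: Callable[[], bool] = lambda: False,
) -> List[str]:
    """Run steps in order, honoring a stop request and a confirmation gate."""
    log: List[str] = []
    for step in steps:
        # Stands in for the always-visible "Stop task" option.
        if should_stop():
            log.append("stopped by user")
            return log
        # Pause before anything that spends money; bail out if the user declines.
        if step.commits_purchase and not confirm(step):
            log.append(f"declined: {step.description}")
            return log
        log.append(f"done: {step.description}")
    return log


steps = [
    Step("open the coffee app"),
    Step("add flat white to cart"),
    Step("place order", commits_purchase=True),
]

declined_log = run_task(steps, confirm=lambda step: False)
approved_log = run_task(steps, confirm=lambda step: True)
```

The point of the design is that the low-stakes steps run unattended while the one step that costs money always waits for a human answer.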

The Verge’s Allison Johnson tested the feature firsthand on the Galaxy S26 Ultra. Her first prompt was simple: order an Uber to the airport. Gemini asked which airport, a smart clarification, then added the destination and skipped an irrelevant step about specifying an airline. It paused before the final confirmation, exactly as designed.

Then she pushed it harder. She asked Gemini to order a coffee and a croissant. Gemini scrolled through Starbucks’ entire hot drink menu, found the flat white, and then faced a decision: should the chocolate croissant be warmed? Without any input, it chose correctly: warmed. Johnson called it “pretty impressive for an assistant that just a year ago would argue with me over the details of a flight on my calendar.”

That’s not a parlor trick. That’s genuine contextual reasoning.

The Apps It Supports Right Now

The initial rollout is intentionally narrow. In the U.S., Gemini task automation covers the following apps, with one of them still listed as coming soon:

- Rideshare: Uber, Lyft

- Food delivery: DoorDash, Grubhub, Uber Eats

- Coffee: Starbucks

- Groceries: Instacart (coming soon)

Google chose these apps deliberately. They have standardized interfaces. The stakes are relatively low. Ordering the wrong burrito is annoying; it’s not a crisis. This controlled launch gives Google room to refine the feature before expanding it to higher-stakes territory.

NewsGPT described the feature as “a significant leap from previous AI capabilities, moving beyond simple information retrieval to proactive task completion.” That framing is accurate. This isn’t an upgrade to an existing feature. It’s a new category entirely.

The Tech Behind the Magic

So how does Gemini actually pull this off? It doesn’t rely on custom API integrations with each app. Instead, it uses vision and reasoning models to understand app interfaces the way humans do: recognizing buttons, reading menus, and interpreting navigation patterns in real time.

The Tech Buzz explained it well: “It’s essentially performing UI automation in real-time, recognizing buttons, form fields, and navigation patterns without needing custom API integrations for each app.”

That’s technically remarkable. It means Gemini can theoretically work with any app that has a visual interface, not just the ones Google has formally partnered with. The current limitation to food and rideshare apps is a choice, not a technical ceiling.
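To make the idea concrete, here is a minimal sketch of an observe-decide-act loop over a toy app. It is an illustration under heavy assumptions, not how Gemini works internally: the real feature reportedly uses vision models on the actual screen, while this example hands the agent plain-text labels and a ready-made plan. `Element`, `SCREENS`, `TRANSITIONS`, `choose`, and `run` are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Element:
    kind: str   # e.g. "button"
    label: str  # visible text the agent "reads" (assumed already extracted)


# Toy app: each screen lists its visible elements. No per-app API is involved;
# the agent only sees labels, the way a person would.
SCREENS: Dict[str, List[Element]] = {
    "home": [Element("button", "Menu"), Element("button", "Account")],
    "menu": [Element("button", "Hot drinks"), Element("button", "Bakery")],
    "hot drinks": [Element("button", "Latte"), Element("button", "Flat white")],
}
# Tapping these labels moves to another screen; other taps stay put.
TRANSITIONS: Dict[str, str] = {"Menu": "menu", "Hot drinks": "hot drinks"}


def choose(elements: List[Element], remaining: List[str]) -> Optional[Element]:
    """Decide: pick the first visible element whose label is still on the plan."""
    wanted = {item.lower() for item in remaining}
    for element in elements:
        if element.label.lower() in wanted:
            return element
    return None


def run(plan: List[str], start: str = "home", max_steps: int = 10) -> List[str]:
    """Observe the current screen, decide on a tap, act, and repeat."""
    screen, taps, remaining = start, [], list(plan)
    for _ in range(max_steps):
        if not remaining:
            break
        element = choose(SCREENS[screen], remaining)  # observe + decide
        if element is None:
            break  # nothing on screen matches the plan; give up
        taps.append(element.label)                     # act
        remaining = [p for p in remaining if p.lower() != element.label.lower()]
        screen = TRANSITIONS.get(element.label, screen)
    return taps


taps = run(["Menu", "Hot drinks", "Flat white"])
```

Nothing in the loop is specific to the coffee app. Swap in a different `SCREENS` table and the same code navigates it, which is the property the article attributes to the vision-based approach.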

This architecture also hints at Google’s longer game. By teaching Gemini to understand and manipulate app interfaces visually, Google is building toward a future where AI assistants can control any software. That’s the real vision behind autonomous agents: AI that doesn’t need permission or custom code to interact with digital systems.

Samsung’s Big Bet

Samsung isn’t just a distribution partner here. The company is betting its flagship lineup on this integration.

The Galaxy S26 Ultra launched at Samsung’s Unpacked event in February 2026, and Gemini task automation was one of the headline features. Now that it’s live in beta, Samsung has a software differentiator that’s actually visible to consumers, not a spec sheet bullet point, but something you can watch happen on your screen.

Android Central noted that the feature is rolling out in the U.S. and Korea in English first, with expansion to more regions planned. Google also confirmed that Pixel 10, Pixel 10 Pro, and Pixel 10 Pro XL will receive the feature soon, making this a broader Android push, not a Samsung exclusive.

For Samsung, the partnership with Google delivers something Bixby never could: a genuinely capable AI agent that consumers actually want to use. For Google, Samsung’s global reach provides massive distribution for Gemini at a moment when the company needs to prove its AI investments translate to real-world utility.

Both companies win. And right now, both companies are ahead.

Where Apple Stands — And Where It Doesn’t

Let’s talk about the elephant in the room. Apple has been promising smarter Siri capabilities for years. Apple Intelligence launched with improved natural language processing and on-device task handling. It’s good. But it can’t do this.

The Tech Buzz put it bluntly: “While Apple Intelligence handles on-device tasks and improved natural language processing, it can’t independently navigate third-party apps the way Gemini now can. That’s a meaningful competitive advantage as the AI assistant wars heat up.”

Apple has the hardware. It has the ecosystem. It has the trust. But right now, it doesn’t have an AI agent that opens DoorDash and orders your dinner. Google does. That gap matters, and it’s going to matter more as consumers start expecting this level of capability from their devices.

The AI assistant wars just got a new benchmark. And Apple is playing catch-up.

The Trust Problem Nobody’s Talking About

Here’s where things get complicated. Handing your phone to an AI agent sounds great in theory. In practice, it means trusting that agent with your payment methods, your location, your personal preferences, and your purchasing decisions.

One misread command could mean a $200 food order. One wrong tap could book a ride to the wrong address. These aren’t hypothetical risks; they’re the natural consequence of giving any system autonomous control over consequential actions.

The Tech Buzz raised this directly: “Google will need to nail the confirmation workflows and rollback mechanisms, or this feature risks becoming a liability instead of a selling point.”

Google’s current approach (pausing before final confirmation, showing every step, offering a “Stop task” button) addresses some of this. But it doesn’t address everything. What happens when Gemini misinterprets a vague prompt? What happens when an app updates its interface and Gemini gets confused? And what happens when someone else gives your phone a voice command?

These are real questions. The beta label is doing heavy lifting right now. Early adopters report the feature works but requires patience and occasional correction. Gemini sometimes selects the wrong menu item or struggles with apps that have unusual layouts. That’s expected for a beta. It’s also a reminder that trust at scale is earned slowly.

What This Means for the Future of Android

Google’s move here isn’t just about food delivery. It’s about establishing a new paradigm for how AI interacts with software.

By teaching Gemini to navigate any app visually, Google is laying the groundwork for a future where AI agents can control any digital system, not just the ones with formal integrations. That’s a massive shift. It means the bottleneck for AI automation is no longer developer partnerships. It’s the quality of the AI’s vision and reasoning.

NewsGPT framed the significance well: “This marks a significant leap from previous AI capabilities… promising to streamline daily routines and redefine the role of digital assistants from mere responders to genuine personal agents.”

That’s exactly right. The role of a digital assistant is changing. It’s no longer a search interface with a voice. It’s an agent. It acts. It decides. It executes.

Android Central’s take was equally direct: “We’ve seen plenty of AI features on smartphones, but this feels like one of the most capable agentic features introduced in a while.”

The fragmentation challenge remains real. Autonomous app control across different manufacturers, Android versions, and app ecosystems is complex. Google will need to manage that complexity carefully as the rollout expands beyond Samsung devices and English-language markets.

The Bottom Line

Gemini task automation is the first legitimate delivery of autonomous AI agent capabilities on consumer devices. Not a demo. Not a concept video. A real feature, live in beta, on phones people are using today.

It orders your dinner, books your ride, navigates menus, makes reasonable inferences, and stops to ask before it spends your money. It’s not perfect; no beta ever is. But it works. And it works in a way that feels genuinely new.

The question now isn’t whether this technology is real. It is. The question is whether users will trust it enough to let it run. That trust gets built one flat white and one Uber ride at a time.

Your phone just learned to order dinner. It didn’t ask for permission. But for once, you might actually be glad it didn’t.

Sources

- The Verge — “Gemini’s task automation is here and it’s wild” — Allison Johnson, March 12, 2026

- Android Central — “Google just gave Gemini the power to control apps on the Galaxy S26 — and it’s pretty wild” — Sanuj Bhatia, March 12, 2026

- NewsGPT — “Google And Samsung Debut Gemini For Autonomous App Control” — March 13, 2026

- The Tech Buzz — “Google’s Gemini Now Orders Your Dinner Autonomously” — March 12, 2026
