Apple just rolled out a new system to label AI-generated music. It sounds promising. But there’s a catch, and it’s a big one.

What Apple Just Announced

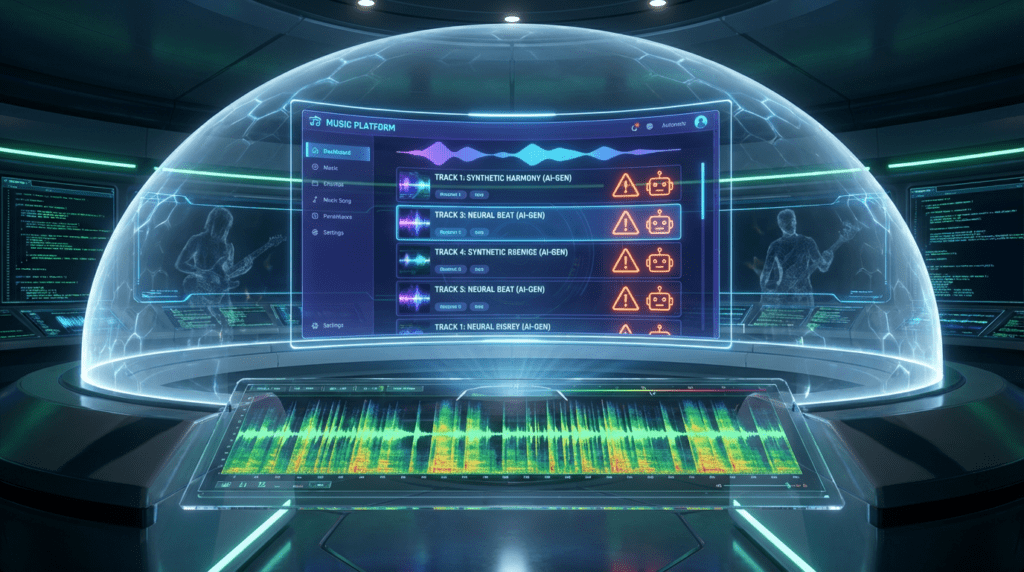

On March 4, 2026, Apple Music quietly dropped a bombshell on the music industry. The company sent a newsletter to its industry partners announcing the launch of “Transparency Tags,” a new metadata system designed to flag AI-generated content on the platform. According to 9to5Mac, the tags will be required “when delivering new content to Apple Music in the future.”

The move signals that Apple is paying attention. AI-generated music is flooding streaming platforms at an unprecedented rate. The industry is scrambling to respond. And Apple, one of the biggest players in music streaming, is finally putting its foot down, sort of.

The new framework lets labels and distributors tag content across four categories: Artwork, Track, Composition, and Music Video. Each tag applies when AI generates “a material portion” of that element. Multiple tags can be applied to the same piece of content simultaneously.

This is a concrete move. It’s also, as we’ll see, a deeply flawed one.

Breaking Down the Four Tags

Let’s get specific about what each tag actually means.

The Track tag applies when AI generates a material portion of a sound recording. This is the most obvious one: the actual music you hear. The Composition tag goes deeper. It covers AI-generated lyrics and other compositional elements. So if a human recorded the song but an AI wrote the words, the Composition tag kicks in.

The Artwork tag operates at the album level. It flags when AI generates static or motion graphic artwork, such as album covers and animated visuals. Finally, the Music Video tag covers any AI-generated visual content, whether it’s bundled with an album or delivered as a standalone video.
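The four categories can be modelled as a small data structure. The sketch below is illustrative only: the class, field, and function names are hypothetical and do not reflect Apple’s actual delivery specification, which the coverage does not reproduce. It shows how a single release can carry several tags at once.

```python
from dataclasses import dataclass
from enum import Enum


class TransparencyTag(Enum):
    """The four categories named in Apple's announcement."""
    ARTWORK = "Artwork"
    TRACK = "Track"
    COMPOSITION = "Composition"
    MUSIC_VIDEO = "Music Video"


@dataclass(frozen=True)
class Release:
    """Hypothetical record of which elements AI materially generated."""
    title: str
    ai_recording: bool = False    # sound recording -> Track
    ai_composition: bool = False  # lyrics or other compositional elements
    ai_artwork: bool = False      # static or motion album artwork
    ai_video: bool = False        # bundled or standalone music video


def tags_for(release: Release) -> set:
    """Return every tag that applies; a release can carry several."""
    flags = {
        TransparencyTag.TRACK: release.ai_recording,
        TransparencyTag.COMPOSITION: release.ai_composition,
        TransparencyTag.ARTWORK: release.ai_artwork,
        TransparencyTag.MUSIC_VIDEO: release.ai_video,
    }
    return {tag for tag, applies in flags.items() if applies}


# A human-recorded song with AI-written lyrics and an AI-made cover.
example = Release("Example Song", ai_composition=True, ai_artwork=True)
print(sorted(tag.value for tag in tags_for(example)))
```

In this example the recording itself is human-made, so the Track tag does not apply, while Composition and Artwork both do.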

As Apple explained in its partner newsletter, reported by Music Business Worldwide, “Proper tagging of content is the first step in giving the music industry the data and tools needed to develop thoughtful policies around AI.” Apple added that labels and distributors “must take an active role in reporting when the content they deliver is created using AI.”

That sounds reasonable. But here’s where things get complicated.

The Enforcement Problem

Apple leaves the definition of “AI-generated content” entirely up to its partners. The Verge noted that Apple says determining what qualifies as AI-generated music and visuals will be left to the discretion of content providers, “similar to genres, credits, and other metadata.” And critically, if a label omits the tags entirely, Apple assumes no AI was used.

Read that again. No tag means no AI. That’s the honor system.
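In code terms, the reported default behaves like an optional field that falls back to “no AI.” Here is a minimal sketch, assuming a hypothetical `declared_tags` field (the name is an illustration, not taken from Apple’s specification):

```python
from typing import Optional


def assumed_ai_use(declared_tags: Optional[list]) -> list:
    """Model the reported default: omitted tags are read as 'no AI'.

    `declared_tags` is a hypothetical field name. None means the
    label or distributor left the tags out of the delivery.
    """
    if declared_tags is None:
        return []  # omitted, so no AI use is assumed
    return list(declared_tags)


# An honest partner declares AI use; a dishonest one simply omits the tags.
honest_ai_upload = assumed_ai_use(["Track", "Composition"])
undeclared_ai_upload = assumed_ai_use(None)
human_upload = assumed_ai_use(None)

# Nothing in the metadata separates the undeclared upload from the human one.
print(undeclared_ai_upload == human_upload)
```

Under this model, an undeclared AI track and a genuinely human track are indistinguishable, which is exactly the gap the honor system leaves open.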

There’s no enforcement mechanism. No cross-verification process. No automated detection running in the background to catch bad actors. Apple is asking the music industry to police itself. And the music industry, as we’re about to see, has a serious problem with bad actors.

Digital Trends put it bluntly: “Apple is asking people uploading fraudulent content to label it honestly.” That’s not a policy. That’s a wish.

The Fraud Crisis Nobody Is Talking About Enough

Here’s the context that makes Apple’s announcement feel even more inadequate. Weeks before Apple’s announcement, Deezer released data that should have sent shockwaves through the entire streaming industry.

The Paris-based platform now receives more than 60,000 fully AI-generated tracks every single day. That’s up from just 10,000 when Deezer first launched its detection tool in January 2025, 30,000 in September 2025, and 50,000 in November. Synthetic content now accounts for roughly 39% of all music delivered to the platform daily. Since early 2025, Deezer has detected and tagged over 13.4 million AI-generated tracks in total.
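A quick back-of-the-envelope check puts those figures in proportion. It uses only the numbers quoted above; the implied total is a rough estimate rather than a reported figure, since the inputs are themselves rounded (“more than 60,000,” “roughly 39%”). The variable names are illustrative.

```python
# Figures quoted from Deezer's data.
ai_tracks_per_day_jan_2025 = 10_000
ai_tracks_per_day_now = 60_000
ai_share_of_daily_deliveries = 0.39

# Growth since the detection tool launched.
growth_factor = ai_tracks_per_day_now / ai_tracks_per_day_jan_2025

# Implied total daily deliveries (an estimate, not a reported figure).
implied_total_per_day = ai_tracks_per_day_now / ai_share_of_daily_deliveries

print(f"AI uploads grew {growth_factor:.0f}x")
print(f"Implied total deliveries: about {implied_total_per_day:,.0f} tracks per day")
```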

Those numbers are staggering. But the fraud data is even more alarming.

Deezer found that up to 85% of all streams on AI-generated music in 2025 were fraudulent, up from 70% the year before. Those streams get demonetized and removed from the royalty pool. For context, streaming fraud across Deezer’s entire catalog was just 8% last year. AI music fraud is operating at more than ten times the rate of general streaming fraud.
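The “more than ten times” comparison follows directly from the two percentages. A short check, using the upper-bound figure of 85% (variable names are illustrative):

```python
# Percentages quoted from Deezer's data.
ai_fraud_rate_pct = 85       # upper bound: "up to 85%" of streams on AI music
catalog_fraud_rate_pct = 8   # fraud share across the whole catalog

ratio = ai_fraud_rate_pct / catalog_fraud_rate_pct
print(f"AI music fraud rate is about {ratio:.1f}x the catalog-wide rate")
```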

“We know that the majority of AI-music is uploaded to Deezer with the purpose of committing fraud, and we continue to take action,” said Deezer CEO Alexis Lanternier.

The scheme is simple and devastating. Generate tracks by the thousands. Run bots to stream them. Every fake stream pulls money out of the royalty pool, money that should go to human artists. As Digital Trends explains, this is the environment Apple is stepping into with its voluntary tagging system.

How Apple’s Approach Compares to the Competition

Apple isn’t the only streaming platform wrestling with this problem. But its approach stands out, and not in a good way.

Deezer built its own AI detection infrastructure. It identifies AI-generated content through technical analysis, not self-reporting. The platform doesn’t wait for labels to confess. It catches the content independently. Deezer has since moved to license its detection technology to the wider industry, with French collecting society Sacem among the first partners to trial the tool. The company claims its system can identify 100% of AI-generated music from generative models including Suno and Udio.

Qobuz introduced its own proprietary AI detection system just last week. Deezer made its AI music detection tool available to other platforms in January. These platforms are building active defenses.

Apple is building a suggestion box.

Spotify sits somewhere in the middle. PCMag reports that Spotify announced a similar feature for labels, distributors, and music partners back in September 2025. Spotify is also working on industry tagging standards with DDEX, a music standards-setting organization. Notably, senior Apple Music exec Nick Williamson sits on the DDEX board, which means Apple is at least aware of the broader industry conversation. But like Apple’s, Spotify’s detection infrastructure still lags behind Deezer’s. Both platforms depend heavily on what labels choose to disclose.

The Decoder highlights the broader industry context: AI music startup Suno recently hit $300 million in annual revenue but faces a legal battle with the music industry. Universal Music struck a deal with Udio, focusing on licensed AI partnerships. And Google recently integrated its music generator Lyria 3 directly into the Gemini app, making AI music creation accessible to millions of everyday users. The floodgates are open.

Why the Industry Is Moving Now

The timing of Apple’s announcement isn’t random. The music industry is under enormous pressure. AI-generated content is growing exponentially. Fraud is spiking. Human artists are getting squeezed out of royalty pools by bots streaming synthetic tracks.

The Verge notes that Apple Music’s tagging system follows other efforts from competing music streaming providers to protect authentic artists from spam and impersonation, and to make AI-generated music easier for users to identify. The industry is moving because it has to.

There’s also a legal dimension. AI-generated content remains ineligible for copyright in the United States. PCMag points out that this creates a murky landscape where AI tracks can flood platforms without the same protections, or accountability, that human-created works carry.

Apple’s Transparency Tags are, at minimum, an acknowledgment that the problem is real. The company calls the new tags “a concrete first step toward the transparency necessary for the industry to establish best practices and policies that work for everyone.” That framing is important. Apple isn’t claiming this solves the problem. It’s claiming this starts a conversation.

Will Labels Actually Use the Tags?

That’s the million-dollar question. Or, given the scale of AI music fraud, the billion-dollar question.

The Verge’s Jess Weatherbed is skeptical: “Honesty policies for other AI labelling solutions haven’t worked out so far. Given the lack of enforcement surrounding Apple Music’s tagging system, I’m struggling to see why creators and record labels would be motivated to actually use it.”

She has a point. The labels and distributors most likely to use the tags honestly are the ones already committed to transparency. The bad actors, the ones flooding platforms with fraudulent AI tracks, have zero incentive to self-report. They’re committing fraud. Asking them to label their fraud is not a deterrent.

Music Business Worldwide notes that Apple’s technical specification describes the tags as optional for now, adding that if they are omitted, no AI use is assumed. Apple says the tags will eventually become mandatory for new content deliveries. But “eventually” is doing a lot of heavy lifting in that sentence. Until enforcement arrives, the system relies entirely on goodwill.

What Needs to Happen Next

Apple’s Transparency Tags are a start. They’re not a solution.

The music industry needs automated detection, not just voluntary disclosure. Deezer has shown it can work. The company says its system catches 100% of AI-generated tracks from major generative models. That technology exists. It’s being licensed. Other platforms need to adopt it.

Apple needs to move faster. The tags are optional today. They need to become mandatory tomorrow, not eventually. And “mandatory” needs to mean something. There must be consequences for non-compliance. Without enforcement, the system is theater.

Listeners deserve to know what they’re hearing. Human artists deserve to be protected from fraud. The royalty pool needs to be defended from bots. None of that happens with a suggestion box.

Apple took a step. It’s a small one. The music industry needs a giant leap.

Sources

- The Verge — Apple Music adds optional labels for AI songs and visuals

- The Decoder — Apple puts AI disclosure responsibility on labels and distributors

- Digital Trends — Apple Music will put custom tags for AI songs and visuals, but it’s not enough

- PCMag — Apple Music Will Reportedly Require AI Disclosures on Songs

- Music Business Worldwide — Apple Music launches AI transparency tags — but only if labels and distributors declare them

- 9to5Mac — Apple Music introduces metadata tags to disclose AI-generated content