Anthropic’s latest release turns Claude Code into a genuinely autonomous software engineer that keeps working while you sleep.

There’s a quiet assumption baked into most AI coding tools: that you, the human, need to be present. You open the terminal, you type the prompt, you watch the output scroll by. The AI is a supercharged autocomplete. A tireless pair programmer. But still, ultimately, a tool that waits for you.

Claude Code just broke that assumption wide open.

On April 14, 2026, Anthropic announced routines in Claude Code — currently in research preview — and the implications for how software teams work are worth sitting with for a moment. Routines are not a minor quality-of-life feature. They represent a genuine architectural shift in what an AI coding assistant can be: not just something you talk to, but something you deploy.

What Exactly Is a Routine?

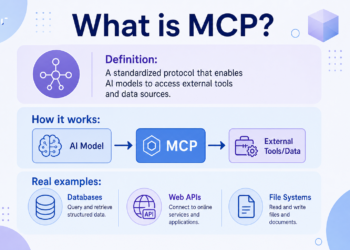

At its core, a routine is a saved Claude Code configuration. You define a prompt, point it at one or more GitHub repositories, attach whatever MCP connectors you need — Slack, Linear, Google Drive, and more — and then tell Claude when or how to run it.

That “when or how” is where it gets interesting. Routines support three distinct trigger types:

- Scheduled — runs on a recurring cadence: hourly, nightly, weekdays, or weekly

- API — triggers on demand via an HTTP POST to a per-routine endpoint with a bearer token

- GitHub — kicks off automatically in response to repository events like pull requests, pushes, or issues

You can mix and match. A single routine can run on a schedule, accept API calls, and also react to GitHub events. Whatever combination your workflow demands.

What makes all of this genuinely new is where routines execute. They run on Anthropic-managed cloud infrastructure — not on your local machine. Your laptop doesn’t need to be open. Your terminal doesn’t need a session alive. You configure it once, and it goes.

The Problem Routines Actually Solve

To understand why this matters, you need to understand the gap it’s closing.

Before routines, developers who wanted to automate Claude Code workflows were essentially on their own. You could use /schedule in the CLI to schedule tasks, but execution depended on your machine being available. If you wanted anything more sophisticated — triggered automations, API-accessible agents, GitHub-reactive workflows — you were wiring together cron jobs, MCP servers, and custom infrastructure yourself.

That’s not nothing. Plenty of power users had already built impressive automations this way. But it created real friction: every team that wanted Claude-powered automation was reinventing the plumbing.

Routines collapse that entire stack into a single configuration form. The infrastructure is Anthropic’s problem. Your problem is writing a good prompt.

It’s also worth noting for anyone who’s been using /schedule in the CLI: those tasks are now routines, and there’s nothing to migrate. They’ll show up in your routines dashboard at claude.ai/code/routines automatically.

Scheduled Routines: The Overnight Shift

The simplest trigger type, and arguably the most powerful for teams dealing with the relentless grind of software maintenance, is the scheduled routine.

The premise is disarmingly simple: give Claude a prompt and a cadence, and it handles execution. Want Claude to pull the top open bug from Linear every night at 2am, attempt a fix, and open a draft PR for review the next morning? That’s one prompt, one schedule, configured once. What used to require a dedicated developer, a cron job, and a weekend of infrastructure work is now a form submission.

Anthropic’s documentation lays out some of the patterns that early users have already landed on. Backlog management is a natural fit: a nightly routine that reads newly opened issues, applies labels, assigns owners based on which area of the codebase the issue touches, and posts a morning summary to Slack so the team starts the day with a groomed queue instead of an inbox. Documentation drift is another: a weekly scan of merged PRs to flag any documentation that references APIs that have since changed, automatically opening PRs against the docs repo for an editor to review.

The common thread is unattended, repeatable work with a clear outcome — exactly the kind of task that falls through the cracks in every engineering team, not because it’s hard, but because no one has time to do it consistently.

Schedules are entered in your local timezone and converted automatically, so you don’t have to think about UTC offsets. For anything beyond the preset frequencies, the CLI’s /schedule update command accepts custom cron expressions, down to a minimum interval of one hour.
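If you generate cron expressions from a script, a quick local sanity check can catch schedules that would fire more than once an hour before you submit them. The sketch below is a deliberately conservative guess at such a check, not Anthropic’s validation logic: `runs_at_most_hourly` is a hypothetical helper that accepts a five-field expression only when its minute field is a single fixed number.

```bash
# Hypothetical local pre-check; NOT how Anthropic validates schedules.
# Succeeds when the minute field of a 5-field cron expression is one fixed
# number (0-59), which guarantees the schedule fires at most once per hour.
runs_at_most_hourly() (
  set -f                      # disable globbing so "*" fields stay literal
  set -- $1                   # split the expression into its fields
  [ "$#" -eq 5 ] || exit 1    # expect minute, hour, day, month, weekday
  case "$1" in
    ''|*[!0-9]*) exit 1 ;;    # rejects "*", "*/15", "0,30", "0-59"
  esac
  [ "$1" -le 59 ]
)
```

With this helper, `runs_at_most_hourly "0 2 * * 1-5"` succeeds (2:00 on weekdays), while `runs_at_most_hourly "*/30 * * * *"` fails because that schedule would fire twice an hour.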

API Routines: Claude as an Endpoint

The second trigger type is perhaps the most conceptually interesting, because it flips the usual model on its head.

Normally, you send messages to Claude. An API routine gives Claude its own endpoint. You send an HTTP POST — from your monitoring tool, your deploy pipeline, your internal dashboard, anywhere you can make an authenticated request — and Claude wakes up, runs your configured prompt against the provided context, and starts working.

Each routine gets a unique URL and a bearer token. The token is shown only once, so copy it and store it securely on your end. It is scoped to triggering that one routine, and you can rotate or revoke it at any time from the routine’s edit form. The full API reference is available in the Claude Platform documentation.

Here’s what a trigger looks like in practice:

```bash
curl -X POST https://api.anthropic.com/v1/claude_code/routines/trig_01ABCDEFGHJKLMNOPQRSTUVW/fire \
  -H "Authorization: Bearer sk-ant-oat01-xxxxx" \
  -H "anthropic-beta: experimental-cc-routine-2026-04-01" \
  -H "anthropic-version: 2023-06-01" \
  -H "Content-Type: application/json" \
  -d '{"text": "Sentry alert SEN-4521 fired in prod. Stack trace attached."}'
```

That optional text field is key. It gets appended to the routine’s configured prompt as a one-shot user turn, so you can pass context — an alert payload, a failing log, a deploy identifier — directly into the session. The response comes back with a session ID and a URL where you can watch the run in real time.
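Real alert payloads tend to contain quotes and newlines, which makes hand-written JSON in a `-d` string fragile. One way to handle that, sketched here under a few assumptions, is to let `jq` build the body. The function names and the `ROUTINE_URL` and `ROUTINE_TOKEN` environment variables are illustrative and not part of the product, and the sketch assumes `jq` and `curl` are installed.

```bash
# Sketch only. ROUTINE_URL and ROUTINE_TOKEN are assumed environment
# variables holding the per-routine /fire URL and its bearer token.

# Build the request body with jq so quotes and newlines in the context
# string are escaped correctly.
build_fire_body() {
  jq -cn --arg text "$1" '{text: $text}'
}

# POST the body to the routine endpoint and print the raw JSON response.
# --fail makes curl exit non-zero on HTTP errors so callers can react.
fire_routine() {
  build_fire_body "$1" | curl -sS --fail -X POST "$ROUTINE_URL" \
    -H "Authorization: Bearer $ROUTINE_TOKEN" \
    -H "anthropic-beta: experimental-cc-routine-2026-04-01" \
    -H "anthropic-version: 2023-06-01" \
    -H "Content-Type: application/json" \
    --data-binary @-
}
```

Calling `fire_routine "Sentry alert SEN-4521 fired in prod."` would then print whatever the endpoint returns. Keeping the token in an environment variable rather than in the script also keeps it out of version control.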

The use cases here are where things get genuinely exciting. Consider alert triage: your monitoring tool (say, Datadog or PagerDuty) calls the routine’s endpoint when an error threshold is crossed, passing the alert body as the text payload. The routine pulls the stack trace, correlates it with recent commits in the repository, and opens a draft PR with a proposed fix before the on-call engineer even opens the page. The engineer reviews a proposed fix rather than starting from a blank terminal at 3am.

Or consider deploy verification: your CD pipeline posts to the routine’s API endpoint after each production deploy. The routine runs smoke checks against the new build, scans error logs for regressions, and posts a go/no-go to the release channel before the deploy window closes. This is the kind of post-deploy gate that every team knows they should have and almost none consistently do.

The /fire endpoint ships under a dated beta header (experimental-cc-routine-2026-04-01), which is Anthropic’s way of signaling that the API surface may evolve during the research preview. Breaking changes will arrive behind a new dated header, and the two most recent previous versions continue to work to give callers time to migrate.

GitHub Routines: Reactive Automation at Scale

The third trigger type — and the one that will likely get the most attention from teams with active codebases — is the GitHub trigger.

You subscribe a routine to a GitHub repository event, and Claude creates a new session for every matching event and runs your configured prompt. This isn’t polling. It’s webhook-driven: events arrive in real time, and Claude responds accordingly.

The list of supported events is comprehensive. Pull request lifecycle events (opened, closed, synchronized, labeled), PR reviews and review comments, pushes, releases, issues, issue comments, sub-issues, commit comments, discussions, check runs, check suites, merge queue events, workflow runs, workflow jobs, workflow dispatches, and repository dispatch events — essentially the full surface area of GitHub’s webhook system.

For pull request triggers, you can add filters to narrow which events actually start a session. Filter on author, title text, body text, base branch, head branch, labels, draft status, merge status, or whether the PR comes from a fork. All filter conditions must match for the routine to fire.

This creates some elegant possibilities. An auth module review routine might filter on base branch main and head branch containing auth-provider, ensuring that any PR touching the authentication layer automatically gets a focused security and style review before a human looks at it. An external contributor triage routine might filter on from fork: true, routing every fork-based PR through an extra security pass. A backport routine might trigger only when a maintainer adds a needs-backport label.
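To make the all-conditions-must-match rule concrete, here is a small local model of the auth module example with a draft-status condition added. It is an illustration only: the real filtering happens on Anthropic’s side, and `pr_matches` and its argument order are hypothetical.

```bash
# Hypothetical model of "all filter conditions must match"; the actual
# filtering is done by the routine trigger, not by this function.
# Usage: pr_matches BASE_BRANCH HEAD_BRANCH IS_DRAFT
pr_matches() {
  [ "$1" = "main" ] || return 1    # base branch must be main
  case "$2" in
    *auth-provider*) ;;            # head branch must contain "auth-provider"
    *) return 1 ;;
  esac
  [ "$3" = "false" ]               # PR must not be a draft
}
```

A PR from `feature/auth-provider-okta` into `main` that is ready for review passes all three checks. Change any one of them (a different base branch, an unrelated head branch, or draft status) and the routine would not fire.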

One standout use case from early adopters: library porting. Every PR merged to a Python SDK triggers a routine that ports the change to a parallel Go SDK and opens a matching PR. Keeping two SDKs in sync is exactly the kind of mechanical, tedious work that humans do poorly and slowly — and that Claude can do consistently and immediately.

Another pattern that’s emerged: bespoke code review. On PR opened, run your team’s own review checklist across security, performance, and style criteria, leaving inline comments before a human reviewer looks. The human can focus on architecture and design decisions rather than mechanical checks. It’s not replacing code review — it’s making it better.

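One important architectural note concerns sessions. Each matching GitHub event starts its own independent session: session reuse across events isn’t available for GitHub-triggered routines, so two updates to the same PR produce two independent sessions. That sits uneasily with another description of the feature, in which Claude opens one session per PR and keeps feeding that PR’s updates (comments, CI failures) into the existing session so it can address follow-ups. While the two accounts disagree, write prompts that don’t depend on memory of an earlier run, and check the current documentation for the behavior in effect.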

How Routines Actually Run

A few things are worth understanding about the execution model, because they have real implications for how you write prompts and configure routines.

Routines run fully autonomously. There’s no permission-mode picker and no approval prompts during a run. Claude can run shell commands, use skills committed to the cloned repository, and call any connectors you’ve included. This is different from interactive sessions where you might be prompted to approve certain actions. The documentation is direct about this: “The prompt is the most important part: the routine runs autonomously, so the prompt must be self-contained and explicit about what to do and what success looks like.”

Branch permissions are scoped by default. By default, Claude can only push to branches prefixed with claude/, which prevents routines from accidentally modifying protected or long-lived branches. You can remove this restriction per repository by enabling “Allow unrestricted branch pushes” when creating or editing the routine.
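If your prompt tells Claude what to name its branches, it can help to check those names against the default rule up front. The function below is a local illustration of the `claude/` prefix rule as described above; `can_push_by_default` is a hypothetical name, and the real restriction is enforced by the routine’s environment.

```bash
# Local illustration of the default push rule; the real check is enforced
# by the routine's cloud environment, not by this function.
can_push_by_default() {
  case "$1" in
    claude/?*) return 0 ;;   # e.g. claude/fix-login-timeout
    *)         return 1 ;;   # main, release/1.2, feature/x, ...
  esac
}
```

Under this model, `can_push_by_default claude/fix-login-timeout` succeeds and `can_push_by_default main` fails, which is the behavior you would expect unless unrestricted pushes are enabled for that repository.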

Connectors are included broadly by default. All of your connected MCP connectors are included in a new routine by default. The docs recommend removing any that the routine doesn’t actually need, to limit the scope of what Claude has access to during each run.

Routines draw down subscription usage the same way interactive sessions do. In addition to standard subscription limits, there’s a daily cap on runs per account. Pro users can run up to 5 routines per day, Max users up to 15, and Team and Enterprise users up to 25. Teams that need more can enable extra usage from Settings > Billing, at which point additional runs continue on metered overage.
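The caps matter more than they might first appear once you do the arithmetic. The sketch below encodes the limits quoted above and checks whether a planned number of daily runs fits. It assumes every firing of a routine counts as one run, and the function names are illustrative.

```bash
# Daily run caps as quoted in this article (research preview; may change).
daily_run_cap() {
  case "$1" in
    pro)             echo 5 ;;
    max)             echo 15 ;;
    team|enterprise) echo 25 ;;
    *) echo "unknown plan: $1" >&2; return 1 ;;
  esac
}

# Usage: fits_daily_cap PLAN RUNS_PER_DAY
# Assumes each firing of a routine counts as one run against the cap.
fits_daily_cap() (
  cap=$(daily_run_cap "$1") || exit 1
  [ "$2" -le "$cap" ]
)
```

A single hourly routine is 24 runs a day, so `fits_daily_cap pro 24` and `fits_daily_cap max 24` both fail, while `fits_daily_cap team 24` succeeds with one run to spare.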

Each run creates a new session alongside your other sessions, where you can see exactly what Claude did, review any changes it made, and create a pull request if one wasn’t automatically opened.

Who Can Use Routines?

Routines are available today for Pro, Max, Team, and Enterprise plan users with Claude Code on the web enabled. You can create and manage them at claude.ai/code/routines, or from the CLI with /schedule.

Routines belong to your individual claude.ai account — they’re not shared with teammates automatically. Importantly, anything a routine does through your connected GitHub identity or connectors appears as you. Commits and pull requests carry your GitHub user. Slack messages, Linear tickets, and other connector actions use your linked accounts for those services. This is not a bot account operating in the background — it’s your account acting autonomously on your behalf. Worth keeping in mind when configuring what a routine is permitted to do.

For GitHub triggers specifically, you’ll need to install the Claude GitHub App on the relevant repository. The trigger setup prompts you to do this if it isn’t already installed. Note that running /web-setup in the CLI grants repository access for cloning but does not install the GitHub App or enable webhook delivery — that step requires the web UI.

Getting Started: A Practical Path

If you want to start simple, the fastest onramp is the CLI. Open any Claude Code session, type /schedule, and Claude will walk you through creating a scheduled routine conversationally. You can also pass a description directly: /schedule daily PR review at 9am. Once created, the routine appears immediately at claude.ai/code/routines.

For API and GitHub triggers, you’ll need the web UI. The creation flow is a straightforward form: name the routine, write the prompt, select repositories, choose an environment (there’s a default provided), add triggers, review connectors, and create. Start a run immediately with the “Run now” button on the routine’s detail page to validate that it’s working before relying on automated triggers.

A few practical notes from the documentation that are worth front-loading:

- Write explicit prompts. Because routines run autonomously, vague instructions produce inconsistent results. Define what success looks like. Specify exactly what Claude should do when it encounters edge cases.

- Scope your connectors. Remove any MCP connectors the routine doesn’t need. Fewer permissions means fewer surprises.

- Set appropriate branch permissions. The claude/-prefixed branch restriction is sensible for most routines, but confirm whether your workflow needs to push to other branches.

- Check your daily limits. You can view current consumption and remaining daily routine runs at claude.ai/code/routines or claude.ai/settings/usage.

The Bigger Picture

Step back from the mechanics for a moment and consider what’s actually being announced here.

The standard narrative around AI coding tools in 2025 was that they make individual developers faster. You write code faster. You debug faster. You understand unfamiliar codebases faster. The human is still the driver; the AI is a better vehicle.

Routines gesture at something different: AI that operates on its own time, at its own cadence, in response to its own triggers. Not because a human typed a prompt, but because a monitoring alert fired or a GitHub PR was opened or a cron job hit 2am. The human is the architect of the automation, not the operator of each instance.

This isn’t science fiction. It’s a forms-based configuration UI at claude.ai/code/routines. But the implications compound quickly once you start thinking about what engineering teams actually spend their time on: backlog grooming, documentation updates, post-deploy verification, code review checklists, cross-SDK synchronization, alert triage. A significant fraction of that work is well-defined, repeatable, and — as it turns out — automatable with a clear prompt and the right triggers.

We’re in a research preview. Behavior, limits, and the API surface may change. More event sources beyond GitHub are coming. The daily run limits will presumably evolve as Anthropic learns how teams actually use this.

But the direction is clear. Claude Code is becoming something closer to a teammate you deploy than a tool you operate. Routines are the first major step in that direction.

If you’re building software today, the question worth asking isn’t whether you’ll eventually adopt something like this. It’s which workflows you’re going to automate first.

Routines are available in research preview starting April 14, 2026. Get started at claude.ai/code. Full documentation is available at code.claude.com/docs/en/routines.
