Neural rendering promises to close the gap between Hollywood and your gaming PC. But not everyone is impressed.

The Big Announcement Nobody Saw Coming

NVIDIA dropped a bombshell at its annual GTC 2026 keynote. CEO Jensen Huang took the stage and unveiled DLSS 5, a technology the company is calling “the most significant breakthrough in computer graphics since the debut of real-time ray tracing in 2018.” Bold words. And for a few minutes, the gaming world held its breath.

Huang didn’t hold back. He called it “the GPT moment for graphics.” Said it would blend hand-crafted rendering with generative AI to deliver a dramatic leap in visual realism. He even invoked NVIDIA’s own legacy, reminding the audience that the company invented the programmable shader 25 years ago. Now, he said, they’re reinventing computer graphics all over again.

The crowd was impressed. Then the demo played. And the internet had thoughts.

So What Exactly Is DLSS 5?

Let’s start with the basics. DLSS stands for Deep Learning Super Sampling. It first launched in 2018 on RTX 20-series cards. The original idea was simple: use AI to upscale lower-resolution frames so your GPU doesn’t have to work as hard. Over time, it evolved. DLSS 4 brought transformer models to replace the older convolutional neural network (CNN) architecture. DLSS 4.5 pushed Multi Frame Generation to 6x. Now DLSS 5 is doing something fundamentally different.

It’s not about performance anymore. It’s about looks.

DLSS 5 is a real-time neural rendering model. It takes a game’s color and motion vectors for each frame as input. Then it uses an AI model to infuse the scene with photoreal lighting and materials, anchored to the source 3D content and consistent from frame to frame. It runs in real time at up to 4K resolution, meaning gameplay stays smooth and interactive while looking dramatically better.

The AI model was trained end-to-end to understand complex scene semantics. It recognizes characters, hair, fabric, and translucent skin. It reads environmental lighting conditions (front-lit, back-lit, overcast), all by analyzing a single frame. That’s a remarkable technical feat. At 60 frames per second, a game frame has roughly 16 milliseconds to render. Hollywood VFX frames can take hours. NVIDIA is betting neural rendering can close that gap where brute computational force simply can’t.
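To put that 16-millisecond figure in context, here is a quick back-of-the-envelope sketch in Python. The 60 fps target is our assumption (it is the frame rate at which 1000 ms divided by the frame count lands near 16 ms), and the one-hour offline frame is purely illustrative. Neither the function name nor the numbers come from NVIDIA.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to render one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Assumed frame-rate targets; the article's "16 milliseconds" lines up with 60 fps.
for fps in (30, 60, 120):
    print(f"{fps:>3} fps -> {frame_budget_ms(fps):.2f} ms per frame")

# Illustrative only: a hypothetical offline VFX frame that takes one hour to render.
offline_frame_ms = 60 * 60 * 1000
ratio = offline_frame_ms / frame_budget_ms(60)
print(f"A one-hour offline frame is about {ratio:,.0f}x the 60 fps budget")
```

Under those assumptions, the gap between an offline film frame and a real-time game frame is several orders of magnitude, which is why faster hardware alone is unlikely to close it.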

Huang described the process as “neural rendering” because it “fuses” AI and 3D graphics. “We combined 3D graphics, structured data, with generative AI, probabilistic computing,” he said on stage. “One of them is completely predictive, the other probabilistic yet highly realistic. We combined these two ideas, controlled through structured data, controlled perfectly, and yet generating at the same time.”

The Demo That Divided the Internet

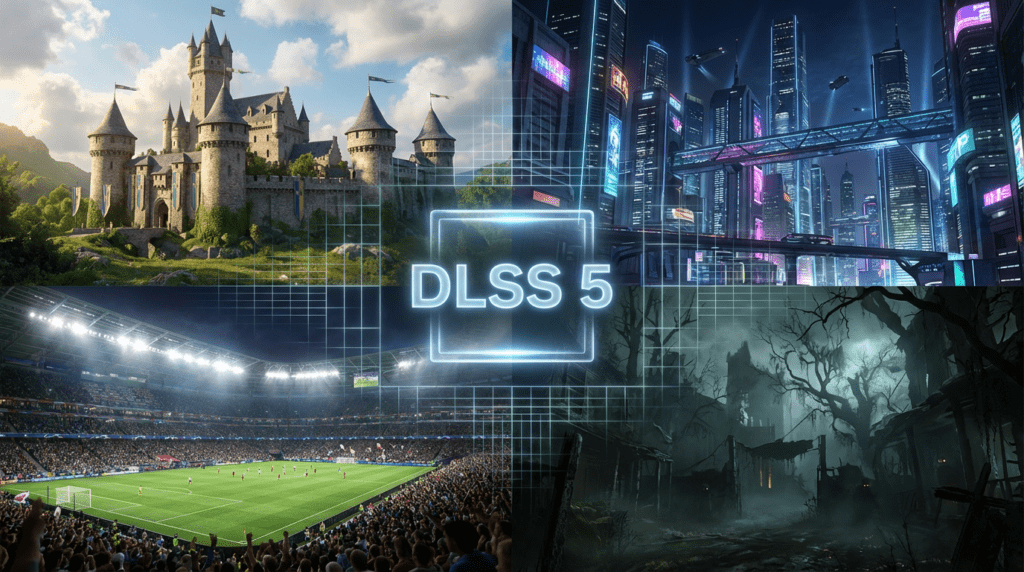

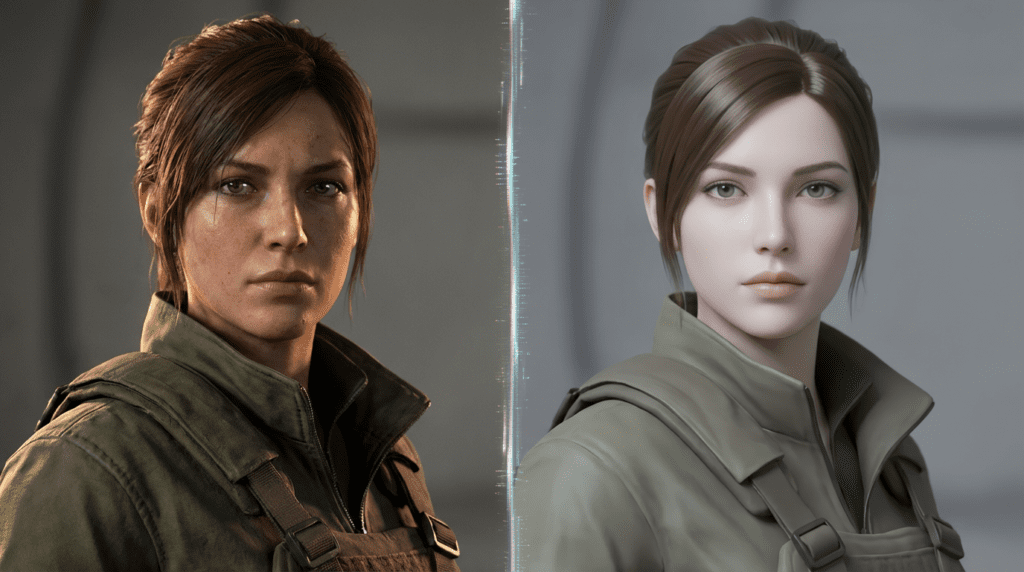

The demo video showed several games with characters staring directly at the camera. Then DLSS 5 switched on. The difference was stark, at least in some cases.

In Starfield, a female character’s face transformed. Dead, lifeless pupils suddenly reflected light accurately. Fine lines appeared on her skin. Her eyebrows looked plucked from a real human. Her down jacket showed realistic bubbling. Imperfections made it believable. The Register noted that with DLSS 5 on, she “comes to life completely,” crossing from the uncanny valley to something approaching photorealism.

In EA Sports FC, a soccer player’s blotchy, pixelated skin became textured and human. You could clearly see the difference between his facial hair and shadows. Dark patches, forehead lines, and skin imperfections all appeared. It looked real.

But then came Resident Evil: Requiem. Protagonist Grace got a makeover. And not everyone liked what they saw.

The Verge described it bluntly: Grace looked like she’d been “ripped out of a Grok Imagine demo.” The Hogwarts Legacy kids looked like they’d been run through an Instagram filter. Even Liverpool captain Virgil van Dijk, a very real, very famous person, had his features warped into something generic. He became, as The Verge put it, “just some other dude.”

The criticism wasn’t just aesthetic. It was deeper than that. The faces didn’t just look bad. They looked the same. Unnaturally smooth skin. Uniform features. Perpetually cheerful eyes. Full lips. Perfectly styled synthetic-looking hair. Small noses. HDR-style lighting that highlights every contour. These are the telltale signs of AI-generated faces, and DLSS 5 was stamping them onto beloved game characters.

The Uncanny Valley Problem

The uncanny valley is a well-documented phenomenon. When computer-generated characters are semi-realistic but just “off” enough, they feel deeply disturbing. DLSS 5 was supposed to push characters out of the uncanny valley. In some cases, it did. In others, it pushed them somewhere arguably worse, into the realm of AI slop.

The Register framed the technology optimistically, noting that with DLSS 5 on, characters look like they’ve “stepped out of a photo.” But even that framing carries a caveat: “perhaps an AI-generated photo, but one of the better ones.”

The Verge was less charitable. Senior entertainment editor Andrew Webster compared DLSS 5 to motion smoothing, that much-maligned TV feature that makes everything look like a cheap soap opera. “It’s sort of like motion smoothing,” he wrote, “if motion smoothing went a step farther and changed people’s faces, and it’s making everything look the same.”

That homogenization is the real concern. AI-generated faces are an amalgamation of countless images, processed into a sort of averaged ideal. The result is a face that belongs to no one and everyone at once. It’s the same aesthetic flooding Instagram feeds and YouTube thumbnails. Now it’s coming for your video games.

What the Developers Are Saying

NVIDIA didn’t announce DLSS 5 alone. It brought friends.

Major publishers have already committed to the technology. Bethesda, Capcom, Ubisoft, Warner Bros. Games, Tencent, NetEase, Hotta Studio, and NCSoft are all on board. That’s a serious lineup. These aren’t small studios hedging their bets. These are the companies that define mainstream gaming.

Bethesda boss Todd Howard said that “with DLSS 5 the artistic style and detail shine through without being held back by the traditional limits of real-time rendering.” He confirmed the feature will be available in Starfield. Capcom’s Jun Takeuchi, executive producer on many of the company’s biggest titles including Resident Evil: Requiem, said that “DLSS 5 represents another important step in pushing visual fidelity forward, helping players become even more immersed in the world of Resident Evil.”

These are enthusiastic endorsements. But they also raise a question: did these developers actually look at the demo? Because the internet certainly did, and it wasn’t impressed by what DLSS 5 did to their carefully crafted characters.

To be fair, Bethesda later clarified on social media that what audiences saw was a “very early look,” and that the studio’s “art teams will be further adjusting the lighting and final effect to look the way we think works best for each game.” That’s a meaningful caveat. The fall 2026 release is still months away. There’s time to refine things.

Which Games Will Support It?

Hindustan Times and Blockchain.news both list the titles set to support DLSS 5. Here’s what’s confirmed so far:

- Assassin’s Creed Shadows

- Hogwarts Legacy

- Starfield

- Resident Evil: Requiem

- The Elder Scrolls IV: Oblivion Remastered

- Phantom Blade Zero

- EA Sports FC

That’s a diverse mix: open-world RPGs, survival horror, sports simulations, and action games. The breadth of support suggests NVIDIA has done serious legwork convincing developers to integrate the technology. Integration uses the existing NVIDIA Streamline framework, the same one used for current DLSS and Reflex implementations. Developers get controls for intensity, color grading, and masking to maintain artistic intent. That flexibility matters. It means studios can dial back the effect if it clashes with their visual style.
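NVIDIA hasn’t published how those controls work under the hood, so what follows is only a conceptual sketch of how an intensity setting and an artist-painted mask could combine. It is a plain linear blend written in Python, not the Streamline API. Every name here (`blend_pixel`, `blend_frame`, the `intensity` and `mask` parameters) is hypothetical, and color grading is left out entirely.

```python
def blend_pixel(original, enhanced, intensity, mask):
    """Linearly mix two RGB pixels. The weight is intensity * mask, clamped to [0, 1]."""
    weight = max(0.0, min(1.0, intensity * mask))
    return tuple((1.0 - weight) * o + weight * e for o, e in zip(original, enhanced))


def blend_frame(original, enhanced, mask, intensity):
    """Apply blend_pixel across a frame stored as rows of RGB tuples."""
    return [
        [blend_pixel(o, e, intensity, m) for o, e, m in zip(row_o, row_e, row_m)]
        for row_o, row_e, row_m in zip(original, enhanced, mask)
    ]


# A tiny 1x2 "frame" with RGB values in [0, 1] (made-up numbers).
original = [[(0.2, 0.2, 0.2), (0.2, 0.2, 0.2)]]
enhanced = [[(0.8, 0.6, 0.4), (0.8, 0.6, 0.4)]]
mask = [[1.0, 0.0]]  # hypothetical artist mask: effect allowed on the first pixel only

result = blend_frame(original, enhanced, mask, intensity=0.5)
print(result)
```

In this toy version, the first pixel lands halfway between the original and the enhanced color, while the masked-out second pixel is left untouched. A real integration would run on the GPU and almost certainly involve more than a linear mix, but the sketch shows why a mask plus an intensity dial gives art teams a way to protect specific faces or materials.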

What Hardware Will You Need?

Here’s where things get complicated for most gamers.

The early preview demo shown at GTC ran on two GeForce RTX 5090s. One GPU handled game rendering. The other ran the DLSS 5 model. That’s an eye-watering hardware requirement: two of NVIDIA’s most powerful and expensive consumer graphics cards working in tandem.

NVIDIA says DLSS 5 will run on a single GPU at release. But the company hasn’t specified exactly which GPUs will be supported, or what the minimum requirements will look like. Given that DLSS 5 is a neural rendering model operating at up to 4K resolution in real time, the demands will almost certainly be steep.

PCMag noted that Huang only teased the technology at GTC without revealing full technical details. The company published a blog post with additional information, but key questions remain unanswered, including how DLSS 5 affects frame rates and what compromises it introduces.

For most PC gamers, this is a technology to watch rather than immediately plan for. The fall 2026 launch will bring more clarity.

The Bigger Picture: AI in Gaming

DLSS 5 doesn’t exist in a vacuum. It arrives at a moment when AI is infiltrating nearly every corner of the gaming industry, and not always to applause.

The industry has been battered by layoffs and studio closures. Post-pandemic slowdowns and expensive misplaced bets have left thousands of developers out of work. Against that backdrop, a technology that uses AI to replace or alter the work of artists and character designers carries real weight. It’s not just an aesthetic debate. It’s an economic one.

Since the announcement, indie developers have pushed back hard. Memes spread fast, and more explicit criticism followed. A growing number of smaller studios already use “AI free” as a marketing label, a badge of honor signaling that human artists made every pixel. DLSS 5 puts pressure on that position, especially when major publishers are enthusiastically endorsing it.

The Verge pointed out another uncomfortable dimension: gaming has a subset of audience members with “very backward ideas about what a normal human woman looks like.” Making existing female characters simultaneously more generic and more cartoonish through an AI tool isn’t just an artistic problem. It’s a cultural one.

The Verdict: Breakthrough or Blunder?

The truth is probably somewhere in the middle.

DLSS 5 is technically impressive. The idea of closing the gap between Hollywood rendering and real-time gaming is genuinely exciting. The Starfield and EA Sports FC demos showed real promise. Characters that previously looked lifeless suddenly had texture, depth, and humanity.

But the Resident Evil and Hogwarts Legacy demos showed something else entirely. They showed what happens when AI homogenizes art, when a tool trained on millions of images produces a face that belongs to no one and looks like everyone. That’s not a minor bug. That’s a fundamental tension at the heart of what DLSS 5 is trying to do.

NVIDIA has until fall 2026 to get this right. The publisher support is there. The technical foundation is real. But the company needs to prove that neural rendering can enhance a game’s artistic vision rather than replace it with something generic.

Jensen Huang called this the “GPT moment for graphics.” Maybe. But GPT also had its share of hallucinations.

Sources

- The Verge — Nvidia’s DLSS 5 is like motion smoothing for video games, but worse

- The Register — Nvidia’s DLSS 5 promises to bring you out the other side of the uncanny valley

- Blockchain.news — NVIDIA Unveils DLSS 5 at GTC as NVDA Stock Dips 1.6%

- Hindustan Times — What Is NVIDIA DLSS 5 and why is it a big deal?

- PCMag Middle East — Whoa: Nvidia’s DLSS 5 Can Make PC Games Look Real