ByteDance just dropped its most powerful AI video model yet. The internet is buzzing. Hollywood is furious. And creators everywhere are asking one question: is this the future of filmmaking?

The Clip That Broke the Internet

It started with a short video. Irish filmmaker Ruairi Robinson uploaded a series of clips to social media in mid-February 2026. The footage showed a digital duplicate of Tom Cruise fighting Brad Pitt, battling humanoid robots, and fending off zombies. The characters moved with fluid, almost choreographed precision. The “camerawork” was kinetic and cinematic. And it was all generated by an AI.

The model behind it? Seedance 2.0, ByteDance’s newest and most powerful AI video generation engine.

The clips went viral fast. Gen AI enthusiasts declared Hollywood “cooked.” Studios panicked. And suddenly, Seedance 2.0 was the most talked-about AI model of the month. But the story doesn’t end with impressive visuals. It gets complicated fast.

What Exactly Is Seedance 2.0?

Let’s back up. Seedance 2.0 is a next-generation AI video engine built by ByteDance, the Chinese company best known for TikTok. It runs on an advanced multimodal architecture. That means it doesn’t just process text. It understands how images, video, and audio work together, and it generates all three simultaneously.

According to ByteDance, the model features “exceptional motion stability” and delivers “an ultra-realistic immersive experience.” That’s marketing language, sure. But the sample videos back it up. One clip opens on a ballerina’s feet, pulls back to a wide shot as she spins, then cuts to a mid-shot, all in one seamless generation. Another starts with an ultra-wide view of a crowded amphitheater, then dives like a drone into a mid-shot of circus performers.

These aren’t static, jittery clips. They’re cinematic. They move like real camera operators shot them.

The model supports text prompts, reference images, audio files, and existing video clips as inputs. It outputs 1080p to 2K resolution video with native audio: dialogue, ambient sound, and music are all baked in during a single generation pass. No stitching. No post-production audio sync. Just one prompt, one output.

As Stone Griko noted on DEV Community, Seedance 2.0 combines “10x faster generation speeds with deep narrative coherence,” enabling creators to produce professional-grade content that previously required massive post-production budgets.

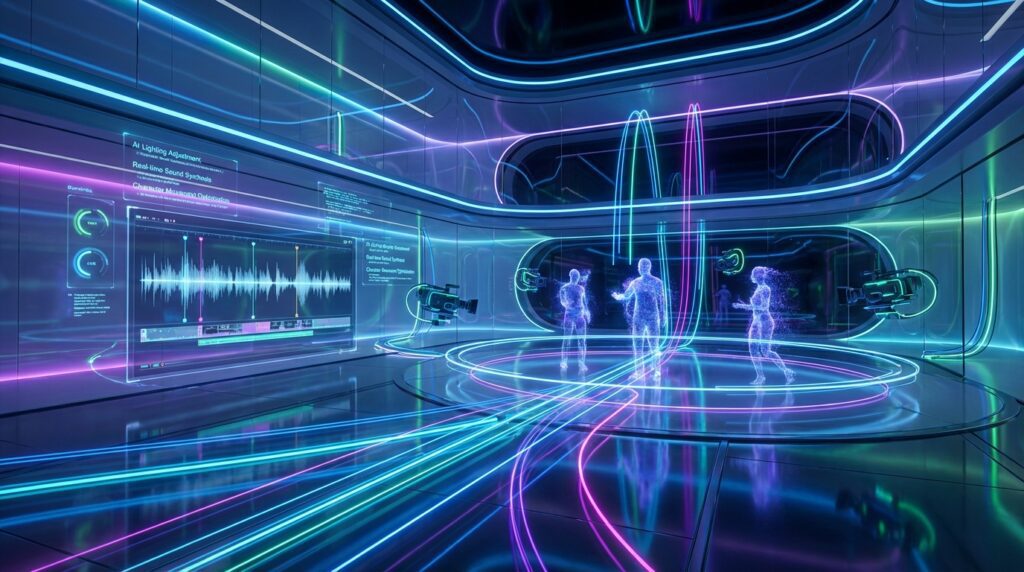

The @Tag System: Directing AI Like a Film Crew

Here’s where Seedance 2.0 gets genuinely interesting for creators. Most AI video generators take a text prompt and do whatever they want with it. Seedance 2.0 works differently. It uses a multimodal @tag reference system that gives creators precise, director-level control over every element of a video.

The system works like this: you upload images, video clips, and audio files, then assign each one a specific role using @tags in your prompt. @Image1 as the first frame. @Video1 for camera movement reference. @Audio1 as background music. The model follows your instructions, not its own interpretation.

You can upload up to 12 files in a single generation, drawn from a maximum of 9 images, 3 videos, and 3 audio clips. Each gets a numbered tag. Each tag gets a job.
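To make the tagging idea concrete, here is a minimal Python sketch of how a creator's own tooling might number reference files and assemble a tagged prompt. It is not ByteDance's API: `ReferenceSet`, `build_prompt`, and the file names are hypothetical, and the limits are one reading of the figures above (per-type caps of 9, 3, and 3 under a 12-file total).

```python
from dataclasses import dataclass, field

# Limits as described in the article; the structure here is an assumption.
MAX_PER_KIND = {"Image": 9, "Video": 3, "Audio": 3}
MAX_TOTAL = 12


@dataclass
class ReferenceSet:
    """Hypothetical helper that hands out numbered @tags for uploaded files."""
    files: dict = field(
        default_factory=lambda: {"Image": [], "Video": [], "Audio": []}
    )

    def add(self, kind: str, path: str) -> str:
        if kind not in MAX_PER_KIND:
            raise ValueError(f"unknown reference kind: {kind}")
        if len(self.files[kind]) >= MAX_PER_KIND[kind]:
            raise ValueError(f"too many {kind} references")
        if sum(len(paths) for paths in self.files.values()) >= MAX_TOTAL:
            raise ValueError("too many reference files in one generation")
        self.files[kind].append(path)
        # Tags are numbered per kind: @Image1, @Image2, @Video1, ...
        return f"@{kind}{len(self.files[kind])}"


def build_prompt(scene: str, roles: dict) -> str:
    """Prefix the scene description with one role sentence per tag."""
    role_sentences = [f"{tag} {role}." for tag, role in roles.items()]
    return " ".join(role_sentences + [scene])


refs = ReferenceSet()
first_frame = refs.add("Image", "dancer.png")   # hypothetical file names
camera_ref = refs.add("Video", "orbit.mp4")
music = refs.add("Audio", "theme.mp3")

prompt = build_prompt(
    "A dancer spins on a rooftop at dusk.",
    {
        first_frame: "as the first frame",
        camera_ref: "for camera movement reference",
        music: "as background music",
    },
)
print(prompt)
```

The point of the sketch is the bookkeeping: every file gets exactly one numbered tag, and every tag is given exactly one job in the prompt text.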

The results are remarkable. Want a Van Gogh-style video? Upload a Van Gogh painting as @Image1 and prompt the model to render the scene in that style. The output isn’t a filter overlay; it’s genuine style transfer, with brushstroke texture maintained throughout the entire clip. Want a product commercial? Upload three product photos from different angles, assign each a role, and the model generates a polished showcase that maintains exact material textures and proportions.

The @tag system also solves one of AI video’s biggest headaches: character consistency. Upload multiple reference images of the same character from different angles, and Seedance 2.0 maintains their appearance across shots. No more “face morphing” between scenes.

This level of control separates Seedance 2.0 from competitors like Sora 2, Kling 3.0, and Veo 3.1. None of them offer this kind of multimodal direction. It’s the difference between describing what you want and actually showing the model what you mean.

Cinematic Camera Work, On Demand

One of Seedance 2.0’s most jaw-dropping capabilities is its ability to replicate specific camera techniques from a reference video. Upload a clip with a Hitchcock zoom, and the model reproduces that exact dolly-zoom effect applied to a completely different character and setting.

According to a complete guide on DEV Community, the platform supports multiple input modalities and can extend or transform existing footage while maintaining visual consistency. The model understands camera language. It can replicate 360° orbits, one-shot continuous takes, mechanical arm multi-angle tracking, low-angle hero shots, handheld chase cameras, and fish-eye lens distortion, all from a single reference video.

This is huge for independent filmmakers. You don’t need a drone operator or a Steadicam rig. You upload a reference clip and describe what you want. The model handles the rest.

The audio side is equally impressive. Seedance 2.0 supports beat synchronization: upload a music track, and the model syncs visual cuts and transitions to the beat. It generates multi-language dialogue with matching lip-sync and voice acting. It creates context-aware ambient sounds that match the scene. All of this happens natively, in one generation pass.
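For a sense of what cutting "to the beat" means in numbers, the short Python sketch below lists where beat-aligned cuts would fall in a clip. It only illustrates the arithmetic (one beat lasts 60 / BPM seconds) and says nothing about how Seedance 2.0 detects beats internally; the function name and the 120 BPM, 12-second example are assumptions chosen for illustration.

```python
def beat_cut_times(bpm: float, clip_seconds: float, beats_per_cut: int = 4) -> list:
    """Return timestamps (in seconds) that land on every Nth beat of a track."""
    if bpm <= 0 or clip_seconds <= 0 or beats_per_cut <= 0:
        raise ValueError("bpm, clip_seconds, and beats_per_cut must be positive")
    # One beat lasts 60 / bpm seconds; a cut happens every `beats_per_cut` beats.
    step = 60.0 / bpm * beats_per_cut
    times = []
    i = 0
    while i * step < clip_seconds:
        times.append(round(i * step, 3))
        i += 1
    return times


# A 12-second clip over a 120 BPM track, cutting once per four-beat bar.
print(beat_cut_times(120, 12.0))
```

At 120 BPM a beat lasts half a second, so cutting once per bar gives a new shot every two seconds, which is roughly the rhythm a human editor would aim for by hand.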

Who’s Actually Using It?

Seedance 2.0 is still in beta. The official Seedance website currently shows version 1.5 Pro and 1.0 for general users, with 2.0 listed as “coming soon.” There’s no confirmed timeline for a wider release.

But third-party platforms have already stepped in to fill the gap. Seedance2ai.online launched in February 2026 as a browser-based platform giving global creators access to the Seedance 2.0 model, with no software installation, no API keys, and no Mandarin-language interface required.

That last point matters. Seedance 2.0 sits behind platforms designed for domestic Chinese users. International creators face Mandarin interfaces, payment systems that don’t accept foreign cards, and slow-moving waitlists. Seedance2ai.online was built specifically to solve that access problem.

The workflow is simple: upload a photo or type a description, set your resolution and aspect ratio, hit generate. A finished clip shows up in minutes. No watermark. No branding on the output. Seven aspect ratios are supported, including 16:9 for YouTube, 9:16 for Reels, and 1:1 for feed posts. Clips run up to 12 seconds. Resolution options range from 480p to 1080p.

Early users have included Etsy sellers turning product photos into short video listings, real estate agents building walkthrough clips from property stills, and freelance social media managers who need five pieces of video content a week without a videographer on call. Three pricing tiers are available, including a free tier with no credit card required.

The real-world applications extend far beyond social media. Marketing teams create product demos in minutes. Educators produce instructional videos easily. Game developers create cutscenes and trailers. Independent filmmakers prototype scenes before committing to a full shoot.

Hollywood Fires Back

Here’s where the story gets messy. The Tom Cruise clips weren’t just impressive; they were illegal. Or at least, that’s what Hollywood’s biggest players argued.

The Motion Picture Association, Disney, Paramount, and Netflix each sent ByteDance cease and desist letters over claims of copyright infringement. The studios weren’t just upset about the Cruise likeness. They were alarmed by what Seedance 2.0’s capabilities implied: that any actor’s face, any fictional character, any copyrighted visual could be replicated and repurposed without permission.

ByteDance responded by saying it would take steps “to strengthen current safeguards as we work to prevent the unauthorized use of intellectual property and likeness by users.” But as of late February 2026, ByteDance had not released a version of Seedance that actually prevents users from generating footage the company doesn’t have the rights to create. The company also paused its plans to release Seedance 2.0’s API to the public.

The platform itself currently blocks uploads of realistic human face photos, a technical limitation that forces users to work with illustrated or stylized character references instead. But that restriction applies to inputs, not outputs. Users can still prompt the model to generate realistic-looking faces.

The legal and ethical debate over generative AI is not new. But Seedance 2.0 has sharpened it. The model’s ability to re-create real people’s faces with striking accuracy raises serious questions about consent, likeness rights, and the sourcing of training data.

Art or Slop? The Jia Zhangke Question

Not all Seedance 2.0 content is viral celebrity deepfakes. Chinese director Jia Zhangke used the model to create Jia Zhangke’s Dance, a short film in which Jia debates the nature of creativity with an AI version of himself. After one of the Jias reveals himself to be an AI copy, the film follows both characters on a Matrix-like journey through different settings designed to showcase AI’s visual range.

The film unfolds with a smoothness and narrative coherence you’d struggle to find scrolling through OpenAI’s Sora app. Shots are short, as they are with all AI-generated video, but they’re edited together to create the illusion of longer takes. Foreground objects strategically cover background continuity errors. It’s skilled, deliberate filmmaking that works around the technology’s limitations rather than ignoring them.

The Verge’s Charles Pulliam-Moore argues that Jia Zhangke’s Dance shows “the degree to which many AI enthusiasts haven’t been trying especially hard to make their creations look like the kind of art that would put butts in theaters.” The film is a shining example of what’s possible when a skilled filmmaker engages seriously with the tool.

But it also raises the central question: is AI-generated video art, or is it always “slop,” not because it looks bad, but because it’s the product of a workflow devoid of direct authorial intent? The model can parse inputs and generate outputs that seem narrative-driven. But it can’t follow a story’s beats or understand a character’s motivations the way a human filmmaker can.

The answer probably depends on who’s holding the prompt.

The Road Ahead: Impressive, But Unresolved

Seedance 2.0 is genuinely impressive. Independent benchmarks from Artificial Analysis ranked it among the top AI video models currently available. Its multimodal architecture, @tag reference system, native audio generation, and cinematic camera replication put it ahead of most competitors.

But the path forward is complicated. ByteDance faces ongoing legal pressure from Hollywood studios. The API release is paused. Questions about training data sourcing remain unanswered. And the model’s most viral use cases, celebrity deepfakes and copyrighted character recreations, have made it a lightning rod for the broader IP debate in AI.

Studios like Asteria and companies including Adobe are building “IP-safe” models trained on properly licensed data. That’s the direction the industry needs to move. Until then, Seedance 2.0 sits in a strange position: one of the most technically capable AI video models available, wrapped in a controversy that undermines its own best qualities.

The technology is here. The creative potential is real. What happens next depends on whether ByteDance, and the AI video industry as a whole, can build something worth watching without stealing the footage to do it.

Sources

- The Verge — “Seedance 2.0 might be gen AI video’s next big hope, but it’s still slop”

- Benzinga — “Seedance2ai.online Launches All-in-One Seedance 2.0 Video Creation Platform”

- Seedance2API — “Seedance 2.0 Multimodal Reference: The Ultimate Guide to @Tags”

- Videomaker — “ByteDance releases Seedance 2.0 AI video generator”

- DEV Community — “Seedance 2.0 is a next-generation AI video engine”

- DEV Community — “How to Create Cinematic AI Videos with Seedance 2.0 Pro”