The Scandal That’s Shaking the Wearable Tech World

Meta’s Ray-Ban smart glasses were supposed to be the future. Sleek. Stylish. Hands-free AI at your fingertips, or rather, on your nose. But a bombshell investigation from two Swedish newspapers has cracked that polished image wide open. And what’s underneath is deeply unsettling.

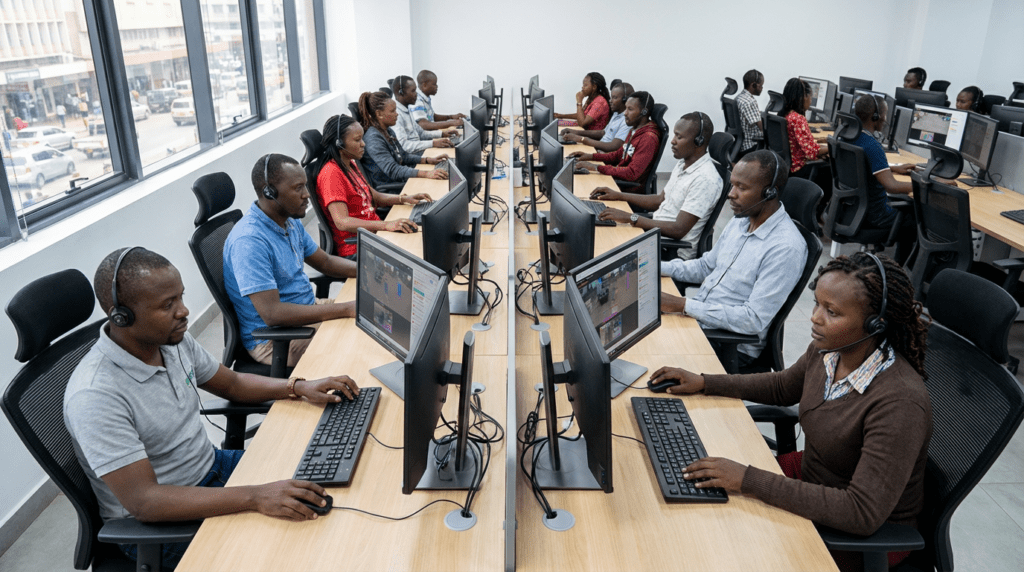

According to a report published by Svenska Dagbladet and Göteborgs-Posten, Meta’s AI-powered glasses are sending sensitive footage, including bathroom visits, nudity, and sexual activity, to human reviewers in Nairobi, Kenya. These aren’t rogue employees. They’re contracted workers, hired specifically to help train Meta’s AI systems. And they’ve seen things most people would never want a stranger to see.

The fallout has been swift. Regulators are asking questions. Lawsuits are being filed. And millions of people who own a pair of Ray-Ban Meta glasses are suddenly wondering: Who’s been watching me?

What the Swedish Investigation Actually Found

Svenska Dagbladet didn’t stumble onto this story by accident. Journalists spent time speaking directly with data annotators employed by Sama, a Nairobi-based Meta subcontractor. These workers label images, text, and audio to help AI systems learn how to interpret the world.

What they described was shocking.

“We see everything, from living rooms to naked bodies,” one worker told the outlet. “Meta has that type of content in its databases.”

The footage they reviewed came directly from glasses wearers using Meta’s AI assistant. When a user activates the AI, either manually or through a voice command like “Hey Meta,” the glasses can capture images and video. That content, according to the investigation, sometimes ends up in the hands of human reviewers halfway around the world.

One particularly disturbing account involved a man whose glasses were left recording in a bedroom. The camera later captured a woman, apparently his wife, undressing. She had no idea. He may not have realized the glasses were still recording either.

Workers also reported seeing bank cards in footage. Faces, too, even though Meta claims it blurs them automatically. According to the Kenyan contractors, that blurring system “does not always work as intended.”

Meta’s Response: “We Take Privacy Seriously”

Meta didn’t deny the practice. Instead, the company leaned on its privacy policy.

“When people share content with Meta AI, we sometimes use contractors to review this data for the purpose of improving people’s experience, as many other companies do,” a Meta spokesperson told The Verge. “We take steps to filter this data to protect people’s privacy and to help prevent identifying information from being reviewed.”

The company also clarified that media captured by the glasses “stays on the user’s device” unless the user chooses to share it with Meta or others. But that’s the catch: using the AI features counts as sharing. And most users probably didn’t realize that.

Meta pointed to its Supplemental Terms of Service and its UK AI terms, which do mention that interactions with AI may be reviewed by humans. But a disclosure buried in a lengthy legal document isn’t the same as clearly telling someone their intimate moments might be watched by a stranger in Nairobi.

The company also noted that its glasses display a small LED light when recording is active. It advises users to show others when the light is on and to avoid recording in private spaces. Good advice. But it doesn’t address what happens to footage after it’s captured.

Who Is Sama — and Why Does It Matter?

The company at the center of this story is Sama, a Nairobi-based data annotation firm with a complicated history.

Sama started as a non-profit. Its mission was to create tech jobs in East Africa and lift people out of poverty through digital work. It earned B-corp status, a designation meant to signal ethical business practices.

But Sama’s reputation took a hit when it provided content moderation services to major tech companies. Former employees filed legal action. Critics raised concerns about the psychological toll of reviewing disturbing content without adequate support. Sama eventually stopped offering content moderation services and later said it regretted taking on that kind of work.

Now, Sama is back in the spotlight, this time for AI annotation work. The company told the BBC it operates in “secure, access-controlled facilities,” bans personal devices on production floors, and conducts background checks on all staff. It said its work complies with international regulations and that team members “receive living wages and full benefits.”

But the workers who spoke to Swedish journalists painted a different picture of what they were actually seeing on their screens.

Regulators Are Already Knocking

The UK’s data watchdog didn’t waste time. The Information Commissioner’s Office (ICO) told the BBC it found the claims “concerning” and announced it would write to Meta to request information on how the company is meeting its obligations under UK data protection law.

“Devices processing personal data, including smart glasses, should put users in control and provide for appropriate transparency,” the ICO said in a statement. “Service providers must clearly explain what data is collected and how it is used.”

That’s a pointed message. And it signals that regulators aren’t satisfied with privacy policies buried in fine print. Users need to actually understand what they’re agreeing to, not just technically consent to it.

The ICO’s intervention matters. The UK has some of the world’s strongest data protection laws, and a formal inquiry into Meta’s practices could have significant consequences, both legally and reputationally.

A Lawsuit Is Already in Motion

Regulators aren’t the only ones pushing back. At least one proposed class action lawsuit has already been filed in response to the Swedish investigation, as reported by The Verge.

The complaint accuses Meta of violating false advertising and privacy laws. It zeroes in on a specific tension: Meta marketed the Ray-Ban glasses as devices “designed for privacy,” while simultaneously routing footage to overseas contractors.

The lawsuit argues:

“By affirmatively claiming that the Glasses were designed to protect privacy, Meta assumed a duty to disclose material facts that would inform a reasonable consumer’s decision to purchase the product. Instead, Meta hid the alarming reality: that use of the AI features results in a stranger halfway around the world watching the most private moments of a person’s life.”

That’s a powerful framing. And it could resonate with a jury. False advertising claims are often easier to prove than privacy violations, especially when a company’s own marketing materials make specific promises about privacy that the product doesn’t actually deliver.

The Bigger Problem: AI Needs Human Eyes

Here’s the uncomfortable truth that this scandal forces into the open: AI systems don’t train themselves.

Behind every smart assistant that recognizes faces, understands speech, or interprets images, there are thousands of human workers labeling data. They watch footage. They listen to recordings. They read transcripts. It’s painstaking, often disturbing work, and it’s essential to how modern AI functions.

Meta’s Kenyan contractors weren’t doing anything unusual by industry standards. They were doing exactly what data annotators do. The problem is that most consumers have no idea this process exists.

When someone puts on a pair of smart glasses and asks the AI what’s in front of them, they probably imagine a sophisticated algorithm processing the image in real time. They don’t picture a worker in Nairobi watching the same footage a few days later, labeling it for a training dataset.

The Tech Buzz put it plainly: “The reality is far messier, involving vast networks of contractors who annotate, label, and quality-check the data that makes AI systems work.”

This isn’t unique to Meta. Apple, Google, and Amazon have all faced scrutiny over human review of recordings from their voice assistants. But smart glasses are different. They sit on your face. They capture ambient life. They record bystanders who never agreed to anything.

The Sales Numbers Make This Worse

This isn’t a niche product affecting a small group of early adopters. EssilorLuxottica, the eyewear giant that partners with Meta on the glasses, sold over 7 million AI-powered glasses in 2025 alone, more than tripling combined sales from 2023 and 2024, according to The Verge.

Seven million pairs of glasses. Seven million potential streams of footage flowing into Meta’s systems. And until this investigation, most of those users had no idea their intimate moments could end up in a review queue in Kenya.

Meta also made a significant policy change last year. It updated its privacy settings so that Meta AI with camera use stays enabled on the glasses “unless you turn off ‘Hey Meta.’” It also removed the option for users to opt out of storing voice recordings in the cloud. Both changes expanded the scope of data collection with little fanfare.

What This Means for the Future of Wearable AI

The Ray-Ban Meta glasses aren’t going away. Neither is ambient AI computing. Apple, Google, and Snap are all developing or iterating on smart glasses of their own. The technology is advancing fast. The privacy frameworks are not keeping up.

This scandal should serve as a wake-up call, not just for Meta, but for the entire industry. Companies building AI wearables need to be radically transparent about where footage goes, who reviews it, and what users are actually agreeing to when they activate AI features.

The Electronic Privacy Information Center has already raised alarms about Meta’s alleged plans to build facial recognition into its smart glasses, calling it a “grave risk to privacy, safety, and civil liberties.” If that technology gets added to a product that’s already sending intimate footage to overseas contractors, the implications are staggering.

For now, Meta faces a choice. It can treat this as a PR problem to manage. Or it can treat it as a genuine reckoning, an opportunity to rebuild trust by being honest about how its AI systems actually work.

The Swedish investigation didn’t just expose a privacy gap. It exposed a fundamental disconnect between how AI companies talk about their products and how those products actually function. Fixing that gap requires more than a policy update. It requires a different kind of honesty.

And right now, that honesty is long overdue.

Sources

- BBC News — Regulator contacts Meta over workers watching intimate AI glasses videos

- MacDailyNews — Meta smart glasses said to be sending footage of ‘bathroom visits, sex, and other intimate moments’ to human reviewers in Kenya

- The Verge — Meta’s AI glasses reportedly send sensitive footage to human reviewers in Kenya

- The Tech Buzz — Meta AI Glasses Send Intimate Footage to Kenya Reviewers

- Svenska Dagbladet — Original Investigation

- Class Action Complaint (DocumentCloud)