The Oversight Board Is Sounding the Alarm, and Meta Needs to Listen

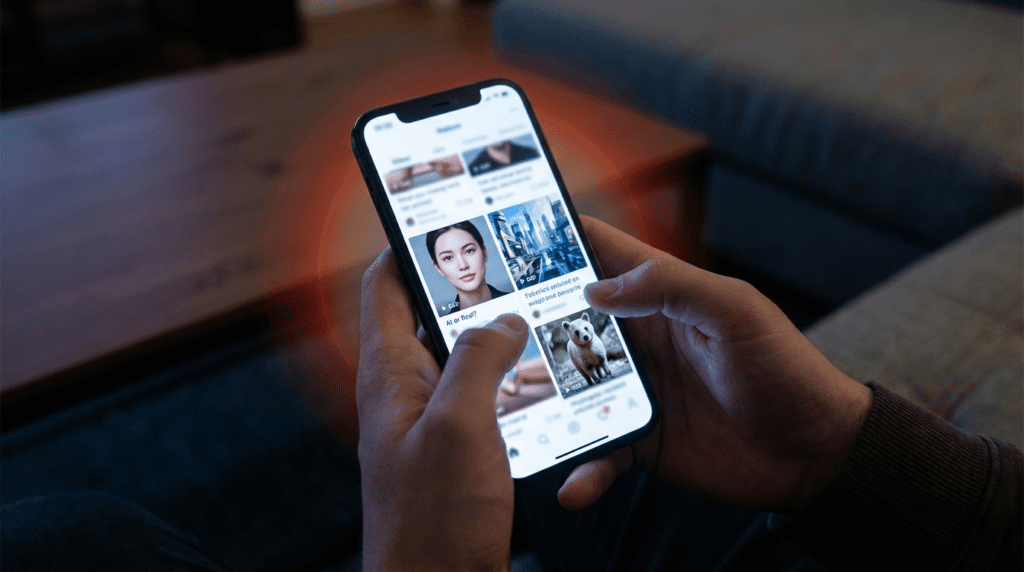

Scroll through Facebook or Instagram on any given day. You’ll see videos of war zones, political speeches, and breaking news. Some of it is real. A lot of it isn’t. And right now, Meta doesn’t have a reliable way to tell the difference.

That’s the uncomfortable truth sitting at the center of a new report from Meta’s Oversight Board, the semi-independent body that guides the company’s content moderation decisions. The Board isn’t mincing words. Meta’s current system for identifying and labeling AI-generated content is, in its words, “not robust or comprehensive enough.” And with armed conflicts escalating across the Middle East, the stakes couldn’t be higher.

This isn’t just a tech problem. It’s a public safety crisis hiding in plain sight.

What Sparked the Investigation

The Oversight Board’s latest push didn’t come out of nowhere. It stems from a specific incident: a fake AI-generated video that showed alleged damage to buildings in Israel. The video spread across Meta’s platforms last year. It looked convincing. It wasn’t real.

What made it worse? The content didn’t even originate on Facebook or Instagram. It started on TikTok, then jumped to Facebook, Instagram, and X. By the time anyone flagged it, the damage was done.

The Board called this out directly. “The case also highlights the challenges with cross-platform proliferation of such content,” the Board said in its announcement. That’s a polite way of saying: fake content travels fast, and Meta’s tools aren’t keeping up.

Now, with what the Board describes as “massive military escalations” throughout the Middle East, the urgency has only grown. People in conflict zones rely on social media for real-time information. When that information is fabricated, lives can be put at risk.

The Core Problem: Meta Relies Too Much on Self-Disclosure

Here’s where things get frustrating. Meta’s current system for catching AI-generated content leans heavily on one thing: people admitting they used AI. That’s it. If a user doesn’t disclose that a video or image was AI-generated, Meta’s system often won’t catch it.

The Oversight Board put it plainly: Meta’s approach is “overly dependent on self-disclosure of AI usage and escalated review.” In other words, the company is essentially trusting bad actors to flag their own misinformation. That’s not a strategy. That’s wishful thinking.

The Board is now pushing Meta to build better AI detection tools, establish clearer community standards specifically for AI-generated content, and be more transparent about what happens when users violate those standards. These aren’t radical demands. They’re basic expectations for a platform that reaches billions of people every day.

One of the most pressing recommendations involves C2PA, a technical standard also known as Content Credentials. C2PA embeds metadata into digital content that shows where it came from and whether AI was used to create it. Think of it as a digital certificate of authenticity.

The problem? Meta isn’t consistently using it. The Board says it’s “concerned by reports that Meta is inconsistently implementing the C2PA standard, even on content generated by its own AI tools.” Only a portion of Meta AI outputs are being properly labeled. That’s a significant gap, especially when Meta’s own AI tools are generating content that ends up on its own platforms.
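To make the Content Credentials idea concrete, here is a minimal sketch of how a platform might read an AI-generation marker from an already-parsed manifest. It assumes a simplified, hypothetical dictionary shape loosely modelled on the JSON that C2PA tooling reports, and the function names are illustrative only. A real check would first validate the manifest’s cryptographic signature with a C2PA SDK, which this sketch does not attempt. The two IPTC digital source type values are the ones used to mark media produced, fully or partly, by a trained model.

```python
from typing import Optional

# IPTC digital source type values that indicate generative-AI involvement.
AI_SOURCE_TYPES = {
    "http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia",
    "http://cv.iptc.org/newscodes/digitalsourcetype/compositeWithTrainedAlgorithmicMedia",
}


def declares_ai_generation(manifest: dict) -> bool:
    """Return True if any recorded action declares an AI source type.

    `manifest` is a hypothetical, simplified dict; signature validation
    is assumed to have happened elsewhere.
    """
    for assertion in manifest.get("assertions", []):
        # Action assertions carry labels such as "c2pa.actions".
        if not str(assertion.get("label", "")).startswith("c2pa.actions"):
            continue
        for action in assertion.get("data", {}).get("actions", []):
            if action.get("digitalSourceType") in AI_SOURCE_TYPES:
                return True
    return False


def label_for(manifest: Optional[dict]) -> str:
    """Map a manifest (or its absence) to an illustrative user-facing label."""
    if manifest is None:
        # Missing credentials prove nothing: metadata is easily stripped.
        return "no credentials"
    if declares_ai_generation(manifest):
        return "AI-generated (declared)"
    return "credentials present, no AI declared"


example = {
    "assertions": [
        {
            "label": "c2pa.actions",
            "data": {
                "actions": [
                    {
                        "action": "c2pa.created",
                        "digitalSourceType": "http://cv.iptc.org/newscodes/"
                        "digitalsourcetype/trainedAlgorithmicMedia",
                    }
                ]
            },
        }
    ]
}
print(label_for(example))
```

The “no credentials” branch is the important design point: a file without a manifest is not thereby authentic, which is why the Board treats metadata as one signal alongside detection tools rather than a replacement for them.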

You can read the full Oversight Board findings at The Verge.

A Board Built for a Different Era

To understand why this is so hard to fix, you need to understand how the Oversight Board actually works.

Meta established the Board in 2018 as an independent external body to review content moderation decisions across Facebook, Instagram, and Threads. Its 21 global members function like a “supreme court”: they take appeals from users, review cases, and issue binding decisions as well as broader recommendations. Meta has implemented all of the Board’s binding decisions and roughly 75% of its recommendations.

That sounds impressive. But here’s the catch: the Board’s process takes months. It requires public comment periods, nuanced deliberation, and careful review. That model made sense before generative AI exploded. It’s struggling to keep pace now.

“We are always trying to reduce time taken and trying to get more done,” said Sudhir Krishnaswamy, a legal scholar and the only Indian member on the global board, in an interview with Rest of World. “Maybe because of the gen-AI space, some of our work would be less individual case-based and more structured. That’s a possibility. And we’re very open to that.”

The numbers tell the story. Seven in ten social media images are now AI-generated using tools like Midjourney or DALL-E. Eight in ten content recommendations rely on AI. By 2026, nearly half of all social media content created by businesses will be AI-generated. The Board was built to handle individual cases. It’s now facing a flood.

Machines Are Getting Better — But Not at the Hard Stuff

Meta doesn’t rely solely on human reviewers. Machine moderation has been the backbone of content enforcement across all major platforms for years. And the tools have gotten more sophisticated. But they have a serious blind spot.

Krishnaswamy acknowledged that machine moderation has improved in certain areas. It’s gotten good at identifying adult nudity, gathering context signals, and scaling enforcement. But when it comes to misinformation, disinformation, and hate speech? Those remain “too complicated” for machines.

“Machines get better in some policy areas and then get worse at others,” Krishnaswamy said.

That’s a problem that hits hardest outside the Western world. AI moderation tools are trained predominantly on English-language data. They underperform in other languages and cultural contexts. The consequences are real.

According to Aditya Vashistha, head of Cornell University’s Global AI Initiative, AI frequently misses disability-related slurs in Hindi. Facebook didn’t implement a hate speech classifier for Bengali, one of the most widely spoken languages in the world, until 2020, years after similar tools existed for Western languages.

Rachel Adams, founder and CEO of the Global Center on AI Governance and author of The New Empire of AI: The Future of Global Inequality, put it bluntly: “With AI-generated content and AI-driven enforcement and moderation, the volume, velocity, and cross-language nature of problems the board was established to monitor have exploded.”

The Global Majority Bears the Cost

This isn’t just a technical failure. It’s an equity issue.

The Oversight Board has made a point of taking on cases from underrepresented communities around the world. Some of its most impactful decisions have come from places like Kenya, Ethiopia, Pakistan, Ukraine, and India. In one case, the Board pressed Meta to end its blanket ban on the Arabic word shaheed (martyr), which is used by extremists in some contexts but carries entirely different meanings for millions of Arabic, Urdu, Persian, Punjabi, Bengali, and Hindi speakers.

In a Kenyan case, the Board overturned Meta’s decision to remove a political comment, ruling that the term used was not an ethnic slur but a form of political criticism. In cases from Ethiopia, Pakistan, Ukraine, and Italy, the Board reversed Meta’s takedowns of comments directed at authorities, finding they were “rhetorical expressions of criticism, disdain, or disapproval and not credible threats.”

These decisions matter. They show that context is everything. And they show that a purely machine-driven moderation system, trained on Western data, will consistently fail the global majority.

Adams said AI moderation across social media platforms is “critically uneven” around the world. “The global majority often bears the cost of that unevenness,” she said, “whether through under-enforcement of moderation rules or limited contextual understanding.”

Krishnaswamy was even more direct: “What won’t work is if you see some of the early AI safety boards that some of the big majors set up, they’ve got all American boards. That is not going to work. It’s not going to work in Turkey, it’s not going to work in India, it is not going to work in Somalia.”

What Needs to Change

The Oversight Board has issued a clear set of recommendations. Meta needs to act on them — and fast.

First, Meta should improve its existing rules on misinformation to specifically address deceptive deepfakes. Right now, the policies are too vague. Second, Meta should establish a new, separate community standard for AI-generated content. Deepfakes deserve their own category, with their own rules and penalties.

Third, and most urgently, Meta needs to fix its C2PA implementation. “High-Risk AI” labels should be applied to synthetic images and videos far more frequently. Content Credentials should be clearly visible and accessible to users, not buried in metadata that most people will never see.

Adams also recommends that the Oversight Board itself expand its expertise. Beyond lawyers and journalists, the Board should bring in AI evaluation experts, sociolinguists, regional language specialists, child safety experts, and gender-based violence specialists. The problems are complex. The team needs to match that complexity.

The Board has also flagged a transparency gap. It’s easy to see when Meta takes down or reinstates posts based on Board decisions. It’s much harder to track whether Meta actually adopts broader recommendations, particularly those calling for algorithmic transparency. The Board says its calls to demystify how certain algorithms work have only been “partially addressed.”

The Clock Is Ticking

Meta isn’t legally required to implement the Oversight Board’s recommendations. But the pressure is mounting from multiple directions. Instagram head Adam Mosseri has previously raised concerns about the need to better identify authentic photographs and videos on Meta’s platforms. The Board’s findings align with those concerns.

The broader AI landscape is shifting fast. Social media platforms are already seeing a mix of humans, humans assisted by AI agents, and fully automated agents coexisting in the same spaces. Krishnaswamy described it as a “motley crew” and said the complicated cases will arise “when the platforms share humans and agents.”

“We don’t have a mandate on gen-AI,” he admitted, “but it’s something we’re trying to understand.”

That’s an honest answer. But understanding isn’t enough anymore. The technology is moving faster than the governance. Deepfakes are spreading faster than labels. And real people, in conflict zones, in marginalized communities, in countries where AI tools barely speak their language, are paying the price.

Meta built the platforms and the AI tools generating this content. Meta has the resources to fix this. The Oversight Board is telling the company exactly what to do. The question now is whether Meta will actually listen.

Sources

- Meta’s deepfake moderation isn’t good enough, says Oversight Board — The Verge, Jess Weatherbed, March 10, 2026

- Meta’s Oversight Board races to govern the AI surge — Rest of World, Ananya Bhattacharya, March 5, 2026

- Global Center on AI Governance

- The New Empire of AI: The Future of Global Inequality by Rachel Adams

- Oversight Board Five-Year Assessment, December 2025

- C2PA / Content Credentials Standard