The Wild West of AI Regulation Has a New Sheriff — and She Lives in Sacramento

Let’s be real for a second. The United States does not have a unified AI law. Congress keeps talking about it. The White House keeps nudging. And meanwhile, artificial intelligence keeps evolving at a pace that makes everyone’s head spin. So who stepped up? California. Again.

Gov. Gavin Newsom signed a sweeping AI executive order this week. It puts guardrails on how AI companies do business with the state. It demands accountability. It pushes back, hard, against the federal government’s hands-off approach. And it signals something bigger: California isn’t waiting for Washington to figure this out.

This isn’t just a California story. It’s an American one. Maybe even a global one.

What Newsom’s Order Actually Does — In Plain English

Okay, so what did Newsom actually sign? Let’s break it down without the legal jargon.

The executive order requires companies that want state contracts to prove their AI systems don’t generate illegal content, don’t reinforce harmful biases, and don’t violate civil rights. That’s the baseline. No exceptions.

State agencies must also watermark AI-generated images and videos. Think of it as a digital “made by a robot” label. Transparency, people. It matters.

Here’s the kicker: within 120 days, California’s procurement and technology agencies must develop new AI certification standards. Companies will need to demonstrate responsible AI practices before they can do business with the state. That’s not a suggestion. That’s a requirement.

And the order goes even further. It gives California the power to separate its procurement process from the federal government’s — if needed. Translation? If Washington blacklists an AI company, California will conduct its own review and decide for itself.

That’s a bold move. And it’s not accidental.

The Anthropic Drama That Sparked It All

You can’t talk about this executive order without mentioning the Anthropic situation. It’s the subplot that turned into the main event.

The Department of Defense designated Anthropic as a supply chain risk. That designation effectively barred the San Francisco-based AI company from competing for certain military contracts. The reason? Anthropic pushed back on contract terms that would have allowed its AI to be used for domestic mass surveillance and fully autonomous weaponry.

Let that sink in. A company said “no” to building autonomous weapons. The Pentagon responded by labeling them a security risk. A federal judge later issued a temporary injunction to block the designation — but the damage to the relationship was done.

Newsom watched all of this unfold. Then he signed an order saying California will make its own calls about which companies it works with. The message to Washington was crystal clear: We’ll handle it from here.

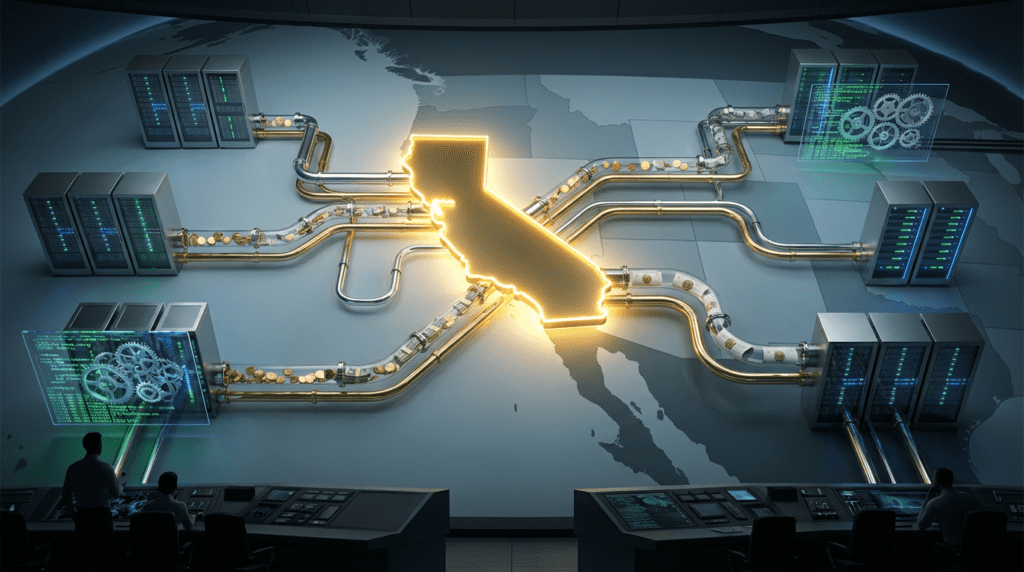

California’s Playbook: Use the Wallet as a Weapon

Here’s what makes California’s approach so powerful. It’s not just about laws. It’s about money.

California is the world’s fourth-largest economy. Any AI company that wants access to that market has to play by California’s rules. Full stop. You don’t get to opt out.

Joseph Hoefer, principal and chief AI officer at public affairs firm Monument Advocacy, put it perfectly: “If you want access to the world’s fourth-largest economy, you’re going to need to demonstrate baseline responsible AI practices. That’s a pretty powerful signal to the market.”

This is procurement as policy. It’s not glamorous. It doesn’t make for great headlines. But it works. Companies adapt. They always do.

And here’s the pattern that keeps repeating itself: California acts first. Companies adjust their practices to keep doing business there. Then those adjusted practices become the de facto national standard — because it’s easier to have one set of rules than fifty. Congress watches from the sidelines, gridlocked and ineffective, and eventually the states fill the vacuum.

We’ve seen this with data privacy. We’ve seen it with emissions standards. Now we’re watching it happen with AI.

Meanwhile, in Washington: A Blueprint With No Builder

To be fair, the White House isn’t completely silent on AI. On March 20, 2026, the Trump administration unveiled its National Policy Framework for Artificial Intelligence. It’s a seven-pillar framework that covers everything from protecting children to promoting innovation to preempting state laws.

Seven pillars. Sounds comprehensive, right?

Here’s the problem. The framework is a wish list. It’s a blueprint handed to a Congress that can’t agree on what to have for lunch, let alone how to regulate one of the most transformative technologies in human history.

The seven pillars include some genuinely important ideas. Protecting children from AI exploitation. Safeguarding communities from AI-driven fraud. Respecting intellectual property rights. Encouraging free speech. Promoting AI innovation through regulatory sandboxes. Empowering the workforce with AI training programs.

But here’s what’s missing: enforcement. The framework doesn’t propose specific penalties. It doesn’t create compliance mechanisms. It doesn’t establish oversight structures. It doesn’t address AI-generated discrimination or algorithmic accountability. It doesn’t even explain how existing agencies should coordinate enforcement.

As legal experts at McGuireWoods noted, “the absence of a federal AI framework has left existing legal doctrines — privilege law, constitutional Commerce Clause analysis, decades-old fraud statutes — to absorb questions they were never designed to answer.”

Old laws. New problems. That’s the situation right now.

The Preemption Problem: Who Gets to Make the Rules?

One of the most contentious parts of the White House framework is its push for federal preemption. The administration wants a single national AI standard that overrides state-level AI laws. The argument? AI development is “an inherently interstate phenomenon with key foreign policy and national security implications.”

That’s a reasonable argument on its face. Fifty different state AI laws would be a compliance nightmare for companies. Consistency matters.

But here’s the tension. The federal framework takes a light-touch approach to regulation. It doesn’t address bias. It doesn’t address discrimination. It doesn’t address civil rights. If that framework preempts California’s more robust protections, consumers lose.

California isn’t buying it. The state keeps pushing forward with its own legislation. Assemblymember Rebecca Bauer-Kahan said it plainly: “While Washington steps back from its responsibility to protect Americans from AI harms, California is stepping up on every front. We can lead the world in AI and still demand that it works for people, not against them.”

That’s the core disagreement. Washington says: innovate first, regulate later. California says: we can do both at the same time.

Big Tech Is Watching — and Lobbying

Don’t think for a second that Silicon Valley is sitting this one out. The big players are deeply involved in shaping California’s AI legislation. OpenAI and Anthropic have both been highly active in pushing various bills and ballot initiatives, often partnering with online safety groups.

The results have been mixed. Some bills pass. Some don’t. The influence is real, but it’s not absolute.

OpenAI, for its part, seemed supportive of Newsom’s order. The company said in a statement: “We are glad to see Governor Newsom continuing to lead on AI so California can continue to lead the world on AI.”

Google and Anthropic declined to comment on the order. Make of that what you will.

What’s clear is that California’s regulatory environment shapes company behavior — even when companies don’t love the rules. The state has leverage. It uses it.

What’s Actually at Stake for Regular People

Let’s zoom out for a second. Why does any of this matter to someone who isn’t a tech executive or a policy wonk?

Because AI is already in your life. It’s in your doctor’s office. It’s in your bank. It’s in your kid’s school. It’s in the hiring process when you apply for a job. It’s in the content you see on social media. It’s everywhere.

And right now, the rules governing how that AI behaves are a patchwork. Some states have protections. Some don’t. The federal government has a framework but no enforcement. Companies are largely self-regulating.

Newsom’s order requires state agencies to develop AI tools that help Californians access government services. It mandates transparency through watermarking. It protects civil liberties. It guards against discrimination. These aren’t abstract policy goals. They’re protections for real people.

The order also comes at a time when more than 20 California departments and agencies are developing or using Poppy — a generative AI assistant for state employees. Half a dozen state agencies are testing AI to assist employees and help homeless people and businesses. State courts and city governments are increasing their use of the technology.

AI is already running parts of government. The question is whether it runs responsibly.

Newsom’s Bigger Play

Here’s something worth noting. Gavin Newsom is widely considered a 2028 Democratic presidential contender. His handling of AI is being watched closely — by union leaders who want stronger worker protections, by Big Tech donors pouring money into California politics, and by voters who are increasingly aware of AI’s impact on their lives.

Newsom is positioning himself as the anti-Trump on AI. Where Trump says “innovate freely,” Newsom says “innovate responsibly.” Where the White House rolls back protections, Sacramento adds them.

“California’s always been the birthplace of innovation,” Newsom said in a statement. “But we also understand the flip side: in the wrong hands, innovation can be misused in ways that put people at risk. While others in Washington are designing policy and creating contracts in the shadow of misuse, we’re focused on doing this the right way.”

Whether you agree with his politics or not, the strategy is clear. California leads. The nation follows.

The Bottom Line: California Is Writing the First Draft of America’s AI Future

Here’s where we land. The federal government has a framework but no law. The White House has a blueprint but no builder in Congress. It wants preemption but lacks the legislative muscle to achieve it.

California, meanwhile, keeps moving. It signs executive orders. It advances legislation. It uses procurement power to shape company behavior. It protects civil rights when Washington won’t.

Legal experts at McGuireWoods put it bluntly: “Until Congress acts, states retain room to pursue their own AI regimes and the AI legal landscape will remain in flux.”

That flux is uncomfortable. But it’s also where progress happens. California has always been the testing ground for American policy. What works there tends to spread. What fails there gets discarded before it goes national.

Right now, California is testing something important: the idea that you can embrace AI and regulate it at the same time. That innovation and accountability aren’t opposites. That the world’s most powerful technology deserves the world’s most thoughtful guardrails.

The rest of the country is watching. So is the rest of the world.

Sources

- California cements its role as the national testing ground for AI rules — Axios

- White House Releases AI Legislative Recommendations — Password Protected Law (McGuireWoods)

- California sets its own AI rules for state contractors — The Decoder

- Newsom orders government to consider AI harm in contract rules — SFGate / CalMatters