In the world of software development, we’ve been conditioned to believe in a sacred process: idea, discussion, planning, approval, and then execution. The planning meeting—a cornerstone of this process—is seen as a due diligence checkpoint, a necessary cost to avoid building the wrong thing.

But what if that fundamental assumption is now wrong?

What if the cost of that single 30-minute planning meeting, with its attendees, its calendar invites, and its devastating impact on focus, is now literally higher than the cost of just building a prototype of the feature and seeing if it works?

This isn’t a hypothetical question. We are at a tipping point where the economics of software creation have been quietly inverted. The relentless, exponential decline in the cost of AI-generated code, coupled with the stubbornly high—and largely hidden—cost of human coordination, has created a new reality. For a growing number of tasks, it is now cheaper, faster, and more effective to ask an AI to build a functional first draft than it is to gather a few engineers in a room to talk about it.

Consider this: unproductive meetings cost U.S. businesses somewhere between $259 billion and $399 billion annually. Meanwhile, the cost to generate a thousand lines of working code with a state-of-the-art AI model has collapsed to about a dime. We are not talking about marginal efficiency gains here. We are talking about a fundamental restructuring of what it means to explore an idea.

This article isn’t just a thought experiment. It’s a practical, data-backed analysis of this new economic equation. We will break down the real cost of a meeting, compare it to the pennies required to generate thousands of lines of code, and explore the profound implications for how we build software. The conclusion is both simple and revolutionary: the era of speculative meetings is ending, and the era of instant, AI-driven experimentation has begun.

The Math: A Tale of Two Costs

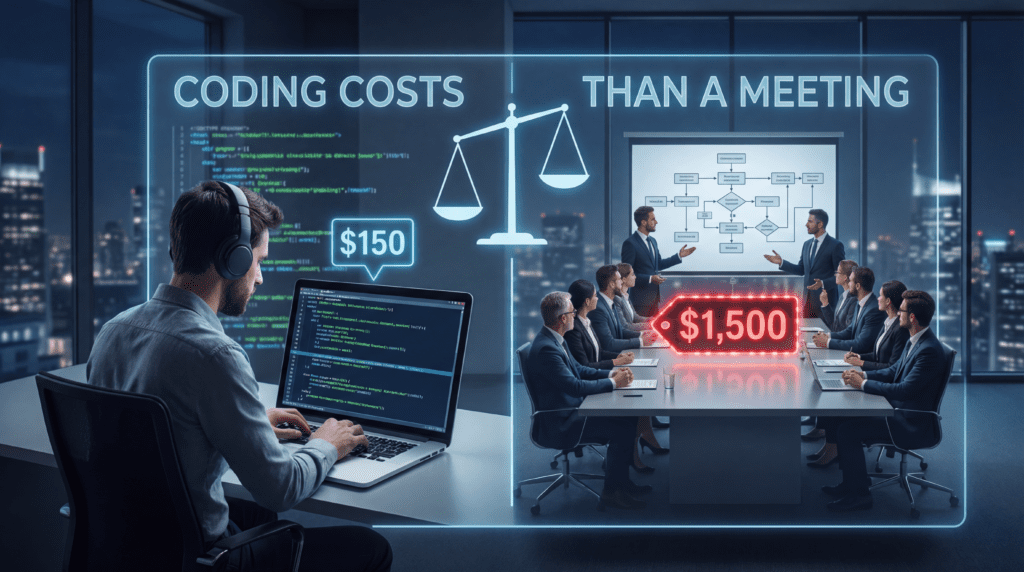

To prove this provocative thesis, we need to do the math. Let’s dissect the true cost of a standard 30-minute planning meeting and place it side-by-side with the cost of generating a functional software prototype using today’s leading AI models. The results are staggering.

The True Cost of a 30-Minute Meeting

The cost of a meeting is not just the sum of salaries for the time spent in the room. It’s a multi-layered expense, composed of direct salary costs and the far more insidious indirect costs of cognitive disruption. Most organizations dramatically underestimate this true cost because they only see the calendar time, not the devastation it wreaks on the surrounding hours.

Part 1: The Direct Salary Cost

First, let’s establish a baseline for developer compensation. According to the U.S. Bureau of Labor Statistics and other industry sources, the median salary for a software developer in the United States hovers between $131,450 and $144,570 annually. Talent.com reports similar figures. Spread over a standard 2,080-hour work year, that range works out to roughly $63 to $70 per hour, so we’ll use a conservative blended rate of $65 per hour.

The range, of course, is significant. Entry-level positions may start around $105,000, while the top 10% of earners can command salaries exceeding $211,450. Senior executives in software development can earn an average of $225,000. But for our purposes, we’ll use the median—a reasonable proxy for the “typical” developer in that planning meeting.

Now, let’s schedule a common scenario: a 30-minute meeting to discuss the feasibility of a new feature. The attendees are a product manager and three mid-level developers.

- Number of attendees: 4

- Hourly rate per attendee: $65

- Meeting duration: 0.5 hours

The direct salary cost is a simple calculation:

4 attendees × $65/hour × 0.5 hours = $130.00

So, before anyone has even written a line of code, the company has spent $130 just to talk about the feature. Scale this up to a one-hour meeting with five developers—a common occurrence—and the direct salary cost alone jumps to $325. These numbers add up with alarming speed.
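The arithmetic above is simple enough to capture in a few lines. The sketch below is only an illustration; the function name `direct_meeting_cost` is a hypothetical helper, not something from an existing tool.

```python
def direct_meeting_cost(attendees: int, hourly_rate: float, hours: float) -> float:
    """Salary cost of the time attendees spend inside the meeting itself."""
    return attendees * hourly_rate * hours


# The article's scenario: 4 attendees at $65/hour for half an hour.
print(f"${direct_meeting_cost(4, 65, 0.5):.2f}")  # $130.00

# The scaled-up case: five developers for a full hour.
print(f"${direct_meeting_cost(5, 65, 1.0):.2f}")  # $325.00
```

Plugging in your own team's headcount and blended rate is usually enough to make the number feel less abstract.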

But this is only the tip of the iceberg. The direct salary cost is, in many ways, the least interesting part of the calculation.

Part 2: The Hidden “Focus Tax”

The most significant cost of meetings isn’t the time spent in them, but the productive time they destroy around them. This is the cost of context switching, and it is a silent killer of developer productivity.

When a developer is pulled out of a state of deep work—the “flow state” where complex problem-solving happens—they can’t simply flip a switch to get back in. The human brain doesn’t work like that. Landmark research from the University of California, Irvine, quantified this recovery time precisely: it takes an average of 23 minutes and 15 seconds to regain deep focus after a single interruption.

Think about that for a moment. A 30-minute meeting doesn’t cost 30 minutes. For a developer who was immersed in a complex debugging session or architecting a new module, that meeting costs 30 minutes plus another 23 minutes just to get back to where they were. Every meeting, therefore, imposes a “focus tax” on each attendee.

For our three developers, this tax is substantial.

- Time to refocus per developer: 23.25 minutes (or 0.3875 hours)

- Cost of refocusing per developer: 0.3875 hours × $65/hour = $25.19

Now, let’s calculate the total cost of this cognitive disruption for the team:

3 developers × $25.19 = $75.57

(Note: We exclude the product manager from this calculation, as their work is often more interrupt-driven by nature, though they too suffer from context switching.)
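As a rough sketch, the focus tax can be computed the same way the text does: convert the refocus time to hours, price it at the hourly rate, round to cents, then multiply by the number of developers. The helper name `focus_tax_per_developer` is an assumption made for this example, and `Decimal` is used so that rounding to cents behaves like the hand calculation.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def focus_tax_per_developer(hourly_rate, refocus_minutes="23.25") -> Decimal:
    """Cost of the time one developer needs to regain focus, rounded to cents."""
    hours = Decimal(str(refocus_minutes)) / Decimal(60)  # 23.25 min -> 0.3875 h
    cost = hours * Decimal(str(hourly_rate))
    return cost.quantize(CENT, rounding=ROUND_HALF_UP)


per_developer = focus_tax_per_developer(65)
team_tax = 3 * per_developer  # the product manager is excluded, as in the text

print(per_developer)  # 25.19
print(team_tax)       # 75.57
```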

The impact is even worse than just lost time. Studies show that interrupted tasks take twice as long to complete and contain twice as many errors. A brief interruption—even one as short as 5 seconds—can triple the error rate in complex cognitive work.

The Destruction of Flow State

The flow state is a mental state of deep immersion and focus, where an individual is fully absorbed in an activity. This is when developers are at their most productive, solving complex problems and writing high-quality code. Research suggests that operating in a flow state can increase productivity by up to 500%.

Here’s the cruel irony: it takes approximately 15 minutes of uninterrupted work just to enter a flow state. But this state is incredibly fragile. It can be shattered by a single notification, a single Slack message, a single calendar ping reminding you that a meeting starts in 10 minutes. Even if the notification is not acted upon, the damage is done. The spell is broken.

The modern work environment exacerbates this problem to an almost absurd degree. Knowledge workers switch between different applications and websites an average of 1,200 times per day. This constant toggling costs them about four hours per week simply reorienting themselves—a “toggle tax” that amounts to 9% of their annual work time. Meetings, often scheduled during peak productivity hours between 9-11 AM and 1-3 PM, systematically prevent developers from achieving the flow state that makes them most valuable.

The Final Tally

When we combine the direct salary cost with the hidden focus tax, the true cost of our seemingly innocuous 30-minute meeting becomes clear.

Total Meeting Cost = Direct Salary Cost + Context Switching Cost

Total Meeting Cost = $130.00 + $75.57 = $205.57

A single, half-hour planning meeting costs the organization over $200. And this assumes it starts and ends on time, stays on topic, and involves only four people. A one-hour meeting with five developers would cost $325 in salary alone, with a context-switching tax of over $125, pushing the total cost towards $450.

Consider the cumulative impact. A typical software developer spends approximately 10 hours per week in meetings. At a $65 hourly rate, this translates to a direct cost of $650 per week, or $32,500 per developer per year (assuming 50 working weeks), just in salary for meeting attendance. This doesn’t include the cost of the context switching surrounding each of those meetings.
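Putting the two components together, a minimal model of the full meeting cost might look like the sketch below. The function `meeting_cost` and its parameters are hypothetical, and the 50-week working year is an assumption inferred from the $32,500 figure rather than something the sources state.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")
RATE = Decimal("65")                               # blended hourly rate from the text
REFOCUS_HOURS = Decimal("23.25") / Decimal("60")   # 23 min 15 s per interruption


def meeting_cost(attendees: int, developers: int, hours: str) -> Decimal:
    """Direct salary cost for everyone, plus the focus tax for developers only."""
    direct = attendees * RATE * Decimal(hours)
    per_dev_tax = (REFOCUS_HOURS * RATE).quantize(CENT, rounding=ROUND_HALF_UP)
    return (direct + developers * per_dev_tax).quantize(CENT)


print(meeting_cost(4, 3, "0.5"))  # 205.57  (PM + three developers, 30 minutes)
print(meeting_cost(5, 5, "1"))    # 450.95  (five developers, one hour)

# Annual salary cost of meeting attendance: 10 hours/week, assumed 50 weeks/year.
annual_meeting_salary = 10 * RATE * 50
print(annual_meeting_salary)      # 32500
```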

This is the number to beat. This is the cost of speculation.

The Cost to “Build the Thing” with AI

Now, let’s look at the alternative. Instead of debating whether to build the feature, what does it cost to generate a first-draft implementation using an AI model’s API?

To make this a fair comparison, let’s define “the thing” as a moderately complex feature requiring about 1,000 lines of Python code. This is enough for a functional backend service, a data processing script, or a set of frontend components—exactly the kind of thing a team might discuss in a planning meeting.

Understanding the cost requires knowing how code translates to “tokens”—the units of text that AI models process. While it varies, a reasonable industry estimate for a language like Python is about 10 tokens per line of code. JavaScript is slightly more efficient at around 7 tokens per line, while SQL is more verbose at approximately 11.5 tokens per line. For practical cost estimation, using 10 tokens per line for Python is a reasonable starting point.

Assumptions for our AI build:

- Input Prompt: A detailed, 500-token prompt describing the feature requirements.

- Output Code: 1,000 lines of generated Python code, translating to 10,000 tokens.
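For a back-of-the-envelope estimate, the tokens-per-line figures above can be turned into a small lookup. This is only a sketch: the ratios are the rough estimates quoted in the text, real tokenizers will vary, and `estimate_tokens` is a hypothetical helper name.

```python
# Rough tokens-per-line estimates quoted in the article; real counts depend
# on the tokenizer and on coding style.
TOKENS_PER_LINE = {"python": 10.0, "javascript": 7.0, "sql": 11.5}


def estimate_tokens(lines: int, language: str = "python") -> int:
    """Estimate output tokens for a given number of lines of code."""
    return round(lines * TOKENS_PER_LINE[language.lower()])


print(estimate_tokens(1000))          # 10000
print(estimate_tokens(1000, "sql"))   # 11500
```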

Now, let’s calculate the cost using the API pricing for today’s most popular and capable models. Prices are typically given per one million tokens (MTok).

| Model | Provider | Input Cost (500 tokens) | Output Cost (10,000 tokens) | Total Cost to Build |
|---|---|---|---|---|
| Gemini 3.1 Flash-Lite | Google | $0.000125 | $0.015 | $0.015 |
| Claude 4.5 Haiku | Anthropic | $0.0005 | $0.050 | $0.051 |
| GPT-4o | OpenAI | $0.00125 | $0.100 | $0.101 |
| Gemini 3.1 Pro | Google | $0.001 | $0.120 | $0.121 |
| Claude 4.6 Sonnet | Anthropic | $0.0015 | $0.150 | $0.152 |
| Claude 4.6 Opus | Anthropic | $0.0025 | $0.250 | $0.253 |
| GPT-4 Turbo | OpenAI | $0.005 | $0.300 | $0.305 |

Sources: OpenAI, Anthropic, Google AI for Developers
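Each row in the table follows the same formula: tokens multiplied by the per-million-token price, summed for input and output. The sketch below reproduces the two OpenAI rows using the per-million-token prices quoted in this article's pricing history ($2.50/$10 for GPT-4o, $10/$30 for GPT-4 Turbo). The function name `generation_cost` is made up for illustration, and actual prices should be checked against the providers' current pricing pages.

```python
def generation_cost(input_tokens: int, output_tokens: int,
                    input_price_per_mtok: float,
                    output_price_per_mtok: float) -> float:
    """API cost in dollars, given prices per one million tokens (MTok)."""
    return (input_tokens * input_price_per_mtok
            + output_tokens * output_price_per_mtok) / 1_000_000


# 500-token prompt, 10,000 tokens of generated code.
gpt_4o = generation_cost(500, 10_000, 2.50, 10.00)
gpt_4_turbo = generation_cost(500, 10_000, 10.00, 30.00)

print(f"GPT-4o:      ${gpt_4o:.3f}")       # GPT-4o:      $0.101
print(f"GPT-4 Turbo: ${gpt_4_turbo:.3f}")  # GPT-4 Turbo: $0.305
```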

The results are breathtaking.

Using a balanced, high-performance model like OpenAI’s GPT-4o, generating a 1,000-line feature costs just 10 cents. Using Anthropic’s powerful Claude 4.6 Sonnet, it’s about 15 cents. Even if you opt for the most powerful (and expensive) models like Claude 4.6 Opus, the cost is still under 26 cents. On the budget end, Google’s Gemini Flash-Lite can do it for less than two cents.

Let’s put the two costs side-by-side:

- Cost of a 30-Minute Planning Meeting: $205.57

- Cost of Building a 1,000-Line Prototype with GPT-4o: $0.10

The meeting is roughly 2,000 times more expensive than the AI-generated prototype ($205.57 ÷ $0.101 ≈ 2,035).

You could generate over two thousand versions of the feature for the price of one meeting to discuss it. This isn’t just a quantitative difference; it’s a qualitative, paradigm-shifting chasm. The economic foundation upon which we’ve built our software development lifecycle has crumbled.
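To see how the ratio shifts with the choice of model, here is a small sketch that divides the meeting cost by a few of the per-prototype totals from the table above. The helper `prototypes_per_meeting` is hypothetical, and the result ignores everything except raw API cost.

```python
import math

MEETING_COST = 205.57  # total from the meeting calculation above

# "Total Cost to Build" values copied from the pricing table.
PROTOTYPE_COST = {
    "Gemini 3.1 Flash-Lite": 0.015,
    "GPT-4o": 0.101,
    "Claude 4.6 Opus": 0.253,
}


def prototypes_per_meeting(prototype_cost: float,
                           meeting_cost: float = MEETING_COST) -> int:
    """Whole prototypes that could be generated for the price of one meeting."""
    return math.floor(meeting_cost / prototype_cost)


for model, cost in PROTOTYPE_COST.items():
    print(f"{model}: {prototypes_per_meeting(cost):,}")
# Gemini 3.1 Flash-Lite: 13,704
# GPT-4o: 2,035
# Claude 4.6 Opus: 812
```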

How AI Coding Tools Changed the Equation

This radical inversion of costs didn’t happen overnight. It’s the result of two parallel trends: the commoditization of cutting-edge AI and the rise of sophisticated tools that integrate this power directly into the developer’s workflow. Understanding these trends helps explain not just where we are, but how fast we got here—and where we’re heading next.

The Great AI Price Collapse

The cost of AI “intelligence” has been in freefall. The fierce competition between OpenAI, Anthropic, and Google has led to a pricing war that benefits every developer. OpenAI’s flagship model pricing provides a stark illustration of this trend.

- March 2023: The original GPT-4 launched with what was then considered premium pricing: $30 per million input tokens and $60 per million output tokens. This was cutting-edge technology, priced accordingly.

- November 2023: GPT-4 Turbo was introduced, cutting the input price by two-thirds to $10 and the output price by half to $30. OpenAI was clearly signaling its intent to drive adoption through affordability.

- May 2024: The highly efficient GPT-4o launched, halving prices again to $5 (input) and $15 (output). The pace of deflation was accelerating.

- August 2024: Further optimizations dropped GPT-4o’s price to its current rate of $2.50 (input) and $10 (output).

In about 17 months, the cost of using OpenAI’s top-tier model plummeted by 91.7% for inputs and 83.3% for outputs. This aggressive deflation has been mirrored across the industry. Anthropic and Google have followed suit with their own competitive pricing, offering additional cost-saving features like Batch APIs (50% discount for asynchronous jobs) and Prompt Caching (up to 90% reduction for repeated prompts).
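The percentage declines can be checked directly from the price points in the timeline. The sketch below walks the four (input, output) price pairs listed above; `percent_decline` is simply a name chosen for this example.

```python
def percent_decline(old_price: float, new_price: float) -> float:
    """Percentage drop from old_price to new_price."""
    return (old_price - new_price) / old_price * 100


# (label, input $/MTok, output $/MTok) from the timeline above.
HISTORY = [
    ("GPT-4, March 2023", 30.00, 60.00),
    ("GPT-4 Turbo, November 2023", 10.00, 30.00),
    ("GPT-4o, May 2024", 5.00, 15.00),
    ("GPT-4o, August 2024", 2.50, 10.00),
]

first, last = HISTORY[0], HISTORY[-1]
print(f"Input:  {percent_decline(first[1], last[1]):.1f}%")   # Input:  91.7%
print(f"Output: {percent_decline(first[2], last[2]):.1f}%")   # Output: 83.3%
```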

The result? What was once a computationally expensive luxury—access to world-class AI reasoning—has become a trivial operating cost, cheaper than most teams’ monthly coffee budget.

The New Generation of AI-Native Tools

Plummeting token prices are only half the story. The other half is the explosion of tools that have weaponized this cheap intelligence and embedded it directly into the development process. These aren’t just simple auto-complete features; they are becoming true collaborative partners that fundamentally change how code gets written.

GitHub Copilot: The Ubiquitous Partner

GitHub Copilot is the 800-pound gorilla of the AI coding market. With over 20 million users and adoption by 90% of Fortune 100 companies, it has become the de facto standard. More than 50,000 organizations, from scrappy startups to global enterprises, now rely on it. Its market share among paid AI coding tools sits at approximately 42%.

Copilot has fundamentally changed the act of coding. Research by GitHub shows that Copilot now generates an average of 46% of all code for its users—up from 27% when it launched in 2022. For Java developers, this figure climbs to 61%. When nearly half the code in a project is being written by an AI assistant, the economics of software development shift dramatically.

For a monthly fee of around $19 per user for a business plan, Copilot has become an indispensable utility, quietly writing nearly half of its users’ code.

Cursor: The AI-First Challenger

If Copilot represents AI integration into a traditional workflow, Cursor represents the next logical step: an editor built from the ground up for AI. And the market has responded with astonishing enthusiasm.

Cursor’s meteoric rise—becoming the fastest SaaS company ever to hit $100 million in annual recurring revenue—signals a massive appetite for a more deeply integrated AI experience. The numbers tell a remarkable story:

- Revenue trajectory: $1 million in revenue (2023) → $100 million ARR (2024) → projected $500 million ARR (mid-2025)

- Valuation explosion: $2.6 billion (January 2025) → $9.9 billion (June 2025) → $29.3 billion (November 2025)

- Users: Over 1 million users within 16 months of launch, with 360,000+ paying customers

- Enterprise customers: 40,000+, including OpenAI, Stripe, Amazon, NVIDIA, Uber, Adobe, and PayPal

Cursor achieved this with a remarkably lean team: approximately 40-60 employees initially, growing to around 300 by late 2025. The company reports users experiencing a 126% productivity increase and 20-25% time savings on common tasks. With features for editing across an entire codebase and understanding project context, Cursor moves beyond simple code generation to architectural modification.

Claude Code: The Conversational Architect

Anthropic’s entry, Claude Code, pushes the boundary even further, introducing concepts like “vibe coding” and agentic workflows. A developer can now use natural language in a command-line interface to instruct Claude to perform complex, multi-file operations, stage the changes in Git, write commit messages, and even open a pull request. It can spawn “agent teams” to tackle different parts of a problem in parallel.

The financial success has been striking: Claude Code achieved $1 billion in run-rate revenue within six months of public release, accumulating $2.5 billion+ since launch. Recent features include multi-agent code review systems, voice mode for natural voice commands, and enterprise security safeguards.

This moves the interaction from a line-by-line partnership to a high-level, conversational delegation of entire tasks—much like a senior developer mentoring a junior one, except the “junior” can work around the clock at nearly zero marginal cost.

The Supporting Cast

Beyond the big three, a vibrant ecosystem has emerged:

- Replit AI: A cloud-based IDE focused on full-stack app generation and rapid prototyping. It supports 50+ languages and offers one-click deployment, with agent costs running about $0.25 per checkpoint.

- Amazon Q Developer (formerly CodeWhisperer): AWS-centric with built-in security scanning and optimization for Lambda, DynamoDB, and other AWS services. The free tier offers 50 agentic requests per month; the professional tier runs $19/month.

- Tabnine: Differentiated by its privacy-first approach, offering local, private cloud, and air-gapped deployment options. GDPR, SOC 2, and ISO 27001 certified, it appeals to security-conscious enterprises.

These tools, powered by the incredibly cheap tokens we analyzed, have created a new workflow. The question is no longer “How do I write this code?” but “How do I best describe the code I want to the AI?” This shift from manual labor to high-level direction is the engine driving the new economics of software development.

The Rise of “Vibe Coding”

A term coined by AI pioneer Andrej Karpathy in early 2025, “vibe coding” describes a new paradigm where developers use natural language prompts to guide LLMs in generating software. Instead of painstakingly writing every line, developers focus on describing the desired functionality and “vibe” of what they want.

The benefits are transformative:

- Speed: Prototypes that once took weeks can be created in hours or days

- Accessibility: Non-technical users can create functional applications

- Iteration: Rapid experimentation with UI and layouts becomes trivial

- Reduced overhead: Eliminates the “blank page” syndrome that slows down many projects

Tools like v0 by Vercel generate React components from text prompts. Bolt.new offers browser-based full-stack development with zero DevOps. Lovable enables chat-based app building for both technical and non-technical users.

Vibe coding isn’t without challenges—code quality concerns, “hallucination” of non-existent functions, and maintainability issues are real. But for rapid prototyping and experimentation, it represents exactly the kind of capability that renders planning meetings economically obsolete.

The Proof: Real-World Productivity Gains

The math is compelling, but what does this look like in practice? The data from real-world usage and controlled studies is overwhelming. AI coding assistants don’t just offer marginal gains; they deliver transformative leaps in speed and efficiency.

The Landmark Study: 55% Faster

The most widely cited research in this space comes from GitHub’s quantitative study involving 95 professional developers. The results were unambiguous. The group using GitHub Copilot completed a task to build an HTTP server in JavaScript 55% faster than the control group.

- Copilot Group Average Time: 1 hour, 11 minutes

- Control Group Average Time: 2 hours, 41 minutes

The statistical significance was strong (P=.0017). The AI-assisted group didn’t just finish faster; they were also more successful, with a 78% completion rate compared to the control group’s 70%. This wasn’t a fluke. It was a statistically significant demonstration that these tools provide a massive, measurable speed advantage.
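The headline figure follows from the two average completion times. As a quick check, the sketch below converts both to minutes and computes the relative time saved; `percent_faster` is a hypothetical helper, and the calculation uses only the group averages reported above.

```python
def percent_faster(control_minutes: float, treatment_minutes: float) -> float:
    """Relative reduction in completion time versus the control group."""
    return (control_minutes - treatment_minutes) / control_minutes * 100


copilot_minutes = 1 * 60 + 11   # 1 hour, 11 minutes
control_minutes = 2 * 60 + 41   # 2 hours, 41 minutes

print(f"{percent_faster(control_minutes, copilot_minutes):.1f}% faster")  # 55.9% faster
```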

The benefits extended beyond raw speed:

- 73% of developers maintained their flow state when using Copilot

- 87% reported less mental energy spent on repetitive tasks

- 88% of AI-generated code was kept in final submissions

- Code reviews were 15% faster with Copilot Chat

From the Trenches: How Enterprises Are Winning

This academic finding is being replicated and validated inside some of the world’s leading tech companies. They aren’t just allowing these tools; they are actively measuring their impact and reaping the rewards.

Dropbox has seen 90% of its engineers adopt AI tools, leading to a sustained 20% increase in the number of pull requests merged every week. This is a direct, bottom-line improvement in development throughput. The company tracks daily and weekly active users, customer satisfaction scores, time saved, and AI spend as key metrics.

Saxo Bank, the financial institution, equipped its 700 developers with Copilot and found they are now coding approximately 30% faster. The bank reports that AI-written code is present in almost every new application it builds.

Bancolombia, the major Latin American bank, credits GitHub Copilot with a 30% increase in code generation, enabling an incredible 42 productive deployments per day and 18,000 automated application changes per year.

Accenture, in a controlled deployment, saw an 8.69% increase in pull requests per developer. Even more impressively, the time it took for a developer to open their first pull request on a project plummeted by 75%, from 9.6 days to just 2.4 days. PR merge rates increased by 11%, and successful builds jumped by 84%. This dramatically accelerated onboarding and time-to-contribution.

Google reports that 21% of code at the company is now AI-assisted.

Individual Developer Testimonials

Beyond enterprise data, individual developers report remarkable gains in specific scenarios:

- 85% time savings on CRUD operations

- 95% fewer copy-paste errors with AI-generated boilerplate

- 130% increase in code output over a six-month experiment

- 40% faster feature delivery

- 65% faster prototyping

- 200% faster refactoring speed

- Learning curve reduction: Time to learn new technologies dropped from 2-3 months to 2-3 weeks

The consensus from enterprise users is clear: OpenAI’s 2025 report found that engineers using AI tools save 60-80 minutes per day. General enterprise users save 40-60 minutes daily. This isn’t just about writing code faster; it’s about automating boilerplate, accelerating debugging, and reducing the mental friction of development.

The ROI Calculation

When you factor in these productivity gains against tool costs, the return on investment becomes staggering:

- $2,000-$5,000 per year value per developer from time savings

- Up to ~2,000% ROI, comparing a $20/month tool cost ($240 per year) against the top of that value range

- 15-25 hours saved per month per developer

At enterprise scale, this translates to millions of dollars in recaptured productivity.
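One way to sanity-check the ROI claim is to compute it across the quoted value range. The sketch below assumes the common definition of ROI as net gain divided by cost, which the sources may or may not use; `roi_percent` is a hypothetical helper.

```python
def roi_percent(annual_value: float, monthly_tool_cost: float) -> float:
    """ROI as (value - cost) / cost, expressed as a percentage."""
    annual_cost = monthly_tool_cost * 12
    return (annual_value - annual_cost) / annual_cost * 100


# Low and high ends of the $2,000-$5,000 annual value range, at $20/month.
for value in (2000, 5000):
    print(f"${value:,}/year of value: {roi_percent(value, 20):.0f}% ROI")
# $2,000/year of value: 733% ROI
# $5,000/year of value: 1983% ROI
```

Under this definition, the roughly 2,000% figure corresponds to the top of the value range, while the bottom of the range still returns more than seven times the tool cost.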

A New Development Lifecycle: Bias for Building

If it’s thousands of times cheaper to build a prototype than to hold a meeting about it, our entire approach to software development must change. The old model, optimized for a world where developer time was the primary scarce resource and coding was a costly manual process, is obsolete. We need a new lifecycle, one with a radical bias for building.

From “Should We?” to “Show Me”

The default response to a new feature idea should no longer be “Let’s schedule a meeting.” It should be “Let’s have the AI build a v1 in the next hour.”

The New “Spike”: In agile development, a “spike” is a time-boxed investigation to reduce uncertainty. Traditionally, this meant research and whiteboarding. The new spike is a 60-minute session with an AI like Claude Code or Cursor, culminating in a functional, deployable prototype. The deliverable is not a document; it’s a URL.

Rapid Prototyping as Debate: Instead of debating abstract pros and cons in a conference room, teams can now debate two or three different working versions of the feature. Tools like v0 by Vercel, which generates React components from text prompts, allow for instant experimentation with UI/UX. This shifts the conversation from the hypothetical to the tangible, leading to better decisions, faster.

Democratizing Creation: The rise of “vibe coding”—using natural language to describe desired functionality—lowers the barrier to creation. A product manager can now use a tool like Replit AI to generate a full-stack application from a prompt, test the core user flow, and then bring a working model to engineering, completely short-circuiting the initial, speculative planning phase.

The Evolving Role of the Developer

This new reality doesn’t make developers obsolete; it elevates them. Their role shifts from manual code production to high-level architectural direction and quality control.

From Coder to Conductor: The most valuable skill is no longer typing syntax but artfully describing systems, constraints, and desired outcomes to an AI. The developer becomes a conductor, orchestrating a team of incredibly fast, but not always wise, AI assistants. This requires a new kind of expertise—understanding not just what you want, but how to communicate it effectively to a machine.

Reviewer-in-Chief: As the volume of AI-generated code explodes, the most critical human function becomes rigorous review. The senior developer’s primary job is to catch subtle bugs, identify security flaws, and ensure the AI’s output aligns with the broader system architecture. Their expertise is applied at a higher leverage point—reviewing and refining thousands of lines rather than writing each one manually.

Prompt Engineering as a Core Competency: Crafting a prompt that elicits the correct, efficient, and secure code from an LLM is a new and essential engineering discipline. It requires a deep understanding of both the problem domain and the AI’s “thought process.” The best prompt engineers will become force multipliers, extracting dramatically more value from the same tools that everyone else uses.

This shift demands new metrics. Instead of just measuring lines of code or story points, teams should start tracking “time-to-first-prototype,” “cost-per-experiment,” and the percentage of code that is AI-generated versus human-refined. The companies that adapt their measurement systems will be the first to fully capture the benefits of this new paradigm.
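A team could start tracking these metrics with something as small as the sketch below. Everything here is hypothetical: the `Experiment` record, its field names, and the sample numbers are invented for illustration and do not come from any real tool or dataset.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Experiment:
    name: str
    minutes_to_first_prototype: float
    api_cost_usd: float
    ai_lines: int              # lines kept as generated
    human_refined_lines: int   # lines rewritten or added by a person


def summarize(experiments: list[Experiment]) -> dict[str, float]:
    """Aggregate the three metrics suggested in the text."""
    ai = sum(e.ai_lines for e in experiments)
    total = ai + sum(e.human_refined_lines for e in experiments)
    return {
        "avg_minutes_to_first_prototype": mean(
            e.minutes_to_first_prototype for e in experiments),
        "cost_per_experiment_usd": mean(e.api_cost_usd for e in experiments),
        "ai_generated_share": ai / total,
    }


# Made-up sample data, purely to show the shape of the output.
sample = [
    Experiment("search-filter", 40, 0.10, 800, 200),
    Experiment("csv-export", 60, 0.30, 600, 400),
]
summary = summarize(sample)
print(summary)
```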

A Balanced Analysis: When Meetings Still Matter

To argue that all meetings are useless would be naive and counterproductive. The goal is not to eliminate human interaction but to redeploy it to where it creates the most value. The target of this thesis is a specific, pernicious type of meeting: the unproductive, speculative, could-have-been-an-AI-prototype meeting.

The statistics on meeting dysfunction are damning. A staggering 71% of senior managers report that their meetings are unproductive. During meetings, 75% of attendees admit to multitasking, and 65% report daydreaming. This highlights a deep disconnect between the intended purpose of meetings and their actual outcome.

For a large company, this dysfunction can amount to $100 million per year in wasted meeting costs. The average large organization spends roughly $80,000 per employee on meetings annually, with over $25,000 of that investment being “wasted” on unproductive sessions. This is the waste we are targeting.

Human-to-human meetings remain indispensable for:

- Strategic Alignment: High-level discussions about long-term vision, company direction, and market strategy. These are decisions that require nuanced human judgment and cannot be delegated to an AI.

- Complex Architectural Debates: When senior engineers need to whiteboard a novel, system-wide architecture that has no precedent. The creative collision of multiple expert perspectives is genuinely valuable here.

- 1-on-1s and Mentorship: Building relationships, providing career feedback, and fostering personal growth. The human connection in these interactions is irreplaceable.

- Team Cohesion and Morale: The social rituals that build trust and a shared sense of purpose. Remote and hybrid work has made these deliberately social interactions more important, not less.

- Stakeholder Communication: Presenting results, aligning expectations, and navigating organizational politics often require the nuance of human-to-human interaction.

The principle is simple: use meetings for connection, strategy, and resolving high-stakes ambiguity. Use AI for execution, exploration, and resolving low-stakes ambiguity. The key question before scheduling any meeting should be: “Could we learn the same thing faster by having an AI build it and seeing what happens?”

The AI Productivity Paradox and Its Limitations

We must also acknowledge the limitations and risks of over-reliance on AI. While individual productivity soars, it can create new bottlenecks at the organizational level, a phenomenon known as the “AI Productivity Paradox.”

The PR Deluge: One study found that while AI helped generate 98% more pull requests, the time spent reviewing them increased by 91%. The bottleneck simply shifts from code creation to human review. If your organization’s review capacity remains fixed while code generation explodes, you haven’t actually accelerated delivery—you’ve just created a different kind of traffic jam.
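The traffic-jam effect can be illustrated with a toy queue model. In the sketch below, only the 98% increase in pull requests comes from the study cited above; the baseline of 100 PRs per week and the review capacity of 120 per week are invented numbers, and `review_backlog` is a hypothetical helper.

```python
def review_backlog(baseline_prs: int, pr_increase_pct: int,
                   review_capacity: int, weeks: int) -> int:
    """PRs still waiting for review after `weeks`, given a fixed weekly capacity."""
    generated_per_week = baseline_prs * (100 + pr_increase_pct) // 100
    backlog = 0
    for _ in range(weeks):
        # Reviewers clear at most `review_capacity` PRs; the rest carry over.
        backlog = max(0, backlog + generated_per_week - review_capacity)
    return backlog


# Before AI: 100 PRs/week against capacity for 120, so nothing queues up.
print(review_backlog(100, 0, 120, weeks=4))    # 0

# With 98% more PRs and the same reviewers, the queue grows every week.
print(review_backlog(100, 98, 120, weeks=4))   # 312
```

The model is deliberately crude, but it shows why generation speed alone does not translate into delivery speed when review capacity stays fixed.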

Quality and Trust Issues: The 2025 Stack Overflow Developer Survey revealed a concerning trend: while adoption is high (84% of developers using or planning to use AI tools), trust is surprisingly low. Only 3% of developers “highly trust” AI-generated code, and 46% actively distrust it—up from 31% in 2024. Many report spending significant time fixing “almost-right” code that looks plausible but contains subtle, hard-to-find bugs. The survey found that 66% of developers spend more time fixing AI-generated code than they would have writing it manually in certain scenarios.

Bugs and Security Vulnerabilities: The speed of AI generation comes with risks. Research indicates that AI-augmented teams see a 9% increase in bugs per developer. More alarmingly, 48% of AI-generated code contains potential security vulnerabilities. AI can also lead to a 4x increase in code cloning, planting the seeds of future technical debt. PRs are on average 154% larger, making them harder to review thoroughly.

Organizational Bottlenecks: Beyond review capacity, organizations face testing capacity constraints, deployment pipeline limitations, and a 47% increase in context switching as developers juggle more AI-assisted tasks simultaneously.

These are not reasons to reject AI, but they are critical guardrails. They reinforce the evolving role of the human developer as a diligent and skeptical reviewer. The solution to the PR deluge is not to generate less code, but to use AI to assist in the review process itself—a feature now emerging in tools like Claude Code’s multi-agent code review system. The goal is to scale human judgment, not replace it.

The Future Is Faster Than You Think

The trends driving this economic inversion are not slowing down. If anything, they’re accelerating. The cost of AI intelligence will continue to fall, while its capabilities will continue to expand. The developer of tomorrow will operate less like a craftsman and more like a manager of a vast, tireless, and inexpensive digital workforce.

Market Trajectory

The numbers tell a story of explosive growth:

- 2024 Market Value: $4.91 billion for AI coding tools

- 2032 Projection: $30.1 billion

- CAGR: 27.1%

- Potential GDP Impact: $1.5+ trillion from improved developer productivity

As of 2025, 41% of all code worldwide is AI-generated or AI-assisted, and the figure is growing rapidly. By some estimates, within five years, the majority of new code written will have significant AI involvement.

The Agentic Future

We will see the rise of fully autonomous agents capable of taking a high-level feature request from a tool like Jira, developing the entire feature, writing the tests, and deploying it to a staging environment—all before a human has had their morning coffee. The role of the human will be to set the goals, define the constraints, and give the final approval.

This future requires a fundamental rewiring of our instincts as developers and leaders. We have been trained for decades to be cautious, to measure twice and cut once, because the “cut” was expensive. Today, the cut is virtually free. It’s the measuring—the meetings, the debates, the speculation—that has become the exorbitant luxury.

Adapting or Being Left Behind

Organizations that cling to meeting-heavy, speculation-first workflows will find themselves at a severe competitive disadvantage. While they’re debating whether to build a feature, their competitors will have built it, tested it, gotten user feedback, iterated twice, and shipped it to production.

The companies that thrive will be those that:

- Embrace a “prototype-first” culture where building is cheaper than talking

- Invest in review capacity to match their newfound generation capacity

- Train developers as AI conductors rather than just code writers

- Measure what matters—time-to-prototype, cost-per-experiment, iteration velocity

- Reserve meetings for high-value human activities like strategy, mentorship, and team building

Conclusion: Your Calendar Is a Financial Statement

We began with a bold claim: it is literally cheaper to build the thing than to have a 30-minute meeting about it. The math is undeniable.

A single meeting to discuss a feature can easily cost over $200 when accounting for direct salary costs and the hidden tax of shattered focus. Generating a functional, 1,000-line prototype of that same feature using a state-of-the-art AI model costs about a dime.

This 2,000x cost difference is not an incremental change. It is a seismic shift that redefines the very economics of innovation. It renders the traditional, meeting-heavy software development lifecycle an expensive anachronism—a relic of an era when coding was manual labor and developer time was the primary constraint.
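The ratio above can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the four attendees and the $65/hour rate match figures used elsewhere in this article, while the 23-minute refocus penalty per attendee is an assumed value chosen for illustration, not a measurement. The function names are hypothetical.

```python
def meeting_cost(attendees: int, hourly_rate: float, minutes: float,
                 refocus_minutes: float = 0.0) -> float:
    """Salary cost in USD of one meeting.

    refocus_minutes is extra time each attendee needs to regain focus
    afterwards; it is an assumed input, not a measured value.
    """
    paid_minutes = attendees * (minutes + refocus_minutes)
    return paid_minutes * hourly_rate / 60


def cost_ratio(meeting_usd: float, prototype_usd: float) -> float:
    """How many prototypes the cost of one meeting would pay for."""
    return meeting_usd / prototype_usd


PROTOTYPE_USD = 0.10  # "about a dime" per 1,000-line prototype

direct = meeting_cost(attendees=4, hourly_rate=65.0, minutes=30)
loaded = meeting_cost(attendees=4, hourly_rate=65.0, minutes=30,
                      refocus_minutes=23)  # 23 minutes is an assumption

print(f"Direct salary cost: ${direct:.2f}")
print(f"With refocus time:  ${loaded:.2f}")
print(f"Ratio at $200:      {cost_ratio(200, PROTOTYPE_USD):,.0f}x")
```

With these inputs the direct cost is $130 and the loaded cost lands a little above $200, which is where the 2,000x figure comes from. Changing the attendee count or the refocus assumption moves the ratio, but not its order of magnitude.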

The implications are profound. Every speculative planning meeting on your team’s calendar represents a decision not to build and test a dozen alternatives. Every hour spent debating hypotheticals is an hour that could have been spent analyzing real user feedback on a working prototype. Every “let’s think about this more” is a missed opportunity to know whether the idea works.

It’s time to look at your calendar as a financial statement. Each meeting is a line item, an expenditure of capital—not just in direct salary costs, but in the priceless currency of developer focus and creative energy. The question every tech leader and engineer must now ask is simple but radical: “Is this conversation providing more value than the 2,000 prototypes I could build for the same price?”

Increasingly, the answer is no.

The era of speculative meetings is ending. The era of instant, AI-driven experimentation has begun. The competitive advantage will go to those who recognize this shift fastest and adapt their processes accordingly.

Stop talking. Start building.

References

- Software developers: Occupational Outlook Handbook – U.S. Bureau of Labor Statistics

- Software Developer Salary in United States in 2026 – Talent.com

- Software Developer Salary – US News Best Jobs

- Context Switching: The Developer Productivity Killer – Jellyfish

- Context Switching & Productivity: The Hidden Cost of Modern Work – CannElevate

- Code to Tokens Conversion: How to Estimate and Manage LLM Context for Coding – prompt.16x.engineer

- OpenAI API Pricing – OpenAI

- Pricing – Claude by Anthropic – Anthropic

- Gemini Developer API Pricing – Google AI for Developers

- OpenAI GPT-4 API Pricing – Nebuly

- OpenAI releases new models and lowers API pricing – Artificial Intelligence News

- LLM Cost & Pricing: OpenAI – Helicone

- GitHub Copilot Statistics (2025): Users, Revenue & More – SecondTalent

- Research: quantifying GitHub Copilot’s impact on developer productivity and happiness – The GitHub Blog

- About GitHub Copilot plans – GitHub Docs

- Cursor – Sacra

- Claude Code Overview – Anthropic

- How Tech Companies Measure the Impact of AI on Developer Productivity – The Pragmatic Engineer

- AI-powered success with 1,000 stories of customer transformation and innovation – Microsoft Cloud Blog

- The ROI of GitHub Copilot for Your Organization: A Metrics-Driven Analysis – Worklytics

- v0 by Vercel

- Replit AI

- Statistics About Meetings You Need to Know in 2024 – Standup Alice

- Time Wasted in Meetings Statistics – The Treetop

- The AI Productivity Paradox in Software Engineering – Faros AI

- Stack Overflow Developer Survey 2025: AI – Stack Overflow

- Context switching is the main productivity killer for developers – TechWorld with Milan

- Meetings & effective collaboration – Network Perspective

- Unnecessary meetings cost big companies $100 million annually – CBS News

- Meeting Statistics 2025: Culture, Productivity, and Costs – ISEO Blue

- AI Coding Assistant Statistics – SecondTalent

- Developer Productivity Statistics with AI Tools – Index.dev

- Cursor Statistics – DevGraphiq

Appendix: The Global Perspective

While this analysis has focused primarily on U.S. developer salaries and costs, the fundamental economics apply globally—though the specific numbers shift.

Global Salary Variations

Developer compensation varies dramatically by region:

- Switzerland consistently ranks among the highest-paying countries, with average salaries often exceeding $100,000 annually

- Australia averages around $86,004

- Denmark ranges from $63,680 to $84,000

- Canada ranges from $61,680 to $80,000

- Germany ranges from $52,275 to $64,000

- United Kingdom ranges from $45,000 to $55,275

- Poland and Ukraine represent a middle ground at $48,000-$60,000

- India averages around $8,000

- Brazil averages approximately $11,337

Here’s the key insight: even in lower-cost regions, the ratio between meeting costs and AI generation costs remains massively skewed toward AI. A 30-minute meeting with four developers in India might cost roughly $8 in direct salary (at the $8,000 average above and about 2,080 working hours per year) instead of $130, but generating 1,000 lines of code with AI still costs a dime. The AI is still roughly 80x cheaper than the meeting.

The cost advantage of AI coding is not a U.S. phenomenon. It’s a global economic shift that affects every software team, everywhere.
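As a rough cross-check on the regional comparison, the sketch below converts an annual salary into the direct cost of a 30-minute, four-person meeting. It assumes a 2,080-hour work year (a common convention, not a figure from the sources) and counts salary only, so it excludes benefits, overhead, and context switching; any figure that includes those will come out higher. The function name is hypothetical.

```python
WORK_HOURS_PER_YEAR = 2080  # assumption: 40 hours x 52 weeks
PROTOTYPE_USD = 0.10        # "a dime" per 1,000 generated lines


def direct_meeting_cost(annual_salary: float, attendees: int = 4,
                        minutes: float = 30) -> float:
    """Direct salary cost in USD of one meeting at a given annual salary."""
    hourly_rate = annual_salary / WORK_HOURS_PER_YEAR
    return attendees * hourly_rate * minutes / 60


# Single-value averages taken from the list above.
salaries = {"Australia": 86_004, "Brazil": 11_337, "India": 8_000}

for country, salary in salaries.items():
    cost = direct_meeting_cost(salary)
    ratio = cost / PROTOTYPE_USD
    print(f"{country}: ${cost:.2f} per meeting, about {ratio:.0f}x the prototype cost")
```

Even at the lowest salary in the list, the meeting costs tens of times more than the generated prototype.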

The Meeting Epidemic

The problem of meeting overload has worsened dramatically in recent years. Since 2020, the number of meetings has tripled, largely driven by the shift to remote and hybrid work models. Executives spend an average of 23 hours per week in meetings—nearly 60% of a typical work week.

This creates a troubling dynamic. The people with the most organizational context and decision-making authority are also the ones with the least time for deep work. They’re trapped in an endless cycle of synchronous communication, unable to leverage the new AI tools that could make their limited focused time dramatically more productive.

Developer Sentiment: A Trust Gap

The rapid adoption of AI coding tools has been accompanied by a surprising development: declining trust. The 2025 Stack Overflow Developer Survey reveals this tension clearly:

- 84% of developers are using or planning to use AI tools (up from 76% in 2024)

- 51% use AI tools daily

- 82% use AI coding tools daily or weekly

- Yet only 3% report “highly trusting” AI output

- 46% actively distrust AI outputs (up from 31% in 2024)

- 66% spend more time fixing AI-generated code in certain scenarios

This trust gap represents both a challenge and an opportunity. The challenge is clear: teams need robust review processes to catch AI mistakes. The opportunity? Tools that can address this trust gap—through better code review AI, more transparent generation processes, or improved accuracy—will capture enormous market share.

The most common use cases for AI coding tools reflect this pragmatic, trust-but-verify approach:

- Searching for answers (54.1%)

- Generating content/synthetic data (35.8%)

- Learning new concepts (33.1%)

- Documenting code (30.8%)

- Debugging/fixing code (20.7%)

- Writing code (16.9%)

Notably, “writing code”—the task most directly relevant to our thesis—ranks lowest. Developers are most comfortable using AI as a research assistant and least comfortable delegating actual code writing. This suggests significant room for growth as tools improve and trust builds.

The Enterprise ROI Case

For enterprise leaders considering this shift, the ROI calculation is compelling:

- Average tool cost: $10-40 per developer per month

- Time saved: 15-25 hours per developer per month (60-80 minutes daily)

- Implied monthly value: at $65/hour, saving 20 hours/month is worth $1,300/month

- Monthly tool cost: ~$20

- Net monthly benefit: ~$1,280 per developer

- Annual benefit: ~$15,000 per developer
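The list above reduces to a short calculation. The sketch below reproduces it with the same inputs (20 hours saved, $65/hour, $20 tool cost) and then brackets the result using the low and high ends of the quoted ranges. The function name is illustrative, and the bracket simply pairs the worst case with the worst case and the best with the best.

```python
def monthly_roi(hours_saved: float, hourly_rate: float, tool_cost: float):
    """Return (monthly value, net monthly benefit, net annual benefit) in USD."""
    value = hours_saved * hourly_rate
    net = value - tool_cost
    return value, net, net * 12


# Midpoint case used in the list above.
value, net, annual = monthly_roi(hours_saved=20, hourly_rate=65.0, tool_cost=20.0)
print(f"Monthly value: ${value:,.0f}")
print(f"Net monthly:   ${net:,.0f}")
print(f"Net annual:    ${annual:,.0f}")

# Pessimistic and optimistic ends of the quoted ranges.
_, low_net, _ = monthly_roi(hours_saved=15, hourly_rate=65.0, tool_cost=40.0)
_, high_net, _ = monthly_roi(hours_saved=25, hourly_rate=65.0, tool_cost=10.0)
print(f"Net monthly range: ${low_net:,.0f} to ${high_net:,.0f}")
```

The midpoint case gives $15,360 per year, which the list rounds to about $15,000.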

Even accounting for the costs of AI-generated bugs (the 9% increase documented by Faros AI), review overhead (91% increase in review time), and the occasional need to throw away AI code entirely, the math still favors aggressive adoption.

A team of 100 developers could potentially recapture $1.5 million in productivity annually by fully embracing AI coding tools. That’s not revenue; it’s pure efficiency gain, equivalent to adding roughly 11 full-time developers (at $65/hour and about 2,080 working hours per year) without the hiring, onboarding, and management overhead.

A Note on Code Quality

Individual studies on code quality show promising results:

- 13.6% fewer errors per line in Copilot-authored code

- 53.2% more likely to pass all unit tests

- 5% higher approval rate from code reviewers

- 60% fewer comments in human code reviews after AI self-review

These findings contrast with the organizational-level data showing more bugs. The resolution? Individual AI-generated code is often higher quality, but the sheer volume of code being generated—combined with human review bottlenecks—leads to more bugs slipping through at the organizational level. The solution isn’t to generate less code; it’s to scale review capacity to match generation capacity.

The Transformation Is Already Underway

The AI coding tools market stands at approximately $4.91 billion in 2024, projected to reach $30.1 billion by 2032—a compound annual growth rate of 27.1%. Current adoption patterns show:

- 29-49% of companies allow AI coding tools

- 30-40% actively encourage adoption

- 59% of developers use 3+ AI tools in parallel

The companies that will thrive in this new landscape are those that recognize a fundamental truth: the cost structure of software development has permanently changed. The old calculus—where developer time was precious and therefore planning was cheap by comparison—has been inverted.

Today, planning is expensive. Building is cheap. The meeting is the luxury. The prototype is the commodity.

Every organization must now ask itself: Are our processes optimized for the old economics or the new ones? The answer to that question will increasingly determine competitive success.

This article was written for Medium. If you found it valuable, please share it with your team—preferably instead of scheduling another planning meeting.

Practical Takeaways for Your Team

If you’re convinced by this analysis, here are concrete steps you can take starting tomorrow:

For Individual Developers:

- Before your next planning meeting, ask: “Could I prototype this in 30 minutes with AI instead of discussing it for 30 minutes?” If yes, do that instead and bring the prototype to the meeting.

- Track your AI usage for a week. Note the tokens consumed, the code generated, and the time saved. Compare this to the meetings you attended. The data will likely reinforce everything in this article.

- Invest in prompt engineering skills. The developers who can effectively communicate with AI assistants will see 2-3x the productivity gains of those who can’t.

For Engineering Managers:

- Audit your team’s meeting load. The average developer spends 10 hours weekly in meetings. At $65/hour over 50 working weeks, that’s potentially $32,500 per developer per year in direct costs alone, not counting context switching.

- Institute a “prototype-first” policy for feature discussions. Before any planning meeting, require a rough AI-generated prototype. This shifts the conversation from “should we build this?” to “how should we refine this?”

- Invest in code review capacity. The bottleneck is shifting from generation to review. Consider AI-assisted review tools and dedicated review time blocks.
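A meeting-load audit can start as a simple calculation. The sketch below assumes a $65/hour rate and 50 working weeks per year; the team names and weekly hours are made-up sample data, and the function name is hypothetical.

```python
HOURLY_RATE = 65.0      # USD, the rate used throughout this article
WEEKS_PER_YEAR = 50     # assumption: 52 weeks minus about two weeks of leave


def annual_meeting_cost(hours_per_week: float,
                        hourly_rate: float = HOURLY_RATE,
                        weeks: int = WEEKS_PER_YEAR) -> float:
    """Direct salary cost in USD of one developer's meetings over a year."""
    return hours_per_week * hourly_rate * weeks


# Made-up sample: weekly meeting hours per developer.
team_hours = {"dev_a": 10, "dev_b": 6, "dev_c": 14}

team_total = 0.0
for name, hours in team_hours.items():
    cost = annual_meeting_cost(hours)
    team_total += cost
    print(f"{name}: {hours} h/week -> ${cost:,.0f}/year")

print(f"Team total: ${team_total:,.0f}/year")
```

Swapping in real calendar data per developer turns this into a first-pass audit; it still leaves out the context-switching cost.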

For CTOs and Technical Leadership:

- Update your metrics. Start tracking time-to-first-prototype, AI-generated code percentage, and cost-per-experiment alongside traditional velocity metrics.

- Rethink your planning processes. If you have standing meetings dedicated to speculative planning, consider converting them to asynchronous prototype reviews.

- Budget for AI tools aggressively. At $20/month per developer with potential returns of $1,000+/month in productivity, this is one of the highest-ROI investments available.
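Metrics such as cost-per-experiment and time-to-first-prototype can be tracked with very little tooling. The sketch below is one possible shape for that record keeping: the `Experiment` fields, the per-token prices, and the sample entries are all hypothetical placeholders, so substitute your provider’s actual rates and your own logs.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class Experiment:
    name: str
    input_tokens: int
    output_tokens: int
    minutes_to_first_prototype: float


# Hypothetical prices in USD per million tokens, not any vendor's real rates.
INPUT_USD_PER_MTOK = 3.00
OUTPUT_USD_PER_MTOK = 15.00


def experiment_cost(e: Experiment) -> float:
    """Token cost in USD of one prototype experiment."""
    total = (e.input_tokens * INPUT_USD_PER_MTOK
             + e.output_tokens * OUTPUT_USD_PER_MTOK)
    return total / 1_000_000


# Made-up sample log.
experiments = [
    Experiment("search-filter", 4_000, 12_000, 18.0),
    Experiment("csv-export", 2_500, 8_000, 11.0),
    Experiment("onboarding-flow", 6_000, 20_000, 35.0),
]

costs = [experiment_cost(e) for e in experiments]
median_minutes = median(e.minutes_to_first_prototype for e in experiments)

print(f"Mean cost per experiment: ${mean(costs):.3f}")
print(f"Median time to first prototype: {median_minutes:.0f} min")
```

Reporting these two numbers alongside velocity makes the trade-off between meeting time and experiment spend visible in the same dashboard.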

The shift from meeting-heavy to prototype-heavy development is not just an efficiency gain—it’s a competitive necessity. The companies that figure this out first will ship faster, iterate more, and ultimately win markets while their competitors are still scheduling the next planning session.

The choice is clear. The math is undeniable. Now the only question is: will you act on it?