The most consequential economic transition in human history may already be underway — and most of us are still debating whether it’s real.

There is a thought experiment that economists and technologists have been quietly running for several years now, one that has suddenly become a great deal less theoretical. Imagine a point — not a distant, science-fiction horizon, but a near-future threshold — at which scalable machine intelligence becomes reliably better than most humans at most of the tasks that take place on a computer screen. Writing, coding, financial analysis, legal research, customer service, graphic design, data synthesis, project management, content creation, report drafting, and the hundreds of other cognitive tasks that together constitute the modern knowledge economy.

What happens then?

This is not a question about robotic arms replacing factory workers. That story is decades old. This is a question about the displacement of the white-collar workforce — the accountants, the consultants, the junior lawyers, the marketing managers, the software engineers, the journalists — people who, for the past half-century, believed their education and their cognitive capital insulated them from the ravages of automation. For the first time in economic history, the machines are not just coming for the assembly line. They are coming for the office.

The evidence that this transition is already beginning is no longer ambiguous.

The Scope of What Is Being Automated

To understand what is at stake, we first need to be precise about what “screen-based work” actually means in aggregate. It is not a small corner of the economy. In the United States, nearly 80 percent of employment is concentrated in the services sector, and the majority of higher-skilled, higher-paid jobs within that sector are what economists call “knowledge activities” — roles that primarily involve processing information, producing analysis, generating language, making decisions based on data, and communicating those decisions. Finance, insurance, law, business services, government administration, media, and technology: these are the industries where screen-based cognition is the primary product.

In March 2023, a team of Goldman Sachs economists led by Jan Hatzius published what became one of the most widely cited research papers of the AI era. The report concluded that generative AI could expose the equivalent of 300 million full-time jobs globally to automation. More precisely, the economists found that roughly two-thirds of current jobs in the US and Europe are exposed to some degree of AI automation, and that AI could substitute up to one-quarter of all current work tasks. Administrative and legal professions were the most vulnerable: 46 percent of tasks in office and administrative support roles, and 44 percent of legal tasks, could plausibly be automated.

The implications are staggering, and as CNN reported, they represent the most sweeping potential disruption to the labor market since the Industrial Revolution — except this disruption targets the professional class rather than the working class.

But Goldman Sachs was careful to note that disruption is not the same as erasure. “Most jobs and industries are only partially exposed to automation and are thus more likely to be complemented rather than substituted by AI,” the economists wrote. The picture they painted was one of transformation rather than total replacement — a world in which the nature of cognitive work changes profoundly, even if total employment levels eventually recover. “The good news is that worker displacement from automation has historically been offset by creation of new jobs, and the emergence of new occupations following technological innovations accounts for the vast majority of long-run employment growth.”

That caveat matters. But it may also be less reassuring than it sounds. Historical precedent cuts both ways. Every previous wave of technological disruption eventually created new employment — but “eventually” can mean decades of dislocation, wage depression, and social upheaval for the workers caught in the transition. The handloom weavers displaced by the mechanized loom did not, for the most part, become steam engine operators. They became poorer.

The Numbers Are Already Moving

The theoretical projections are one thing. The early empirical data is another, and it is beginning to tell a consistent story.

A working paper from Harvard Business School, covered by HBS Working Knowledge, examined job postings across the US from 2019 through March 2025. The researchers, led by Professor Suraj Srinivasan, found that after the public launch of ChatGPT in November 2022, job postings for occupations involving structured and repetitive tasks decreased by 13 percent. At the same time, demand for jobs requiring more analytical, technical, or creative work — roles likely to be enhanced rather than replaced by AI — grew by 20 percent. The largest reductions were in the finance and technology sectors.

“Rather than solely eliminating jobs,” Professor Srinivasan observed, “generative AI creates new demand in augmentation-prone roles, suggesting that human-AI collaboration is a key driver of labor market transformation.”

The finding is reassuring and alarming in equal measure. Reassuring because it suggests that AI, at least in its current phase, tends to reshape rather than simply eliminate work. Alarming because a 13 percent drop in postings for automation-prone roles is not a rounding error; it is a structural signal arriving well ahead of what most economists expected.
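How the two HBS figures net out depends on how postings are split between the two groups, which the summary above does not state. As a rough sketch, assume (hypothetically) that every posting falls into exactly one of the two categories; the shares used below are illustrative inputs, not findings from the study, and the function names are made up for this example.

```python
# Illustrative arithmetic only. The -13% and +20% changes come from the text;
# the split between the two groups is a hypothetical input.
AUTOMATION_PRONE_CHANGE = -0.13
AUGMENTATION_PRONE_CHANGE = 0.20


def net_posting_change(automation_prone_share: float) -> float:
    """Net change in total postings for an assumed share of automation-prone roles."""
    if not 0.0 <= automation_prone_share <= 1.0:
        raise ValueError("share must be between 0 and 1")
    augmentation_share = 1.0 - automation_prone_share
    return (automation_prone_share * AUTOMATION_PRONE_CHANGE
            + augmentation_share * AUGMENTATION_PRONE_CHANGE)


def break_even_share() -> float:
    """Share of automation-prone postings at which the two effects cancel."""
    return AUGMENTATION_PRONE_CHANGE / (
        AUGMENTATION_PRONE_CHANGE - AUTOMATION_PRONE_CHANGE
    )


if __name__ == "__main__":
    for share in (0.25, 0.50, 0.75):  # hypothetical splits
        print(f"share={share:.2f} -> net change {net_posting_change(share):+.1%}")
    print(f"break-even share: {break_even_share():.1%}")
```

Under these assumptions the net effect turns negative only once automation-prone roles make up roughly three-fifths of all postings, so a healthy aggregate number can still hide a sharp contraction for one group of workers.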

Meanwhile, Microsoft and LinkedIn’s 2024 Work Trend Index — which surveyed 31,000 people across 31 countries and analyzed trillions of Microsoft 365 productivity signals — found that 75 percent of global knowledge workers are already using AI at work, with adoption having nearly doubled in just six months. The scale of this adoption is unprecedented in modern technological history. For context, it took the internet years to reach the same penetration among office workers that generative AI achieved in months.

The same report found that 90 percent of users say AI helps them save time, 85 percent say it helps them focus on more important work, and 84 percent say it makes them more creative. These are not the numbers of a technology that workers find marginal or incidental. These are the numbers of a tool that is beginning to reshape the cognitive infrastructure of work itself.

Understanding the New Taxonomy of Work

To understand where this transition leads, it helps to think carefully about what knowledge work actually consists of.

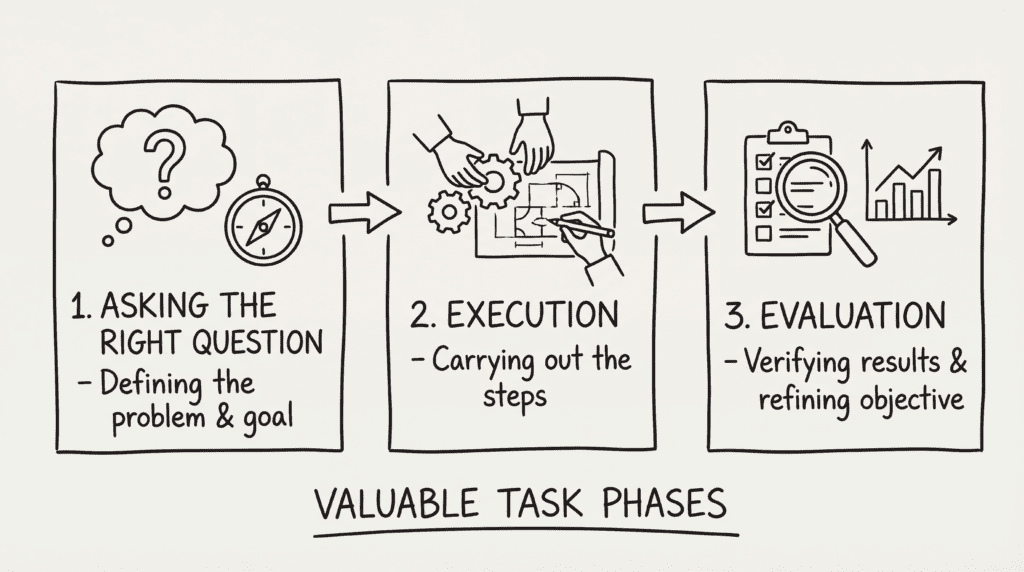

Erik Brynjolfsson, director of the Stanford Digital Economy Lab and one of the most respected economists working on these questions, has proposed a framework that cuts to the heart of the matter. Almost every valuable task, he argues, can be broken into three distinct phases:

1. Asking the right question: defining the problem and the goal.

2. Execution: carrying out the steps to reach that goal.

3. Evaluation: verifying the results and refining the objective.

“For most of human history,” Brynjolfsson wrote in TIME, “human workers have had to do all three. But the defining characteristic of this era is that AI is getting astonishingly good at Part 2: Execution.”

This is not a trivial point. Execution has historically been the most time-consuming and labor-intensive component of most cognitive jobs. A junior lawyer’s work is primarily execution — researching precedents, drafting memos, reviewing documents. A financial analyst’s work is primarily execution — gathering data, building models, running scenarios. A software engineer’s work is primarily execution — translating specifications into working code. If AI can now handle a substantial fraction of that execution burden at near-zero marginal cost, the economics of hiring junior knowledge workers undergoes a fundamental change.

Brynjolfsson argues that this shifts the economic value toward what he calls “Chief Question Officers” — people with the judgment to know what to ask, why it matters, and how to evaluate whether the AI has actually succeeded. “We will be the architects,” he writes. “The AI will be the builders.”

This is an appealing vision. It is also, frankly, a description of a world in which far fewer people are needed to do the same amount of cognitive work.

The Agentic Turn: When AI Stops Assisting and Starts Acting

Much of the current public discussion about AI in the workplace focuses on tools like ChatGPT or Copilot — AI assistants that help individual workers draft emails, summarize documents, or write first-pass code. These tools are genuinely useful and are already changing daily workflows. But they are also, relative to what is coming, fairly primitive.

The emerging frontier is what technologists call “agentic AI” — systems that do not merely respond to prompts but actively pursue goals, take sequences of actions, use tools, browse the web, write and execute code, and coordinate with other AI agents to complete complex, multi-step tasks.

According to a PwC survey of business executives cited by Brynjolfsson in TIME, 79 percent of companies are already leveraging agentic AI in some capacity. This is no longer confined to experimental pilots at tech startups. Agents are being deployed inside mainstream corporations.

When agentic AI matures — and the timelines keep accelerating — the question shifts from “can AI help my analyst draft a report?” to “does my company need as many analysts?” The answer, at current and projected trajectories, is likely: fewer. Not zero. But fewer.

Google’s disclosure that more than a quarter of its new code is now generated by AI, with human engineers reviewing rather than writing it, is the clearest early signal of what this restructuring looks like in practice. The junior developer role, which for decades was the entry point into a lucrative technology career, is being rapidly compressed. The ladder that millions of knowledge workers relied on to climb into middle-class prosperity is losing its lower rungs.

The Economic Consequences: Boom, Disruption, and Concentration

The macroeconomic story is genuinely complicated, and anyone who tells you they know exactly how it plays out is almost certainly wrong. But the broad contours are legible.

On the positive side, the productivity potential is extraordinary. Goldman Sachs estimates that widespread AI adoption could boost annual US labor productivity growth by nearly 1.5 percentage points over a decade and increase global GDP by as much as 7 percent. For context, 7 percent of global GDP is roughly $7 trillion at current output levels. That is the equivalent of adding an economy larger than Germany’s. If AI delivers on its productivity promise, the total volume of goods and services available to humanity grows enormously.
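The two Goldman Sachs figures are easier to compare once the growth rate is compounded. The sketch below assumes the 1.5-percentage-point boost applies in each of ten years and uses a round, assumed world GDP of $100 trillion (the level implied by the $7 trillion figure above). The function and constant names are illustrative.

```python
# Back-of-the-envelope arithmetic; inputs other than 1.5 and 7 are assumptions.
ASSUMED_WORLD_GDP_TRILLIONS = 100.0  # assumption: round figure implied by the text


def cumulative_uplift(extra_growth_pp: float, years: int) -> float:
    """Level uplift after compounding extra annual growth (in percentage points)."""
    return (1.0 + extra_growth_pp / 100.0) ** years - 1.0


def gdp_gain_trillions(world_gdp_trillions: float, uplift_pct: float) -> float:
    """Dollar value (in trillions) of a given percentage increase in GDP."""
    return world_gdp_trillions * uplift_pct / 100.0


if __name__ == "__main__":
    uplift = cumulative_uplift(1.5, 10)
    gain = gdp_gain_trillions(ASSUMED_WORLD_GDP_TRILLIONS, 7.0)
    print(f"Productivity level after 10 years: {uplift:+.1%}")
    print(f"7% of assumed world GDP: ${gain:.1f} trillion")
```

Compounded, 1.5 extra points a year leaves the productivity level about 16 percent higher after a decade, which is the sense in which a seemingly small annual number adds up to a very large one.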

But here is the critical question that the GDP headline obscures: Who captures that value?

The history of technological revolutions suggests that productivity gains do not distribute themselves evenly or automatically. The PwC AI Jobs Barometer 2025 found that industries most exposed to AI are achieving three times higher revenue per employee growth than industries least exposed to AI. That sounds positive — and for firms and their shareholders, it is. But “higher revenue per employee” is often a euphemism for “fewer employees needed to generate the same output.” The productivity gains accrue to capital — to the owners of the AI systems, the companies deploying them, and the relatively small number of highly skilled workers who manage and direct them.
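The ambiguity in that metric is easy to see with made-up numbers. In the sketch below, two hypothetical firms post identical growth in revenue per employee, one by growing revenue and one by cutting staff. None of the figures come from the PwC report.

```python
def revenue_per_employee(revenue: float, headcount: int) -> float:
    """Revenue divided by the number of employees."""
    return revenue / headcount


def growth_rate(before: float, after: float) -> float:
    """Fractional change from `before` to `after`."""
    return after / before - 1.0


# Hypothetical firms: both start at $100M in revenue and 1,000 employees.
BASELINE = revenue_per_employee(100_000_000, 1_000)

# Firm A grows revenue 25% with the same staff.
FIRM_A = revenue_per_employee(125_000_000, 1_000)

# Firm B keeps revenue flat and cuts 200 jobs.
FIRM_B = revenue_per_employee(100_000_000, 800)

if __name__ == "__main__":
    print(f"Firm A growth: {growth_rate(BASELINE, FIRM_A):.0%}")
    print(f"Firm B growth: {growth_rate(BASELINE, FIRM_B):.0%}")
```

The headline metric is the same for both firms; only the headcount line reveals which kind of productivity gain it was.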

For workers displaced from the middle of the labor market, the picture is grimmer. The American Enterprise Institute’s De-Skilling the Knowledge Economy report draws a sharp historical parallel to the manufacturing displacement of the 1980s and 1990s. Just as robotics and information technology “de-skilled” routine factory labor — forcing workers either to upgrade their skills dramatically or accept lower-paid work in other sectors — AI is now doing the same to routine knowledge labor. “The next turn of the automation ratchet appears to, once again, be tightening the squeeze on middle-skill jobs,” the report concludes — “only this time in America’s offices rather than its factories.”

The report projects a compression of the middle of the talent distribution: as AI absorbs routine cognitive tasks, workers face a stark “up or out” dynamic. Those who can combine technical knowledge with strong interpersonal, creative, and judgment-based skills will likely prosper. Those who cannot may find themselves pushed into lower-paid work or out of the knowledge economy entirely.

This is the mechanism by which a genuine productivity boom can coexist with rising inequality: the gains are real, but they are concentrated.

The Industries at Greatest Risk

It is worth being specific about which screen-based industries face the most immediate structural disruption, because the aggregate statistics can obscure the sheer variety of what is at stake.

Financial services: Goldman Sachs identified financial and business operations roles as among the most exposed to automation (35 percent of tasks). Investment research, financial modeling, insurance underwriting, and claims processing are all highly automatable. The irony that the Goldman Sachs report itself was produced by analysts whose very profession it describes as vulnerable is not lost on observers.

Legal work: With 44 percent of legal tasks potentially automatable, junior lawyers and paralegals face the most acute near-term risk. Document review, contract analysis, legal research, and routine drafting are exactly the kinds of structured, information-intensive tasks that large language models perform well. Large law firms are already deploying AI tools that accomplish in minutes what once took junior associates hours. The billable hour — the profession’s fundamental pricing unit — is under structural threat.

Journalism and content creation: The Microsoft New Future of Work Report 2025 found that writers, editors, and news analysts rank among the occupations with the highest AI applicability scores. Basic content generation — news summaries, sports recaps, earnings reports, product descriptions — is already being automated at scale by major publishers. The craft survives; the volume of human practitioners required may not.

Software engineering: The compression of coding roles — particularly at the junior and mid-level — is already visible in hiring data. The HBS study found that the technology sector saw some of the largest reductions in job postings for automation-prone roles after ChatGPT’s launch. What once required a team of five engineers to build in a week is increasingly achievable by one senior engineer directing AI agents over a day.

Customer service and administrative support: The 46 percent automation exposure figure for office and administrative roles reflects just how many people in the modern economy are employed essentially to manage information flows — scheduling, correspondence, data entry, inquiry handling, routing of requests. Virtual agents and AI-powered customer service tools are absorbing these tasks rapidly, and the Microsoft Bing Copilot research — which analyzed 200,000 actual user conversations — found customer service representatives and sales roles among the highest AI applicability occupations.

Consulting and management analysis: Management analysts and consultants, roles that command premium salaries precisely because they synthesize large amounts of information and make strategic recommendations, scored high on AI applicability in the Microsoft study. When AI can ingest a year’s worth of financial data, compare it to industry benchmarks, identify anomalies, and draft a preliminary findings memo in seconds, the value proposition of paying $500 an hour for a human to do the same thing requires re-examination.

The Social Architecture of Disruption

Economic displacement numbers are abstract. What they represent in human terms is anything but.

For the past two generations, the social contract in most developed economies has run roughly as follows: invest in education, develop specialized cognitive skills, enter a profession, and trade those skills for a livable middle-class income. The knowledge economy was the escape route from manual labor’s precariousness. It offered not just money but identity, purpose, and social status.

If AI substantially erodes the economic foundations of that social contract, the consequences are not merely financial. They are deeply psychological and sociological. Professions are not just jobs. They are communities, identities, and sources of meaning. A lawyer is not simply someone who does lawyerly tasks for pay; they are a member of a professional guild with its own culture, traditions, and self-understanding. When that identity is destabilized — when a lawyer finds that a significant portion of what defined their value can now be done faster and cheaper by software — the psychological consequences can be severe.

The WBUR On Point discussion of AI and UBI surfaced this point vividly through the lens of science fiction. The authors of The Expanse, writing under the pen name James S.A. Corey, imagined a future Earth where AI and automation have rendered most jobs obsolete. The UN government provides “Basic” — not income, but services: housing, food, healthcare — to billions of people who have no meaningful economic role. The result is not contentment. It is a population adrift, stripped of agency, consumed by purposelessness. Characters on Basic are not described as liberated. They are described as broken.

This is speculative fiction, but it captures something real that economic models tend to miss: that work is not just an economic transaction. It is a source of structure, social connection, self-esteem, and meaning. Mass displacement from cognitive work — even if accompanied by financial transfers — risks producing a social and psychological crisis that income alone cannot address.

There is also the matter of intergenerational effects and educational misalignment. The degrees and credentials that millions of young people are currently pursuing — law degrees, MBAs, computer science bachelor’s degrees, finance qualifications — were designed for a labor market that may look substantially different by the time those students graduate.

The AEI report notes that the Federal Reserve Bank of New York found, by early 2025, that unemployment rates for liberal arts graduates had fallen to roughly half those of computer science and engineering graduates — a jarring reversal of the conventional wisdom about the economic value of technical versus humanistic education. If the skills that AI is fastest to commoditize are precisely the technical skills that higher education has been most urgently promoting, then an entire generation of students may be training for a labor market that is contracting beneath them.

The Concentration of Power Problem

Beyond the labor market disruption, there is a deeper structural concern that deserves serious attention: the concentration of economic and political power in the hands of whoever controls the AI systems.

If scalable machine intelligence becomes better than most humans at most screen-based work, the economic value it generates is immense. But that value flows, in the first instance, to the companies that own and operate the systems. A handful of large technology companies — and, increasingly, the nation-states that regulate, subsidize, and sometimes own them — stand to accumulate extraordinary leverage over economic activity.

As a 2025 study in Frontiers in Artificial Intelligence argued, drawing on the sociologist Pierre Bourdieu’s concept of “symbolic violence,” the AI elite’s advocacy for solutions like Universal Basic Income may itself be a form of soft power — a way of pre-empting criticism of AI-induced displacement by offering a palliative that does not challenge the fundamental concentration of productive capacity. “By advocating for UBI,” the study’s author notes, “AI elites position themselves as benevolent visionaries who are concerned about the wellbeing of humanity. However, this framing distracts from the fact that the same individuals who are pushing for UBI are also those who stand to gain the most from the proliferation of AI technologies.”

This is a provocative argument, and it should not be accepted uncritically. But it identifies a real tension: the organizations most capable of influencing how AI-driven productivity gains are distributed are the same organizations whose economic interests are served by rapid, unregulated AI deployment.

The geopolitical dimension compounds this. The United States and China are engaged in an intense competition for AI dominance that frames AI development as a matter of national security, making the kind of measured, internationally coordinated governance frameworks that might ensure broader benefit-sharing exceptionally difficult to achieve. The European Union has moved more aggressively toward regulation with the EU AI Act, but regulatory frameworks risk becoming impediments to innovation in the jurisdictions that adopt them while doing little to constrain AI development in jurisdictions that do not.

The Policy Landscape: What Are the Options?

Governments and policymakers are not passive spectators in this transition. The choices made over the next decade will do more to determine whether widespread AI deployment is a net positive or a net catastrophe for working people than the technology itself. Here are the policy levers most seriously discussed:

Universal Basic Income (UBI): The idea of providing all citizens with an unconditional cash payment, regardless of employment status, has gained significant traction as AI-driven displacement accelerates. The LSE Business Review has argued that UBI represents a promising avenue for a new social contract in the age of AI, with successful implementation dependent on sustainable funding mechanisms.

The most rigorous test of UBI to date was not encouraging, however. A multi-year study funded by OpenAI’s Sam Altman and conducted by OpenResearch provided $1,000 per month to 1,000 low-income individuals between 2020 and 2023. The results, as reported in the PMC study on AI and UBI, showed that while the payments helped cover essential expenses, they “did not lead to significant improvements in employment quality, education, or overall health.” UBI, in other words, can cushion economic shocks, but it is not a substitute for meaningful work or social mobility.

The funding arithmetic is also daunting: the Tax Project Institute estimates that a poverty-level UBI for all US citizens would cost approximately $8.5 trillion annually, more than the entire current federal budget, before accounting for any offsetting reductions in existing programs.
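The gross cost of any UBI is simply population times payment. The sketch below backs out the annual payment per person implied by the $8.5 trillion estimate, using an assumed population of about 335 million. That population figure and the function names are assumptions for illustration, not inputs taken from the Tax Project Institute.

```python
ESTIMATED_ANNUAL_COST = 8.5e12   # from the text: roughly $8.5 trillion per year
ASSUMED_POPULATION = 335e6       # assumption: approximate number of recipients


def gross_ubi_cost(recipients: float, annual_payment: float) -> float:
    """Gross annual cost before any offsetting program reductions."""
    return recipients * annual_payment


def implied_annual_payment(total_cost: float, recipients: float) -> float:
    """Per-person annual payment implied by a total cost and a recipient count."""
    return total_cost / recipients


if __name__ == "__main__":
    payment = implied_annual_payment(ESTIMATED_ANNUAL_COST, ASSUMED_POPULATION)
    print(f"Implied payment: ${payment:,.0f} per person per year")
    print(f"Implied payment: ${payment / 12:,.0f} per person per month")
```

Changing the assumed number of recipients, for example by restricting payments to adults, shifts the implied amount considerably, so the headline cost is best read alongside its eligibility assumptions.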

AI taxation and wealth redistribution: Rather than direct income transfers, some economists propose taxing the productivity gains generated by AI systems and using those revenues to fund retraining programs, expanded public services, or targeted support for displaced workers. This approach has the advantage of capturing value at the source — from the companies that benefit most from AI — rather than distributing it universally regardless of need.

Mandatory reskilling programs: HBS Professor Srinivasan recommends that companies “[invest] in reskilling programs to transition workers to roles enhanced by AI” and engage in “[continuous upskilling] in generative AI to leverage new tools.” This is sensible advice, but it raises the question of what, exactly, workers are being reskilled for. If the trajectory of AI capability continues on its current curve, the half-life of any specific technical skill is shortening rapidly. Reskilling programs risk training people for jobs that will themselves be automated by the time the training is complete.

Reduced working hours and work-sharing: A less-discussed but historically significant policy option is to reduce standard working hours, in effect distributing the productivity gains of automation as leisure rather than unemployment. Germany’s Kurzarbeit (short-time work) system, which spreads reduced working hours across a workforce rather than concentrating layoffs, offers a model. If AI can do in four hours what currently takes eight, the humane policy response may be to work four hours rather than to fire half the workforce.
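The four-hours-versus-eight trade-off above can be written down directly. Assuming total output is held constant and AI multiplies output per hour by some factor (both simplifying assumptions), a firm can either keep everyone and cut hours or keep hours and cut headcount. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Staffing:
    headcount: float
    hours_per_day: float

    @property
    def total_hours(self) -> float:
        """Total labor hours worked per day across the workforce."""
        return self.headcount * self.hours_per_day


def share_work(current: Staffing, productivity_multiplier: float) -> Staffing:
    """Keep every worker and shorten the working day."""
    return Staffing(current.headcount,
                    current.hours_per_day / productivity_multiplier)


def cut_staff(current: Staffing, productivity_multiplier: float) -> Staffing:
    """Keep the working day and reduce the number of workers."""
    return Staffing(current.headcount / productivity_multiplier,
                    current.hours_per_day)


if __name__ == "__main__":
    today = Staffing(headcount=100, hours_per_day=8)  # hypothetical firm
    for plan in (share_work(today, 2.0), cut_staff(today, 2.0)):
        print(plan, "total hours:", plan.total_hours)
```

Both plans use the same total labor hours and produce the same output; they differ only in who bears the reduction, which is the policy choice the work-sharing argument turns on.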

Strengthening labor institutions: Unions and collective bargaining mechanisms, weakened over the past four decades, could play a significant role in ensuring that AI productivity gains are shared with workers rather than captured entirely by capital. Several major unions in the US and Europe have already begun negotiating AI-specific clauses into labor contracts, addressing questions of notification, retraining obligations, and profit-sharing.

The Case for Cautious Optimism

It would be a mistake to read all of the above as a counsel of despair. There are genuine reasons to believe that an AI-transformed economy could be substantially better for human welfare in the aggregate, even if the transition is painful.

The democratization of expertise is perhaps the most underappreciated potential benefit. Legal advice, financial planning, medical second opinions, high-quality tutoring, sophisticated market research — these have historically been services available only to those who could afford them. AI has the potential to make these capabilities accessible to everyone, dramatically reducing the inequality in access to professional knowledge that currently tracks closely with income. A small business owner in a rural community who could never afford a corporate lawyer can now access sophisticated contract analysis at marginal cost. A first-generation college student who cannot afford tutoring can work with an AI that has unlimited patience and encyclopedic knowledge.

Scientific and medical acceleration represents another compelling upside. The bottleneck in solving humanity’s most pressing problems — climate change, antibiotic resistance, neurological disease, materials science — is frequently not money or will but the pace at which human researchers can process data, test hypotheses, and iterate on findings. If AI substantially accelerates that process, the long-run benefits to human health and wellbeing could dwarf the short-run displacement costs.

Liberation from cognitive drudgery is also real. Much of what constitutes screen-based work is not, if we are honest, intrinsically meaningful. Processing insurance claims, filling out compliance forms, writing boilerplate contracts, generating monthly reports that follow a standard template — these tasks occupy enormous amounts of human cognitive capacity and produce relatively little of intrinsic value. If AI absorbs this cognitive drudgery, the humans freed from it have more capacity for the work that actually requires human presence: teaching, caregiving, creative endeavor, political engagement, community building, and the many forms of human connection that resist systematization.

Brynjolfsson’s framing of the “Chief Question Officer” — the human who decides what problems are worth solving and evaluates whether the AI has actually solved them — is, at its best, a vision of work that is more intrinsically human than what it replaces.

The Philosophical Reckoning

Underneath all of the economics and policy is a deeper question that our civilization is not yet prepared to answer: What is human work for?

For most of the past two centuries, the answer has been assumed rather than examined. Work is for producing goods and services. It is for generating income. It is for GDP. And by extension, what we call a “good economy” is one in which most working-age adults are employed, and employment is the primary mechanism by which they obtain the resources they need to live and the status they need to participate in society.

But this instrumental conception of work has always coexisted, sometimes uneasily, with a deeper view: that work is a form of self-expression, a mode of participation in something larger than oneself, a way of developing skill and competence, a source of discipline and meaning. The classical philosophers knew this. So did the craft guild traditions of the medieval period. So do most people who have experienced the particular satisfaction of doing something difficult well.

If AI becomes capable of doing most screen-based work better and cheaper than humans, the instrumental case for human cognitive labor weakens. The deeper case — the case from meaning, identity, and human flourishing — does not. But it requires a fundamental rethinking of how we structure economic participation, social recognition, and the relationship between contribution and reward.

This is not an abstract philosophical puzzle. It is a practical political question that will need to be resolved within the lifetimes of people who are currently alive. Elon Musk, in a moment of unusual candor cited in the PMC study on AI and UBI, framed it starkly: “If a computer can do, and the robots can do, everything better than you, does your life have meaning? I do think there’s perhaps still a role for humans in that we may give AI meaning.”

That is not a reassuring answer. It suggests that the burden of meaning-making shifts from the economy to the individual — and to culture, philosophy, and community — at precisely the moment when those institutions are themselves under strain.

The “Turing Trap” and the Path Not Taken

Before concluding, it is worth dwelling on a concept that Brynjolfsson has introduced: the “Turing Trap.” The trap, he argues, is the temptation to use AI primarily to mimic and replace humans — to drive down labor costs, concentrate the benefits, and substitute machine output for human output wherever possible. This is economically rational from the perspective of any individual firm. It is socially catastrophic in aggregate.

The alternative path — what Brynjolfsson calls genuine augmentation — is one in which AI is designed and deployed not to maximize labor displacement but to maximize human capability. The question is not “what tasks can AI do instead of humans?” but “what can humans do, with AI assistance, that neither could do alone?” Medical diagnosis that combines AI pattern recognition with physician judgment and patient communication. Legal practice that combines AI document processing with lawyer advocacy and ethical reasoning. Education that combines AI personalization with teacher mentorship and relationship-building.

This is not a utopian fantasy. It is a design choice. The same technology that enables displacement enables augmentation. Which path is taken depends on how firms structure their AI deployments, how regulators constrain or incentivize certain uses, how workers and their representatives negotiate the terms of adoption, and how societies decide to define and reward valuable contribution.

The outcome is not determined by the technology. It is determined by the choices we make while the technology is still young enough to be shaped.

Conclusion: The Decade That Will Define the Century

We are at the beginning of what may prove to be the most significant labor market transition in human history. The question is not whether scalable machine intelligence will eventually surpass most humans at most screen-based work. The early evidence (the HBS job posting data, the Goldman Sachs analysis, the Microsoft workplace surveys, the PwC productivity data) points in the same direction. The question is what kind of society we build in response.

Two futures are available. In one, the productivity gains from AI are captured by a small number of companies and individuals, the middle of the knowledge economy hollows out, displaced workers lack the social support to transition effectively, wealth and power concentrate, and the fraying of meaningful work produces a political and social crisis of the first order. This is not a far-fetched dystopia. It is, broadly, what happened to manufacturing communities when the factories closed, just on a much larger scale.

In the other future, policymakers move proactively — taxing AI productivity gains and reinvesting them in worker transitions, retraining, and expanded public services; redesigning educational systems to train for the skills that AI complements rather than replaces; building new forms of social recognition for the caregiving, community, and creative work that no machine will easily commoditize; and redesigning AI systems themselves to augment rather than replace human capability wherever possible.

The technology does not decide which future we get. We do.

The AEI report is right that “these outcomes, positive and negative, are not foreordained.” And Erik Brynjolfsson’s observation in TIME captures the essential choice with admirable clarity: “The promise of the second machine age is a world where machines augment our minds, not just replace our muscles. But whether AI leads to broad-based empowerment or rigid centralization isn’t a technological question; it is a societal one.”

The next decade will determine which answer we give. The machines are learning fast. The question is whether we are learning faster.