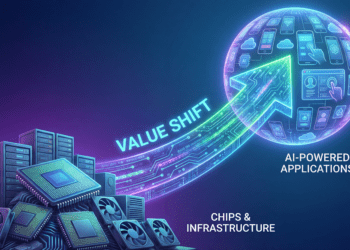

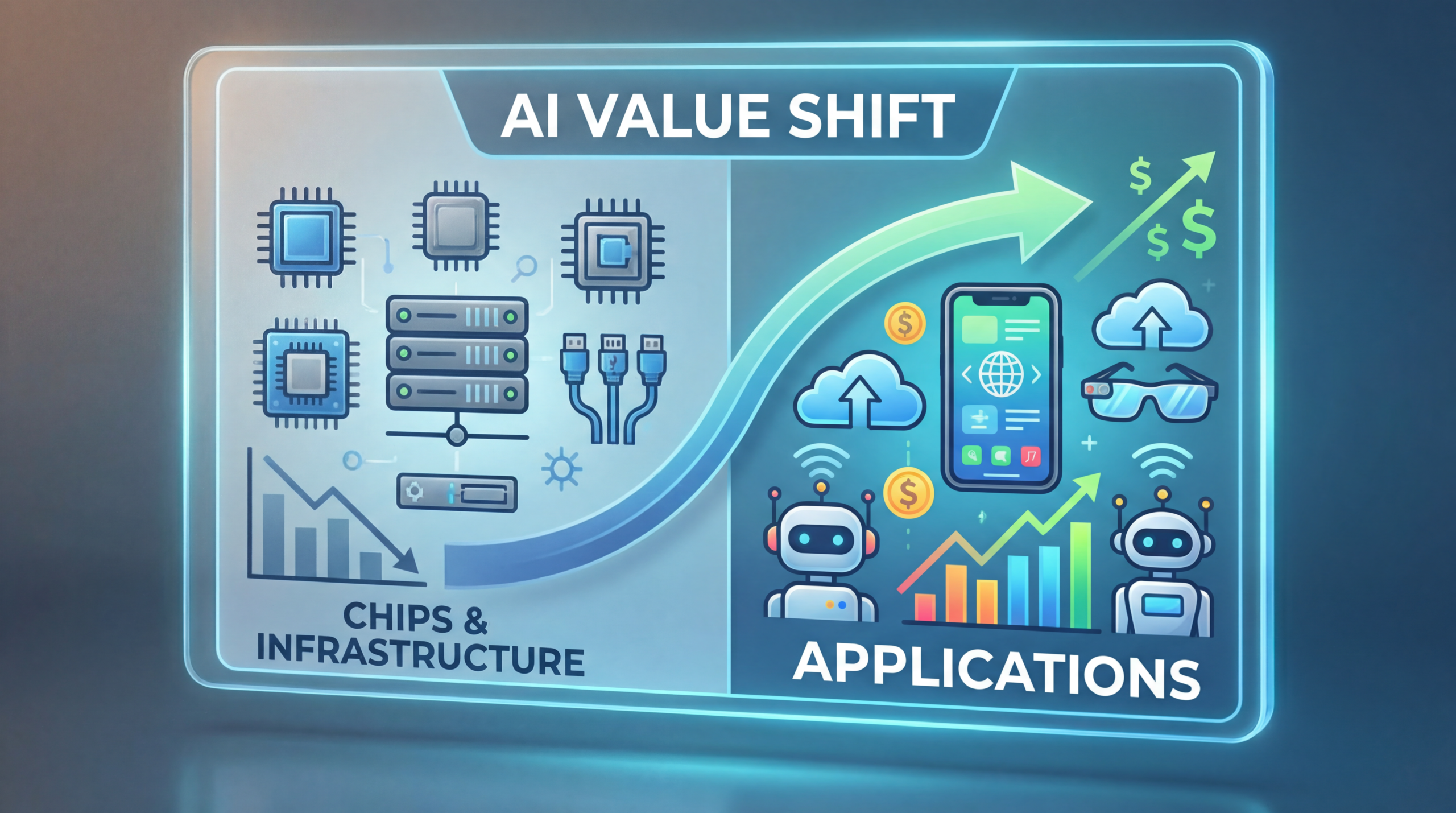

There is a pattern that every major technology wave follows, and it is so consistent that it borders on law. The people who sell the shovels get rich first. Then, quietly and inevitably, the people who use the shovels to build things get richer.

It happened with the railroads. The companies that laid track across America in the 19th century were celebrated as the defining enterprises of their era. But the freight companies, the agricultural businesses, and the retailers who used those rails to move goods across a continent captured the lasting value.

It happened with the internet. Cisco and Sun Microsystems built the routers and servers that made the web possible, and for a brief, glorious moment Cisco was the most valuable company in the world. Then Google, Amazon, and Facebook arrived and built things on top of that infrastructure that were worth ten times as much.

It happened with mobile. ARM Holdings and Qualcomm designed the chips inside every smartphone, but Apple and Uber and Instagram captured the value that actually mattered to consumers and investors.

We are now watching this same pattern play out in real time with artificial intelligence — and we are, right now, at the inflection point where the value begins its migration up the stack.

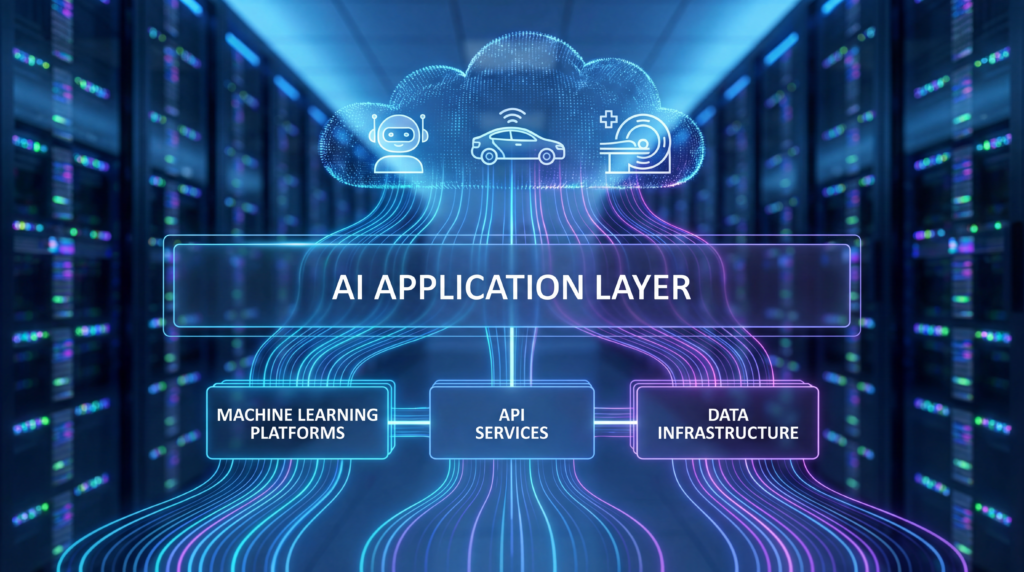

The thesis is straightforward: AI’s first phase was about chips. Its second phase was about infrastructure. Its third phase — the one we are entering — is about applications. The companies that build genuinely useful products on top of all that compute will capture the majority of the economic value that AI creates. And that value will be enormous.

This article is about understanding why that shift is happening, what it means for the companies competing to win, and how to think about identifying the winners before they become obvious.

Phase One: The Chip Wars

To understand where we are going, you have to understand where we have been.

The first bottleneck in the AI revolution was raw compute. Training a large language model requires running billions of matrix multiplications across billions of parameters, repeatedly, for weeks or months. For most buyers, the only practical hardware for doing this at scale was the GPU (specifically, NVIDIA’s GPU), and for most of the early 2020s, demand for those chips vastly outstripped supply.

NVIDIA’s ascent during this period was one of the most dramatic value creation stories in the history of capitalism. The company’s data center revenue grew from approximately $15 billion in fiscal year 2023 to $47.5 billion in fiscal year 2024, then surged to over $115 billion in fiscal year 2025 (ending January 2025), according to Silicon Analysts and NVIDIA’s own financial reports. By fiscal year 2026, NVIDIA reported record full-year revenue of $215.9 billion — up 65% year over year — with data center revenue of $62.3 billion in Q4 alone. At its peak in 2024, NVIDIA commanded approximately 87% of the AI accelerator market by revenue.

The economics were extraordinary. NVIDIA’s H100 GPU, which costs approximately $3,320 to manufacture, was selling for about $28,000, implying a per-unit gross margin of roughly 88% on manufacturing cost alone, according to Silicon Analysts. The Decoder reported consistent figures, citing Raymond James estimates of $3,320 in manufacturing costs against retail prices of $25,000–$30,000. There is almost no precedent in industrial history for a manufactured product commanding that kind of markup at that kind of scale.
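The margin arithmetic above can be checked in a few lines. This is a minimal sketch that uses only the two figures cited in the text (a $3,320 manufacturing cost and a $28,000 selling price). The function names are illustrative, and the calculation ignores R&D, software, and other costs that a company-level gross margin would include.

```python
def gross_margin(price: float, unit_cost: float) -> float:
    """Gross margin as a fraction of the selling price."""
    return (price - unit_cost) / price


def price_to_cost_multiple(price: float, unit_cost: float) -> float:
    """How many times the unit cost the selling price represents."""
    return price / unit_cost


# Figures quoted in the article (manufacturing cost only).
H100_UNIT_COST = 3_320
H100_PRICE = 28_000

margin = gross_margin(H100_PRICE, H100_UNIT_COST)
multiple = price_to_cost_multiple(H100_PRICE, H100_UNIT_COST)

print(f"Gross margin: {margin:.1%}")                   # Gross margin: 88.1%
print(f"Price as multiple of cost: {multiple:.1f}x")  # Price as multiple of cost: 8.4x
```

Swapping in the $25,000 or $30,000 ends of the reported price range moves the result by only a point or two, which is why the roughly 88% figure is robust to the exact street price.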

The moat was not just the hardware. NVIDIA’s real competitive advantage was CUDA — its proprietary software platform for GPU computing, built over more than 20 years, with over 4 million developers and every major machine learning framework optimized for it first. Switching away from CUDA is not a matter of dollars; it is a matter of years of engineering work. That software lock-in is what allowed NVIDIA to charge $28,000 for a chip that costs $3,320 to make.

Challengers emerged, of course. AMD’s MI300X offered competitive performance at lower prices. Google built its own TPUs. Amazon developed Trainium. Microsoft created Maia. Meta built MTIA. But as Silicon Analysts documents, AMD — the only real merchant alternative — held just 5–8% of the market, while hyperscaler custom silicon collectively accounted for perhaps 10–15% by 2026. NVIDIA’s absolute revenue continued growing even as its percentage share began to decline, because the total market was expanding faster than any competitor could capture.

But here is the thing about Phase One: it is maturing. The signs are everywhere.

The most dramatic signal came in January 2025, when a Chinese AI startup called DeepSeek released a model that sent shockwaves through the industry. DeepSeek’s V3 model was trained for approximately $5.6 million on 2,048 NVIDIA H800 GPUs over 55 days. Its R1 reasoning model — released shortly after — cost just $294,000 to train, using 512 H800 chips for 80 hours, according to a peer-reviewed paper published in Nature and reported by Reuters.

The combined disclosed training cost for both models was approximately $5.9 million — a figure that, while it excludes prior research and ablation experiments, was orders of magnitude below what Western labs had been spending. As IDC noted, DeepSeek’s approach challenged “some of the most fundamental cost and efficiency assumptions in AI development.”
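The disclosed figures can be tallied directly. The sketch below uses only the numbers quoted above and assumes the 55-day run kept every GPU busy 24 hours a day, which is a simplification. Variable names are illustrative.

```python
HOURS_PER_DAY = 24


def gpu_hours(num_gpus: int, wall_clock_hours: float) -> float:
    """Total GPU-hours for a run that uses num_gpus for wall_clock_hours."""
    return num_gpus * wall_clock_hours


# Figures quoted in the article.
v3_gpu_hours = gpu_hours(2_048, 55 * HOURS_PER_DAY)  # V3: 2,048 H800s for 55 days
r1_gpu_hours = gpu_hours(512, 80)                    # R1: 512 H800s for 80 hours

v3_cost_usd = 5_600_000
r1_cost_usd = 294_000
combined_cost_usd = v3_cost_usd + r1_cost_usd

print(f"V3: {v3_gpu_hours:,.0f} GPU-hours")                  # V3: 2,703,360 GPU-hours
print(f"R1: {r1_gpu_hours:,.0f} GPU-hours")                  # R1: 40,960 GPU-hours
print(f"Combined: ${combined_cost_usd / 1e6:.2f} million")   # Combined: $5.89 million
```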

The market reacted violently. NVIDIA lost $589 billion in market capitalization in a single day on January 27, 2025 — the largest single-day market cap loss in US stock market history, according to Arthnova. Combined tech sector losses exceeded $800 billion.

The deeper implication of DeepSeek was not about geopolitics or export controls. It was about the fundamental economics of compute. As IntuitionLabs documented, DeepSeek’s inference pricing came in at 20–50x cheaper than OpenAI’s equivalent — what costs tens of thousands of dollars per month on closed APIs could be done for hundreds of dollars on DeepSeek. The cost of running AI is falling fast, and when the cost of a raw material falls, the value migrates to whoever uses that raw material most cleverly.

The chip phase is not over. NVIDIA will remain a dominant and highly profitable company for years; its FY2025 data center revenue of more than $115 billion makes that clear. But the era of chips as the primary locus of value creation in AI is drawing to a close. The shovels have been sold. Now someone has to build something with them.

Phase Two: The Infrastructure Build-Out

If Phase One was about the chips themselves, Phase Two was about everything required to run them at scale: the data centers, the power infrastructure, the networking, the cooling systems, and the cloud platforms that made AI accessible to enterprises that couldn’t build their own GPU clusters.

The numbers involved in this build-out are almost incomprehensible in their scale.

According to CreditSights, capital expenditure for the top five hyperscalers — Amazon, Alphabet/Google, Microsoft, Meta, and Oracle — grew from approximately $256 billion in 2024 to a projected $443 billion in 2025, and is forecast to reach $602 billion in 2026.

Roughly 75% of that spend is directly tied to AI infrastructure: servers, GPUs, data centers, and networking equipment. As the IEEE ComSoc Technology Blog reported, hyperscaler capex is now set to make up 2.2% of GDP — a figure that dwarfs the broadband buildout of the early 2000s, the Apollo program, and the Interstate Highway System.
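To put the CreditSights trajectory in growth-rate terms, the sketch below computes year-over-year growth and the two-year compound annual growth rate from the three capex figures quoted above. The helper names are illustrative, and the 2025 and 2026 inputs are projections, not reported results.

```python
# Top-five hyperscaler capex in billions of USD, as quoted in the article.
capex_billions = {2024: 256, 2025: 443, 2026: 602}


def yoy_growth(series: dict[int, float]) -> dict[int, float]:
    """Growth of each year over the previous year, as a fraction."""
    years = sorted(series)
    return {
        curr: series[curr] / series[prev] - 1
        for prev, curr in zip(years, years[1:])
    }


def cagr(start: float, end: float, periods: int) -> float:
    """Compound annual growth rate over the given number of periods."""
    return (end / start) ** (1 / periods) - 1


growth = yoy_growth(capex_billions)
two_year_cagr = cagr(capex_billions[2024], capex_billions[2026], periods=2)

for year, rate in growth.items():
    print(f"{year}: {rate:+.0%} vs prior year")
print(f"2024-2026 CAGR: {two_year_cagr:.0%}")
# 2025: +73% vs prior year
# 2026: +36% vs prior year
# 2024-2026 CAGR: 53%
```

Even on these projections the growth rate roughly halves from 2025 to 2026, which fits the argument that the build-out is still expanding but is past its steepest acceleration.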

The individual company commitments are staggering. Amazon projected capital expenditures of $125 billion in 2025, with 2026 expected to be even higher. Microsoft’s CFO Amy Hood acknowledged that the company had been “short on computing power for many quarters” and was doubling its data center footprint. Meta signaled that its capex — already nearly $72 billion in 2025 — would be “notably larger” in 2026. Google projected full-year capex of $91–93 billion. As IEEE ComSoc reported, big tech was on track to invest an aggregate of $400 billion into AI initiatives in 2025 alone.

The picks-and-shovels beneficiaries of this infrastructure wave were numerous and well-documented. Power and energy companies — Vistra, Constellation, NRG — saw their valuations surge as data centers consumed an ever-larger share of the electrical grid. Data center REITs like Equinix and Digital Realty became AI infrastructure plays.

Networking companies like Arista and Broadcom benefited from the insatiable demand for high-speed interconnects. Cooling specialists like Vertiv became critical suppliers. The Stargate project — backed by SoftBank, OpenAI, and Oracle, with a stated commitment of $500 billion in AI infrastructure over four years — became a symbol of the era’s ambition.

But Phase Two, like Phase One before it, is showing signs of maturation.

The most fundamental constraint is no longer chips — it is power. Data centers are consuming electricity at a rate that is straining grids across the United States and Europe. The bottleneck has shifted from silicon to kilowatts.

Meanwhile, the efficiency gains documented by DeepSeek and others are beginning to reduce the raw compute required for a given level of AI capability. As IDC noted, the industry is experiencing “a growing shift away from brute-force scaling toward intelligent optimization.” The Mixture-of-Experts architecture used by DeepSeek, in which a 671-billion-parameter model activates only 37 billion parameters for each token it processes (as documented by IntuitionLabs), is a preview of how AI systems will become dramatically more efficient over time.
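The scale of that sparsity is easy to quantify. This is a minimal sketch using the two parameter counts quoted above. Treating compute as roughly proportional to the number of active parameters is a simplifying assumption for illustration, not a claim from the cited sources.

```python
# Parameter counts quoted in the article, in billions.
TOTAL_PARAMS_BILLIONS = 671
ACTIVE_PARAMS_BILLIONS = 37

# Share of the model that is active at once in the Mixture-of-Experts design.
active_fraction = ACTIVE_PARAMS_BILLIONS / TOTAL_PARAMS_BILLIONS

# How many times larger the full model is than its active slice.
total_to_active_ratio = TOTAL_PARAMS_BILLIONS / ACTIVE_PARAMS_BILLIONS

print(f"Active share of parameters: {active_fraction:.1%}")    # Active share of parameters: 5.5%
print(f"Total-to-active ratio: {total_to_active_ratio:.1f}x")  # Total-to-active ratio: 18.1x
```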

There is also a financial sustainability question. As IEEE ComSoc reported, Meta and Oracle issued $75 billion in bonds and loans in just two months of 2025 to fund data center construction. At some point, the market demands a return on that investment — and that return can only come from the application layer.

The highway has been built. Now someone has to drive on it.

Why Value Always Migrates Up the Stack

Before diving into what Phase Three looks like, it is worth pausing to understand why this migration happens. It is not random. It follows a consistent economic logic that has played out across every major technology wave.

The mechanism is commoditization. When a new technology emerges, the initial bottleneck is always the enabling infrastructure — the thing without which nothing else is possible. That scarcity creates enormous pricing power for whoever controls it. But as the technology matures, competition increases, efficiency improves, and the enabling infrastructure becomes cheaper and more widely available. The bottleneck moves up the stack, and so does the value.

The railroad analogy is instructive. In the 1860s and 1870s, the companies laying track across America were celebrated as the defining enterprises of their era. But by the 1880s and 1890s, the real value had migrated to the companies using those rails — the freight operators, the agricultural businesses, the retailers who could now reach national markets. The rails themselves became a commodity; the differentiated value was in what you did with them.

The internet followed the same pattern with almost eerie precision. Cisco Systems, which made the routers that powered the internet, reached a market capitalization of $555 billion at the peak of the dot-com bubble in 2000 — briefly the most valuable company in the world. Sun Microsystems made the servers. 3Com made the networking equipment. All of them were picks-and-shovels plays on the internet buildout. And all of them were eventually eclipsed by the companies that built things on top of that infrastructure: Google, Amazon, Facebook, Netflix. The infrastructure became a commodity; the applications captured the value.

Mobile followed the same arc. ARM Holdings designed the chip architecture inside virtually every smartphone. Qualcomm made the modems. But Apple — which used ARM chips and Qualcomm modems — became the most valuable company in the world by building a product that consumers loved and an ecosystem that locked them in. Uber, Instagram, and WhatsApp created billions of dollars of value on top of infrastructure they didn’t own.

The economic logic is simple: when the cost of a raw material falls, the value accrues to whoever uses that raw material most cleverly. As AI inference costs fall — with DeepSeek demonstrating 20–50x cost reductions versus incumbents per IntuitionLabs — the ROI of AI shifts from owning the compute to building the applications that run on it. The question is no longer “who has the most GPUs?” It is “who has built the most useful thing?”

Phase Three: The Application Layer

We are now entering Phase Three, and the opportunity is enormous.

The application layer in AI is not a single market. It is a collection of overlapping markets, each with its own dynamics, competitive landscape, and path to value creation. Understanding the distinctions between them is essential for anyone trying to navigate this landscape.

Vertical AI: The Biggest Near-Term Opportunity

The most compelling near-term opportunity in the AI application layer is vertical AI — companies that take foundation model capabilities and apply them to specific industries with deep domain expertise, proprietary data, and tight workflow integration.

The logic of vertical AI is straightforward. A general-purpose AI model like GPT-4 or Claude is extraordinarily capable, but it does not know the specific workflows, terminology, regulatory requirements, and edge cases of your industry. A vertical AI company that has spent years building a product for, say, legal document review or clinical documentation has something the foundation model providers cannot easily replicate: deep domain knowledge, proprietary training data, and integrations with the specific tools that practitioners actually use.

Harvey, the AI legal platform, is perhaps the clearest example of this thesis in action. Built specifically for law firms, Harvey understands legal terminology, case law, document structures, and the specific workflows that lawyers use. It is not a general-purpose chatbot with a legal prompt; it is a purpose-built system that integrates with the tools law firms already use and is trained on legal data that general models have not seen. The result is a product that is dramatically more useful to lawyers than any general-purpose AI — and that commands premium pricing and high retention as a result.

Abridge, which focuses on clinical documentation, follows the same pattern. Physicians spend an enormous amount of time on administrative documentation — writing up notes after patient visits, coding diagnoses, filling out forms. Abridge uses AI to automate this process, integrating directly with electronic health record systems and understanding the specific language and requirements of clinical documentation. The value proposition is not “AI for healthcare” in the abstract; it is a specific, measurable reduction in the administrative burden on physicians, with direct integration into the systems they already use.

Glean, which focuses on enterprise search and knowledge management, has built a product that connects to all of a company’s internal data sources — Slack, Google Drive, Salesforce, Confluence, email — and makes that information searchable and accessible through a natural language interface. The value is not the search technology itself; it is the integrations, the data connectors, and the understanding of how enterprise knowledge is actually structured and used.

Cursor, the AI-powered code editor, has become the tool of choice for a significant portion of professional software developers. By building AI assistance directly into the development environment — rather than as a separate tool that developers have to switch to — Cursor has achieved the kind of deep workflow integration that creates genuine switching costs. Developers who use Cursor daily are not going to switch to a competitor easily; the product has become part of how they work.

What these companies share is a set of characteristics that define the vertical AI playbook: deep domain expertise, proprietary data that improves the model over time, tight integration with existing workflows, and a value proposition that is specific and measurable rather than abstract and general. They are not selling “AI” — they are selling a specific outcome that their customers care about.

AI-Native SaaS: Replacing the Old Guard

Beyond vertical AI, there is a broader opportunity in what might be called AI-native SaaS — companies that are rebuilding existing software categories from scratch with AI at the core, rather than bolting AI onto legacy architectures.

The legacy SaaS industry — Salesforce, ServiceNow, Workday, SAP — was built on a fundamentally different set of assumptions about how software works. These systems were designed to store and retrieve structured data, automate rule-based workflows, and provide dashboards and reports. They are extraordinarily good at what they were designed to do. But they were not designed for a world where AI can understand natural language, generate content, make predictions, and take autonomous actions.

The threat to legacy SaaS is not that AI will make their products slightly worse. It is that AI enables a fundamentally different approach to the same problems — one that is so much better that it creates an opening for new entrants to displace incumbents who cannot move fast enough to adapt.

Consider customer relationship management. Salesforce was built on the assumption that salespeople would manually enter data about their customer interactions, and that managers would use that data to generate reports and forecasts. An AI-native CRM can automatically capture and analyze every customer interaction — emails, calls, meetings — synthesize that information into actionable insights, draft follow-up communications, and predict which deals are most likely to close. The workflow is not just automated; it is fundamentally reimagined.

The same logic applies to human resources software, enterprise resource planning, project management, and virtually every other category of business software. AI-native companies are not just building better versions of existing products; they are building products that make the existing category’s assumptions obsolete.

The pricing model disruption is equally significant. Legacy SaaS companies charge per seat — you pay for each user who has access to the software. AI-native companies are increasingly moving toward outcome-based pricing — you pay for the results the software delivers. This is a more natural alignment of incentives, and it allows AI companies to capture a larger share of the value they create. A company that charges per seat for a CRM is limited by the number of salespeople. A company that charges a percentage of revenue generated through AI-assisted sales has no such ceiling.
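The difference between the two pricing models can be sketched with a toy comparison. Every number below is hypothetical (the seat price, the take rate, and the customer's AI-assisted revenue are invented for illustration), and the function names are not taken from any real product.

```python
def per_seat_revenue(seats: int, annual_price_per_seat: float) -> float:
    """Vendor revenue under seat-based pricing."""
    return seats * annual_price_per_seat


def outcome_revenue(value_created: float, take_rate: float) -> float:
    """Vendor revenue when charging a share of the value delivered."""
    return value_created * take_rate


# Hypothetical customer: all figures are illustrative assumptions.
ANNUAL_PRICE_PER_SEAT = 1_800      # USD per salesperson per year
TAKE_RATE = 0.02                   # 2% of AI-assisted revenue
AI_ASSISTED_REVENUE = 10_000_000   # USD of customer revenue tied to the product

# Compare the vendor's revenue before and after the customer halves its sales team
# while AI-assisted revenue stays the same.
for seats in (50, 25):
    seat_based = per_seat_revenue(seats, ANNUAL_PRICE_PER_SEAT)
    outcome_based = outcome_revenue(AI_ASSISTED_REVENUE, TAKE_RATE)
    print(f"{seats} seats: per-seat ${seat_based:,.0f}, outcome-based ${outcome_based:,.0f}")
```

In this toy case the seat-based vendor's revenue halves when the customer needs fewer salespeople, while the outcome-based vendor's revenue is unchanged because it tracks the value delivered rather than headcount.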

Consumer AI: Enormous TAM, Harder Path

The consumer AI opportunity is potentially the largest of all — and also the most difficult to execute.

The early proof points are compelling. ChatGPT reached an estimated 100 million users within about two months of launch, at the time the fastest adoption of any consumer application on record. Perplexity has built a loyal user base for AI-powered search. Character.ai has demonstrated that consumers will engage deeply with AI companions and personas. These are not trivial achievements; they represent genuine product-market fit at scale.

But consumer AI faces challenges that enterprise AI does not. Consumer retention is notoriously difficult — users are fickle, switching costs are low, and the next shiny product is always one app store download away. Monetization is harder; consumers are less willing to pay for software than enterprises, and advertising-based models require enormous scale to be meaningful. And the competitive dynamics are brutal: every major tech company is building consumer AI products, and they have distribution advantages that startups cannot easily overcome.

The most promising consumer AI opportunities are in categories where the value proposition is deeply personal and the switching costs are high: AI tutors that know a student’s learning history and adapt to their specific needs; AI health coaches that track a user’s health data over time and provide personalized guidance; AI financial advisors that understand a user’s complete financial picture and help them make better decisions. These are categories where the AI gets better the longer you use it, creating the kind of data flywheel that makes switching costly.

AI Agents: The Next Frontier

The most transformative — and most hyped — development in the AI application layer is the emergence of AI agents: systems that can take autonomous actions in the world, not just generate text.

The distinction matters enormously. A chatbot answers questions. An agent books the flight, sends the email, updates the CRM, and schedules the follow-up meeting. An agent does not just help you do your job; it does parts of your job for you.

The enterprise implications are profound. Every company has workflows that are currently performed by humans — not because those workflows require human judgment, but because they require the ability to navigate multiple software systems, interpret ambiguous instructions, and take a sequence of actions over time. AI agents can do all of these things. The question is not whether agents will automate significant portions of white-collar work; it is how quickly, and which companies will capture the value.

Salesforce’s Agentforce, Microsoft’s Copilot, and a wave of startups are all racing to build the infrastructure and applications for the agentic era. The early enterprise deployments are showing real ROI: customer service agents that handle routine inquiries without human intervention, code review agents that catch bugs before they reach production, procurement agents that identify cost savings across supplier contracts.

The “agent economy” — where AI agents hire other AI agents to complete subtasks — is still largely theoretical, but the building blocks are being assembled. What is clear is that the companies that figure out how to deploy agents reliably in enterprise environments will capture enormous value.

What Makes an Application Layer Winner?

Not every AI application company will win. The history of technology is littered with companies that built products on top of new platforms and failed to create durable value. Understanding what separates the winners from the losers is essential.

Proprietary data is the most important moat. The foundation models that power AI applications are increasingly commoditized — OpenAI, Anthropic, Google, and Meta are all building capable models, and the gap between them is narrowing. What cannot be commoditized is proprietary data. A company that has accumulated years of domain-specific data — legal documents, clinical notes, financial transactions, customer interactions — has a training advantage that competitors cannot easily replicate. And if that data improves as more customers use the product, the moat compounds over time.

Workflow integration creates switching costs. The most durable AI applications are not standalone tools; they are deeply embedded in the daily workflows of their users. When an AI product becomes the way you do your job — not a tool you use occasionally, but the environment in which you work — switching costs become enormous. Cursor is a good example: developers who have configured their development environment around Cursor, who have trained it on their codebase, who have built their workflow around its capabilities, are not going to switch to a competitor easily.

Distribution is underrated. The best AI product does not always win. The product with the best distribution often does. This is why Microsoft’s Copilot — which is not necessarily the most capable AI assistant — has enormous potential: it is being distributed to hundreds of millions of Office users who already pay for Microsoft 365. The companies that already have the customer relationship have a significant advantage in the AI application layer, provided they can move fast enough to build products worth using.

Speed of iteration is a genuine competitive advantage. AI-native companies can ship products dramatically faster than incumbents burdened by legacy codebases, large engineering organizations, and complex approval processes. A startup with 20 engineers and a modern AI-native architecture can iterate on its product in days; a legacy SaaS company with 10,000 engineers and a 20-year-old codebase may take months. In a market moving as fast as AI, that speed differential is decisive.

Outcome-based pricing unlocks value-based revenue. The companies that will capture the most value are those that align their pricing with the outcomes they deliver. A company that charges per seat is limited by headcount. A company that charges a percentage of the value it creates — revenue generated, costs saved, time recovered — has a fundamentally different revenue ceiling.

The Incumbent vs. Upstart Battle

The most interesting competitive dynamic in the AI application layer is the battle between incumbents and upstarts — and it is not as one-sided as it might appear.

Incumbents have enormous advantages: distribution, customer relationships, brand trust, and the financial resources to invest in AI at scale. Microsoft’s integration of AI into Microsoft 365, which is used by hundreds of millions of people, is a genuine competitive advantage. Adobe’s Firefly, integrated directly into Photoshop and Illustrator, is a product that creative professionals are already using. Intuit’s AI features in TurboTax and QuickBooks are reaching tens of millions of small businesses and consumers.

But incumbents face a structural challenge that is difficult to overcome: the innovator’s dilemma. Their existing products generate enormous revenue, and building AI-native alternatives risks cannibalizing that revenue. A legacy SaaS company that charges per seat has a strong incentive not to build an AI product that reduces the number of seats required. A company whose revenue depends on users spending time in its software has a strong incentive not to build an AI that completes tasks faster. The financial incentives of incumbents are often misaligned with the direction that AI is pushing the market.

Startups, by contrast, have no legacy revenue to protect. They can build the product that is best for the customer without worrying about cannibalization. They can adopt outcome-based pricing without worrying about disrupting an existing seat-based revenue model. They can move fast without worrying about breaking things that existing customers depend on.

The sectors where this battle is most intense — and where the stakes are highest — include legal technology, healthcare documentation, financial services, education, customer service, and software development. In each of these sectors, there are both well-funded incumbents trying to add AI to existing products and well-funded startups trying to replace those products entirely. The outcome will vary by sector, but the pattern is likely to be similar to what we have seen in previous technology transitions: incumbents will win in some categories where their distribution and customer relationships are decisive, and startups will win in categories where the product needs to be rebuilt from scratch to be truly excellent.

The M&A wave is already beginning. Large technology companies — Microsoft, Google, Salesforce, ServiceNow — are acquiring AI application companies at a rapid pace, both to accelerate their own AI capabilities and to prevent competitors from gaining access to promising technology. This acquisition activity will accelerate as the application layer matures and the winners become clearer.

The Investment Landscape

For investors, the shift from infrastructure to applications represents both an opportunity and a challenge.

The opportunity is clear: the application layer is where the majority of AI’s economic value will ultimately be captured, and we are still in the early stages of that value creation. The companies that build category-defining AI applications in the next three to five years will be worth enormous amounts of money.

The challenge is equally clear: not every AI application company will win, and the current market is not always good at distinguishing between companies with genuine moats and companies that are, in industry parlance, “wrappers,” meaning thin layers on top of foundation models with no real competitive advantage.

The key metrics that matter for AI application companies are different from those that mattered for traditional SaaS. Net Revenue Retention (NRR), the percentage of recurring revenue retained from existing customers over a period after accounting for expansions, downgrades, and churn, is the single most important metric. A company with 130% NRR is growing its revenue from existing customers even without adding new ones; a company with 80% NRR must replace a fifth of its existing revenue with new customers just to stay flat. AI application companies with strong NRR have demonstrated that their product delivers enough value that customers not only stay but expand their usage.
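One common way to compute NRR for a cohort of existing customers is sketched below. Definitions vary between companies, the function and parameter names are illustrative, and all input figures are hypothetical, chosen to reproduce the 130% and 80% cases mentioned above.

```python
def net_revenue_retention(
    starting_revenue: float,
    expansion: float,
    contraction: float,
    churn: float,
) -> float:
    """NRR for a fixed cohort of existing customers over one period.

    Revenue from customers acquired during the period is excluded by design.
    """
    ending_revenue = starting_revenue + expansion - contraction - churn
    return ending_revenue / starting_revenue


# Hypothetical cohort that expands strongly.
strong = net_revenue_retention(
    starting_revenue=1_000_000, expansion=400_000, contraction=50_000, churn=50_000
)

# Hypothetical cohort that shrinks.
weak = net_revenue_retention(
    starting_revenue=1_000_000, expansion=50_000, contraction=100_000, churn=150_000
)

print(f"Strong cohort NRR: {strong:.0%}")  # Strong cohort NRR: 130%
print(f"Weak cohort NRR: {weak:.0%}")      # Weak cohort NRR: 80%
```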

AI-attributed revenue — the portion of revenue that can be directly attributed to AI features — is increasingly important as a signal of genuine product-market fit versus AI-washing. Many legacy software companies are claiming AI capabilities that are superficial; the companies that can demonstrate that AI is driving measurable outcomes for their customers are the ones worth paying attention to.

The “platform vs. feature” distinction is critical. A feature is something that can be replicated by a competitor in a few months. A platform is a system that becomes more valuable over time as more users, data, and integrations accumulate. The most valuable AI application companies will be platforms — systems that create network effects, accumulate proprietary data, and become more useful the more they are used.

The risk of over-valuation is real. The AI application market is attracting enormous amounts of venture capital, and not all of it is being deployed wisely. Companies with impressive demos but no real moat are raising at valuations that assume they will become category leaders. Many of them will not. The discipline of distinguishing between genuine platform businesses and feature companies dressed up as platforms will be the defining skill for AI investors in the next few years.

Risks and Counterarguments

No honest analysis of the AI application layer opportunity can ignore the risks. There are several that deserve serious consideration.

The foundation model providers could eat the application layer. OpenAI, Anthropic, and Google are not passive infrastructure providers. They are actively building consumer and enterprise products that compete directly with the application layer companies that depend on their APIs. OpenAI’s move into enterprise software, its development of specialized models for specific use cases, and its acquisition of companies in adjacent markets all signal an intent to capture application layer value directly. The risk for application layer companies is that the foundation model providers will use their model capabilities and distribution to commoditize the applications built on top of them.

The “wrapper” problem is real. Many AI application companies are, at their core, thin layers on top of foundation models with no real competitive advantage. They have a nice user interface, a clever prompt, and a marketing story — but no proprietary data, no deep workflow integration, and no moat that would prevent a competitor from replicating their product in a few months. These companies will struggle to survive as foundation models become more capable and easier to access.

Model capability jumps can make today’s killer app obsolete. The pace of AI capability improvement is extraordinary, and it creates a specific risk for application layer companies: a product that is genuinely differentiated today may become obsolete when the next generation of foundation models is released. The application layer companies that will survive are those whose moat is in data and workflow integration, not in model capability.

Enterprise sales cycles are long. The enthusiasm for AI in the enterprise is real, but procurement moves far more slowly than that enthusiasm suggests. Large enterprises have complex approval processes, security reviews, compliance requirements, and change management challenges that slow the adoption of new software. Many AI application companies are discovering that the gap between a successful pilot and a company-wide deployment is wider than they expected.

Regulatory risk is growing. Governments around the world are developing AI regulations, and the rules are most demanding in the sectors where vertical AI is most promising: healthcare, financial services, and legal. Companies building AI products in these sectors must navigate a regulatory environment that is still evolving, and the compliance costs can be significant.

The DeepSeek lesson cuts both ways. The same efficiency gains that are accelerating the shift to the application layer also mean that the cost of building AI products is falling rapidly. This lowers barriers to entry for application layer companies — but it also means that the moats of today’s leaders can be eroded faster than in previous technology cycles. As Arthnova documented, DeepSeek proved that a relatively unknown startup could match frontier model performance with a fraction of the investment that Western labs considered necessary. The same dynamic will play out in the application layer.

What This Means for Founders, Operators, and Investors

The implications of the AI value shift are different depending on where you sit.

For founders, the window to build category-defining vertical AI companies is open right now — but it will not be open forever. The companies that establish themselves as the default AI platform in a specific vertical over the next two to three years will be extraordinarily difficult to displace. The playbook is clear: pick a vertical with a large, specific pain point; build deep domain expertise and proprietary data; integrate tightly with existing workflows; and price based on outcomes rather than seats. The companies that execute this playbook well will be worth billions.

For operators (the executives and managers at existing companies trying to figure out how to use AI), the key insight is that AI adoption is not primarily a technology decision; it is a strategy decision. The companies that will win are not those that adopt AI the fastest, but those that adopt AI in ways that create genuine competitive advantages. That means identifying the specific workflows where AI can deliver measurable outcomes, building the data infrastructure to support AI at scale, and developing the organizational capabilities to iterate on AI products quickly. AI is not merely a cost-cutting tool; it can be a source of competitive moat, but only if you build it that way.

For investors, the framework for identifying application layer winners is becoming clearer. Look for companies with proprietary data that compounds over time. Look for deep workflow integration that creates genuine switching costs. Look for outcome-based pricing that aligns the company’s incentives with its customers’ outcomes. Look for strong Net Revenue Retention as evidence that the product is delivering real value. And be skeptical of companies whose moat is primarily in model capability rather than data and workflow integration — those moats are fragile in a world where foundation models are improving rapidly, as DeepSeek demonstrated.
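Net Revenue Retention is the one item on that list that reduces to simple arithmetic, so a small worked example may help. The sketch below uses the common definition: recurring revenue from an existing customer cohort at the end of a period (after expansion, downgrades, and churn) divided by that cohort's revenue at the start. The class name, field names, and dollar figures are hypothetical and chosen only for illustration; individual companies define the inputs somewhat differently.

```python
from dataclasses import dataclass


@dataclass
class CohortRevenue:
    """Recurring revenue movements for one customer cohort over a period.

    All figures are hypothetical and expressed in the same currency unit.
    """
    starting: float     # recurring revenue from the cohort at period start
    expansion: float    # upsells and usage growth from those same customers
    contraction: float  # downgrades from those same customers
    churned: float      # revenue lost from customers who left entirely


def net_revenue_retention(c: CohortRevenue) -> float:
    """Return NRR as a fraction, where 1.0 means 100%."""
    if c.starting <= 0:
        raise ValueError("starting revenue must be positive")
    ending = c.starting + c.expansion - c.contraction - c.churned
    return ending / c.starting


# Illustrative numbers only, not data from any real company.
example = CohortRevenue(
    starting=1_000_000,
    expansion=300_000,
    contraction=50_000,
    churned=100_000,
)
nrr = net_revenue_retention(example)
print(f"NRR: {nrr:.0%}")  # prints "NRR: 115%"
```

An NRR above 100% means the existing customer base grows on its own, before any new customers are added. That is why it serves as evidence that a product is delivering value customers are willing to pay more for over time.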

The three-to-five-year horizon looks like this: the AI application layer will consolidate around a relatively small number of category-defining platforms in each vertical. The companies that establish themselves as those platforms will be worth hundreds of billions of dollars. The companies that fail to establish genuine moats will be acquired, commoditized, or shut down. The winners will look, in retrospect, as obvious as Google and Amazon look today — but right now, they are still being built.

Conclusion

The arc of AI’s value creation follows the same pattern as every major technology wave before it. Chips were the first bottleneck, and NVIDIA captured extraordinary value by controlling that bottleneck — growing data center revenue from $15 billion to over $115 billion in just two fiscal years, before reporting $215.9 billion in total revenue for fiscal year 2026. Infrastructure was the second bottleneck, and the hyperscalers and their suppliers captured value by building the highways on which AI runs, committing over $600 billion in annual capex by 2026. Now the bottleneck is shifting to the application layer — to the companies that can build genuinely useful products on top of all that compute.

This is not a prediction about the distant future. It is a description of what is already happening. The venture capital is already flowing into application layer companies. The enterprise procurement decisions are already being made. The category leaders are already beginning to emerge. The question is not whether the value will migrate to the application layer; it is which companies will capture it.

The companies that will define the next decade of AI are not selling shovels anymore. They are not building highways. They are building the cities — the products and platforms that people and businesses will use every day, that will become embedded in how work gets done, and that will capture the majority of the economic value that AI creates.

The third wave is here. The question is whether you are positioned to ride it.