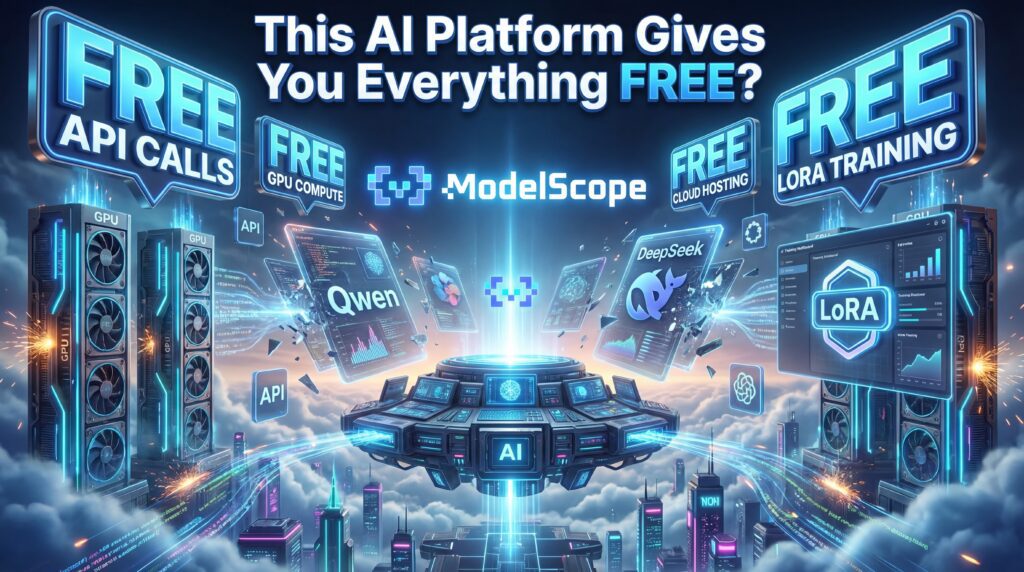

Free API calls. Free GPU compute. Free cloud hosting. Free LoRA training. If that sounds too good to be true, you haven’t heard of ModelScope yet.

There’s a growing frustration in the AI community that’s hard to ignore. Every week, a new tool launches with a free tier that lasts exactly long enough for you to get hooked before the paywall descends. GPU rental costs eat into project budgets. LoRA training on platforms like CivitAI comes with a price tag attached. Hugging Face’s inference endpoints, while excellent, aren’t exactly free once you start scaling. The barrier to entry for serious AI experimentation — particularly for independent developers, hobbyists, and creators — keeps climbing.

And then, quietly, from the east, comes ModelScope.

In a recent video that has now racked up over 164,000 views on the Kingy AI YouTube channel, ModelScope was put through its paces — and what was uncovered deserves far more attention than the platform has been getting in Western AI circles. This review will go deep on everything that was tested: the model library, API inference, cloud Studios, the Civision zone, LoRA training, and the full developer ecosystem surrounding it. The goal is simple — help you understand exactly what ModelScope is, what it genuinely offers for free, where it fits in the current AI landscape, and whether it deserves a permanent spot in your workflow.

What Exactly Is ModelScope?

ModelScope is an open-source “Model-as-a-Service” (MaaS) platform developed and backed by Alibaba Cloud. It operates as China’s largest AI model community, with over 5 million registered developers, and has been quietly building out an English-language international version that was formally launched in mid-2024. At its core, ModelScope aggregates thousands of pre-trained models spanning nearly every major AI domain — natural language processing, computer vision, speech recognition, multi-modal generation, and more — into a single, unified platform.

Think of it as the intersection of several services you likely already use: the model hosting breadth of Hugging Face, the image generation and LoRA community of CivitAI, and the cloud compute generosity that neither of those platforms quite matches. As the Kingy AI video describes it: “ModelScope is basically what you get if Hugging Face and CivitAI had a cloud-based AI baby.” That’s not hyperbole — it’s a surprisingly accurate description of what the platform has built.

What separates ModelScope from its Western counterparts isn’t just the breadth of models or the quality of the interface. It’s the sheer generosity of the free tier. We’re talking about free API inference, free cloud hosting and storage, and free GPU-powered LoRA training — all available to any registered user without a credit card required. Whether that business model is sustainable long-term is a separate question worth discussing. But right now, in 2026, what ModelScope is offering is genuinely extraordinary.

First Impressions: Landing on the Platform

When you first navigate to modelscope.ai, the homepage will feel immediately familiar to anyone who has spent time on Hugging Face. You’ve got model listings, categories, search filters, API options, and deployment links all organized cleanly across the top navigation. It’s structured, it’s functional, and it’s clearly built for developers first.

The Kingy AI video does a great job of walking through the initial orientation. As noted at the 00:40 mark: “You might notice this feels similar to Hugging Face. We’ve got model listings, categories, APIs, deployment options, all in one place.” That familiarity is actually a significant UX win for ModelScope — anyone who knows how to navigate Hugging Face will feel at home within minutes.

One notable UI difference is the presence of the Civision section — a dedicated creative zone for image generation, video generation, and LoRA training that sits alongside the more developer-centric model hub. This dual identity (developer hub + creative studio) is a deliberate design choice, and it’s one of the more interesting things about the platform. More on that shortly.

Registration is straightforward. If you use the invite code kingy at sign-up, you’ll receive an additional 100 Magic Cubes on top of your daily free allocation — a meaningful bonus that we’ll explain in detail later.

The Model Library: 20,000+ Models and Counting

Let’s start with the headline number: at the time of the video recording, ModelScope was hosting over 20,000 models. And these aren’t obscure or legacy models gathering digital dust — the library includes some of the most powerful and sought-after open-source AI models available anywhere today.

The roster includes:

- Qwen series — Alibaba’s flagship large language models, including the powerful Qwen 2.5 Max, optimized for reasoning, coding, and structured outputs

- DeepSeek — the model that has captured significant attention globally for its reasoning capabilities

- GLM family — including the fast and capable GLM 4.7 Flash

- MiniMax — another strong performer in the Chinese open-source ecosystem

- Z-Image Turbo — a high-performance image generation model

- LLaMA and Ziya variants — familiar Western open-source lineage

- Hundreds of diffusion models, speech models, vision models, and multi-modal architectures

What’s worth highlighting here is the filtering capability. If you’re specifically hunting for models that support free API inference (and you should be), you can navigate to the Experience dropdown in the model browser and filter by API-Inference. At the time of the video’s recording, this surfaces approximately 7,200 models that offer free API calls — a genuinely staggering number. As noted in the platform documentation, these models are hosted cloud-side and instantly callable via RESTful endpoints with no local deployment required.

The breadth of this library positions ModelScope not just as a Chinese AI hub, but as a legitimate global model repository. Popular models like Qwen, DeepSeek, and GLM often launch on ModelScope immediately upon release — meaning you get fast access to trending models within the same ecosystem where you’re already working.

Free API Inference: The Part That Matters Most for Developers

Here’s where ModelScope starts to genuinely distinguish itself from the competition. Every registered user receives 2,000 free API calls per day as a combined quota across all supported models. Each model also carries its own per-model call limit, which applies in addition to that combined pool. The quota resets every 24 hours with no rollover, and a request beyond the limit simply returns an HTTP 429 status error. For the vast majority of individual developers and experimenters, 2,000 daily calls is substantial.
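To make the quota rules above concrete, here is a minimal client-side sketch in Python. It assumes the counters reset on a calendar-day boundary, which is a simplification, since the exact reset time is not stated here. The `DailyQuota` class, its field names, and any per-model cap values are hypothetical, and the server’s own count remains authoritative.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class DailyQuota:
    """Client-side estimate of remaining free calls (hypothetical helper)."""

    combined_limit: int = 2000  # combined daily pool quoted in this review
    per_model_limits: dict = field(default_factory=dict)  # hypothetical per-model caps
    _day: date = field(default_factory=date.today)
    _used: dict = field(default_factory=dict)

    def _roll_over(self, today: date) -> None:
        # Unused calls do not carry over, so a new day simply clears the counters.
        if today != self._day:
            self._day = today
            self._used = {}

    def try_spend(self, model_id: str, today: Optional[date] = None) -> bool:
        """Record one call and return True, or return False if a limit is reached."""
        self._roll_over(today or date.today())
        total_used = sum(self._used.values())
        model_used = self._used.get(model_id, 0)
        # Models without an explicit cap are bounded only by the combined pool.
        model_limit = self.per_model_limits.get(model_id, self.combined_limit)
        if total_used >= self.combined_limit or model_used >= model_limit:
            return False
        self._used[model_id] = model_used + 1
        return True
```

Calling `try_spend` before each request lets a script stop cleanly instead of discovering the limit through a failed call.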

The Kingy AI video walks through a live demonstration of this at around the 02:26 mark, using the GLM 4.7 Flash model as an example. Navigating to the model page and clicking on API Inference surfaces the API endpoint, the model ID, and ready-to-use OpenAI-compatible Python code that can be plugged directly into any internal tool you’re building. The code is already formatted and ready to copy — there’s no configuration maze to navigate.

This OpenAI compatibility is a big deal. It means that if you’ve already built applications or workflows around the OpenAI API, switching to ModelScope’s free inference tier requires minimal code changes. You’re effectively getting access to cutting-edge models like Qwen 2.5 Max and GLM 4.7 Flash at zero cost, using API patterns you already know. For small teams, indie developers, or anyone building proof-of-concept tools without a dedicated AI budget, this is the kind of feature that can change the economics of a project overnight.
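As a rough illustration of what “OpenAI-compatible” means in practice, the sketch below builds a chat completion request using only the Python standard library. The `/chat/completions` path, the bearer-token header, and the `choices[0].message.content` response field follow the OpenAI convention. `BASE_URL` is a placeholder and the model ID in the usage note is hypothetical, so copy the real values from the model’s API Inference tab.

```python
import json
import urllib.request

# Placeholder, not a real endpoint: replace with the base URL shown on the model page.
BASE_URL = "https://example.invalid/v1"


def build_chat_request(api_key: str, model_id: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model_id,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a real base URL and token, `send(build_chat_request(token, "some-org/some-model", "Hello"))` would return the reply text. Because only the base URL, key, and model name change, code already written against the OpenAI API should need similarly small edits.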

The live Qwen 2.5 Max demo in the video is illustrative. The model was prompted with a business analysis task — “analyze this business idea: AI-powered fitness accountability coach, provide strengths, weaknesses, and potential risks in bullet format” — and returned a well-structured, coherent response covering all requested categories. No setup. No local install. No API key purchase. Just results.

Studios: Cloud Deployment Without the Cloud Bill

ModelScope’s Studios feature is where the platform makes its pitch to developers who want to go beyond API calls and actually build hosted applications. As described in the platform overview, Studios provide cloud IDEs and application hosting environments for packaging and deploying models or interactive demos.

The workflow demonstrated in the video is straightforward: navigate to Studios, click New Studio, select Programmatic Creation, fill in your project metadata (name, description, configuration), select your compute resources — CPU or GPU environments — and hit Create. What you get is a hosted project that lives inside ModelScope’s cloud infrastructure. Your app code lives there. Your files live there. Your project storage lives there. When you push your application, it gets hosted there.

The Kingy AI video specifically highlights Gradio integration at the 03:30 mark: if you choose a Gradio-powered Python framework for your studio, ModelScope handles the backend infrastructure. This means you can turn simple Python functions into web applications and have them hosted in the cloud without provisioning a single server or paying a hosting bill.
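For readers who have not used Gradio, the idea of turning a Python function into a web app looks roughly like the sketch below. The `summarize_idea` function and the demo title are hypothetical stand-ins, not code from the video. `gr.Interface` is Gradio’s standard wrapper for a single function, and Gradio is imported lazily so the plain function can be tried without it installed.

```python
def summarize_idea(idea: str) -> str:
    """Toy stand-in for real model logic: echo the idea with a word count."""
    words = idea.split()
    return f"Idea received ({len(words)} words): {idea.strip()}"


def build_demo():
    """Wrap the function in a simple text-in, text-out Gradio interface."""
    # Imported here so summarize_idea works even where Gradio is not installed.
    import gradio as gr

    return gr.Interface(
        fn=summarize_idea,
        inputs="text",
        outputs="text",
        title="Idea echo (hypothetical demo)",
    )
```

Calling `build_demo().launch()` starts the app locally. How a Studio expects the entry file to be named or started is not covered here, so check the Studio creation page for that detail.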

The key insight from the video’s infrastructure breakdown is this: ModelScope isn’t just giving you free API calls. They’re giving you cloud project infrastructure, a hosting environment, storage for your files, and compute resources to run it all inside one ecosystem. As noted in the Alibaba Cloud announcement of ModelScope’s English launch: this is the “Model-as-a-Service” vision made concrete — you bring the idea, the platform provides the infrastructure layer.

For anyone who has priced out even basic cloud GPU hosting (roughly $0.50 to $2.00 or more per hour, depending on the provider and GPU tier), the implications of free compute for prototyping and small-scale deployment are significant. The ModelScope GitHub repository notes that cloud notebooks provide CPU and GPU compute for prototyping, and community posts report that the platform offers what amounts to unlimited cloud storage for models and datasets.
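To put the hourly range quoted above in monthly terms, here is a small back-of-the-envelope calculation. The workload of two GPU hours a day for 30 days is a hypothetical example, not a figure from the video.

```python
def rental_cost(hours: float, rate_per_hour: float) -> float:
    """Cost in dollars of renting a GPU for `hours` at `rate_per_hour`."""
    return hours * rate_per_hour


# Hypothetical prototyping workload: 2 hours a day for 30 days.
hours = 2 * 30
low = rental_cost(hours, 0.50)   # low end of the quoted hourly range
high = rental_cost(hours, 2.00)  # high end of the quoted hourly range
print(f"${low:.2f} to ${high:.2f} per month")
```

Even this light usage lands between $30 and $120 a month on rented hardware, which is the cost category a free tier removes.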

Civision: The Creative Zone That Changes Everything for Image AI

If the developer-facing features make ModelScope a compelling Hugging Face alternative, then Civision is what makes it a genuine CivitAI competitor — and in some ways, a superior one.

Civision is ModelScope’s dedicated zone for AIGC (AI-Generated Content) creators. It’s accessible directly from the main navigation and hosts three core capabilities: image generation, video generation, and LoRA model training. All of it is free. None of it requires you to own a GPU. All of it runs in the browser.

The image generation demo in the Kingy AI video uses the Qwen-Image-2512 model with a prompt that’s become something of a signature for the review: “Up-close shot of a strawberry made out of glass. Cinematic.” The interface offers extensive controls — image size (including 16:9 landscape), model selection, negative prompts, and advanced options. The resulting image, as shown in the video at around the 05:00 mark, is genuinely striking: a hyper-realistic glass strawberry with impressive translucency and light refraction detail.

The video also demonstrates a second image generation pass using Qwen checkpoints, resulting in a portrait image of a man in an urban setting — cinematic, detailed, with light reflections visible in the eyes and realistic texture on skin and fabric. As the video commentary puts it: “Look at the reflections even in his eyes — that is some crazy, crazy AI work, and you didn’t even need your own high-performance PC for this.”

For video generation, Civision offers both text-to-video and image-to-video pipelines. At the time of the video, supported durations were 3 or 5 seconds, with 10 seconds noted as “coming soon.” The supported models include variants of the Wan 2.2 framework, with LoRA support for stylistic customization. The video generation demo in the review video shows a landscape-format 16:9 clip with reasonable motion coherence — not flawless, but genuinely impressive for a zero-cost output.

Free LoRA Training: No GPU. No Paywall. No Kidding.

This is the feature that arguably deserves the most attention, because it addresses one of the most persistent friction points in the AI creator community.

Training a LoRA — a lightweight model adapter that lets you bake a specific style, subject, or aesthetic into an image generation model — typically requires GPU time. On platforms like CivitAI or through services like Replicate, that GPU time costs money. Even on self-hosted setups, you’re looking at hardware investment or cloud rental fees. For creators who want to train custom LoRAs for personal projects, this cost barrier has historically filtered out a huge portion of the potential community.

ModelScope’s Civision zone eliminates that barrier entirely.
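Some intuition for why a LoRA counts as “lightweight”: in the standard LoRA formulation, a frozen weight matrix of shape d_out × d_in gets a learned update that is the product of two thin matrices of rank r, so only r × (d_in + d_out) values are trained for that layer. The sketch below just does that arithmetic. The layer size and rank are hypothetical and are not the settings Civision uses.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Number of weights in one dense d_out x d_in layer."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable weights in a rank-`rank` LoRA update (B: d_out x r, A: r x d_in)."""
    return rank * (d_in + d_out)


# Hypothetical layer size and rank, chosen only for illustration.
d_out, d_in, rank = 4096, 4096, 16
full = full_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full layer: {full:,} weights")
print(f"LoRA update: {lora:,} weights ({lora / full:.2%} of the layer)")
```

Under these assumed sizes the adapter trains under 1% of the layer’s weights, which is why the resulting files are small and the training runs are comparatively cheap.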

The Kingy AI video walks through a complete LoRA training session starting at around the 05:11 mark. The workflow is as follows: navigate to the Model Train section within Civision, select a base image model (Qwen-Image is the recommended choice, particularly for the ongoing hackathon), give your LoRA a name (the video uses “ASMR-Glass-Fruit-Pro”), upload your reference training images (the glass fruit photos from the earlier generation demo are used here), configure advanced settings if desired, and click Start Free Training.

Training runs on ModelScope’s infrastructure while the browser shows progress in real time, and in the video the session completes successfully. The resulting LoRA adapter can then be exported, with direct compatibility with ComfyUI, the widely used node-based Stable Diffusion interface. This means you can train a custom LoRA on ModelScope’s free infrastructure and then import it into your local ComfyUI setup for use in your own generation workflows. The creative loop is fully closed.
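On the ComfyUI side, importing a LoRA usually means placing the file in the `models/loras` folder of the ComfyUI install. The helper below is a minimal sketch of that step. It assumes the exported adapter is a single file (a `.safetensors` name is used in the usage note purely as an example), and `install_lora` is a hypothetical name.

```python
import shutil
from pathlib import Path


def install_lora(lora_file: Path, comfyui_root: Path) -> Path:
    """Copy a downloaded LoRA file into ComfyUI's LoRA folder and return the new path."""
    target_dir = comfyui_root / "models" / "loras"
    # Create the folder if this is a fresh install that has no LoRAs yet.
    target_dir.mkdir(parents=True, exist_ok=True)
    return Path(shutil.copy2(lora_file, target_dir / lora_file.name))
```

For example, `install_lora(Path("Downloads/my-lora.safetensors"), Path("ComfyUI"))` copies the file into place. ComfyUI then lists it in its LoRA loader node after a refresh or restart.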

The supported base models for LoRA training, per the platform documentation at SuperGok, include Qwen-Image-2512, Z-Image-Turbo, Qwen-Image-Edit, and others. The entire workflow — data upload, parameter configuration, training, evaluation, and export — happens in-browser with no local dependencies.

It’s also worth noting the context of ModelScope’s DiffSynth-Studio, the open-source diffusion engine that powers much of the Civision infrastructure. DiffSynth-Studio 2.0, released in December 2025, introduced significant VRAM management upgrades, split training techniques, FP8 precision support, and new model integrations including Z-Image Turbo and FLUX.2-dev.

This isn’t a cobbled-together interface on top of borrowed infrastructure — it’s a purpose-built, actively maintained diffusion engine with over 12,000 GitHub stars and a growing research output, including published techniques like EliGen (entity-level controlled generation) and Nexus-Gen (unified LLM reasoning with diffusion).

The Magic Cubes System: Understanding the Credits

Nothing on ModelScope is truly unlimited — and it’s worth being transparent about the credit mechanics rather than overselling the free tier.

The platform uses a currency called Magic Cubes (sometimes stylized as “Magicubes”) to govern access to compute-intensive tasks like image generation, video generation, and LoRA training. These credits refresh daily. They can be earned through daily login bonuses, completing simple platform tasks, and through referral codes.

Speaking of which: if you register using the invitation code kingy (from the Kingy AI video), you receive an additional 100 Magic Cubes on top of your standard daily allocation. That’s a meaningful boost when you’re first getting started and want to experiment with generation and training without burning through your default quota.

The free API inference quota — 2,000 calls per day — operates separately from Magic Cubes and resets on its own daily cycle. Exceeding the API quota returns a 429 error; exceeding your Magic Cube allocation requires either waiting for the daily refresh or earning more through the platform’s engagement mechanics.

This is the honest caveat that the Kingy AI video addresses directly in its closing section: the free tier is generous, but it is a tier. Heavy professional use — large-scale API integration, intensive daily training runs, high-volume image generation — will bump into quota limits. The Magic Cubes system appears designed to balance community access with sustainable infrastructure load. For experimentation, learning, and small-to-medium project development, the free allocation is more than adequate. For production-scale deployment, you’ll want to evaluate whether ModelScope’s paid options (if and when they become relevant) fit your needs.

The Broader Ecosystem: More Than Just a Model Hub

One of the more underappreciated aspects of ModelScope is that it’s not a standalone platform — it’s the consumer-facing hub of a much larger open-source AI ecosystem built by the Alibaba Cloud AI team.

Beyond the main ModelScope platform, the ecosystem includes:

- ms-swift — the Scalable Lightweight Infrastructure for Fine-Tuning, which supports training and deployment of 600+ large language models and 400+ multimodal large models. As of March 2026, ms-swift v4.0 is the current release, with support for models including Qwen3, DeepSeek-R1, Llama4, GLM4.5, and many more. It integrates Megatron parallelism techniques, GRPO reinforcement learning algorithms, and supports DPO, KTO, and other preference learning methods.

- DiffSynth-Studio — the open-source diffusion model engine powering Civision’s image and video generation capabilities. With 12,000+ GitHub stars and active research contributions, DiffSynth-Studio supports FLUX, Wan, Qwen-Image, and other major diffusion model families.

- ModelScope-Agent — an agent framework for building AI applications on top of ModelScope’s model infrastructure.

- EvalScope — an evaluation backend supporting 100+ evaluation datasets for both text and multimodal models.

Together, these components form what the Kingy AI video describes as a “full-stack ecosystem for fast AI innovation.” Each piece is independently useful; together, they create an integrated pipeline from model selection through fine-tuning, evaluation, deployment, and application development — all within a single coherent ecosystem.

This is actually a competitive advantage over Hugging Face in certain respects. While Hugging Face has a broader international community and arguably more diverse model contributions, ModelScope’s tighter integration between its platform and its core tooling means less friction moving between development stages. You’re training with DiffSynth-Studio, hosting on ModelScope Studios, running inference through ModelScope’s API, and evaluating with EvalScope, all within one account, one interface, one permission system.

ModelScope vs. The Competition: Where It Fits

Let’s be direct about the comparisons, because this is where most reviews either overclaim or undersell.

Against Hugging Face: ModelScope’s library (20,000+ models at the time of the video) is far smaller than Hugging Face’s catalog, which lists well over a million models, but its quality and coverage for Chinese-origin models are strong. Hugging Face also has a larger and more diverse international contributor base. ModelScope’s key advantage is the bundled free compute: Hugging Face’s inference API is rate-limited and its free tier is more restrictive. If you’re primarily building with Chinese-origin models like Qwen or DeepSeek, ModelScope is a more natural home. The Alibaba Cloud blog positions ModelScope explicitly as a model-as-a-service platform rather than just a model registry, and this distinction matters in practice.

Against CivitAI: Civision is a serious challenger to CivitAI’s creator community, especially for users who want to train their own custom LoRAs without paying for GPU time. CivitAI has a larger existing LoRA library and a more mature social community. But ModelScope’s free training pipeline — particularly with ComfyUI export support — is a compelling alternative for creators starting from scratch. The community description on LinkedIn nails it: “ModelScope = Hugging Face and CivitAI, and is 100% free.”

Against Replicate, RunPod, or AWS SageMaker: These are cloud GPU rental services, and ModelScope isn’t trying to replace them for production workloads. But for development, prototyping, and experimentation, ModelScope’s free compute tier eliminates a cost category that has historically priced out independent developers. If you’re spinning up proof-of-concept projects or learning the craft of fine-tuning without a budget, ModelScope is simply in a different category of accessibility.

The Qwen-Image LoRA Hackathon: Community Meets Competition

One of the live features highlighted in the Kingy AI video is ModelScope’s Qwen-Image LoRA Training Competition — a global hackathon inviting developers to train novel LoRA models on the Qwen-Image architecture. The competition, which ran until March 18, 2026, featured two tracks: AI for Production and AI for Good. Prizes included an iPhone 17 Pro Max, a PS5, and $800 gift cards.

The significance of this hackathon extends beyond the prizes. It’s a signal about how ModelScope intends to build its Western community — not just through passive model hosting, but through active engagement mechanics that reward creative contribution. The platform functions, in this sense, as both a repository and a talent magnet.

From a purely practical standpoint, the hackathon also provides a structured reason to try the LoRA training pipeline. If you’re going to experiment with free training anyway, why not enter your best work and potentially win hardware in the process? The hackathon details are accessible directly through the platform.

Honest Limitations: What to Watch For

No platform review is worth reading if it doesn’t acknowledge the rough edges, and ModelScope has them.

Quota constraints: The 2,000 daily API call limit and the Magic Cubes refresh system are genuinely limiting for heavy professional use. If you’re building a production application that needs consistent high-volume inference, you’ll either need to plan around the quota carefully or explore paid options. The platform documentation confirms that excess API calls return status 429 errors, and there’s no carryover on unused daily quota.
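Since a spent daily quota will not recover within a few seconds, retrying a 429 in a tight loop is unlikely to help. One reasonable pattern, sketched below with the standard library, is to turn the 429 into a distinct exception so the caller can pause work or switch to another model. `QuotaExhausted` and `fetch` are hypothetical names, and the `opener` parameter exists only so the function can be exercised without a network.

```python
import urllib.error
import urllib.request


class QuotaExhausted(RuntimeError):
    """Raised when the server answers with HTTP 429."""


def fetch(request, opener=urllib.request.urlopen) -> bytes:
    """Send a request; map HTTP 429 to QuotaExhausted and re-raise other errors."""
    try:
        with opener(request) as response:
            return response.read()
    except urllib.error.HTTPError as err:
        if err.code == 429:
            # Assumed meaning in this context: the free quota is used up for now.
            raise QuotaExhausted("free quota appears to be used up") from err
        raise
```

A batch job can catch `QuotaExhausted`, save its progress, and resume after the next reset rather than burning through failed calls.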

OpenAI API compatibility caveats: The platform advertises OpenAI-compatible endpoints, and the code examples in the API inference section are genuinely compatible. However, as noted in the platform overview documentation, some advertised features may not be fully supported across all models. Testing with your specific target model before building a full integration pipeline is strongly recommended.
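One cheap way to act on that advice is a smoke test that checks whether a model’s reply has the shape OpenAI-style clients expect before anything else is built on it. The sketch below validates only the `choices[0].message.content` field of an already-decoded response. `extract_reply` is a hypothetical helper, and which optional features a given model supports still has to be tested separately.

```python
def extract_reply(response: dict) -> str:
    """Return the assistant text from an OpenAI-style chat completion body.

    Raises ValueError with a readable message if the expected fields are missing.
    """
    try:
        content = response["choices"][0]["message"]["content"]
    except (KeyError, IndexError, TypeError) as err:
        raise ValueError(f"unexpected response shape: {err!r}") from err
    if not isinstance(content, str) or not content.strip():
        raise ValueError("response contained no text content")
    return content
```

Running this against one real response from each target model surfaces mismatches early, when they cost one API call instead of a debugging session.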

Queue times in Civision: The image and video generation services are shared infrastructure. During peak usage periods, you may find yourself in position 3 or 4 in a generation queue, adding 40 seconds to 3+ minutes of wait time depending on your settings and the current load. This is normal for free shared compute, but worth factoring into your workflow expectations.

Western community maturity: ModelScope’s international English-language presence is growing rapidly, but the platform’s documentation, community forums, and support resources are still more developed in Chinese than in English. For complex technical questions or edge-case troubleshooting, you may encounter limitations in the English-language support ecosystem.

Model availability and freshness: While the platform is excellent for Chinese-origin models, the coverage of Western-origin open-source models (Llama variants, Mistral, etc.) is present but less consistently updated than on Hugging Face. If your workflow depends primarily on Western-origin models, Hugging Face remains the more reliable primary source.

The Verdict: A Platform That Deserves Your Attention

ModelScope is doing something rare in the AI industry: it’s giving away genuinely valuable infrastructure for free, at scale, with real models and real compute, and it’s doing so without burying the good stuff behind a trial period or a credit card requirement.

For developers: The combination of 20,000+ models, OpenAI-compatible API endpoints, 2,000 free daily API calls, free cloud Studios with compute and storage, and a full-stack open-source ecosystem in DiffSynth-Studio and ms-swift makes ModelScope a platform that should be in every developer’s toolkit — not necessarily as a primary production stack, but absolutely as a development and prototyping resource.

For creators: The Civision zone’s free LoRA training pipeline, free image generation with quality models like Qwen-Image-2512 and Z-Image Turbo, free video generation, and direct ComfyUI export compatibility make it one of the most accessible creative AI platforms available today. The Magic Cubes system keeps it sustainable without shutting out users who can’t afford to pay.

For learners: If you’re trying to understand how model fine-tuning works, how API inference integrates with application code, or how diffusion model generation pipelines are structured — ModelScope provides a fully functional environment to learn all of this without spending a dollar.

The Kingy AI video frames it well in its closing summary: “When it comes to using ModelScope, if you’re a developer, you’re getting access to free API, free hosting, free storage, free compute. And if you’re a creator, you’re getting access to free LoRA training, free image gen, ComfyUI compatibility, and no training paywall.”

There aren’t many platforms that can make that statement honestly. ModelScope can. And for a platform that’s still building out its English-language presence, the fact that it’s already this capable, this generous, and this well-engineered is a remarkable achievement.

Go to modelscope.ai, sign up with invite code kingy for your bonus 100 Magic Cubes, and start building. The cost of not trying it is higher than the cost of trying it — which is exactly zero.

Have you tried ModelScope? Let us know what you built in the comments below. For the full hands-on walkthrough, watch the original Kingy AI review video on YouTube.