There’s a long-standing tension at the heart of agentic AI development. On one side, developers want their AI coding assistant to just run — to kick off a multi-hour refactor, handle the 47 sequential tool calls it takes to finish, and come back with a working result while they grab a coffee. On the other side, nobody wants to hand an AI the keys to their production infrastructure without any guardrails. Until today, the choice was effectively binary: either babysit every command, or throw caution to the wind with a flag literally called --dangerously-skip-permissions.

Anthropic has just shipped a third option for Claude Code, and it’s called auto mode.

The Problem It Solves

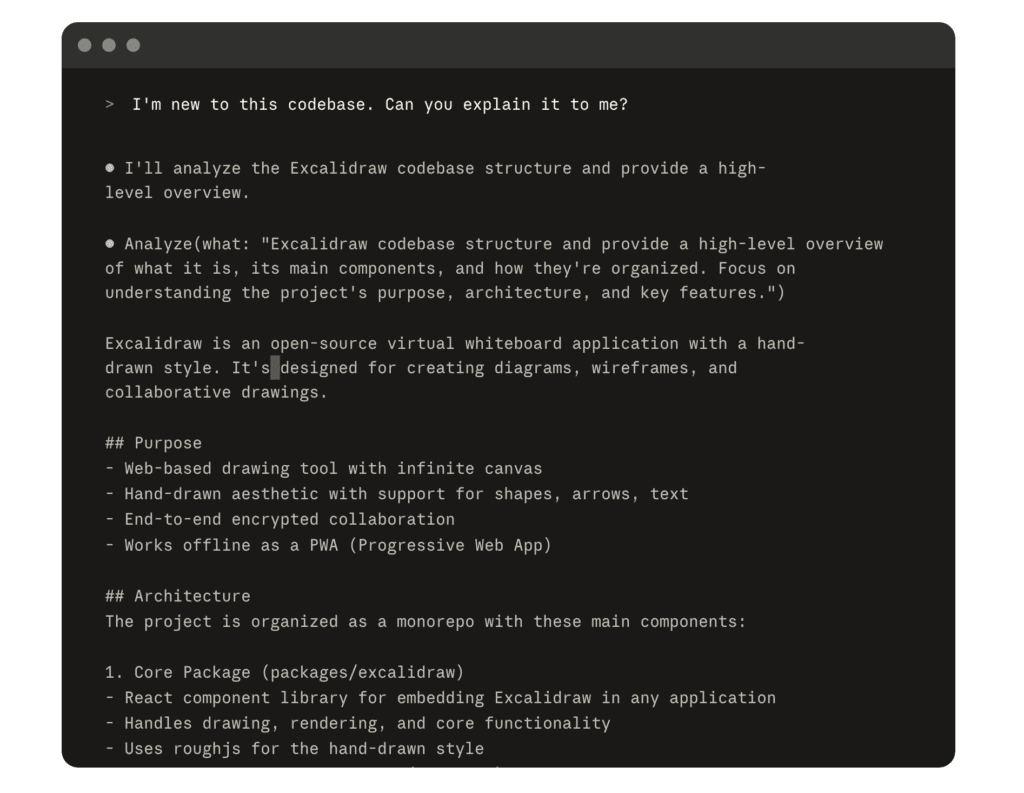

To understand why auto mode matters, you need to understand what Claude Code’s permission system looked like before it.

Claude Code ships with intentionally conservative defaults. Every single file write, every bash command — Claude stops and asks. This is the right call for sensitive or production-adjacent work, but it creates a practical problem for longer-running tasks. You can’t reasonably kick off a large-scale codebase refactor and then go to lunch. Claude will pause and wait for your approval every few minutes, stalling the task indefinitely.

The escape hatch that many developers reached for was --dangerously-skip-permissions. That flag does exactly what it says: it removes the permission layer entirely. Claude can write files, execute commands, interact with external services, and modify infrastructure without ever asking. For developers running Claude inside an isolated VM or container, this was an acceptable risk. But it’s a wide-open door — there’s no safety net if Claude misinterprets a task and starts doing something genuinely destructive.

Auto mode, as Anthropic describes it, is the “middle path.” It lets you run longer tasks with fewer interruptions, while introducing significantly less risk than skipping all permissions.

How Auto Mode Actually Works

The core mechanism is a background classifier — a separate AI model that runs in parallel with your main Claude session, reviewing each tool call before it executes.

Here’s the decision flow, in order:

- Your allow/deny rules resolve first. If you’ve pre-configured a permission rule for a specific tool or command, that takes precedence immediately.

- Read-only actions and file edits within your working directory are auto-approved. These are considered low-risk by definition.

- Everything else goes to the classifier. The classifier receives your conversation messages, your tool calls (but not Claude’s own responses or tool results, more on why that matters in a moment), and your

CLAUDE.mdproject instructions. - If the classifier deems the action safe, it proceeds automatically.

- If the classifier blocks it, Claude is informed of the reason and attempts a different approach.

The classifier itself runs on Claude Sonnet 4.6, even if your main session is using a different model. This adds some latency and token cost per tool call — specifically for shell commands and network operations, since read-only actions and local file edits don’t trigger a classifier call at all.

One architectural detail worth highlighting: tool results never reach the classifier. This is deliberate. It means that hostile content embedded in a file Claude reads — a malicious prompt injection buried in a third-party README or scraped web page — cannot manipulate the classifier’s decisions. The classifier evaluates the action Claude is about to take, not what Claude learned from the environment.

What the Classifier Blocks by Default

Auto mode ships with a set of default block rules that Anthropic describes as treating your working directory and your git repo’s configured remotes as “trusted,” while everything else is treated as external and unknown until explicitly configured.

According to the official documentation, blocked by default includes:

- Downloading and executing code — patterns like

curl | bashor running scripts pulled from cloned repositories - Sending sensitive data to external endpoints — preventing accidental exfiltration of credentials, API keys, or proprietary code

- Production deploys and migrations — Claude cannot push to production or run database migrations without explicit approval

- Mass deletion on cloud storage — bulk destructive operations on S3 buckets, GCS, or similar

- Granting IAM or repository permissions — no privilege escalation

- Modifying shared infrastructure — anything touching infrastructure that could affect other teams or systems

- Irreversibly destroying files that existed before the session started — protecting pre-existing work

- Destructive source control operations — force pushes, pushing directly to

main, rewriting shared history

Allowed by default includes:

- Local file operations in your working directory

- Installing dependencies already declared in your lock files or manifests (i.e.,

npm installfrom an existingpackage.json, not arbitrary package installation) - Reading

.envfiles and sending those credentials to their matching API - Read-only HTTP requests

- Pushing to the branch you started on, or a new branch Claude created during the session

This default set is designed around a practical reality: the actions that cause the most catastrophic developer accidents — mass deletes, force pushes to main, unintentional production deploys — are precisely the actions the classifier catches. Meanwhile, the mundane operations that make up 90% of agentic coding sessions — reading files, editing code, running tests, pushing feature branches — proceed without interruption.

You can run claude auto-mode defaults in the CLI to see the full default rule lists as the classifier receives them.

How Subagents Are Handled

This is where auto mode gets genuinely sophisticated. Claude Code increasingly uses subagents — delegating subtasks to separate Claude instances running in parallel or sequence. Auto mode has a thoughtful answer for this.

When Claude spawns a subagent, the classifier evaluates the delegated task description before the subagent even starts. A task that looks dangerous in isolation — “delete all remote branches matching this pattern” — gets blocked at spawn time, before any code runs.

Inside the subagent, auto mode runs with the same block and allow rules as the parent session. Any permissionMode the subagent tries to define in its own frontmatter is ignored — subagents can’t override the safety settings of the session that spawned them.

When the subagent completes, the classifier performs a post-run review of the subagent’s full action history. This is an important layer: a subagent might have been perfectly safe at launch but encountered malicious content mid-run that steered it in a dangerous direction. If the return check flags a concern, a security warning is prepended to the subagent’s results so the main agent can decide how to proceed.

This three-part check (pre-launch evaluation → in-session enforcement → post-run review) provides meaningful protection against the most plausible real-world attack vector in agentic systems: prompt injection through environmental content.

The Fallback Mechanism

Auto mode isn’t designed to be a wall that stops your session cold when things go wrong. It has a graceful fallback: if the classifier blocks an action three times in a row, or twenty times total in a session, auto mode pauses and Claude Code reverts to prompting you for each action.

These thresholds are not configurable — they’re fixed to prevent developers from inadvertently setting themselves up for an unattended session that silently fails.

What does a repeated block usually signal? Either the task genuinely requires actions the classifier is built to stop (in which case you should reconsider the task), or the classifier is missing context about your trusted infrastructure and treating safe actions as risky (in which case you need to configure your environment — more on that below).

In interactive sessions (the CLI), you see a notification and can approve the blocked action to reset the denial counters and continue in auto mode. In non-interactive mode with the -p flag, the session aborts entirely, since there’s no user to prompt.

The Permission Mode Ecosystem

Auto mode slots into a larger system of permission modes that Claude Code now offers. Understanding the full picture helps you choose the right mode for the right task:

| Mode | What Claude can do without asking | Best for |

|---|---|---|

default | Read files only | Sensitive work, getting started |

acceptEdits | Read and edit files | Code review, iterating on changes |

plan | Read files only, proposes changes | Exploration, planning a refactor |

auto | All actions, with classifier safety checks | Long-running tasks, reducing prompt fatigue |

bypassPermissions | Everything, no checks at all | Isolated containers and VMs only |

dontAsk | Only pre-approved tools | Locked-down CI environments |

Plan mode deserves a special mention here, because it pairs naturally with auto mode. You can use plan mode to have Claude research a codebase and propose a migration strategy — without touching a single file — and then, once you’re satisfied with the plan, approve it and let Claude execute in auto mode. This gives you the best of both worlds: high-level human oversight at the planning stage, and uninterrupted execution once the direction is set.

Enabling Auto Mode

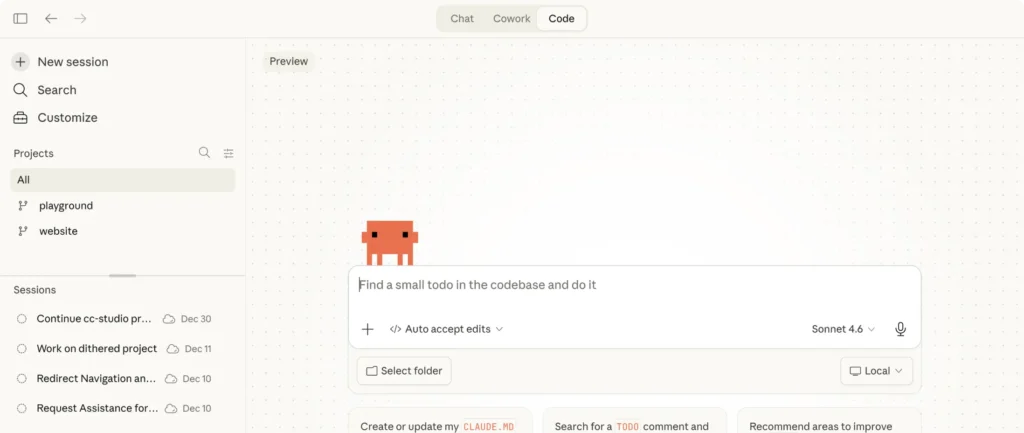

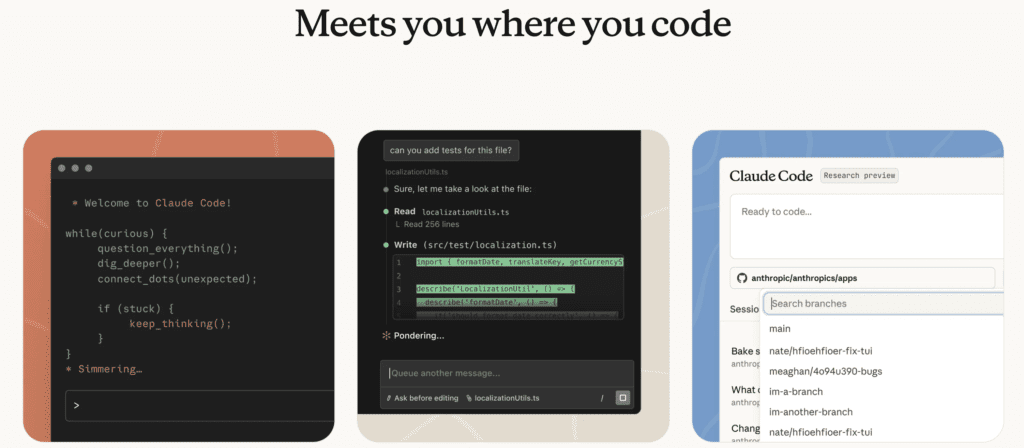

Auto mode is available in the CLI, VS Code extension, JetBrains IDEs, and the Claude desktop app.

For CLI users:

bashCopyclaude --enable-auto-mode

Once enabled, press Shift+Tab to cycle through permission modes: default → acceptEdits → plan → auto. The current mode appears in the status bar.

You can also set it as a persistent default in your settings file:

jsonCopy{"permissions":{"defaultMode":"auto"}}

Or activate it for a single non-interactive run:

bashCopyclaude -p "refactor auth module" --permission-mode auto

For VS Code and Desktop users: Toggle auto mode on in Settings → Claude Code, then select it from the permission mode dropdown in your session.

For Enterprise admins: Auto mode will be available for all Claude Code users on Enterprise, Team, and Claude API plans. To disable it for the CLI and VS Code extension, set "disableAutoMode": "disable" in your managed settings. Note that auto mode is disabled by default on the Claude desktop app and must be toggled on via Organization Settings → Claude Code.

Auto mode requires Claude Sonnet 4.6 or Claude Opus 4.6. It is not available on Haiku, Claude 3 models, or third-party providers including Amazon Bedrock, Google Cloud Vertex AI, or Microsoft Foundry.

Configuring Trusted Infrastructure

One of the most important things to understand about auto mode out of the box: the classifier only trusts your local working directory and your git repo’s configured remotes. Your company’s GitHub organization, your internal S3 buckets, your staging environment — all of that is unknown to the classifier until you explicitly tell it what to trust.

This is why teams might see auto mode blocking actions that feel perfectly routine, like pushing to a company org’s repository or writing to a team bucket. The classifier isn’t being paranoid — it genuinely doesn’t know that github.com/your-company is trusted infrastructure.

Administrators can configure trusted repos, buckets, and internal services via the autoMode.environment setting in managed settings. The classifier also reads your CLAUDE.md file, so project-level context about trusted services and approved operations can influence its decisions.

To report false positives or missed blocks, use the /feedback command inside Claude Code.

What Auto Mode Is Not

Anthropic is explicit about this, and it’s worth repeating: auto mode reduces risk but does not eliminate it. The classifier may still allow some risky actions if user intent is ambiguous, or if Claude doesn’t have enough context about your environment to know an action creates additional risk. It may also occasionally block benign actions.

Auto mode provides more protection than --dangerously-skip-permissions but is not as thorough as manually reviewing each action. Anthropic continues to recommend using it in isolated environments — containers, VMs, devcontainers — rather than running it directly against production systems or a shared developer machine without careful configuration.

The company says it will continue to improve the experience over time.

Availability and Pricing

Auto mode is available today as a research preview for Claude Team plan users. Enterprise plan and API access is rolling out in the coming days.

Since the classifier model runs as a separate call for each evaluated tool action, there is a small impact on token consumption, cost, and latency. Classifier calls count toward your token usage the same as main-session calls. Each checked action sends a portion of the conversation transcript plus the pending action to the classifier. The overhead is concentrated on shell commands and network operations; read-only file operations and edits within your working directory bypass the classifier entirely.

Anthropic has not announced specific pricing for the classifier overhead beyond noting it uses standard API token pricing.

The Bigger Picture

Auto mode is a meaningful step forward in solving one of the hardest problems in agentic AI: how do you build systems that can work autonomously for extended periods without either (a) constantly interrupting users for approval, or (b) running completely unchecked?

The approach Anthropic is taking — a separate classifier model that evaluates actions in context, with prose-based rules rather than rigid pattern matching, and with specific architectural choices to prevent prompt injection — is more sophisticated than a simple allow/block list. It’s a system that can reason about why an action might be risky, not just whether it matches a known-bad pattern.

Whether the classifier’s judgment is good enough for the most sensitive enterprise workflows remains to be seen during this research preview period. But for the vast majority of everyday development tasks — long refactors, multi-file feature implementations, automated test runs and commit cycles — auto mode looks like a genuine quality-of-life improvement for developers who’ve been forced to choose between micromanaging Claude or crossing their fingers with --dangerously-skip-permissions.

The era of “set it and go get coffee” agentic coding may have just arrived.