🤖 AI Model Picker Quiz

Answer 10 quick questions to find the best AI for your needs

Not sure which AI model is right for you? The quiz above matches your habits, budget, and priorities to one of six leading models — in about two minutes. Below, we break down exactly how the scoring works, what each model actually costs, and the hidden tradeoffs nobody talks about.

What This Quiz Does

The AI Model Picker Quiz asks you 10 targeted questions about the way you work: what tasks you need help with, how much you’re willing to spend, whether you live inside the Google or Microsoft ecosystem, and how much you care about data privacy and open-source freedom.

Behind the scenes, every answer feeds into a weighted scoring system that evaluates six of the most capable AI models available in early 2026:

- ChatGPT (OpenAI — GPT-5.4)

- Gemini (Google — Gemini 3.1 Pro)

- Claude (Anthropic — Opus 4.6)

- Llama 4 (Meta — Scout & Maverick)

- Mistral (Mistral AI — Large 3 & Small 4)

- DeepSeek (DeepSeek — R1 & Coder V2)

Not every question carries the same weight. A question about budget, for example, can swing the result more than a question about preferred writing style — because cost is a hard constraint while style is a soft preference. The result is a ranked recommendation rather than a single, rigid answer, so you can make an informed decision based on your own priorities.
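As a rough illustration of the weighted scoring described above, the sketch below multiplies the points each answer awards by a per-question weight and sorts the totals into a ranking. The question names, weights, and point values are invented for this example; they are not the quiz's actual numbers.

```python
from collections import defaultdict

# Hypothetical weights: a hard constraint (budget) counts for more
# than a soft preference (writing style).
QUESTION_WEIGHTS = {"budget": 3.0, "ecosystem": 2.0, "writing_style": 1.0}

# Hypothetical points that each (question, answer) pair awards to each model.
ANSWER_POINTS = {
    ("budget", "free"): {"Llama 4": 2, "Mistral": 2, "DeepSeek": 2},
    ("ecosystem", "google"): {"Gemini": 2},
    ("writing_style", "long_form"): {"Claude": 2, "ChatGPT": 1},
}


def rank_models(answers):
    """Return (model, score) pairs sorted from best to worst match."""
    scores = defaultdict(float)
    for question, choice in answers.items():
        weight = QUESTION_WEIGHTS[question]
        for model, points in ANSWER_POINTS.get((question, choice), {}).items():
            scores[model] += weight * points
    # Highest score first; ties are broken alphabetically so the order is stable.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


ranking = rank_models(
    {"budget": "free", "ecosystem": "google", "writing_style": "long_form"}
)
for model, score in ranking:
    print(f"{model}: {score}")
```

Because the budget question carries three times the weight of the style question here, the three free models land ahead of the others even though each answer awards similar raw points.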

Why Picking the Right AI Model Matters

The Hidden Cost of the Wrong Subscription

At $20 a month, a single AI subscription feels painless. But if that tool can’t actually do what you need (say, you’re paying for ChatGPT Plus but you really need native Google Docs integration), you’ll likely sign up for a second service. Now you’re spending $40/month, toggling between two chat windows, and neither one has the full picture of your work. Over a year, that comes to $480 in total, about $240 of it for a subscription you would not need if the first tool had been the right fit.

Productivity Loss Is Real

An AI model that’s strong at creative writing but weak at complex debugging will slow a developer down, not speed them up. Conversely, a coder-focused model may produce stiff, awkward prose for a marketing team. The mismatch doesn’t just waste money — it wastes time, which is the resource you were trying to save in the first place.

The “One-Size-Fits-All” Myth

No single model is the best at everything. ChatGPT has the broadest plugin ecosystem. Gemini has the largest context window among the proprietary models and the deepest Google integration. Claude is widely considered the best instruction follower for long-form writing and production-quality code. Llama 4 and Mistral let you self-host with no subscription at all. DeepSeek offers strong multi-step reasoning at rock-bottom prices. Understanding these differences is the entire point of the quiz.

Cost-Benefit Analysis: What You Actually Pay

Price is usually the first question people ask, so let’s lay every option on the table.

| Model / Tier | Monthly Cost | API Pricing | Open Source? |
|---|---|---|---|
| ChatGPT Plus | ~$20/mo | Higher tier | No |
| ChatGPT Pro | ~$200/mo | Higher tier | No |
| Gemini Advanced | ~$20/mo (Google One) | $2 / $12 per M tokens (lowest of the three) | No |
| Gemini Workspace | ~$30/user/mo | $2 / $12 per M tokens | No |
| Claude Pro | $20/mo | Mid-range | No |
| Claude Max | $100–200/mo | Mid-range | No |
| Llama 4 (Scout / Maverick) | Free to download | Self-host or cloud provider | Yes – Community License |
| Mistral (Large 3 / Small 4) | Free open weights | Available via platform | Yes – Apache 2.0 |
| DeepSeek (R1 / Coder V2) | Free open weights | Among the cheapest available | Yes – MIT |

What the Numbers Mean in Practice

If you’re an individual user who mainly drafts emails and brainstorms, the ~$20/month tiers of ChatGPT Plus, Gemini Advanced, and Claude Pro are all competitively priced. The deciding factor won’t be cost; it will be ecosystem fit (more on that below).

For developers and power users who hit API endpoints heavily, Gemini’s $2/$12-per-million-token pricing is the lowest among the three major proprietary offerings. DeepSeek’s API is even cheaper if you’re comfortable with a less established platform.
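To make the per-token pricing concrete, here is a minimal cost estimator. It assumes the $2 figure is the price per million input tokens and the $12 figure is the price per million output tokens, which is the usual convention but is not stated explicitly above. The monthly token volumes are made up for the example.

```python
def api_cost_usd(input_tokens, output_tokens,
                 input_price_per_m=2.0, output_price_per_m=12.0):
    """Estimate API cost in USD from token counts and per-million-token prices.

    The default prices are the $2 / $12 figures quoted in the text, read here
    as input / output prices (an assumption).
    """
    input_cost = input_tokens / 1_000_000 * input_price_per_m
    output_cost = output_tokens / 1_000_000 * output_price_per_m
    return input_cost + output_cost


# Hypothetical workload: 50M input tokens and 5M output tokens in a month.
monthly = api_cost_usd(input_tokens=50_000_000, output_tokens=5_000_000)
print(f"Estimated monthly API cost: ${monthly:.2f}")
```

Output tokens cost six times as much as input tokens under this reading, so workloads that generate long responses get expensive faster than workloads that mostly read.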

For budget-conscious teams or tinkerers, the open-source trio (Llama 4, Mistral, and DeepSeek) can be downloaded and run for the cost of the hardware alone. Meta’s Llama 4 Scout model, for example, fits on a single H100 GPU, making self-hosting surprisingly accessible. Mistral’s Small 4 has 6–8 billion active parameters, and if that is still too large for your hardware, the tiny Ministral 3B variant can run on as little as 4 GB of VRAM.

Watch out for hidden costs: ChatGPT’s 1-million-token context window is impressive, but cost doubles once you exceed 272K tokens. Usage limits on the Plus plan can also be a bottleneck for heavy users. Always check the fine print.
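The long-context surcharge is easy to underestimate, so here is a small sketch of how it could play out. It assumes the doubled rate applies to the entire request once the prompt exceeds 272K tokens; the text does not say whether the surcharge covers the whole request or only the excess, so check the provider's pricing page. The base price is a placeholder, not a real rate.

```python
LONG_CONTEXT_THRESHOLD = 272_000  # tokens, the threshold quoted in the text
LONG_CONTEXT_MULTIPLIER = 2.0     # "cost doubles"


def request_cost_usd(prompt_tokens, base_price_per_m):
    """Cost of one request under the assumed rule: once the prompt exceeds
    the threshold, the whole request is billed at double the base rate."""
    over_limit = prompt_tokens > LONG_CONTEXT_THRESHOLD
    multiplier = LONG_CONTEXT_MULTIPLIER if over_limit else 1.0
    return prompt_tokens / 1_000_000 * base_price_per_m * multiplier


BASE_PRICE = 1.0  # hypothetical price per million tokens, for illustration only

short_request = request_cost_usd(250_000, BASE_PRICE)
long_request = request_cost_usd(500_000, BASE_PRICE)
print(f"250K-token prompt: ${short_request:.2f}")
print(f"500K-token prompt: ${long_request:.2f}")
```

Under this assumption, doubling the prompt from 250K to 500K tokens quadruples the bill rather than doubling it, which is why trimming context just below the threshold can be worth the effort.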

Integration & Ecosystem Lock-In

Price only tells half the story. Where an AI model lives — and what it plugs into — can matter just as much.

ChatGPT → The Microsoft Universe

OpenAI’s GPT-5.4 is deeply embedded in Microsoft 365, Azure, and GitHub Copilot. If your company already runs on Outlook, Teams, and Excel, ChatGPT slots in with minimal friction. It also boasts thousands of custom GPTs in its GPT Store, a Computer Use API that can see your screen, click, and type, plus a Code Interpreter for sandboxed data analysis. The tradeoff: you’re tying your AI workflows to Microsoft’s cloud.

Gemini → The Google Universe

Gemini 3.1 Pro is baked into Google Docs, Sheets, Gmail, and Meet, with real-time Google Search integration and a free Gemini Code Assist extension for IDEs. It’s also the only model of the six with native video and audio input, plus the largest context window among proprietary models at 2 million tokens. But if your workplace runs on Microsoft or an independent stack, much of that value evaporates.

Claude → Multi-Cloud Flexibility

Anthropic deliberately avoided a single-vendor lock-in. Claude Opus 4.6 is available on AWS Bedrock, Google Vertex AI, and Microsoft’s Azure AI Foundry, giving enterprises the freedom to run it wherever their infrastructure already lives. The downside is fewer native consumer-facing integrations — there’s no “Claude inside Google Docs” equivalent, and it lacks native audio or video input.

Open-Source Models → Your Own Stack

Llama 4, Mistral, and DeepSeek don’t lock you into any ecosystem at all. You can run them on your own servers, inside a private cloud, or through third-party hosting providers. This is the ultimate flexibility — but it also means you are responsible for integration, updates, and uptime.

Switching & Replacement Ability

Choosing an AI model isn’t a tattoo — but some choices are harder to reverse than others.

Proprietary Models: Medium Switching Friction

Moving from ChatGPT to Gemini (or vice versa) means rebuilding custom prompts, migrating any automated workflows built on one company’s API, and retraining your team’s habits. If you’ve invested heavily in ChatGPT’s custom GPTs or Gemini’s Workspace add-ons, those assets don’t transfer. Claude’s multi-cloud availability makes it slightly easier to swap hosting providers without changing the model itself, but you’re still locked to Anthropic’s API format.

Open-Source Models: Low Switching Friction

Because Llama 4, Mistral, and DeepSeek all publish their weights, you can host any of them behind the same inference API (for example, an OpenAI-compatible endpoint). That means switching from Mistral to DeepSeek can be as simple as swapping one model file for another. The portability advantage is significant: you own the deployment, so you’re never one pricing change away from scrambling for an alternative.
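The sketch below shows what "the same inference API" can look like in practice. It builds, but does not send, a chat request in the OpenAI chat-completions format, which many self-hosting servers mirror. The base URL and the model identifiers are hypothetical; the real names depend on how your server registers the weights.

```python
import json
import urllib.request


def build_chat_request(base_url, model, prompt):
    """Build (without sending) a chat request for an OpenAI-compatible server."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


BASE_URL = "http://localhost:8000"  # hypothetical self-hosted endpoint
PROMPT = "Summarize this support ticket in two sentences."

# Hypothetical model identifiers; only this one string changes on a switch.
before = build_chat_request(BASE_URL, "mistral-small", PROMPT)
after = build_chat_request(BASE_URL, "deepseek-r1", PROMPT)

print(before.full_url == after.full_url)
print(json.loads(before.data)["model"], "->", json.loads(after.data)["model"])
```

The URL, headers, and message format stay identical across the two requests. Prompts may still need retuning after a switch, since different models respond differently to the same instructions.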

Pro tip: If vendor lock-in worries you, start with an open-source model. You can always add a proprietary service later for specific tasks, but migrating away from a proprietary ecosystem is usually harder than migrating into one.

Open Source vs. Proprietary: The Real Tradeoffs

The open-source vs. proprietary debate isn’t just philosophical — it has concrete implications for cost, control, compliance, and capability.

Self-Hosting: Control and Privacy

When you run Llama 4, Mistral, or DeepSeek on your own hardware, your data never leaves your network. For industries bound by strict regulations — healthcare, legal, finance — this can be a compliance requirement, not just a preference. Mistral is especially attractive in the European Union because of its strong European language support across 40+ languages and its inherent EU data sovereignty advantage. DeepSeek-R1’s MIT license and Mistral’s Apache 2.0 license are both fully permissive for commercial use, while Llama 4 uses a custom Community License that restricts companies with more than 700 million monthly active users and has certain EU-specific restrictions.

Managed Convenience: Why People Still Pay

Self-hosting isn’t free in practice. You need GPUs, DevOps time, monitoring, and security patches. The proprietary services handle all of that. ChatGPT offers SOC 2 Type 2 compliance plus GDPR/CCPA adherence out of the box. Claude is HIPAA-ready with data residency controls. For a five-person marketing team, paying $20/month per seat is vastly cheaper — and simpler — than renting GPU time and managing Kubernetes clusters.
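A back-of-the-envelope comparison makes the point for the five-person team. The $20 per seat figure comes from the text; the GPU rental rate, usage hours, and DevOps time are placeholder assumptions, so substitute your own quotes before drawing conclusions.

```python
def monthly_seat_cost(seats, price_per_seat=20.0):
    """Monthly cost of a per-seat subscription ($20/seat as quoted in the text)."""
    return seats * price_per_seat


def monthly_self_host_cost(gpu_hourly_rate, gpu_hours, ops_hours, ops_hourly_rate):
    """Monthly cost of rented GPU time plus the staff time needed to run it."""
    return gpu_hourly_rate * gpu_hours + ops_hours * ops_hourly_rate


subscription = monthly_seat_cost(seats=5)

# Hypothetical inputs: one rented GPU during working hours plus light DevOps upkeep.
self_hosted = monthly_self_host_cost(
    gpu_hourly_rate=2.0,   # assumed rental price in USD per hour
    gpu_hours=160,         # assumed hours of use per month
    ops_hours=10,          # assumed maintenance hours per month
    ops_hourly_rate=50.0,  # assumed cost of an engineer's hour in USD
)

print(f"Subscriptions: ${subscription:.0f}/month")
print(f"Self-hosting:  ${self_hosted:.0f}/month")
```

With these placeholder numbers the staff time alone costs more than all five subscriptions, which is the usual pattern for small teams. The balance shifts as seat counts grow or when compliance rules out sending data to a third party.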

Licensing at a Glance

- Mistral (Apache 2.0) — the most permissive. Use it, modify it, sell it, no strings attached.

- DeepSeek (MIT) — similarly permissive. Minimal restrictions on commercial deployment.

- Llama 4 (Community License) — free for most companies, but Meta imposes restrictions for organizations exceeding 700 million monthly active users and includes certain EU-specific limitations. Fine for the vast majority of businesses.

- ChatGPT, Gemini, Claude — proprietary. You access the model through an API or subscription; you never possess the weights.

Capability Gaps to Be Aware Of

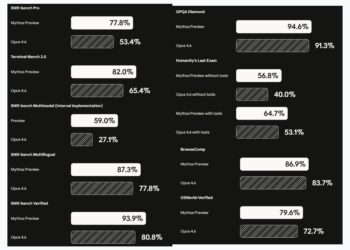

Open-source models have closed much of the quality gap, but differences remain. Llama 4 Maverick rivals GPT-4o and Gemini 2.0 Flash on public benchmarks, and its 400-billion-parameter mixture-of-experts architecture is genuinely impressive — yet it lacks the polished consumer app experience of ChatGPT or Gemini.

DeepSeek-R1 is strong in chain-of-thought reasoning and agentic tasks, but its leaderboard position has slipped compared to newer competitors like GLM-5 and Qwen 3.5. Meanwhile, ChatGPT can occasionally drift on long, multi-part prompts, and Gemini’s coding ability is weaker on complex debugging scenarios compared to Claude. Every model has blind spots — the question is whether your workflow hits them.

Final Thoughts

The AI landscape in 2026 is wider and more competitive than ever. You can spend $200 a month on a premium proprietary plan, or you can spend nothing and self-host an open-source model that rivals last year’s commercial leaders. Neither path is universally “better” — it depends on what you do every day, how much you value convenience over control, and which ecosystem your tools already live in.

That’s exactly what the quiz above is designed to sort out. Ten questions, two minutes, and you’ll have a data-informed starting point instead of a guess. The worst outcome isn’t picking the “wrong” model — it’s never evaluating your options and paying for a tool that’s only half-right for months on end.

Scroll back up and take the quiz. Your future, more-productive self will thank you.