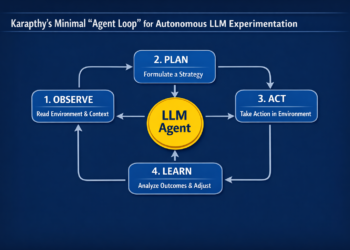

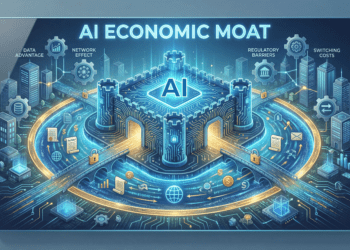

There is a concept in competitive strategy so foundational that Warren Buffett has spent decades evangelizing it, business school professors have built entire courses around it, and investors have used it as the primary lens through which to value companies. The concept is the economic moat — the durable competitive advantage that protects a business from rivals the way a water-filled trench once protected a castle from invading armies.

Buffett has described it simply: the best businesses are those that have a “moat” around them. Wide moats — the kind that come from network effects, high switching costs, cost advantages, and intangible assets like patents and brands — are what separate the businesses that endure from the ones that don’t. They’re what let a company raise prices without losing customers. They’re what let incumbents sleep soundly while competitors try and fail to eat their lunch.

AI is not going to let them sleep anymore.

That is, roughly speaking, the thesis Naval Ravikant has been developing in public. Ravikant — the co-founder of AngelList, one of Silicon Valley’s most intellectually consistent long-term investors, and the closest thing the startup world has to a philosopher-king — has been sounding this alarm in pieces, in tweets, in interviews, and in the framework he’s been building publicly for years. He’s not the only one saying it. But he’s one of the few who saw it early, said it clearly, and is now watching it happen in real time.

The thesis is not that AI will make competition marginally more intense. The thesis is that AI is systematically undermining the categories of advantage that incumbents have spent decades building. Not tweaking the rules of the game. Rewriting them.

This is worth sitting with for a moment. Every major industry in the modern economy — law, consulting, finance, software, medicine, media, marketing — was structured, at least in part, around the control of specialized knowledge and the difficulty of replicating complex processes at scale. Those industries built moats. Deep ones. AI is draining them.

The Coase Connection

To understand why, it helps to go back further than Naval. Back to a Nobel Prize-winning economist named Ronald Coase who, in 1937, published a paper called “The Nature of the Firm.” The paper asked a deceptively simple question: why do companies exist at all?

In a free market, the theory goes, you should be able to contract for everything. Need legal advice? Hire a lawyer for that specific task. Need marketing? Find a freelancer. Need code written? Outsource it. But in practice, companies hire employees, build organizational structures, and manage people full-time. Why?

Coase’s answer was transaction costs. Searching for the right contractor, negotiating terms, monitoring quality, managing delivery — all of that friction adds up. When it’s cheaper to bring someone in-house than to contract out every task, you build a company. When it’s cheaper to contract, you don’t.
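Coase's logic can be made concrete with a toy make-or-buy model in Python. The task names, costs, and the single `transaction_cost` parameter are hypothetical illustrations, not figures from Coase's paper. The sketch only shows the mechanism: as the friction of contracting falls, fewer tasks stay inside the firm.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    in_house_cost: float  # hypothetical cost of doing the task with salaried staff
    market_price: float   # hypothetical price a contractor charges for the same task


def make_or_buy(task: Task, transaction_cost: float) -> str:
    """Toy version of Coase's rule: contract out only if price plus friction beats in-house cost."""
    total_market_cost = task.market_price + transaction_cost
    return "contract" if total_market_cost < task.in_house_cost else "in-house"


def tasks_kept_in_house(tasks: list[Task], transaction_cost: float) -> list[str]:
    """Names of the tasks the firm still does itself at a given level of transaction cost."""
    return [t.name for t in tasks if make_or_buy(t, transaction_cost) == "in-house"]


# Illustrative numbers only; nothing here comes from Coase's paper.
SAMPLE_TASKS = [
    Task("legal review", in_house_cost=100.0, market_price=70.0),
    Task("marketing copy", in_house_cost=80.0, market_price=50.0),
    Task("code maintenance", in_house_cost=120.0, market_price=100.0),
]

if __name__ == "__main__":
    # As search, negotiation, and monitoring costs fall, the firm's boundary shrinks.
    for friction in (50.0, 25.0, 5.0):
        print(f"transaction cost {friction:5.1f}: in-house = {tasks_kept_in_house(SAMPLE_TASKS, friction)}")
```

With these made-up numbers, all three tasks stay in-house at a transaction cost of 50, only code maintenance stays at 25, and none stays at 5.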

Here is the implication that Naval and others have been working from: AI is collapsing transaction costs in every direction simultaneously. The cost of finding expertise, synthesizing information, generating work product, and quality-checking output is dropping toward zero. And when transaction costs drop, the efficient size of a company shrinks.

Back in 2012, Naval said at a PandaDaily fireside chat that he believed “the efficient size of a company is shrinking very rapidly.” He predicted billion-dollar businesses built by four or five people. He said companies like Facebook and Google were bloated, that founders knew 80% of their employees weren’t really needed but couldn’t figure out which 80%. In 2012, this sounded like philosophical hand-waving. Today it sounds like a description of what’s already happening.

The moats, in this framing, were always downstream of transaction costs. They existed because certain kinds of knowledge were expensive to acquire, synthesize, or replicate. They existed because building complex software required large engineering organizations. They existed because serving customers at scale required large support teams. Remove those structural constraints, and the moats start draining.

That’s what we’re watching happen now.

The Knowledge Moat Is the First to Go

Let’s start with the most obvious one, because it’s the most consequential: the knowledge moat.

For most of human history, expertise was both genuinely scarce and genuinely expensive to access. Becoming a lawyer required years of education, passing a bar exam, and accumulating case experience that couldn’t be faked. Becoming a management consultant required a credential from a prestigious firm and thousands of hours of frameworks, case studies, and client engagements. Becoming a financial analyst required understanding accounting, modeling, market dynamics — stuff that took years to absorb.

This scarcity was real. But it was also exploited.

Law firms charged $500, $800, $1,200 an hour for work that — once the knowledge was acquired — was often largely formulaic. The premium wasn’t entirely for the knowledge itself; it was for the credentialed, verified, trust-bearing human who held it. Consulting firms sold frameworks that sounded proprietary but were often versions of publicly available ideas, packaged and delivered with enough prestige that clients paid for the theater of it. Financial advisory firms charged percentages of assets under management for advice that, in many cases, could have been delivered by a well-calibrated algorithm.

The knowledge moat was always thinner than the price tag suggested. AI is exposing that.

Thomson Reuters’ 2026 AI in Professional Services Report, drawing on input from more than 1,500 professionals, shows how comprehensively AI is embedding itself in legal, accounting, tax, risk, and financial services. The legal sector in particular has watched this shift accelerate with unusual speed. Just a few years ago, the standard line from the bar was “AI won’t replace lawyers; lawyers who use AI will replace those who won’t.” That line was always a bit defensive, a bit self-serving. It’s becoming harder to maintain.

Oliver Roberts, editor-in-chief of AI & the Law at The National Law Review, put it plainly: “AI technology is at its weakest point at this very moment. It is only improving.” He predicted that AI will replace entry-level lawyers within five years, not as alarmism but as a near-consensus view among the legal professionals who work most closely with the technology.

Think about what that means structurally. The entry-level associate at a major law firm has historically been a revenue center and a training pipeline. They bill hours while learning the craft. Their work — document review, research, memo drafting, contract analysis — can now be completed faster, at lower cost, and with comparable or better accuracy by AI systems trained on vast legal datasets. The Artificial Lawyer’s 2026 industry predictions are saturated with this acknowledgment: clients won’t go back to paying for associates to do routine work that AI can already do.

This is not speculative. It is already happening. And the moat — the one built on “we have trained lawyers who know this stuff and you don’t” — is not disappearing all at once. It’s draining.

Information Asymmetry Was the Game. AI Changes the Game.

Closely related to the knowledge moat is something more subtle: the information asymmetry moat.

A huge number of business models — not just in professional services, but across industries — were built on knowing something the customer didn’t. Car dealerships knew the invoice price; buyers didn’t. Real estate agents knew comparable sales data; buyers and sellers didn’t have easy access to it. Insurance brokers knew which products had the best margins; clients trusted they were getting objective advice. Financial advisors knew their fee structures were complicated enough to obscure their own interests.

The internet eroded many of these asymmetries. Websites like Zillow democratized home price data. Consumer review sites exposed quality gaps. Comparison engines leveled insurance and financial product selection.

AI is finishing the job.

The difference is that AI doesn’t just surface existing data — it synthesizes, interprets, and advises. You can now have a dialogue with an AI that understands your specific financial situation and explains your options clearly, not because it has access to secret information, but because it has been trained on enough of the universe of financial knowledge that it can reason through your problem. You can ask an AI whether you need a lawyer for a given contract dispute, get a credible answer, and in many cases discover that you don’t.

This collapse of asymmetry is worth sitting with, because so many businesses were built on it. Not maliciously — asymmetry often existed because acquiring the relevant knowledge genuinely required time, training, and resources. But AI changes the cost structure of access so dramatically that the business model evaporates.

Naval has framed this consistently: leverage, in his framework, is the ability to produce outsized output from a given unit of input. Knowledge workers have historically derived their leverage from the scarcity of their knowledge. AI gives everyone that leverage. The knowledge moat had a wall around it. AI built a road around the wall.

The SaaS Moat: Lock-In at Risk

Software-as-a-Service built some of the stickiest moats of the digital age. Once you’d loaded your customer data into Salesforce, your HR data into Workday, your communications into Slack, and your project management into Jira, moving was an enormous undertaking. The switching cost was real. The lock-in was deliberate. And the compounding subscription revenue it generated was what drove SaaS valuations to 20, 30, sometimes 50 times revenue.

Now consider what Naval wrote on X: “Software will proliferate just as videos, music, writing did. The market structure will shift from a ‘fat middle’ to mega-aggregators and a long tail. It’ll be a slower process due to network effects, but many traditional vendor lock-ins will get eaten by AI.”

He’s describing a structural shift that follows the historical pattern of every creative medium that went through democratization. Writing went through it with blogging. Video went through it with YouTube. Music went through it with SoundCloud and streaming. In each case: the tools of creation cheapened, the barriers to entry dropped, the “fat middle” — the mid-tier publishers, mid-size studios, regional software vendors — got hollowed out. What survived was the platform layer and the long tail of specialists.

Software is following the same arc. The cost of building functional software is collapsing. Microsoft’s CTO Kevin Scott has predicted that 95% of code will be written by AI within five years. Anthropic’s Dario Amodei has suggested the timeline may be shorter. These aren’t fringe views. They’re the considered opinions of the people building the technology.

The downstream consequence for SaaS moats is stark. Why pay a five-figure annual subscription for a workflow management tool when the same tool can be generated on demand, customized to your specifications, and hosted for a fraction of the cost? The answer, historically, was that building software was expensive and switching was painful. Remove both constraints and the moat starts draining.

Klarna made this bet explicitly. The Swedish fintech reportedly eliminated over 1,200 external SaaS tools as part of its AI-first transformation. That’s not a rounding error. That’s a company deciding that the value of those subscriptions — built on lock-in, data stickiness, and switching costs — no longer justified the price. It’s a preview of what happens when AI lowers the cost of building alternatives.

The second layer of the SaaS moat — data stickiness — faces its own erosion. Once AI can migrate data schemas and port records between platforms with minimal human involvement, the argument for staying locked in weakens considerably. The EU’s Data Act, which came into force in 2024, requires data portability in certain contexts — regulatory and technological pressure moving in the same direction at the same time. That combination is unusually dangerous for incumbents counting on data lock-in.

The SaaS moat isn’t gone. But it’s narrowing to what was always its strongest part: the network effects of the true platforms, and the genuine workflow integration of tools too embedded to displace. The middle is at risk.

Customer Service: A Cautionary Tale in Real Time

The Klarna story deserves extended attention, because it is one of the most honest and complete case studies in what happens when a company tries to use AI to replace a workforce — and then has to reverse course.

Klarna was aggressive. Famously so. The company deployed an AI assistant in partnership with OpenAI in 2024 and announced that it was doing work equivalent to that of 700 customer support agents. CEO Sebastian Siemiatkowski was an outspoken advocate, framing the move as both efficient and inevitable. Headcount fell from roughly 5,500 employees in late 2022 to around 3,000 by the time the company filed its IPO prospectus. He described AI as having helped shrink the workforce by 40%.

Then the quality collapsed.

At its peak, Klarna’s AI systems were handling two-thirds to three-quarters of all customer interactions. But what customers experienced — particularly on complex issues — was generic, repetitive, insufficiently nuanced responses. Complaints rose. Satisfaction scores fell. By early 2025, internal reviews made the problem undeniable.

In May 2025, Siemiatkowski told Bloomberg that the cost-cutting push had gone too far. “As cost unfortunately seems to have been a too predominant evaluation factor when organizing this, what you end up having is lower quality,” he said. Klarna began rehiring — piloting an Uber-style gig workforce model to bring human agents back. In a remarkable public moment, Siemiatkowski announced: “We just had an epiphany: in a world of AI nothing will be as valuable as humans.”

The story has been widely reported as a cautionary tale about AI overcorrection. But there’s a deeper reading. Klarna’s customer service moat — built on a large, trained, relationship-bearing human workforce — was something the company voluntarily tried to demolish in favor of an AI alternative. The AI couldn’t fully replicate it. But the lesson isn’t that the moat was safe. It’s that Klarna chose to drain it and then had to rebuild.

Other companies will make the same choice, with varying results. Some will succeed. The point is not that AI always replaces human service — it’s that the economic logic has changed. The moat built on “we have 700 support agents and that’s expensive for a competitor to replicate” is no longer a moat when an AI system can, for most interactions, do the same job at a fraction of the cost.

The moat erodes. The companies that navigate this well will do so by figuring out where the human element is genuinely irreplaceable and preserving it there while letting AI handle the rest. The ones that don’t navigate it well will oscillate between overcorrection and undercorrection, as Klarna did, before eventually landing somewhere calibrated.

Scale Advantages and the Lean Machine

One of the most powerful traditional moats is economies of scale — the idea that bigger is cheaper, and cheaper means you can undercut competitors while maintaining margins. Manufacturing has it. Distribution has it. Marketing has it to some degree.

White-collar knowledge work has historically had a more complicated version of it. Larger consulting firms could staff bigger projects, serve more clients simultaneously, and amortize the cost of proprietary research and training across a larger base. Larger law firms could handle complex multi-jurisdictional matters that smaller firms couldn’t. Larger software companies could invest more in R&D while subsidizing the cost across a larger customer base.

AI disrupts the scale advantage in knowledge work by enabling small teams to achieve the output of large ones.

Midjourney is the canonical example. Eleven employees at launch in 2022. By 2023, they’d hit $200 million in annual revenue with roughly 40 people and zero dollars of venture capital. As of 2025, they were generating an estimated $500 million in revenue with around 163 employees — over $3 million in revenue per employee, compared to the traditional tech company average of $150,000 to $300,000. They never needed scale. They needed leverage.
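The revenue-per-employee comparison is simple division. The sketch below uses the estimated Midjourney figures and the traditional benchmark range quoted in this paragraph; those are third-party estimates rather than audited numbers, so the output is only as reliable as its inputs.

```python
def revenue_per_employee(annual_revenue: float, employees: int) -> float:
    """Annual revenue divided by headcount."""
    if employees <= 0:
        raise ValueError("employees must be positive")
    return annual_revenue / employees


# Estimated 2025 figures quoted in the paragraph above (third-party estimates, not audited).
midjourney = revenue_per_employee(500_000_000, 163)

# Traditional tech-company benchmark range quoted above.
typical_low, typical_high = 150_000, 300_000

print(f"Midjourney: ${midjourney:,.0f} per employee")
print(f"vs. typical tech company: {midjourney / typical_high:.1f}x to {midjourney / typical_low:.1f}x")
```

That works out to just over $3 million per employee, roughly 10 to 20 times the traditional benchmark.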

Cursor, the AI-powered code editor from Anysphere, went from $1 million to $500 million in ARR faster than any SaaS company in history with fewer than 50 people. Revenue per employee somewhere in the range of $1.67 million to $2.5 million. Unprecedented by any traditional metric.

These aren’t accidents. They’re the natural outcome of AI providing leverage that previously required large teams. A 10-person company with the right AI tools can now perform research, generate content, write code, manage customer interactions, run financial analysis, and produce marketing materials at the output level of a 100-person company a decade ago.

This is what Naval means when he talks about programmers gaining more leverage. In his framing, “programmers use AI to replace everybody else.” The creative side of coding doesn’t go away. If anything, programmers get more powerful. But the organizational overhead that once justified large teams — the project managers, the QA testers, the documentation writers, the junior developers grinding through boilerplate — that’s compressible in ways it wasn’t before.

The scale moat doesn’t disappear entirely. Physical infrastructure, hardware manufacturing, logistics, and certain networked businesses still benefit from genuine economies of scale that AI doesn’t neutralize. But the scale advantage in knowledge work — the one that justified large headcounts in law, consulting, finance, marketing, and software — is in serious decline.

Brand and Credential Moats: Thinner Than They Look

Brand is often cited as one of the most durable moats. And it is — but it’s worth distinguishing between brand as a signal of quality and brand as a proxy for access.

When McKinsey’s brand commanded premium fees, part of that was genuine quality signal: the firm attracted exceptional talent, built rigorous methodologies, and delivered real value. But another part was proxy-for-access: clients hired McKinsey because they trusted the firm’s deep knowledge of best practices that the client didn’t have and couldn’t easily access elsewhere.

AI chips away at the second part. The best practices aren’t secret. The frameworks aren’t proprietary. What a good consultant does — which is to synthesize information, apply frameworks, and identify the best path forward — is increasingly something that AI can do, faster and at a fraction of the cost, for the 80% of questions that are well-formed and have precedent.

This is what the legal industry is grappling with. As Omar Haroun of Eudia observed in his 2026 prediction, clients — facing growing scrutiny from CFOs — are asking a simple question: what incremental value are we getting from this specialized legal vendor versus what ChatGPT Enterprise or Microsoft Copilot can already deliver?

That question is uncomfortable. It’s uncomfortable because the honest answer, in many cases, is: not as much as you’re charging for. The brand moat at the mid-tier of professional services — the firms that aren’t truly elite but charged elite prices because clients didn’t have better options — faces real pressure.

The brand moats at the very top are more defensible. McKinsey and Goldman Sachs and White & Case will survive the transition because their brand signals access to people and relationships and judgment that genuinely can’t be replicated. But the firms that were coasting on credential-based pricing without world-class underlying value? The AI-accelerated commoditization of knowledge will find them.

Credential moats face their own version of this. The credential was always meant to signal competence — to give clients a reliable shortcut when they couldn’t directly evaluate quality. When AI can perform the tasks the credential was designed to certify, the credential’s gatekeeping function weakens. This is why we’re watching legal professionals debate what it means that AI can now pass the bar exam. It doesn’t mean AI is a lawyer. But it does mean the credential is a less reliable signal of irreplaceable expertise than it used to be.

Distribution Moats and the Leveling of the Marketing Field

Distribution has historically been a powerful moat, particularly in consumer goods and media. If you controlled the shelf space, the publishing deal, the broadcast slot, the app store ranking — you had a structural advantage that competitors couldn’t easily replicate. Distribution was expensive to build, slow to develop, and self-reinforcing once established.

The internet eroded this in media and software. AI is eroding it more broadly.

AI-powered marketing and SEO tools now let a small business run the same quality campaigns, write the same caliber of content, and perform the same audience analysis that previously required dedicated teams. The playing field doesn’t fully level — the company with existing brand recognition and customer relationships still has an advantage. But the advantage of having a large marketing department is diminishing when a solopreneur with the right tools can produce comparable output.

This is what Naval has observed about software proliferating like writing and music did. Writing proliferated because blogging tools and search engines made distribution free. Music proliferated because digital audio workstations and streaming platforms made production and distribution free. Software is next — the tools of production are cheapening, and the tools of distribution (app stores, cloud hosting, SaaS sales motions) are increasingly AI-assisted.

The distribution moat survives most robustly where network effects are genuine. Spotify and YouTube emerged as dominant platforms from the music and video democratization precisely because they had network effects on top of distribution — the more users they had, the better the experience for each user, which attracted more users, which further entrenched their position. That compounding logic is what makes a platform durable, and AI doesn’t necessarily dissolve it.

But the mid-tier distribution players — the companies that had advantaged distribution without strong network effects — are exposed. The regional software vendor with established sales relationships but no platform effects. The marketing agency with client relationships but no proprietary methodology. The media company with an audience but no community. These moats were built on friction. AI reduces friction.

Institutional Memory: The Forgotten Moat

Here’s one that rarely gets discussed but is real: institutional memory.

Large organizations build up vast, tacit knowledge over decades. How to navigate internal approval processes. Which regulatory interpretations have been challenged and succeeded. What client preferences were, what worked in previous negotiations, what the competitive landscape looked like three years ago. This knowledge lived in the heads of experienced employees, in email threads, in slide decks buried on internal servers, in the expertise of people who’d been around long enough to know where the bodies were buried.

This institutional memory was a genuine moat. It made long-tenured employees hard to replace, made organizational knowledge difficult to transfer, and gave large incumbents an advantage over new entrants who had to build this knowledge from scratch.

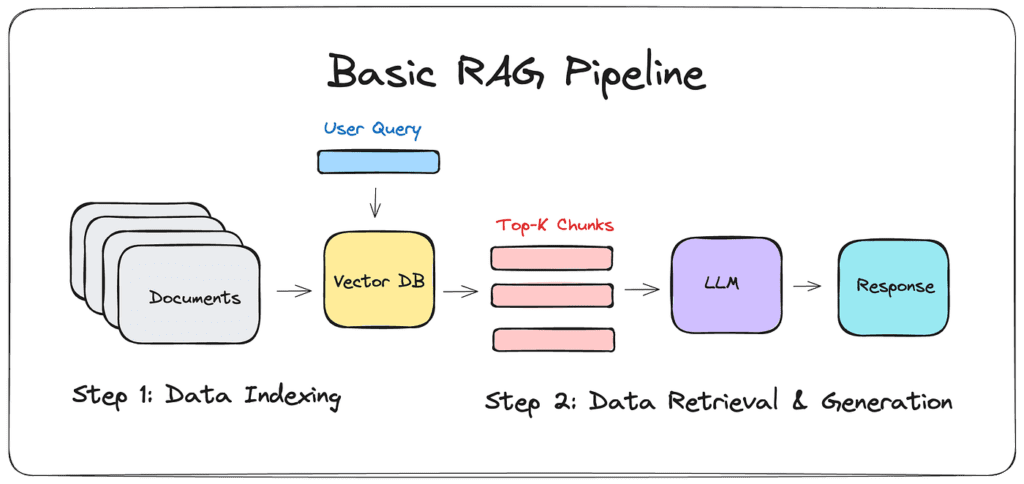

AI has changed the cost of accessing, organizing, and querying that institutional knowledge. RAG (Retrieval-Augmented Generation) systems can now ingest years of internal documents and make them queryable in natural language. The knowledge that used to live in a senior partner’s head can now live in a system that a junior employee can query.
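The retrieval half of RAG can be sketched in a few lines. This is a minimal illustration under simplifying assumptions: production systems typically use a learned embedding model and a vector index, then pass the retrieved passages to a language model that writes the answer. Here, plain bag-of-words cosine similarity stands in for embeddings, the document IDs and contents are hypothetical, and `build_prompt` only assembles the text a model would receive; no model is called.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a stand-in for a learned embedding model."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return IDs of the k documents most similar to the query, skipping zero-score matches."""
    q = Counter(tokenize(query))
    scored = sorted(
        ((cosine(q, Counter(tokenize(body))), doc_id) for doc_id, body in docs.items()),
        reverse=True,
    )
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Assemble the retrieved passages into the context a language model would receive."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in retrieve(query, docs, k))
    return f"Answer using only the context below.\n{context}\n\nQuestion: {query}"


# Hypothetical internal documents standing in for years of memos and deal notes.
INTERNAL_DOCS = {
    "memo-2019": "Regulator rejected our interpretation of the disclosure rule in 2019.",
    "deal-notes": "Client Acme preferred fixed fees during the last contract negotiation.",
    "hr-policy": "Vacation requests need manager approval two weeks ahead.",
}

if __name__ == "__main__":
    print(build_prompt("What fee structure did Acme prefer in the last negotiation?", INTERNAL_DOCS))
```

The junior employee's question surfaces the deal notes first, which is the point the paragraph makes: the knowledge no longer has to be recalled by the person who was in the room.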

This cuts both ways. It’s genuinely valuable for organizations — it makes their existing knowledge more accessible and reduces their dependence on any individual knowledge holder. But it also weakens the moat. The incumbent’s accumulated knowledge can be operationalized more cheaply, and new entrants who lack that history can compensate by training AI systems on the publicly available equivalent — industry reports, case studies, competitor filings, academic research.

The moat was the difficulty of knowledge transfer. AI reduces that difficulty dramatically.

The Moats That Survive

All of this could read as a declaration that moats are dead. They’re not. Some are getting drained. Others are deepening.

Proprietary data is increasingly the last true knowledge moat. Not data that’s publicly available — AI can access that. Not data that competitors could acquire — they can. But genuinely unique, continuously updated, real-world data that reflects something about the world that can’t be replicated. Clinical trial data. Proprietary financial transaction data. Behavioral data from a platform with network effects. The companies that have this and can use it to train and fine-tune models that perform better on their specific domain will have a meaningful and durable advantage.

Network effects, as discussed, remain powerful where they’re genuine. The platform that connects buyers and sellers, the two-sided marketplace where every new participant makes the platform more valuable for existing ones, the communication tool where everyone in your organization already is — these are defensible precisely because AI doesn’t change the fundamental logic of compounding network value.

Genuine trust and relationships survive, and perhaps become more valuable. In a world where AI-generated content, advice, and products are ubiquitous, the human element (the advisor you trust, the partner who knows your business, the supplier who has never let you down) commands a scarcity premium. We are already seeing this in professional services: the firms and individuals who survive the AI-driven commoditization of knowledge work are those who’ve built genuine relationships of trust that clients don’t want to hand to a machine.

Taste, judgment, and curation are hard to replicate. Naval has noted this himself — the creative side of coding doesn’t go away, and neither does the creative side of anything else. Vision, aesthetics, judgment about what is worth building — these are things AI can assist with but cannot originate in the way a human with genuine taste can. The editor who knows what’s worth publishing. The designer with an instinct for what works. The founder who can see a market need before it’s articulable. These capabilities are not at risk from AI in the way that formulaic knowledge work is.

The Implications for Investment

If you accept the thesis that AI is draining the moats that have historically justified premium valuations in knowledge-intensive industries, the implications for how you value companies are significant.

The Earnout Investor put this clearly: the old equation of “bigger company equals bigger moat equals safer investment” is weakening. A five-person company can now generate $50 million in revenue. The traditional premium attached to scale in knowledge work is eroding. Revenue per employee is becoming one of the most important metrics to track — not because headcount doesn’t matter, but because the gap between high-AI-leverage companies and low-AI-leverage companies is widening fast. Companies still hiring aggressively without corresponding revenue growth are building cost structures that will become liabilities.

Klarna CEO Sebastian Siemiatkowski argued that AI-enabled software valuations should compress from the 20-30x price-to-sales multiples that the SaaS industry has historically commanded, down toward utility-like multiples of 1-2x. That’s a staggering prediction. It’s also not obviously wrong. If the software a company sells can be generated on demand by AI, the premium that once justified those multiples — the difficulty of building software, the defensibility of the product, the switching cost of the customer — is weakened. Software becomes more like infrastructure: essential, commoditized, low-margin.
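To see how severe that compression would be, apply both multiple ranges to the same company. The $100 million revenue figure is a hypothetical chosen for round numbers; the 20-30x and 1-2x multiples are the ones quoted above.

```python
def implied_valuation(annual_revenue: float, price_to_sales: float) -> float:
    """Market value implied by applying a price-to-sales multiple to revenue."""
    return annual_revenue * price_to_sales


revenue = 100_000_000  # hypothetical company with $100M in annual revenue

saas_low, saas_high = (implied_valuation(revenue, m) for m in (20, 30))
utility_low, utility_high = (implied_valuation(revenue, m) for m in (1, 2))

# Smallest possible drop: bottom of the SaaS range to the top of the utility range.
min_drawdown = 1 - utility_high / saas_low
# Largest possible drop: top of the SaaS range to the bottom of the utility range.
max_drawdown = 1 - utility_low / saas_high

print(f"SaaS-era value:     ${saas_low:,.0f} to ${saas_high:,.0f}")
print(f"Utility-like value: ${utility_low:,.0f} to ${utility_high:,.0f}")
print(f"Implied decline:    {min_drawdown:.0%} to {max_drawdown:.0%}")
```

Even the most generous pairing implies a 90% decline in value; the harshest implies roughly 97%.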

The Y Combinator data is the clearest leading indicator of where this is going. In YC’s Winter 2025 batch, a quarter of the startups had codebases that were 95% AI-generated. The batch as a whole was reportedly growing at about 10% per week, and some companies were reaching $10 million in revenue with fewer than 10 people. The old bottleneck was technical execution. The new bottleneck is judgment, taste, and knowing what to build: capabilities that can’t be easily automated.
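A 10% weekly growth rate is easy to underestimate because it compounds. The sketch below assumes the rate holds constant for a full 52-week year, which real companies rarely sustain; it illustrates the arithmetic and is not a forecast.

```python
import math

weekly_growth = 0.10  # the 10% week-over-week figure cited above
weeks_per_year = 52

# Compound growth: multiply by (1 + r) once per week.
annual_multiple = (1 + weekly_growth) ** weeks_per_year
# Weeks needed to double at a constant weekly rate.
doubling_time_weeks = math.log(2) / math.log(1 + weekly_growth)

print(f"Growth multiple after {weeks_per_year} weeks: {annual_multiple:.0f}x")
print(f"Doubling time: {doubling_time_weeks:.1f} weeks")
```

Sustained for a year, 10% per week compounds to roughly 142x, with revenue doubling about every seven weeks.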

Sam Altman predicted in early 2024 that we’d soon see companies with just 10 employees valued at over a billion dollars. Skild AI, a robotics startup with 25 employees, already carries a $1.5 billion valuation. These aren’t outliers. They’re early data points in a trend.

The Broader Restructuring

Zooming out to the macro level, what Naval’s thesis describes is a structural reorganization of the economy along lines that Coase’s theory predicted but that required AI to actually trigger.

Large organizations will survive where they have genuine justifications: capital-intensive physical infrastructure, regulatory relationships that require organizational credibility, physical logistics, and genuinely network-effect-driven platforms. Everything else is under pressure to compress.

The middle is the most exposed. The mid-tier consulting firm, the regional law practice, the marketing agency without a proprietary methodology, the SaaS vendor without platform network effects — these are the entities that built their business models on the friction that AI removes. They had moats. AI is draining them.

Roughly 20% of S&P 500 companies now have fewer employees than they did a decade ago while posting higher sales and profits. The software industry’s average revenue per employee jumped 27% in a single year, from $228,000 in 2023 to $290,000 in 2024. Amazon’s Andy Jassy put it plainly: “The once-in-a-lifetime rise of AI will eliminate the need for certain jobs in the next few years.”
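The 27% figure follows directly from the two revenue-per-employee numbers quoted in this paragraph; a quick check, taking those numbers as given:

```python
def percent_change(old: float, new: float) -> float:
    """Fractional change from old to new (0.27 means +27%)."""
    return (new - old) / old


# Software-industry revenue per employee, as cited in the paragraph above.
rpe_2023, rpe_2024 = 228_000, 290_000
change = percent_change(rpe_2023, rpe_2024)
print(f"Revenue per employee: ${rpe_2023:,} -> ${rpe_2024:,} ({change:.1%})")
```

The exact increase is about 27.2%, consistent with the rounded figure in the text.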

Moderna’s CEO challenged his team to launch 10 new products without adding headcount. Salesforce stopped hiring software engineers. Klarna went from 5,500 employees to 3,000. These are not anecdotes. They are the early chapters of a structural trend.

Naval’s prediction that the future will be “almost all startups” is not a prediction that big companies disappear. It’s a prediction that the organizational logic of the 20th century — the large, vertically integrated, knowledge-hoarding enterprise — gives way to a barbell economy: massive infrastructure platforms at one end, lean, highly profitable small businesses at the other, and the bloated middle getting continuously hollowed out.

What the Survivors Understand

The companies and individuals who will navigate this well are not the ones who resist the technology or the ones who uncritically over-automate (see: Klarna). They’re the ones who understand, with precision, which parts of their moat were never real — which advantages were always proxy benefits of friction that’s now gone — and which parts are genuinely durable.

The lawyer who survives isn’t the one who memorizes case law. It’s the one who builds relationships of trust with clients who want human judgment in high-stakes moments. The consultant who survives isn’t the one who packages frameworks. It’s the one who has genuine judgment about organizational dynamics, built from years of pattern recognition across complex situations, that AI can assist but not replace.

The SaaS company that survives isn’t the one that has data stickiness because migrating is painful. It’s the one that has network effects so strong that even if migration were free, no one would leave. The media company that survives isn’t the one that publishes the most content. It’s the one that has a genuine community of people who are there for each other, not just for the content.

Naval has always been consistent on this point: the moats that survive in an AI world are built on things that AI cannot easily replicate. Unique data. Genuine relationships. True network effects. Irreplaceable human judgment. And perhaps most importantly — the ability to iterate and move fast, using AI as leverage, while others are still deliberating about whether to adopt it.

Speed of iteration is the new moat. Not because speed was never important before, but because the tools for moving fast have democratized so dramatically that the companies and individuals who use them skillfully will lap the ones who don’t. The advantage is not in the AI itself — everyone will have access to comparable AI. The advantage is in using it faster, more intelligently, and more daringly than everyone else.

A Final Note on the Reset

Every few decades, the economy undergoes a structural reset that rearranges the hierarchy of competitive advantage. The Industrial Revolution reset it. Electricity reset it. The internet reset it.

Each reset drained the moats that depended on the previous paradigm and deepened the moats that aligned with the new one. Factory owners who controlled scarce manufacturing capacity lost their advantage when mass production became universal. Publishers who controlled distribution lost it when the web made distribution free. Retailers who controlled physical shelf space lost it when Amazon made everything available to everyone.

AI is the next reset.

It is not coming for all moats simultaneously. As Naval noted, the process will be slower where network effects are strong. Some industries will resist longer. Some moats are deeper than they look and will take longer to drain. This is not a cliff — it’s a slope.

But the direction is clear. The moats built on information asymmetry, specialized knowledge, scale advantages in knowledge work, switching cost lock-in, and the high cost of software development are all under pressure from the same force: AI dramatically reduces the cost of the activities those moats were built on.

Naval’s observation is not pessimistic. It’s diagnostic. He’s not saying the economy will collapse. He’s saying it will restructure — and the people and companies who understand which moats are being drained, and start building the next generation of advantages before everyone else does, will be the ones who come out ahead.

The moats aren’t disappearing. They’re moving.

The question, for every company and every professional in every knowledge-intensive field, is the same: do you know which one you’re standing in, and whether the water level is rising or falling?

If you found this essay useful, follow Naval Ravikant’s X/Twitter for his latest thinking on AI, leverage, and wealth creation. For the data on lean company building, The Earnout Investor’s analysis is worth your time. And for the clearest window into how AI is reshaping professional services in real time, the AI & the Law newsletter at The National Law Review remains one of the most credible ongoing chronicles of the transition.

AI and the Death of the Moat: What Every Business Leader Needs to Know Before It’s Too Late