China’s AI giant quietly unleashed its most powerful model yet. Here’s everything you need to know.

Hold Up — What Just Happened?

If you blinked, you might have missed it. On April 20, 2026, Alibaba quietly dropped a bombshell in the AI world. The company released Qwen 3.6-Max-Preview — its most powerful AI model to date — and the AI community is buzzing. This isn’t just another incremental update. This is Alibaba planting its flag firmly in frontier AI territory, going toe-to-toe with OpenAI’s GPT and Anthropic’s Claude.

But here’s the thing: this isn’t even the final version. It’s a preview. A work in progress. And it’s already turning heads.

So what exactly is Qwen 3.6-Max-Preview? Why does it matter? And what does it mean for the global AI race? Buckle up, because we’re about to break it all down.

Meet the New King of the Qwen Family

The Qwen series has been Alibaba’s AI flagship for a while now. But the 3.6-Max-Preview is something different. It sits at the very top of the Qwen 3.6 lineup — above Qwen 3.6-Plus, above Qwen 3.6-Flash, and above the open-source Qwen 3.6-35B-A3B.

Think of it like a product family. You’ve got the budget option, the mid-range, the premium — and then the Max. That’s where this model lives.

According to Qwen’s official blog, the Max-Preview delivers meaningful improvements over Qwen 3.6-Plus, the previous top of the lineup, in three core areas:

- Agentic coding capability — the model’s ability to autonomously write, debug, and execute code

- World knowledge — how much the model actually knows about the real world

- Instruction following — how well it understands and executes what you ask it to do

Those aren’t small wins. Those are the exact areas where frontier AI models compete most fiercely. And Alibaba just raised the bar.

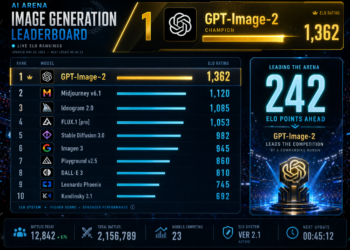

The Benchmark Blitz: Six First-Place Finishes

Let’s talk numbers, because the benchmarks are genuinely impressive.

Qwen 3.6-Max-Preview ranked first across six major benchmarks that test coding and agentic capabilities. Here’s the breakdown:

- SWE-bench Pro — real-world software engineering tasks

- Terminal-Bench 2.0 — command-line execution (improved by 3.8 points over Qwen 3.6-Plus)

- SkillsBench — general problem-solving (up 9.9 points)

- QwenClawBench — tool use

- QwenWebBench — web interaction

- SciCode — scientific programming (up 10.8 points)

Those aren’t just marginal improvements. A 10.8-point jump on SciCode is significant. A 9.9-point gain on SkillsBench is the kind of leap that makes researchers sit up straight.

And it doesn’t stop there. On world knowledge benchmarks, SuperGPQA (advanced reasoning) improved by 2.3%, while QwenChineseBench (Chinese language performance) jumped by 5.3%. On instruction-following ability, measured by ToolcallFormatIFBench, the model topped the rankings — beating Claude.

Yes, you read that right. It beat Claude.

As AI Engineering Trend on Medium noted, these gains span agent programming, world knowledge mastery, and real-world instruction comprehension. The model is still being actively iterated, which means these numbers could get even better.

What Makes This Model Tick?

Okay, so it scores well on benchmarks. But what’s actually inside this thing?

A few features stand out.

First, there’s the 256k token context window. That’s massive. For context (pun intended), at the common rule of thumb of about 0.75 English words per token, a 256k token window lets the model process and reason over roughly 190,000 words in a single session. That’s like reading an entire novel and still having room to spare. For developers building long-running applications or complex document analysis tools, this is a game-changer.
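For the curious, here is a rough version of that word-count estimate. It assumes about 0.75 English words per token, which is a common rule of thumb and not a figure published by Qwen. The function name and constant are made up for illustration.

```python
# Assumption: ~0.75 English words per token (rule of thumb, not a Qwen figure).
WORDS_PER_TOKEN = 0.75


def estimated_words(context_tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Estimate how many English words fit in a context window of the given size."""
    return int(context_tokens * words_per_token)


# "256k" is read here as 256,000 tokens; some vendors mean 262,144 (256 * 1024).
context_tokens = 256_000
print(estimated_words(context_tokens))  # 192000
```

Real ratios vary by tokenizer and language, so treat the result as a ballpark, especially for Chinese text or source code.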

Second, there’s preserve_thinking — a feature that carries reasoning traces across multi-turn conversations. In plain English: the model remembers how it was thinking, not just what it said. Alibaba specifically recommends this for agentic tasks where context continuity matters. If you’re building an autonomous agent that needs to maintain a coherent chain of thought across dozens of steps, this feature is exactly what you’ve been waiting for.
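To make the idea concrete, here is a conceptual sketch of what "carrying reasoning traces across turns" could mean for the message history an agent sends back to the model. This is not the actual Qwen API: the `reasoning` field, the `Turn` shape, and the `build_history` helper are all hypothetical, and the real way to enable preserve_thinking should come from Alibaba's documentation.

```python
from typing import Dict, List

# Hypothetical message shape: "role" and "content", plus an optional "reasoning" trace.
Turn = Dict[str, str]


def build_history(turns: List[Turn], preserve_thinking: bool) -> List[Turn]:
    """Return the history to send with the next request.

    With preserve_thinking off, earlier reasoning traces are dropped and only
    the visible answers are kept. With it on, the traces travel along too.
    """
    history: List[Turn] = []
    for turn in turns:
        kept = {"role": turn["role"], "content": turn["content"]}
        if preserve_thinking and "reasoning" in turn:
            kept["reasoning"] = turn["reasoning"]
        history.append(kept)
    return history


turns = [
    {"role": "user", "content": "Fix the failing test."},
    {"role": "assistant", "content": "Patched the loop.", "reasoning": "The index was off by one."},
]
print(build_history(turns, preserve_thinking=True)[1])
print(build_history(turns, preserve_thinking=False)[1])
```

The trade-off this illustrates is simple: keeping the traces costs more context per turn, but the agent no longer has to rediscover why it made an earlier decision.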

Third, the model’s API is compatible with both OpenAI and Anthropic specifications. That’s a smart move. It means developers can plug Qwen 3.6-Max-Preview into existing pipelines with minimal friction. No major rewrites. No headaches. Just swap in the model string — qwen3.6-max-preview — and you’re off to the races.
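Here is a minimal sketch of what that swap might look like against an OpenAI-style chat completions endpoint, using only Python's standard library. The model string comes from the article. The base URL is a deliberate placeholder, and the helper name is made up, so check Alibaba Cloud Model Studio's documentation for the real endpoint and authentication details. The request is built but not sent.

```python
import json
import urllib.request

MODEL = "qwen3.6-max-preview"  # model string quoted in the article
# Hypothetical placeholder; replace with the endpoint from your provider's docs.
BASE_URL = "https://example.invalid/v1"


def build_chat_request(prompt: str, api_key: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("Summarize this README.", api_key="YOUR_API_KEY")
print(req.get_method(), req.full_url)
print(json.loads(req.data)["model"])
```

If you already use an OpenAI-compatible client library, the same idea applies: point it at the provider's base URL and change the model name, leaving the rest of the pipeline alone.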

One thing to note: the model handles text only at launch. No image input yet. That’s a limitation worth keeping in mind, especially as multimodal capabilities become increasingly standard.

The Open-Source Twist: A Family Affair

Three days before the Max-Preview dropped, Alibaba open-sourced Qwen 3.6-35B-A3B — a 35-billion-parameter model with a clever trick up its sleeve. It only activates 3 billion parameters per inference.

Wait, what? How does that work?

This is called a Mixture of Experts (MoE) architecture. Instead of firing up all 35 billion parameters every time you ask it a question, the model routes each token through a small subset of expert sub-networks, so only about 3 billion parameters are active at a time. The result? Dramatically lower compute per token, with the goal of keeping output quality close to that of a much larger dense model. It’s like having a team of 35 specialists but only calling in the 3 you actually need for each specific task.
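Here is a toy sketch of the routing idea, using generic top-k gating. The numbers (35 experts, 3 active) are borrowed from the specialist analogy and are not the model's real expert count, which the article does not state. The experts themselves are stand-in functions, and every name below is illustrative.

```python
import math
from typing import Callable, List, Tuple

Expert = Callable[[float], float]


def top_k_route(gate_scores: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(gate_scores)), key=lambda i: gate_scores[i], reverse=True)[:k]
    peak = gate_scores[top[0]]  # subtract the max for numerical stability
    exps = [math.exp(gate_scores[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x: float, experts: List[Expert], gate_scores: List[float], k: int) -> float:
    """Run only the selected experts and blend their outputs by routing weight."""
    return sum(weight * experts[i](x) for i, weight in top_k_route(gate_scores, k))


def make_expert(scale: float) -> Expert:
    """Stand-in expert: a real one would be a feed-forward network."""
    return lambda x: scale * x


experts = [make_expert(float(s)) for s in range(35)]
gate_scores = [0.0] * 35  # a real router computes these from the token itself
gate_scores[4], gate_scores[17], gate_scores[30] = 2.0, 1.0, 3.0

routes = top_k_route(gate_scores, k=3)
print([i for i, _ in routes])  # [30, 4, 17]
print(moe_forward(1.0, experts, gate_scores, k=3))
print(f"{3 / 35:.1%} of parameters active")  # 8.6% of parameters active
```

The other 32 stand-in experts are never called, which is where the compute savings come from: cost scales with the active experts rather than the total.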

This open-source release was a gift to the developer community. And it set the stage perfectly for the Max-Preview announcement. Together, the Qwen 3.6 lineup now covers the full spectrum:

- Max-Preview — frontier performance, proprietary

- Plus — balanced workloads

- Flash — speed-first tasks

- 35B-A3B — local deployment, open-source

It’s a well-thought-out product strategy. Something for everyone, from hobbyist developers running models on their laptops to enterprise teams building production-grade AI systems.

The Big Shift: From Free to Fee

Here’s where things get interesting — and a little controversial.

Alibaba built its AI reputation largely on free, open-source models. The Qwen series exploded in popularity because developers could download and run these models without paying a dime. That strategy worked spectacularly well. Qwen overtook Meta’s Llama as the most deployed self-hosted model on the planet.

But now, the game is changing.

Qwen 3.6-Max-Preview is a proprietary, hosted model with no open weights. You can’t download it. You can’t run it locally. You access it through Qwen Studio or the Alibaba Cloud Model Studio API, and you pay for what you use.

This shift didn’t happen in isolation. Just days before the Max-Preview launch, Alibaba shut down the free tier of Qwen Code. Around the same time, fellow Chinese AI lab MiniMax rewrote its open-source license to block commercial use without written authorization. As Decrypt reported, both moves signal a broader trend: Chinese AI labs that built massive adoption on free, open services are now pivoting toward monetized, proprietary offerings.

It makes business sense. You can’t run frontier AI infrastructure for free forever. But it does mark the end of an era — and some developers aren’t thrilled about it.

China’s AI Moment: From 1.2% to 30% in One Year

Let’s zoom out for a second, because the Qwen story is part of something much bigger.

In late 2024, Chinese open-source AI models accounted for just 1.2% of global open-model usage. By the end of 2025, that number had exploded to roughly 30%. Qwen led that charge.

That’s not a gradual climb. That’s a rocket ship.

PANews reported that the Qwen 3.6 series — including the Max-Preview, Plus, Flash, and the open-source 35B-A3B — represents the full breadth of Alibaba’s current AI ambitions. The Max-Preview is the proprietary tip of that spear. It’s the model Alibaba is betting will compete directly with OpenAI and Anthropic at the frontier.

And based on early results, that bet looks pretty solid.

Independent benchmarking from Artificial Analysis puts Qwen 3.6-Max-Preview as the second best performing model in its class — right behind Muse Spark, and well above the median of comparable reasoning models in its price tier.

Second place in its class. For a model that’s still in preview. That’s remarkable.

What Developers Are Saying

The developer community has been quick to react. On social media, engineers and AI researchers have been sharing benchmark comparisons, integration guides, and early impressions.

The consensus? The model is fast, capable, and surprisingly easy to integrate. The OpenAI-compatible API means most teams can test it within hours, not days. The preserve_thinking feature has drawn particular praise from developers building multi-step agentic workflows.

The main complaints? The lack of image input is a real limitation for teams building multimodal applications. And the shift to a paid model — while understandable — stings a little for developers who built their workflows around free Qwen access.

But overall, the reception has been positive. When a model beats Claude on instruction-following benchmarks, people pay attention.

What Comes Next?

Alibaba has been explicit: Qwen 3.6-Max-Preview is a work in progress. The company expects further gains in future versions. The model is under active development, and the preview label is there for a reason.

What might those improvements look like? Multimodal capabilities seem like an obvious next step — image input, and potentially video. Further gains on reasoning benchmarks. Possibly expanded context windows. And almost certainly, continued improvements to the agentic capabilities that are already turning heads.

The global AI race is moving fast. OpenAI, Anthropic, Google, Meta, and now Alibaba are all pushing hard. Each new release raises the bar. Each benchmark win reshuffles the leaderboard.

But here’s the thing: Alibaba isn’t just competing anymore. With Qwen 3.6-Max-Preview, they’re leading in several key areas. That’s a significant shift from even a year ago.

The Bottom Line

Alibaba dropped something special on April 20, 2026. Qwen 3.6-Max-Preview is fast, capable, and benchmark-crushing. It tops six major coding and agentic benchmarks. It beats Claude on the ToolcallFormatIFBench instruction-following benchmark. It ships with a 256k token context window and a preserve_thinking feature that developers are already excited about.

Yes, it’s proprietary. Yes, it’s a preview. And yes, it only handles text for now.

But as a signal of where Alibaba is headed — and where Chinese AI is headed more broadly — it’s hard to overstate the significance of this release.

The frontier AI race just got a new serious contender. And it speaks Mandarin.

Sources

- Decrypt — Alibaba Drops Qwen 3.6 Max Preview—Its Most Powerful Model Yet

- Qwen Official Blog — Qwen 3.6-Max-Preview

- PANews — Alibaba releases Qwen 3.6-Max preview version

- AI Engineering Trend on Medium — Qwen3.6-Max-Preview: An Early Look at the Next Generation Flagship Model

- Artificial Analysis — Qwen 3.6 Max Model Performance